JOURNAL OF COMPUTING, VOLUME 4, ISSUE 3, MARCH 2012, ISSN 2151-9617 https://sites.google.com/site/journalofcomputing WWW.JOURNALOFCOMPUTING.ORG

User Modeling in Web Transactional Tasks: Visual and Behavioral Patterns

Mashael Al-Saleh, Areej Al-Wabil

Abstract: Constructing a model of how humans interact with the web is essential for informing the design and evaluation of interactive elements on the web. Most existing frameworks and models have focused on navigational and informational tasks. The main contribution of this paper is a model of user interaction in transactional tasks, which can serve as the basis for design guidelines that optimize web form interfaces. The model was examined and tested in an exploratory eye tracking study. The evidence suggests that the model is effective in representing the patterns of human behavior in web transactional tasks.

Index Terms: Eye tracking, user modeling, transactional task, visual attention

1 INTRODUCTION

According to Broder's classification, the tasks that users can perform on the web are navigational, informational, and transactional [1]. A task is navigational when the user wants to reach a particular page or element on a web site, informational when the user wants to find specific information available on the web site, and transactional when the user reaches a specific page and interacts with it to complete a form, for example to complete a shopping transaction. User modeling has long been used in Human-Computer Interaction (HCI) research to investigate effective methods for optimizing user interfaces. It is often used to understand how users interact with systems, for example by examining critical incidents in which problems occur and the users' levels of understanding. Services and transactions are increasingly offered online, yet there is limited research on modeling users in transactional tasks compared with navigational and informational tasks. Eye tracking has been shown to be an effective usability tool for measuring the cognitive and perceptual capabilities of users as they interact with interfaces. Many metrics can be collected and analyzed based on the assumption that eye movements reflect the cognitive processes during the user's interaction. The ocular behaviors usually measured are saccades, fixations, and scan paths. These measures have been used in many studies to evaluate the efficiency and effectiveness of interactive systems in supporting the user's goal [2][3][4].

The focus of this study is developing a user model that describes users' behaviors in conducting transactional tasks on the web. The model is motivated by Norman's Theory of Action [5] and characterizes the perceived relevance of web form elements to the user's goal in terms of cognitive processing and behavioral mechanisms. The model combines behavioral and eye gaze metrics derived from the visual exploration patterns exhibited by users. In exploratory eye tracking experiments, visual patterns of user interactions with web-based transactions were examined to verify the model's representation of user behavior and cognition.

2 RELATED WORK

Our interest in this paper is examining how users interact with forms on the Web, specifically in transactional tasks. Most existing frameworks have been proposed for navigational and informational tasks. For example, navigational frameworks include the Framework for Human-Web Interaction [6] and navigation in electronic worlds [7]. For transactional tasks, Bargas-Avila et al. proposed the modal theory, which depicts two modes of interacting with forms: the completion mode and the review mode [8]. General frameworks such as the User Action Framework [9] have been constructed based on an understanding of user navigation patterns in hyperlinked structures and are often informed by usability practice. In addition to frameworks, theories have been developed that describe user behavior, such as Norman's Theory of Action [5].

Mashael Al-Saleh, MSc, is with the Department of Information Technology, King Saud University, Riyadh, Saudi Arabia. Areej Al-Wabil, PhD, is with the Department of Information Technology, King Saud University, Riyadh, Saudi Arabia.


2.1 Frameworks & Models

There is a scarcity of HCI research on modeling users in the context of web transactions. We therefore examined four general frameworks, one transactional task model, and one theory related to general user interaction, and considered them as the basis for developing a framework specific to web transactions. These frameworks take into consideration the navigation and information retrieval issues of web interaction; however, they lack accurate and detailed representations of the sequence of actions involved in iterative transactional tasks such as completing form fields, and they differ in how they describe the stages and the cognitive mechanisms involved in those stages. Table 1 shows a summary of frameworks and theories related to user modeling. Some frameworks focus on specific aspects of user interaction on the web. For information seeking, several models have been reported, including Marchionini's model, Ellis & Haugan's model, Choo, Detlor, & Turnbull's model [10], and Broder's model for information retrieval [1]. For navigation, frameworks include the navigation in electronic worlds framework [7] and the Framework for Human-Web Interaction [6]. General frameworks include the User Action Framework [9], which was based on Norman's Theory of Action, and the HuWI framework, which was based on Neisser's perceptual cycle [11]. In addition to these frameworks, there are general theories that describe user behavior, such as Norman's Theory of Action [5] and Neisser's perceptual cycle [11]. Since our interest in this project is examining HCI in the context of the Web, and specifically transactional tasks, we further investigated the related frameworks that can inform the design of a user model in web transactions.

While frameworks have been proposed for navigational and informational tasks on the Web in HCI research, only one model has previously been established in the context of web transactions [8]; it dealt with modes of interacting with web forms and how the process of completing a form differs from the process of reviewing a form prior to submission. The proposed framework builds on previous work on HCI models and frameworks and provides insights into the cognitive processes involved in interacting with web form elements.

2.2 Web Form Design

Web forms are pages designed to enable users to enter data that can be submitted to servers for processing. The W3C recommendation for HTML forms defines a form as "a section of a document containing normal content, markup, special elements called controls (checkboxes, radio buttons, menus, etc.), and labels on those controls. Users generally complete a form by modifying its controls (entering text, selecting menu items, etc.), before submitting the form to an agent for processing (e.g., to a web server, to a mail server, etc.)" [12]. Jarrett and Gaffney [13] have asserted that designing a web form involves three layers: relationship, conversation, and appearance. The relationship layer focuses on who the users of the form are and what type of information needs to be asked of them. In the conversation layer, the design focuses on making questions easy to answer with useful instructions, choosing form controls that meet user expectations, and arranging the form so that it is easy to follow. The appearance layer is concerned with the form's layout and the use of color [13]. The three layers are illustrated below with the sign-up form of an Arabic educational portal, Mawhiba. In the relationship layer, the users of the form (i.e., the target audience) are defined as students, parents, and educational specialists. The purpose for which the information will be used and the privacy statements were added at the beginning of the form to build trust with users. The relationship layer is shown in Fig. 1.


Fig. 1: Relationship layer in the Mawhiba web portal

The first part of the conversation layer is making questions easy to answer. One way to make questions easy to answer and fields easy to fill out is to let users verify their responses visually by showing acceptance or rejection messages. Flexibility is also offered in some questions, in the sense that users are not forced to choose from a fixed set of options; examples include providing an "Other" option and allowing users to choose the date format when they enter their date of birth. The second part of the conversation layer is writing useful instructions. In Mawhiba, this was reflected by using familiar words in the labels and headings of the form and by starting the form with a short informative title and instructions that describe its purpose. The first and second parts of the conversation layer are shown in Fig. 2.

Fig. 2. Conversation layer in Mawhiba

The third part of the conversation layer is choosing the form controls. Table 2 shows a sample of form controls and users' expectations of these controls in Arabic interfaces.

The fourth part of the conversation layer is making the form easy to follow. In the Mawhiba example, the form is short enough to fit on one page, it contains no unexpected variation from conventions, and the conversation finishes smoothly with a "Welcome" message that indicates the completion of the registration task and the end of the process, as shown in Fig. 3.

Fig. 3. Conversation layer: "Welcome" and "Acknowledgment" messages in the form

The last layer is the appearance layer, which is concerned with the visual details of fields and labels. It also deals with the arrangement of controls and the selection of colors that contribute to the visual design of the web form.
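To make two of the conversation-layer practices concrete (acceptance or rejection messages, and letting users enter a date of birth in more than one format), the following is a minimal Python sketch of a date-of-birth check. It is not Mawhiba's implementation; the function name `check_date_of_birth` and the list of accepted formats are hypothetical assumptions.

```python
from datetime import date, datetime

# Hypothetical accepted formats (an assumption, not taken from Mawhiba).
# Month-first formats are deliberately excluded: "05/01/2000" cannot be
# read unambiguously if both day-first and month-first are accepted.
ACCEPTED_FORMATS = ("%d/%m/%Y", "%d-%m-%Y", "%Y-%m-%d")


def check_date_of_birth(text):
    """Return (accepted, message) for a date-of-birth entry.

    Each accepted format is tried in turn so that users are not forced
    into a single format; the message is meant to be shown next to the
    field as an acceptance or rejection message.
    """
    value = text.strip()
    for fmt in ACCEPTED_FORMATS:
        try:
            dob = datetime.strptime(value, fmt).date()
        except ValueError:
            continue  # not this format (or an impossible date); try the next
        if dob > date.today():
            return False, "Date of birth cannot be in the future."
        return True, f"Accepted: {dob.isoformat()}"
    return False, "Please enter the date as DD/MM/YYYY, DD-MM-YYYY or YYYY-MM-DD."
```

For example, `check_date_of_birth("05/01/2000")` returns `(True, "Accepted: 2000-01-05")`, while `"31/02/2000"` is rejected with the format hint. Echoing the normalized date back in the acceptance message lets users confirm visually how their entry was interpreted.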

2.3 Eye Tracking in Usability and Web Form Design

In eye tracking experiments, users are asked to perform a specific task while their ocular behavior is recorded. The eye movements can then be played back, and participants can be asked to describe their behavior while they review their own interaction with the stimuli. This procedure is called Retrospective Think Aloud (RTA). Many metrics can be measured and analyzed based on the assumption that eye movements reflect the cognitive processes of users during the interaction. One of these measures is fixations: periods in which the eye is stable and aimed at specific elements of the visual display. Metrics related to fixations include the number of fixations, the fixation duration, and the sequence of fixations across a visual display, which is called a scan path. Recently, there has been a proliferation of studies using eye tracking in web usability to understand the effect of design issues on users and to gain insights into user behavior in performing specific web tasks. Most recent research on optimizing the design of web interfaces with eye tracking methods has focused on navigational and informational tasks, and the few studies on transactional tasks have focused on how changes in layout and design elements affect user behavior. Nielsen and Pernice, in their book Eyetracking Web Usability, compiled a set of guidelines for designing web forms from eye tracking studies of web forms [14]. They discussed positive and negative aspects of User Interface (UI) elements and presented advice on how design can reduce unnecessary complexity, measured by increased gaze fixations of users on web forms. Another set of guidelines was proposed from an eye tracking study conducted by C. Tan et al. [15]. They focused their study on how easily users could complete a form and on user satisfaction. They chose four sign-in pages, Yahoo Mail, Hotmail, Google Mail, and eBay, which showed different combinations of layout, grouping, and ways of indicating optional fields. Another study, conducted by Penzo et al., was based on recommendations from the 'Web Form Design' book [16].
They conducted eye tracking tests measuring eye movement activity and duration for different label designs, formats, and types of form fields. They designed four test forms, each containing four input fields. Like the other studies, they concluded with design guidelines regarding label position and alignment, bold labels, and the use of drop-down list boxes. A recent study by Terai et al. investigated the effect of task type on information-seeking behavior [17]. Participants were asked to perform two types of web search, an informational task and a transactional task, and their behavior was examined. Moreover, a think aloud protocol was followed in that study to record users' thoughts and opinions as they interacted with the system during the sessions. The participants' behaviors were classified into eight categories, and measures were calculated for each category. Their results showed that participants visited more pages and completed the tasks in shorter periods in transactional tasks than in informational tasks.
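The fixation metrics listed in this section (number of fixations, fixation duration, and scan path) can be computed from a list of fixations once each fixation is mapped to an area of interest (AOI). The following is a minimal Python sketch under assumed inputs: the `Fixation` type, rectangular AOIs in screen pixels, and the AOI names are hypothetical, and this is not the API of Tobii Studio or of any other analysis package.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical AOI rectangle: (left, top, right, bottom) in screen pixels.
Rect = Tuple[float, float, float, float]


@dataclass
class Fixation:
    x: float
    y: float
    duration: float  # seconds


def aoi_of(fix: Fixation, aois: Dict[str, Rect]) -> Optional[str]:
    """Return the name of the first AOI containing the fixation, or None."""
    for name, (left, top, right, bottom) in aois.items():
        if left <= fix.x <= right and top <= fix.y <= bottom:
            return name
    return None


def fixation_metrics(fixations: List[Fixation], aois: Dict[str, Rect]) -> dict:
    """Fixation count, total and mean fixation duration, and the scan path.

    The scan path is the sequence of AOIs visited, with consecutive
    fixations in the same AOI collapsed into one entry; fixations that
    fall outside every AOI are skipped.
    """
    count = len(fixations)
    total = sum(f.duration for f in fixations)
    scan_path: List[str] = []
    for f in fixations:
        name = aoi_of(f, aois)
        if name is not None and (not scan_path or scan_path[-1] != name):
            scan_path.append(name)
    return {
        "count": count,
        "total_duration": total,
        "mean_duration": total / count if count else 0.0,
        "scan_path": scan_path,
    }
```

With AOIs for a field's label, text box, and calendar icon, four fixations of 0.5, 0.25, 0.75, and 0.5 s that move from the label to the text box to the icon yield a count of 4, a mean duration of 0.5 s, and the scan path `["label", "textbox", "calendar_icon"]`.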

3 MODEL OF INTERACTION IN TRANSACTIONAL TASKS

A model for human interaction in transactional tasks is depicted in Fig. 4. The model describes how the user conducts a transactional task by filling out a web form.

Fig. 4. Model for Human-Web Interaction in Transactional Tasks
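The stage sequence of the model, including the per-field loop in which users repeat determine strategy, act, perceive system state, and evaluate field until they accept the system state, can be sketched as a small simulation. This is an illustrative Python sketch, not part of the authors' model specification: one state is used per stage subsection of this section (3.1 to 3.7), and the `simulate` function and its `wrong_forms` and `rejections` parameters are hypothetical.

```python
from enum import Enum, auto


class Stage(Enum):
    # One state per stage subsection (3.1 to 3.7).
    FORM_GOAL = auto()
    INTENTION = auto()
    DETERMINE_STRATEGY = auto()
    ACT = auto()
    PERCEIVE_STATE = auto()
    EVALUATE_FIELD = auto()
    EVALUATE_FORM = auto()


def simulate(num_fields, wrong_forms=0, rejections=None):
    """Return the stage sequence for one hypothetical transaction.

    num_fields  -- number of fields on the correct form
    wrong_forms -- forms the user opens and rejects before the right one
    rejections  -- dict: field index -> how many times the user does not
                   accept that field's system state
    """
    remaining = dict(rejections or {})  # copy so the caller's dict is untouched
    trace = []
    # Form a goal and intention; a mismatching form sends the user back
    # to search for another link.
    for _ in range(wrong_forms + 1):
        trace += [Stage.FORM_GOAL, Stage.INTENTION]
    for field in range(num_fields):
        while True:
            # The four per-field stages.
            trace += [Stage.DETERMINE_STRATEGY, Stage.ACT,
                      Stage.PERCEIVE_STATE, Stage.EVALUATE_FIELD]
            if remaining.get(field, 0) > 0:
                remaining[field] -= 1  # state not accepted: repeat the four stages
            else:
                break  # state accepted: move on to the next field
    trace.append(Stage.EVALUATE_FORM)  # review before submission
    return trace
```

For a two-field form in which the second field's feedback is rejected once, `simulate(2, rejections={1: 1})` produces 15 stages, with the four per-field stages appearing three times before the final form evaluation.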

The model considers that each field in the form contains one or more of the following parts: a label, an introduction/instruction, and controls (e.g., a text box or a list), as shown in Fig. 5.

Fig. 5. Field's structure
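The field structure of Fig. 5 can be expressed as a small data type in which each part is optional but at least one must be present. In the following Python sketch, the `Field` class and the control names are hypothetical. The mapping from control type to the select or fill action of the Act stage is also an illustrative assumption rather than part of the model.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative assumption: controls that take typed input map to "fill";
# every other control type is treated as "select".
FILL_CONTROLS = {"textbox", "textarea"}


@dataclass
class Field:
    """A form field (Fig. 5): a label, an introduction/instruction, a control.

    Each part is optional, but a field must have at least one of them.
    """
    label: Optional[str] = None
    instruction: Optional[str] = None
    control: Optional[str] = None  # e.g. "textbox", "list" (hypothetical names)

    def __post_init__(self) -> None:
        if not (self.label or self.instruction or self.control):
            raise ValueError("a field needs a label, an instruction, or a control")

    def action_type(self) -> Optional[str]:
        """Return "fill" or "select" for the field's control, or None if it has none."""
        if self.control is None:
            return None
        return "fill" if self.control in FILL_CONTROLS else "select"
```

For example, `Field(label="Name", control="textbox").action_type()` returns `"fill"`, and a drop-down `Field(label="City", control="list")` returns `"select"`.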

The model contains six major stages: form a goal, determine strategy, act, perceive system state, evaluate field, and evaluate form. Each main stage may contain sub-stages. The user begins with the first two stages to choose the form. The following four stages are concerned with filling out individual fields. Finally, users end the transaction by evaluating the form prior to submission or by moving on to a different activity.

3.1 Form a goal

Users start the transaction with a goal or service they want to obtain. The assumption is that this goal is clear in the user's mind and is reflected by the target link that users choose to reach the transactional interface. The selection of the form's link is also affected by the user's knowledge: users who have experience with transactions, or some familiarity with the interactive system, can choose the transaction form or locate it within the information structure easily and quickly.

3.2 Intention

After opening the form to carry out the transaction, users check whether it matches their needs. The intention can be established by verifying the action before examining the content in detail, that is, by reading the form's introduction or instructions, or by scanning the form. A helpful introduction, often placed at the beginning of the form with a heading, is usually a paragraph that describes the purpose of the form, a privacy statement, and a list of anything users might need to complete the form [13]. When users perceive that the form does not match their needs, they start searching for an alternate link that reflects their goal of locating the transactional form.

3.3 Determine strategy

After deciding that the form is the correct one, users proceed to fill out the form fields, first determining each field's strategy. Each field has a predefined strategy that the user must understand to manipulate the controls correctly (e.g., clicking the arrow of a drop-down list). Information about the field strategy can be acquired from the field instructions, the label, and the user's knowledge (e.g., prior experience in completing similar fields such as drop-down lists and calendar pop-ups). Users perform this stage by scanning the field and/or label, and move on to the next stage when they understand the strategy.

3.4 Act

After understanding the field strategy, users start filling out the field. Field actions fall into two types: select and fill. Users perform one of these actions according to their understanding of the field's strategy.

3.5 Perceive system state

After the user's action, the system state changes. The field value entered by the user, or the selected options and elements, appear to reflect the user's selection. The system may also show feedback in the form of acceptance or rejection messages. Users read these messages or examine the interface for cues of changes in the system state, and attempt to recognize their meaning and their relation to the context of the activity being conducted.

3.6 Evaluate Field

After perceiving the system state, users evaluate and interpret it. Then, according to their understanding and acceptance of the system state, users decide whether to continue to the next field or to restart the four stages of filling out the field: determine strategy, act, perceive system state, and evaluate field.

3.7 Evaluate Form

After filling out all the fields, users evaluate the form by reading the form fields and checking for any system feedback before ending the transaction.

4 MODEL VERIFICATION

An exploratory eye tracking experiment was conducted to verify the proposed model by examining the visual and behavioral patterns exhibited in the different phases of the model.

4.1 Method

The model was tested against a detailed set of eye tracking data collected from 10 participants as they engaged in three transactional tasks using Arabic interfaces of web-based forms. The testing environment was controlled for consistent lighting, temperature, and noise.

4.2 Participants

Ten participants took part in the experiment. They ranged in age from 17 to 34 years (mean 23.4 years, standard deviation 4.54 years). Their computer experience ranged from 8 to 14 years (mean 10.7, standard deviation 2.37 years), and their internet usage from 7 to 14 years (mean 9.7, standard deviation 2.45 years). Eye movements were recorded with a Tobii X120 eye tracker and analyzed with the Tobii Studio gaze analysis software, version 2.2.1. The stimuli were displayed in Internet Explorer version 7 on an HP 22-inch monitor. The resolution was set to 1024 x 768 on both the external monitor and the laptop monitor.

4.3 Procedure

Participants were tested individually. Each session started with a 5-minute introduction to the study, after which participants filled out a demographic questionnaire. The experiment then began with calibration, followed by the three web transactional tasks. Afterwards, a Retrospective Think Aloud (RTA) protocol was conducted by playing back the participant's eye movements in selected tasks. The aim of the RTA was to ask participants to explain the reasoning behind the observed behavior while they were conducting the transactional tasks.

4.4 Task and Stimuli

Three web transactions on three different Arabic web sites were used in the experiment; they are listed in Table 3.

5 RESULTS AND DISCUSSION

Analysis was conducted to verify the appropriateness of the proposed model and to examine whether it provides a good match to participants' interactions in transactional tasks. This was done by investigating how the visual patterns related to the model's phases. The model was divided into four parts, and the experimental results are discussed according to these parts.

5.1 Goal and intention

This part of the model was investigated in navigation tasks in which users were asked to search for the contact form on the ministry's home page. All participants expected two of the links to help them reach their goal. The link that most participants perceived as more descriptive and closer to their goal received a larger number of fixations and a longer fixation duration. Participants exhibited intention by scanning the form introduction and form fields to assess whether the intended form had been reached. Clear form introductions and instructions make it easier to understand whether the form matches the user's goal. For example, one participant began scanning the form to determine whether it was the correct one: the introduction stated the same goal the participant wanted to achieve, but the form fields did not reflect it. The participant found the correct form link after 29.91 seconds. In terms of usability, a clear form introduction is essential for users to determine whether the form matches their goals.

5.2 Determine strategy

When users start to fill out the form, they often begin by determining the strategy for completing each field before interacting with the controls. This is often done by visual exploration, or by hovering over controls to explore the affordances of form elements. Familiarity with the controls and prior experience play a key role in this stage of the model. To verify this part of the model, we examined a relatively complex web form interaction: how users determine the strategy for the calendar field in the flight booking task. Four participants understood the calendar strategy directly. The RTA protocol provided supporting evidence of familiarity for these participants, as they explained that this was due to their experience with the same web site or similar web sites. Their scan paths followed one of two patterns: scanning the label to understand the information required at this point in the interaction, then the text box to understand the data entry mode offered by the interface, and finally the calendar icon; or scanning the text box and then clicking the calendar button to determine the strategy, as shown in Fig. 6. The calendar that is overlaid on the form to let users choose dates is shown in Fig. 7.
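Whether a participant's scan path contains the direct-understanding pattern of Section 5.2 (label, then text box, then calendar icon) can be checked with an in-order subsequence test over the sequence of AOIs. The following Python sketch assumes that fixations have already been mapped to named AOIs. The AOI names and the `follows_pattern` function are hypothetical, and the check was not part of the reported analysis.

```python
from typing import Iterable, Sequence


def follows_pattern(scan_path: Iterable[str], pattern: Sequence[str]) -> bool:
    """True if the AOIs in `pattern` occur in `scan_path` in the same order.

    Other AOIs may appear in between. Each `in` test advances the shared
    iterator, so a later step can only match after the earlier ones.
    """
    remaining = iter(scan_path)
    return all(step in remaining for step in pattern)


# Hypothetical AOI names for the calendar field of the flight booking task.
LABEL_FIRST = ("label", "textbox", "calendar_icon")
```

For example, `follows_pattern(["label", "header", "textbox", "calendar_icon"], LABEL_FIRST)` is `True`, while the reversed path `["calendar_icon", "textbox", "label"]` gives `False`. A subsequence test tolerates brief glances elsewhere on the page. A stricter variant could require the AOIs to be adjacent in the scan path.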

Fig. 6. Visual pattern of a participant who understood the strategy directly

Fig. 7. The calendar overlaid on the form

The remaining participants did not understand the calendar strategy directly. They tried to work it out through a focused visual examination of the area, evident as long fixations on the calendar button (Fig. 8), or through random fixations around the calendar (Fig. 9).

Fig. 8. Scan path of a participant who did not understand the calendar strategy directly (1)

Fig. 9. Scan path of a participant who did not understand the calendar strategy directly (2)

The two groups differed markedly in the duration of visual examination and the number of fixations. The participants who understood the strategy directly exhibited a mean fixation duration of 0.81 s and a total of 12 fixations in this segment. In contrast, the participants who did not understand the strategy directly exhibited a mean fixation duration of 1.7 s and a total of 33 fixations in the same segment.

5.3 Act, Perceive system state, Evaluate field

According to the proposed model, after determining the field strategy, users go through a series of stages for each field: filling out the field, perceiving the system state, and evaluating the field. This part was studied in the registration task on the educational portal (i.e., the Mawhiba stimulus). In the first stage [Act], users found the interaction intuitive and completed it directly, without difficulty or hesitation. An example is shown in Fig. 10, in which the user grasps the strategy by scanning the label and text box and then fills out the field. The eye gaze and mouse clicks show the user's understanding of what is required to complete this step of the transaction. Users often handle common controls intuitively because they have been exposed to them in other web forms and are familiar with how they work.

Fig. 10. The user action after understanding the strategy

To study users' perception of the system state and the Evaluate field stage, we examined cases in which users entered incorrectly formatted data and the system responded with feedback. The change in system state appeared as a change in the field value and as system feedback indicating acceptance or rejection of the entered value. Participants dealt with the system state as follows: 50% of the participants scanned the value they had entered and checked whether the system had provided an error message (Fig. 11).

Fig. 11. The participant reviews the data that was entered incorrectly

40% of the participants scanned the error messages immediately after filling out the field. Moreover, 30% of the participants scanned the acceptance message immediately after filling out the fields (Fig. 12).

Fig. 12. Visual pattern of a participant scanning the acceptance message

After perceiving the system state, the user is expected to examine and understand the system feedback, and this understanding affects subsequent behavior. For example, when the system showed an error message, participants who understood it corrected the error by repeating the same stages (determine strategy > act > perceive system state > evaluate field). Moving through these four stages can be easy if the form follows good design principles in the conversation layer: writing instructions, choosing the form controls, and validating input and showing messages [13]. Finally, when users accepted the result, they moved on to the next field (Fig. 13).

Fig. 13. The user accepted the system state and moved to the next field

When the system feedback was not acceptable or not understandable to the user, the stages were repeated more than once. This was evident in long fixations and long scans on the field and label as users tried to understand the issue and determine the source of the error (Figs. 14 and 15).

Fig. 14. Visual pattern of a participant who did not understand the system feedback and repeated the same stages [Act, Perceive system state, Evaluate field] (1)

Fig. 15. Visual pattern of a participant who did not understand the system feedback and repeated the same stages [Act, Perceive system state, Evaluate field] (2)

5.4 Evaluate form

Evaluating the form is similar to the review mode described in [8]. The results showed that four participants transitioned directly to the end of the form, while six evaluated the form using either a top-down or a bottom-up approach. Three participants evaluated top-down, starting from the first field and moving down the form (Fig. 16).

Fig. 16. Top-down form evaluation

The other three conducted a bottom-up evaluation, starting from the last field and moving up while scanning the interface (Figs. 17 and 18). During the evaluation, users scanned correctly entered data, checked incorrect fields, or in some cases did both.
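The direct, top-down, and bottom-up evaluation behaviors of Section 5.4 can be told apart from the order in which fields receive fixations during the evaluation segment. The following Python sketch is a hypothetical heuristic, not the procedure used in the study. It assumes each fixation has been mapped to a field position (0 for the first field), labels a segment "direct" when at most one field is fixated, and otherwise compares the number of downward and upward transitions.

```python
from typing import List


def evaluation_direction(field_visits: List[int]) -> str:
    """Classify a form-evaluation segment from fixated field positions.

    field_visits -- field index of each fixation, in time order
                    (0 = first field; larger = further down the form)
    Returns "direct", "top-down", "bottom-up", or "mixed".
    """
    # Collapse consecutive repeats so dwelling on one field is not a transition.
    path = [f for i, f in enumerate(field_visits)
            if i == 0 or f != field_visits[i - 1]]
    if len(path) < 2:
        return "direct"  # no review of earlier fields before submission
    down = sum(1 for a, b in zip(path, path[1:]) if b > a)
    up = sum(1 for a, b in zip(path, path[1:]) if b < a)
    if down == up:
        return "mixed"
    return "top-down" if down > up else "bottom-up"
```

For example, `evaluation_direction([0, 1, 2, 3])` returns `"top-down"` and `evaluation_direction([3, 2, 2, 1, 0])` returns `"bottom-up"`. Counting transitions rather than requiring a strictly monotonic path lets the heuristic tolerate occasional back-and-forth glances, such as a user rechecking a field flagged with an error.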

Fig. 17. Bottom-up form evaluation (1)

Fig. 18. Bottom-up form evaluation (2)

6 CONCLUSION

This work aimed to develop a model of user behavior in transactional web tasks. This was accomplished by constructing a user model based on HCI cognitive and behavioral models. The model was then verified in an exploratory eye tracking study by examining users' visual attention and the degree to which visual patterns relate to the model. The model provided a good match to participants' interactions in transactional tasks, particularly in completing text and selection fields. In line with cognitive engineering approaches in HCI research, a reliable model needs to be established in order to use this information in automated evaluations of interactive systems.

ACKNOWLEDGMENT

This work was supported in part by grant #A-S-110565 from King Abdulaziz City for Science and Technology (KACST), Riyadh, Saudi Arabia.

REFERENCES

[1] A. Broder, "A taxonomy of web search," SIGIR Forum, vol. 36,
[2] L. Ball and A. Poole, "Eye Tracking in Human-Computer Interaction and Usability Research: Current Status and Future Prospects," in Encyclopedia of Human Computer Interaction, Ghaou
[3] L. Cowen, "An eye movement analysis of web-page usability," M.Sc. by research in the Design and Evaluation of Advanced Interactive Systems, 2001.
[4] C. Ehmke and S. Wilson, "Identify web usability issues from eye tracking data," paper presented at the 21st Group Annual Conference, Lancaster University, UK, vol. 1, 2007, pp. 119-128.
[5] D. Norman, "Cognitive Engineering," in User Centered System Design, Hillsdale: LEA, 1986, ch. 3, pp. 31-62.
[6] C. Pilgrim, G. Lindgaard, and Y. Leung, "A Framework for Human-Web Interaction," in CHISIG Annual Conference on Human-Computer Interaction (OZCHI), Ergonomics Society of Australia, Wollongong, 2004.
[7] S. Jul and G. Furnas, "Navigation in Electronic Worlds," CHI 97 Workshop Report, 1997.
[8] J. A. Bargas-Avila, G. Oberholzer, P. Schmutz, M. de Vito, and K. Opwis, "Usable error message presentation in the World Wide Web: Do not show errors right away," Interacting with Computers, vol. 19, no. 3, pp. 330-341, May 2007.
[9] T. Andre, H. Hartson, S. Belz, and F. McCreary, "The user action framework: a reliable foundation for usability engineering support tools," Human-Computer Studies, vol. 54, pp. 107-136, 2001.
[10] C. Choo, B. Detlor, and B. Turnbull, "A Behavioral Model of Information Seeking on the Web: Preliminary Results of a Study of How Managers and IT Specialists Use the Web," ASIS Annual Meeting Contributed Paper, 1998.
[11] S. Farris, "The Human-Web Interaction Cycle: A proposed and tested framework of perception, cognition, and action on the Web," PhD thesis, Kansas State University, Manhattan, Kansas, 2003.
[12] The World Wide Web Consortium (W3C), W3C recommendation [Online]. http://www.w3.org/TR/html4/interact/forms.html#h-17.1
[13] C. Jarrett and G. Gaffney, Forms that Work. Burlington, USA: Elsevier, 2009.
[14] J. Nielsen and K. Pernice, Eyetracking Web Usability. New York, USA: New Riders, 2010.
[15] C. Tan. (2009, April) Web form design guidelines: an eyetracking study. [Online]. www.cxpartners.co.uk/thoughts/web_forms_design_guidelines_an_eyetracking_study.htm
[16] M. Penzo. (2006, July) Label Placement in Forms. [Online]. www.uxmatters.com/mt/archives/2006/07/label-placement-informs.php
[17] H. Terai, H. Saito, Y. Egusa, M. Takaku, M. Miwa, and N. Kando, "Differences between informational and transactional tasks in information seeking on the web," in Proceedings of the Second International Symposium on Information Interaction in Context (IIiX '08), P. Borlund, J. W. Schneider, M. Lalmas, A. Tombros, J. Feather, D. Kelly, and A. P. de Vries, Eds. New York, NY, USA: ACM, 2008, pp. 152-159.

You might also like

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (590)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (843)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5807)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (401)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (346)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (897)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1091)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Music Expectancy and Thrills. (Huron and Margulis, 2010)Document30 pagesMusic Expectancy and Thrills. (Huron and Margulis, 2010)David Quiroga50% (2)

- Building Blocks of Negotiation: Perception, Cognition, and Emotions in NegotiationDocument23 pagesBuilding Blocks of Negotiation: Perception, Cognition, and Emotions in NegotiationJaideip KhaatakNo ratings yet

- Sequence of Events Lesson Plan Using UDLDocument8 pagesSequence of Events Lesson Plan Using UDLcpollo3100% (1)

- Research - First Long TestDocument4 pagesResearch - First Long TestStacy Ann VergaraNo ratings yet

- Research Methods - Planning - VariablesDocument1 pageResearch Methods - Planning - VariablesPradeep KumarNo ratings yet

- Leo Strauss - LOCKE Seminar (1958)Document332 pagesLeo Strauss - LOCKE Seminar (1958)Giordano BrunoNo ratings yet

- Philosophy Has Shaped The WorldDocument13 pagesPhilosophy Has Shaped The WorldPrince KhalidNo ratings yet

- Nihilism, Nature and The Collapse of The CosmosDocument20 pagesNihilism, Nature and The Collapse of The Cosmoscasper4321No ratings yet

- Product Lifecycle Management Advantages and ApproachDocument4 pagesProduct Lifecycle Management Advantages and ApproachJournal of ComputingNo ratings yet

- Informative Versus Persuasive Speeches - Types of Public SpeechesDocument11 pagesInformative Versus Persuasive Speeches - Types of Public Speechesmedisak73No ratings yet

- Impact of Facebook Usage On The Academic Grades: A Case StudyDocument5 pagesImpact of Facebook Usage On The Academic Grades: A Case StudyJournal of Computing100% (1)

- Cloud Computing: Deployment Issues For The Enterprise SystemsDocument5 pagesCloud Computing: Deployment Issues For The Enterprise SystemsJournal of ComputingNo ratings yet

- Problem Based LearningDocument8 pagesProblem Based Learningapi-290507434No ratings yet

- WEEK 2 Lecture Syllabus DesignDocument39 pagesWEEK 2 Lecture Syllabus DesignLee Kean WahNo ratings yet

- Hybrid Network Coding Peer-to-Peer Content DistributionDocument10 pagesHybrid Network Coding Peer-to-Peer Content DistributionJournal of ComputingNo ratings yet

- Divide and Conquer For Convex HullDocument8 pagesDivide and Conquer For Convex HullJournal of Computing100% (1)

- Business Process: The Model and The RealityDocument4 pagesBusiness Process: The Model and The RealityJournal of ComputingNo ratings yet

- Decision Support Model For Selection of Location Urban Green Public Open SpaceDocument6 pagesDecision Support Model For Selection of Location Urban Green Public Open SpaceJournal of Computing100% (1)

- Complex Event Processing - A SurveyDocument7 pagesComplex Event Processing - A SurveyJournal of ComputingNo ratings yet

- Hiding Image in Image by Five Modulus Method For Image SteganographyDocument5 pagesHiding Image in Image by Five Modulus Method For Image SteganographyJournal of Computing100% (1)

- Energy Efficient Routing Protocol Using Local Mobile Agent For Large Scale WSNsDocument6 pagesEnergy Efficient Routing Protocol Using Local Mobile Agent For Large Scale WSNsJournal of ComputingNo ratings yet

- K-Means Clustering and Affinity Clustering Based On Heterogeneous Transfer LearningDocument7 pagesK-Means Clustering and Affinity Clustering Based On Heterogeneous Transfer LearningJournal of ComputingNo ratings yet

- An Approach To Linear Spatial Filtering Method Based On Anytime Algorithm For Real-Time Image ProcessingDocument7 pagesAn Approach To Linear Spatial Filtering Method Based On Anytime Algorithm For Real-Time Image ProcessingJournal of ComputingNo ratings yet

- ESP and WritingDocument24 pagesESP and WritingRegina Rizkia UtamiNo ratings yet

- Multidimensional Thinking Beyond The Discourse (Imagination, Metaphor and Living Utopia)Document32 pagesMultidimensional Thinking Beyond The Discourse (Imagination, Metaphor and Living Utopia)Soledad Hernández BermúdezNo ratings yet

- 3rd Grade Greeting Card Lesson Plan-OnoratoDocument5 pages3rd Grade Greeting Card Lesson Plan-Onoratoapi-280922658No ratings yet

- A Critical Appraisal of Grices Cooperative PrinciDocument4 pagesA Critical Appraisal of Grices Cooperative PrinciVkvik007No ratings yet

- Division of Labor: 14 Principles of Management (HENRY FAYOL)Document11 pagesDivision of Labor: 14 Principles of Management (HENRY FAYOL)mary michelle m.miguelNo ratings yet

- Freedom and DisciplineDocument13 pagesFreedom and DisciplineBlack MaestroNo ratings yet

- Basistrening Presentasjon NTG 2015-EngelskDocument28 pagesBasistrening Presentasjon NTG 2015-Engelskapi-264075612No ratings yet

- Modes of ReadingDocument3 pagesModes of ReadingMaria Francessa AbatNo ratings yet

- Social Psychology in IndiaDocument4 pagesSocial Psychology in IndiaGargi YaduvanshiNo ratings yet

- SemioticsDocument3 pagesSemioticsapi-3701311100% (2)

- Katelyn Veteto Teacher Toolkit Content StrategiesDocument7 pagesKatelyn Veteto Teacher Toolkit Content Strategiesapi-399137202No ratings yet

- The Problem of Positivism in The Work of Nicos Poulantzas by Martin PlautDocument9 pagesThe Problem of Positivism in The Work of Nicos Poulantzas by Martin PlautCarlosNo ratings yet

- Bonjour's A Priori Justification of InductionDocument10 pagesBonjour's A Priori Justification of Inductionראול אפונטהNo ratings yet

- Greek PhilosophersDocument27 pagesGreek PhilosophersLNo ratings yet

- Sharpening The Focus of Force Field Analysis: Journal of Change Management January 2014Document23 pagesSharpening The Focus of Force Field Analysis: Journal of Change Management January 2014Jiana NasirNo ratings yet

- Stage 1: Denial: Common Phrases That Learners Might Use at This Stage AreDocument5 pagesStage 1: Denial: Common Phrases That Learners Might Use at This Stage AresheilaNo ratings yet

- Lesson 3.3 Basic Approaches To Leadrship: 05/19/2020 Abdurahman AbdulahiDocument19 pagesLesson 3.3 Basic Approaches To Leadrship: 05/19/2020 Abdurahman AbdulahidevaNo ratings yet

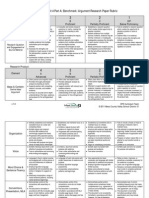

- Grade 6 ELA Unit4 Benchmark Assessment RubricDocument2 pagesGrade 6 ELA Unit4 Benchmark Assessment RubricBecky JohnsonNo ratings yet

- A History of Philosophic Ideas About SportDocument21 pagesA History of Philosophic Ideas About SportEmmanuel Estrada AvilaNo ratings yet