Professional Documents

Culture Documents

Chapter3 - BP

Chapter3 - BP

Uploaded by

Davin OktavianOriginal Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Chapter3 - BP

Chapter3 - BP

Uploaded by

Davin OktavianCopyright:

Available Formats

16

3. The Back Propagation Algorithm

Having established the basis of neural nets in the previous chapters, lets now have a

look at some practical networks, their applications and how they are trained.

Many hundreds of Neural Network types have been proposed over the years. In fact,

because Neural Nets are so widely studied (for example, by Computer Scientists,

Electronic Engineers, Biologists and Psychologists), they are given many different

names. Youll see them referred to as Artificial Neural Networks (ANNs),

Connectionism or Connectionist Models, Multi-layer Percpetrons (MLPs) and

Parallel Distributed Processing (PDP).

However, despite all the different terms and different types, there are a small group of

classic networks which are widely used and on which many others are based. These

are: Back Propagation, Hopfield Networks, Competitive Networks and networks

using Spiky Neurons. There are many variations even on these themes. Well consider

these networks in this and the following chapters, starting with Back Propagation.

3.1 The algorithm

Most people would consider the Back Propagation network to be the quintessential

Neural Net. Actually, Back Propagation

1,2,3

is the training or learning algorithm rather

than the network itself. The network used is generally of the simple type shown in

figure 1.1, in chapter 1 and in the examples up until now. These are called Feed-

Forward Networks (well see why in chapter 7 on Hopfield Networks) or

occasionally Multi-Layer Perceptrons (MLPs).

The network operates in exactly the same way as the others weve seen (if you need to

remind yourself, look at worked example 2.3). Now, lets consider what Back

Propagation is and how to use it.

A Back Propagation network learns by example. You give the algorithm examples of

what you want the network to do and it changes the networks weights so that, when

training is finished, it will give you the required output for a particular input. Back

Propagation networks are ideal for simple Pattern Recognition and Mapping Tasks

4

.

As just mentioned, to train the network you need to give it examples of what you want

the output you want (called the Target) for a particular input as shown in Figure 3.1.

Figure 3.1, a Back Propagation training set.

Inputs

Targets

(the output you 0 1 1 0 1 1

want for each pattern)

For this

particular

input

pattern to

the

network, we

would like

to get this

output.

17

So, if we put in the first pattern to the network, we would like the output to be 0 1 as

shown in figure 3.2 (a black pixel is represented by 1 and a white by 0 as in the

previous examples). The input and its corresponding target are called a Training Pair.

Figure 3.2, applying a training pair to a network.

Tutorial question 3.1:

Redraw the diagram in figure 3.2 to show the inputs and targets for the second

pattern.

Once the network is trained, it will provide the desired output for any of the input

patterns. Lets now look at how the training works.

The network is first initialised by setting up all its weights to be small random

numbers say between 1 and +1. Next, the input pattern is applied and the output

calculated (this is called the forward pass). The calculation gives an output which is

completely different to what you want (the Target), since all the weights are random.

We then calculate the Error of each neuron, which is essentially: Target - Actual

Output (i.e. What you want What you actually get). This error is then used

mathematically to change the weights in such a way that the error will get smaller. In

other words, the Output of each neuron will get closer to its Target (this part is called

the reverse pass). The process is repeated again and again until the error is minimal.

Let's do an example with an actual network to see how the process works. Well just

look at one connection initially, between a neuron in the output layer and one in the

hidden layer, figure 3.3.

Figure 3.3, a single connection learning in a Back Propagation network.

0

0 1

1

Input 1

Wed like this

neuron to give a

0 out.

Wed like this

neuron to give a

1 out.

Targets

Input 2

Input 3

Input 4

A B

C

W

AB

W

AC

18

The connection were interested in is between neuron A (a hidden layer neuron) and

neuron B (an output neuron) and has the weight W

AB.

The diagram also shows another

connection, between neuron A and C, but well return to that later. The algorithm

works like this:

1. First apply the inputs to the network and work out the output remember this

initial output could be anything, as the initial weights were random numbers.

2. Next work out the error for neuron B. The error is What you want What you

actually get, in other words:

Error

B

= Output

B

(1-Output

B

)(Target

B

Output

B

)

The Output(1-Output) term is necessary in the equation because of the Sigmoid

Function if we were only using a threshold neuron it would just be (Target

Output).

3. Change the weight. Let W

+

AB

be the new (trained) weight and W

AB

be the initial

weight.

W

+

AB

= W

AB

+ (Error

B

x Output

A

)

Notice that it is the output of the connecting neuron (neuron A) we use (not B). We

update all the weights in the output layer in this way.

4. Calculate the Errors for the hidden layer neurons. Unlike the output layer we cant

calculate these directly (because we dont have a Target), so we Back Propagate

them from the output layer (hence the name of the algorithm). This is done by

taking the Errors from the output neurons and running them back through the

weights to get the hidden layer errors. For example if neuron A is connected as

shown to B and C then we take the errors from B and C to generate an error for A.

Error

A

= Output

A

(1 - Output

A

)(Error

B

W

AB

+ Error

C

W

AC

)

Again, the factor Output (1 - Output

) is present because of the sigmoid squashing

function.

5. Having obtained the Error for the hidden layer neurons now proceed as in stage 3

to change the hidden layer weights. By repeating this method we can train a

network of any number of layers.

This may well have left some doubt in your mind about the operation, so lets clear

that up by explicitly showing all the calculations for a full sized network with 2

inputs, 3 hidden layer neurons and 2 output neurons as shown in figure 3.4. W

+

represents the new, recalculated, weight, whereas W (without the superscript)

represents the old weight.

19

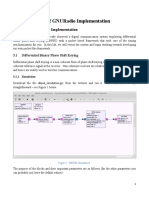

Figure 3.4, all the calculations for a reverse pass of Back Propagation.

1. Calculate errors of output neurons

= out

(1 - out

) (Target

- out

= out

(1 - out

) (Target

- out

)

2. Change output layer weights

W

+

A

= W

A

+

out

A

W

+

A

= W

A

+

out

A

W

+

B

= W

B

+

out

B

W

+

B

= W

B

+

out

B

W

+

C

= W

C

+

out

C

W

+

C

= W

C

+

out

C

3. Calculate (back-propagate) hidden layer errors

A

= out

A

(1 out

A

) (

W

A

+

W

A

)

B

= out

B

(1 out

B

) (

W

B

+

W

B

)

C

= out

C

(1 out

C

) (

W

C

+

W

C

)

4. Change hidden layer weights

W

+

A

= W

A

+

A

in

W

+

A

= W

+

A

+

A

in

W

+

B

= W

B

+

B

in

W

+

B

= W

+

B

+

B

in

W

+

C

= W

C

+

C

in

W

+

C

= W

+

C

+

C

in

The constant (called the learning rate, and nominally equal to one) is put in to speed

up or slow down the learning if required.

Inputs Hidden layer Outputs

A

B

C

W

A

W

A

W

B

W

B

W

C

W

C

W

A

W

A

W

B

W

B

W

C

W

C

20

To illustrate this lets do a worked Example.

Worked example 3.1:

Consider the simple network below:

Assume that the neurons have a Sigmoid activation function and

(i) Perform a forward pass on the network.

(ii) Perform a reverse pass (training) once (target = 0.5).

(iii) Perform a further forward pass and comment on the result.

Answer:

(i)

Input to top neuron = (0.35x0.1)+(0.9x0.8)=0.755. Out = 0.68.

Input to bottom neuron = (0.9x0.6)+(0.35x0.4) = 0.68. Out = 0.6637.

Input to final neuron = (0.3x0.68)+(0.9x0.6637) = 0.80133. Out = 0.69.

(ii)

Output error =(t-o)(1-o)o = (0.5-0.69)(1-0.69)0.69 = -0.0406.

New weights for output layer

w1

+

= w1+( x input) = 0.3 + (-0.0406x0.68) = 0.272392.

w2

+

= w2+( x input) = 0.9 + (-0.0406x0.6637) = 0.87305.

Errors for hidden layers:

1 = x w1 = -0.0406 x 0.272392 x (1-o)o = -2.406x10

-3

2= x w2 = -0.0406 x 0.87305 x (1-o)o = -7.916x10

-3

New hidden layer weights:

w3

+

=0.1 + (-2.406 x 10

-3

x 0.35) = 0.09916.

w4

+

= 0.8 + (-2.406 x 10

-3

x 0.9) = 0.7978.

w5

+

= 0.4 + (-7.916 x 10

-3

x 0.35) = 0.3972.

w6

+

= 0.6 + (-7.916 x 10

-3

x 0.9) = 0.5928.

(iii)

Old error was -0.19. New error is -0.18205. Therefore error has reduced.

Input

A = 0.35

Input

B = 0.9

Output

0.1

0.8

0.6

0.4

0.3

0.9

21

Tutorial question 3.2:

Try a training pass on the following example. Target = 1, Learning rate = 1:

See Tips for Programmers in appendix A for ideas about coding networks (but try

programming exercise 3.1 first).

3.2 Running the algorithm

Now that weve seen the algorithm in detail, lets look at how its run with a large

data set. Suppose we wanted to teach a network to recognise the first four letters of

the alphabet on a 5x7 grid, as shown in figure 3.5.

Figure 3.5, the first four letters of the alphabet.

The correct way to train the network is to apply the first letter and change ALL the

weights in the network ONCE (ie do all the calculations in figure 3.4 or worked

example 3.1, once only). Next apply the second letter and do the same, then the third

and so on. Once you have done all four letters, return to the first one again and repeat

the process until the error becomes small (which means that it is recognising all the

letters). Figure 3.6 summarises how the algorithm should work.

Input

A = 0.1

Input

B = 0.7

Output

0.1

0.5

0.2

0.3

0.2

0.1

22

Figure 3.6, the correct running of the algorithm.

One of the most common mistakes for beginners is to apply the first letter to the

network, run the algorithm and then repeat it until the error reduces, then apply the

second letter and do the same. If you did this, the network would learn to recognise

the first letter, then forget it and learn the second letter, etc and youd only end up

with the last letter the network learned.

3.3 Stopping training

When do we stop the training? We could stop it once the network can recognise all

the letters successfully, but in practice it is usual to let the error fall to a lower value

first. This ensures that the letters are all being well recognised. You can evaluate the

total error of the network by adding up all the errors for each individual neuron and

then for each pattern in turn to give you a total error as shown in figure 3.7.

Apply this

latter first.

Calculate the error and

change all the weights

in the network once.

Apply this

letter next

Change all the

weights again

Apply this

letter 3

rd

.

Change

weights

Finally apply

this letter.

Change

weights and

start again at A

23

Figure 3.7, total error for network.

In other words, the network keeps training all the patterns repeatedly until the total

error falls to some pre-determined low target value and then it stops. Note that when

calculating the final error used to stop the network (which is the sum of all the

individual neuron errors for each pattern) you need to make all errors positive so that

they add up and do not subtract (an error of -0.5 is just as bad as an error of +0.5).

Once the network has been trained, it should be able to recognise not just the perfect

patterns, but also corrupted or noisy versions as was explained in section 2.1. In fact if

we deliberately add some noisy versions of the patterns into the training set as we

train the network (say one in five), we can improve the networks performance in this

respect. The training may also benefit from applying the patterns in a random order to

the network.

There is a better way of working out when to stop network training - which is to use a

Validation Set. This stops the network overtraining (becoming too accurate, which

can lessen its performance). It does this by having a second set of patterns which are

Make total

error =0

Apply first

pattern and train

Get error for each

output neuron in

network, make

positive, and add to

total error.

If total error < final

target error then

stop

If last pattern has

trained, start again

with first pattern

otherwise load next

pattern and train

Stop yes

No

No, last

pattern

has not

trained

Yes, last

pattern

has

trained

24

noisy versions of the training set (but arent used for training themselves). Each time

after the network has trained; this set (called the Validation Set) is used to calculate an

error. When the error becomes low the network stops. Figure 3.8 shows the idea.

Figure 3.8, use of validation sets.

When the network has fully trained, the Validation Set error reaches a minimum.

When the network is overtraining (becoming too accurate) the validation set error

starts rising. If the network overtrains, it wont be able to handle noisy data so well.

This topic will be explored further in section 6.4.

3.4 Problems with Backpropagation

Backpropagtion has some problems associated with it. Perhaps the best known is

called Local Minima. This occurs because the algorithm always changes the

weights in such a way as to cause the error to fall. But the error might briefly have to

rise as part of a more general fall, as shown in figure 3.9. If this is the case, the

algorithm will gets stuck (because it cant go uphill) and the error will not decrease

further.

Figure 3.9, local minima.

There are several solutions to this problem. One is very simple and that is to reset the

weights to different random numbers and try training again (this can also solve

several other problems). Another solution is to add momentum to the weight

change. This means that the weight change this iteration depends not just on the

current error, but also on previous changes. For example:

Network

error

Training cycles

Error measured Error measured on

on training set validation set

Network

trained

Network

error

Local Minima

Weight

Global Minima - the

lowest error (the weight

value you really want to

find)

25

W

+

= W + Current change + (Change on previous iteration * constant)

Constant is < 1.

There are other lesser known problems with Backpropagation as well. These tend to

manifest themselves as the network gets larger, but many can be overcome by

reinitialising the weights to different starting values. Its also worth noting that many

variations on the standard Backpropagation Algorithm have been developed over the

years to overcome such problems

5,6

.

3.5 Network size

A commonly arising question regards the size of network to use for a particular

problem. The most commonly used networks consist of an input layer, a single hidden

layer and an output layer (the type of network shown in figure 3.4). The input layer

size is set by the type of pattern or input you want the network to process; for

example, in figure 3.2, the network must have 4 inputs because there are four pixels in

the pattern. Similarly, the size of the output layer is set by the number of patterns you

want to recognise and how you want to code these outputs, looking again at figure

3.2, for this problem we need two output neurons. This really leaves only the number

of hidden layer neurons to sort out. There are no hard and fast rules for this and the

network typically works well over a range of this variable. In the example shown in

figure 3.5 where we are recognising characters on a 5 x 7 grid (35 inputs), if we have

26 output neurons (one for each letter of the alphabet). The network will train to

recognise all 26 letters with anywhere between 6 and 22 hidden layer neurons. Below

6 neurons and the network hasnt got enough weights to store all the patterns, above

22 neurons the network becomes inefficient and doesnt perform as well. So, all in all,

the number of hidden layer neurons needs to be experimented with for the best results.

We will return to this question again in section 6.4.

3.6 Strengths and weaknesses

Finally, before leaving the subject of Back Propagation, let us spend a moment

considering its strengths and weaknesses. What it is very good at, is the recognition of

patterns of the type we have been discussing in this section (in fact it is usually better

than a human). They are presented directly to the network with each pattern well

positioned on a grid and correctly sized. What it cant do, is handle patterns in a noisy

scene, like recognising a face in a crowd or a letter in a page of print. So it gets

confused with what you might term an unconstrained environment. You have to

pre-process the data to get it into the right format (the correct size and position) before

letting the network loose on it. We will discuss this problem further in section 5.3.

Other, more advanced, networks which perform similar tasks to Back Propagation

MLPs include Radial Basis

7

networks and Support Vector Machines

8

.

26

The programming exercise below will test you knowledge of what you gave learned

so far and give you a simple network to play with.

Programming exercise 3.1:

Code, using a high level programming language, a neural network trained using Back

Propagation to recognise two patterns as shown below (dont look at the

programming tips in appendix A until youve finished your program):

Pattern number 1 Pattern number 2

Use the neural network topology shown below:

When pattern 1 is applied the outputs should be: Output 1 = 1, Output 0 = 0.

When pattern 2 is applied the outputs should be: Output 1 = 0, Output 0 = 1.

Start by making each weight and input a separate variable. Once this is working,

rewrite the program using arrays to store of the network parameters.

Once you have finished coding the network, try training some different patterns (or

more patterns) and adding noise into the patterns.

Input 1

Input 2

Output 1

Output 2

1

2

3

4

a

b

c

d

e

f

g

h

27

Answers to tutorial questions

3.1

3.2

(i) Forward Pass

Input to top neuron = (0.1x0.1)+(0.7x0.5)=0.36. Out = 0.589.

Input to bottom neuron = (0.1x0.3)+(0.7x0.2) = 0.17. Out = 0.5424.

Input to final neuron = (0.589x0.2)+(0.5424x0.1) = 0.17204. Out = 0.5429.

(ii) Reverse Pass

Output error =(t-o)(1-o)o = (1-0.5429)(1-0.5429)0.5429 = 0.11343.

New weights for output layer

w1(+) = w1+( x input) = 0.2+(0.11343x0.589) = 0.2668.

w2(+) = w2+( x input) = 0.1+(0.11343x0.5424)=0.16152.

Errors for hidden layers:

1 = xw1 = 0.11343x0.2668= 0.030263(x(1-o)o)= 0.007326.

2= xw2= 0.11343x0.16152=0.018321(x(1-o)o)= 0.004547.

New hidden layer weights:

w3(+)=0.1+(0.007326x0.1)=0.1007326.

w4(+) = 0.5+(0.007326x0.7)=0.505128.

w5(+)=0.3+(0.004547x0.1)=0.3004547.

w6(+) = 0.2+(0.004547x0.7)=0.20318.

1

1 0

0

Input 1

Wed like this

neuron to give a

1 out.

Wed like this

neuron to give a

0 out.

Targets

Input 2

Input 3

Input 4

You might also like

- P66 B1 M5 Lufthansa PDFDocument196 pagesP66 B1 M5 Lufthansa PDFDAVID FAJARDO PUERTO100% (1)

- King-Rook Vs King With Neural NetworksDocument10 pagesKing-Rook Vs King With Neural NetworksfuzzieNo ratings yet

- Receipt of Lab Report Submission (To Be Kept by Student)Document4 pagesReceipt of Lab Report Submission (To Be Kept by Student)Dylan YongNo ratings yet

- MATLAB by Examples - Starting With Neural Network in MatlabDocument6 pagesMATLAB by Examples - Starting With Neural Network in Matlabzhee_pirateNo ratings yet

- Neuron ModelDocument8 pagesNeuron Modelعبد السلام حسينNo ratings yet

- Mind - How To Build A Neural Network (Part One)Document9 pagesMind - How To Build A Neural Network (Part One)Marcos Moreira AlvesNo ratings yet

- Multiple-Layer Networks Backpropagation AlgorithmsDocument46 pagesMultiple-Layer Networks Backpropagation AlgorithmsZa'imahPermatasariNo ratings yet

- 3 2MLP Extra NotesDocument27 pages3 2MLP Extra Notesyoussef hossamNo ratings yet

- Back-Propagation Algorithm of CHBPN CodeDocument10 pagesBack-Propagation Algorithm of CHBPN Codesch203No ratings yet

- A Multilayer Feed-Forward Neural NetworkDocument9 pagesA Multilayer Feed-Forward Neural NetworkdebmatraNo ratings yet

- Pattern Recognition Using Neural Network (Project Proposal For Image Processing)Document6 pagesPattern Recognition Using Neural Network (Project Proposal For Image Processing)Dil Prasad KunwarNo ratings yet

- Chap 7 Neural NetworksDocument42 pagesChap 7 Neural NetworksRashed KaiserNo ratings yet

- Multi-Layer Perceptron TutorialDocument87 pagesMulti-Layer Perceptron TutorialSari AyuNo ratings yet

- Understanding and Creating Neural NetworksDocument69 pagesUnderstanding and Creating Neural Networksnegisid126No ratings yet

- Neural BP 02 PDFDocument12 pagesNeural BP 02 PDFAlshabwani SalehNo ratings yet

- Cp5191 MLT Unit IIDocument27 pagesCp5191 MLT Unit IIbala_07123No ratings yet

- Interview Preparation KitDocument132 pagesInterview Preparation KitSus ManNo ratings yet

- Assignment 4Document4 pagesAssignment 4SelmanurtoNo ratings yet

- Understanding Backpropagation Algorithm - Towards Data ScienceDocument11 pagesUnderstanding Backpropagation Algorithm - Towards Data ScienceKashaf BakaliNo ratings yet

- BackpropagationDocument12 pagesBackpropagationali.nabeel246230No ratings yet

- Neural Network GUI TutorialDocument11 pagesNeural Network GUI TutorialsunnymeharwalNo ratings yet

- PPT2 NN - Neuron ModelDocument26 pagesPPT2 NN - Neuron ModelMuhammad Harith B. BadzliNo ratings yet

- Ann R16 Unit 4 PDFDocument16 pagesAnn R16 Unit 4 PDFsricharanreddymamidiNo ratings yet

- Unit 2Document38 pagesUnit 2you • were • trolledNo ratings yet

- Problem Set 1: Perceptron Learning: Omputer Cience (Spring Term 2005) Neural Networks & Graphical ModelsDocument5 pagesProblem Set 1: Perceptron Learning: Omputer Cience (Spring Term 2005) Neural Networks & Graphical ModelsHilton SeheresNo ratings yet

- Principles of Training Multi-Layer Neural Network Using BackpropagationDocument15 pagesPrinciples of Training Multi-Layer Neural Network Using Backpropagationkamalamdharman100% (1)

- Back-Propagation Is Very Simple. Who Made It ComplicatedDocument26 pagesBack-Propagation Is Very Simple. Who Made It ComplicatedEleodor Paul CirlugeaNo ratings yet

- Artificial Neural Networks - Lect - 3Document16 pagesArtificial Neural Networks - Lect - 3ma5395822No ratings yet

- Neural Network: Throughout The Whole Network, Rather Than at Specific LocationsDocument8 pagesNeural Network: Throughout The Whole Network, Rather Than at Specific LocationsSrijal ManandharNo ratings yet

- The BACKPROPAGATION Algorithm: KD KDDocument10 pagesThe BACKPROPAGATION Algorithm: KD KDSwathi ReddyNo ratings yet

- The BACKPROPAGATION Algorithm: KD KDDocument10 pagesThe BACKPROPAGATION Algorithm: KD KDSwathi ReddyNo ratings yet

- The Perceptron, Delta Rule and Its VariantsDocument7 pagesThe Perceptron, Delta Rule and Its VariantsMaryam FarisNo ratings yet

- Tuto 6 Optimisation ENSIADocument3 pagesTuto 6 Optimisation ENSIAsarra.arabNo ratings yet

- Programacio Basica 2Document20 pagesProgramacio Basica 2guille1989No ratings yet

- 0111CS191028Document4 pages0111CS19102870madhureeNo ratings yet

- Chapter 3Document12 pagesChapter 3HARSH SHUKLA IET LucknowNo ratings yet

- Neural NetworksDocument27 pagesNeural NetworksDania DallahNo ratings yet

- D.Y. Patil College of Engineering, Akurdi Department of Electronics & Telecommunication EngineeringDocument41 pagesD.Y. Patil College of Engineering, Akurdi Department of Electronics & Telecommunication EngineeringP SNo ratings yet

- Simulating RAM in ClojureDocument43 pagesSimulating RAM in ClojureJose Luis LoyaNo ratings yet

- Components of Artificial Neural NetworksDocument27 pagesComponents of Artificial Neural NetworksEmy MuhamedNo ratings yet

- Seminar AnnDocument27 pagesSeminar AnnShifa ThasneemNo ratings yet

- Artificial Neural NetworkDocument3 pagesArtificial Neural NetworkmUZANo ratings yet

- Neural Net NotesDocument7 pagesNeural Net Notesdiankusuma123No ratings yet

- Backpropagation AlgorithmDocument29 pagesBackpropagation AlgorithmNhật Khoa DươngNo ratings yet

- ANN Simulink ExamplesDocument14 pagesANN Simulink ExamplesSantosh NandaNo ratings yet

- Application of Artificial Neural NetworkDocument7 pagesApplication of Artificial Neural NetworkRahul Kumar JhaNo ratings yet

- Lab ANDandXOR REGRESSION ANNDocument13 pagesLab ANDandXOR REGRESSION ANNtechoverlord.contactNo ratings yet

- Convolution Lab: 0.1 Short Discussion of Calculating ConvolutionsDocument7 pagesConvolution Lab: 0.1 Short Discussion of Calculating Convolutionsdervisis1No ratings yet

- C# Artificial Intelligence (Ai) Programming - A Basic Object Oriented (Oop) Framework For Neural PDFDocument18 pagesC# Artificial Intelligence (Ai) Programming - A Basic Object Oriented (Oop) Framework For Neural PDFjonathanbc25100% (1)

- FAM Fuzzy Associative MemoryDocument19 pagesFAM Fuzzy Associative Memorydessayaniputri100% (1)

- 01b. neuralNetNumpy - ExplanationDocument2 pages01b. neuralNetNumpy - ExplanationArquitetogeek PontocomNo ratings yet

- Name:-Time Allowed: - 3 Hours: Artificial Neural Networks ExamDocument11 pagesName:-Time Allowed: - 3 Hours: Artificial Neural Networks ExamahmedNo ratings yet

- Neural Networks Question BankDocument42 pagesNeural Networks Question BankJosphat OtienoNo ratings yet

- Feedforward Propagation: 1.1 Visualizing The DataDocument11 pagesFeedforward Propagation: 1.1 Visualizing The DataAstryiah FaineNo ratings yet

- Artificial Neural Network Application in Logic System: Siddharth Saxena TCET, MumbaiDocument5 pagesArtificial Neural Network Application in Logic System: Siddharth Saxena TCET, Mumbaisidd14feb92No ratings yet

- COMP 110 Project 6: Sudoku PuzzleDocument9 pagesCOMP 110 Project 6: Sudoku PuzzleDevansh DubeyNo ratings yet

- Training Artificial Neural Networks For Fuzzy LogicDocument15 pagesTraining Artificial Neural Networks For Fuzzy LogicHala EidNo ratings yet

- Lab 2 Gnuradio ImplementationDocument8 pagesLab 2 Gnuradio ImplementationSreeraj RajendranNo ratings yet

- BackpropagationDocument7 pagesBackpropagationShruti JamsandekarNo ratings yet

- BAJAJ ELECTRICALS LIMITED - AddendumDocument1 pageBAJAJ ELECTRICALS LIMITED - AddendumRohan BatraNo ratings yet

- Covidien ForceTriad ESU - Service Manual PDFDocument212 pagesCovidien ForceTriad ESU - Service Manual PDFsergio ribeiroNo ratings yet

- Evaluation ToolkitDocument34 pagesEvaluation ToolkitshaberaNo ratings yet

- Pes Institute of Technology & Management, ,: Prerana Educational & Social Trust (R), ShivamoggaDocument2 pagesPes Institute of Technology & Management, ,: Prerana Educational & Social Trust (R), ShivamoggaarjuninformationNo ratings yet

- 0705181607main - Afd Dealer List-7518Document222 pages0705181607main - Afd Dealer List-7518rajendra kumar100% (1)

- Chemistry - CBCS 4TH SEMESTER PRACTICAL CHEMISTRYDocument5 pagesChemistry - CBCS 4TH SEMESTER PRACTICAL CHEMISTRYkpatel2932004No ratings yet

- Digital Marketing Strategic Planning IntegrationDocument61 pagesDigital Marketing Strategic Planning Integrationruby.nicholson732100% (54)

- Scheduling Guide For Program ManagersDocument96 pagesScheduling Guide For Program Managersapi-27145250100% (3)

- DataIOAPI UserGuideDocument34 pagesDataIOAPI UserGuideHakim SabRiNo ratings yet

- LAB #5 CPU Scheduling Algorithms-1: Objective TheoryDocument2 pagesLAB #5 CPU Scheduling Algorithms-1: Objective Theorykanwar zainNo ratings yet

- SCRIPT - SENIOR HIGH SCHOOL EditedDocument3 pagesSCRIPT - SENIOR HIGH SCHOOL EditedJesary Marc ArnosaNo ratings yet

- TASK 4 - Self Reflection - Communicating Insights and AnalysisDocument2 pagesTASK 4 - Self Reflection - Communicating Insights and Analysis20T3136 Nainika TrivediNo ratings yet

- Question Bank of Managerial Economics - 4 MarkDocument23 pagesQuestion Bank of Managerial Economics - 4 MarklakkuMS100% (4)

- ASTM A-27M-87 Steel Castings, Carbon, For General Application PDFDocument3 pagesASTM A-27M-87 Steel Castings, Carbon, For General Application PDFErwin GultomNo ratings yet

- Cleaning Guide Gen2 Gen2 Univ Gen3 MNL 000054 DDocument13 pagesCleaning Guide Gen2 Gen2 Univ Gen3 MNL 000054 DMichael LawrenceNo ratings yet

- 4TH Quarter Lesson PlanDocument8 pages4TH Quarter Lesson PlanDG SanchezNo ratings yet

- Report On Saras DairyDocument91 pagesReport On Saras DairyAjay Singh Rathore100% (9)

- Rel APMDocument20 pagesRel APMoptisearch100% (1)

- Bi ComponentDocument13 pagesBi ComponentA.K.M. Rashedul IslamNo ratings yet

- A Vegan Diet in KenyaDocument2 pagesA Vegan Diet in Kenyalarrylico2017No ratings yet

- A Choice of Three BookcasesDocument8 pagesA Choice of Three Bookcasesdanio69No ratings yet

- ISE328 QlikSense (Part A) - 18102021Document19 pagesISE328 QlikSense (Part A) - 18102021LIAONo ratings yet

- WHO Surgical Safety ChecklistDocument1 pageWHO Surgical Safety ChecklistArabelle GO100% (1)

- Innovative Strategies Used by Artisans To Promote HandicraftsDocument16 pagesInnovative Strategies Used by Artisans To Promote HandicraftsMadhviJainNo ratings yet

- Managerial Economics PDFDocument110 pagesManagerial Economics PDFSean Lester S. NombradoNo ratings yet

- Quality AssuranceDocument6 pagesQuality AssurancePhoebe LlameloNo ratings yet

- DLL - Science 6 - Q2 - W7Document8 pagesDLL - Science 6 - Q2 - W7Mak Oy MontefalconNo ratings yet

- Penalty Point Will Be Deducted For Incorrect Answers.) : ST THDocument4 pagesPenalty Point Will Be Deducted For Incorrect Answers.) : ST THISABELITA100% (1)