Big-O Algorithm Complexity Cheat Sheet

Uploaded by Q12W3ER4


Complexities!


Searching

| Algorithm | Data Structure | Time Complexity (Average) | Time Complexity (Worst) | Space Complexity (Worst) |
|---|---|---|---|---|
| Depth First Search (DFS) | Graph of \|V\| vertices and \|E\| edges | - | O(\|E\| + \|V\|) | O(\|V\|) |
| Breadth First Search (BFS) | Graph of \|V\| vertices and \|E\| edges | - | O(\|E\| + \|V\|) | O(\|V\|) |
| Binary search | Sorted array of n elements | O(log(n)) | O(log(n)) | O(1) |
| Linear (Brute Force) | Array | O(n) | O(n) | O(1) |
| Shortest path by Dijkstra, using a Min-heap as priority queue | Graph with \|V\| vertices and \|E\| edges | O((\|V\| + \|E\|) log \|V\|) | O((\|V\| + \|E\|) log \|V\|) | O(\|V\|) |
| Shortest path by Dijkstra, using an unsorted array as priority queue | Graph with \|V\| vertices and \|E\| edges | O(\|V\|^2) | O(\|V\|^2) | O(\|V\|) |
| Shortest path by Bellman-Ford | Graph with \|V\| vertices and \|E\| edges | O(\|V\|\|E\|) | O(\|V\|\|E\|) | O(\|V\|) |
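To make the min-heap row for Dijkstra concrete, here is a minimal Python sketch using the standard `heapq` module. The function name `dijkstra` and the dictionary-of-lists graph layout are assumptions chosen for illustration. This is the "lazy deletion" variant: instead of a decrease-key operation, it pushes duplicate entries and skips stale ones, so the heap can grow to O(|E|) entries. The time bound is still O((|V| + |E|) log |V|), but the auxiliary space is O(|V| + |E|) rather than the O(|V|) listed above.

```python
import heapq

def dijkstra(graph, source):
    """Shortest distances from source.

    graph maps each node to a list of (neighbor, weight) pairs;
    weights are assumed to be non-negative.
    """
    dist = {source: 0}
    heap = [(0, source)]  # (distance so far, node)
    while heap:
        d, u = heapq.heappop(heap)           # O(log n) per pop
        if d > dist.get(u, float("inf")):
            continue                         # stale entry, a shorter path was already found
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))  # O(log n) per push
    return dist

# Hypothetical example graph (directed, weighted).
example_graph = {
    "a": [("b", 1), ("c", 4)],
    "b": [("c", 2)],
    "c": [("d", 1)],
    "d": [],
}
```

Each edge triggers at most one push and each push is later popped once, which is where the log factor per vertex and per edge comes from.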

Sorting

| Algorithm | Data Structure | Time Complexity (Best) | Time Complexity (Average) | Time Complexity (Worst) | Worst Case Auxiliary Space Complexity |
|---|---|---|---|---|---|
| Quicksort | Array | O(n log(n)) | O(n log(n)) | O(n^2) | O(n) |
| Mergesort | Array | O(n log(n)) | O(n log(n)) | O(n log(n)) | O(n) |
| Heapsort | Array | O(n log(n)) | O(n log(n)) | O(n log(n)) | O(1) |
| Bubble Sort | Array | O(n) | O(n^2) | O(n^2) | O(1) |
| Insertion Sort | Array | O(n) | O(n^2) | O(n^2) | O(1) |
| Selection Sort | Array | O(n^2) | O(n^2) | O(n^2) | O(1) |
| Bucket Sort | Array | O(n+k) | O(n+k) | O(n^2) | O(nk) |
| Radix Sort | Array | O(nk) | O(nk) | O(nk) | O(n+k) |
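The gap between the best and worst columns for insertion sort can be checked by counting comparisons. The sketch below is illustrative only; the function name and the returned comparison counter are assumptions added for the demonstration.

```python
def insertion_sort(items):
    """Sort a copy of items; return (sorted_list, number_of_comparisons)."""
    a = list(items)
    comparisons = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        while j >= 0:
            comparisons += 1
            if a[j] <= key:
                break            # key is already in place relative to a[j]
            a[j + 1] = a[j]      # shift the larger element one slot right
            j -= 1
        a[j + 1] = key
    return a, comparisons

n = 8
_, best = insertion_sort(range(n))              # already sorted input
_, worst = insertion_sort(range(n - 1, -1, -1))  # reverse-sorted input
```

On sorted input every element needs a single comparison, giving n - 1 in total (linear). On reverse-sorted input element i is compared against all i predecessors, giving n(n - 1)/2 in total (quadratic).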

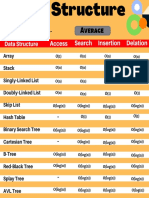

Data Structures

| Data Structure | Indexing (Average) | Search (Average) | Insertion (Average) | Deletion (Average) | Indexing (Worst) | Search (Worst) | Insertion (Worst) | Deletion (Worst) | Space Complexity (Worst) |
|---|---|---|---|---|---|---|---|---|---|
| Basic Array | O(1) | O(n) | - | - | O(1) | O(n) | - | - | O(n) |
| Dynamic Array | O(1) | O(n) | O(n) | O(n) | O(1) | O(n) | O(n) | O(n) | O(n) |
| Singly-Linked List | O(n) | O(n) | O(1) | O(1) | O(n) | O(n) | O(1) | O(1) | O(n) |
| Doubly-Linked List | O(n) | O(n) | O(1) | O(1) | O(n) | O(n) | O(1) | O(1) | O(n) |
| Skip List | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(n) | O(n) | O(n) | O(n) | O(n log(n)) |
| Hash Table | - | O(1) | O(1) | O(1) | - | O(n) | O(n) | O(n) | O(n) |
| Binary Search Tree | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(n) | O(n) | O(n) | O(n) | O(n) |
| Cartesian Tree | - | O(log(n)) | O(log(n)) | O(log(n)) | - | O(n) | O(n) | O(n) | O(n) |
| B-Tree | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(n) |
| Red-Black Tree | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(n) |
| Splay Tree | - | O(log(n)) | O(log(n)) | O(log(n)) | - | O(log(n)) | O(log(n)) | O(log(n)) | O(n) |
| AVL Tree | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(n) |
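The difference between the average and worst columns for a plain binary search tree comes down to tree height. As a rough sketch (the `Node`, `insert`, `build`, and `height` names are illustrative assumptions, and the tree does no rebalancing), inserting keys in sorted order produces a chain of height n, while a shuffled order usually gives a height proportional to log(n).

```python
import random

class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None

def insert(root, key):
    """Insert key into an unbalanced BST iteratively; return the root."""
    if root is None:
        return Node(key)
    node = root
    while True:
        if key < node.key:
            if node.left is None:
                node.left = Node(key)
                return root
            node = node.left
        else:
            if node.right is None:
                node.right = Node(key)
                return root
            node = node.right

def build(keys):
    root = None
    for key in keys:
        root = insert(root, key)
    return root

def height(root):
    """Number of nodes on the longest root-to-leaf path."""
    best = 0
    stack = [(root, 1)] if root is not None else []
    while stack:
        node, depth = stack.pop()
        best = max(best, depth)
        for child in (node.left, node.right):
            if child is not None:
                stack.append((child, depth + 1))
    return best

keys = list(range(1000))
sorted_height = height(build(keys))    # degenerate chain: one node per level
random.Random(0).shuffle(keys)         # fixed seed so the run is repeatable
shuffled_height = height(build(keys))  # much shorter in practice
```

Search, insertion, and deletion all walk one root-to-leaf path, so their cost tracks the height. Self-balancing trees such as the red-black and AVL rows keep the height at O(log(n)) regardless of insertion order.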

Heaps

| Heaps | Heapify | Find Max | Extract Max | Increase Key | Insert | Delete | Merge |
|---|---|---|---|---|---|---|---|
| Linked List (sorted) | - | O(1) | O(1) | O(n) | O(n) | O(1) | O(m+n) |
| Linked List (unsorted) | - | O(n) | O(n) | O(1) | O(1) | O(1) | O(1) |
| Binary Heap | O(n) | O(1) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(m+n) |
| Binomial Heap | - | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) | O(log(n)) |
| Fibonacci Heap | - | O(1) | O(log(n))* | O(1)* | O(1) | O(log(n))* | O(1) |

\* Amortized time.
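The binary heap row can be tried out with Python's standard `heapq` module. Note that `heapq` implements a min-heap, so this sketch stores negated values to get max-heap behavior; the wrapper function names are assumptions made for illustration.

```python
import heapq

def build_max_heap(values):
    """Heapify: O(n). Values are negated because heapq is a min-heap."""
    heap = [-v for v in values]
    heapq.heapify(heap)
    return heap

def find_max(heap):
    """Find Max: O(1), the root sits at index 0."""
    return -heap[0]

def extract_max(heap):
    """Extract Max: O(log(n)), the new root is sifted down."""
    return -heapq.heappop(heap)

def insert(heap, value):
    """Insert: O(log(n)), the new leaf is sifted up."""
    heapq.heappush(heap, -value)

heap = build_max_heap([3, 1, 4, 1, 5, 9, 2, 6])
largest = find_max(heap)   # 9, without modifying the heap
```

Negation only works for numeric values; for other types a wrapper with a reversed comparison would be needed.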

Graphs

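| Node / Edge Management | Storage | Add Vertex | Add Edge | Remove Vertex | Remove Edge | Query |
|---|---|---|---|---|---|---|
| Adjacency list | O(\|V\|+\|E\|) | O(1) | O(1) | O(\|V\| + \|E\|) | O(\|E\|) | O(\|V\|) |
| Incidence list | O(\|V\|+\|E\|) | O(1) | O(1) | O(\|E\|) | O(\|E\|) | O(\|E\|) |
| Adjacency matrix | O(\|V\|^2) | O(\|V\|^2) | O(1) | O(\|V\|^2) | O(1) | O(1) |
| Incidence matrix | O(\|V\| ⋅ \|E\|) | O(\|V\| ⋅ \|E\|) | O(\|V\| ⋅ \|E\|) | O(\|V\| ⋅ \|E\|) | O(\|V\| ⋅ \|E\|) | O(\|E\|) |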
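The trade-off between the adjacency list and adjacency matrix rows shows up most clearly in the edge query. The following is a minimal sketch for undirected graphs; the class and method names are assumptions, and the matrix version uses a fixed vertex count with integer vertex labels 0..n-1.

```python
class AdjacencyList:
    """Storage O(|V| + |E|); edge query scans one neighbor list."""

    def __init__(self):
        self.neighbors = {}

    def add_vertex(self, v):
        self.neighbors.setdefault(v, [])   # O(1)

    def add_edge(self, u, v):
        self.add_vertex(u)
        self.add_vertex(v)
        self.neighbors[u].append(v)        # O(1)
        self.neighbors[v].append(u)

    def has_edge(self, u, v):
        # Linear scan over u's neighbors: at most O(|V|) entries in a simple graph.
        return v in self.neighbors.get(u, [])


class AdjacencyMatrix:
    """Storage O(|V|^2); edge query is a single cell lookup."""

    def __init__(self, vertex_count):
        self.cells = [[False] * vertex_count for _ in range(vertex_count)]

    def add_edge(self, u, v):
        self.cells[u][v] = True            # O(1)
        self.cells[v][u] = True

    def has_edge(self, u, v):
        return self.cells[u][v]            # O(1)
```

Adding a vertex to the matrix version would mean allocating a larger matrix and copying every cell, which is where the O(|V|^2) entry in the Add Vertex column comes from.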

Notation for asymptotic growth

| letter | bound | growth |
|---|---|---|
| (theta) Θ | upper and lower, tight [1] | equal [2] |
| (big-oh) O | upper, tightness unknown | less than or equal [3] |
| (small-oh) o | upper, not tight | less than |
| (big omega) Ω | lower, tightness unknown | greater than or equal |
| (small omega) ω | lower, not tight | greater than |

[1] Big O is an upper bound, while Omega is a lower bound. Theta requires both Big O and Omega, which is why it is referred to as a tight bound (it must be both the upper and the lower bound). For example, an algorithm taking Ω(n log n) takes at least n log n time but has no upper limit. A bound of Θ(n log n) is far preferable, since it says the algorithm takes AT LEAST n log n (Ω(n log n)) and NO MORE THAN n log n (O(n log n)), up to constant factors.

SO

[2] f(n) = Θ(g(n)) means f (the running time of the algorithm) grows exactly like g when n (the input size) gets larger. In other words, the growth rate of f(n) is asymptotically proportional to g(n).

[3] Same idea, except that here the growth rate of f(n) is no faster than that of g(n). Big-oh is the most widely used of these notations because it is typically applied to bound worst-case behavior.

In short, if an algorithm is __ then its performance is __

| algorithm | performance |
|---|---|
| o(n) | < n |
| O(n) | ≤ n |
| Θ(n) | = n |
| Ω(n) | ≥ n |
| ω(n) | > n |
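For reference, the informal "less than", "equal", and "greater than" readings above correspond to the standard formal definitions, stated here for functions that are non-negative for large n. The constants c, c_1, c_2 and the threshold n_0 are the usual symbols from textbook treatments, not names taken from this sheet.

```latex
\begin{align*}
f(n) = O(g(n))      &\iff \exists\, c > 0,\ n_0 : \forall n \ge n_0,\ f(n) \le c\, g(n) \\
f(n) = \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 : \forall n \ge n_0,\ f(n) \ge c\, g(n) \\
f(n) = \Theta(g(n)) &\iff \exists\, c_1, c_2 > 0,\ n_0 : \forall n \ge n_0,\ c_1\, g(n) \le f(n) \le c_2\, g(n) \\
f(n) = o(g(n))      &\iff \forall\, c > 0\ \exists\, n_0 : \forall n \ge n_0,\ f(n) < c\, g(n) \\
f(n) = \omega(g(n)) &\iff \forall\, c > 0\ \exists\, n_0 : \forall n \ge n_0,\ f(n) > c\, g(n)
\end{align*}
```

As a small worked case, f(n) = 3n^2 + 5n is Θ(n^2): taking c_1 = 3, c_2 = 4, and n_0 = 5 gives 3n^2 ≤ 3n^2 + 5n ≤ 4n^2 for all n ≥ 5, because 5n ≤ n^2 exactly when n ≥ 5.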

Big-O Complexity Chart
