Richardsons Least Square Notes

Extracted from 2003 Inverse Problems Lecture Notes of:

Randall M. Richardson and George Zandt

Department of Geosciences

University of Arizona

Tucson, Arizona 85721

Geosciences 567: CHAPTER 3 (RMR/GZ)

3.4 Minimizing the Misfit: Least Squares

3.4.1 Least Squares Problem for a Straight Line

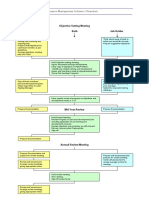

Consider the figure below (after Figure 3.1 from Menke, page 36):

Figure: (a) Least squares fitting of a straight line to (z, d) pairs. (b) The error $e_i$ for each observation is the difference between the observed and predicted datum: $e_i = d_i^{obs} - d_i^{pre}$.

The ith predicted datum $d_i^{pre}$ for the straight line problem is given by

$$d_i^{pre} = m_1 + m_2 z_i \tag{3.5}$$

where the two unknowns, $m_1$ and $m_2$, are the intercept and slope of the line, respectively, and $z_i$ is the value along the z axis where the ith observation is made.

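For N points we have a system of N such equations that can be written in matrix form as:

$$\begin{bmatrix} d_1 \\ \vdots \\ d_i \\ \vdots \\ d_N \end{bmatrix} = \begin{bmatrix} 1 & z_1 \\ \vdots & \vdots \\ 1 & z_i \\ \vdots & \vdots \\ 1 & z_N \end{bmatrix} \begin{bmatrix} m_1 \\ m_2 \end{bmatrix} \tag{3.6}$$

Or, in the by now familiar matrix notation, as

$$\underset{(N \times 1)}{\mathbf{d}} = \underset{(N \times 2)}{\mathbf{G}} \; \underset{(2 \times 1)}{\mathbf{m}} \tag{1.13}$$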


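The total misfit E is given by

$$E = \mathbf{e}^T\mathbf{e} = \sum_{i=1}^{N} \left[ d_i^{obs} - d_i^{pre} \right]^2 \tag{3.7}$$

$$= \sum_{i=1}^{N} \left[ d_i^{obs} - (m_1 + m_2 z_i) \right]^2 \tag{3.8}$$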

Dropping the "obs" in the notation for the observed data, we have

$$E = \sum_{i=1}^{N} \left[ d_i^2 - 2 d_i m_1 - 2 d_i m_2 z_i + 2 m_1 m_2 z_i + m_1^2 + m_2^2 z_i^2 \right] \tag{3.9}$$

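Then, taking the partials of E with respect to $m_1$ and $m_2$, respectively, and setting them to zero yields the following equations:

$$\frac{\partial E}{\partial m_1} = 2 N m_1 - 2 \sum_{i=1}^{N} d_i + 2 m_2 \sum_{i=1}^{N} z_i = 0 \tag{3.10}$$

and

$$\frac{\partial E}{\partial m_2} = -2 \sum_{i=1}^{N} d_i z_i + 2 m_1 \sum_{i=1}^{N} z_i + 2 m_2 \sum_{i=1}^{N} z_i^2 = 0 \tag{3.11}$$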
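The partial derivatives in Equations (3.10) and (3.11) can be spot-checked against a finite-difference estimate of the misfit in Equation (3.8). The following is a minimal sketch in plain Python; the function names and the data values are hypothetical and chosen only for illustration.

```python
def misfit(m1, m2, z, d):
    """Total misfit E of Equation (3.8) for the line d = m1 + m2*z."""
    return sum((di - (m1 + m2 * zi)) ** 2 for zi, di in zip(z, d))


def misfit_gradient(m1, m2, z, d):
    """Analytic partials of E, following Equations (3.10) and (3.11)."""
    n = len(z)
    sum_z = sum(z)
    sum_z2 = sum(zi * zi for zi in z)
    sum_d = sum(d)
    sum_dz = sum(di * zi for zi, di in zip(z, d))
    dE_dm1 = 2 * n * m1 - 2 * sum_d + 2 * m2 * sum_z
    dE_dm2 = -2 * sum_dz + 2 * m1 * sum_z + 2 * m2 * sum_z2
    return dE_dm1, dE_dm2


def numerical_gradient(m1, m2, z, d, h=1e-6):
    """Central-difference estimate of the same two partials."""
    g1 = (misfit(m1 + h, m2, z, d) - misfit(m1 - h, m2, z, d)) / (2 * h)
    g2 = (misfit(m1, m2 + h, z, d) - misfit(m1, m2 - h, z, d)) / (2 * h)
    return g1, g2


# Hypothetical observations (not from the notes).
z = [0.0, 1.0, 2.0, 3.0]
d = [1.1, 2.9, 5.2, 6.8]

# Evaluate both gradients at an arbitrary trial model (m1, m2) = (0.5, 1.5).
analytic = misfit_gradient(0.5, 1.5, z, d)
numeric = numerical_gradient(0.5, 1.5, z, d)
print(analytic, numeric)
```

Because E is quadratic in $m_1$ and $m_2$, the central difference should agree with the analytic partials up to rounding error.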

Rewriting Equations (3.10) and (3.11) above yields

$$N m_1 + m_2 \sum z_i = \sum d_i \tag{3.12}$$

and

$$m_1 \sum z_i + m_2 \sum z_i^2 = \sum d_i z_i \tag{3.13}$$

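Combining the two equations in matrix notation in the form Am = b yields

$$\begin{bmatrix} N & \sum z_i \\ \sum z_i & \sum z_i^2 \end{bmatrix} \begin{bmatrix} m_1 \\ m_2 \end{bmatrix} = \begin{bmatrix} \sum d_i \\ \sum d_i z_i \end{bmatrix} \tag{3.14}$$

or simply

$$\underset{(2 \times 2)}{\mathbf{A}} \; \underset{(2 \times 1)}{\mathbf{m}} = \underset{(2 \times 1)}{\mathbf{b}} \tag{3.15}$$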

Note that by the above procedure we have reduced the problem from one with N equations in two

unknowns (m1 and m2) in Gm = d to one with two equations in the same two unknowns in Am =

b.
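The reduced 2 × 2 system in Equation (3.14) is small enough to solve in closed form. The sketch below does so with the explicit inverse of a 2 × 2 matrix; the function name `fit_line` and the sample data are assumptions made for illustration, not part of the notes.

```python
def fit_line(z, d):
    """Solve the 2x2 system of Equation (3.14) for (m1, m2)."""
    n = len(z)
    sum_z = sum(z)
    sum_z2 = sum(zi * zi for zi in z)
    sum_d = sum(d)
    sum_dz = sum(di * zi for zi, di in zip(z, d))

    # Determinant of A = [[N, sum z], [sum z, sum z^2]].
    det = n * sum_z2 - sum_z * sum_z
    if det == 0:
        raise ValueError("A is singular: the z values do not vary")

    # Apply the explicit inverse of the symmetric 2x2 matrix A to b.
    m1 = (sum_z2 * sum_d - sum_z * sum_dz) / det
    m2 = (n * sum_dz - sum_z * sum_d) / det
    return m1, m2


# Hypothetical noise-free data lying exactly on d = 1 + 2z.
z = [0.0, 1.0, 2.0]
d = [1.0, 3.0, 5.0]
m1, m2 = fit_line(z, d)
print(m1, m2)  # prints 1.0 2.0
```

However many observations there are, only the five sums N, Σz, Σz², Σd and Σdz enter the solution.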

The matrix equation Am = b can also be rewritten in terms of the original G and d when

one notices that the matrix A can be factored as

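$$\begin{bmatrix} N & \sum z_i \\ \sum z_i & \sum z_i^2 \end{bmatrix} = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ z_1 & z_2 & \cdots & z_N \end{bmatrix} \begin{bmatrix} 1 & z_1 \\ 1 & z_2 \\ \vdots & \vdots \\ 1 & z_N \end{bmatrix} = \mathbf{G}^T\mathbf{G} \tag{3.16}$$

with dimensions (2 × 2) = (2 × N)(N × 2) = (2 × 2).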

Also, b above can be rewritten similarly as

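$$\begin{bmatrix} \sum d_i \\ \sum d_i z_i \end{bmatrix} = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ z_1 & z_2 & \cdots & z_N \end{bmatrix} \begin{bmatrix} d_1 \\ d_2 \\ \vdots \\ d_N \end{bmatrix} = \mathbf{G}^T\mathbf{d} \tag{3.17}$$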

Thus, substituting Equations (3.16) and (3.17) into Equation (3.14), one arrives at the so-called

normal equations for the least squares problem:

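$$\mathbf{G}^T\mathbf{G}\mathbf{m} = \mathbf{G}^T\mathbf{d} \tag{3.18}$$

The least squares solution $\mathbf{m}_{LS}$ is then found as

$$\mathbf{m}_{LS} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.19}$$

assuming that $[\mathbf{G}^T\mathbf{G}]^{-1}$ exists.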
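As a check on the factorizations behind the normal equations, the sketch below builds G for a handful of hypothetical observations and confirms that the products G^T G and G^T d reproduce the sums in Equation (3.14). The helpers `transpose` and `matmul` are illustrative names written in plain Python; they are not taken from the notes.

```python
def transpose(a):
    """Transpose a matrix stored as a list of rows."""
    return [list(col) for col in zip(*a)]


def matmul(a, b):
    """Matrix product of two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


# Hypothetical observations (not from the notes).
z = [0.0, 1.0, 2.0, 4.0]
d = [1.0, 2.5, 5.5, 9.0]

G = [[1.0, zi] for zi in z]      # N x 2 matrix of Equation (3.6)
d_col = [[di] for di in d]       # N x 1 column vector

Gt = transpose(G)                # 2 x N
GtG = matmul(Gt, G)              # 2 x 2, Equation (3.16)
Gtd = matmul(Gt, d_col)          # 2 x 1, Equation (3.17)

# The same quantities written as the sums of Equation (3.14).
n = len(z)
A = [[n, sum(z)], [sum(z), sum(zi * zi for zi in z)]]
b = [[sum(d)], [sum(di * zi for zi, di in zip(z, d))]]

print(GtG == A, Gtd == b)
```

The data values are exactly representable in floating point, so the comparison can be done with `==`; for arbitrary data a tolerance would be safer.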

In summary, we used the forward problem (Equation 3.6) to give us an explicit relationship

between the model parameters (m1 and m2) and a measure of the misfit to the observed data, E.

Then, we minimized E by taking the partial derivatives of the misfit function with respect to the

unknown model parameters, setting the partials to zero, and solving for the model parameters.

3.4.2 Derivation of the General Least Squares Solution

We start with any system of linear equations which can be expressed in the form

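$$\underset{(N \times 1)}{\mathbf{d}} = \underset{(N \times M)}{\mathbf{G}} \; \underset{(M \times 1)}{\mathbf{m}} \tag{1.13}$$

Again, let $E = \mathbf{e}^T\mathbf{e} = [\mathbf{d} - \mathbf{d}^{pre}]^T[\mathbf{d} - \mathbf{d}^{pre}]$

$$E = [\mathbf{d} - \mathbf{G}\mathbf{m}]^T[\mathbf{d} - \mathbf{G}\mathbf{m}] \tag{3.20}$$

$$E = \sum_{i=1}^{N} \left[ d_i - \sum_{j=1}^{M} G_{ij} m_j \right] \left[ d_i - \sum_{k=1}^{M} G_{ik} m_k \right] \tag{3.21}$$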

As before, the procedure is to write out the above equation with all its cross terms, take partials of E

with respect to each of the elements in m, and set the corresponding equations to zero. For

example, following Menke, page 40, Equations (3.6)-(3.9), we obtain an expression for the partial of E with respect to $m_q$:

$$\frac{\partial E}{\partial m_q} = 2 \sum_{k=1}^{M} m_k \sum_{i=1}^{N} G_{iq} G_{ik} - 2 \sum_{i=1}^{N} G_{iq} d_i = 0 \tag{3.22a}$$

We can simplify this expression by recalling Equation (2.4) from the introductory remarks on

matrix manipulations in Chapter 2:

$$C_{ij} = \sum_{k=1}^{M} a_{ik} b_{kj} \tag{2.4}$$

Note that the first summation on i in Equation (3.22a) looks similar in form to Equation (2.4), but

the subscripts on the first G term are backwards. If we further note that interchanging the

subscripts is equivalent to taking the transpose of G, we see that the summation on i gives the qk-th entry in $\mathbf{G}^T\mathbf{G}$:

$$\sum_{i=1}^{N} G_{iq} G_{ik} = \sum_{i=1}^{N} [\mathbf{G}^T]_{qi} G_{ik} = [\mathbf{G}^T\mathbf{G}]_{qk} \tag{3.22b}$$

Thus, Equation (3.22a) reduces to

$$\frac{\partial E}{\partial m_q} = 2 \sum_{k=1}^{M} m_k [\mathbf{G}^T\mathbf{G}]_{qk} - 2 \sum_{i=1}^{N} G_{iq} d_i = 0 \tag{3.23}$$

Now, we can further simplify the first summation by recalling Equation (2.6) from the same section

$$d_i = \sum_{j=1}^{M} G_{ij} m_j \tag{2.6}$$

To see this clearly, we rearrange the order of terms in the first sum as follows:

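$$\sum_{k=1}^{M} m_k [\mathbf{G}^T\mathbf{G}]_{qk} = \sum_{k=1}^{M} [\mathbf{G}^T\mathbf{G}]_{qk} m_k = [\mathbf{G}^T\mathbf{G}\mathbf{m}]_q \tag{3.24}$$

which is the qth entry in $\mathbf{G}^T\mathbf{G}\mathbf{m}$. Note that $\mathbf{G}^T\mathbf{G}\mathbf{m}$ has dimension (M × N)(N × M)(M × 1) = (M × 1). That is, it is an M-dimensional vector.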

In a similar fashion, the second summation on i can be reduced to a term in $[\mathbf{G}^T\mathbf{d}]_q$, the qth entry in an (M × N)(N × 1) = (M × 1) dimensional vector. Thus, for the qth equation, we have

$$0 = \frac{\partial E}{\partial m_q} = 2[\mathbf{G}^T\mathbf{G}\mathbf{m}]_q - 2[\mathbf{G}^T\mathbf{d}]_q \tag{3.25}$$
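The step from the index form in Equation (3.22a) to the matrix form in Equation (3.25) can be spot-checked numerically. The sketch below evaluates both expressions for a small, hypothetical G, d, and trial model m; all names and values are assumptions for illustration only.

```python
def grad_index_form(G, d, m):
    """Partials of E from Equation (3.22a), written with explicit sums."""
    N, M = len(G), len(m)
    grad = []
    for q in range(M):
        first = sum(m[k] * sum(G[i][q] * G[i][k] for i in range(N))
                    for k in range(M))
        second = sum(G[i][q] * d[i] for i in range(N))
        grad.append(2 * first - 2 * second)
    return grad


def grad_matrix_form(G, d, m):
    """Same partials as Equation (3.25), computed as 2 G^T (G m - d)."""
    N, M = len(G), len(m)
    Gm = [sum(G[i][k] * m[k] for k in range(M)) for i in range(N)]
    return [2 * sum(G[i][q] * (Gm[i] - d[i]) for i in range(N))
            for q in range(M)]


# Hypothetical 4 x 3 problem (N = 4 observations, M = 3 parameters).
G = [[1.0, 0.0, 0.0],
     [1.0, 1.0, 1.0],
     [1.0, 2.0, 4.0],
     [1.0, 3.0, 9.0]]
d = [1.0, 2.0, 2.0, 5.0]
m = [0.5, -1.0, 0.25]            # arbitrary trial model, not the solution

g_index = grad_index_form(G, d, m)
g_matrix = grad_matrix_form(G, d, m)
print(g_index, g_matrix)
```

The two lists should agree entry by entry up to rounding, since 2 G^T (G m - d) is just Equation (3.25) with the common factor G^T collected.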

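Dropping the common factor of 2 and combining the M equations (q = 1, 2, . . ., M) into matrix notation, we arrive at

$$\mathbf{G}^T\mathbf{G}\mathbf{m} = \mathbf{G}^T\mathbf{d} \tag{3.26}$$

The least squares solution for m is thus given by

$$\mathbf{m}_{LS} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.27}$$

The least squares operator, $\mathbf{G}_{LS}^{-1}$, is thus given by

$$\mathbf{G}_{LS}^{-1} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T \tag{3.28}$$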

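Recalling basic calculus, we note that $\mathbf{m}_{LS}$ above is the solution that minimizes E, the total misfit. Because E is a sum of squares, it is a quadratic function of m that is bounded below by zero, so the point where all of its partial derivatives vanish is a minimum rather than a maximum. Summarizing, setting the M partial derivatives of E with respect to the elements in m to zero leads to the least squares solution.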

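We have just derived the least squares solution by taking the partial derivatives of E with respect to $m_q$ and then combining the terms for q = 1, 2, . . ., M. An alternative, but equivalent, formulation begins with Equation (3.2) but is written out as

$$E = [\mathbf{d} - \mathbf{G}\mathbf{m}]^T[\mathbf{d} - \mathbf{G}\mathbf{m}] \tag{3.20}$$

$$= [\mathbf{d}^T - \mathbf{m}^T\mathbf{G}^T][\mathbf{d} - \mathbf{G}\mathbf{m}]$$

$$= \mathbf{d}^T\mathbf{d} - \mathbf{d}^T\mathbf{G}\mathbf{m} - \mathbf{m}^T\mathbf{G}^T\mathbf{d} + \mathbf{m}^T\mathbf{G}^T\mathbf{G}\mathbf{m} \tag{3.29}$$

Then, taking the partial derivative of E with respect to $\mathbf{m}^T$ turns out to be equivalent to what was done in Equations (3.22)-(3.26) for $m_q$, namely

$$\partial E / \partial \mathbf{m}^T = -\mathbf{G}^T\mathbf{d} + \mathbf{G}^T\mathbf{G}\mathbf{m} = 0 \tag{3.30}$$

which leads to

$$\mathbf{G}^T\mathbf{G}\mathbf{m} = \mathbf{G}^T\mathbf{d} \tag{3.26}$$

and

$$\mathbf{m}_{LS} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.27}$$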

It is also perhaps interesting to note that we could have obtained the same solution without

taking partials. To see this, consider the following four steps.

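Step 1. We begin with

$$\mathbf{G}\mathbf{m} = \mathbf{d} \tag{1.13}$$

Step 2. We then premultiply both sides by $\mathbf{G}^T$

$$\mathbf{G}^T\mathbf{G}\mathbf{m} = \mathbf{G}^T\mathbf{d} \tag{3.26}$$

Step 3. Premultiply both sides by $[\mathbf{G}^T\mathbf{G}]^{-1}$

$$[\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{G}\mathbf{m} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.31}$$

Step 4. This reduces to

$$\mathbf{m}_{LS} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.27}$$

as before. The point is, however, that this way does not show why $\mathbf{m}_{LS}$ is the solution which minimizes E, the misfit between the observed and predicted data.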

All of this assumes that $[\mathbf{G}^T\mathbf{G}]^{-1}$ exists, of course. We will return to the existence and properties of $[\mathbf{G}^T\mathbf{G}]^{-1}$ later. Next, we will look at two examples of least squares problems to show a striking similarity that is not obvious at first glance.
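For the straight line problem, one simple case in which the inverse does not exist is when every observation is made at the same z: the determinant of the 2 × 2 matrix in Equation (3.14), N Σz² - (Σz)², is then zero. The sketch below illustrates this with hypothetical z values; the function name is an assumption for illustration.

```python
def normal_matrix_det(z):
    """Determinant of [[N, sum z], [sum z, sum z^2]] from Equation (3.14)."""
    n = len(z)
    return n * sum(zi * zi for zi in z) - sum(z) ** 2


# All observations at the same z: the slope cannot be determined.
print(normal_matrix_det([2.0, 2.0, 2.0]))  # prints 0.0

# Distinct z values: the 2x2 matrix is invertible.
print(normal_matrix_det([0.0, 1.0, 2.0]))  # prints 6.0
```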

3.4.3 Two Examples of Least Squares Problems

Example 1. Best-Fit Straight-Line Problem

We have, of course, already derived the solution for this problem in the last section. Briefly,

then, for the system of equations

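$$\mathbf{d} = \mathbf{G}\mathbf{m} \tag{1.13}$$

given by

$$\begin{bmatrix} d_1 \\ d_2 \\ \vdots \\ d_N \end{bmatrix} = \begin{bmatrix} 1 & z_1 \\ 1 & z_2 \\ \vdots & \vdots \\ 1 & z_N \end{bmatrix} \begin{bmatrix} m_1 \\ m_2 \end{bmatrix} \tag{3.6}$$

we have

$$\mathbf{G}^T\mathbf{G} = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ z_1 & z_2 & \cdots & z_N \end{bmatrix} \begin{bmatrix} 1 & z_1 \\ 1 & z_2 \\ \vdots & \vdots \\ 1 & z_N \end{bmatrix} = \begin{bmatrix} N & \sum z_i \\ \sum z_i & \sum z_i^2 \end{bmatrix} \tag{3.32a}$$

and

$$\mathbf{G}^T\mathbf{d} = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ z_1 & z_2 & \cdots & z_N \end{bmatrix} \begin{bmatrix} d_1 \\ d_2 \\ \vdots \\ d_N \end{bmatrix} = \begin{bmatrix} \sum d_i \\ \sum d_i z_i \end{bmatrix} \tag{3.32b}$$

Thus, the least squares solution is given by

$$\begin{bmatrix} m_1 \\ m_2 \end{bmatrix}_{LS} = \begin{bmatrix} N & \sum z_i \\ \sum z_i & \sum z_i^2 \end{bmatrix}^{-1} \begin{bmatrix} \sum d_i \\ \sum d_i z_i \end{bmatrix} \tag{3.32c}$$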
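As a small worked instance of Equation (3.32c), take three hypothetical observations (z, d) = (0, 1), (1, 2), (2, 4); these numbers are chosen only for illustration and do not come from the notes. Then N = 3, Σz = 3, Σz² = 5, Σd = 7, and Σdz = 10, so that

$$\begin{bmatrix} m_1 \\ m_2 \end{bmatrix}_{LS} = \begin{bmatrix} 3 & 3 \\ 3 & 5 \end{bmatrix}^{-1} \begin{bmatrix} 7 \\ 10 \end{bmatrix} = \frac{1}{6} \begin{bmatrix} 5 & -3 \\ -3 & 3 \end{bmatrix} \begin{bmatrix} 7 \\ 10 \end{bmatrix} = \begin{bmatrix} 5/6 \\ 3/2 \end{bmatrix}$$

The predicted data are then 5/6, 7/3, and 23/6, giving errors $e_i$ of 1/6, -1/3, and 1/6. These sum to zero, as Equation (3.10) requires at the minimum.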

Example 2. Best-Fit Parabola Problem

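The ith predicted datum for a parabola is given by

$$d_i = m_1 + m_2 z_i + m_3 z_i^2 \tag{3.33}$$

where $m_1$ and $m_2$ have the same meanings as in the straight line problem, and $m_3$ is the coefficient of the quadratic term. Again, the problem can be written in the form:

$$\mathbf{d} = \mathbf{G}\mathbf{m} \tag{1.13}$$

where now we have

$$\begin{bmatrix} d_1 \\ \vdots \\ d_i \\ \vdots \\ d_N \end{bmatrix} = \begin{bmatrix} 1 & z_1 & z_1^2 \\ \vdots & \vdots & \vdots \\ 1 & z_i & z_i^2 \\ \vdots & \vdots & \vdots \\ 1 & z_N & z_N^2 \end{bmatrix} \begin{bmatrix} m_1 \\ m_2 \\ m_3 \end{bmatrix} \tag{3.34}$$

and

$$\mathbf{G}^T\mathbf{G} = \begin{bmatrix} N & \sum z_i & \sum z_i^2 \\ \sum z_i & \sum z_i^2 & \sum z_i^3 \\ \sum z_i^2 & \sum z_i^3 & \sum z_i^4 \end{bmatrix}, \qquad \mathbf{G}^T\mathbf{d} = \begin{bmatrix} \sum d_i \\ \sum d_i z_i \\ \sum d_i z_i^2 \end{bmatrix} \tag{3.35}$$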

As before, we form the least squares solution as

$$\mathbf{m}_{LS} = [\mathbf{G}^T\mathbf{G}]^{-1}\mathbf{G}^T\mathbf{d} \tag{3.27}$$

Although the forward problems of predicting data for the straight line and parabolic cases look very different, the least squares solution is formed in a way that emphasizes the fundamental similarity between the two problems. For example, notice how the straight line problem is buried within the parabola problem. The upper left hand 2 × 2 part of $\mathbf{G}^T\mathbf{G}$ in Equation (3.35) is the same as Equation (3.32a). Also, the first two entries in $\mathbf{G}^T\mathbf{d}$ in Equation (3.35) are the same as Equation (3.32b).
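The nesting described above, and the parabola fit itself, can be illustrated with a short sketch. It forms G^T G and G^T d for both problems from the same hypothetical, noise-free data and solves the 3 × 3 normal equations by Gaussian elimination. The function names, the elimination-based solver, and the data are assumptions made for illustration; the notes do not prescribe a particular solver.

```python
def normal_equations(G, d):
    """Return (G^T G, G^T d) for a matrix G stored as a list of rows."""
    M = len(G[0])
    GtG = [[sum(row[q] * row[k] for row in G) for k in range(M)]
           for q in range(M)]
    Gtd = [sum(row[q] * di for row, di in zip(G, d)) for q in range(M)]
    return GtG, Gtd


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(row) + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("G^T G is singular or nearly so")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


# Hypothetical noise-free data from d = 2 - z + 0.5 z^2.
z = [-1.0, 0.0, 1.0, 2.0]
d = [2.0 - 1.0 * zi + 0.5 * zi * zi for zi in z]

G_line = [[1.0, zi] for zi in z]             # Equation (3.6)
G_parab = [[1.0, zi, zi * zi] for zi in z]   # Equation (3.34)

A2, b2 = normal_equations(G_line, d)
A3, b3 = normal_equations(G_parab, d)

# The straight line problem sits in the upper-left corner of the parabola one.
nested = (all(A3[q][k] == A2[q][k] for q in range(2) for k in range(2))
          and b3[:2] == b2)

m_parab = solve(A3, b3)   # should recover roughly [2, -1, 0.5]
print(nested, m_parab)
```

Solving the normal equations by elimination avoids forming the inverse explicitly; it yields the same $\mathbf{m}_{LS}$ as Equation (3.27) whenever the inverse exists.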
