Context Free

In this video, we're going to begin our discussion of parsing technology with context-free grammars. As we know, not all strings of tokens are valid programs, and the parser has to tell the difference. It has to know which strings of tokens are valid and which are invalid, and give error messages for the invalid ones. So we need some way of describing the valid strings of tokens, and then some kind of algorithm for distinguishing the valid strings from the invalid ones.

We've also discussed that programming languages have a natural recursive structure. For example, in Cool an expression can be any one of a very large number of things. Two of those things are an if expression and a while expression, but these expressions are themselves recursively composed of other expressions. For example, the predicate of an if is itself an expression, as are the then branch and the else branch, and in a while loop the termination test is an expression and so is the loop body. Context-free grammars are a natural notation for describing such recursive structures.

So what is a context-free grammar? Formally, it consists of a set of terminals T, a set of nonterminals N, a start symbol S (S is one of the nonterminals), and a set of productions. And what's a production? A production is a symbol X, followed by an arrow, followed by a list of symbols Y1 through Yn. There are certain rules about these symbols. The X on the left-hand side of the arrow has to be a nonterminal.

That's what it means to be on the left-hand side: the set of things on the left-hand sides of productions is exactly the set of nonterminals. On the right-hand side, every Yi can be a nonterminal, a terminal, or the special symbol epsilon.

Let's do a simple example of a context-free grammar. Strings of balanced parentheses, which we discussed in an earlier video, can be expressed as follows.
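
Written in the notation just defined, a production has the general shape shown first below, and the balanced-parentheses grammar that the following sentences describe comes out as two productions. This is a reconstruction from the spoken description, not a copy of the slide.

```latex
% General shape of a production: X is a nonterminal,
% each Y_i is a nonterminal, a terminal, or \varepsilon.
X \to Y_1 Y_2 \cdots Y_n, \qquad X \in N, \quad Y_i \in N \cup T \cup \{\varepsilon\}

% Balanced parentheses, with start symbol S:
S \to (\, S \,) \\
S \to \varepsilon
```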

We have our start symbol S. One possibility for a string of balanced parentheses is that it consists of an open paren, another string of balanced parentheses, and a close paren. The other possibility is that it is empty, because the empty string is also a string of balanced parentheses. So there are two productions for this grammar.

Just to relate this example to the formal definition we gave on the previous slide: what is our set of nonterminals? It's just the single nonterminal S. What are the terminal symbols in this context-free grammar? Just open paren and close paren, no other symbols. What's the start symbol?

Well, it's S. It's the only nonterminal, so it has to be the start symbol. But in general, when we give grammars, the first production will name the start symbol: rather than naming it explicitly, whichever production occurs first, the symbol on its left-hand side will be the start symbol for that particular context-free grammar. And finally, what are the productions? We said there is a set of productions, and here are the two productions for this particular context-free grammar.

Now, productions can be read as rules. Let's write down one of the productions from the example grammar. What does it mean? It means that wherever we see an S, we can replace it by the string of symbols on the right-hand side. Wherever I see an S, I can take the S out (that's important: I remove the S that appears on the left-hand side) and replace it by the string of symbols on the right-hand side. So productions can be read as replacement rules: the right-hand side replaces the left-hand side.

Here's a slightly more formal description of that process. We begin with a string that has only the start symbol S; we always start with just the start symbol.

Now we look at our string. Initially it's just the start symbol, but it changes over time, and we can replace any nonterminal in the string by the right-hand side of some production for that nonterminal. For example, I can replace a nonterminal X by the right-hand side of some production for X; in this case, X goes to Y1 through Yn. Then we just repeat step two over and over again until there are no nonterminals left, that is, until the string consists of only terminals. At that point, we're done.

To write this out slightly more formally, a single step starts from a state, which is a string of symbols; these can be terminals and nonterminals. Somewhere in the string is a nonterminal X, and there is a production for X in our grammar. So this production is part of the grammar, okay? We can now use the production to take a step to a new state, where we have replaced X by the right-hand side of the production. This is one step of a context-free derivation.

If we want to do multiple steps, we can have a bunch of steps: alpha zero goes to alpha one goes to alpha two, and so on. The alpha i's are all strings, and as we go along we eventually get to some string alpha n. Then we say that alpha zero rewrites in zero or more steps to alpha n; this means n steps, where n is greater than or equal to zero. This is just shorthand for saying there is some sequence of individual productions, individual rules, applied to a string that gets us from the string alpha zero to the string alpha n. Remember that in general we start with just the start symbol, so we have a whole sequence of steps like this that gets us from the start symbol to some other string.

Finally, we can define the language of a context-free grammar. Say a context-free grammar G has a start symbol S. Then the language of G is the set of strings of symbols a1 through an such that, for all i, ai is an element of T, where T is the set of terminals of G, and the start symbol S goes in zero or more steps to a1 through an. This is just saying that all the strings of terminals that I can derive beginning with just the start symbol are the strings in the language.

The name "terminal" comes from the fact that once terminals are included in the string, there is no rule for replacing them. That is, once a terminal is generated, it's a permanent feature of the string. In applications of context-free grammars to programming languages, the terminals are the tokens of the language that we are modeling with our context-free grammar.
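
The three definitions above can be collected in symbols. This is a sketch in standard notation rather than a transcription of the slides; the context strings β and γ around the replaced nonterminal are names introduced here for illustration.

```latex
% One derivation step: replace one occurrence of X using a production of the grammar.
\beta\, X\, \gamma \;\to\; \beta\, Y_1 \cdots Y_n\, \gamma
\qquad \text{if } X \to Y_1 \cdots Y_n \text{ is a production}

% Zero or more steps.
\alpha_0 \to^{*} \alpha_n
\quad\text{means}\quad
\alpha_0 \to \alpha_1 \to \cdots \to \alpha_n, \qquad n \ge 0

% The language of a grammar G with terminals T and start symbol S.
L(G) = \{\, a_1 \cdots a_n \mid \forall i.\; a_i \in T \ \text{and}\ S \to^{*} a_1 \cdots a_n \,\}
```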

With that in mind, let's write a context-free grammar for a fragment of Cool. We talked about Cool expressions earlier. One possibility for a Cool expression is that it's an if statement, or rather an if expression. Recall that Cool if expressions have three parts, and they end with the keyword fi, which is a little bit unusual. Looking at this particular rule, we can see some conventions that are pretty standard and that we'll use: nonterminals are written in all caps (in this case there's just one, EXPR, written in caps), and the terminal symbols are in lower case.

Another possibility is that it could be a while expression. And the last possibility is that it could be an identifier, id. There are actually many, many more cases for expressions, but let me just show you one bit of notation to make things look a little nicer. When we have many productions for the same nonterminal, we usually group them together in the grammar: we write the nonterminal on the left-hand side only once, and then we write explicit alternatives. This is exactly the same as writing out "EXPR arrow" two more times; it's just a way of grouping these three productions together and saying that EXPR is the nonterminal for all three right-hand sides.

Let's take a look at some of the strings in the language of this context-free grammar. A valid Cool expression is just a single identifier. That's easy to see, because EXPR is our start symbol and there is a production that says it goes to id. So I can take the start symbol directly to a string of terminals: a single variable name is a valid Cool expression. Another example is an if expression where each of the subexpressions is just a variable name. This is a perfectly fine structure for a Cool expression. Similarly, I can do the same thing with the while expression.
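
To make the recursion in this grammar fragment concrete, here is a minimal sketch of a yes-or-no checker for it. It is not the parsing method the course goes on to develop; it only mirrors the three productions with a function that calls itself wherever the grammar mentions EXPR. The function names are hypothetical, tokens are assumed to be separated by spaces, and the `loop` and `pool` keywords come from Cool's concrete syntax for while rather than from this transcript.

```python
def parse_expr(tokens, pos=0):
    """Return the index just past one EXPR starting at pos, or None if none starts there."""
    if pos >= len(tokens):
        return None
    tok = tokens[pos]
    if tok == "id":                      # EXPR -> id
        return pos + 1
    if tok == "if":                      # EXPR -> if EXPR then EXPR else EXPR fi
        return parse_parts(tokens, pos + 1, ["then", "else", "fi"])
    if tok == "while":                   # EXPR -> while EXPR loop EXPR pool
        return parse_parts(tokens, pos + 1, ["loop", "pool"])
    return None


def parse_parts(tokens, pos, closers):
    """Match 'EXPR keyword' once for each keyword in closers, in order."""
    for kw in closers:
        pos = parse_expr(tokens, pos)    # the recursion mirrors the grammar
        if pos is None or pos >= len(tokens) or tokens[pos] != kw:
            return None
        pos += 1
    return pos


def is_expr(text):
    """True if the space-separated tokens form exactly one EXPR."""
    tokens = text.split()
    return parse_expr(tokens) == len(tokens)


print(is_expr("id"))
print(is_expr("if id then id else id fi"))
print(is_expr("if id then id fi"))
```

The first two calls correspond to the examples in the text and are accepted; the third is rejected because the else branch is missing.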

For the while expression, I can take the structure of a while and replace each of the subexpressions with a single variable name, and that would be a syntactically valid Cool while loop. There are more complicated expressions too. For example, here we have a while loop as the predicate of an if expression. That's not something you might normally think of writing, but it's perfectly well formed syntactically. Similarly, I could have an if expression as the predicate of an if, inside of an if expression. So nested if expressions like this one are also syntactically valid.

Let's do another grammar, this time for simple arithmetic expressions. We'll have our start symbol, and the only nonterminal for this grammar, be called E. One of the possibilities is that E could be the sum of two expressions. Then "or" (remember, this is an alternative notation for "E arrow"; it's just a way of saying I'm going to use the same nonterminal for another production). So we can have a sum of two expressions, or we could have the multiplication of two expressions. And then we could have expressions that appear inside parentheses, so parenthesized expressions.

And just to keep things simple, we'll have as our only base case simple identifiers, so variable names. So here's a small grammar over plus and times, parentheses, and variable names. Let's look at a few elements of this language. For example, a single variable name is a perfectly good element of the language. id + id is also in this language, and so is id + id * id. We could also use parens to group things, so we could say (id + id) * id; that's also something you can generate using these rules, and so on and so forth. There are many, many more strings in this language.
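
The claims about which strings belong to this language can be checked mechanically. The sketch below searches breadth-first over the strings reachable from E and reports whether a given token sequence is among them. It is a brute-force illustration under stated assumptions, not a practical parsing algorithm: the grammar encoding and the name `derivable` are hypothetical, and discarding strings longer than the target is only safe because no production in this grammar has an empty right-hand side.

```python
from collections import deque

# E -> E + E | E * E | ( E ) | id
GRAMMAR = {
    "E": [("E", "+", "E"), ("E", "*", "E"), ("(", "E", ")"), ("id",)],
}


def derivable(target, grammar=GRAMMAR, start="E"):
    """True if the token sequence `target` can be derived from `start`."""
    target = tuple(target)
    seen = {(start,)}
    queue = deque([(start,)])
    while queue:
        form = queue.popleft()
        if form == target:
            return True
        for i, sym in enumerate(form):
            if sym in grammar:           # expand the leftmost nonterminal only
                for rhs in grammar[sym]:
                    new = form[:i] + rhs + form[i + 1:]
                    # No production shrinks a string, so longer forms are dead ends.
                    if len(new) <= len(target) and new not in seen:
                        seen.add(new)
                        queue.append(new)
                break
    return False


for text in ["id", "id + id", "id + id * id", "( id + id ) * id", "id +"]:
    print(text, "->", derivable(text.split()))
```

The first four strings are the examples from the text; the last one is a non-member included for contrast.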

Context-free grammars are a big step towards being able to say what we want in a parser, but we still need some other things. First of all, a context-free grammar, at least as we have defined it so far, just gives us a yes-or-no answer: yes, a string is in the language of the context-free grammar, or no, it is not. We also need a method for building a parse tree of the input. In those cases where the string is in the language, we need to know how it's in the language; we need the actual parse tree, not just yes or no. In the cases where the string is not in the language, we have to be able to handle errors gracefully and give some kind of feedback to the programmer, so we need a method for doing that. And finally, if we have these two things, we need an actual implementation of them in order to actually implement context-free grammars.

One last comment before we wrap up this video: the form of the context-free grammar can be important. Tools are often sensitive to the particular way you write the grammar, and while there are many ways to write a grammar for the same language, only some of them may be accepted by the tools. As we'll see, there are cases where it's necessary to modify the grammar in order to get the tools to accept it. This actually happens sometimes with regular expressions as well, but it's much less common. Normally, for most regular expressions you would want to write, the tools will be able to digest them just fine. That's not true of an arbitrary context-free grammar.

You might also like

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- 2Document92 pages2adviful100% (2)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

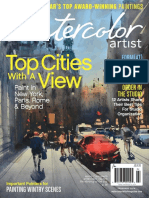

- Topcities View ViewDocument92 pagesTopcities View Viewadviful100% (7)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- Exploring Color Workshop, 30th Anniversary Edition PDFDocument178 pagesExploring Color Workshop, 30th Anniversary Edition PDFvoodooch1ld2100% (28)

- RE TutorialDocument20 pagesRE TutorialadvifulNo ratings yet

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- Chapter 23 - Project PlanningDocument52 pagesChapter 23 - Project PlanningadvifulNo ratings yet

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- Manhattan School of Driving LLC, Management System: Date HereDocument9 pagesManhattan School of Driving LLC, Management System: Date HereadvifulNo ratings yet

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- 2Document92 pages2adviful100% (2)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- CS 389 - Software Engineering: Lecture 2 - Part 2 Chapter 2 - Software ProcessesDocument26 pagesCS 389 - Software Engineering: Lecture 2 - Part 2 Chapter 2 - Software ProcessesadvifulNo ratings yet

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- Case Study Mental Health Care Patient Management System (MHCPMS)Document15 pagesCase Study Mental Health Care Patient Management System (MHCPMS)advifulNo ratings yet

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- CS 389 - Software Engineering: Lecture 2 - Part 2 Chapter 2 - Software ProcessesDocument26 pagesCS 389 - Software Engineering: Lecture 2 - Part 2 Chapter 2 - Software ProcessesadvifulNo ratings yet

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- CS 389 - Software Engineering: Lecture 2 - Part 1 Chapter 2 - Software ProcessesDocument29 pagesCS 389 - Software Engineering: Lecture 2 - Part 1 Chapter 2 - Software ProcessesadvifulNo ratings yet

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Chapter 3 - Agile Software Development: Part 1bDocument24 pagesChapter 3 - Agile Software Development: Part 1badvifulNo ratings yet

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- 1 Chapter 7 Design and ImplementationDocument56 pages1 Chapter 7 Design and ImplementationHoàng Đá ĐỏNo ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- CS 389 - Software EngineeringDocument55 pagesCS 389 - Software EngineeringadvifulNo ratings yet

- Customer Bank Account Management System: Technical Specification DocumentDocument15 pagesCustomer Bank Account Management System: Technical Specification DocumentadvifulNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- AWS Certified Solution Architect - Associate: Amazon Web Services - AWSDocument27 pagesAWS Certified Solution Architect - Associate: Amazon Web Services - AWSadviful0% (1)

- Ch8 SummaryDocument59 pagesCh8 SummaryadvifulNo ratings yet

- Ch22 SummaryDocument46 pagesCh22 SummaryadvifulNo ratings yet

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (345)

- DemystifyingDocument25 pagesDemystifyingadvifulNo ratings yet

- 1) Warehouse Management For Open SystemsDocument3 pages1) Warehouse Management For Open SystemsadvifulNo ratings yet

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- Developing Business Architecture With TOGAF With TOGAF: Building Business Capability 2013 Las Vegas, NVDocument23 pagesDeveloping Business Architecture With TOGAF With TOGAF: Building Business Capability 2013 Las Vegas, NVadvifulNo ratings yet

- As 506Document301 pagesAs 506advifulNo ratings yet

- 2014 JRCLS Leadership TrainingDocument6 pages2014 JRCLS Leadership TrainingadvifulNo ratings yet

- AMCAT Sentence CorrectionDocument19 pagesAMCAT Sentence CorrectionadvifulNo ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Language Specification & Compiler Construction: Hanspeter Mössenböck University of LinzDocument26 pagesLanguage Specification & Compiler Construction: Hanspeter Mössenböck University of Linz00matrix00No ratings yet

- Figure 1two Parse Trees For 9-5+2Document3 pagesFigure 1two Parse Trees For 9-5+2Amal AhmadNo ratings yet

- Programming Language ConceptsDocument76 pagesProgramming Language ConceptsMosope CokerNo ratings yet

- Lesson 6 3rd ReleaseDocument15 pagesLesson 6 3rd ReleaseRowena Matte FabularNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- 1.describing Syntax and SemanticsDocument110 pages1.describing Syntax and Semanticsnsavi16eduNo ratings yet

- NLP Lab FileDocument66 pagesNLP Lab FileVIPIN YADAV100% (1)

- The Linguistics of DNADocument14 pagesThe Linguistics of DNAAhmed AbdullahNo ratings yet

- Compiler Design: 4. Language GrammarsDocument14 pagesCompiler Design: 4. Language GrammarsShyamNo ratings yet

- Chapter 1 - Principles of Programming LangugesDocument37 pagesChapter 1 - Principles of Programming LangugesdileepNo ratings yet

- Foundations of MathematicsDocument352 pagesFoundations of MathematicsPatrick MorgadoNo ratings yet

- Cs 8501 Toc Unit1 Ppt1Document67 pagesCs 8501 Toc Unit1 Ppt1Anamika PadmanabhanNo ratings yet

- CS8602 Compiler Design Two Marks Questions 1Document22 pagesCS8602 Compiler Design Two Marks Questions 1Pradeepkumar PradeepkumarNo ratings yet

- CSE18R274 - Compiler DesignDocument117 pagesCSE18R274 - Compiler DesignTHE DICTATOR GAMINGNo ratings yet

- Lesson 3: Syntax Analysis: Risul Islam RaselDocument106 pagesLesson 3: Syntax Analysis: Risul Islam RaselRisul Islam RaselNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- Bison Is A General-Purpose Parser Generator That Converts: The Concepts of BisonDocument3 pagesBison Is A General-Purpose Parser Generator That Converts: The Concepts of BisonAdilKhanNo ratings yet

- Cs - 402 F-T Mcqs by Vu - ToperDocument18 pagesCs - 402 F-T Mcqs by Vu - Topermirza adeelNo ratings yet

- Describing Syntax and Semantics: Isbn 0-321-49362-1Document55 pagesDescribing Syntax and Semantics: Isbn 0-321-49362-1ranga231980No ratings yet

- Syntax & SemanticsDocument34 pagesSyntax & SemanticsMayank GroverNo ratings yet

- Retargeting A C Compiler For A DSP Processor: Henrik AnteliusDocument93 pagesRetargeting A C Compiler For A DSP Processor: Henrik AnteliusBiplab RoyNo ratings yet

- Programming Language Processors in Java Compilers and Interpreters.9780130257864.25356Document438 pagesProgramming Language Processors in Java Compilers and Interpreters.9780130257864.25356Sigit KurniawanNo ratings yet

- PPL NotesDocument144 pagesPPL Notessanyasirao1No ratings yet

- M Language, Power Query, MicrosoftDocument968 pagesM Language, Power Query, MicrosoftMax TomasNo ratings yet

- Grammar and Parse Trees (Syntax) : What Makes A Good Programming Language?Document50 pagesGrammar and Parse Trees (Syntax) : What Makes A Good Programming Language?Ainil Hawa100% (1)

- MCode Language - Power QueryDocument963 pagesMCode Language - Power QueryScribd-OnlineNo ratings yet

- Lecture 3-4&5Document91 pagesLecture 3-4&5Kids break free worldNo ratings yet

- Describing Syntax and Semantics: CS 350 Programming Language Design Indiana University - Purdue University Fort WayneDocument73 pagesDescribing Syntax and Semantics: CS 350 Programming Language Design Indiana University - Purdue University Fort WayneHarvey James ChuaNo ratings yet

- Compilers Design: M. T. Bennani Assistant Professor, FST - El Manar University, LISI-INSATDocument13 pagesCompilers Design: M. T. Bennani Assistant Professor, FST - El Manar University, LISI-INSATDorra BoutitiNo ratings yet

- Context Free Grammar181007800Document42 pagesContext Free Grammar181007800James KifotNo ratings yet

- Unit III Regular GrammarDocument54 pagesUnit III Regular GrammarDeependraNo ratings yet