The Development of Technology-Based Music 1 - Synths & MIDI

Uploaded by simonballemusic

The Development of Technology - based Music 1 Synthesizers & MIDI

SYNTHESIZERS

Synthesizers are at the forefront of music technology today, and can be heard in film and classical music as well as pop music. A synthesizer is a device that generates sounds electronically. The basic concept is that an electronically generated signal is processed, resulting in a sound which often cannot be produced by conventional instruments.

The classic synthesizer uses a technique called `subtractive synthesis'. It is so called because a raw wave-form that is rich in harmonics (such as a sawtooth wave) is used as a starting point. Its inherent sound is rather buzzy and uninteresting, so the sound is `sculpted' by shaping and filtering, `subtracting' from the basic wave-form.

A basic synthesizer is made up of four elements: oscillators, filters, envelopes and modulators. Even though few synthesizers nowadays are analogue, these core elements remain fundamentally the same.

OSCILLATORS provide the sound sources for the instrument. In the 1960s and 70s the sound sources were analogue wave-forms. As synthesis evolved, the analogue wave-forms were gradually replaced by samples of basic wave-forms or of actual instruments (as in the Korg Trinity), or by mathematically modelled reproductions of wave-forms (via the process of `physical modelling') as in the Clavia Nord Lead. An analogue oscillator is referred to as a `VCO' (voltage controlled oscillator).

A FILTER shapes the tone of the sound, e.g. making it bright or dull. The type of filter used greatly influences the overall sound of a synthesizer. Moog filters are known for their warm and `fat' sound. In older synthesizers, filters were analogue, and are often referred to as VCFs (voltage controlled filters).

The term ENVELOPE refers to the dynamic shape of a sound, i.e. how it changes over time. An envelope commonly has four parameters known collectively as ADSR (attack, decay, sustain, release).
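The oscillator, filter and envelope stages of subtractive synthesis can be sketched in a few lines of Python. This is only an illustration, not how any particular instrument works: the function names, the 44,100 Hz sample rate, the simple one-pole low-pass filter and all parameter values are assumptions chosen for clarity, and the envelope assumes the key is held for longer than the attack and decay times combined.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumed rate)


def sawtooth(freq_hz, n_samples):
    """Oscillator: a naive sawtooth ramping from -1.0 towards 1.0 each cycle."""
    samples = []
    for n in range(n_samples):
        phase = (n * freq_hz / SAMPLE_RATE) % 1.0  # position in the cycle, 0..1
        samples.append(2.0 * phase - 1.0)
    return samples


def low_pass(samples, cutoff_hz):
    """Filter: a one-pole low-pass that dulls the buzzy raw wave-form."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / SAMPLE_RATE)
    out, previous = [], 0.0
    for x in samples:
        previous += alpha * (x - previous)  # move part of the way to the input
        out.append(previous)
    return out


def adsr(n_samples, attack, decay, sustain_level, release, note_length):
    """Envelope: one gain value (0..1) per sample; all times are in seconds."""
    gains = []
    for n in range(n_samples):
        t = n / SAMPLE_RATE
        if t < attack:                    # rise to maximum
            g = t / attack
        elif t < attack + decay:          # fall to the sustain level
            g = 1.0 - (1.0 - sustain_level) * (t - attack) / decay
        elif t < note_length:             # hold while the key is down
            g = sustain_level
        elif t < note_length + release:   # fade out after the key is released
            g = sustain_level * (1.0 - (t - note_length) / release)
        else:
            g = 0.0
        gains.append(g)
    return gains


def render_note(freq_hz=110.0, seconds=1.0):
    """Chain the three stages: oscillator -> filter -> amplifier envelope."""
    n = int(SAMPLE_RATE * seconds)
    raw = sawtooth(freq_hz, n)
    filtered = low_pass(raw, cutoff_hz=800.0)
    envelope = adsr(n, attack=0.05, decay=0.1, sustain_level=0.6,
                    release=0.3, note_length=0.6)
    return [s * g for s, g in zip(filtered, envelope)]


note = render_note()
```

Lowering `cutoff_hz` gives a duller tone and raising it a brighter one, while lengthening `attack` makes the note swell in slowly rather than start abruptly.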

ATTACK - how long the sound takes to reach maximum. A piano's attack is fast, while a violin's attack can be very slow.
DECAY - how quickly the sound falls from maximum to the `sustain' level.
SUSTAIN - the continuing level of loudness while the finger remains on the key.
RELEASE - how long the sound takes to die away to silence after the key is released.

There is normally one ADSR envelope for the amplifier section (or VCA), which can be used to shape the overall volume, and one for the filter (or VCF), which works the same way but affects the tone instead.

MODULATION most commonly uses an LFO (low frequency oscillator), which does not make an audible sound itself but can be used to alter or `modulate' the oscillator (or whatever sound source is being used). This causes variations in pitch, often used to mimic vibrato. The LFO can also modulate the filter, causing changes in tone quality (e.g. a slow `sweeping' effect that is often heard in dance music). Additionally, the LFO can modulate the amplifier section, often to create rapid changes in volume, resulting in a tremolo effect. The ADSR envelope can also be used to modulate the oscillator(s), filter and amplifier.

The earliest synthesizers were modular, i.e. they were not made as a self-contained unit but as separate modules (such as VCO, VCF, etc.), connected together by cables. The modules were patched together with these cables, and a particular sound was known as a patch - a term which is still in use today to describe the individual sounds stored in a synthesizer's memory.

In 1955 the first synthesizer was developed at RCA's laboratories in Princeton, New Jersey. Using valve-based technology, it was programmed by punched paper tape. It was huge and very expensive, occupying a whole room. Robert A Moog developed a smaller transistorized synthesizer, the Moog modular, which became available to order in 1965. This meant that electronic music could be created more easily, although the machine was still cumbersome and not really suited to live performance. The introduction of the smaller and more affordable Minimoog in 1970 saw the birth of the first truly portable integrated synthesizer. It was also much simpler to use, as no patch cords were needed.

The Moog synthesizer could produce a wide range of sounds which could not be created on conventional instruments. In 1968, Wendy Carlos (then credited as Walter Carlos) recorded a collection of J S Bach's keyboard and orchestral pieces played on the Moog. The album, Switched-On Bach, sold over a million copies and brought electronic music to a wider audience.

At the same time in England, EMS (Electronic Music Studios) were also manufacturing small, portable synthesizers. Their most famous instrument was the VCS3, which was very popular with British psychedelic bands as well as with the BBC's Radiophonic Workshop (it was used extensively on Doctor Who). Its keyboard was separate from the main synthesizer, so it could not be described as truly integrated. The VCS3 was a highly flexible but eccentric instrument, with extensive patching capabilities that allowed a great variety of sounds to be generated. However, the Minimoog's `fat' sound and ease of use made it the more popular synthesizer.

The drawbacks of the older analogue synthesizers were that polyphony (the number of notes playable at one time) was very limited, and tuning stability was generally poor. In the late 1970s synthesizers with digitally controlled oscillators (DCOs) began to be produced. Their tuning stability was a vast improvement over their analogue predecessors. One of the earliest synthesizers of this kind was EDP's Wasp, first produced in 1978.

FM SYNTHESIS

The 1980s

In the early 1980s FM (frequency modulation) synthesis arrived. Rather than starting off with a raw waveform and `sculpting' it as in subtractive synthesis, FM uses one sine-wave oscillator (the modulator) to vary the frequency of another (the carrier) at audio rates, which adds new harmonics to the sound. Probably the most famous FM synthesizer was Yamaha's DX7, first produced in 1983. FM synthesis was very good at reproducing sounds such as electric pianos and brass, but its often hard and metallic sounds were less successful at reproducing richer timbres such as strings.

In 1987 Roland brought out the D-50, which used memory-hungry samples of real instruments for the short attack part of the sound, combined with more traditional synthesis for the much longer remaining portion. This system was known as S+S, or sample and synthesis. It resulted in richer timbres that were quite realistic in their attempts to reproduce acoustic sounds. As memory has become cheaper and processors faster, many synthesizers now have large numbers of samples on board, which can be processed through filters and special effects to produce very complex, as well as realistic, sounds.

At the time of writing, the most recent development has been `physical modelling', also known as virtual synthesis. In a traditional synthesizer, different parts of the circuit board form the oscillator, filter, etc. Virtual modelling synthesizers are derived from mathematical models of `real-world' sounds, or of analogue synthesizers, which are then recreated inside the virtual synthesizer's software. A virtual synthesizer may attempt to recreate an old analogue synthesizer, including the distinctive way that the components of that particular synthesizer react with each other. Or it may take an acoustic instrument as a starting point. The Yamaha AN1X and Clavia Nord Modular are examples of hardware virtual synthesizers. Many virtual synthesis instruments are software-only, e.g. Propellerhead's Reason and Native Instruments' Reaktor.
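Returning to the FM technique introduced at the start of this section, a single carrier and modulator pair can be sketched as follows. This is the textbook two-oscillator formula rather than a model of the DX7 or any other instrument; the function name, the sample rate and the frequency and index values are all assumptions made for illustration.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumed rate)


def fm_tone(carrier_hz, modulator_hz, index, n_samples):
    """Return samples of a sine carrier whose phase is pushed around by a
    sine modulator. `index` sets how strongly the modulator acts: 0 leaves
    a pure sine wave, larger values give a brighter, more metallic tone."""
    samples = []
    for n in range(n_samples):
        t = n / SAMPLE_RATE
        modulator = math.sin(2.0 * math.pi * modulator_hz * t)
        samples.append(math.sin(2.0 * math.pi * carrier_hz * t + index * modulator))
    return samples


# One second each of an unmodulated tone and a strongly modulated one.
plain = fm_tone(220.0, 440.0, index=0.0, n_samples=SAMPLE_RATE)
bright = fm_tone(220.0, 440.0, index=5.0, n_samples=SAMPLE_RATE)
```

Changing the ratio between `carrier_hz` and `modulator_hz` alters which harmonics appear, which is one reason FM suits bell-like and electric piano sounds.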

The Atari 1040ST was my first music-focused computer... it rocked.

DIGITAL TECHNOLOGY

Digital technology opened up the way for a host of new developments in music. These were not confined to the realm of digital music playback, which was discussed in the previous chapter. The introduction of MIDI in 1983 had huge implications both for pop musicians and for consumers, opening up a new range of creative possibilities. Today's musician is able to produce an entire song from start to finish with only a computer and a microphone.

MIDI

MIDI (Musical Instrument Digital Interface) is a digital communications protocol. In August 1983, music manufacturers agreed on a document called the "MIDI 1.0 Specification". This common system allowed electronic instruments to control and communicate with each other. It took the form of a set of standards for hardware connections, and for messages which could then be relayed between devices. By 1985, virtually every new musical keyboard on the market had a MIDI interface.

MIDI also provides the means for electronic instruments to communicate with computers - which can then send, store and process MIDI data. This is central to the function of a sequencer: a device for inputting, editing, storing and playing back data from a musical performance. It records many details of a performance - such as duration, pitch and rhythm - which can then be edited.

Why was it developed?

MIDI was perhaps the first true effort at joint development among a large number of musical instrument manufacturers. It gave the industry a standard for musical communication between pieces of hardware, such as synths and sequencers.

What is contained in MIDI data?

It is important to remember that MIDI transmits commands, but it does not transmit an audio signal. The MIDI specification includes a common language that provides information about events, such as note on and off, preset changes, sustain pedal, pitch bend, and timing information.

Binary Data (just to aid understanding)

Computers use binary data: a base 2 numeric system. We are used to a base 10 or decimal system.

In a binary system there are only 2 numeric values, 0 and 1 (off or on). So in a binary number the first (rightmost) column records 1s, the second column records 2s, the third 4s, the fourth 8s and so on. Each binary digit is called a bit and can represent the values on or off. A byte is 8 bits of digital information. Because of the binary system, 4-bit resolution provides a range of 16 numerical possibilities, and 8-bit resolution provides 256.

What is a MIDI message?

By combining bytes together, MIDI messages are constructed. There are 2 categories of MIDI message:

1. Channel messages are mostly to do with performance information sent on MIDI channels.
2. System messages handle system-wide jobs like MIDI Timecode and are not sent on channels.

Most MIDI messages are constructed from 1 status byte followed by 1 or more data bytes. The first byte is a status byte (it identifies the nature of the instruction, e.g. Note On or Control Change); the following data bytes provide data pertinent to that instruction. The first digit (most significant bit or MSB) of a status byte is always 1, while that of a data byte is always 0. The seven remaining digits of a data byte thus give a range of 0 to 127 (128 values).

Of course, MIDI messages are sent against a clock/timing protocol and as such are relative to time. Logic's Matrix (piano roll) editor shows MIDI note on, note off and velocity data relative to time in an easy to understand graphic format.

Example MIDI message

Here is an example of a simple 3-byte MIDI message comprising a Status byte and 2 Data bytes. This message is telling a sound module set to respond on MIDI channel 1 to start playing a note (C3) at a velocity of 101.
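The three bytes of the message just described can be written out in a short Python sketch. The helper names are hypothetical, and two details are assumptions not stated in this handout: the Note On status value 0x90 (with the channel stored in the lower four bits, counted from 0) comes from the MIDI 1.0 specification, and C3 is taken to be note number 60, as in Logic's note naming; some manufacturers call note 60 C4 instead.

```python
NOTE_ON = 0x90  # binary 1001 0000: the upper four bits mean "Note On"


def note_on(channel, note, velocity):
    """Build a 3-byte Note On message. `channel` is 1-16, as shown on
    instruments; it is stored as 0-15 in the lower four bits."""
    if not 1 <= channel <= 16:
        raise ValueError("channel must be 1-16")
    if not (0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("data bytes must be 0-127 (their first bit is always 0)")
    status = NOTE_ON | (channel - 1)
    return bytes([status, note, velocity])


def describe_status(status):
    """Split the status byte of a channel message into (message type, channel 1-16)."""
    if status < 0x80:
        raise ValueError("not a status byte: its first bit is 0")
    return status & 0xF0, (status & 0x0F) + 1


message = note_on(channel=1, note=60, velocity=101)
bits = " ".join(format(byte, "08b") for byte in message)
print(bits)  # 10010000 00111100 01100101
```

The first printed byte begins with 1 (a status byte) and the other two begin with 0 (data bytes), matching the rule given above.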

Channel Voice Messages

Whenever a MIDI instrument is played, its controllers (keyboard, pitch wheel etc.) transmit channel voice messages. There are 7 channel voice message types:

1. Note On
2. Note Off
3. Polyphonic key pressure
4. Aftertouch
5. Program change
6. Control change
7. Pitch bend change

1. Note-On
This message indicates the beginning of a MIDI note and consists of 3 bytes. The 1st byte (Status byte) specifies a note-on event and channel. The 2nd byte specifies the number of the note played. The 3rd byte specifies the velocity with which the note was played.

2. Note-Off
This message indicates the end of a MIDI note. The 1st byte (Status byte) specifies a note-off event and channel. The 2nd byte specifies the number of the note played. The 3rd byte specifies the release velocity.

3. Polyphonic key pressure
Key pressure or aftertouch, with each key sending its own independent message.

4. Aftertouch
Like polyphonic key pressure, this message is sent when additional pressure is applied to a key that is already being held down. However, there is only one sensor for the whole keyboard, i.e. it is monophonic aftertouch, so a single value applies to every note on the channel.

5. Program change
These messages will change the programs of devices responding on the channel on which they are sent.

6. Control change
These messages alter the way a note sounds by sliding it, sustaining it, modulating it etc. The first 32 controller numbers (0 to 31) are coarse adjustments for various parameters. The numbers from 32 to 63 are their respective fine adjustments (i.e. add 32 to the coarse adjust number to get the fine adjust number, except for the General Purpose Sliders, which have no fine adjust equivalents). For example, the coarse adjustment for Channel Volume is controller number 7. The fine adjustment for Channel Volume is controller number 39 (7+32). Many devices use only the coarse adjustments, and ignore the fine adjustments.

Here are the numbers for all defined MIDI control change controllers:

0  Bank Select (coarse)
1  Modulation Wheel (coarse)
2  Breath controller (coarse)
4  Foot Pedal (coarse)
5  Portamento Time (coarse)
6  Data Entry (coarse)
7  Volume (coarse)
8  Balance (coarse)
10  Pan position (coarse)
11  Expression (coarse)
12  Effect Control 1 (coarse)
13  Effect Control 2 (coarse)
16  General Purpose Slider 1
17  General Purpose Slider 2
18  General Purpose Slider 3
19  General Purpose Slider 4
32  Bank Select (fine)
33  Modulation Wheel (fine)
34  Breath controller (fine)
36  Foot Pedal (fine)
37  Portamento Time (fine)
38  Data Entry (fine)
39  Volume (fine)
40  Balance (fine)
42  Pan position (fine)
43  Expression (fine)
44  Effect Control 1 (fine)
45  Effect Control 2 (fine)
64  Hold Pedal (on/off)
65  Portamento (on/off)
66  Sostenuto Pedal (on/off)
67  Soft Pedal (on/off)
68  Legato Pedal (on/off)
69  Hold 2 Pedal (on/off)
70  Sound Variation
71  Sound Timbre
72  Sound Release Time
73  Sound Attack Time
74  Sound Brightness
75  Sound Control 6
76  Sound Control 7
77  Sound Control 8
78  Sound Control 9
79  Sound Control 10
80  General Purpose Button 1 (on/off)
81  General Purpose Button 2 (on/off)
82  General Purpose Button 3 (on/off)
83  General Purpose Button 4 (on/off)
91  Effects Level
92  Tremolo Level
93  Chorus Level
94  Celeste Level
95  Phaser Level
96  Data Button increment
97  Data Button decrement
98  Non-registered Parameter (fine)
99  Non-registered Parameter (coarse)
100  Registered Parameter (fine)
101  Registered Parameter (coarse)
120  All Sound Off
121  All Controllers Off
122  Local Keyboard (on/off)
123  All Notes Off
124  Omni Mode Off
125  Omni Mode On
126  Mono Operation
127  Poly Operation
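As a hedged illustration of the coarse/fine pairing described for control change messages, the sketch below splits one high-resolution value into the two messages that would carry it. The function name is hypothetical, and the Control Change status value 0xB0 is taken from the MIDI 1.0 specification rather than from this handout.

```python
CONTROL_CHANGE = 0xB0  # upper four bits of a Control Change status byte


def control_change_pair(channel, coarse_controller, value):
    """Split a 14-bit value (0-16383) into a coarse and a fine Control Change
    message. The fine controller number is the coarse number plus 32."""
    if not 1 <= channel <= 16:
        raise ValueError("channel must be 1-16")
    if not 0 <= coarse_controller <= 31:
        raise ValueError("coarse controllers are numbered 0-31")
    if not 0 <= value <= 16383:
        raise ValueError("value must fit in 14 bits (0-16383)")
    status = CONTROL_CHANGE | (channel - 1)
    coarse = (value >> 7) & 0x7F  # upper 7 bits
    fine = value & 0x7F           # lower 7 bits
    return (bytes([status, coarse_controller, coarse]),
            bytes([status, coarse_controller + 32, fine]))


# Channel Volume: coarse controller 7, fine controller 39 (7 + 32).
coarse_msg, fine_msg = control_change_pair(channel=1, coarse_controller=7, value=12850)
```

A device that ignores fine adjustments would act on the first message alone, treating the volume as 100 out of 127.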

7. Pitch bend
These messages are transmitted whenever an instrument's pitch wheel is moved. While almost all channel voice messages assign a single data byte to a single parameter such as key number or velocity (128 values, 0 to 127, because 2^7 = 128), the exception is pitch bend. If pitch bend used only 128 values, discrete steps might be heard if the bend range were large (this range is set on the instrument, not by MIDI). So the 7 data bits of the first data byte (the least significant byte or LSB) are combined with the 7 data bits of the second data byte (the most significant byte or MSB) to create a 14-bit data value, giving pitch bend data a range of 16,384 values (2^14).

MIDI Files

MIDI files contain information on notes, velocities and controller codes, which can be interpreted by any program that supports the Standard MIDI File format. These are accessed and created by the import and export MIDI file commands. MIDI composition and arrangement takes advantage of MIDI 1.0 and General MIDI (GM) technology to allow musical data files to be shared among many different devices, overcoming incompatibilities between electronic instruments by using a standard, portable set of commands and parameters. Because the music is stored as instructions rather than recorded audio waveforms, the data size of the files is very small by comparison.

MIDI FILES VS. AUDIO FILES

The Pros and Cons:

Advantages of MIDI:

File Size - Because MIDI files simply contain the data about the music, they are much smaller than audio files.

Fully Editable - You can edit and change the music of a MIDI file in ways which are impossible with audio files. If you want to change a note in the middle of the track from a D to a C, you can do it in a snap. You can also adjust the tempo to make the music play faster without raising its pitch, so it will not sound like the Chipmunks are performing it.

Disadvantages of MIDI:

Limited Timbres - When creating MIDI files, one is limited to the GM sound bank.
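To give a rough sense of the file-size advantage listed above, the following sketch assembles a complete one-note Standard MIDI File in memory and compares its size with the same half second of uncompressed audio. It is an illustration under stated assumptions: the function names are hypothetical, the chunk layout follows the Standard MIDI Files 1.0 specification, the note plays at the default tempo of 120 beats per minute, and the audio figure assumes 16-bit stereo at 44,100 Hz.

```python
import struct

TICKS_PER_QUARTER = 480  # timing resolution (an assumed, commonly used value)


def variable_length(value):
    """Encode a delta time as a MIDI variable-length quantity (7 bits per byte)."""
    out = [value & 0x7F]
    value >>= 7
    while value:
        out.insert(0, (value & 0x7F) | 0x80)  # set the top bit on all but the last byte
        value >>= 7
    return bytes(out)


def one_note_midi_file(note=60, velocity=101, ticks=TICKS_PER_QUARTER):
    """Return the bytes of a format 0 MIDI file holding a single note."""
    # Header chunk: data length 6, format 0, one track, ticks per quarter note.
    header = b"MThd" + struct.pack(">IHHH", 6, 0, 1, TICKS_PER_QUARTER)
    events = (
        variable_length(0) + bytes([0x90, note, velocity])   # Note On, channel 1
        + variable_length(ticks) + bytes([0x80, note, 0x40])  # Note Off after `ticks`
        + variable_length(0) + bytes([0xFF, 0x2F, 0x00])      # End of Track
    )
    track = b"MTrk" + struct.pack(">I", len(events)) + events
    return header + track


midi_bytes = one_note_midi_file()
# Half a second (one quarter note at 120 bpm) of 16-bit stereo audio at 44,100 Hz.
audio_bytes = int(44100 * 2 * 2 * 0.5)
print(len(midi_bytes), audio_bytes)  # 35 88200
```

Real files carry many more events, and compressed audio formats are smaller than this raw figure, but the difference in scale remains large.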

You might also like

- Mark Vail - The SynthesizerDocument463 pagesMark Vail - The SynthesizerSoundslike Rekon100% (11)

- Polysynthi Manual PDFDocument19 pagesPolysynthi Manual PDFZack DagobaNo ratings yet

- RA The Book Vol 3: The Recording Architecture Book of Studio DesignFrom EverandRA The Book Vol 3: The Recording Architecture Book of Studio DesignNo ratings yet

- Diatonic Harmony 1Document1 pageDiatonic Harmony 1simonballemusicNo ratings yet

- Drum Programming LectureDocument16 pagesDrum Programming LectureEli Stine100% (6)

- Read Violin MusicDocument1 pageRead Violin MusicDaniela SánchezNo ratings yet

- History & Development of ReverbDocument2 pagesHistory & Development of Reverbsimonballemusic50% (2)

- History & Development of Delay FXDocument2 pagesHistory & Development of Delay FXsimonballemusicNo ratings yet

- Review of Digital Emulation of Vacuum-Tube Audio Amplifiers and PDFDocument14 pagesReview of Digital Emulation of Vacuum-Tube Audio Amplifiers and PDFZoran Dimic100% (1)

- DIY Bass TrapDocument22 pagesDIY Bass Trapdipling2No ratings yet

- Buchla ManualDocument53 pagesBuchla ManualZack Dagoba100% (1)

- Moog - Electronic Music Composition and PerformanceDocument4 pagesMoog - Electronic Music Composition and PerformanceWill BennettNo ratings yet

- Unit 4 Composition Written Account TemplateDocument5 pagesUnit 4 Composition Written Account TemplatesimonballemusicNo ratings yet

- African SymphonyDocument7 pagesAfrican SymphonyJesus SaenzNo ratings yet

- Festival de CaretaDocument2 pagesFestival de CaretaRamon Taveras MedinaNo ratings yet

- The Development of Technology Based Music 1 Synths MIDIDocument6 pagesThe Development of Technology Based Music 1 Synths MIDIBenJohnsonNo ratings yet

- Myagi TheSynthPrimerDocument17 pagesMyagi TheSynthPrimerBernard Marx100% (2)

- Sound DesignDocument10 pagesSound DesignRichardDunnNo ratings yet

- Choosing The Right Reverb "How Best To Use Different Reverbs"Document9 pagesChoosing The Right Reverb "How Best To Use Different Reverbs"adorno5100% (1)

- The Beginner's Guide To SynthsDocument11 pagesThe Beginner's Guide To SynthsAnda75% (4)

- Sound Synthesis Theory-IntroductionDocument2 pagesSound Synthesis Theory-IntroductionagapocorpNo ratings yet

- Sound Design TipsDocument3 pagesSound Design TipsAntoine100% (1)

- ReverbDocument3 pagesReverbMatt GoochNo ratings yet

- SAE Institute - LoudspeakersDocument8 pagesSAE Institute - LoudspeakersSequeTon Enlo100% (1)

- Differential MicrophonesDocument5 pagesDifferential Microphonesklepkoj100% (1)

- DIY MasteringDocument10 pagesDIY MasteringEarl SteppNo ratings yet

- Psycho Acoustic MODELS and Non-Linear - Human - HearingDocument14 pagesPsycho Acoustic MODELS and Non-Linear - Human - HearingKazzuss100% (1)

- Introduction To Ambisonics - Rev. 2015Document25 pagesIntroduction To Ambisonics - Rev. 2015Francesca OrtolaniNo ratings yet

- EQitDocument5 pagesEQitThermionicistNo ratings yet

- Sound Synthesis TheoryDocument31 pagesSound Synthesis TheoryDavid Wolfswinkel100% (2)

- Wireless Microphone Systems and Personal Monitor System For Houses of WorshipDocument55 pagesWireless Microphone Systems and Personal Monitor System For Houses of Worshipwilsonchiu7No ratings yet

- Ambisonics 2 Studio TechniquesDocument13 pagesAmbisonics 2 Studio TechniquesaffiomaNo ratings yet

- D4 - Live SoundDocument6 pagesD4 - Live SoundGeorge StrongNo ratings yet

- SSM Additive SynthesisDocument10 pagesSSM Additive SynthesisMeneses LuisNo ratings yet

- Sound Synthesis MethodsDocument8 pagesSound Synthesis MethodsjerikleshNo ratings yet

- BBC Engineering Training Manual - Microphones (Robertson)Document380 pagesBBC Engineering Training Manual - Microphones (Robertson)Nino Rios100% (1)

- Music Synthesizers - AMODAC - Delton T HortonDocument349 pagesMusic Synthesizers - AMODAC - Delton T HortonAndre50% (2)

- Electronic MusicDocument19 pagesElectronic MusicClark Vincent ConstantinoNo ratings yet

- Audio Spotlighting NewDocument30 pagesAudio Spotlighting NewAnil Dsouza100% (1)

- Jecklin DiskDocument4 pagesJecklin DiskTity Cristina Vergara100% (1)

- RealTraps - Room Measuring SeriesDocument16 pagesRealTraps - Room Measuring SeriesstocazzostanisNo ratings yet

- Synthesizer: A Pattern Language For Designing Digital Modular Synthesis SoftwareDocument11 pagesSynthesizer: A Pattern Language For Designing Digital Modular Synthesis SoftwareSoundar SrinivasanNo ratings yet

- DIY VU MetersDocument2 pagesDIY VU MetersXefNedNo ratings yet

- HiFi Audio Circuit Design PDFDocument12 pagesHiFi Audio Circuit Design PDFleonel montilla100% (1)

- 2 AES ImmersiveDocument5 pages2 AES ImmersiveDavid BonillaNo ratings yet

- 12 Less-Usual Sound-Design TipsDocument14 pages12 Less-Usual Sound-Design Tipssperlingrafael100% (1)

- Microphone Techniques Live Sound Reinforcement SHURE PDFDocument39 pagesMicrophone Techniques Live Sound Reinforcement SHURE PDFNenad Grujic100% (1)

- Sound Design For Film and TelevisionDocument14 pagesSound Design For Film and TelevisionRonald Jesus100% (1)

- 1990 SDocument7 pages1990 SsimonballemusicNo ratings yet

- Recording StereoDocument42 pagesRecording StereoovimikyNo ratings yet

- Microphones and Miking TechniquesDocument32 pagesMicrophones and Miking TechniquesNameeta Parate100% (1)

- History of GuitarsDocument11 pagesHistory of GuitarsMartin LewisNo ratings yet

- The Art of MixingDocument11 pagesThe Art of MixingYesse BranchNo ratings yet

- The Story of Stereo, John Sunier, 1960, 161 PagesDocument161 pagesThe Story of Stereo, John Sunier, 1960, 161 Pagescoffeecup44No ratings yet

- HD Recording and Sampling TipsDocument16 pagesHD Recording and Sampling TipsBrian NewtonNo ratings yet

- Granular Synthesis - Sound On SoundDocument13 pagesGranular Synthesis - Sound On SoundMario DimitrijevicNo ratings yet

- A Paradigm For Physical Interaction With Sound in 3-D Audio SpaceDocument9 pagesA Paradigm For Physical Interaction With Sound in 3-D Audio SpaceRicardo Cubillos PeñaNo ratings yet

- Recording, Eq & FX KeywordsDocument7 pagesRecording, Eq & FX KeywordssimonballemusicNo ratings yet

- Analog Bass Drum Workshop UpdatedDocument7 pagesAnalog Bass Drum Workshop UpdatedJuan Duarte ReginoNo ratings yet

- Kosmas Lapatas The Art of Mixing Amp Mastering Book PDF FreeDocument59 pagesKosmas Lapatas The Art of Mixing Amp Mastering Book PDF FreeĐặng Phúc NguyênNo ratings yet

- RA The Book Vol 2: The Recording Architecture Book of Studio DesignFrom EverandRA The Book Vol 2: The Recording Architecture Book of Studio DesignNo ratings yet

- The History of CompressionDocument4 pagesThe History of Compressionsimonballemusic100% (2)

- Music Department Work PlanDocument6 pagesMusic Department Work PlansimonballemusicNo ratings yet

- As Trial Recording Task GenericDocument2 pagesAs Trial Recording Task GenericsimonballemusicNo ratings yet

- July IV 2012 Concert FinalDocument1 pageJuly IV 2012 Concert FinalsimonballemusicNo ratings yet

- Drum and Bass RevisionDocument3 pagesDrum and Bass Revisionsimonballemusic100% (1)

- As Trial Recording Task GenericDocument2 pagesAs Trial Recording Task GenericsimonballemusicNo ratings yet

- A2 Music Tech Brief 2012 2013Document12 pagesA2 Music Tech Brief 2012 2013Hannah Louise Groarke-YoungNo ratings yet

- As Music Tech Brief 2012 2013Document20 pagesAs Music Tech Brief 2012 2013Hannah Louise Groarke-YoungNo ratings yet

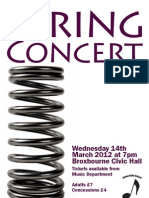

- Spring 2012 ConcertDocument2 pagesSpring 2012 ConcertsimonballemusicNo ratings yet

- Intro MidiDocument16 pagesIntro Midiwizard_of_keysNo ratings yet

- As Music Tech. Logbook 2011 TemplateDocument11 pagesAs Music Tech. Logbook 2011 TemplatesimonballemusicNo ratings yet

- The 1980s: New WaveDocument7 pagesThe 1980s: New WavequartalistNo ratings yet

- Diatonic Melody FragmentsDocument1 pageDiatonic Melody FragmentssimonballemusicNo ratings yet

- Distribution FormatsDocument2 pagesDistribution Formatsmws_97No ratings yet

- Tips From A Mastering EngineerDocument2 pagesTips From A Mastering EngineersimonballemusicNo ratings yet

- A Revolution in Pop Music - 1960sDocument3 pagesA Revolution in Pop Music - 1960ssimonballemusicNo ratings yet

- The Late 1960s: You Can Do What You Want ToDocument4 pagesThe Late 1960s: You Can Do What You Want TosimonballemusicNo ratings yet

- Concert Details For ParentsDocument7 pagesConcert Details For ParentssimonballemusicNo ratings yet

- Rock and RollDocument3 pagesRock and RollsimonballemusicNo ratings yet

- History of RecordingDocument6 pagesHistory of RecordingMatt Gooch0% (1)

- The Development of Technology-Based Music 3 - InstrumentsDocument5 pagesThe Development of Technology-Based Music 3 - Instrumentssimonballemusic100% (1)

- Sri Lankan: Endemic Music InstrumentsDocument2 pagesSri Lankan: Endemic Music InstrumentstharakaNo ratings yet

- 750G HDDDocument9 pages750G HDDMoses AbdulkassNo ratings yet

- NYSSMA SGMI Band - BeginnerDocument3 pagesNYSSMA SGMI Band - BeginnerkittlesonaNo ratings yet

- Bum Bum Tam TamDocument28 pagesBum Bum Tam TamAugusto PiccininiNo ratings yet

- Holy Wars TabDocument15 pagesHoly Wars TabgastonNo ratings yet

- Emily Dolan Davies: Music Production GuideDocument36 pagesEmily Dolan Davies: Music Production Guideluca1114No ratings yet

- Epic Sax Guy: Family BrassDocument4 pagesEpic Sax Guy: Family BrassTheMega AndroidNo ratings yet

- Mchenry Stalmox PDFDocument2 pagesMchenry Stalmox PDFdada trapaNo ratings yet

- Moten Swing 4vDocument20 pagesMoten Swing 4vFrankNo ratings yet

- Gonna Fly Now Maynard FergusonDocument24 pagesGonna Fly Now Maynard FergusonTens AiepNo ratings yet

- POD HD400 GuideDocument83 pagesPOD HD400 GuideChai Wei JunNo ratings yet

- The Names of Instruments and Voices in Foreign LanguagesDocument5 pagesThe Names of Instruments and Voices in Foreign LanguageslapalapalNo ratings yet

- A Group Course For BeginnersDocument3 pagesA Group Course For BeginnerslucasNo ratings yet

- November Rain Concert Band Full ScoreDocument19 pagesNovember Rain Concert Band Full ScoreJoaquin GuerraNo ratings yet

- Touch The Sky Chords (Ver 2) by Julie FowlisDocument3 pagesTouch The Sky Chords (Ver 2) by Julie FowlisjoostNo ratings yet

- 10 Kick Ass Hair Metal Riffs! PDFDocument9 pages10 Kick Ass Hair Metal Riffs! PDFian100% (1)

- March Militaire - Brass5 - Aaron - SchubertDocument10 pagesMarch Militaire - Brass5 - Aaron - SchubertCraig_Gill13No ratings yet

- Toxic BiohazardDocument16 pagesToxic BiohazardDj-XienefMixNo ratings yet

- 2015 Castelluccia Menilmontant: Home Guitars Gypsy Jazz Guitars: European and North AmericanDocument2 pages2015 Castelluccia Menilmontant: Home Guitars Gypsy Jazz Guitars: European and North AmericanowirwojNo ratings yet

- Tenor Sax Book SampleDocument9 pagesTenor Sax Book SampleRudmar De Aguiar RoussengNo ratings yet

- 1 It Ain't Necessarily So Score and Parts - Copie (Glissé (E) S)Document22 pages1 It Ain't Necessarily So Score and Parts - Copie (Glissé (E) S)spacehunter2014No ratings yet

- Polka - PolkaDocument5 pagesPolka - PolkaLucas DonatoNo ratings yet

- El Honor de Un Caballero 2007Document50 pagesEl Honor de Un Caballero 2007José FranciscoNo ratings yet

- Music of LebanonDocument2 pagesMusic of LebanonruahNo ratings yet

- Guitar Care For BeginnersDocument13 pagesGuitar Care For BeginnersDouglas HillNo ratings yet

- Stravinsky, Igor - MovementsDocument13 pagesStravinsky, Igor - MovementsnormancultNo ratings yet