Data Structures Related Algorithms Correctness Time Space Complexity

Uploaded by Vikas Saini

CS 130 A: Data Structures and Algorithms

Focus of the course:

Data structures and related algorithms

Correctness and (time and space) complexity

Prerequisites

CS 20: stacks, queues, lists, binary search trees,

CS 40: functions, recurrence equations, induction,

CS 60: C, C++, and UNIX

1

Course Organization

Grading:

See course web page

Policy:

No late homeworks.

Cheating and plagiarism: F grade and disciplinary action

Online info:

Homepage: www.cs.ucsb.edu/~cs130a

Email: cs130a@cs.ucsb.edu

Teaching assistants: See course web page

2

Introduction

A famous quote: Program = Algorithm + Data Structure.

All of you have programmed, and thus have already been exposed to algorithms and data structures.

Perhaps you didn't see them as separate entities;

Perhaps you saw data structures as simple programming

constructs (provided by STL--standard template library).

However, data structures are quite distinct from algorithms,

and very important in their own right.

3

Objectives

The main focus of this course is to introduce you to a systematic study of algorithms and data structures.

The two guiding principles of the course are: abstraction and

formal analysis.

Abstraction: We focus on topics that are broadly applicable

to a variety of problems.

Analysis: We want a formal way to compare two objects (data

structures or algorithms).

In particular, we will worry about "always correct"-ness, and

worst-case bounds on time and memory (space).

4

Textbook

Textbook for the course is:

Data Structures and Algorithm Analysis in C++

by Mark Allen Weiss

But I will use material from other books and

research papers, so the ultimate source should be

my lectures.

5

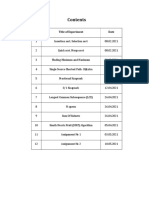

Course Outline

C++ Review (Ch. 1)

Algorithm Analysis (Ch. 2)

Sets with insert/delete/member: Hashing (Ch. 5)

Sets in general: Balanced search trees (Ch. 4 and 12.2)

Sets with priority: Heaps, priority queues (Ch. 6)

Graphs: Shortest-path algorithms (Ch. 9.1-9.3.2)

Sets with disjoint union: Union/find trees (Ch. 8.1-8.5)

Graphs: Minimum spanning trees (Ch. 9.5)

Sorting (Ch. 7)

6

130a: Algorithm Analysis

Foundations of Algorithm Analysis and Data Structures.

Analysis:

How to predict an algorithm's performance

How well an algorithm scales up

How to compare different algorithms for a problem

Data Structures

How to efficiently store, access, manage data

Data structures affect an algorithm's performance

7

Example Algorithms

Two algorithms for computing the Factorial

Which one is better?

int factorial (int n) {
    if (n <= 1) return 1;
    else return n * factorial(n-1);
}

int factorial1 (int n) {
    if (n <= 1) return 1;
    else {
        int fact = 1;                   // running product
        for (int k = 2; k <= n; k++)
            fact *= k;
        return fact;
    }
}

8

Examples of famous algorithms

Constructions of Euclid

Newton's root finding

Fast Fourier Transform

Compression (Huffman, Lempel-Ziv, GIF, MPEG)

DES, RSA encryption

Simplex algorithm for linear programming

Shortest Path Algorithms (Dijkstra, Bellman-Ford)

Error correcting codes (CDs, DVDs)

TCP congestion control, IP routing

Pattern matching (Genomics)

Search Engines

9

Role of Algorithms in Modern World

Enormous amount of data

E-commerce (Amazon, Ebay)

Network traffic (telecom billing, monitoring)

Database transactions (Sales, inventory)

Scientific measurements (astrophysics, geology)

Sensor networks. RFID tags

Bioinformatics (genome, protein bank)

Amazon hired first Chief Algorithms Officer (Udi Manber)

10

A real-world Problem

Communication in the Internet

Message (email, ftp) broken down into IP packets.

Sender/receiver identified by IP address.

The packets are routed through the Internet by special

computers called Routers.

Each packet is stamped with its destination address, but not

the route.

Because the Internet topology and network load are constantly changing, routers must discover routes dynamically.

What should the Routing Table look like?

11

IP Prefixes and Routing

Each router is really a switch: it receives packets at several

input ports, and appropriately sends them out to output

ports.

Thus, for each packet, the router needs to transfer the

packet to that output port that gets it closer to its

destination.

Should each router keep a table: IP address x Output Port?

How big is this table?

When a link or router fails, how much information would need

to be modified?

A router typically forwards several million packets/sec!

12

Data Structures

The IP packet forwarding is a Data Structure problem!

Efficiency and scalability are very important.

Similarly, how does Google find the documents matching your

query so fast?

Uses sophisticated algorithms to create index structures,

which are just data structures.

Algorithms and data structures are ubiquitous.

With the data glut created by the new technologies, the need

to organize, search, and update MASSIVE amounts of

information FAST is more severe than ever before.

13

Algorithms to Process these Data

Which are the top K sellers?

Correlation between time spent at a web site and purchase

amount?

Which flows at a router account for > 1% traffic?

Did source S send a packet in last s seconds?

Send an alarm if any international arrival matches a profile

in the database

Similarity matches against genome databases

Etc.

14

Max Subsequence Problem

Given a sequence of integers A1, A2, ..., An, find the maximum possible sum of a contiguous subsequence Ai, ..., Aj.

Numbers can be negative.

You want a contiguous chunk with largest sum.

Example: -2, 11, -4, 13, -5, -2

The answer is 20 (subseq. A2 through A4).

We will discuss 4 different algorithms, with time complexities $O(n^3)$, $O(n^2)$, $O(n \log n)$, and $O(n)$.

With n = $10^6$, algorithm 1 may take > 10 years; algorithm 4 will take a fraction of a second!

15

int maxSum = 0;

for( int i = 0; i < a.size( ); i++ )

for( int j = i; j < a.size( ); j++ )

{

int thisSum = 0;

for( int k = i; k <= j; k++ )

thisSum += a[ k ];

if( thisSum > maxSum )

maxSum = thisSum;

}

return maxSum;

Algorithm 1 for Max Subsequence Sum

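Given $A_1, \ldots, A_n$, find the maximum value of $A_i + A_{i+1} + \cdots + A_j$

0 if the max value is negative

Time complexity: $O(n^3)$

Cost of each step: the initializations, the comparison, and the update of maxSum are each $O(1)$; the innermost loop over k is $O(j - i)$.

Middle loop over j: $\sum_{j=i}^{n-1} O(j - i)$

Outer loop over i: $\sum_{i=0}^{n-1} \sum_{j=i}^{n-1} O(j - i)$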

16

Algorithm 2

Idea: Given sum from i to j-1, we can compute the

sum from i to j in constant time.

This eliminates one nested loop, and reduces the

running time to $O(n^2)$.

int maxSum = 0;
for( int i = 0; i < a.size( ); i++ )
{
    int thisSum = 0;    // sum of a[i..j], extended one element at a time
    for( int j = i; j < a.size( ); j++ )
    {
        thisSum += a[ j ];
        if( thisSum > maxSum )
            maxSum = thisSum;
    }
}
return maxSum;

17

Algorithm 3

This algorithm uses the divide-and-conquer paradigm.

Suppose we split the input sequence at midpoint.

The max subsequence is entirely in the left half,

entirely in the right half, or it straddles the

midpoint.

Example:

left half | right half

4 -3 5 -2 | -1 2 6 -2

Max in left is 6 (A1 through A3); max in right is 8 (A6 through A7). But the straddling max is 11 (A1 through A7).

18

Algorithm 3 (cont.)

Example:

left half | right half

4 -3 5 -2 | -1 2 6 -2

Max subsequences in each half found by recursion.

How do we find the straddling max subsequence?

Key Observation:

Left half of the straddling sequence is the max

subsequence ending with -2.

Right half is the max subsequence beginning with -1.

A linear scan lets us compute these in O(n) time.
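The slides give no code for Algorithm 3, so the following is only a sketch of how the three cases (left, right, straddling) could be combined. The function names `maxSubSumRec` and `maxSubSumDivideAndConquer` are illustrative, ranges are half-open, and the result is 0 when every sum is negative, matching the convention of the other algorithms.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Maximum contiguous subsequence sum within a[lo..hi) (half-open range).
// Returns 0 for an empty range or when every sum is negative.
long long maxSubSumRec(const std::vector<int>& a, std::size_t lo, std::size_t hi) {
    if (hi - lo == 0) return 0;
    if (hi - lo == 1) return std::max<long long>(0, a[lo]);

    const std::size_t mid = lo + (hi - lo) / 2;
    const long long leftBest = maxSubSumRec(a, lo, mid);    // entirely in left half
    const long long rightBest = maxSubSumRec(a, mid, hi);   // entirely in right half

    // Best run ending at the last element of the left half (0 if all are negative).
    long long sum = 0, leftBorder = 0;
    for (std::size_t k = mid; k > lo; --k) {
        sum += a[k - 1];
        leftBorder = std::max(leftBorder, sum);
    }

    // Best run starting at the first element of the right half (0 if all are negative).
    sum = 0;
    long long rightBorder = 0;
    for (std::size_t k = mid; k < hi; ++k) {
        sum += a[k];
        rightBorder = std::max(rightBorder, sum);
    }

    return std::max({leftBest, rightBest, leftBorder + rightBorder});
}

long long maxSubSumDivideAndConquer(const std::vector<int>& a) {
    return maxSubSumRec(a, 0, a.size());
}
```

On the example 4 -3 5 -2 | -1 2 6 -2, the two border scans yield 4 and 7, so the straddling candidate is 11, which beats the left (6) and right (8) results.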

19

Algorithm 3: Analysis

The divide and conquer is best analyzed through

recurrence:

T(1) = 1

T(n) = 2T(n/2) + O(n)

This recurrence solves to T(n) = O(n log n).
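One way to see the $O(n \log n)$ bound is to unroll the recurrence. The sketch below assumes n is a power of 2 and writes the $O(n)$ term as $cn$ for some constant $c$ (both are simplifying assumptions, not stated on the slide).

```latex
\begin{aligned}
T(n) &= 2T(n/2) + cn \\
     &= 4T(n/4) + 2cn \\
     &= 8T(n/8) + 3cn \\
     &\;\;\vdots \\
     &= 2^k\,T(n/2^k) + k\,cn \\
     &= n\,T(1) + cn\log_2 n \qquad (k = \log_2 n) \\
     &= O(n \log n)
\end{aligned}
```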

20

Algorithm 4

Time complexity clearly O(n)

But why does it work? I.e. proof of correctness.

2, 3, -2, 1, -5, 4, 1, -3, 4, -1, 2

int maxSum = 0, thisSum = 0;

for( int j = 0; j < a.size( ); j++ )

{

thisSum += a[ j ];

if ( thisSum > maxSum )

maxSum = thisSum;

else if ( thisSum < 0 )

thisSum = 0;

}

return maxSum;

21

Proof of Correctness

Max subsequence cannot start or end at a negative Ai.

More generally, the max subsequence cannot have a prefix

with a negative sum.

Ex: -2 11 -4 13 -5 -2

Thus, if we ever find that Ai through Aj sums to < 0, then we

can advance i to j+1

Proof. Suppose j is the first index after i at which the sum $A_i + \cdots + A_j$ becomes < 0.

The max subsequence cannot start at any p with i < p <= j, because the sum of $A_i$ through $A_{p-1}$ is nonnegative, so starting at i would have been at least as good.
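To make the "advance i to j+1" step concrete, here is a sketch of the single-pass scan that also records where the best run lies. The struct `MaxRun` and the function `maxSubSumWithRange` are illustrative names, not from the slides; indices are 0-based and the range is half-open.

```cpp
#include <cstddef>
#include <vector>

// Best sum found, plus the half-open index range [begin, end) that attains it.
// An empty range with sum 0 means no positive-sum run exists.
struct MaxRun {
    long long sum;
    std::size_t begin;
    std::size_t end;
};

MaxRun maxSubSumWithRange(const std::vector<int>& a) {
    MaxRun best{0, 0, 0};
    long long thisSum = 0;
    std::size_t i = 0;  // start of the current candidate run
    for (std::size_t j = 0; j < a.size(); ++j) {
        thisSum += a[j];
        if (thisSum > best.sum) {
            best = {thisSum, i, j + 1};
        } else if (thisSum < 0) {
            // The run a[i..j] has a negative sum, so no best run starts in it:
            // discard it and advance i to j + 1.
            thisSum = 0;
            i = j + 1;
        }
    }
    return best;
}
```

For -2 11 -4 13 -5 -2 this returns sum 20 over the range [1, 4), that is, A2 through A4 in the slides' 1-based numbering.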

22

Algorithm 4

int maxSum = 0, thisSum = 0;

for( int j = 0; j < a.size( ); j++ )

{

thisSum += a[ j ];

if ( thisSum > maxSum )

maxSum = thisSum;

else if ( thisSum < 0 )

thisSum = 0;

}

return maxSum;

The algorithm resets whenever prefix is < 0.

Otherwise, it forms new sums and updates

maxSum in one pass.

23

Why Efficient Algorithms Matter

Suppose N = $10^6$

A PC can read/process N records in 1 sec.

But if some algorithm does N*N computation, then it takes 1M seconds, about 11.6 days!!!

100 City Traveling Salesman Problem.

A supercomputer checking 100 billion tours/sec still requires $10^{100}$ years!

Fast factoring algorithms can break encryption schemes.

Algorithms research determines what is safe code length. (>

100 digits)

24

How to Measure Algorithm Performance

What metric should be used to judge algorithms?

Length of the program (lines of code)

Ease of programming (bugs, maintenance)

Memory required

Running time

Running time is the dominant standard.

Quantifiable and easy to compare

Often the critical bottleneck

25

Abstraction

An algorithm may run differently depending on:

the hardware platform (PC, Cray, Sun)

the programming language (C, Java, C++)

the programmer (you, me, Bill Joy)

While different in detail, all hardware and prog models are

equivalent in some sense: Turing machines.

It suffices to count basic operations.

A crude but valuable measure of an algorithm's performance as a function of input size.

26

Average, Best, and Worst-Case

On which input instances should an algorithm's performance be judged?

Average case:

Real world distributions difficult to predict

Best case:

Seems unrealistic

Worst case:

Gives an absolute guarantee

We will use the worst-case measure.

27

Examples

Vector addition Z = A+B

for (int i=0; i<n; i++)

Z[i] = A[i] + B[i];

T(n) = c n

Vector (inner) multiplication z =A*B

z = 0;

for (int i=0; i<n; i++)

z = z + A[i]*B[i];

T(n) = $c_0 + c_1 n$

28

Examples

Vector (outer) multiplication $Z = A B^T$

for (int i=0; i<n; i++)
    for (int j=0; j<n; j++)
        Z[i][j] = A[i] * B[j];

T(n) = $c_2 n^2$

A program does all the above

T(n) = $c_0 + c_1 n + c_2 n^2$
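The counts behind these T(n) expressions can be checked by instrumenting the loops. The sketch below counts only multiplications as the "basic operation"; the struct `OpCount` and function `countMultiplications` are illustrative names, and the vector contents are arbitrary placeholders.

```cpp
#include <cstddef>
#include <vector>

// Number of multiplications performed by each routine for vectors of length n.
struct OpCount {
    std::size_t inner;  // inner product: one multiply per element
    std::size_t outer;  // outer product: one multiply per (i, j) pair
};

OpCount countMultiplications(std::size_t n) {
    std::vector<double> a(n, 1.0), b(n, 2.0);
    OpCount ops{0, 0};

    // Inner product: a single loop, so the count grows linearly in n.
    double z = 0.0;
    for (std::size_t i = 0; i < n; ++i) {
        z += a[i] * b[i];
        ++ops.inner;
    }
    (void)z;  // the value itself is not needed here

    // Outer product: two nested loops, so the count grows as n * n.
    std::vector<std::vector<double>> Z(n, std::vector<double>(n));
    for (std::size_t i = 0; i < n; ++i) {
        for (std::size_t j = 0; j < n; ++j) {
            Z[i][j] = a[i] * b[j];
            ++ops.outer;
        }
    }
    return ops;
}
```

Doubling n doubles the inner-product count but quadruples the outer-product count, which is the difference between the linear and quadratic terms.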

29

Simplifying the Bound

T(n) = $c_k n^k + c_{k-1} n^{k-1} + c_{k-2} n^{k-2} + \cdots + c_1 n + c_0$

too complicated

too many terms

Difficult to compare two expressions, each with

10 or 20 terms

Do we really need that many terms?

30

Simplifications

Keep just one term!

the fastest growing term (dominates the runtime)

No constant coefficients are kept

Constant coefficients affected by machines, languages,

etc.

Asymptotic behavior (as n gets large) is determined entirely by the leading term.

Example. T(n) = $10n^3 + n^2 + 40n + 800$

If n = 1,000, then T(n) = 10,001,040,800

error is 0.01% if we drop all but the $n^3$ term

In an assembly line the slowest worker determines the

throughput rate
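The error figure in the example can be checked directly: it is the share of T(n) contributed by the dropped lower-order terms at n = 1,000.

```latex
\frac{T(n) - 10n^3}{T(n)}
  = \frac{n^2 + 40n + 800}{10n^3 + n^2 + 40n + 800}
  = \frac{1{,}040{,}800}{10{,}001{,}040{,}800}
  \approx 1.04 \times 10^{-4} \approx 0.01\%
```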

31

Simplification

Drop the constant coefficient

Does not affect the relative order

32

Simplification

The faster growing term (such as $2^n$) eventually will outgrow the slower growing terms (e.g., 1000 n) no matter what their coefficients!

Put another way, given a certain increase in allocated time, a higher order algorithm will not reap the benefit by solving a much larger problem
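As a small illustration of the crossover, the sketch below finds the first n at which $2^n$ exceeds coef * n. The function name `crossover` is illustrative, and the code assumes 1 <= coef < 2^32 so that no 64-bit product overflows. Once $2^n$ is ahead it stays ahead, since doubling $2^n$ always gains at least as much as adding one more multiple of coef.

```cpp
#include <cstdint>

// Smallest n >= 1 with 2^n > coef * n.
// Assumes 1 <= coef < 2^32 so coef * n fits in 64 bits for every n tried.
std::uint64_t crossover(std::uint64_t coef) {
    std::uint64_t power = 2;  // holds 2^n, starting at n = 1
    for (std::uint64_t n = 1; n < 63; ++n, power *= 2) {
        if (power > coef * n) return n;
    }
    return 0;  // not reached for the coefficient range assumed above
}
```

For the slide's example, `crossover(1000)` is 14: $2^{13} = 8192 < 13000$, while $2^{14} = 16384 > 14000$.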

33

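Complexity and Tractability

Assume the computer does 1 billion ops per sec.

| n | T(n) = n | n log n | $n^2$ | $n^3$ | $n^4$ | $n^{10}$ | $2^n$ |
|---|---|---|---|---|---|---|---|
| 10 | .01μs | .03μs | .1μs | 1μs | 10μs | 10s | 1μs |
| 20 | .02μs | .09μs | .4μs | 8μs | 160μs | 2.84h | 1ms |
| 30 | .03μs | .15μs | .9μs | 27μs | 810μs | 6.83d | 1s |
| 40 | .04μs | .21μs | 1.6μs | 64μs | 2.56ms | 121d | 18m |
| 50 | .05μs | .28μs | 2.5μs | 125μs | 6.25ms | 3.1y | 13d |
| 100 | .1μs | .66μs | 10μs | 1ms | 100ms | 3171y | $4 \times 10^{13}$y |
| $10^3$ | 1μs | 9.96μs | 1ms | 1s | 16.67m | $3.17 \times 10^{13}$y | $32 \times 10^{283}$y |
| $10^4$ | 10μs | 130μs | 100ms | 16.67m | 115.7d | $3.17 \times 10^{23}$y | |
| $10^5$ | 100μs | 1.66ms | 10s | 11.57d | 3171y | $3.17 \times 10^{33}$y | |
| $10^6$ | 1ms | 19.92ms | 16.67m | 31.71y | $3.17 \times 10^{7}$y | $3.17 \times 10^{43}$y | |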

34

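| log n | n | n log n | $n^2$ | $n^3$ | $2^n$ |
|---|---|---|---|---|---|
| 0 | 1 | 0 | 1 | 1 | 2 |
| 1 | 2 | 2 | 4 | 8 | 4 |
| 2 | 4 | 8 | 16 | 64 | 16 |
| 3 | 8 | 24 | 64 | 512 | 256 |
| 4 | 16 | 64 | 256 | 4096 | 65,536 |
| 5 | 32 | 160 | 1,024 | 32,768 | 4,294,967,296 |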

[Figure: two plots of log n, n, n log n, $n^2$, $n^3$, and $2^n$ against n, one with a linear vertical axis and one with a logarithmic vertical axis]

35

Another View

More resources (time and/or processing power) translate into much larger problems solved only if complexity is low

| T(n) | Problem size solved in $10^3$ sec | Problem size solved in $10^4$ sec | Increase in problem size |
|---|---|---|---|
| 100n | 10 | 100 | 10 |
| 1000n | 1 | 10 | 10 |
| $5n^2$ | 14 | 45 | 3.2 |
| $n^3$ | 10 | 22 | 2.2 |
| $2^n$ | 10 | 13 | 1.3 |
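The entries follow from solving T(n) = B for n, where B is the time budget. A worked version, with the table's values being these solutions rounded to the nearest integer:

```latex
\begin{array}{lllll}
T(n)   & n \text{ as a function of } B & B = 10^3 & B = 10^4 & \text{ratio} \\
\hline
100n   & B/100      & 10    & 100  & 10 \\
1000n  & B/1000     & 1     & 10   & 10 \\
5n^2   & \sqrt{B/5} & 14.1  & 44.7 & \sqrt{10} \approx 3.2 \\
n^3    & \sqrt[3]{B} & 10   & 21.5 & \sqrt[3]{10} \approx 2.2 \\
2^n    & \log_2 B   & 9.97  & 13.3 & \approx 1.3
\end{array}
```

For the polynomial rows the ratio is a fixed factor regardless of B; for $2^n$ a tenfold budget only adds $\log_2 10 \approx 3.3$ to the solvable size.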

36

Asymptotics

| T(n) | keep one | drop coef |
|---|---|---|
| $3n^2 + 4n + 1$ | $3n^2$ | $n^2$ |
| $101n^2 + 102$ | $101n^2$ | $n^2$ |
| $15n^2 + 6n$ | $15n^2$ | $n^2$ |
| $an^2 + bn + c$ | $an^2$ | $n^2$ |

They all have the same growth rate

37

Caveats

Follow the spirit, not the letter

a 100n algorithm is more expensive than an $n^2$ algorithm when n < 100

Other considerations:

a program used only a few times

a program run on small data sets

ease of coding, porting, maintenance

memory requirements

38

Asymptotic Notations

Big-O, bounded above by: $T(n) = O(f(n))$

For some c > 0 and N, $T(n) \le c\,f(n)$ whenever n > N.

Big-Omega, bounded below by: $T(n) = \Omega(f(n))$

For some c > 0 and N, $T(n) \ge c\,f(n)$ whenever n > N.

Same as $f(n) = O(T(n))$.

Big-Theta, bounded above and below: $T(n) = \Theta(f(n))$

$T(n) = O(f(n))$ and also $T(n) = \Omega(f(n))$

Little-o, strictly bounded above: $T(n) = o(f(n))$

$T(n)/f(n) \to 0$ as $n \to \infty$
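A short worked example of applying these definitions, using $T(n) = 3n^2 + 4n + 1$ (a function chosen here for illustration). The constants c and N are one valid choice among many.

```latex
\begin{aligned}
\text{Upper bound: } & 3n^2 + 4n + 1 \le 4n^2 \iff n^2 \ge 4n + 1, \text{ which holds for } n > 4,
  && \text{so } T(n) = O(n^2) \text{ with } c = 4,\ N = 4. \\
\text{Lower bound: } & 3n^2 + 4n + 1 \ge 3n^2 \text{ for all } n > 0,
  && \text{so } T(n) = \Omega(n^2) \text{ with } c = 3,\ N = 0. \\
\text{Together: } & T(n) = \Theta(n^2). \\
\text{Little-o: } & \frac{3n^2 + 4n + 1}{n^3} \to 0 \text{ as } n \to \infty,
  && \text{so } T(n) = o(n^3).
\end{aligned}
```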

39

By Pictures

Big-Oh (most commonly used)

bounded above

Big-Omega

bounded below

Big-Theta

exactly

Small-o

not as expensive as ...

[Figures: three plots illustrating the bounds, each with the threshold N marked on the n-axis]

40

Example

$T(n) = n^3 + 2n^2$

$T(n) = \Omega(?)$: $n^3$, $n^2$, $n^0$

$T(n) = O(?)$: $n^3$, $n^5$, $n^{10}$

41

Examples

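| f(n) | Asymptotic |
|---|---|
| $c$ | $\Theta(1)$ |
| $\sum_{i=0}^{k} c_i n^i$ (with $c_k > 0$) | $\Theta(n^k)$ |
| $\sum_{i=1}^{n} i$ | $\Theta(n^2)$ |
| $\sum_{i=1}^{n} i^2$ | $\Theta(n^3)$ |
| $\sum_{i=1}^{n} i^k$ (with $k > 0$) | $\Theta(n^{k+1})$ |
| $\sum_{i=0}^{n} r^i$ (with $r > 1$) | $\Theta(r^n)$ |
| $n!$ | $\Theta(\sqrt{n}\,(n/e)^n)$ |
| $\sum_{i=1}^{n} 1/i$ | $\Theta(\log n)$ |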

42

Summary (Why O(n)?)

T(n) = $c_k n^k + c_{k-1} n^{k-1} + c_{k-2} n^{k-2} + \cdots + c_1 n + c_0$

Too complicated

$O(n^k)$

a single term with constant coefficient dropped

Much simpler, extra terms and coefficients do not

matter asymptotically

Other criteria hard to quantify

43

Runtime Analysis

Useful rules

simple statements (read, write, assign)

O(1) (constant)

simple operations (+ - * / == > >= < <=)

O(1)

sequence of simple statements/operations

rule of sums

for, do, while loops

rule of products

44

Runtime Analysis (cont.)

Two important rules

Rule of sums

if you do a number of operations in sequence, the

runtime is dominated by the most expensive operation

Rule of products

if you repeat an operation a number of times, the total

runtime is the runtime of the operation multiplied by

the iteration count

45

Runtime Analysis (cont.)

if (cond) then        O(1)
    body_1            $T_1(n)$
else
    body_2            $T_2(n)$
endif

T(n) = $O(\max(T_1(n), T_2(n)))$

46

Runtime Analysis (cont.)

Method calls

A calls B

B calls C

etc.

A sequence of operations when call sequences are

flattened

T(n) = $\max(T_A(n), T_B(n), T_C(n))$

47

Example

for (i=1; i<n; i++)

if A(i) > maxVal then

maxVal= A(i);

maxPos= i;

Asymptotic Complexity: O(n)

48

Example

for (i=1; i<=n-1; i++)
    for (j=n; j>=i+1; j--)
        if (A(j-1) > A(j)) then
            temp = A(j-1);
            A(j-1) = A(j);
            A(j) = temp;
        endif
    endfor
endfor

Asymptotic Complexity is $O(n^2)$
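A runnable C++ counterpart of this pseudocode, sketched with 0-based indexing and a comparison counter so the quadratic growth can be observed. The function name `bubbleSortCountingComparisons` is illustrative, not from the slides.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Sorts a in ascending order and returns the number of comparisons made.
std::size_t bubbleSortCountingComparisons(std::vector<int>& a) {
    std::size_t comparisons = 0;
    const std::size_t n = a.size();
    for (std::size_t i = 0; i + 1 < n; ++i) {
        // Bubble the smallest remaining element down to position i.
        for (std::size_t j = n - 1; j > i; --j) {
            ++comparisons;
            if (a[j - 1] > a[j]) std::swap(a[j - 1], a[j]);
        }
    }
    return comparisons;
}
```

The inner loop runs n-1, n-2, ..., 1 times, so the total is n(n-1)/2 comparisons regardless of the input order; for n = 5 that is 10.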

49

Run Time for Recursive Programs

T(n) is defined recursively in terms of T(k), k<n

The recurrence relations allow T(n) to be unwound

recursively into some base cases (e.g., T(0) or T(1)).

Examples:

Factorial

Hanoi towers

50

Example: Factorial

int factorial (int n) {

if (n<=1) return 1;

else return n * factorial(n-1);

}

factorial(n) = n * (n-1) * (n-2) * ... * 1

n * factorial(n-1)
(n-1) * factorial(n-2)
(n-2) * factorial(n-3)
...
2 * factorial(1)

Costs of the successive calls: T(n), T(n-1), T(n-2), ..., T(1)

$$
\begin{aligned}
T(n) &= T(n-1) + d \\
     &= T(n-2) + d + d \\
     &= T(n-3) + d + d + d \\
     &= \cdots \\
     &= T(1) + (n-1) \cdot d \\
     &= c + (n-1) \cdot d \\
     &= O(n)
\end{aligned}
$$

51

Example: Factorial (cont.)

int factorial1(int n) {
    if (n <= 1) return 1;               // O(1)
    else {
        int fact = 1;                   // O(1)
        for (int k = 2; k <= n; k++)    // loop: O(n)
            fact *= k;
        return fact;
    }
}

Both algorithms are O(n).

52

Example: Hanoi Towers

Hanoi(n,A,B,C) =

Hanoi(n-1,A,C,B)+Hanoi(1,A,B,C)+Hanoi(n-1,C,B,A)

$$
\begin{aligned}
T(n) &= 2T(n-1) + c \\
     &= 2^2\,T(n-2) + 2c + c \\
     &= 2^3\,T(n-3) + 2^2 c + 2c + c \\
     &= \cdots \\
     &= 2^{n-1}\,T(1) + (2^{n-2} + \cdots + 2 + 1)\,c \\
     &= (2^{n-1} + 2^{n-2} + \cdots + 2 + 1)\,c \\
     &= O(2^n)
\end{aligned}
$$
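The recurrence can be checked against a direct implementation that records every move. This is a sketch: the names `Move`, `hanoi`, and `hanoiMoveCount` are illustrative, and the recursion bottoms out at n = 0 rather than n = 1, which does not change the moves produced.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

using Move = std::pair<char, char>;  // (source peg, destination peg)

// Appends to moves the steps that transfer n disks from `from` to `to`,
// using `via` as the spare peg.
void hanoi(int n, char from, char to, char via, std::vector<Move>& moves) {
    if (n <= 0) return;
    hanoi(n - 1, from, via, to, moves);  // clear the top n-1 disks onto the spare
    moves.emplace_back(from, to);        // move the largest disk
    hanoi(n - 1, via, to, from, moves);  // bring the n-1 disks back on top
}

// Total number of single-disk moves needed for n disks.
std::size_t hanoiMoveCount(int n) {
    std::vector<Move> moves;
    hanoi(n, 'A', 'B', 'C', moves);
    return moves.size();
}
```

Each call makes two recursive calls on n-1 disks plus one move, so the count satisfies M(n) = 2M(n-1) + 1, giving $2^n - 1$ moves: 7 for n = 3 and 1023 for n = 10.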

53

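Worst Case, Best Case, and Average Case

#include <utility>   // std::swap

template<class T>
void SelectionSort(T a[], int n)
{
    // Early-terminating version of selection sort
    bool sorted = false;
    for (int size = n; !sorted && (size > 1); size--) {
        int pos = 0;
        sorted = true;
        // find largest
        for (int i = 1; i < size; i++)
            if (a[pos] <= a[i]) pos = i;
            else sorted = false; // out of order
        std::swap(a[pos], a[size - 1]);
    }
}

Best Case: the input is already sorted, so the first pass leaves sorted == true and the outer loop stops after one O(n) scan.

Worst Case: every pass finds an out-of-order pair, so all passes run and the total is $O(n^2)$ comparisons.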

54

T(N) = 6N + 4: $n_0 = 4$ and c = 7, f(N) = N

T(N) = 6N + 4 <= c f(N) = 7N for N >= 4

7N + 4 = O(N)

15N + 20 = O(N)

$N^2 = O(N)$?

$N \log N = O(N)$?

$N \log N = O(N^2)$?

$N^2 = O(N \log N)$?

$N^{10} = O(2^N)$?

$6N + 4 = \Omega(N)$? $7N$? $N + 4$? $N^2$? $N \log N$?

$N \log N = \Omega(N^2)$?

3 = O(1)

1000000 = O(1)

Sum i = O(N)?

[Figure: plot of T(N), f(N), and c f(N) with the threshold $n_0$ marked, illustrating T(N) = O(f(N))]

55

An Analogy: Cooking Recipes

Algorithms are detailed and precise instructions.

Example: bake a chocolate mousse cake.

Convert raw ingredients into processed output.

Hardware (PC, supercomputer vs. oven, stove)

Pots, pans, pantry are data structures.

Interplay of hardware and algorithms

Different recipes for oven, stove, microwave etc.

New advances.

New models: clusters, Internet, workstations

Microwave cooking, 5-minute recipes, refrigeration
