Professional Documents

Culture Documents

Balls Bins Adv

Balls Bins Adv

Uploaded by

Barbara FlemingCopyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Balls Bins Adv

Balls Bins Adv

Uploaded by

Barbara FlemingCopyright:

Available Formats

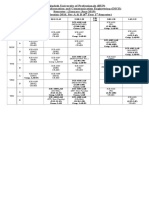

Outline

Balls and Bins (Advanced)

K. Subramani

Lane Department of Computer Science and Electrical Engineering, West Virginia University

6 March, 2012

Subramani

Balls and Bins

Outline

Recap

The Poisson Approximation: Some theorems and lemmas

Applications to Hashing: Chain Hashing, Bit String Hashing, Bloom Filters, Breaking Symmetry

Recap

Main issues

The experiment: throw $m$ balls into $n$ bins, with the bin for each ball chosen independently and uniformly at random.

Several questions about this random process were examined, such as the expected maximum load, the expected number of balls in a bin, the expected number of empty bins, and the expected number of bins with $r$ balls.

We also examined the Poisson random variable and its applications to balls-and-bins questions.
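As a quick sanity check on the quantities listed in the recap, the experiment can be simulated directly. The sketch below is only illustrative: the function names `throw_balls` and `expected_empty_bins`, the seed, and the parameter values are assumptions made for this example, not part of the lecture. It compares the observed number of empty bins with the exact expectation $n(1 - 1/n)^m$.

```python
import random


def throw_balls(m, n, rng):
    """Throw m balls into n bins uniformly at random; return the list of loads."""
    loads = [0] * n
    for _ in range(m):
        loads[rng.randrange(n)] += 1
    return loads


def expected_empty_bins(m, n):
    """Exact expectation: a fixed bin is missed by all m balls with prob. (1 - 1/n)^m."""
    return n * (1 - 1 / n) ** m


if __name__ == "__main__":
    rng = random.Random(2012)  # fixed seed so runs are repeatable
    m = n = 1000
    trials = 200
    avg_empty = sum(throw_balls(m, n, rng).count(0) for _ in range(trials)) / trials
    print("average number of empty bins:", avg_empty)
    print("n(1 - 1/n)^m:", expected_empty_bins(m, n))
    print("maximum load in one run:", max(throw_balls(m, n, rng)))
```

With $m = n$, the expectation is close to $n/e$, so roughly 37% of the bins should come out empty.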

The Poisson Approximation

Main Issues

Are bin-emptiness events independent?

We know that if $m$ balls are thrown uniformly and independently into $n$ bins, the number of balls in a given bin is approximately Poisson with mean $m/n$.

We wish to approximate the load at each bin with independent Poisson random variables.

We will show that this can be achieved with provable bounds.

Note

There is a difference between throwing $m$ balls randomly and assigning each bin a number of balls that is Poisson distributed with mean $m/n$. However, if you use the Poisson distribution and end up with exactly $m$ balls, the distributions are identical!
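Both points can be checked numerically on a small instance. The following is a minimal sketch, with function names chosen for this example only. It compares the exact (binomial) load distribution of one bin with the Poisson distribution of mean $m/n$. It also compares the exact probability that two fixed bins are both empty, $(1 - 2/n)^m$, with the value $(1 - 1/n)^{2m}$ that independence would give.

```python
from math import comb, exp, factorial


def binomial_load_pmf(r, m, n):
    """Exact Pr(a fixed bin receives r of the m balls)."""
    return comb(m, r) * (1 / n) ** r * (1 - 1 / n) ** (m - r)


def poisson_pmf(r, mu):
    """Pr(Z = r) for a Poisson random variable Z with mean mu."""
    return exp(-mu) * mu ** r / factorial(r)


def both_empty_exact(m, n):
    """Pr(two fixed bins are both empty): every ball must avoid both bins."""
    return (1 - 2 / n) ** m


def both_empty_if_independent(m, n):
    """What the probability would be if the two emptiness events were independent."""
    return ((1 - 1 / n) ** m) ** 2


if __name__ == "__main__":
    m = n = 10
    for r in range(4):
        print(r, binomial_load_pmf(r, m, n), poisson_pmf(r, m / n))
    print("both empty, exact:", both_empty_exact(m, n))
    print("both empty, if independent:", both_empty_if_independent(m, n))
```

The two "both empty" values differ, so the emptiness events are not independent in the exact model. This is why the passage from exact loads to independent Poisson loads needs the bounds that follow.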

Theorem I

Theorem

Let $X_i^{(m)}$, $1 \le i \le n$, be the number of balls in the $i$th bin when $m$ balls are thrown into $n$ bins. Let $Y_i^{(m)}$, $1 \le i \le n$, denote independent Poisson random variables with mean $m/n$. The distribution of $(Y_1^{(m)}, \ldots, Y_n^{(m)})$ conditioned on $\sum_i Y_i^{(m)} = k$ is the same as that of $(X_1^{(k)}, \ldots, X_n^{(k)})$.
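Theorem I can be verified exactly for any particular load vector. The sketch below (helper names are assumptions for this example) computes the conditional probability of a load vector under independent Poisson loads, and the probability of the same vector when $k$ balls are thrown uniformly at random. It uses the fact that a sum of $n$ independent Poisson variables with mean $m/n$ is Poisson with mean $m$.

```python
from math import exp, factorial, isclose, prod


def poisson_pmf(j, mu):
    """Pr(Z = j) for a Poisson random variable Z with mean mu."""
    return exp(-mu) * mu ** j / factorial(j)


def conditioned_poisson_prob(loads, m):
    """Pr(Y = loads | sum of Y_i = k), with Y_i independent Poisson(m/n), k = sum(loads)."""
    n = len(loads)
    k = sum(loads)
    joint = prod(poisson_pmf(j, m / n) for j in loads)
    total = poisson_pmf(k, m)  # the sum of the Y_i is Poisson with mean m
    return joint / total


def exact_prob(loads):
    """Pr(X = loads) when k = sum(loads) balls are thrown uniformly into n bins."""
    n = len(loads)
    k = sum(loads)
    arrangements = factorial(k) // prod(factorial(j) for j in loads)
    return arrangements / n ** k


if __name__ == "__main__":
    loads = (2, 0, 1)
    for m in (1, 5, 17):  # the conditional probability should not depend on m
        print(m, conditioned_poisson_prob(loads, m), exact_prob(loads))
        assert isclose(conditioned_poisson_prob(loads, m), exact_prob(loads))
```

The mean parameter $m$ cancels in the ratio, which is why the printed conditional probability is the same for every $m$.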

Theorem II

Theorem

Let $f(x_1, x_2, \ldots, x_n)$ denote a nonnegative function. Then,

$$E\left[f\left(X_1^{(m)}, \ldots, X_n^{(m)}\right)\right] \le e\sqrt{m}\; E\left[f\left(Y_1^{(m)}, \ldots, Y_n^{(m)}\right)\right].$$

Corollary

Any event that takes place with probability $p$ in the Poisson case takes place with probability at most $p\,e\sqrt{m}$ in the exact case.
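A short sketch of why the factor $e\sqrt{m}$ appears, following the standard argument. It relies on the conditioning fact from Theorem I and on the inequality $m! \le e\sqrt{m}\,(m/e)^m$, which is assumed here rather than proved.

```latex
\begin{align*}
E\left[f\left(Y_1^{(m)},\ldots,Y_n^{(m)}\right)\right]
  &= \sum_{k=0}^{\infty}
     E\left[f\left(Y_1^{(m)},\ldots,Y_n^{(m)}\right) \,\Big|\, \sum_i Y_i^{(m)} = k\right]
     \Pr\left(\sum_i Y_i^{(m)} = k\right) \\
  &\ge E\left[f\left(Y_1^{(m)},\ldots,Y_n^{(m)}\right) \,\Big|\, \sum_i Y_i^{(m)} = m\right]
     \Pr\left(\sum_i Y_i^{(m)} = m\right)
     && \text{($f$ is nonnegative)} \\
  &= E\left[f\left(X_1^{(m)},\ldots,X_n^{(m)}\right)\right] \cdot \frac{m^m e^{-m}}{m!}
     && \text{(Theorem I; $\textstyle\sum_i Y_i^{(m)}$ is Poisson with mean $m$)} \\
  &\ge E\left[f\left(X_1^{(m)},\ldots,X_n^{(m)}\right)\right] \cdot \frac{1}{e\sqrt{m}}
     && \text{(since $m! \le e\sqrt{m}\,(m/e)^m$)}.
\end{align*}
```

Rearranging the last line gives the bound in Theorem II. The corollary is the special case where $f$ is the indicator function of the event.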

Theorem III

Theorem

Let $f(x_1, x_2, \ldots, x_n)$ denote a nonnegative function, such that $E\left[f\left(X_1^{(m)}, \ldots, X_n^{(m)}\right)\right]$ is either monotonically increasing or monotonically decreasing in $m$. Then,

$$E\left[f\left(X_1^{(m)}, \ldots, X_n^{(m)}\right)\right] \le 2\, E\left[f\left(Y_1^{(m)}, \ldots, Y_n^{(m)}\right)\right].$$

Corollary

Let $\mathcal{E}$ be an event whose probability is either monotonically increasing or monotonically decreasing in the number of balls. If $\mathcal{E}$ has probability $p$ in the Poisson case, then it has probability at most $2p$ in the exact case.

Lemma

When $n$ balls are thrown independently and uniformly at random into $n$ bins, the maximum load is at least $\frac{\ln n}{\ln \ln n}$ with probability at least $\left(1 - \frac{1}{n}\right)$, for sufficiently large $n$.
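The lemma can be explored empirically. The following sketch (function names, seed, and the choice $n = 10^4$ are assumptions for this example) throws $n$ balls into $n$ bins and compares the observed maximum load with the threshold $\ln n / \ln \ln n$. A simulation does not prove anything, but it shows the scale of the quantities involved.

```python
import math
import random


def max_load(n, rng):
    """Throw n balls into n bins uniformly at random and return the maximum load."""
    loads = [0] * n
    for _ in range(n):
        loads[rng.randrange(n)] += 1
    return max(loads)


def lemma_threshold(n):
    """The lower bound ln n / ln ln n from the lemma (needs n > e so that ln ln n > 0)."""
    return math.log(n) / math.log(math.log(n))


if __name__ == "__main__":
    rng = random.Random(2012)  # fixed seed so runs are repeatable
    n = 10_000
    observed = [max_load(n, rng) for _ in range(20)]
    print("threshold ln n / ln ln n:", lemma_threshold(n))
    print("observed maximum loads:", observed)
```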

Chain Hashing

Main Issues

The password checking problem.

The hashing approach and hash functions: $f : U \to [0, n-1]$.

Chain hashing.

Assumption: the hash function maps words into bins in a random fashion. For each $x \in U$, the probability that $f(x) = j$ is $\frac{1}{n}$, and the values of $f(x)$ for each $x$ are independent of each other.

Search approach.

If the word is not in the dictionary, the expected search time is $\frac{m}{n}$; otherwise, the expected search time is $1 + \frac{m-1}{n}$.

Choosing $n = m$ gives constant expected search time.

When $n = m$, the maximum load is $\Theta\left(\frac{\ln n}{\ln \ln n}\right)$, w.h.p.; this is faster than binary search.

Wasted space.
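The search approach above can be sketched as a small table of chains. This is a minimal illustration, not the lecture's implementation: the class name `ChainHashTable` is hypothetical, and Python's built-in `hash` stands in for the idealized random hash function $f$, which it only approximates.

```python
class ChainHashTable:
    """A table of n bins; each bin holds a chain (list) of the words hashed to it."""

    def __init__(self, n):
        self.n = n
        self.bins = [[] for _ in range(n)]

    def _bin(self, word):
        # Assumption: hash(word) % n behaves like a uniformly random bin in [0, n-1].
        return hash(word) % self.n

    def insert(self, word):
        chain = self.bins[self._bin(word)]
        if word not in chain:
            chain.append(word)

    def contains(self, word):
        # Search cost is the length of one chain, not of the whole dictionary.
        return word in self.bins[self._bin(word)]

    def max_load(self):
        return max(len(chain) for chain in self.bins)


if __name__ == "__main__":
    disallowed = ["password", "123456", "qwerty", "letmein"]
    table = ChainHashTable(n=len(disallowed))  # n = m
    for word in disallowed:
        table.insert(word)
    print(table.contains("qwerty"))
    print(table.contains("correct horse battery staple"))
    print("longest chain:", table.max_load())
```

With $n = m$, some bins stay empty while others hold several words, which is the wasted space mentioned above.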

Bit String Hashing

Main Issues

The goal is to save space instead of time.

Assume that passwords are restricted to 64 bits and that a hash function maps words into 32 bits.

Fingerprinting and false positives.

The approximate set membership problem: given a set $S = \{s_1, s_2, \ldots, s_m\}$ of $m$ elements from a large universe $U$, we would like to represent the elements in such a way as to efficiently answer queries of the form "Is $x \in S$?"

The disallowed passwords correspond to $S$.

We also want to be space efficient and are willing to tolerate some error.

Assume that we use $b$ bits to create a fingerprint. The probability that an acceptable password has a fingerprint that is different from that of any specific password in $S$ is $\left(1 - \frac{1}{2^b}\right)$.
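The fingerprinting idea can be sketched as follows. This is an illustration under stated assumptions: the names `fingerprint` and `FingerprintSet` are hypothetical, and a truncated SHA-256 digest stands in for the random $b$-bit hash function assumed in the analysis.

```python
import hashlib


def fingerprint(word, b):
    """Return a b-bit fingerprint of word (1 <= b <= 256): the top b bits of SHA-256."""
    digest = hashlib.sha256(word.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") >> (256 - b)


class FingerprintSet:
    """Stores only b-bit fingerprints of the elements of S, not the elements themselves."""

    def __init__(self, words, b):
        self.b = b
        self.fingerprints = {fingerprint(w, b) for w in words}

    def might_contain(self, word):
        # True for every element of S; may also be True for a non-member
        # whose fingerprint collides with a stored one (a false positive).
        return fingerprint(word, self.b) in self.fingerprints


if __name__ == "__main__":
    disallowed = FingerprintSet(["password", "123456", "qwerty"], b=32)
    print(disallowed.might_contain("password"))
    print(disallowed.might_contain("correct horse battery staple"))
```

Errors are one-sided. A disallowed password is always rejected, while an acceptable password is occasionally rejected by mistake.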

Bit String Hashing (contd.)

Main Issues (contd.)

Since $S$ has size $m$, what is the probability that an acceptable password's fingerprint will match the fingerprint of one of the elements of $S$?

\[ 1 - \left(1 - \frac{1}{2^b}\right)^{m} \ge 1 - e^{-m/2^b}. \]

Since we want the probability of a false positive to be less than $c$, we need

\[ 1 - e^{-m/2^b} \le c \;\Longrightarrow\; e^{-m/2^b} \ge 1 - c \;\Longrightarrow\; b \ge \log_2 \frac{m}{\ln \frac{1}{1-c}}. \]

If we choose $b = 2 \log_2 m$, the probability of a false positive is

\[ 1 - \left(1 - \frac{1}{m^2}\right)^{m} < \frac{1}{m}. \]

If our dictionary has $2^{16}$ words, using 32 bits when hashing leads to an error probability of at most $\frac{1}{65{,}536}$.
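
The fingerprint bound can be checked numerically. The following Python sketch evaluates the exact false positive probability $1 - (1 - 1/2^b)^m$ and the smallest integer $b$ satisfying $b \ge \log_2 (m / \ln \frac{1}{1-c})$. The function names `false_positive_probability` and `min_fingerprint_bits` are illustrative assumptions and do not appear in the slides.

```python
import math


def false_positive_probability(m: int, b: int) -> float:
    """Probability that a b-bit fingerprint of an acceptable password
    matches at least one of m stored fingerprints: 1 - (1 - 1/2^b)^m."""
    # -expm1(m * log1p(-x)) evaluates 1 - (1 - x)^m without losing
    # precision when x = 1/2^b is very small.
    return -math.expm1(m * math.log1p(-1.0 / 2**b))


def min_fingerprint_bits(m: int, c: float) -> int:
    """Smallest integer b with b >= log2(m / ln(1 / (1 - c)))."""
    return math.ceil(math.log2(m / math.log(1.0 / (1.0 - c))))


# The dictionary example from the slide: m = 2^16 words, b = 2 * log2(m) = 32 bits.
m = 2**16
b = 2 * 16
p = false_positive_probability(m, b)
print(f"false positive probability: {p:.3e}, bound 1/m: {1 / m:.3e}")

# Bits needed for a target false positive rate of c = 1% (an assumed value).
print("bits for c = 0.01:", min_fingerprint_bits(m, 0.01))
```

The computed probability falls just below $1/m$, which is what the choice $b = 2 \log_2 m$ is meant to guarantee.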

Bloom Filters

Main Issues

Chain hashing optimizes time, while bit string hashing optimizes space. Can we get a better trade-off? Bloom filters!

A Bloom filter consists of an array of $n$ bits, $A[0]$ to $A[n-1]$, initially set to 0.

$k$ hash functions $h_1, h_2, \ldots, h_k$ with range $\{0, \ldots, n-1\}$ are used.

We wish to represent a set $S = \{s_1, s_2, \ldots, s_m\}$ of $m$ elements.

Set $A[h_i(s)]$ to 1, for each $1 \le i \le k$ and each $s \in S$.

How to check if $x \in S$? Check all locations $A[h_i(x)]$, $1 \le i \le k$. If any of them is 0, then $x \notin S$; otherwise report $x \in S$.

How could we go wrong? False positives.
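
The construction above can be sketched in a few lines of Python. This is a minimal illustration, not a tuned implementation: the class name `BloomFilter`, the method names, and the way the $k$ hash functions are derived (SHA-256 salted with the index $i$) are all assumptions made for the example, since the slides do not prescribe particular hash functions.

```python
import hashlib
from typing import Iterator


class BloomFilter:
    """Array of n bits with k hash functions, as described on the slide."""

    def __init__(self, n: int, k: int) -> None:
        self.n = n
        self.k = k
        # One byte per bit keeps the sketch simple; a real filter would pack bits.
        self.bits = bytearray(n)

    def _positions(self, item: str) -> Iterator[int]:
        # Assumed construction: h_i(item) = SHA-256("i:item") mod n.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest, "big") % self.n

    def add(self, item: str) -> None:
        # Set A[h_i(s)] to 1 for each of the k hash functions.
        for pos in self._positions(item):
            self.bits[pos] = 1

    def might_contain(self, item: str) -> bool:
        # True if every A[h_i(x)] is 1; may be a false positive.
        # False means the item was definitely never added.
        return all(self.bits[pos] for pos in self._positions(item))


words = ["password", "123456", "qwerty"]
bf = BloomFilter(n=1024, k=3)
for w in words:
    bf.add(w)
print(all(bf.might_contain(w) for w in words))
```

Every inserted element is always reported as present, so the only possible error is a false positive on an element that was never inserted.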

Analyzing error probability

Analysis

What is the probability that a specific bit is 0 after the preprocessing?

\[ \left(1 - \frac{1}{n}\right)^{km} \approx e^{-km/n}. \]

Assume that a fraction $p = e^{-km/n}$ of the entries are still 0 after $S$ has been hashed into the Bloom filter.

The probability of a false positive is then precisely the probability that all $k$ hash functions map the input string $x \notin S$ to entries that are set to 1, which is

\[ \left(1 - \left(1 - \frac{1}{n}\right)^{km}\right)^{k} \approx \left(1 - e^{-km/n}\right)^{k} = f(k). \]

Optimizing for $k$, we get $k = \frac{n}{m} \ln 2$ and $f \approx (0.6185)^{n/m}$.
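
The optimum can be checked numerically. The sketch below evaluates the approximate false positive rate $(1 - e^{-km/n})^k$ and compares its value at $k = \frac{n}{m} \ln 2$ with $(0.6185)^{n/m}$. The function names and the sample sizes ($n = 8000$ bits, $m = 1000$ elements) are assumptions chosen for illustration.

```python
import math


def false_positive_rate(n: int, m: int, k: float) -> float:
    """Approximate Bloom filter false positive rate (1 - e^{-km/n})^k."""
    return (1.0 - math.exp(-k * m / n)) ** k


def optimal_k(n: int, m: int) -> float:
    """Real-valued minimizer k = (n / m) * ln 2 of the approximate rate."""
    return (n / m) * math.log(2)


n, m = 8000, 1000  # assumed example: 8 bits per stored element
k_star = optimal_k(n, m)
print(f"optimal k: {k_star:.3f}")
print(f"rate at optimal k: {false_positive_rate(n, m, k_star):.5f}")
print(f"(0.6185)^(n/m):    {0.6185 ** (n / m):.5f}")

# In practice k must be an integer, so compare the neighbouring integers.
best_int_k = min(range(1, 21), key=lambda k: false_positive_rate(n, m, k))
print("best integer k in 1..20:", best_int_k)
```

At the optimum exactly half of the bits are expected to be 0, so the rate equals $(1/2)^k$ there, which is where the constant $2^{-\ln 2} \approx 0.6185$ comes from.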

Breaking Symmetry

Main Issues

Resource contention. Sequential ordering.

The hashing approach: hash each user identifier into $b$ bits.

How likely is it that two users will have the same hash value?

Fix one user. What is the probability that some other user obtains the same hash value?

\[ 1 - \left(1 - \frac{1}{2^b}\right)^{n-1} \le \frac{n-1}{2^b}. \]

By the union bound over all $n$ users, the probability that any two users have the same hash value is at most $\frac{n(n-1)}{2^b}$.

In order to guarantee success with probability at least $1 - \frac{1}{n}$, choose $b = 3 \log_2 n$, since then $\frac{n(n-1)}{2^b} = \frac{n(n-1)}{n^3} < \frac{1}{n}$.

Can also be used for the leader election problem.
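
The choice $b = 3 \log_2 n$ can be illustrated with a short Python sketch. It computes the union bound $n(n-1)/2^b$ and draws random $b$-bit values to stand in for the users' hash values. The function names, the use of `random.getrandbits` as a stand-in for a hash function, and the sample size $n = 1000$ are assumptions made for the example.

```python
import math
import random


def collision_bound(n: int, b: int) -> float:
    """Union bound n(n-1)/2^b on the probability that two users collide."""
    return n * (n - 1) / 2**b


def bits_for_success(n: int) -> int:
    """b = 3 * log2(n), rounded up to a whole number of bits."""
    return math.ceil(3 * math.log2(n))


def has_collision(n: int, b: int, rng: random.Random) -> bool:
    """Give each of n users a uniform b-bit value and report any repeat."""
    values = [rng.getrandbits(b) for _ in range(n)]
    return len(set(values)) < n


n = 1000
b = bits_for_success(n)
print(f"n = {n}, b = {b}, bound = {collision_bound(n, b):.2e}, 1/n = {1 / n:.2e}")

# Empirical check over a few trials with a fixed seed for repeatability.
rng = random.Random(2012)
trials = 200
failures = sum(has_collision(n, b, rng) for _ in range(trials))
print(f"collisions in {trials} trials: {failures}")
```

When all hash values are distinct they induce a sequential ordering of the users, and for leader election the user with the smallest value can be taken as the leader.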
