Probability, Random Variables and Random Processes with applications to Signal Processing

Ganesh.G

Member (Research Staff),

Central Research Laboratory, Bharat Electronics Limited, Bangalore-13

02/May/2007 1 of 30

ganesh.crl@gmail.com

Notes :

1

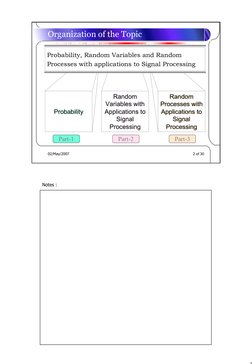

Organization of the Topic

Probability, Random Variables and Random Processes with applications to Signal Processing

• Part-1: Probability

• Part-2: Random Variables with Applications to Signal Processing

• Part-3: Random Processes with Applications to Signal Processing


Contents – 1. Probability

• Probability

– Why study Probability

– Four approaches to Probability definition

– A priori and A posteriori Probabilities

– Concepts of Joint, Marginal, Conditional and Total Probabilities

– Bayes’ Theorem and its applications

– Independent events and their properties

• Tips and Tricks

• Example


Contents – 2. Random Variables

• The Concept of a Random Variable

– Distribution and Density functions

– Discrete, Continuous and Mixed Random variables

– Specific Random variables: Discrete and Continuous

– Conditional and Joint Distribution and Density functions

• Functions of One Random Variable

– Transformations of Continuous and Discrete Random variables

– Expectation

– Moments: Moments about the origin, Central Moments, Variance and Skew

– Characteristic Function and Moment Generating Functions

– Chebyshev and Schwarz Inequalities

– Chernoff Bound


Notes :

1. Distribution and Density functions/ Discrete, Continuous and Mixed Random variables/ Specific Random variables: Discrete and Continuous/ Conditional and Joint Distribution and Density functions

2. Why functions of Random variables are important to signal processing/ Transformations of Continuous and Discrete Random variables/ Expectation/ Moments: Moments about the origin, Central Moments, Variance and Skew/ Functions that give Moments: Characteristic Function and Moment Generating Functions/ Chebyshev and Schwarz Inequalities/ Chernoff Bound


Contents – 2. Random Variables (Contd.)

• Multiple Random Variables

– Joint distribution and density functions

– Joint Moments (Covariance, Correlation Coefficient, Orthogonality) and Joint Characteristic Functions

– Conditional distribution and density functions

• Random Vectors and Parameter Estimation

– Expectation Vectors and Covariance Matrices

– MMSE Estimator and ML Estimator

• Sequences of Random Variables

– Random Sequences and Linear Systems

– WSS and Markov Random Sequences

– Stochastic Convergence and Limit Theorems/ Central Limit Theorem

– Laws of Large Numbers


Notes :

1. Functions of two Random variables/ Joint distribution and density functions/ Joint Moments (Covariance, Correlation Coefficient, Orthogonality) and Joint Characteristic Functions/ Conditional distribution and density functions

2. Expectation Vectors and Covariance Matrices/ Linear Estimator, MMSE Estimator/ ML Estimators {S&W}*

3. Random Sequences and Linear Systems/ WSS Random Sequences/ Markov Random Sequences {S&W}/ Stochastic Convergence and Limit Theorems/ Central Limit Theorem {Papoulis} {S&W}/ Laws of Large Numbers {S&W}

*Note: The codes shown inside the brackets {..} refer to reference books. See the References slide at the end of this document.


Contents – 3. Random Processes

• Introduction to Random Processes

– The Random Process Concept

– Stationarity, Time Averages and Ergodicity

– Some important Random Processes

• Wiener and Markov Processes

• Spectral Characteristics of Random Processes

• Linear Systems with Random Inputs

– White Noise

– Bandpass, Bandlimited and Narrowband Processes

• Optimum Linear Systems

– Systems that maximize SNR

– Systems that minimize MSE


Notes :

1. Correlation functions of Random Processes and their properties: {Peebles}; {S&W}; {Papoulis}

2. Power Spectral Density and its properties, relationship with autocorrelation; White and Colored Noise concepts and definitions: {Peebles}

3. Spectral Characteristics of LTI System response; Noise Bandwidth: {Peebles}; {S&W}

4. Matched Filter for Colored Noise/White Noise; Wiener Filters: {Peebles}


Contents – 3. Random Processes (Contd.)

• Some Practical Applications of the Theory

– Noise in an FM Communication System

– Noise in a Phase-Locked Loop

– Radar Detection using a single Observation

– False Alarm Probability and Threshold in GPS

• Applications to Statistical Signal Processing

– Wiener Filters for Random Sequences

– Expectation-Maximization (E-M) Algorithm

– Hidden Markov Models (and their specifications)

– Spectral Estimation

– Simulated Annealing


Notes :

1. {Peebles}; consider the ‘Code Acquisition’ scenario in GPS applications for an example of finding the false alarm rate.

2. Kalman Filtering; applications of Hidden Markov Models (HMMs) to Speech Processing: {S&W}


Probability (Part 1 of 3)


Why study Probability

• Probability plays a key role in the description of noise-like signals.

• There are countless situations in which we cannot make any categorical, deterministic assertion about a phenomenon, because we cannot measure all the contributing elements.

• Probability is a mathematical model that helps us study physical systems in an average sense.

• Probability deals with averages of mass phenomena occurring sequentially or simultaneously:

– Noise, Radar Detection, System Failure, etc.


Notes :

1. {R.G.Brown}, pp1

2. {S&W}, pp2

3. {S&W}, pp2

4. {Papoulis}, pp1 [4.1 Other examples: electron emission, telephone calls, queueing theory, quality control, etc.]

5. Extra: {Peebles} pp2: [How do we characterize random signals? One: how to describe any one of a variety of random phenomena; this requires the contents shown under Random Variables. Two: how to bring time into the problem so as to create the random signal of interest; this requires the contents shown under Random Processes.] ALL these CONCEPTS are based on PROBABILITY Theory.


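Four approaches to Probability definition

• Probability as Intuition

• Probability as the ratio of Favorable to Total Outcomes: $P[A] = \dfrac{n_E}{n}$, where $n_E$ is the number of outcomes favorable to A and $n$ is the total number of outcomes

• Probability as a measure of Frequency of Occurrence: $P[A] = \lim_{n \to \infty} \dfrac{n_E}{n}$, where $n_E$ is the number of times A occurs in $n$ trials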


Notes :

1. For the failures of these approaches, refer to {Papoulis} pp6-7.

2. {S&W} pp2-4

3. Is this slide required, or is it only of historical importance?

4. The classical theory, or ratio of favorable to total outcomes approach, cannot deal with outcomes that are not equally likely, and it cannot handle uncountably infinite outcomes without ambiguity.

5. The problem with the relative frequency approach is that we can never perform the experiment an infinite number of times, so we can only estimate P(A) from a finite number of trials. Despite this, the approach is essential in applying probability theory to the real world.
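The relative frequency definition can be sketched as a simulation. This is a minimal sketch, assuming a fair six-sided die and the event A = "an even face shows" (illustrative choices, not from the slides); `relative_frequency` and `is_even` are hypothetical helper names. As n grows, n_E/n should settle near the classical ratio 3/6, although any finite n only gives an estimate.

```python
import random


def relative_frequency(event, n, rng):
    """Estimate P[A] as n_E / n over n simulated rolls of a fair die."""
    n_e = sum(1 for _ in range(n) if event(rng.randint(1, 6)))
    return n_e / n


def is_even(face):
    """Hypothetical event A: the die shows an even face."""
    return face % 2 == 0


# Classical (ratio) value: 3 favorable faces out of 6 equally likely faces.
classical = 3 / 6

rng = random.Random(1)  # fixed seed so runs are repeatable
for n in (10, 100, 1000, 100000):
    print(n, relative_frequency(is_even, n, rng), classical)
```

Small n gives visibly scattered estimates; only the large-n rows sit close to 0.5.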


Four approaches to Probability definition

• Probability Based on an Axiomatic Theory

(i) $P(A) \ge 0$ (probability is a nonnegative number)

(ii) $P(\Omega) = 1$ (probability of the whole set is unity)

(iii) If $A \cap B = \varnothing$, then $P(A \cup B) = P(A) + P(B)$.

(A. N. Kolmogorov)

• If $A_1, A_2, \ldots, A_n$ form a partition of $\Omega$, then $P(A_1 + A_2 + \cdots + A_n) = 1$


Notes :

1. Experiment, Sample Space, Elementary Event (Outcome), Event: discuss why the above equations hold. Refer {Peebles}, pp10.

2. Uses of the axiomatic theory: refer {Kolmogorov}.

3. Consider a simple resistor, R = V(t)/I(t). Is this true under all conditions? Is it fully accurate (what about inductance and capacitance)? Are the terminals clearly specified? Refer {Papoulis}, pp5.

4. Mutually exclusive/disjoint events: refer to point (iii) above, where A ∩ B = φ and hence P(AB) = 0. When is a set of events called a partition/decomposition/exhaustive (refer to the last point in the slide above), and what is its use? (Answer: refer to the Tips and Tricks slide of this document.)
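On a finite sample space the axioms can be checked exhaustively. The sketch below assumes a fair die as the sample space and a three-part partition chosen for illustration (neither comes from the slides); `prob` and `all_events` are hypothetical helpers. It uses exact fractions so the equalities in (ii), (iii) and the partition property hold without rounding error.

```python
from fractions import Fraction
from itertools import combinations

# Assumed example: sample space of a fair die, each outcome has probability 1/6.
OMEGA = frozenset(range(1, 7))
WEIGHT = {w: Fraction(1, 6) for w in OMEGA}


def prob(event):
    """P(event) for an event given as a subset of OMEGA."""
    return sum((WEIGHT[w] for w in event), Fraction(0))


def all_events(space):
    """Every subset of the finite sample space (the full event field)."""
    items = sorted(space)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


events = list(all_events(OMEGA))
axiom_i = all(prob(a) >= 0 for a in events)                  # (i)
axiom_ii = prob(OMEGA) == 1                                  # (ii)
axiom_iii = all(prob(a | b) == prob(a) + prob(b)             # (iii), disjoint pairs
                for a in events for b in events if not (a & b))

# Assumed partition of OMEGA: disjoint parts whose union is OMEGA.
partition = [frozenset({1, 2}), frozenset({3}), frozenset({4, 5, 6})]
partition_sum = sum((prob(p) for p in partition), Fraction(0))
print(axiom_i, axiom_ii, axiom_iii, partition_sum)
```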


A priori and A posteriori Probabilities

• A priori Probability

– Relating to reasoning from self-evident propositions or presupposed by experience (before the experiment is conducted)

• A posteriori Probability

– Reasoning from the observed facts (after the experiment is conducted)


Notes :

1. {S&W}, pp3

2. Also called ‘Prior Probability’ and ‘Posterior Probability’.

3. Their role in Bayes’ Theorem. Priors are of two types: informative priors and uninformative (vague/diffuse) priors; refer {Kemp}, pp41-42.


Concepts of Joint, Marginal, Conditional and Total Probabilities

• Let A and B be two experiments

– either conducted successively OR conducted simultaneously

• Let A1, A2, …, An be a partition of A and B1, B2, …, Bn be a partition of B

• This leads to the Array of Joint and Marginal Probabilities


Notes :

1. {R.G.Brown} pp12-13

2. Conditional probability, in contrast, is usually explained through the relative frequency interpretation of probability; see, for example, {S&W} pp16.


Concepts of Joint, Marginal, Conditional and Total Probabilities

| A \ B | Event B1 | Event B2 | … | Event Bn | Marginal Probabilities |
|---|---|---|---|---|---|
| Event A1 | P(A1 ∩ B1) | P(A1 ∩ B2) | … | P(A1 ∩ Bn) | P(A1) |
| Event A2 | P(A2 ∩ B1) | P(A2 ∩ B2) | … | P(A2 ∩ Bn) | P(A2) |
| ⋮ | ⋮ | ⋮ | | ⋮ | ⋮ |
| Event An | P(An ∩ B1) | P(An ∩ B2) | … | P(An ∩ Bn) | P(An) |
| Marginal Probabilities | P(B1) | P(B2) | … | P(Bn) | SUM = 1 |


Notes :

1. From {R.G.Brown} pp12-13

2. Joint Probability?

3. What happens if Events A1, A2, …, An are not a partition but just some disjoint/mutually exclusive events? Similarly for the events Bj?

4. Summing across a row, for example, gives the probability of an event Ai of Experiment A irrespective of the outcome of Experiment B.

5. Why are they called marginal? Because they were written in the margins of the table.

6. The marginal probabilities are the sums of the (shaded) rows and columns.
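Summing the rows and columns of the array can be sketched as follows. The joint probabilities here are hypothetical numbers for a 2 × 3 array (A1, A2 against B1, B2, B3), chosen only so that all entries add to 1; `marginals` is a hypothetical helper name.

```python
from fractions import Fraction as F

# Hypothetical joint probabilities P(Ai ∩ Bj): rows A1..A2, columns B1..B3.
JOINT = [
    [F(1, 10), F(2, 10), F(1, 10)],
    [F(2, 10), F(1, 10), F(3, 10)],
]


def marginals(joint):
    """Return (row sums P(Ai), column sums P(Bj)) of a joint probability array."""
    p_a = [sum(row) for row in joint]          # summing out B for each Ai
    p_b = [sum(col) for col in zip(*joint)]    # summing out A for each Bj
    return p_a, p_b


p_a, p_b = marginals(JOINT)
print("P(A):", [str(x) for x in p_a])
print("P(B):", [str(x) for x in p_b])
print("Total:", sum(p_a), sum(p_b))           # both equal the SUM = 1 corner
```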


Concepts of Joint, Marginal, Conditional and Total Probabilities

(Same array of joint and marginal probabilities as on the previous slide, with the Event B2 column outlined in red.)


Notes :

1. From {R.G.Brown} pp12-13

2. This table also contains information about the relative frequency of occurrence of various events in one experiment given a particular event in the other experiment.

3. Look at the column outlined in red (Event B2). Since no other entries of the table involve B2, the list of these entries gives the relative distribution of events A1, A2, …, An given that B2 has occurred.

4. However, the probabilities in the red box are not legitimate probabilities, because their sum is not unity; it is P(B2). So imagine renormalizing all the entries in the column by dividing by P(B2). The new set of numbers is then P(A1 ∩ B2)/P(B2), P(A2 ∩ B2)/P(B2), …, P(An ∩ B2)/P(B2), and their sum is unity. This relative distribution corresponds to the relative frequency of occurrence of events A1, A2, …, An given that B2 has occurred.

5. This heuristic reasoning leads us to the formal definition of ‘Conditional Probability’.
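The renormalization described in note 4 can be sketched directly. The 3 × 2 joint array below is hypothetical (its entries add to 1), and `conditional_given_column` is a hypothetical helper; dividing the B2 column by its sum P(B2) yields numbers that add to unity, as the note says.

```python
from fractions import Fraction as F

# Hypothetical joint array P(Ai ∩ Bj): rows A1..A3, columns B1..B2.
JOINT = [
    [F(1, 12), F(2, 12)],
    [F(3, 12), F(1, 12)],
    [F(2, 12), F(3, 12)],
]


def conditional_given_column(joint, j):
    """Renormalize column j by P(Bj) to obtain P(Ai | Bj) for every row i."""
    column = [row[j] for row in joint]
    p_bj = sum(column)                 # the column's marginal, P(Bj)
    if p_bj == 0:
        raise ValueError("P(Bj) must be nonzero")
    return [entry / p_bj for entry in column]


cond = conditional_given_column(JOINT, 1)   # condition on B2 (column index 1)
print([str(c) for c in cond], sum(cond))
```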


Concepts of Joint, Marginal, Conditional and Total Probabilities

• Conditional Probability: a measure of “the event A given that B has already occurred”. We denote this conditional probability by

P(A|B) = Probability of “the event A given that B has occurred”.

We define

$$P(A \mid B) = \frac{P(AB)}{P(B)}, \quad \text{provided } P(B) \neq 0.$$

The above definition satisfies all the probability axioms discussed earlier.


Concepts of Joint, Marginal, Conditional and Total Probabilities

• Let A1, A2, …, An be a partition of the sample space

• Let B be any event defined over the same probability space.

Then,

$$P(B) = P(B \mid A_1)P(A_1) + P(B \mid A_2)P(A_2) + \cdots + P(B \mid A_n)P(A_n)$$

P(B) is called the “average” or “Total” Probability.


Notes :

1. {S&W} pp20

2. ‘Average’ because the expression looks like a weighted average; ‘Total’ because P(B) is the sum of its parts.

3. The shaded equation is the ‘Total Probability Theorem’.
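The total probability theorem is a weighted sum, which can be sketched as below. The three-part partition, its priors P(Ai) and likelihoods P(B|Ai) are hypothetical numbers; `total_probability` is a hypothetical helper that also checks that the priors describe a partition (they must add to 1).

```python
def total_probability(priors, likelihoods):
    """P(B) = sum_i P(B|Ai) P(Ai) for a partition A1..An."""
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood P(B|Ai) per part Ai")
    if abs(sum(priors) - 1.0) > 1e-12:
        raise ValueError("P(A1) + ... + P(An) must equal 1 for a partition")
    return sum(lik * pr for lik, pr in zip(likelihoods, priors))


# Hypothetical partition with three parts.
priors = [0.5, 0.3, 0.2]          # P(A1), P(A2), P(A3)
likelihoods = [0.1, 0.4, 0.7]     # P(B|A1), P(B|A2), P(B|A3)
print(total_probability(priors, likelihoods))
```

Here P(B) = 0.05 + 0.12 + 0.14 = 0.31, up to floating-point rounding.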


Bayes’ Theorem and its applications

• Bayes’ theorem:

– One form:

$$P(A \mid B) = \frac{P(AB)}{P(B)}, \qquad P(B \mid A) = \frac{P(AB)}{P(A)},$$

hence

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}.$$

– Other form:

$$P(A_i \mid B) = \frac{P(B \mid A_i)\,P(A_i)}{\sum_{j=1}^{n} P(B \mid A_j)\,P(A_j)}.$$


Notes :

1. {Peebles} pp16

2. What about P(A) and P(B)? Must both be nonzero, or only P(B)?

3. The Ai’s form a partition of the sample space; B is any event on the same space.
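The "other form" of Bayes’ theorem can be sketched as a normalization step: form P(B|Ai)P(Ai) for each part, then divide by their sum, which is the total probability P(B). The priors and likelihoods are hypothetical numbers, and `posterior` is a hypothetical helper name.

```python
def posterior(priors, likelihoods):
    """Return [P(Ai|B)] via Bayes' theorem over a partition A1..An."""
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood P(B|Ai) per part Ai")
    joint = [lik * pr for lik, pr in zip(likelihoods, priors)]  # P(B|Ai) P(Ai)
    p_b = sum(joint)                                             # denominator = P(B)
    if p_b == 0:
        raise ValueError("P(B) must be nonzero")
    return [term / p_b for term in joint]


# Hypothetical a priori probabilities and likelihoods for a three-part partition.
priors = [0.5, 0.3, 0.2]          # P(A1), P(A2), P(A3)
likelihoods = [0.1, 0.4, 0.7]     # P(B|A1), P(B|A2), P(B|A3)
post = posterior(priors, likelihoods)
print([round(x, 4) for x in post])
```

Observing B shifts belief toward A3: its a posteriori probability (about 0.45) exceeds its a priori value of 0.2.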


Bayes’ Theorem and its applications

• Consider an elementary Binary Symmetric Channel (BSC): transmit ‘0’ or ‘1’, receive ‘0’ or ‘1’

– Source: P(0t) = 0.4 and P(1t) = 0.6

– Channel effect: P(1r|1t) = 0.9 and P(0r|1t) = 0.1; the channel is symmetric, so 0t is no different: P(0r|0t) = 0.9 and P(1r|0t) = 0.1

– Receiver: P(0r) = ? and P(1r) = ?

• System errors (BER)? Out of 100 zeros (or ones) received, how many are in error?


Notes :

1. {Peebles} pp17

2. BSC Transition Probabilities


Bayes’ Theorem and its applications

• P(0r) = 0.42 and P(1r) = 0.58, using the total probability theorem (computing each directly, or using P(1r) = 1 − P(0r))

• Using Bayes’ Theorem, with P(0t) = 0.4 and P(1t) = 0.6:

– P(0t|0r) = 0.857

– P(1t|0r) = 0.143: out of 100 zeros received, about 14 are in error

– P(0t|1r) = 0.069: out of 100 ones received, about 7 (6.9) are in error

– P(1t|1r) = 0.931


Notes :

1. {Peebles}

2. The average BER of the system is P(1t|0r)P(0r) + P(0t|1r)P(1r) = (0.143 × 0.42) + (0.069 × 0.58) ≈ 0.06 + 0.04 = 0.10, i.e., 10% (equivalently, P(1r|0t)P(0t) + P(0r|1t)P(1t) = 0.1 × 0.4 + 0.1 × 0.6 = 0.10). This equals the erroneous channel effect. The unequal probabilities of 0t and 1t do not change the average BER; they make the error rates among received zeros (about 14%) and among received ones (about 7%) unequal.

3. What happens if 0t and 1t are equiprobable? Then P(1t|0r) = 10% = P(0t|1r), and the average BER of the system is (10% × 50%) + (10% × 50%) = 10%, again equal to the erroneous channel effect.

4. Bayesian methods of inference involve the systematic formulation and use of Bayes’ Theorem. These approaches are distinguished from other statistical approaches in that, prior to obtaining the data, the statistician formulates degrees of belief concerning the possible models that may give rise to the data. These degrees of belief are regarded as probabilities {Kemp} pp41. “Posterior odds are equal to the likelihood ratio times the prior odds.” [Note: Odds on A = P(A)/P(Ac), where Ac is the complement of A.]
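The BSC numbers on the previous slides can be reproduced with the total probability theorem and Bayes’ theorem. This sketch assumes the slide’s values P(0t) = 0.4 and crossover probability 0.1 in both directions; `bsc_posteriors` and its parameter names are hypothetical. It also computes the average BER by weighting the per-received-symbol error rates with P(0r) and P(1r), and checks the odds form quoted from {Kemp}.

```python
def bsc_posteriors(p0t, eps):
    """Receiver-side probabilities for a binary symmetric channel.

    p0t: prior P(0t); eps: crossover probability P(1r|0t) = P(0r|1t).
    """
    p1t = 1.0 - p0t
    # Total probability theorem.
    p0r = (1 - eps) * p0t + eps * p1t
    p1r = 1.0 - p0r
    # Bayes' theorem for each (transmitted | received) pair.
    return {
        "P(0r)": p0r,
        "P(1r)": p1r,
        "P(0t|0r)": (1 - eps) * p0t / p0r,
        "P(1t|0r)": eps * p1t / p0r,
        "P(0t|1r)": eps * p0t / p1r,
        "P(1t|1r)": (1 - eps) * p1t / p1r,
    }


r = bsc_posteriors(p0t=0.4, eps=0.1)
for name, value in r.items():
    print(f"{name} = {value:.3f}")

# Average BER: error rates among received zeros and ones, weighted by P(0r), P(1r).
ber = r["P(1t|0r)"] * r["P(0r)"] + r["P(0t|1r)"] * r["P(1r)"]
print(f"average BER = {ber:.3f}")

# Odds form: posterior odds = likelihood ratio x prior odds (odds on 0t given 0r).
prior_odds = 0.4 / 0.6
likelihood_ratio = (1 - 0.1) / 0.1          # P(0r|0t) / P(0r|1t)
posterior_odds = r["P(0t|0r)"] / r["P(1t|0r)"]
print(posterior_odds, likelihood_ratio * prior_odds)
```

The printed probabilities match the slide (0.420, 0.580, 0.857, 0.143, 0.069, 0.931), and the average BER comes out at 0.100, the channel crossover probability.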


Independent events and their properties

• Let two events A and B have nonzero probabilities of occurrence; assume P(A) ≠ 0 and P(B) ≠ 0.

• We call them independent if the occurrence of one event is not affected by the other event:

P(A|B) = P(A) and P(B|A) = P(B)

• Consequently, the test for independence is

$$P(AB) = P(A) \cdot P(B)$$


Notes :

1. {Peebles} pp19

2. Can two independent events be mutually exclusive? Not when both have nonzero probability (see the first point in the slide): if P(A) and P(B) are both nonzero, then P(AB) = P(A)P(B) is nonzero, so A and B cannot be disjoint.
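The test P(AB) = P(A)·P(B) can be sketched on a small sample space. The example assumes two fair dice and three illustrative events (not from the slides): "first die even", "sum is 7" and "sum is 2"; `prob` and `independent` are hypothetical helpers. The last pair is mutually exclusive with nonzero probabilities, so the test fails, as note 2 predicts.

```python
from fractions import Fraction
from itertools import product

# Assumed sample space: ordered outcomes of two fair dice, 36 equally likely points.
OMEGA = list(product(range(1, 7), repeat=2))


def prob(pred):
    """Exact probability of the event {w in OMEGA : pred(w)}."""
    return Fraction(sum(1 for w in OMEGA if pred(w)), len(OMEGA))


def independent(pa, pb):
    """Test for independence: P(AB) == P(A) * P(B)."""
    return prob(lambda w: pa(w) and pb(w)) == prob(pa) * prob(pb)


def first_even(w):
    return w[0] % 2 == 0


def sum_is_seven(w):
    return w[0] + w[1] == 7


def sum_is_two(w):
    return w[0] + w[1] == 2      # only (1, 1): disjoint from first_even


print(independent(first_even, sum_is_seven))   # P(AB) = 1/12 = (1/2)(1/6)
print(independent(first_even, sum_is_two))     # P(AB) = 0, but P(A)P(B) = 1/72
```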


Independent events and their properties

• Independence of Multiple Events: independence by pairs (pair-wise) is not enough.

• E.g., three events A, B, C are independent if and only if they are independent pair-wise and are also independent as a triple, satisfying the following four equations:

$$P(AB) = P(A) \cdot P(B), \quad P(BC) = P(B) \cdot P(C), \quad P(AC) = P(A) \cdot P(C),$$

$$P(ABC) = P(A) \cdot P(B) \cdot P(C)$$


Notes :

1. {Peebles} pp19-20

2. How many equations are needed for N events to be independent? 2^N − N − 1: one equation for every sub-collection of two or more events, and C(N,2) + C(N,3) + ⋯ + C(N,N) = 2^N − (1 + N).
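A standard illustration of why pair-wise independence is not enough uses two fair coin tosses with A = "first toss heads", B = "second toss heads" and C = "both tosses match" (an assumed example, not from the slides). Each pair satisfies the product rule, but the triple does not. The sketch also counts the equations needed for N events and compares the count with 2^N − N − 1; all function names are hypothetical.

```python
from fractions import Fraction
from itertools import combinations, product
from math import comb

# Assumed sample space: two fair coin tosses, four equally likely outcomes.
OMEGA = list(product("HT", repeat=2))


def prob(event):
    return Fraction(sum(1 for w in OMEGA if event(w)), len(OMEGA))


def first_heads(w):
    return w[0] == "H"


def second_heads(w):
    return w[1] == "H"


def tosses_match(w):
    return w[0] == w[1]


EVENTS = [first_heads, second_heads, tosses_match]


def holds(group):
    """Check P(intersection of the group) == product of the individual P's."""
    joint = prob(lambda w: all(e(w) for e in group))
    product_of_marginals = Fraction(1)
    for e in group:
        product_of_marginals *= prob(e)
    return joint == product_of_marginals


pairwise = all(holds(pair) for pair in combinations(EVENTS, 2))
triple = holds(EVENTS)
print(pairwise, triple)   # pair-wise independent, but not independent as a triple


def equations_needed(n):
    """One product equation per sub-collection of r >= 2 events: sum of C(n, r)."""
    return sum(comb(n, r) for r in range(2, n + 1))


print([equations_needed(n) for n in range(2, 6)], [2**n - n - 1 for n in range(2, 6)])
```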


Independent events and their properties

• Many properties of independent events can be summarized by the statement:

“If N events are independent, then any one of them is independent of any event formed by unions, intersections and complements of the other events.”


Notes :

1. {Peebles} pp20


Tips and Tricks

• The single most difficult step in solving probability problems: Correct Mathematical Modeling

• Many difficult problems can be solved by ‘going the other way’ and by the recursion principle

• Identify and model independent events when solving problems

• Use conditioning and partitioning to solve tough problems


Notes :

1. {Peebles} and {Papoulis}


Example

From “simple textbook examples” to practical problems of interest

• Day-trading strategy: A box contains n randomly numbered balls (not 1 through n, but arbitrary numbers, including numbers greater than n).

• Suppose a fraction of those balls, say m = np with p < 1, is initially drawn one by one with replacement, while the numbers on those balls are noted.

• The drawing is allowed to continue until a ball is drawn with a number larger than the first m numbers.

Determine the fraction p to be initially drawn so as to maximize the probability of drawing the largest among the n numbers using this strategy.


Notes :

1. This example, and all notes relating to it, are taken with humble gratitude from S. Unnikrishnan Pillai’s web support for the book “A. Papoulis, S. Unnikrishnan Pillai, Probability, Random Variables and Stochastic Processes, 4th Ed., McGraw Hill, 2002”.


Example

• Let $X_k$ = “(k + 1)st drawn ball has the largest number among all n balls, and the largest among the first k balls is in the group of the first m balls, k > m.”

• Note that $X_k$ is of the form A ∩ B, where

A = “largest among the first k balls is in the group of the first m balls drawn” and

B = “(k + 1)st ball has the largest number among all n balls”.


Notes :

1. P(A) = m/k and P(B) = 1/n


Example

Notice that A and B are independent events, and hence

$$P(X_k) = P(A)\,P(B) = \frac{1}{n} \cdot \frac{m}{k} = \frac{1}{n} \cdot \frac{np}{k} = \frac{p}{k},$$

where m = np is the number of balls to be initially drawn (p being the fraction).

This gives

$$P(\text{“selected ball has the largest number among all balls”}) = \sum_{k=m}^{n-1} P(X_k) = p \sum_{k=m}^{n-1} \frac{1}{k} \approx p \int_{np}^{n} \frac{1}{x}\,dx = p \ln x \Big|_{np}^{n} = -p \ln p.$$
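The exact finite-n sum and its integral approximation can be compared numerically. This sketch takes n = 100 and a few illustrative values of m (p = m/n); `success_probability` evaluates p Σ_{k=m}^{n−1} 1/k from the slide and `approx` evaluates −p ln p. Both helper names are hypothetical.

```python
import math


def success_probability(n, m):
    """Exact value from the slide: sum_{k=m}^{n-1} P(X_k) = (m/n) * sum 1/k."""
    if not 1 <= m < n:
        raise ValueError("need 1 <= m < n")
    return (m / n) * sum(1.0 / k for k in range(m, n))


def approx(p):
    """Large-n approximation -p ln p obtained from the integral."""
    return -p * math.log(p)


n = 100
for m in (10, 25, 37, 50):
    p = m / n
    print(m, round(success_probability(n, m), 4), round(approx(p), 4))
```

Already at n = 100 the two columns agree to within about 0.005, and m = 37 gives the largest success probability of the values tried.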


Example

Maximization of the desired probability with respect to p gives

$$\frac{d}{dp}(-p \ln p) = -(1 + \ln p) = 0,$$

or $p = e^{-1} \approx 0.3679$.

The maximum value of the desired probability of drawing the largest number also equals $-e^{-1} \ln e^{-1} = e^{-1} \approx 0.3679$.
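Both results can be checked numerically: a grid search over p confirms the maximizer of −p ln p, and a Monte Carlo run of the stopping rule estimates its success rate. The simulation assumes the n numbers are distinct and arrive in a uniformly random order (i.e., drawn without replacement), which is the setting in which P(A) = m/k and P(B) = 1/n hold exactly; `simulate`, the seed and the trial count are illustrative choices.

```python
import math
import random


def simulate(n, m, trials, rng):
    """Estimate P(strategy picks the largest of n distinct numbers).

    Observe the first m numbers, then stop at the first later number that
    exceeds all of them; success if that number is the overall maximum.
    """
    wins = 0
    for _ in range(trials):
        values = list(range(n))          # distinct labels; only their order matters
        rng.shuffle(values)
        threshold = max(values[:m])
        chosen = next((v for v in values[m:] if v > threshold), None)
        wins += chosen == n - 1          # None (never stopped) counts as a loss
    return wins / trials


# Grid search for the maximizer of -p ln p on (0, 1).
grid = [i / 10000 for i in range(1, 10000)]
best_p = max(grid, key=lambda p: -p * math.log(p))
print(best_p, 1 / math.e)

rng = random.Random(2007)
m = round(100 / math.e)                  # 37 for n = 100
estimate = simulate(100, m, 20000, rng)
print(m, estimate)
```

The estimate should land near 0.37, consistent with the exact value of about 0.371 for n = 100 and m = 37.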


Notes :

1. Interestingly, the above strategy can be used to “play the stock market”.

2. Suppose one gets into the market and decides to stay for up to 100 days. The stock values fluctuate day by day, and the important question is when to get out.

3. According to the above strategy, one should get out at the first opportunity after 37 days, when the stock value exceeds the maximum among the first 37 days. In that case the probability of hitting the top value over 100 days is also about 37%. Of course, the above argument assumes that the stock values over the period of interest fluctuate randomly without exhibiting any other trend.

4. Interestingly, such is the case if we consider shorter time frames such as intraday trading. In summary, if one must day-trade, then a possible strategy might be to get in at 9.30 AM and get out any time after 12 noon (9.30 AM + 0.3679 × 6.5 hrs ≈ 11.54 AM, to be precise) at the first peak that exceeds the peak value between 9.30 AM and 12 noon. In that case the chances are about 37% that one hits the absolute top value for that day! (Disclaimer: trade at your own risk.)

5. Author’s note: The same problem appears in other contexts, e.g., Puzzle No. 34, “The Game of Googol”, from {M.Gardner}, and the ancient Indian concept of ‘Swayamvara’, to name a few.


What Next?

• Part 2: Random Variables with applications to Signal Processing

– The Concept of a Random Variable

– Functions of One Random Variable

– Multiple Random Variables

– Random Vectors and Parameter Estimation

– Sequences of Random Variables

• Part 3: Random Processes with Applications to Signal Processing

– Introduction to Random Processes

– Spectral Characteristics of Random Processes

– Linear Systems with Random Inputs

– Optimum Linear Systems

– Some Practical Applications of the Theory

– Applications to Statistical Signal Processing


References

1. A. Papoulis, S. Unnikrishnan Pillai, Probability, Random Variables and Stochastic Processes, 4th Ed., McGraw Hill, 2002. {Papoulis}

2. Henry Stark, John W. Woods, Probability and Random Processes with Applications to Signal Processing, 3rd Ed., Pearson Education, 2002. {S&W}

3. Peyton Z. Peebles, Jr., Probability, Random Variables and Random Signal Principles, 2nd Ed., McGraw Hill, 1987. {Peebles}

4. Norman L. Johnson, Adrienne W. Kemp, Samuel Kotz, Univariate Discrete Distributions, 3rd Ed., Wiley, 2005. {Kemp}

5. A. N. Kolmogorov, Foundations of the Theory of Probability, Chelsea, 1950. {Kolmogorov}

6. Robert Grover Brown, Introduction to Random Signal Analysis and Kalman Filtering, John Wiley, 1983. {R.G.Brown}

7. J. L. Doob, Stochastic Processes, John Wiley, 1953. {Doob}

8. Martin Gardner, My Best Mathematical and Logic Puzzles, Dover Publications, Inc., New York, 1994. {M.Gardner}


Notes :

1. The codes shown in { } brackets are used to cite these references in the notes area.
