Experimental Design: 1. Testable Question: 2. Aim: 3. Hypothesis: 4. Variables

Uploaded by Raghav Jaitely

The document outlines key aspects of experimental design including defining a testable question with variables, developing a hypothesis, identifying control and experiment groups, ensuring accuracy and precision of measurements, and accounting for reliability, validity, uncertainty, and potential errors. Key factors are having a dependent and independent variable, hypotheses stated as "if...then" predictions, maintaining consistent controls, and repeating experiments to reduce random errors and improve reliability and validity of results.


Experimental design

1. Testable question: A question that can be answered by conducting tests or experiments. It contains both a dependent and an independent variable.

2. Aim: States how the testable question will be answered.

3. Hypothesis: A prediction about the answer to the testable question. It needs to be very specific about what the variables are. Use the format "if…then".

4. Variables:

• Dependent – the variable that is measured

• Independent – the variable that is changed between tests

• Controlled – variables that are kept constant between tests to prevent them from influencing the dependent variable

• Experimental group – the group that is exposed to the independent variable

• Control group – the group that is not exposed to the independent variable. It acts as a comparison, to ensure that the independent variable, and not some other factor, is what affects the dependent variable.

5. Controls:

• Positive control – a sample or treatment known to give a positive result; it confirms that the test works

• Negative control – is not exposed to the independent variable, so a negative result is expected. It acts as a comparison, to ensure that the independent variable, and not some other factor, is what affects the dependent variable.

6. Accuracy: How close your measurement is to the actual value.

7. Precision: How close repeated measurements are to one another.
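The difference between accuracy and precision can be illustrated with a small sketch. The readings, the "true" mass, and the function names below are hypothetical, and using the mean and the sample standard deviation is one common way to quantify the two ideas, not the only one.

```python
from statistics import mean, stdev

def accuracy_error(measurements, true_value):
    """Distance between the mean measurement and the actual value (smaller = more accurate)."""
    return abs(mean(measurements) - true_value)

def precision_spread(measurements):
    """Sample standard deviation of the readings (smaller = more precise)."""
    return stdev(measurements)

true_mass = 50.0  # hypothetical accepted value, in grams
balance_a = [50.1, 49.9, 50.0, 50.2, 49.8]  # centred on the true value, more scattered
balance_b = [52.0, 52.1, 51.9, 52.0, 52.0]  # tightly grouped, but about 2 g too high

for name, readings in (("A", balance_a), ("B", balance_b)):
    print(name, accuracy_error(readings, true_mass), precision_spread(readings))
```

In this made-up data, balance A is more accurate while balance B is more precise, which shows that the two properties are independent of each other.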

8. Reliability: Can the experiment be repeated with similar results? Reliability is affected by sample size, whether the sample is random and representative of the whole population, absence of bias, lack of errors, use of controls, and the presence of outliers (these can drastically affect the average value and hence need to be discounted).

9. Validity: Does the experiment actually test what it is intended to test? Were the controlled variables kept constant, and were positive and/or negative controls used?
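The effect of an outlier on the average, mentioned under reliability, can be seen in a short sketch. The readings are invented, and the rule used to discount outliers (drop anything further than a chosen tolerance from the median) is only an illustrative assumption; the notes do not prescribe a particular rule.

```python
from statistics import mean, median

def drop_outliers(values, tolerance):
    """Keep only values within `tolerance` of the median (an illustrative rule)."""
    centre = median(values)
    return [v for v in values if abs(v - centre) <= tolerance]

readings = [12.1, 12.3, 11.9, 12.0, 19.5]  # hypothetical data; 19.5 looks like an outlier
kept = drop_outliers(readings, tolerance=1.0)

print("mean with outlier:   ", mean(readings))
print("mean without outlier:", mean(kept))
```

A single extreme reading pulls the mean of this sample well above the other four values, which is why outliers are discounted before averaging.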

10. Uncertainty: When you know that a value is around a certain number but may be slightly more or less. E.g. if it took about 25 minutes to drive to school, and you know it took more than 20 but less than 30 minutes, there is an uncertainty of +/- 5 minutes.
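The driving example can be worked through in a few lines. This is a minimal sketch that assumes the best estimate is taken as the midpoint of the known bounds and the uncertainty as half the range; the function name is hypothetical.

```python
def value_with_uncertainty(lower, upper):
    """Return (best estimate, +/- uncertainty) from a lower and an upper bound."""
    estimate = (lower + upper) / 2      # midpoint of the range
    uncertainty = (upper - lower) / 2   # half the width of the range
    return estimate, uncertainty

estimate, uncertainty = value_with_uncertainty(20, 30)
print(f"{estimate} +/- {uncertainty} minutes")  # prints "25.0 +/- 5.0 minutes"
```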

11. Types of errors:

• Mistakes – avoidable errors, e.g. spillage, misreading numbers on scales. Values from experiments with mistakes should be disregarded

• Systematic – errors that produce a constant bias that cannot be eliminated by repeating the experiment. Most commonly due to incorrect technique

• Random – errors that follow no regular pattern. To reduce their impact, repeat the experiment until you have 3 concordant values
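Checking for 3 concordant values can be sketched as follows. The notes do not define "concordant", so this example assumes it means values that lie within a chosen tolerance of one another; the titre readings, the tolerance, and the function name are hypothetical.

```python
from itertools import combinations

def find_concordant(values, tolerance, needed=3):
    """Return the first group of `needed` values whose range is within `tolerance`, or None."""
    for group in combinations(values, needed):
        if max(group) - min(group) <= tolerance:
            return list(group)
    return None

titres = [24.80, 25.30, 25.25, 25.40, 25.30]  # hypothetical repeat readings, in mL
concordant = find_concordant(titres, tolerance=0.10)

if concordant is None:
    print("No concordant set yet: repeat the experiment")
else:
    print("Concordant values:", concordant)
```

If no group agrees within the tolerance, the function returns None, which corresponds to repeating the experiment and checking again.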

You might also like

- General Mathematics: Quarter 1 - Module 17: Exponential Functions, Equations and InequalitiesDocument20 pagesGeneral Mathematics: Quarter 1 - Module 17: Exponential Functions, Equations and InequalitiesTeds TV89% (53)

- GIS in Physical PlanningDocument7 pagesGIS in Physical PlanningGianni GorgoglioneNo ratings yet

- Handbook of Research Methods in Tourism-Larry Dwyer, Alison Gill, Neelu SeetaramDocument509 pagesHandbook of Research Methods in Tourism-Larry Dwyer, Alison Gill, Neelu SeetaramGerda Warnholtz100% (1)

- Experimental Design: Reported byDocument26 pagesExperimental Design: Reported byrinaticiaNo ratings yet

- 6 Experimental Design Lectrue - 24.9.2022 PDFDocument46 pages6 Experimental Design Lectrue - 24.9.2022 PDFSahethi100% (1)

- The Scientific MethodDocument2 pagesThe Scientific MethodshrinithielangumanianNo ratings yet

- Experimental Design and Analysis of VarianceDocument66 pagesExperimental Design and Analysis of Variancealtheajade jabanNo ratings yet

- Lesson 1 - Essentials of ExperimentDocument3 pagesLesson 1 - Essentials of ExperimentLawrenceNo ratings yet

- CHAPTER 9 - EXPERIMENTS - FinalDocument29 pagesCHAPTER 9 - EXPERIMENTS - FinalVJ DecanoNo ratings yet

- Chapter 5Document21 pagesChapter 5FrancoNo ratings yet

- BRM Experimentation LectureDocument4 pagesBRM Experimentation Lectureabc xyzNo ratings yet

- Kathy Mae F. Daclan (Experimental Design Final)Document7 pagesKathy Mae F. Daclan (Experimental Design Final)YHTAK1792No ratings yet

- CC 2Document12 pagesCC 2PojangNo ratings yet

- Research MethodsDocument20 pagesResearch Methodsjanina ebelNo ratings yet

- Physics Lab - PHY102 Manual - Monsoon 2016 (With Correction) PDFDocument82 pagesPhysics Lab - PHY102 Manual - Monsoon 2016 (With Correction) PDFlanjaNo ratings yet

- 497 ExperimentsDocument42 pages497 ExperimentsJesse SandersNo ratings yet

- Principles of Experimental Design: Nur Syaliza Hanim Che Yusof Sta340Document10 pagesPrinciples of Experimental Design: Nur Syaliza Hanim Che Yusof Sta340Nur ShuhadaNo ratings yet

- Chapter 9 Marketing ResearchDocument20 pagesChapter 9 Marketing Researchkamaruljamil4No ratings yet

- Practical Research 11 STEMDocument3 pagesPractical Research 11 STEMlaynojannamarizxNo ratings yet

- Chapter 08 - Experimental DesignsDocument24 pagesChapter 08 - Experimental DesignsNelsenSANo ratings yet

- EARG - Factorial DesignDocument13 pagesEARG - Factorial DesignGie_Anggriyani_4158No ratings yet

- Classs XX - Research DesignsDocument80 pagesClasss XX - Research DesignsWilbroadNo ratings yet

- Experimental Design and TerminologiesDocument2 pagesExperimental Design and TerminologiesKwong Hui TanNo ratings yet

- Research Methods: The Logic of Experimental DesignDocument27 pagesResearch Methods: The Logic of Experimental DesignColinNo ratings yet

- Socoiology 004 Exam 2 NotesDocument1 pageSocoiology 004 Exam 2 Notescramo085No ratings yet

- Assignment - 4th SEMDocument3 pagesAssignment - 4th SEMSujit Kumar OjhaNo ratings yet

- RM 6Document26 pagesRM 6janreycatuday.manceraNo ratings yet

- 8.2 Research Strategies or Methods, ExperimentDocument14 pages8.2 Research Strategies or Methods, ExperimentSir WebsterNo ratings yet

- Research Q1-Designing Exp & Laboratory RulesDocument5 pagesResearch Q1-Designing Exp & Laboratory RulesLian VergaraNo ratings yet

- Experimental DesignsDocument73 pagesExperimental DesignsAman HussainNo ratings yet

- 01 Scientific Method-StoesselDocument26 pages01 Scientific Method-StoesselMDolo GSNo ratings yet

- Bstat DesignDocument47 pagesBstat DesignAby MathewNo ratings yet

- Chapter 1Document22 pagesChapter 1Adilhunjra HunjraNo ratings yet

- Experimental ResearchDocument21 pagesExperimental ResearchNam Phương Nguyễn ThịNo ratings yet

- 8604 Unit 4 Revised Experimental Research 1Document22 pages8604 Unit 4 Revised Experimental Research 1amirkhan30bjrNo ratings yet

- Lesson 3 Experimental ResearchsDocument14 pagesLesson 3 Experimental ResearchsHazell CondimanNo ratings yet

- Experimental Design, IDocument19 pagesExperimental Design, ILeysi GonzalezNo ratings yet

- ReliabilityDocument75 pagesReliabilityCheasca AbellarNo ratings yet

- 497 ExperimentsDocument42 pages497 ExperimentsCyn SyjucoNo ratings yet

- Q - 1 Explain The Four Types of Experimental Designs. Ans. Experimental Designs Are Conducted To Infer Causality. in An Experiment, ADocument8 pagesQ - 1 Explain The Four Types of Experimental Designs. Ans. Experimental Designs Are Conducted To Infer Causality. in An Experiment, AAnshul JainNo ratings yet

- Quasi-Experimental Research DesignDocument22 pagesQuasi-Experimental Research DesignSushmita ShresthaNo ratings yet

- Methodology Quantitative+methodsDocument54 pagesMethodology Quantitative+methodszuniojohnreyNo ratings yet

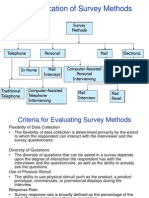

- A Classification of Survey MethodsDocument31 pagesA Classification of Survey Methodscyanide202No ratings yet

- Experimental ResearchDocument14 pagesExperimental ResearchAnzala SarwarNo ratings yet

- Answers The Question "What If?" - The Researcher Manipulates Independent VariableDocument8 pagesAnswers The Question "What If?" - The Researcher Manipulates Independent VariableLiebe Ruiz TorresNo ratings yet

- Chapter 7Document27 pagesChapter 7بيان بنتنNo ratings yet

- Creating Scientific Theories Should Be Backed Up On Experiments. With Trial and Error Approaches We Reject False Theories and Create NewDocument1 pageCreating Scientific Theories Should Be Backed Up On Experiments. With Trial and Error Approaches We Reject False Theories and Create NewPiter ĆwiekNo ratings yet

- Unit 9 Measurements - ShortDocument27 pagesUnit 9 Measurements - ShortMitiku TekaNo ratings yet

- Dr. Mohammed Ammar Department of Electric Power and Machines Cairo UniversityDocument29 pagesDr. Mohammed Ammar Department of Electric Power and Machines Cairo Universitygeo_biNo ratings yet

- Experimental Research Design: Module IV ContinuedDocument54 pagesExperimental Research Design: Module IV ContinuedHruday ChandNo ratings yet

- Experimental MethodDocument7 pagesExperimental MethodpravinnahelanmaryNo ratings yet

- Experimental ResearchDocument7 pagesExperimental ResearchZaher Al-RashedNo ratings yet

- True Experimental Design - PPTX ReportDocument13 pagesTrue Experimental Design - PPTX Reportaltheajade jabanNo ratings yet

- Research MethodDocument30 pagesResearch Methodmahmoudkhal334No ratings yet

- 4 Reliability ValidityDocument47 pages4 Reliability ValidityMin MinNo ratings yet

- Experimental DesignsDocument20 pagesExperimental DesignsaliNo ratings yet

- Experimentation in Market ReasearchDocument86 pagesExperimentation in Market ReasearchcalmchandanNo ratings yet

- Psyc 224 - Lecture 4bDocument21 pagesPsyc 224 - Lecture 4bNana Kweku Obeng SakyiNo ratings yet

- Bloc 3 Causal Designs: 1. Concomitant VariationDocument18 pagesBloc 3 Causal Designs: 1. Concomitant VariationNúria Ventura CallNo ratings yet

- Chapter Two Revision: Exam Type QuestionDocument7 pagesChapter Two Revision: Exam Type QuestionNaeemaKraanNo ratings yet

- 5 Module 5 ReliabilityDocument18 pages5 Module 5 ReliabilityCHRISTINE KYLE CIPRIANO100% (1)

- Summary ch12Document6 pagesSummary ch12Eman KhalilNo ratings yet

- AQA Psychology A Level – Research Methods: Practice QuestionsFrom EverandAQA Psychology A Level – Research Methods: Practice QuestionsNo ratings yet

- MathematicsDocument89 pagesMathematicsrasromeo100% (1)

- Analysis of Multi-Column of Unskewed Bridge Using STAAD Pro Under Static and Dynamic LoadDocument8 pagesAnalysis of Multi-Column of Unskewed Bridge Using STAAD Pro Under Static and Dynamic LoadsweetqistNo ratings yet

- Lecture 2019.1 Project - Equity Hedge Simulation V0.1Document19 pagesLecture 2019.1 Project - Equity Hedge Simulation V0.1pogamo6573No ratings yet

- Lab Report Microwave EngineeringDocument4 pagesLab Report Microwave EngineeringKarthi DuraisamyNo ratings yet

- UNRMO Sample Papers For Class 9Document7 pagesUNRMO Sample Papers For Class 9Jomar EjedioNo ratings yet

- Sider Theodore - Logic For PhilosophyDocument362 pagesSider Theodore - Logic For PhilosophyRodrigo Del Rio Joglar100% (3)

- Contoh Presentasi Bahasa InggrisDocument15 pagesContoh Presentasi Bahasa Inggrisjohny100% (1)

- Mathematics P2 May-June 2016 Memo Afr & EngDocument21 pagesMathematics P2 May-June 2016 Memo Afr & Engaleck mthethwaNo ratings yet

- S01 CountingDocument178 pagesS01 Countingocean2012cosmicNo ratings yet

- Backtesting Pinnacles Odds1Document4 pagesBacktesting Pinnacles Odds1fco07leandroNo ratings yet

- Optical Flow Horn and SchunckDocument21 pagesOptical Flow Horn and SchunckkirubhaNo ratings yet

- Acoustic Scene ClassificationDocument6 pagesAcoustic Scene ClassificationArunkumar KanthiNo ratings yet

- Precalculus Budget of Works 1st Sem Sy 2023 2024Document2 pagesPrecalculus Budget of Works 1st Sem Sy 2023 2024Paluan RhuNo ratings yet

- Finite Difference WikipediaDocument6 pagesFinite Difference Wikipedialetter_ashish4444No ratings yet

- Hydraulic Structures-2Document32 pagesHydraulic Structures-2ناهض عهد عبد المحسن ناهضNo ratings yet

- TDS ET With Solution PKDocument26 pagesTDS ET With Solution PKAyush KumarNo ratings yet

- RPH MATE DLP Y1 Topic 2Document10 pagesRPH MATE DLP Y1 Topic 2g-93275307No ratings yet

- Ancova 2Document8 pagesAncova 2Hemant KumarNo ratings yet

- t13 PDFDocument8 pagest13 PDFMaroshia MajeedNo ratings yet

- Cook TheoremDocument11 pagesCook TheoremlosoloresNo ratings yet

- ISB 1 For Students PDFDocument54 pagesISB 1 For Students PDFRitik AggarwalNo ratings yet

- Class Routine Final 13.12.18Document7 pagesClass Routine Final 13.12.18RakibNo ratings yet

- 6.1.a VisualPrinciplesElementsIDDocument4 pages6.1.a VisualPrinciplesElementsIDfarfenNo ratings yet

- (Akustik LB) Wang2018Document120 pages(Akustik LB) Wang2018Syamsurizal RizalNo ratings yet

- Piezoelectric Accelerometers and Vibration PreamplifierDocument160 pagesPiezoelectric Accelerometers and Vibration PreamplifierBogdan Neagoe100% (1)

- 135 264 1 SMDocument9 pages135 264 1 SMmingNo ratings yet

- Numerical Modeling of Rock Slopes in Siwalik Hills Near Manali Region: A Case StudyDocument21 pagesNumerical Modeling of Rock Slopes in Siwalik Hills Near Manali Region: A Case Studywidayat81No ratings yet