
Applications of Hidden Markov Models to Detecting Multi-stage Network Attacks

Dr. Dirk Ourston, Ms. Sara Matzner, Mr. William Stump, and Dr. Bryan Hopkins,

Applied Research Laboratories, University of Texas at Austin

P.O. Box 8029, Austin, TX 78713-8029

1-512-835-3200

{ourston, matzner, stump, bhopkins}@arlut.utexas.edu

Abstract. This paper describes a novel approach using Hidden Markov Models (HMM) to detect complex Internet attacks. These attacks consist of several steps that may occur over an extended period of time. Within each step, specific actions may be interchangeable. A perpetrator may deliberately use a choice of actions within a step to mask the intrusion. In other cases, alternate action sequences may be random (due to noise) or may result from the perpetrator's lack of experience. For an intrusion detection system to be effective against complex Internet attacks, it must be capable of dealing with the ambiguities described above. We describe research results concerning the use of HMMs as a defense against complex Internet attacks. We describe why HMMs are particularly useful when there is an order to the actions constituting the attack (that is, for the case where one action must precede or follow another action in order to be effective). Because of this property, we show that HMMs are well suited to address the multi-step attack problem. In a direct comparison with two other classic machine learning techniques, decision trees and neural nets, we show that HMMs perform generally better than decision trees and substantially better than neural networks in detecting these complex intrusions.

Keywords: Coordinated Internet attacks, Hidden Markov Models, rare data, noise, multi-stage network intrusions, partial data.

Proceedings of the 36th Hawaii International Conference on System Sciences (HICSS’03)

0-7695-1874-5/03 $17.00 © 2002 IEEE

Introduction

Among the computer network attacks that are most destructive and difficult to detect are those that occur in stages over time. In accomplishing a compromise of this type, an intruder must go through a process of reconnaissance, penetration, attack, and exploitation. Whereas current intrusion detection methods may be able to identify the individual stages of an attack with more or less accuracy and completeness, the recognition of a sophisticated multi-stage attack involving a complex set of steps under the control of a master hacker remains difficult. The benefits of applying machine learning techniques to this domain are that they eliminate the manual knowledge acquisition phase required by rule-based approaches and provide a generalization of the models for the attack types. However, several challenges also face the use of machine learning methods in the construction of intrusion detection systems in response to complex intrusions. The correlation of stages separated by a significant amount of time is difficult to model and detect, and although a successful multi-stage attack normally requires that each phase of the attack be completed before the next stage, the specific actions taken within a given stage may be interchangeable. Moreover, certain stages may not be present or discernible, and data corresponding to a stage may be fragmentary or lost, particularly when wireless communications are used. Truly sophisticated Internet attacks of this type may occur only rarely when compared to the more ubiquitous network intrusion activities such as probes and scans.

The small number of examples of complex Internet attacks contributes to the difficulty associated with applying machine learning (ML) techniques to this problem, since most ML algorithms require a training set containing many examples. In addition, the small number of examples also makes it difficult to measure the performance of the detection algorithm. In response to the difficulties outlined above, we have chosen an approach using Hidden Markov Models (in the remainder of the paper, HMMs will be used as the plural of HMM) to describe the sequential and probabilistic nature of multi-stage Internet attacks [1].

The remainder of this paper is organized as follows. Based on the problem domain being addressed and the techniques used, we compare our work to that of other research groups. We present in detail the salient properties of an HMM that motivated our approach. We present our system architecture, showing the HMM within the deployed system. Then we discuss the process that we employed to prepare the incoming data for use by the HMM algorithm. We present experimental results using a variety of measures, and we compare the performance of HMMs against two classic machine learning algorithms, decision trees and neural networks. We provide an introduction to techniques that can be applied to the rare data problem because, as we have already mentioned, complex Internet attacks may occur only rarely. Finally, we discuss our plans for future work, including investigation of the rare data problem, investigation of methods for recognizing the early stages of an attack, and methods for coping with noisy or missing data.

Related Work

Other researchers have also studied multi-stage Internet attacks. For example, work at Stanford Research Institute (SRI) [2] has included a probabilistic approach to intrusion detection. The SRI approach calculates the similarity between alerts of various types, emanating from multiple sensors. If the alerts are sufficiently similar, they are fused into a meta-alert that summarizes the information contained in the individual alerts. In the SRI approach, the probability of transitioning from one attack phase to another (attack states in our formulation) is specified by the incident class similarity matrix. This matrix is comparable to the state transition matrix in the HMM representation. Therefore, a significant difference between our approach and the SRI approach is that we compute the state transition matrix automatically, using ML techniques, whereas the SRI approach derives the incident class similarity matrix manually, using human judgment. However, an HMM could be used as a source for the correlated attack reports discussed in Ref. [2], using the meta-alerts as input.

A group at Purdue [3] has included temporal sequence learning in their approach to intrusion detection. This work has focused on host-based intrusions, rather than network intrusions. The ML algorithm that they have selected is instance-based learning [4]. In this technique, a set of exemplars is chosen for the class to be modeled. These exemplars are then compared with example data using a similarity metric to determine if the example is a member of the given class. The HMM representation could be applied to this domain directly, using the same format for the examples that was used at Purdue.

Researchers at IBM [5] developed a system that incorporates network alarms instead of raw network data. This system is limited in that it is sensitive to extraordinary events and does not target misuse specifically, as does the HMM representation that we have implemented. The IBM group uses an association rule mining algorithm for identifying events that frequently occur together within the incoming alert data stream. These rules are then used to characterize “normal” operation of the network. Any deviation from the conditions characterized by these rules is considered to be an anomaly, or a potential attack. An approach using an HMM could be applied to this problem domain, with the exception that the HMM output would be various specific attack types, rather than the single broad category of “anomalies.”

Our approach, using Hidden Markov Models [6], has only recently been applied to the intrusion detection problem [7]. The work at the University of New Mexico [7] considered HMMs as a tool for detecting misuse based on operating system calls. This study found that HMMs provided greater classification accuracy than other methods, but at the expense of additional computation.

The HMM technique has been extensively applied to problems in speech recognition [8], text understanding [9], image identification [10], and microbiology [11]. Finally, several institutions have addressed the problem of complex Internet attacks [12], [13], applying non-probabilistic ML techniques [14], [15].

The HMM

The Hidden Markov Model (see Figure 1) starts with a finite set of states. Transitions among the states are governed by a set of probabilities (transition probabilities) associated with each state. In a particular state, an outcome or observation can be generated according to a separate probability distribution associated with the state. Only the outcome, not the state, is visible to an external observer. The states are “hidden” from the outside; hence the name Hidden Markov Model. The Markov Model used for the hidden layer is a first-order Markov Model, which means that the probability of being in a particular state depends only on the previous state. While in a particular state, the Markov Model is said to “emit” an observable corresponding to that state. One of the goals of using an HMM is to deduce from the set of emitted observables the most likely path in state space that was followed by the system.

Given the set of observables contained in an example corresponding to an attack, an HMM can also determine the likelihood of an attack of a specific type. In our case, the observables are the set of alerts contained in the example. Each example is constructed to contain all of the alerts that occurred between a specified source host and a specified target host over a specified time period (in our case 24 hours). The goal for the HMM is therefore to determine the most likely attack type corresponding to a sequence of alerts.
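The most likely path in state space mentioned above is conventionally computed with the Viterbi algorithm. The sketch below is a generic, minimal Python version, not the authors' code. It assumes a hypothetical four-state model: the state names are borrowed from Figure 1, the alert names (ip_scan, port_probe, root_login) follow examples used in this paper, and the state ordering and all probabilities are invented for illustration.

```python
import math

# Hypothetical toy model. State names are borrowed from Figure 1 and alert
# names from examples in the text; the ordering and all probabilities are
# illustrative only, not values learned by the authors.
STATES = ["Probe", "Exploit", "Compromise", "Consolidate"]
START = {"Probe": 0.7, "Exploit": 0.1, "Compromise": 0.1, "Consolidate": 0.1}
TRANS = {  # TRANS[r][s] = P(next state s | current state r); not symmetric
    "Probe":       {"Probe": 0.5,  "Exploit": 0.3,  "Compromise": 0.15, "Consolidate": 0.05},
    "Exploit":     {"Probe": 0.1,  "Exploit": 0.5,  "Compromise": 0.3,  "Consolidate": 0.1},
    "Compromise":  {"Probe": 0.05, "Exploit": 0.1,  "Compromise": 0.5,  "Consolidate": 0.35},
    "Consolidate": {"Probe": 0.05, "Exploit": 0.05, "Compromise": 0.1,  "Consolidate": 0.8},
}
EMIT = {  # EMIT[s][o] = P(alert o | state s)
    "Probe":       {"ip_scan": 0.5,  "port_probe": 0.4,  "root_login": 0.1},
    "Exploit":     {"ip_scan": 0.2,  "port_probe": 0.6,  "root_login": 0.2},
    "Compromise":  {"ip_scan": 0.1,  "port_probe": 0.3,  "root_login": 0.6},
    "Consolidate": {"ip_scan": 0.05, "port_probe": 0.05, "root_login": 0.9},
}


def viterbi(obs, states=STATES, start=START, trans=TRANS, emit=EMIT):
    """Return (most likely hidden state path, its log-probability) for an alert sequence."""
    # best[s]: log-probability of the best partial path that ends in state s
    best = {s: math.log(start[s]) + math.log(emit[s][obs[0]]) for s in states}
    back = []  # back[t][s]: predecessor of state s on that best path
    for o in obs[1:]:
        prev, best, ptr = best, {}, {}
        for s in states:
            r = max(states, key=lambda q: prev[q] + math.log(trans[q][s]))
            best[s] = prev[r] + math.log(trans[r][s]) + math.log(emit[s][o])
            ptr[s] = r
        back.append(ptr)
    # Trace the stored pointers back from the best final state.
    last = max(states, key=lambda s: best[s])
    path = [last]
    for ptr in reversed(back):
        path.append(ptr[path[-1]])
    return path[::-1], best[last]


path, log_p = viterbi(["ip_scan", "port_probe", "root_login"])
print(path)  # ['Probe', 'Exploit', 'Compromise']
```

Working in log space keeps long alert sequences from underflowing to zero probability.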


[Figure 1 image: a Markov Model layer of hidden states labeled Compromise, Consolidate, Exploit, and Probe, and a layer of Observables]

Figure 1. A simple HMM

To train the HMM, a set of training examples is created, with each example labeled by category (see Developing Labeled Data, below). The maximum likelihood method [16] is used to adjust the HMM parameters for optimal classification of the example set. The HMM parameters are the initial probability distribution for the HMM states, the state transition probability matrix, and the observable probability distribution.

The state transition probability matrix is a square matrix with size equal to the number of states. Each element represents the probability of transitioning from a given state to another possible state. The matrix is not symmetric. For example, the likelihood of transitioning from the state corresponding to an ip scan into the state corresponding to a port probe is very high, since these two events are likely to occur in proximity to each other in the order given. However, the transition from port probe to ip scan is much less likely, since port probes are usually conducted on hosts that have been identified by ip scans. The observable probability distribution is a non-square matrix, with dimensions number of states by number of observables. It represents the probability that a given observable will be emitted by a given state. For example, in the late stages of an attack, the observable “root login” would be much more likely than “port probe,” since port probes typically happen at the beginning of an attack and a root login typically happens towards the end.

We implemented our system using a separate HMM for each classification category, since the standard HMM is designed to simulate a single category and the intrusion detection domain includes several attack categories. This approach facilitates parallelization in the operational system, if that is desired.

Why Use an HMM?

There are several properties of alert sequences corresponding to multi-stage Internet attacks that match well with the HMM representation. A multi-stage Internet attack often consists of several steps, each of which is dependent on the outcome of the previous step. These steps are identified in an input example by the alert corresponding to the step. In the HMM, each step is probabilistically dependent on the previous step. Also, in the HMM representation, each step is identified by the observable, that is, the alert, corresponding to the step. The steps themselves are modeled internally in the HMM as states.

An intruder mounting an Internet attack has many ways to accomplish his goals. Within the HMM, each alternative is represented as a specific state sequence. Furthermore, the HMM calculates a probability that a particular state sequence was followed during the attack. This probability is related to the frequency with which the set of transitions contained in the sequence was seen in the HMM training data.

There may also be different ways to accomplish each step in the state sequence. For example, suppose that the goal for a given step is to discover whether or not a host exists at a given ip address. One method to achieve this goal is to attempt to initiate a telnet connection with the host. A second is to consult the DNS server responsible for the network. A third method might involve attempting to establish an FTP connection. Out of the various possible actions, only one is chosen, resulting in the observable for the step. The likelihood associated with each alert type is then calculated according to the frequency with which the particular alert type had been associated with the given state in the training data. The HMM therefore can simulate variations in both the attack step sequence and the choice of methods for accomplishing the goals of each step. The hidden and visible layers of the HMM also have semantic value and could support the development of a cognitive model of the way attackers accomplish their goals. For example, attackers sometimes attempt to disguise their work as normal network activities. The attacker only reveals his motives when he has gained his objectives, and sometimes not even then. The HMM observable layer models the overt portion of the attack, whereas the hidden layer represents the attacker’s true intentions, a useful property when the attacker attempts to hide those intentions.

System Architecture

Figure 2 shows the architecture for the intrusion detection system. All of the modules shown in the architecture have been implemented as research prototypes, and the system is currently undergoing extensive test and evaluation (the results of some of our experimentation form the basis for this paper).
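The text describes one HMM per category but does not spell out how the per-category models are combined into a decision. A common rule, assumed here, is to score an example under every category model with the forward algorithm and choose the category with the highest likelihood. The sketch below uses a scaled forward pass; the two category names and all parameters in MODELS are hypothetical. Because each model is scored independently, these computations could run in parallel, consistent with the parallelization remark above.

```python
import math


def log_likelihood(obs, start, trans, emit):
    """Scaled forward algorithm: returns log P(obs | model)."""
    states = list(start)
    alpha = {s: start[s] * emit[s].get(obs[0], 0.0) for s in states}
    log_p = 0.0
    for t in range(len(obs)):
        if t > 0:
            alpha = {
                s: sum(alpha[r] * trans[r][s] for r in states) * emit[s].get(obs[t], 0.0)
                for s in states
            }
        norm = sum(alpha.values())
        if norm == 0.0:
            return float("-inf")  # the model cannot produce this sequence
        log_p += math.log(norm)  # P(obs) is the product of the per-step scale factors
        alpha = {s: a / norm for s, a in alpha.items()}
    return log_p


def classify(obs, models):
    """Return the category whose HMM gives the alert sequence the highest likelihood."""
    return max(models, key=lambda cat: log_likelihood(obs, *models[cat]))


# Two hypothetical two-state category models, each a (start, trans, emit) tuple.
MODELS = {
    "category_1": (
        {"A": 0.9, "B": 0.1},
        {"A": {"A": 0.9, "B": 0.1}, "B": {"A": 0.5, "B": 0.5}},
        {"A": {"port_probe": 0.9, "root_login": 0.1}, "B": {"port_probe": 0.5, "root_login": 0.5}},
    ),
    "category_13": (
        {"A": 0.8, "B": 0.2},
        {"A": {"A": 0.3, "B": 0.7}, "B": {"A": 0.1, "B": 0.9}},
        {"A": {"port_probe": 0.8, "root_login": 0.2}, "B": {"port_probe": 0.1, "root_login": 0.9}},
    ),
}

print(classify(["port_probe"] * 4, MODELS))                          # category_1
print(classify(["port_probe", "root_login", "root_login"], MODELS))  # category_13
```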

[Figure 2 diagram: Sensors 1 to n produce connection records → Network Alarm Database → Data Pre-Filtering → HMM]

Figure 2. System architecture

The flow through the system is as follows. One or more sensors monitor network traffic and declare alerts when potential intrusions are detected. The alerts from the sensors are stored in an alarm database for later processing by the system. Data pre-filtering (see Preparing the Data, below) is used to eliminate redundant data from the input. After the alarm data has been pre-filtered, it is assembled into examples for analysis by the HMM classification module. Currently, the example input to the HMM consists of a source address (the source host), a destination address, and an ordered sequence of alerts that occurred between the source ip and the destination ip during a 24-hour period. The HMM highlights high-interest examples for further analysis. In the event that an example is incorrectly classified by the HMM, the network analyst corrects the classification and sends the example back to the alert database so that the HMM can be updated using that example.

Preparing the Data

In the real-time data stream, alerts come in as independent events from each of many network sensors. A single record of the real-time data consists of the type of alert being reported by the network sensor, as well as various types of context information including bytes transferred, source host address, target host address, and so on. Viewing the data in this manner tends to obscure correlations among the alerts and makes identification of coordinated attacks more difficult. Consequently, we elected to group the data when forming our examples. Each example consists of the temporally ordered sequence of alerts that occurred between a given source/destination host pair over a 24-hour period.

Using the network sensor data as input allows us to work at a higher level of abstraction than is possible with raw packet data. The network sensors filter out a large number of normal connections, letting our algorithms focus on events that more probably correspond to intrusions. Although using sensors improves the quality of the data being processed by our algorithms, we still have to deal with the “false positive” problem. This problem originates from the fact that most, if not all, network sensors adhere to the philosophy that it is preferable to include many erroneously identified intrusions in the input data stream (false positives) rather than to miss one real intrusion (false negative).

In addition, our input data originally suffered from the alert repetition problem: a single alert type or a set of alert types being repeated over and over in the example. A particular example of this phenomenon occurs during probe activities. In this case the source host sends out service requests over all possible port numbers on the target machine, attempting to find vulnerabilities in the service applications. An alert is generated for each probe attempt, resulting in …pppp… appearing in the example, where p corresponds to a probe alert. It can also happen that a particular alert sequence gets repeated in the example as …abcabcabc…, where a, b, and c are each unique alert types. However, for our purposes in identifying coordinated attacks, these repetitive signatures provide little or no information content to the learning algorithm. As a result, we eliminated these repetitive signatures from the input examples used for training and testing, replacing them with a single alert or alert sequence of the given type.

Developing Labeled Data

One of the main problems associated with using machine-learning algorithms is that of finding realistic training data. In general, there is a paucity of labeled data available for most problem domains. By labeled data we refer to an example that has been annotated with its associated category. Labeling examples requires both a domain expert and a great deal of time.

We have implemented a semi-automatic approach that greatly accelerates the labeling process by using subject matter experts to develop category models. These models are based on the feature sets of the examples. The feature sets result in a simplistic assignment of examples to categories. Specifically, what we required of the subject matter experts is that they identify a mapping between alert types and intrusion categories. For example, an alert of type “port probe” might be mapped to intrusion category “initial recon.” This mapping was accomplished using the following simple rule to assign an alert to a category: “Assign the alert to the most interesting category in which the alert might appear at the end of an attack sequence.” Thus, a port probe would appear in category 1 (least interest) by this rule, since a port probe does not normally appear at the end of any attack sequence, and a port probe by itself is not a particularly interesting event. Note that this mapping only required assignment of alert types to categories; in our case there were about 200 alert types, as compared to manually labeling thousands of examples. Next, we automatically assigned examples to categories using this alert mapping. This was done by assigning each example to the category associated with the highest category alert contained in the example. Using this approach, we were able to avoid labeling individual examples by hand, instead automatically creating an initial set of labeled examples. For research purposes, we have used the examples labeled by this method in order to generate comparisons among candidate learning algorithms. In the operational system, incorrectly identified examples would be re-labeled correctly by the analyst, and then the HMM would be updated using the corrected examples. It should be noted that while we are not able to discuss in detail the characteristics of the input data, due to security issues, the information used in our examples is real network data, generated by real network sensors connected to an operational network.

Empirical Results

Figure 3, below, compares the performance of the HMM algorithm against the 4.8 (latest public) version of the C4.5 decision tree algorithm and a standard neural network implementation (both the C4.5 algorithm and the neural network software were taken from the WEKA [17] machine learning library). By “performance” we mean the fraction of test examples that were correctly classified. The HMM software that we used for the experiments was obtained from HmmLib [18], an HMM software library written in Java. HmmLib is an exact implementation of the HMM algorithm described in Ref. [6].
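The data preparation and labeling steps described above can be sketched as follows. This is an illustration under stated assumptions, not the authors' pre-filter: alert records are assumed to be (timestamp in seconds, source, destination, alert type) tuples; the 24-hour window is assumed to be a fixed calendar-day bucket (the text does not say whether windows are fixed or sliding); repeats are collapsed for blocks of up to three alerts; and ALERT_CATEGORY is a hypothetical three-entry stand-in for the experts' mapping of about 200 alert types.

```python
from collections import defaultdict

DAY = 24 * 60 * 60  # 24-hour window, in seconds


def collapse_repeats(seq, max_period=3):
    """Collapse immediate repeats such as p p p p -> p and a b c a b c -> a b c."""
    out = []
    for alert in seq:
        out.append(alert)
        # If the tail is a block of length L repeated twice, drop the second copy.
        for L in range(1, max_period + 1):
            if len(out) >= 2 * L and out[-L:] == out[-2 * L:-L]:
                del out[-L:]
                break
    return out


def build_examples(alerts):
    """Group (timestamp_s, src, dst, alert_type) records into one example per
    source/destination pair and 24-hour window, in time order."""
    groups = defaultdict(list)
    for ts, src, dst, kind in sorted(alerts):
        groups[(src, dst, int(ts // DAY))].append(kind)
    return {key: collapse_repeats(seq) for key, seq in groups.items()}


def label(example, alert_category):
    """Label an example with the highest category of any alert it contains."""
    return max(alert_category[a] for a in example)


# Hypothetical alert-to-category mapping (category 1 = least interesting).
ALERT_CATEGORY = {"ip_scan": 1, "port_probe": 1, "root_login": 13}
alerts = [
    (0, "192.0.2.1", "198.51.100.7", "port_probe"),
    (5, "192.0.2.1", "198.51.100.7", "port_probe"),
    (9, "192.0.2.1", "198.51.100.7", "port_probe"),
    (60, "192.0.2.1", "198.51.100.7", "root_login"),
    (DAY + 1, "192.0.2.1", "198.51.100.7", "ip_scan"),
]
for key, ex in build_examples(alerts).items():
    print(key, ex, label(ex, ALERT_CATEGORY))
# ('192.0.2.1', '198.51.100.7', 0) ['port_probe', 'root_login'] 13
# ('192.0.2.1', '198.51.100.7', 1) ['ip_scan'] 1
```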

[Figure 3 plot: Performance versus Number of training examples (0 to 500), with curves for HMM, C4.5, and NN]

Figure 3. Performance versus number of training examples


We extended the HmmLib software by adding the capability to learn from multiple examples and to process HMMs for all attack categories in parallel. For these test results, we used 10 to 500 training examples and 100 test examples, and averaged the results over 100 samples. The training and test populations were obtained using sampling without replacement (i.e., the training and test populations were disjoint).

The parameters used for the neural net, which were default values for the WEKA [17] neural network implementation, are as follows (note that the WEKA neural network uses the standard back propagation algorithm for learning):

Learning rate = .3
Momentum = .2
Number of epochs = 500
Number of hidden layers = 1
Nodes per hidden layer = 20

When compared with the C4.5 algorithm, the HMM algorithm is competitive with C4.5 at all levels and significantly outperforms C4.5 at the lower training levels. For instance, with only 10 examples from which to learn, the HMM algorithm scores 45 percent versus 30 percent for the C4.5 algorithm. In addition, the HMM algorithm learns much more quickly. By 50 training examples, the HMM algorithm is already achieving 79 percent performance versus 67 percent for C4.5.

The neural network algorithm was clearly inferior to the HMM for this domain; its likelihood of correctly classifying an example only slightly exceeded chance at the highest training level. It should also be noted that the neural network required vastly more time than the other two algorithms to complete its training. The significant performance advantage displayed by the HMM at low training levels indicated potential applicability to the “rare data” problem: the case where only a few examples of a complex Internet attack are available.
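The evaluation protocol above (10 to 500 training examples, 100 test examples, results averaged over 100 samples, drawn without replacement so the two sets are disjoint) can be sketched as a function that produces one point of a learning curve such as Figure 3. We read “averaged over 100 samples” as 100 independent random draws per training size, which is an assumption. train_and_score is a hypothetical hook where the HMM, C4.5, or neural network would plug in; the majority-class learner is only a placeholder and is not one of the algorithms compared in this paper.

```python
import random
from collections import Counter
from statistics import mean


def learning_curve_point(examples, train_and_score, n_train, n_test=100, n_repeats=100, seed=0):
    """Mean test accuracy at one training-set size.

    examples: list of (alert_sequence, category) pairs.
    train_and_score(train, test): hypothetical hook that trains a classifier
    and returns the fraction of test examples classified correctly.
    """
    rng = random.Random(seed)
    scores = []
    for _ in range(n_repeats):
        # Draw without replacement, then split, so train and test are disjoint.
        drawn = rng.sample(examples, n_train + n_test)
        scores.append(train_and_score(drawn[:n_train], drawn[n_train:]))
    return mean(scores)


def majority_baseline(train, test):
    """Placeholder learner: always predicts the most common training category."""
    guess = Counter(cat for _, cat in train).most_common(1)[0][0]
    return sum(cat == guess for _, cat in test) / len(test)


# Hypothetical data set: 600 examples, 90 percent of them in category 1.
data = [(["port_probe"], 1 if i % 10 else 13) for i in range(600)]
for n in (10, 50, 300):
    print(n, learning_curve_point(data, majority_baseline, n))
```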

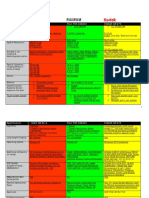

Figure 4 presents the confusion matrix [19], precision, recall, and F-measure data for our current system. The confusion matrix presents the probability of assigning an input example of a particular class to a particular output category, while precision, recall, and F-measure are defined as follows [20]:

$$P = \text{Precision} = \frac{tp}{tp + fp}$$

$$R = \text{Recall} = \frac{tp}{tp + fn}$$

$$F\text{-measure} = \frac{1}{\alpha \frac{1}{P} + (1 - \alpha) \frac{1}{R}}$$

where tp is the number of positive examples in the input data that have been correctly identified by the classifier; fp is the number of negative examples that have been incorrectly identified as positive by the classifier; fn is the number of positive examples that have been identified as negative by the classifier; α is a parameter designed to allow a weighting of the importance ascribed to precision or recall; P is precision and R is recall. For equal weighting of precision and recall, as was done for our experiments, α is set to 0.5.

Figure 4 was generated from the 300-training-example data point shown above in Figure 3. The confusion matrix is shown in the box in Figure 4. Each element of the confusion matrix indicates the proportion of examples from a particular category that were assigned to another category by the classifier. For example, the value of “.96” in the upper left cell of the matrix indicates that 96 percent of the test examples in category 1 were correctly assigned to category 1 by the classifier.

The second column of the figure shows the average number of test examples in a category, averaged over the number of iterations for testing. The row in the figure that is just above the confusion matrix, labeled “Examples assigned to category,” indicates the average number of examples that were classified into a particular category. Points along the diagonal of the confusion matrix (where one would like to see “1’s”) are shown in bold. Precision, recall, and F-measure are given in the last three rows of the figure (just below the confusion matrix). Each element in these rows corresponds to a given category, starting with category 1 on the left and running to category 13 at the far right. Values containing question marks indicate cases where the denominator of the corresponding expression was zero.

In order to avoid data security issues, the category labels do not indicate the specific category definitions. It can be said that the progression among the categories is from less interesting to more interesting in terms of analyst interest. Category 1 is the least interesting category, and category 13 is the most interesting. The point should also be made that the data used for our analysis is “real” data corresponding to suspected intrusions.

As can be seen in Figure 4, good performance can be obtained from this level of training (overall performance is 92.5 percent). However, categories for which there are few examples tend to have lower performance. For example, category 6 had, on average, only .2 examples in the test population; it was classified with an accuracy of 35 percent. Category 7 also had, on average, .2 examples and achieved only 15 percent classification accuracy.
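The definitions above translate directly into code. In this sketch, prf returns None for an undefined value (a zero denominator, shown as “?” in Figure 4), and per_category derives tp, fp, and fn for each category of a multi-class confusion matrix of counts in one-versus-rest fashion; that this is how the per-category values in Figure 4 were computed is our assumption, and Figure 4 itself reports proportions rather than counts. With α = 0.5 the F-measure reduces to the harmonic mean 2PR/(P + R).

```python
def prf(tp, fp, fn, alpha=0.5):
    """Precision, recall and F-measure as defined above.

    None stands for an undefined value (a zero denominator).
    """
    precision = tp / (tp + fp) if tp + fp else None
    recall = tp / (tp + fn) if tp + fn else None
    if precision is None or recall is None:
        f = None
    elif precision == 0 or recall == 0:
        f = 0.0  # F -> 0 as P or R -> 0 (for 0 < alpha < 1)
    else:
        f = 1.0 / (alpha / precision + (1 - alpha) / recall)
    return precision, recall, f


def per_category(confusion):
    """confusion[i][j] = number of test examples of category i assigned to category j."""
    n = len(confusion)
    results = []
    for k in range(n):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp  # others predicted as k
        fn = sum(confusion[k]) - tp                        # k predicted as others
        results.append(prf(tp, fp, fn))
    return results


print(prf(3, 1, 0))  # precision 0.75, recall 1.0, F-measure about 0.857
```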


Figure 4. Confusion matrix, precision, recall, and F-measure

The precision, recall, and F-measure values are very good, except for those categories where there are insufficient training and test examples. In addition, for categories 2 and 7, the classifier attained a high value for precision but a low value for recall. This means that for those categories the classifier had few false positives but a high proportion of false negatives. This could mean that the training population, with only a few examples, had almost no positive examples, causing the classifier to learn that almost all examples should be labeled as negative. Figure 5 shows the ROC curves [21] obtained from the testing activity. Note that the ROC curves presented in Figure 5 were obtained from the category 1 testing data. ROC curves for the other categories are similar. The point that should be made regarding this figure is that the ROC curves gain most of their performance in the region from 0 to 40 percent false alarm rate, and the ROC curves improve as the training level is increased.

The improvement in performance with training level is shown more clearly in Figure 6, which presents area under the ROC curve as a function of training level. Note that categories for which there were insufficient test examples to generate a valid curve are not included in Figure 6. Categories that start out at lower performance values increase performance with training, as expected. However, the top two categories, 8 and 11, actually appear to decrease in performance as training is increased, an effect for which we currently have no explanation.

The Rare Data Problem

In general, the most dangerous attacks happen only rarely. Therefore the training set for the HMM contains only a few examples of these attack types. In addition, the input alert data stream contains many low-priority alerts that tend to mask identification of these rare, high-priority attacks. For example, in the confusion matrix of Figure 4, there are very few examples of category 2, and almost all of these examples are mistakenly assigned to category 1, a very common category. Similarly, the examples of category 5 (rare) are also assigned to category 1, and the examples of category 6 are mistakenly assigned to category 8. This problem is known in the literature as the problem of “skewed distributions” or “imbalanced data sets” [22], [23], [24], [25]. This phenomenon has only recently been addressed within the ML community and to date is not well explored. Because of the incipient nature of the work in this area, in this section we only provide a brief list of approaches that have been used to attack the rare data problem in the past. It is our intention to look more deeply at this problem in the next stage of our research.

The standard approaches for handling the rare data problem are as follows:

Over-sample the rare data population [25]. In this case, the samples of the rare data population are duplicated so as to increase their representation during the learning process.

Under-sample the abundant data population [25]. This is simply the inverse of the method described above. In both cases the objective is to obtain a balance between the most populous sample types and the rare samples.

Apply recognition filters to the data to eliminate samples of one class or another [25].

“Boost” the performance of the algorithm against rare data [24].

Teach the classification algorithm in two stages consisting of: 1) training the classifier to properly classify all of the positive examples, and 2) amending the classifier by teaching it to properly classify all of the negative examples that were improperly classified as positive during the first stage [22], [26].
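The first two approaches in the list are simple to express in code. The sketch below is a generic random over-/under-sampler for (alert sequence, category) pairs, not something this paper reports implementing; the data set in the usage lines is hypothetical. Duplicating rare examples changes their weight during training but adds no new information, and under-sampling discards abundant examples.

```python
import random
from collections import Counter, defaultdict


def rebalance(examples, mode="over", seed=0):
    """Randomly over- or under-sample (sequence, category) pairs so that every
    category ends up with the same number of examples."""
    if mode not in ("over", "under"):
        raise ValueError("mode must be 'over' or 'under'")
    rng = random.Random(seed)
    by_cat = defaultdict(list)
    for ex in examples:
        by_cat[ex[1]].append(ex)
    sizes = [len(items) for items in by_cat.values()]
    target = max(sizes) if mode == "over" else min(sizes)
    balanced = []
    for items in by_cat.values():
        if mode == "over":
            # Duplicate randomly chosen rare examples up to the largest class size.
            balanced += items + [rng.choice(items) for _ in range(target - len(items))]
        else:
            # Keep a random subset of each class, down to the smallest class size.
            balanced += rng.sample(items, target)
    rng.shuffle(balanced)
    return balanced


data = [(["port_probe"], 1)] * 50 + [(["port_probe", "root_login"], 13)] * 3
print(Counter(cat for _, cat in rebalance(data, "over")))   # 50 of each category
print(Counter(cat for _, cat in rebalance(data, "under")))  # 3 of each category
```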


Figure 5. ROC curves as a function of training level. [Plot: Category 1 ROC curves for 300, 100, and 50 training examples; true positive rate vs. false positive rate.]
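Each curve in Figure 5 traces the true positive rate against the false positive rate as the decision threshold is swept. The sketch below shows how such points can be computed. It is not presented as the paper's evaluation code, and it rests on these assumptions:
- Each test example carries a real-valued score, for instance a model log-likelihood for the category being evaluated.
- Each example has a binary label, with 1 marking the category of interest.
- The function name `roc_points` is hypothetical.

```python
def roc_points(scores, labels):
    """Return ROC points (false positive rate, true positive rate), one per
    distinct score threshold, from strictest to most permissive.
    labels: 1 = example of the category of interest, anything else = negative."""
    pos = sum(1 for y in labels if y == 1)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative example")
    # Highest scores first: lowering the threshold admits them in this order.
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Examples with tied scores cross the threshold together.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / neg, tp / pos))
    return points


print(roc_points([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0]))
# [(0.0, 0.0), (0.0, 0.5), (0.5, 0.5), (0.5, 1.0), (1.0, 1.0)]
```

Tied scores are grouped so that the curve does not depend on the arbitrary order in which equal-scoring examples happen to be sorted.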

Figure 6. Area under the ROC curve as a function of training level. [Plot: one curve each for Categories 11, 8, 1, 3, 4, 10, 9, and 5; area under the ROC curve vs. number of training examples (0 to 300).]
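Figure 6 summarizes each ROC curve by the area beneath it. One standard way to compute that area is the rank interpretation: AUC equals the probability that a randomly chosen positive example scores higher than a randomly chosen negative one, with ties counted as one half. This gives the same value as the trapezoidal area under the ROC curve. The sketch below rests on these assumptions:
- One category is scored against all others (one-vs-rest). The exact multi-category procedure is not restated in this section.
- The function name `auc` is hypothetical.

```python
def auc(scores, labels):
    """Area under the ROC curve via the rank (Mann-Whitney) interpretation:
    the fraction of (positive, negative) pairs in which the positive example
    scores higher, counting ties as one half. labels: 1 = positive, else negative.
    Runs in O(P * N) time, which is adequate for small test sets."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y != 1]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(auc([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0]))  # 0.75
```

For these example scores, three of the four positive-negative pairs are ordered correctly, so the area is 0.75. A value of 0.5 corresponds to a classifier that ranks no better than chance.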

Each of the approaches listed has strengths and weaknesses in the context of the intrusion detection problem. When only a few examples (i.e., fewer than 10) of a particular attack exist in the training data, none of these approaches may work, and we may have to resort to other methods to solve the rare data problem. The approaches listed above represent possible starting points for the work that needs to be done in accounting for rare data in the intrusion detection domain. This work is especially vital for multi-stage attacks, as these attacks are almost always rare.

Future Work

The work done so far concerning coordinated Internet attacks has identified areas where further work is required. One problem that needs to be addressed is the “rare data” problem, as we have discussed above.

In addition, we need to investigate the “partial data” problem, that is, how to identify slowly developing attacks at an early stage. Many coordinated Internet attacks take place over a long period of time (in some cases, weeks or months).

We need a method for detecting early actions that are likely preludes to more complex intrusions. Such early detections would allow us to monitor these “slow rolling” attacks as they are developing and take appropriate action. Some work that may be applicable to this problem has been done at the University of Waikato on prediction by partial matching (PPM) [9], a technique that has been used in text mining for recognizing partial sequences. The final area requiring research is the problem of noise contaminating the input data [28]. This can occur when there are problems with the communications channel (particularly in the case of wireless communications), when the sensors malfunction, or when the hacker mounting the intrusion simply makes a mistake in the attack sequence. This area has been studied previously in the field of automated knowledge base repair [29], [30].

References

1. Ourston, D., Matzner, S., Stump, W., Hopkins, B., Richards, K.: Identifying Coordinated Internet Attacks. In: Proceedings of the Second SSGRR Conference. Rome, Italy (2001)
2. Valdes, A., Skinner, K.: Probabilistic Alert Correlation. In: Proceedings of Recent Advances in Intrusion Detection (RAID). Springer-Verlag Lecture Notes in Computer Science (2001)
3. Lane, T., Brodley, C.: Temporal Sequence Learning for Anomaly Detection. In: 5th ACM Conference on Computer & Communications Security. San Francisco, California, USA (1998) 150-158
4. Aha, D.W., Kibler, D., Albert, M.K.: Instance-Based Learning Algorithms. Machine Learning 6 (1991) 37-66
5. Manganaris, S., et al.: A Data Mining Analysis of RTID Alarms. In: 2nd International Workshop on Recent Advances in Intrusion Detection. Purdue University, West Lafayette, Indiana, USA (1999)
6. Rabiner, L.R., Juang, B.H.: An Introduction to Hidden Markov Models. IEEE ASSP Magazine (1986)
7. Warrender, C., Forrest, S., Pearlmutter, B.: Detecting Intrusions Using System Calls: Alternative Data Models. In: Proceedings of the 1999 IEEE Symposium on Security and Privacy (1999)
8. Huang, X.D., Ariki, Y., Jack, M.A.: Hidden Markov Models for Speech Recognition. Edinburgh University Press (1990)
9. Wen, Y.: Text Mining Using HMM and PPM. Master’s thesis. Department of Computer Science, University of Waikato (2001)
10. Bunke, H., Caelli, T. (eds.): Hidden Markov Models: Applications in Computer Vision. World Scientific, Series in Machine Perception and Artificial Intelligence, Vol. 45 (2001)
11. Boys, R.J., Henderson, D.A., Wilkinson, D.J.: Detecting Homogeneous Segments in DNA Sequences by Using Hidden Markov Models. Applied Statistics 49(2) (2000) 269
12. Green, J., Marchette, D., Northcutt, S., Ralph, B.: Analysis Techniques for Detecting Coordinated Attacks and Probes. In: Proceedings of the 1st USENIX Workshop on Intrusion Detection and Network Monitoring (1999)
13. Cuppens, F.: Managing Alerts in a Multi-intrusion Detection Environment. In: 17th Annual Computer Security Applications Conference. New Orleans, Louisiana (2001)
14. Bloedorn, E., et al.: Data Mining for Network Intrusion Detection: How to Get Started. Mitre Corporation. http://www.mitre.org/support/papers/tech_papers_01/bloedorn_datamining/index.shtml (2001)
15. Sinclair, C., Pierce, L., Matzner, S.: An Application of Machine Learning Techniques to Network Intrusion Detection. In: Proceedings of the 15th Annual Computer Security Applications Conference (1999)
16. DeGroot, M.H.: Probability and Statistics. 2nd edn. Addison Wesley Longman
17. Witten, I.H., Frank, E.: Data Mining: Practical Machine Learning Tools and Techniques with Java Implementations. Morgan Kaufmann (2000) Ch. 8. http://www.cs.waikato.ac.nz/ml/weka/
18. Yoon, H.: HmmLib: Hidden Markov Model Library in Java. http://www.vilab.com/hmmlib/home.html
19. Townsend, J.T.: Theoretical Analysis of an Alphabetic Confusion Matrix. Perception and Psychophysics 9(1A) (1971) 40-50
20. Manning, C., Schütze, H.: Foundations of Statistical Natural Language Processing. The MIT Press, Cambridge, Massachusetts, USA 267-270
21. Egan, J.P.: Signal Detection Theory and ROC Analysis. Series in Cognition and Perception. Academic Press, New York (1975)
22. Agarwal, R., Joshi, M.: PNrule: A New Framework for Learning Classifier Models in Data Mining (A Case-Study in Network Intrusion Detection). Technical Report TR 00-015, Department of Computer Science, University of Minnesota, USA (2000)
23. Provost, F., Fawcett, T.: Robust Classification for Imprecise Environments. Machine Learning 42 (2001) 203-231
24. Freitag, D., Kushmerick, N.: Boosted Wrapper Induction. In: Proceedings of the Seventeenth National Conference on Artificial Intelligence. Austin, Texas, USA (2000)
25. Japkowicz, N.: Learning from Imbalanced Data Sets: A Comparison of Various Strategies. In: Papers from the AAAI Workshop on Learning from Imbalanced Data Sets. Technical Report WS-00-05, AAAI Press, Menlo Park, California, USA (2000)
26. Joshi, M., Agarwal, R., Kumar, V.: Mining Needles in a Haystack: Classifying Rare Classes via Two-Phase Rule Induction. In: Proceedings of the ACM SIGMOD’01 Conference on Management of Data. Santa Barbara, California, USA (2001)
27. Hand, D., Till, R.: A Simple Generalization of the Area under the ROC Curve for Multiple Class Classification Problems. Machine Learning 45 (2001) 171-186
28. Eskin, E.: Anomaly Detection over Noisy Data Using Learned Probability Distributions. In: Proceedings of the Seventeenth International Conference on Machine Learning (ICML-2000) (2000)


29. Ourston, D.: Using Explanation-Based and Empirical Methods in Theory Revision. Ph.D. thesis. The University of Texas, Austin, Texas, USA (1991)
30. Mooney, R.J., Ourston, D.: Theory Refinement with Noisy Data. Technical Report AI91-153, Artificial Intelligence Laboratory. The University of Texas, Austin, Texas, USA (1991)
