Professional Documents

Culture Documents

Stock Watson 4E Exercisesolutions Chapter4 Instructors

Uploaded by

Rodrigo PassosCopyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Stock Watson 4E Exercisesolutions Chapter4 Instructors

Uploaded by

Rodrigo PassosCopyright:

Available Formats

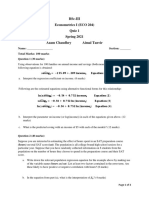

Introduction to Econometrics (4th Edition)

by

James H. Stock and Mark W. Watson

Solutions to End-of-Chapter Exercises: Chapter 4*

(This version September 14, 2018)

*Limited distribution: For Instructors Only. Answers to all odd-numbered questions are provided to students on the textbook website. If you find errors in the solutions, please pass them along to us at mwatson@princeton.edu.

©2018 Pearson Education, Inc.

Stock/Watson - Introduction to Econometrics 4th Edition - Answers to Exercises: Chapter 4 1

_____________________________________________________________________________________________________

4.1. (a) The predicted average test score is

$$\widehat{TestScore} = 520.4 - 5.82 \times 22 = 392.36.$$

(b) The predicted change in the classroom average test score is

$$\Delta\widehat{TestScore} = (-5.82 \times 23) - (-5.82 \times 19) = -23.28;$$

that is, the regression predicts that test scores will fall by 23.28 points.

(c) Using the formula for $\hat\beta_0$ in Equation (4.8), we know the sample average of the test scores across the 100 classrooms is

$$\overline{TestScore} = \hat\beta_0 + \hat\beta_1 \times \overline{CS} = 520.4 - 5.82 \times 21.4 = 395.85.$$

(d) Use the formula for the standard error of the regression (SER) in Equation (4.19) to get the sum of squared residuals:

$$SSR = (n - 2)\,SER^2 = (100 - 2) \times 11.5^2 = 12961.$$

Use the formula for $R^2$ in Equation (4.16) to get the total sum of squares:

$$TSS = \frac{SSR}{1 - R^2} = \frac{12961}{1 - 0.08} = 14088.$$

The sample variance is $s_Y^2 = \frac{TSS}{n-1} = \frac{14088}{99} = 142.3$. Thus, the standard deviation is $s_Y = \sqrt{s_Y^2} = 11.9$.
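The arithmetic in 4.1 can be re-traced with a few lines of Python. This is only a sketch for checking the numbers; the variable names are illustrative, and the inputs are the values quoted in the solution above.

```python
# Inputs quoted in the solution to Exercise 4.1.
b0, b1 = 520.4, -5.82          # intercept and slope of the estimated line
n, ser, r2 = 100, 11.5, 0.08   # sample size, SER, and R^2

pred_22 = b0 + b1 * 22                 # (a) predicted score at class size 22
change_19_to_23 = b1 * 23 - b1 * 19    # (b) predicted change from CS = 19 to CS = 23
mean_score = b0 + b1 * 21.4            # (c) sample mean implied by Equation (4.8)

ssr = (n - 2) * ser ** 2               # (d) from SER^2 = SSR / (n - 2)
tss = ssr / (1 - r2)                   #     from R^2 = 1 - SSR / TSS
s_y = (tss / (n - 1)) ** 0.5           #     sample standard deviation of Y

print(round(pred_22, 2), round(change_19_to_23, 2), round(mean_score, 2))
print(round(ssr, 1), round(tss, 1), round(s_y, 1))
```

The unrounded SSR is 12960.5, so the unrounded TSS comes out slightly below the 14088 reported above, which is computed from the rounded SSR; the standard deviation agrees to one decimal place either way.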


4.2. The sample size is $n = 200$. The estimated regression equation is

$$\widehat{Weight} = \underset{(2.15)}{-99.41} + \underset{(0.31)}{3.94}\,Height, \quad R^2 = 0.81, \quad SER = 10.2.$$

(a) Substituting Height = 70, 65, and 74 inches into the equation, the predicted weights are 176.39, 156.69, and 192.15 pounds.

(b) $\Delta\widehat{Weight} = 3.94 \times \Delta Height = 3.94 \times 1.5 = 5.91$.

(c) We have the following relations: 1 in = 2.54 cm and 1 lb = 0.4536 kg. Suppose the regression equation in the centimeter-kilogram space is

$$\widehat{Weight} = \hat\gamma_0 + \hat\gamma_1 Height.$$

The coefficients are $\hat\gamma_0 = -99.41 \times 0.4536 = -45.092$ kg and $\hat\gamma_1 = 3.94 \times \frac{0.4536}{2.54} = 0.7036$ kg per cm. The $R^2$ is unit free, so it remains at $R^2 = 0.81$. The standard error of the regression is $SER = 10.2 \times 0.4536 = 4.6267$ kg.
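As a rough check on the unit conversion in 4.2(c), the sketch below rescales the coefficients and confirms that predicting in kilograms from centimeters matches converting the pound prediction. The function and variable names are illustrative, not from the text.

```python
LB_TO_KG = 0.4536   # 1 lb in kg, as stated in part (c)
IN_TO_CM = 2.54     # 1 in in cm, as stated in part (c)

# Estimated regression in pounds and inches.
b0_lb, b1_lb_per_in, ser_lb = -99.41, 3.94, 10.2


def predict_lb(height_in):
    """Predicted weight in pounds for a height in inches."""
    return b0_lb + b1_lb_per_in * height_in


# Rescaled coefficients for kilograms and centimeters.
g0_kg = b0_lb * LB_TO_KG
g1_kg_per_cm = b1_lb_per_in * LB_TO_KG / IN_TO_CM
ser_kg = ser_lb * LB_TO_KG

# Consistency check at one height: both routes should give the same weight in kg.
height_in = 70
pred_kg_direct = g0_kg + g1_kg_per_cm * (height_in * IN_TO_CM)
pred_kg_converted = predict_lb(height_in) * LB_TO_KG

print(round(g0_kg, 3), round(g1_kg_per_cm, 4), round(ser_kg, 4))
print(abs(pred_kg_direct - pred_kg_converted) < 1e-9)
```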


4.3. (a) The coefficient 9.6 shows the marginal effect of Age on AWE; that is, AWE is

expected to increase by $9.6 for each additional year of age. 696.7 is the intercept

of the regression line. It determines the overall level of the line.

(b) SER is in the same units as the dependent variable (Y, or AWE in this example). Thus SER is measured in dollars per week.

(c) $R^2$ is unit free.

(d) (i) $696.7 + 9.6 \times 25 = \$936.7$;

(ii) $696.7 + 9.6 \times 45 = \$1{,}128.7$.

(e) No. The oldest worker in the sample is 65 years old. 99 years is far outside the

range of the sample data.

(f) No. The distribution of earnings is positively skewed and has kurtosis larger than the normal.

(g) $\hat\beta_0 = \bar{Y} - \hat\beta_1 \bar{X}$, so that $\bar{Y} = \hat\beta_0 + \hat\beta_1 \bar{X}$. Thus the sample mean of AWE is $696.7 + 9.6 \times 41.6 = \$1{,}096.06$.


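4.4. (a) $(R - R_f) = \beta(R_m - R_f) + u$, so that

$$\operatorname{var}(R - R_f) = \beta^2 \times \operatorname{var}(R_m - R_f) + \operatorname{var}(u) + 2\beta \times \operatorname{cov}(u, R_m - R_f).$$

But $\operatorname{cov}(u, R_m - R_f) = 0$, thus $\operatorname{var}(R - R_f) = \beta^2 \times \operatorname{var}(R_m - R_f) + \operatorname{var}(u)$. With $\beta > 1$, $\operatorname{var}(R - R_f) > \operatorname{var}(R_m - R_f)$, because $\operatorname{var}(u) \ge 0$.

(b) Yes. Using the expression in (a),

$$\operatorname{var}(R - R_f) - \operatorname{var}(R_m - R_f) = (\beta^2 - 1) \times \operatorname{var}(R_m - R_f) + \operatorname{var}(u),$$

which will be positive if $\operatorname{var}(u) > (1 - \beta^2) \times \operatorname{var}(R_m - R_f)$.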

(c) $R_m - R_f = 5.3\% - 2.0\% = 3.3\%$. Thus, the predicted returns are

$$\hat{R} = R_f + \hat\beta (R_m - R_f) = 2.0\% + \hat\beta \times 3.3\%.$$

Wal-Mart: 2.0% + 0.1×3.3% = 2.3%

Coca-Cola: 2.0% + 0.6×3.3% = 4.0%

Verizon: 2.0% + 0.7×3.3% = 4.3%

Google: 2.0% + 1.0×3.3% = 5.3%

Boeing: 2.0% + 1.3×3.3% = 6.3%

Bank of America: 2.0% + 1.7×3.3% = 7.6%
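The table in 4.4(c) applies one formula to six betas, which is easy to reproduce. The following is a small sketch using the risk-free rate, market return, and betas quoted above; the dictionary layout is an illustrative choice.

```python
# Risk-free rate and market return from part (c), in percent.
r_f, r_m = 2.0, 5.3
excess_market = r_m - r_f

# Betas as used in the table above.
betas = {
    "Wal-Mart": 0.1,
    "Coca-Cola": 0.6,
    "Verizon": 0.7,
    "Google": 1.0,
    "Boeing": 1.3,
    "Bank of America": 1.7,
}

# Predicted return: R_f + beta * (R_m - R_f).
predicted = {name: r_f + beta * excess_market for name, beta in betas.items()}

for name, value in predicted.items():
    print(f"{name}: {value:.1f}%")
```

A stock with beta equal to 1 (Google here) is predicted to earn exactly the market return, and the predicted return rises linearly in beta.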


4.5. (a) $u_i$ represents factors other than time that influence the student's performance on the exam, including amount of time studying, aptitude for the material, and so forth. Some students will have studied more than average, others less; some students will have higher than average aptitude for the subject, others lower, and so forth.

(b) Because of random assignment, $u_i$ is independent of $X_i$. Since $u_i$ represents deviations from average, $E(u_i) = 0$. Because $u_i$ and $X_i$ are independent, $E(u_i|X_i) = E(u_i) = 0$.

(c) (2) is satisfied if this year's class is typical of other classes, that is, students in this year's class can be viewed as random draws from the population of students that enroll in the class. (3) is satisfied because $0 \le Y_i \le 100$ and $X_i$ can take on only two values (90 and 120).

(d) (i) $49 + 0.24 \times 90 = 70.6$; $49 + 0.24 \times 120 = 77.8$; $49 + 0.24 \times 150 = 85.0$

(ii) $0.24 \times 10 = 2.4$.


4.6. Using $E(u_i|X_i) = 0$, we have

$$E(Y_i|X_i) = E(\beta_0 + \beta_1 X_i + u_i|X_i) = \beta_0 + \beta_1 E(X_i|X_i) + E(u_i|X_i) = \beta_0 + \beta_1 X_i.$$


4.7. The expectation of $\hat\beta_0$ is obtained by taking expectations of both sides of Equation (4.8):

$$\begin{aligned}
E(\hat\beta_0) &= E(\bar{Y} - \hat\beta_1 \bar{X}) = E\left[\left(\beta_0 + \beta_1 \bar{X} + \frac{1}{n}\sum_{i=1}^n u_i\right) - \hat\beta_1 \bar{X}\right] \\
&= \beta_0 + E[(\beta_1 - \hat\beta_1)\bar{X}] + \frac{1}{n}\sum_{i=1}^n E(u_i) \\
&= \beta_0,
\end{aligned}$$

where the final equality uses the facts that $E(u_i) = 0$ and $E[(\hat\beta_1 - \beta_1)\bar{X}] = E[\bar{X} \times E(\hat\beta_1 - \beta_1 \mid \bar{X})] = 0$ because $E[(\beta_1 - \hat\beta_1) \mid \bar{X}] = 0$ (see text equation (4.31)).


4.8. The only change is that the mean of $\hat\beta_0$ is now $\beta_0 + 2$. An easy way to see this is to write the regression model as

$$Y_i = (\beta_0 + 2) + \beta_1 X_i + (u_i - 2).$$

The new regression error is $(u_i - 2)$ and the new intercept is $(\beta_0 + 2)$. All of the assumptions of Key Concept 4.3 hold for this regression model.
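The shift in the intercept can be illustrated on a tiny made-up sample. In the sketch below the coefficients, regressor values, and errors are all hypothetical; the errors are chosen to sum to zero and to be uncorrelated with the regressor in the sample, so OLS recovers the coefficients exactly and the effect of adding 2 to every error is easy to see.

```python
def ols(x, y):
    """Return (intercept, slope) from a simple OLS regression of y on x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


beta0, beta1 = 1.0, 0.5        # hypothetical true coefficients
x = [1.0, 2.0, 3.0, 4.0]       # hypothetical regressor values
u = [1.0, -1.0, -1.0, 1.0]     # hypothetical errors: mean zero, uncorrelated with x

# Errors with mean 0 versus the same errors shifted to have mean 2.
y_mean_zero = [beta0 + beta1 * xi + ui for xi, ui in zip(x, u)]
y_mean_two = [beta0 + beta1 * xi + (ui + 2.0) for xi, ui in zip(x, u)]

b0_zero, b1_zero = ols(x, y_mean_zero)
b0_two, b1_two = ols(x, y_mean_two)

print(b0_zero, b1_zero)   # intercept and slope with mean-zero errors
print(b0_two, b1_two)     # intercept absorbs the shift; slope is unchanged
```

The slope estimate is identical in both fits, while the intercept estimate moves up by exactly 2, matching the rewritten model with intercept $(\beta_0 + 2)$.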


4.9. (a) With $\hat\beta_1 = 0$, $\hat\beta_0 = \bar{Y}$, and $\hat{Y}_i = \hat\beta_0 = \bar{Y}$. Thus ESS = 0 and $R^2 = 0$.

(b) If $R^2 = 0$, then ESS = 0, so that $\hat{Y}_i = \bar{Y}$ for all i. But, using the formula for $\hat\beta_0$, $\hat{Y}_i = \hat\beta_0 + \hat\beta_1 X_i = \bar{Y} + \hat\beta_1 (X_i - \bar{X})$. Thus $\hat{Y}_i = \bar{Y}$ for all i implies that $\hat\beta_1 = 0$, or that $X_i$ is constant for all i. If $X_i$ is constant for all i, then $\sum_{i=1}^n (X_i - \bar{X})^2 = 0$ and $\hat\beta_1$ is undefined (see equation (4.7)).


4.10. (a) $E(u_i|X_i = 0) = 0$ and $E(u_i|X_i = 1) = 0$. $(X_i, u_i)$ are i.i.d., so that $(X_i, Y_i)$ are i.i.d. (because $Y_i$ is a function of $X_i$ and $u_i$). $X_i$ is bounded and so has a finite fourth moment; the fourth moment is nonzero because $\Pr(X_i = 0)$ and $\Pr(X_i = 1)$ are both nonzero. Following calculations like those in Exercise 2.13, $u_i$ also has a nonzero finite fourth moment.

(b) $\operatorname{var}(X_i) = 0.2 \times (1 - 0.2) = 0.16$ and $\mu_X = 0.2$. Also

$$\begin{aligned}
\operatorname{var}[(X_i - \mu_X)u_i] &= E\{[(X_i - \mu_X)u_i]^2\} \\
&= E\{[(X_i - \mu_X)u_i]^2 \mid X_i = 0\} \times \Pr(X_i = 0) + E\{[(X_i - \mu_X)u_i]^2 \mid X_i = 1\} \times \Pr(X_i = 1),
\end{aligned}$$

where the first equality follows because $E[(X_i - \mu_X)u_i] = 0$, and the second equality follows from the law of iterated expectations.

$E\{[(X_i - \mu_X)u_i]^2 \mid X_i = 0\} = 0.2^2 \times 1$, and $E\{[(X_i - \mu_X)u_i]^2 \mid X_i = 1\} = (1 - 0.2)^2 \times 4$.

Putting these results together,

$$\sigma^2_{\hat\beta_1} = \frac{1}{n}\,\frac{(0.2^2 \times 1 \times 0.8) + ((1 - 0.2)^2 \times 4 \times 0.2)}{0.16^2} = \frac{1}{n}\,21.25.$$
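The constant 21.25 in 4.10(b) can be checked numerically. The sketch below uses the values that appear in the solution (Pr(X = 1) = 0.2 and conditional error variances of 1 and 4); the variable names are illustrative.

```python
p = 0.2                                  # Pr(X = 1), so mu_X = 0.2
var_u_given_x = {0: 1.0, 1: 4.0}         # var(u | X = 0) and var(u | X = 1)

mu_x = p
var_x = p * (1 - p)                      # variance of a Bernoulli regressor

# var[(X - mu_X) u], conditioning on X = 0 and X = 1 in turn.
var_v = ((0 - mu_x) ** 2 * var_u_given_x[0] * (1 - p)
         + (1 - mu_x) ** 2 * var_u_given_x[1] * p)

# Large-sample variance of the slope estimator is factor / n.
factor = var_v / var_x ** 2

print(round(var_x, 4), round(var_v, 4), round(factor, 2))
```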


4.11. (a) The least squares objective function is $\sum_{i=1}^n (Y_i - b_1 X_i)^2$. Differentiating with respect to $b_1$ yields

$$\frac{\partial \sum_{i=1}^n (Y_i - b_1 X_i)^2}{\partial b_1} = -2\sum_{i=1}^n X_i (Y_i - b_1 X_i).$$

Setting this to zero and solving for the least squares estimator yields

$$\hat\beta_1 = \frac{\sum_{i=1}^n X_i Y_i}{\sum_{i=1}^n X_i^2}.$$

(b) Following the same steps in (a) yields

$$\hat\beta_1 = \frac{\sum_{i=1}^n X_i (Y_i - 4)}{\sum_{i=1}^n X_i^2}.$$
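A quick numerical check of the two estimators in 4.11: the sketch below computes each slope on a small made-up data set and confirms that the first-order condition is zero at the estimate. The data and function names are hypothetical.

```python
def slope_known_intercept(x, y, intercept=0.0):
    """Least squares slope when the intercept is fixed at a known value."""
    numerator = sum(xi * (yi - intercept) for xi, yi in zip(x, y))
    denominator = sum(xi ** 2 for xi in x)
    return numerator / denominator


def first_order_condition(b1, x, y, intercept=0.0):
    """Derivative of the sum of squared residuals with respect to b1."""
    return -2 * sum(xi * (yi - intercept - b1 * xi) for xi, yi in zip(x, y))


# Hypothetical data for illustration only.
x = [1.0, 2.0, 3.0]
y = [2.0, 3.0, 7.0]

b1_origin = slope_known_intercept(x, y)                 # part (a): intercept 0
b1_four = slope_known_intercept(x, y, intercept=4.0)    # part (b): intercept 4

print(b1_origin, first_order_condition(b1_origin, x, y))
print(b1_four, first_order_condition(b1_four, x, y, intercept=4.0))
```

With these numbers the two slopes are 29/14 and 5/14, and the derivative evaluates to zero (up to floating-point error) at each.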


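4.12. (a) Write

$$\begin{aligned}
ESS &= \sum_{i=1}^n (\hat{Y}_i - \bar{Y})^2 = \sum_{i=1}^n (\hat\beta_0 + \hat\beta_1 X_i - \bar{Y})^2 = \sum_{i=1}^n [\hat\beta_1 (X_i - \bar{X})]^2 \\
&= \hat\beta_1^2 \sum_{i=1}^n (X_i - \bar{X})^2 = \frac{\left[\sum_{i=1}^n (X_i - \bar{X})(Y_i - \bar{Y})\right]^2}{\sum_{i=1}^n (X_i - \bar{X})^2}.
\end{aligned}$$

This implies

$$\begin{aligned}
R^2 &= \frac{ESS}{\sum_{i=1}^n (Y_i - \bar{Y})^2} = \frac{\left[\sum_{i=1}^n (X_i - \bar{X})(Y_i - \bar{Y})\right]^2}{\sum_{i=1}^n (X_i - \bar{X})^2 \sum_{i=1}^n (Y_i - \bar{Y})^2} \\
&= \left[\frac{\frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})(Y_i - \bar{Y})}{\sqrt{\frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})^2}\,\sqrt{\frac{1}{n-1}\sum_{i=1}^n (Y_i - \bar{Y})^2}}\right]^2 \\
&= \left[\frac{s_{XY}}{s_X s_Y}\right]^2 = r_{XY}^2.
\end{aligned}$$

(b) This follows from part (a) because $r_{XY} = r_{YX}$.

(c) Because $r_{XY} = \frac{s_{XY}}{s_X s_Y}$,

$$r_{XY}\frac{s_Y}{s_X} = \frac{s_{XY}}{s_X^2} = \frac{\frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})(Y_i - \bar{Y})}{\frac{1}{n-1}\sum_{i=1}^n (X_i - \bar{X})^2} = \frac{\sum_{i=1}^n (X_i - \bar{X})(Y_i - \bar{Y})}{\sum_{i=1}^n (X_i - \bar{X})^2} = \hat\beta_1.$$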
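Both identities in 4.12 can be verified on any data set with variation in X and Y. Below is a minimal sketch on made-up numbers, computing $R^2$ from ESS/TSS and comparing it with the squared sample correlation, then comparing the OLS slope with $r_{XY}\,s_Y/s_X$. All data values are hypothetical.

```python
from math import sqrt

# Hypothetical data for illustration only.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 6.0]
n = len(x)

x_bar = sum(x) / n
y_bar = sum(y) / n

# Sample covariance, standard deviations, and correlation.
s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)
s_x = sqrt(sum((xi - x_bar) ** 2 for xi in x) / (n - 1))
s_y = sqrt(sum((yi - y_bar) ** 2 for yi in y) / (n - 1))
r_xy = s_xy / (s_x * s_y)

# OLS slope and intercept from the usual formulas.
b1 = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
      / sum((xi - x_bar) ** 2 for xi in x))
b0 = y_bar - b1 * x_bar

# R^2 as explained sum of squares over total sum of squares.
fitted = [b0 + b1 * xi for xi in x]
ess = sum((fi - y_bar) ** 2 for fi in fitted)
tss = sum((yi - y_bar) ** 2 for yi in y)
r_squared = ess / tss

print(abs(r_squared - r_xy ** 2) < 1e-12)       # part (a)
print(abs(b1 - r_xy * s_y / s_x) < 1e-12)       # part (c)
```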


4.13. The answer follows the derivations in Appendix 4.3, "Large-Sample Normal Distribution of the OLS Estimator." In particular, the expression for $v_i$ is now $v_i = (X_i - \mu_X)\kappa u_i$, so that $\operatorname{var}(v_i) = \kappa^2 \operatorname{var}[(X_i - \mu_X)u_i]$, and the term $\kappa^2$ carries through the rest of the calculations.


4.14. Because $\hat\beta_0 = \bar{Y} - \hat\beta_1 \bar{X}$, $\bar{Y} = \hat\beta_0 + \hat\beta_1 \bar{X}$. The sample regression line is $y = \hat\beta_0 + \hat\beta_1 x$, so that the sample regression line passes through $(\bar{X}, \bar{Y})$.
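The property in 4.14 holds for any sample in which the slope is defined. A short sketch on made-up data: fit the line, evaluate it at the sample mean of X, and compare with the sample mean of Y. The data values are hypothetical.

```python
# Hypothetical data for illustration only.
x = [1.0, 2.0, 4.0, 7.0]
y = [3.0, 5.0, 4.0, 10.0]
n = len(x)

x_bar = sum(x) / n
y_bar = sum(y) / n

# OLS slope and intercept.
b1 = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
      / sum((xi - x_bar) ** 2 for xi in x))
b0 = y_bar - b1 * x_bar

# Value of the fitted line at the sample mean of X.
line_at_x_bar = b0 + b1 * x_bar

print(abs(line_at_x_bar - y_bar) < 1e-12)
```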


4.15

(a.i) Because $(Y^{oos}, X^{oos})$ are from the same population as the in-sample observations, $E(Y^{oos}|X^{oos} = x^{oos}) = E(Y|X = x^{oos}) = \beta_0 + \beta_1 x^{oos}$.

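(a.ii)

$$\begin{aligned}
E(\hat{Y}^{oos} \mid X^{oos} = x^{oos}) &= E(\hat\beta_0 + \hat\beta_1 x^{oos} \mid X^{oos} = x^{oos}) \\
&= E(\hat\beta_0 + \hat\beta_1 x^{oos}) = E(\hat\beta_0) + E(\hat\beta_1)x^{oos} \\
&= \beta_0 + \beta_1 x^{oos},
\end{aligned}$$

where the second line follows because $X^{oos}$ is independent of $(\hat\beta_0, \hat\beta_1)$, which depend only on the in-sample observations.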

(a.iii) From Appendix 4.4:

$$\hat{u}^{oos} = u^{oos} - \left[(\hat\beta_0 - \beta_0) + (\hat\beta_1 - \beta_1)x^{oos}\right]$$

and the result follows by noting that the two terms on the right-hand side of the equation are independent, and therefore uncorrelated with each other.

(b.i) No. Because $(Y^{oos}, X^{oos})$ are drawn from a distribution different from that of the sample data, in general $E(Y^{oos}|X^{oos} = x^{oos}) \ne E(Y|X = x^{oos}) = \beta_0 + \beta_1 x^{oos}$.

(b.ii) Yes. Same reason as (a.ii).
