How We Really Compute Pagerank

Mining of Massive Datasets

Leskovec, Rajaraman, and Ullman

Stanford University

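Key step is matrix-vector multiplication

$r^{new} = A \cdot r^{old}$

Easy if we have enough main memory to hold $A$, $r^{old}$, $r^{new}$

Say N = 1 billion pages

We need 4 bytes for each entry (say)

2 billion entries for vectors, approx 8GB

Matrix A has $N^2$ entries

$10^{18}$ is a large number!

$$A = \beta \cdot M + (1-\beta)\left[\frac{1}{N}\right]_{N \times N}$$

$$A = 0.8 \begin{bmatrix} 1/2 & 1/2 & 0 \\ 1/2 & 0 & 0 \\ 0 & 1/2 & 1 \end{bmatrix} + 0.2 \begin{bmatrix} 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \end{bmatrix} = \begin{bmatrix} 7/15 & 7/15 & 1/15 \\ 7/15 & 1/15 & 1/15 \\ 1/15 & 7/15 & 13/15 \end{bmatrix}$$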
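The arithmetic on this slide can be checked with a short sketch. It uses exact fractions to rebuild the 3-page example matrix and repeats the memory estimates; the function name `google_matrix` and the variable names are illustrative assumptions, not part of the slides.

```python
from fractions import Fraction

def google_matrix(M, beta):
    """Return A = beta*M + (1-beta)*[1/N]_{NxN} using exact fractions."""
    n = len(M)
    teleport = (1 - beta) / n  # the (1-beta)/N term added to every entry
    return [[beta * M[i][j] + teleport for j in range(n)] for i in range(n)]

half = Fraction(1, 2)
M = [[half, half, 0],
     [half, 0, 0],
     [0, half, 1]]
A = google_matrix(M, Fraction(4, 5))  # beta = 0.8 as in the example

# Memory estimates for N = 1 billion pages at 4 bytes per entry.
N = 10**9
vector_bytes = 2 * N * 4   # r_old and r_new together, about 8 GB
dense_entries = N * N      # entries of the dense matrix A
```

Here `A` reproduces the matrix with entries 7/15, 1/15 and 13/15, and `dense_entries` is the 10^18 figure that rules out storing A explicitly.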

J. Leskovec, A. Rajaraman, J. Ullman (Stanford University) Mining of Massive Datasets 49

Suppose there are N pages

Consider page j, with $d_j$ out-links

We have $M_{ij} = 1/d_j$ when $j \to i$

and $M_{ij} = 0$ otherwise

The random teleport is equivalent to:

Adding a teleport link from j to every other page and setting transition probability to $(1-\beta)/N$

Reducing the probability of following each out-link from $1/d_j$ to $\beta/d_j$

Equivalent: Tax each page a fraction $(1-\beta)$ of its score and redistribute evenly
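As a rough illustration of the teleport construction, the sketch below builds one column of A for a single page j: every page receives the teleport probability, and each out-link of j receives the reduced link-following probability on top. The helper name `teleport_column` is hypothetical, and the page is assumed to have at least one out-link.

```python
from fractions import Fraction

def teleport_column(out_links, n, beta):
    """Column j of A: where a surfer currently on page j goes next.

    out_links: destination page indices of page j (assumed non-empty).
    """
    column = [(1 - beta) / n] * n               # teleport to every page
    for i in out_links:
        column[i] += beta / len(out_links)      # out-link followed with beta/d_j
    return column

# Page 0 of the 3-page example links to pages 0 and 1.
col = teleport_column([0, 1], 3, Fraction(4, 5))
```

The column still sums to 1, so the taxed-and-redistributed transition probabilities remain a valid distribution.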


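$r = A \cdot r$, where $A_{ji} = \beta M_{ji} + \frac{1-\beta}{N}$

$$r_j = \sum_{i=1}^{N} A_{ji} \cdot r_i$$

$$r_j = \sum_{i=1}^{N} \left[\beta M_{ji} + \frac{1-\beta}{N}\right] \cdot r_i$$

$$= \sum_{i=1}^{N} \beta M_{ji} \cdot r_i + \frac{1-\beta}{N} \sum_{i=1}^{N} r_i$$

$$= \sum_{i=1}^{N} \beta M_{ji} \cdot r_i + \frac{1-\beta}{N} \quad \text{since } \sum_{i=1}^{N} r_i = 1$$

So we get: $r = \beta M \cdot r + \left[\frac{1-\beta}{N}\right]_N$

Note: Here we assumed M has no dead-ends.

$[x]_N$ … a vector of length N with all entries x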
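The rearrangement can be sanity-checked numerically. The sketch below, with illustrative names only, multiplies the dense matrix A by a vector r that sums to 1 and compares the result with the sparse form, using the 3-page example (which has no dead-ends) and exact fractions.

```python
from fractions import Fraction

def matvec(X, v):
    """Plain matrix-vector product for a list-of-rows matrix."""
    return [sum(x * y for x, y in zip(row, v)) for row in X]

beta = Fraction(4, 5)
half = Fraction(1, 2)
M = [[half, half, 0],
     [half, 0, 0],
     [0, half, 1]]
n = len(M)

# Dense form: A_ji = beta * M_ji + (1 - beta) / N
A = [[beta * M[j][i] + (1 - beta) / n for i in range(n)] for j in range(n)]

r = [Fraction(1, 2), Fraction(1, 3), Fraction(1, 6)]  # any vector summing to 1

dense = matvec(A, r)                                        # A . r
sparse = [beta * x + (1 - beta) / n for x in matvec(M, r)]  # beta M . r + (1-beta)/N
```

Both `dense` and `sparse` hold the same vector; the equality relies on the entries of r summing to 1, exactly as in the derivation.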


We just rearranged the PageRank equation

$$r = \beta M \cdot r + \left[\frac{1-\beta}{N}\right]_N$$

where $[(1-\beta)/N]_N$ is a vector with all N entries $(1-\beta)/N$

M is a sparse matrix! (with no dead-ends)

10 links per node, approx 10N entries

So in each iteration, we need to:

Compute $r^{new} = \beta M \cdot r^{old}$

Add a constant value $(1-\beta)/N$ to each entry in $r^{new}$

Note if M contains dead-ends then $\sum_j r^{new}_j < 1$ and we also have to renormalize $r^{new}$ so that it sums to 1
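One iteration of this scheme might look like the sketch below. It stores M only as out-link lists (one entry per link), adds the constant to every entry, and then renormalizes. The function name `pagerank_step` and the dictionary representation of the graph are assumptions made for illustration.

```python
def pagerank_step(out_links, r_old, beta):
    """One iteration: r_new = beta * M * r_old + (1 - beta)/N, then renormalize.

    out_links: dict mapping each page index to the list of pages it links to.
    """
    n = len(r_old)
    r_new = [0.0] * n
    for j, dests in out_links.items():
        for i in dests:
            # Page j passes beta * r_old[j] / d_j along each of its out-links.
            r_new[i] += beta * r_old[j] / len(dests)
    r_new = [x + (1 - beta) / n for x in r_new]   # add the constant teleport term
    total = sum(r_new)                            # below 1 only if dead-ends exist
    return [x / total for x in r_new]

# The 3-page example: 0 -> {0, 1}, 1 -> {0, 2}, 2 -> {2}
graph = {0: [0, 1], 1: [0, 2], 2: [2]}
r1 = pagerank_step(graph, [1 / 3, 1 / 3, 1 / 3], 0.8)
```

Only the out-link lists are held in memory, so storage grows with the number of links rather than with N squared.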


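Input: Graph $G$ and parameter $\beta$

Directed graph $G$ with spider traps and dead ends

Parameter $\beta$

Output: PageRank vector $r$

Set: $r^{(0)}_j = \frac{1}{N}$, $t = 1$

do:

$\forall j$: $r'^{(t)}_j = \sum_{i \to j} \beta \, \frac{r^{(t-1)}_i}{d_i}$

$r'^{(t)}_j = 0$ if in-deg. of $j$ is 0

Now re-insert the leaked PageRank:

$\forall j$: $r^{(t)}_j = r'^{(t)}_j + \frac{1-S}{N}$ where: $S = \sum_j r'^{(t)}_j$

$t = t + 1$

while $\sum_j \left| r^{(t)}_j - r^{(t-1)}_j \right| > \varepsilon$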
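The complete loop can be sketched in a few lines of Python. This is one possible reading of the algorithm above, not a reference implementation: the graph is assumed to be a dictionary of out-link lists, and the default values for `beta` and `eps` as well as the `max_iter` safety cap are assumptions that do not appear in the slides.

```python
def pagerank(out_links, n, beta=0.8, eps=1e-10, max_iter=1000):
    """Power iteration with leaked PageRank re-inserted evenly.

    out_links: dict mapping page index -> list of destination pages.
    n: total number of pages (pages missing from out_links are dead ends).
    """
    r = [1.0 / n] * n                      # r_j^(0) = 1/N
    for _ in range(max_iter):              # max_iter is an added safety cap
        r_prime = [0.0] * n                # stays 0 for pages with in-degree 0
        for i, dests in out_links.items():
            for j in dests:
                r_prime[j] += beta * r[i] / len(dests)
        leaked = 1.0 - sum(r_prime)        # 1 - S: teleport mass plus dead-end loss
        r_next = [x + leaked / n for x in r_prime]
        diff = sum(abs(a - b) for a, b in zip(r_next, r))
        r = r_next
        if diff <= eps:                    # stop once the L1 change is small
            break
    return r

cycle = pagerank({0: [1], 1: [2], 2: [0]}, 3)       # symmetric 3-cycle
with_dead_end = pagerank({0: [1], 1: [0, 2]}, 3)    # page 2 has no out-links
```

Because the leaked mass `1 - S` is spread evenly over all pages, the vector keeps summing to 1 even when the graph contains dead ends, so no separate renormalization step is needed.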

J. Leskovec, A. Rajaraman, J. Ullman (Stanford University) Mining of Massive Datasets 53

You might also like

- Creating A Company Culture For Security-Design DocumentsDocument2 pagesCreating A Company Culture For Security-Design DocumentsOnyebuchiNo ratings yet

- MM Super User ManualDocument31 pagesMM Super User ManualXavier Francisco Bajaña PozoNo ratings yet

- Solution Manual For Introduction To Nonlinear Finite Element Analysis - Nam-Ho KimDocument10 pagesSolution Manual For Introduction To Nonlinear Finite Element Analysis - Nam-Ho KimSalehNo ratings yet

- Why Teleports Solve The ProblemDocument8 pagesWhy Teleports Solve The Problemjustin courtoisNo ratings yet

- Treaps, Verification, and String MatchingDocument6 pagesTreaps, Verification, and String MatchingGeanina BalanNo ratings yet

- SE-Comps SEM4 AOA-CBCGS DEC18 SOLUTIONDocument15 pagesSE-Comps SEM4 AOA-CBCGS DEC18 SOLUTIONSagar SaklaniNo ratings yet

- How Works: M. Ram Murty, FRSC Queen's Research Chair Queen's UniversityDocument29 pagesHow Works: M. Ram Murty, FRSC Queen's Research Chair Queen's UniversityAakash AkkyNo ratings yet

- Pagerank Power IterationDocument6 pagesPagerank Power Iterationjustin courtoisNo ratings yet

- Lab PDFDocument11 pagesLab PDFaliNo ratings yet

- Linear Algebra and Applications: Benjamin RechtDocument42 pagesLinear Algebra and Applications: Benjamin RechtKarzan Kaka SwrNo ratings yet

- 872-892 Chapter 11Document26 pages872-892 Chapter 11kahinaNo ratings yet

- E9 205 - Machine Learning For Signal Processing: Practice Midterm ExamDocument4 pagesE9 205 - Machine Learning For Signal Processing: Practice Midterm Examrishi guptaNo ratings yet

- 7.0 More MLEDocument27 pages7.0 More MLERakshay PawarNo ratings yet

- Massachusetts Institute of Technology: 6.867 Machine Learning, Fall 2006 Problem Set 3: SolutionsDocument3 pagesMassachusetts Institute of Technology: 6.867 Machine Learning, Fall 2006 Problem Set 3: Solutionsjuanagallardo01No ratings yet

- Dspfoehu Matlab02 Thediscrete Timefourieranalysis 170324210057 PDFDocument50 pagesDspfoehu Matlab02 Thediscrete Timefourieranalysis 170324210057 PDFBến trần vănNo ratings yet

- Lab 1RTDocument8 pagesLab 1RTMatlali SeutloaliNo ratings yet

- 18CSE397T - Computational Data Analysis Unit - 3: Session - 8: SLO - 2Document4 pages18CSE397T - Computational Data Analysis Unit - 3: Session - 8: SLO - 2HoShang PAtelNo ratings yet

- The Fast Convergence of Incremental PCADocument17 pagesThe Fast Convergence of Incremental PCAQuarktopNo ratings yet

- Design & Analysis of Algorithms: Aunsia Khan Week 04Document23 pagesDesign & Analysis of Algorithms: Aunsia Khan Week 04ASIMNo ratings yet

- FinalDocument5 pagesFinalBeyond WuNo ratings yet

- Computer Programming and Application: 3 Interpolation and Curve FittingDocument43 pagesComputer Programming and Application: 3 Interpolation and Curve Fittingvia ardhaniNo ratings yet

- CSE-1819-Test2 AnswersdjbdsjsdDocument5 pagesCSE-1819-Test2 Answersdjbdsjsdnsy42896No ratings yet

- Laplace Transform PDFDocument34 pagesLaplace Transform PDFمنتظر احمد حبيترNo ratings yet

- X (X X - . - . - . - . - X) : Neuro-Fuzzy Comp. - Ch. 3 May 24, 2005Document20 pagesX (X X - . - . - . - . - X) : Neuro-Fuzzy Comp. - Ch. 3 May 24, 2005Oluwafemi DagunduroNo ratings yet

- EEE 3104labsheet Expt 03Document7 pagesEEE 3104labsheet Expt 03Sunvir Rahaman SumonNo ratings yet

- Chapter 3 Part 1 (Laplace Transform)Document60 pagesChapter 3 Part 1 (Laplace Transform)TJ HereNo ratings yet

- Ch-5 SolutionDocument75 pagesCh-5 SolutionIdrissMahdi OkiyeNo ratings yet

- Unit XIV - Session 35Document23 pagesUnit XIV - Session 35prasadNo ratings yet

- Multi Layer PerceptronDocument62 pagesMulti Layer PerceptronKishan Kumar GuptaNo ratings yet

- Digital Signals Processing ﺔﯿﻤﻗﺮﻟا تارﺎﺷﻻا ﺔﺠﻟﺎﻌﻣ: Lectures (Nine and Ten)Document14 pagesDigital Signals Processing ﺔﯿﻤﻗﺮﻟا تارﺎﺷﻻا ﺔﺠﻟﺎﻌﻣ: Lectures (Nine and Ten)AwadhNo ratings yet

- Digital Signals Processing ﺔﯿﻤﻗﺮﻟا تارﺎﺷﻻا ﺔﺠﻟﺎﻌﻣ: Lectures (Nine and Ten)Document14 pagesDigital Signals Processing ﺔﯿﻤﻗﺮﻟا تارﺎﺷﻻا ﺔﺠﻟﺎﻌﻣ: Lectures (Nine and Ten)AwadhNo ratings yet

- Rls PaperDocument12 pagesRls Paperaneetachristo94No ratings yet

- 01 Interpolation 1Document14 pages01 Interpolation 1kevincarloscanalesNo ratings yet

- Lecture 12Document21 pagesLecture 12ValentinNo ratings yet

- Amer Math Monthly 123 1 97Document10 pagesAmer Math Monthly 123 1 97Vlogs EGMNo ratings yet

- Cramer RaoDocument11 pagesCramer Raoshahilshah1919No ratings yet

- Calculation of Lyapunov Exponents in Time-Delayed SystemsDocument8 pagesCalculation of Lyapunov Exponents in Time-Delayed Systemscsernak2No ratings yet

- This Homework Is Due On Wednesday, February 26, 2020, at 11:59PM. Self-Grades Are Due On Monday, March 2, 2020, at 11:59PMDocument18 pagesThis Homework Is Due On Wednesday, February 26, 2020, at 11:59PM. Self-Grades Are Due On Monday, March 2, 2020, at 11:59PMBella ZionNo ratings yet

- Week1 PDFDocument22 pagesWeek1 PDFJohana Coen JanssenNo ratings yet

- Ebook Course in Probability 1St Edition Weiss Solutions Manual Full Chapter PDFDocument63 pagesEbook Course in Probability 1St Edition Weiss Solutions Manual Full Chapter PDFAnthonyWilsonecna100% (7)

- Boltzmann's Canonical Distribution LawDocument6 pagesBoltzmann's Canonical Distribution Lawssc.zainahmed.2003No ratings yet

- Statistics: Assignment 6Document6 pagesStatistics: Assignment 6Houcem Bn SalemNo ratings yet

- 小考20181026 (第一次期中考)Document8 pages小考20181026 (第一次期中考)940984326No ratings yet

- Mathematical Association of AmericaDocument9 pagesMathematical Association of AmericathonguyenNo ratings yet

- 2.electric Potential and Capacitance-12th Phy-CbseDocument31 pages2.electric Potential and Capacitance-12th Phy-CbseChris Thereise JoshiNo ratings yet

- Algebraic Methods in Data Science: Lesson 3: Dan GarberDocument14 pagesAlgebraic Methods in Data Science: Lesson 3: Dan Garberrany khirbawyNo ratings yet

- Antenna Arrays: 1 Array Factor For N ElementsDocument18 pagesAntenna Arrays: 1 Array Factor For N ElementsZakaria MounirNo ratings yet

- MIT6 042JS10 Lec39 SolDocument4 pagesMIT6 042JS10 Lec39 SolRaihan Kabir RifatNo ratings yet

- The World of Eigenvalues-Eigenfunctions: Eigenfunction EigenvalueDocument9 pagesThe World of Eigenvalues-Eigenfunctions: Eigenfunction EigenvalueRaj KumarNo ratings yet

- ML AssignmentDocument17 pagesML Assignmentveyeda7265No ratings yet

- L-21 M (X) - G-1 ModelDocument8 pagesL-21 M (X) - G-1 ModelAnisha GargNo ratings yet

- Fourier Analysis and Spectral Representation of SignalsDocument16 pagesFourier Analysis and Spectral Representation of SignalsZahid DarNo ratings yet

- Lecture 14: Principal Component Analysis: Computing The Principal ComponentsDocument6 pagesLecture 14: Principal Component Analysis: Computing The Principal ComponentsShebinNo ratings yet

- Ps 5 SolDocument5 pagesPs 5 SolRandom SwagNo ratings yet

- EEE/INSTR F434 Digital Signal Processing Test-1 Answers: N U Na N XDocument4 pagesEEE/INSTR F434 Digital Signal Processing Test-1 Answers: N U Na N XAMAL. A ANo ratings yet

- Simulations ExSh8Document2 pagesSimulations ExSh8deepaktab31No ratings yet

- Massachusetts Institute of TechnologyDocument3 pagesMassachusetts Institute of TechnologyManikanta Reddy ManuNo ratings yet

- 3: Divide and Conquer: Fourier Transform: PolynomialDocument8 pages3: Divide and Conquer: Fourier Transform: PolynomialIrmak ErkolNo ratings yet

- 3: Divide and Conquer: Fourier Transform: PolynomialDocument8 pages3: Divide and Conquer: Fourier Transform: PolynomialCajun SefNo ratings yet

- 21 JanDocument5 pages21 JanLONG XINNo ratings yet

- NLAFull Notes 22Document59 pagesNLAFull Notes 22forspamreceivalNo ratings yet

- Application To Meassuring Proximity in GraphsDocument8 pagesApplication To Meassuring Proximity in Graphsjustin courtoisNo ratings yet

- Computing Pagerank On Big GraphsDocument9 pagesComputing Pagerank On Big Graphsjustin courtoisNo ratings yet

- Pagerank The Google FormulationDocument9 pagesPagerank The Google Formulationjustin courtoisNo ratings yet

- Why Teleports Solve The ProblemDocument8 pagesWhy Teleports Solve The Problemjustin courtoisNo ratings yet

- Pagerank Power IterationDocument6 pagesPagerank Power Iterationjustin courtoisNo ratings yet

- Pagerank The Flow FormulationDocument6 pagesPagerank The Flow Formulationjustin courtoisNo ratings yet

- Pagerank The Matrix FormulationDocument4 pagesPagerank The Matrix Formulationjustin courtoisNo ratings yet

- Link Analysis and PagerankDocument14 pagesLink Analysis and Pagerankjustin courtoisNo ratings yet

- Differential EquationsDocument187 pagesDifferential EquationsMihai Alexandru Iulian100% (2)

- Writing Practice Problem Page Worksheet PDFDocument2 pagesWriting Practice Problem Page Worksheet PDFSC Priyadarshani de Silva0% (1)

- Starting Analysis of Induction Motor by EtapDocument6 pagesStarting Analysis of Induction Motor by Etapgusgif50% (2)

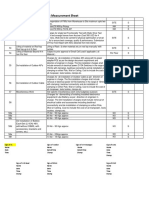

- Joint Measurement Sheet: Name of CollegeDocument1 pageJoint Measurement Sheet: Name of CollegeVenu GopalNo ratings yet

- 325632Document21 pages325632daniel cantosNo ratings yet

- Release Notes Amplifier Xe LinuxDocument33 pagesRelease Notes Amplifier Xe LinuxMuhammad NurAriff Abdul NasirNo ratings yet

- 6/TCAF: Theatre - Coursework Authentication FormDocument2 pages6/TCAF: Theatre - Coursework Authentication Formhala khouryNo ratings yet

- GenericDocument7 pagesGenericPablo Antu Manque RodriguezNo ratings yet

- QXAS ManualDocument214 pagesQXAS ManualJosu Fri WcrystNo ratings yet

- You Are Here: 1. 2. 3. Government Degree College Khairatabad HyderabadDocument12 pagesYou Are Here: 1. 2. 3. Government Degree College Khairatabad HyderabadAnonymous d8d8k1LiyNo ratings yet

- SAPIADocument2 pagesSAPIAJulio SweeneyNo ratings yet

- Business Continuity and Information SecurityDocument14 pagesBusiness Continuity and Information SecurityGeorge ChavulaNo ratings yet

- Panasonic TC 20kl03a 29kl03a mx5zbDocument48 pagesPanasonic TC 20kl03a 29kl03a mx5zbAriel SantosNo ratings yet

- Bus Writing Linc7Document38 pagesBus Writing Linc7manuNo ratings yet

- Acom Ethernet Interface Unit (EIU)Document21 pagesAcom Ethernet Interface Unit (EIU)Sohaib Omer SalihNo ratings yet

- Boa Ventura A Corrida Do Ouro No Brasil 1697 1810 Ebook Kindle - Aapq PDFDocument2 pagesBoa Ventura A Corrida Do Ouro No Brasil 1697 1810 Ebook Kindle - Aapq PDFAbenisNo ratings yet

- Sample Quality ManualDocument49 pagesSample Quality ManualNigel Lim100% (1)

- orDocument9 pagesorZain ShahNo ratings yet

- Lecture Notes: Load BalancingDocument6 pagesLecture Notes: Load BalancingTESFAHUNNo ratings yet

- SDSHCDocument18 pagesSDSHCMauro RinconNo ratings yet

- PrereleaseAgmtLicense Main Standard en 20230911Document5 pagesPrereleaseAgmtLicense Main Standard en 20230911Mich DezaNo ratings yet

- Important QuestionDesign of Power ConvertersDocument13 pagesImportant QuestionDesign of Power ConvertersSandhiya KNo ratings yet

- BAYESIANDocument8 pagesBAYESIANGhubaida HassaniNo ratings yet

- Config Cs ServerDocument3 pagesConfig Cs ServerXergexNo ratings yet

- Nitin Aggarwal - LinkedIn PDFDocument5 pagesNitin Aggarwal - LinkedIn PDFnams tatNo ratings yet

- Deployment of Kemp Loadmaster On Microsoft Azure: Lab GuideDocument48 pagesDeployment of Kemp Loadmaster On Microsoft Azure: Lab GuideVilla Di CalabriNo ratings yet

- Packet Tracer Skills Based AssessmentDocument15 pagesPacket Tracer Skills Based AssessmentBrave GS SplendeurNo ratings yet