
PROCEEDINGS OF SPIE

SPIEDigitalLibrary.org/conference-proceedings-of-spie

Convolutional neural network based on feature enhancement and attention mechanism for Alzheimer's disease prediction using MRI images

Fei Liu, Huabin Wang, Yonglin Chen, Yu Quan, Liang Tao

Fei Liu, Huabin Wang, Yonglin Chen, Yu Quan, Liang Tao, "Convolutional neural network based on feature enhancement and attention mechanism for Alzheimer's disease prediction using MRI images," Proc. SPIE 12083, Thirteenth International Conference on Graphics and Image Processing (ICGIP 2021), 120830X (16 February 2022); doi: 10.1117/12.2623580

Event: Thirteenth International Conference on Graphics and Image Processing (ICGIP 2021), 2021, Kunming, China

Downloaded From: https://www.spiedigitallibrary.org/conference-proceedings-of-spie on 23 Feb 2023 Terms of Use: https://www.spiedigitallibrary.org/terms-of-use

Convolutional Neural Network based on Feature Enhancement and Attention Mechanism for Alzheimer's Disease Prediction Using MRI Images

Fei Liu, Huabin Wang*, Yonglin Chen, Yu Quan, Liang Tao

Anhui Provincial Key Laboratory of Multimodal Cognitive Computation, School of Computer Science and Technology, Anhui University, Hefei, China

ABSTRACT

Nuclear magnetic resonance imaging (MRI) is the mainstream modality for predicting Alzheimer's disease, but traditional machine learning methods achieve low accuracy when predicting Alzheimer's disease from MRI. Although a convolutional neural network (CNN) can extract image features automatically, convolution operations focus only on local regions and lose global connections. An attention mechanism can attend to local and global information at the same time, improving model performance by strengthening key information and suppressing irrelevant information. Therefore, this paper constructs a deep CNN based on multiple attention mechanisms for Alzheimer's disease prediction. First, cyclic convolution is applied to the MRI image to enhance the feature information of the original image, improving prediction accuracy and stability. Second, multiple attention mechanisms are introduced to recalibrate features and adaptively learn feature weights, identifying brain regions that are particularly relevant for disease diagnosis. Finally, an improved VGG model is proposed as the backbone network: max pooling is replaced with average pooling to retain more image information, and the number of neurons in the fully connected layers is reduced to improve network efficiency and suppress over-fitting. Experimental results show that the proposed method achieves an Alzheimer's disease prediction accuracy, sensitivity and specificity of 99.8%, 99.9% and 99.8%, respectively, outperforming existing mainstream methods.

Keywords: MRI, Alzheimer's disease, Convolutional Neural Network, Attention mechanism, Cyclic convolution

1. INTRODUCTION

Alzheimer's disease (AD) is a neurodegenerative disease. In patients with AD, neurons in the brain regions responsible for memory, thinking and learning are destroyed, leading to dementia. AD causes considerable harm: it seriously reduces patients' quality of life, brings depression and anxiety, and imposes a huge economic burden on patients and their families. It has become the fourth leading killer threatening human health, after cardiovascular disease, cancer and stroke. According to the World Alzheimer Report, there are more than 50 million AD patients worldwide, the number is expected to reach 150 million by 2050, and the cost of caring for these patients is higher than the combined cost of those three diseases.

*Corresponding author: Huabin Wang (wanghuabin@ahu.edu.cn)

Thirteenth International Conference on Graphics and Image Processing (ICGIP 2021),

edited by Liang Xiao, Dan Xu, Proc. of SPIE Vol. 12083, 120830X

© 2022 SPIE · 0277-786X · doi: 10.1117/12.2623580

Proc. of SPIE Vol. 12083 120830X-1


Clinicians use neuropsychological cognitive tests (e.g. the Mini-Mental State Examination (MMSE) and logical memory (LM) tests) and neuroimaging (e.g. functional magnetic resonance imaging (fMRI), MRI and positron emission tomography (PET)) to assist diagnosis. Among these, MRI is one of the most popular neuroimaging techniques in brain science. It can reveal texture and morphological changes in the brain and is of great significance for understanding brain function and neuropsychiatric diseases. Specifically, MRI scans show the degree of cortical injury in the occipital, parietal, prefrontal and temporal lobes, as well as the extent of focal lesions and gray matter loss [1]. Studies have shown that cortical atrophy, reduced hippocampal volume and ventricular enlargement are associated with dementia and cognitive decline [2].

Machine learning algorithms have been widely used to construct classifiers or predictors for various brain diseases. Input features can include gray matter measurements such as cortical thickness, changes in white matter microstructure, hippocampal volume, entorhinal cortex thickness, etc. Machine learning algorithms such as support vector machines (SVM) and random forests have proven useful for classifying AD and mild cognitive impairment (MCI) [3-5]. In recent years, deep learning methods have achieved good results in image recognition and object segmentation. A CNN scans the entire image with a series of convolution kernels (filters) whose weights are learned and updated during training, and combining different kernels allows it to detect feature patterns associated with specific tasks and datasets. CNNs are now widely used for AD prediction and outperform traditional machine learning algorithms. For example, Abdulazeem et al. [10] proposed an end-to-end CNN-based classification framework that achieved 97.5% accuracy in a multi-class classification experiment on the ADNI dataset. Jiang et al. [11] used a CNN to extract structural MRI features, applied a feature selection strategy to eliminate redundant features, and then used an SVM to distinguish early MCI (EMCI) from normal controls (CN), obtaining 89.4% classification accuracy. However, deep learning methods have some limitations in AD prediction: (1) MRI images contain many useless features, and a CNN cannot adaptively identify the key ones; (2) convolution focuses only on local feature extraction, so as the network deepens, disease-related pathological information is lost, along with the global association information in the MRI image. Therefore, an Alzheimer's disease prediction algorithm based on multiple attention mechanisms is proposed to solve these problems. Experimental results show that the performance indicators of the proposed algorithm are superior to those of most mainstream algorithms.

The main contributions of this paper are as follows:

(1) A cyclic convolution layer is used to strengthen the key features of the original image, improving prediction accuracy and stability;

(2) Multiple attention mechanisms are introduced to recalibrate features during feature extraction and to adaptively learn feature weights, identifying brain regions that are particularly relevant for disease diagnosis;

(3) The VGG model is improved: the pooling layers are adjusted to preserve more image feature information, and the number of neurons in the fully connected layers is reduced to accelerate training.

2. RELATED WORK

With the development of deep learning, more and more researchers use deep convolutional models to predict AD. These models are more effective than earlier machine learning models because convolutional networks can capture more subtle features. Abrol et al. [12] used improved deep residual neural networks to predict progression from MCI to AD. The model was first trained on AD and CN individuals and then used transfer learning to make predictions for MCI subjects. Their research showed that three residual blocks work best and that adding more residual blocks does not improve prediction. Venugopalan et al. [7] analyzed MRI, genetic (single nucleotide polymorphism) and clinical test data of patients. They used stacked denoising autoencoders to extract features from the clinical and genetic data and a CNN for AD prediction; the results showed that integrating multimodal data outperforms single-modality models in accuracy, recall and average F1-score. Sathiyamoorthi et al. [9] used a two-dimensional adaptive bilateral filter (2D-ABF) algorithm to restore the image and an adaptive histogram adjustment (AHA) algorithm to improve image brightness and contrast, and segmented regions of interest in Alzheimer's disease using the adaptive average displacement correction expectation maximization (AMS-MEM) algorithm. A second-order two-dimensional gray level co-occurrence matrix (2D-GLCM) was then used to compute features, and finally the disease images and their stages were classified by deep learning.

In recent years, deep learning has been combined with visual attention mechanisms: a mask is used to learn an additional layer of weights that identifies the key features in an image, so that the deep neural network can focus on the regions that need attention. An attention mechanism uses relevant features to learn a weight distribution and then applies the learned weights to the features to further extract relevant knowledge. Jaderberg et al. [14] proposed the spatial transformer network (STN), whose spatial transformer module maps the spatial information of the original image into another space in order to extract key information. SENet [22] aggregates global information of the image at the feature-channel level and uses this global context to recalibrate the different channels. Woo et al. [16] proposed the convolutional block attention module (CBAM), which divides attention into two separate parts, a channel attention module and a spatial attention module. CBAM saves parameters and computation, and can be integrated into existing network architectures as a plug-and-play module. Wang et al. [17] proposed the Non-local Network, which uses self-attention to capture long-range dependencies. For each query point, the pairwise relationships between that point and all points are computed to obtain attention weights; the features of all points are then aggregated by a weighted sum to obtain the global feature associated with the query point, and finally this global feature is added to the feature of the query point.

Inspired by attention mechanisms, this paper proposes an Alzheimer's disease prediction method based on multiple attention mechanisms.

3. METHODS

A CNN is used to extract disease-related feature information from MRI images to predict Alzheimer's disease. To capture more detailed information from MRI images, the max pooling layers of VGG are replaced with average pooling layers. At the same time, the number of neurons in the fully connected layers is reduced, which both reduces the number of parameters in the fully connected layers and suppresses over-fitting. In a cyclic convolution layer, the output of the convolution operation is added to the original image, enhancing the high-order features of the original image.
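The difference between the two pooling operators is easiest to see on a toy 4×4 patch. The plain-Python sketch below uses a hypothetical helper `pool2x2` (not the authors' code) to apply 2×2, stride-2 pooling: max pooling keeps only the strongest response in each window, whereas average pooling lets every pixel contribute, which is the property the text cites for replacing max pooling.

```python
def pool2x2(image, mode="avg"):
    """Apply 2x2 pooling with stride 2 to a 2-D list of numbers.

    A trailing odd row or column is dropped (floor behaviour).
    """
    if mode not in ("avg", "max"):
        raise ValueError("mode must be 'avg' or 'max'")
    out = []
    for i in range(len(image) // 2):
        row = []
        for j in range(len(image[0]) // 2):
            window = [image[2 * i][2 * j], image[2 * i][2 * j + 1],
                      image[2 * i + 1][2 * j], image[2 * i + 1][2 * j + 1]]
            # Average: every pixel contributes; max: only the strongest survives.
            row.append(sum(window) / 4 if mode == "avg" else max(window))
        out.append(row)
    return out


patch = [[1, 0, 0, 0],
         [0, 0, 0, 9],
         [2, 2, 5, 5],
         [2, 2, 5, 5]]
print(pool2x2(patch, "max"))  # [[1, 9], [2, 5]]
print(pool2x2(patch, "avg"))  # [[0.25, 2.25], [2.0, 5.0]]
```

In the top-left window, max pooling reports 1 and discards the three zeros, while average pooling reports 0.25 and so still reflects that most of the window is empty.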

3.1. Selection of Research Subjects

This article selects MRI data samples from the Alzheimer's Disease Neuroimaging Initiative (ADNI) database. The selection criteria are: (1) MRI data from the ADNI-1 phase; (2) data grouped into three categories: CN, MCI and AD; (3) T1-weighted images acquired with MP-RAGE sequences; (4) an image size of 256×166×256 voxels. The experimental dataset includes MRI scans acquired from each subject in different years, so the number of scans is much larger than the number of subjects. Table 1 shows the sample statistics for this experiment.


Table 1. Statistical information on experimental samples

Label                      CN               MCI              AD
Number of subjects         75               134              67
Number of MRI scans        416              339              253
Age, median (range)        82 (70-93)       73 (55-90)       74 (55-91)
Male/Female (% male)       196/220 (47.1)   218/121 (64.3)   123/130 (48.6)
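The last row of Table 1 can be checked with a little arithmetic: in every group the male and female counts sum to the number of MRI scans, so the bracketed value is the percentage of male scans. The plain-Python sketch below, with the counts copied from the table, recomputes it.

```python
# Counts copied from Table 1.
MALE_FEMALE = {"CN": (196, 220), "MCI": (218, 121), "AD": (123, 130)}
SCANS = {"CN": 416, "MCI": 339, "AD": 253}
SUBJECTS = {"CN": 75, "MCI": 134, "AD": 67}


def percent_male(male, female):
    """Share of male scans in a group, rounded to one decimal place."""
    return round(100.0 * male / (male + female), 1)


for group, (male, female) in MALE_FEMALE.items():
    assert male + female == SCANS[group]  # counts are per scan, not per subject
    print(group, percent_male(male, female))
# CN 47.1, MCI 64.3, AD 48.6
print(sum(SUBJECTS.values()), sum(SCANS.values()))  # 276 1008
```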

3.2. Data Preprocessing

This paper preprocesses the collected MRI images as follows: (1) the data are categorized and processed with the SPM software to register the scans of all subjects to a standard spatial coordinate system, in the order head motion correction, registration, segmentation, normalization and smoothing; (2) after SPM processing, gray matter, white matter and cerebrospinal fluid slices are extracted along the axis, taking a total of 16 slices from each MRI image starting with the slice at index 120; (3) the gray matter, white matter and cerebrospinal fluid slices are fused into a 3-channel image. In total, 16128 fused images were generated across the three categories, each with dimensions of 3×166×256 pixels. The selected slices cover the lateral ventricle, the inferior temporal lobe and the middle temporal cortex, which are associated with AD and MCI. As shown in Figure 1, the white matter, gray matter and cerebrospinal fluid slices are fused as input to the model.
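A minimal NumPy sketch of steps (2) and (3) follows. It assumes the SPM tissue maps are already loaded as arrays of shape 256×166×256 and that the 16 slices are consecutive along the first axis; the text gives only the start index and the count. The helper name `fuse_slices` is hypothetical. Slicing the first axis yields 166×256 images, matching the stated 3×166×256 input size, and 1,008 scans × 16 slices reproduces the reported 16,128 images.

```python
import numpy as np

START_SLICE = 120   # first slice index, as stated in the text
NUM_SLICES = 16     # slices taken per MRI volume


def fuse_slices(gm, wm, csf, start=START_SLICE, count=NUM_SLICES, axis=0):
    """Turn three segmented tissue volumes into `count` 3-channel 2-D images.

    gm, wm, csf: gray-matter, white-matter and CSF volumes of equal shape.
    The slicing axis and the use of consecutive indices are assumptions.
    Each returned image has shape (3, H, W), channels ordered (GM, WM, CSF).
    """
    if not (gm.shape == wm.shape == csf.shape):
        raise ValueError("tissue volumes must have the same shape")
    images = []
    for idx in range(start, start + count):
        channels = [np.take(vol, idx, axis=axis) for vol in (gm, wm, csf)]
        images.append(np.stack(channels, axis=0))
    return images


# Dataset size check using the scan counts from Table 1.
SCANS_PER_GROUP = {"CN": 416, "MCI": 339, "AD": 253}
total_images = sum(SCANS_PER_GROUP.values()) * NUM_SLICES
print(total_images)  # 16128
```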

Figure 1. AD images of fused cerebrospinal fluid, white matter and gray matter

3.3. Prediction Model Based on Multiple Attention Mechanism

As shown in Figure 2, the CNN model designed in this paper consists of three parts: (1) a cyclic convolution layer that adds the acquired feature maps to the original images; (2) an improved VGG network that extracts feature information related to Alzheimer's disease; (3) attention modules that automatically select the features most effective for predicting the disease through weight learning.


Figure 2. Alzheimer's disease prediction model based on multiple attention mechanism

Backbone network: an improved 11-layer VGG network is used as the backbone to extract features. To preserve as much of the image's local correlation information as possible, the max pooling layers are replaced with average pooling layers. To make training more stable, a batch normalization layer is added after each convolution layer. To reduce the number of model parameters and accelerate training, the number of output features of the fully connected layers is reduced. The backbone network consists of eight convolution blocks (kernel size=3×3, padding=1); average pooling (size=2×2, stride=2) is added to blocks 1, 2, 4 and 6, while the max pooling of the last block is retained. Table 2 lists the details of the network.

Table 2. Network configuration of Alzheimer's disease prediction model based on multiple attention mechanism

Section                    Layer name   Layer parameters                                 Output size
Cyclic convolution layer   Block 1      3×3, 64-d; 3×3, 64-d ×2; 3×3, 3-d;               3×166×256
                                        stride=1, padding=1
Backbone                   Layer 1      Conv: 64×3×3, padding=1; Avg: 2×2, stride=2      64×83×128
                           Layer 2      Conv: 128×3×3, padding=1; Avg: 2×2, stride=2     128×41×64
                           Layer 3      Conv: 256×3×3, padding=1                         64×41×64
                           Layer 4      Conv: 256×3×3, padding=1; Avg: 2×2, stride=2     64×20×32
                           Layer 5      Conv: 512×3×3, padding=1                         64×20×32
                           Layer 6      Conv: 512×3×3, padding=1; Avg: 2×2, stride=2     64×10×16
                           Layer 7      Conv: 512×3×3, padding=1                         64×10×16
                           Layer 8      Conv: 512×3×3, padding=1; Avg: 2×2, stride=2     64×5×8
                           FC 1         512                                              512×1
                           FC 2         96                                               96×1
                           FC 3         3                                                3×1
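The spatial sizes in the Output size column follow from the standard output-length formula ⌊(n + 2p − k)/s⌋ + 1. The plain-Python sketch below traces the 166×256 input through Layers 1-8, assuming that each layer's channel count equals its number of filters; it is a bookkeeping aid under that assumption, not the authors' implementation.

```python
def conv_out(size, kernel=3, stride=1, padding=1):
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    """Output length of 2x2, stride-2 pooling (floor mode, no padding)."""
    return (size - kernel) // stride + 1


# (number of 3x3 filters, followed by 2x2 average pooling?) for Layers 1-8 of Table 2.
BACKBONE = [(64, True), (128, True), (256, False), (256, True),
            (512, False), (512, True), (512, False), (512, True)]


def trace_shapes(height=166, width=256, config=BACKBONE):
    """Return (channels, height, width) after each backbone layer."""
    shapes = []
    for filters, pooled in config:
        height, width = conv_out(height), conv_out(width)  # 3x3, padding 1: size kept
        if pooled:
            height, width = pool_out(height), pool_out(width)
        shapes.append((filters, height, width))
    return shapes


shapes = trace_shapes()
for layer, shape in enumerate(shapes, start=1):
    print(f"Layer {layer}: {shape}")
c, h, w = shapes[-1]
print("flattened input to FC 1:", c * h * w)  # 20480
```

The floor in the pooling formula explains the odd-size steps in the table, such as 83 → 41 and 41 → 20.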

Attention module: the CBAM module is added to the first convolution block, with channel attention (CA) and spatial attention (SA) connected in series. The CBAM module is shown in Figure 3, where W, H and C denote the width, height and number of channels of the feature map. In the channel attention module, global pooling compresses each W×H feature map along the spatial dimensions to 1×1 while keeping the number of feature maps unchanged, and a sigmoid function then computes the channel weights.
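The serial channel-then-spatial arrangement can be sketched in NumPy as below. The sketch follows the CBAM formulation of Woo et al. [16] (average- and max-pooled channel descriptors passed through a shared two-layer MLP; average and max maps across channels passed through a 7×7 convolution). Because the text describes only the channel branch in detail, that formulation is an assumption here. Random weights stand in for learned ones, and the reduction ratio is arbitrary.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(feat, w1, w2):
    """Channel attention on a (C, H, W) feature map.

    w1: (C // r, C) and w2: (C, C // r) are the shared MLP weights.
    Returns the reweighted map and the per-channel weights.
    """
    avg = feat.mean(axis=(1, 2))             # global average pooling -> (C,)
    mx = feat.max(axis=(1, 2))               # global max pooling -> (C,)

    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)  # shared two-layer MLP with ReLU

    weights = sigmoid(mlp(avg) + mlp(mx))
    return feat * weights[:, None, None], weights


def spatial_attention(feat, kernel):
    """Spatial attention; kernel has shape (2, k, k) with odd k (7 in CBAM)."""
    desc = np.stack([feat.mean(axis=0), feat.max(axis=0)])   # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(desc, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    height, width = feat.shape[1:]
    logits = np.empty((height, width))
    for i in range(height):
        for j in range(width):
            logits[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    mask = sigmoid(logits)
    return feat * mask[None, :, :], mask


def cbam(feat, w1, w2, kernel):
    """Channel attention followed by spatial attention, applied in series."""
    refined, _ = channel_attention(feat, w1, w2)
    refined, _ = spatial_attention(refined, kernel)
    return refined


rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 6, 5))    # C=8, H=6, W=5
w1 = rng.standard_normal((2, 8))         # reduction ratio r = 4
w2 = rng.standard_normal((8, 2))
kernel = rng.standard_normal((2, 7, 7))
print(cbam(feat, w1, w2, kernel).shape)  # (8, 6, 5)
```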


Figure 3. CBAM convolutional block attention module

As shown in Figure 2, a Non-Local attention module (NL) is added after the fourth convolution block of the backbone network; by capturing long-range dependencies, NL directly establishes global connectivity. "Local" refers to the receptive field of a convolution, which is typically 3×3 or 5×5 and covers only a local region, so convolution is a local operation. The receptive field of NL, in contrast, is very large and covers the whole feature map, so it can capture the global context. NL is defined as follows:

$$y_i = \frac{1}{\mathcal{C}(x)} \sum_{\forall j} f(x_i, x_j)\, g(x_j)$$

Here i and j index spatial positions of the input, the function f computes the similarity between any two positions, and the function g computes a representation of the feature map at position j. The output y is obtained after normalizing by the response factor C(x) (implemented as Softmax). The embedded Gaussian is used as f, with 1×1 convolutions as the embeddings θ and ϕ:

$$f(x_i, x_j) = e^{\theta(x_i)^{T} \phi(x_j)}$$

$$\mathcal{C}(x) = \sum_{\forall j} f(x_i, x_j)$$
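The non-local equations above map directly onto matrix products. In the NumPy sketch below (hypothetical names, random 1×1 convolution weights), a softmax over j implements f(x_i, x_j)/C(x) for the embedded Gaussian. The residual step follows the non-local block of Wang et al. [17], which projects y back to C channels and adds it to the input; that projection `w_z` is an assumption beyond the equations given here.

```python
import numpy as np


def softmax(x, axis=-1):
    shifted = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)


def non_local(x, w_theta, w_phi, w_g, w_z):
    """Embedded-Gaussian non-local block on a (C, H, W) feature map.

    w_theta, w_phi, w_g: (C', C) matrices acting as 1x1 convolutions.
    w_z: (C, C') projection back to C channels before the residual addition.
    """
    c, h, w = x.shape
    flat = x.reshape(c, h * w)             # one column per spatial position
    theta = w_theta @ flat                 # (C', N)
    phi = w_phi @ flat                     # (C', N)
    g = w_g @ flat                         # (C', N)
    # Row i holds exp(theta_i . phi_j) / sum_j exp(theta_i . phi_j), i.e. f / C(x).
    attn = softmax(theta.T @ phi, axis=1)  # (N, N)
    y = g @ attn.T                         # column i = sum_j attn[i, j] * g(x_j)
    z = w_z @ y + flat                     # add the global feature to each position
    return z.reshape(c, h, w), attn


rng = np.random.default_rng(1)
x = rng.standard_normal((4, 3, 5))
w_theta, w_phi, w_g = (rng.standard_normal((2, 4)) for _ in range(3))
out, attn = non_local(x, w_theta, w_phi, w_g, np.zeros((4, 2)))
print(attn.shape)           # (15, 15)
print(np.allclose(out, x))  # True: with w_z = 0 the block reduces to the identity
```

The N×N attention matrix is why the module is usually placed after several pooling stages, where N = H×W is already small.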


Figure 4. Non Local global attention module

By combining the channel, spatial and global attention modules, the model can attend to local and global features of the input image at the same time, enhancing the representativeness of the features.

Cyclic convolution layer: adding the output of a two-layer convolution back onto the input data strengthens the salient features of each region, allowing the prediction network to capture features faster. The cyclic convolution is expressed as follows, where $x_t$ represents the input at the current step, $h_{t-1}$ represents the output of the previous step, and f represents the linear activation function:

$$h_t = f\left(x_t + W h_{t-1} + x_{t-1}\right)$$
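Since the recurrence is only loosely specified, the NumPy sketch below illustrates just the behaviour stated in words: the output of a small 3×3 convolution stack is added back onto the input image, leaving its shape unchanged. The layer widths (two hidden convolutions, then a projection back to 3 channels) are an assumed reading of the cyclic convolution row in Table 2. The helpers `conv3x3` and `enhance` are hypothetical and do not reproduce the authors' exact recurrence.

```python
import numpy as np


def conv3x3(x, weight):
    """3x3 convolution (cross-correlation), stride 1, zero padding 1.

    x: (C_in, H, W); weight: (C_out, C_in, 3, 3). Output: (C_out, H, W).
    """
    height, width = x.shape[1:]
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    out = np.zeros((weight.shape[0], height, width))
    for di in range(3):
        for dj in range(3):
            window = padded[:, di:di + height, dj:dj + width]  # (C_in, H, W)
            out += np.einsum("oc,chw->ohw", weight[:, :, di, dj], window)
    return out


def relu(t):
    return np.maximum(t, 0.0)


def enhance(image, w1, w2, w3):
    """Add the output of a small conv stack back onto the input image.

    w1: (hidden, 3, 3, 3), w2: (hidden, hidden, 3, 3), w3: (3, hidden, 3, 3).
    The result has the same shape as `image`, e.g. 3x166x256.
    """
    features = relu(conv3x3(relu(conv3x3(image, w1)), w2))
    return image + conv3x3(features, w3)


rng = np.random.default_rng(0)
image = rng.standard_normal((3, 20, 32))  # small stand-in for a 3x166x256 fused slice
hidden = 8                                # 64 in Table 2, reduced here for speed
w1 = 0.1 * rng.standard_normal((hidden, 3, 3, 3))
w2 = 0.1 * rng.standard_normal((hidden, hidden, 3, 3))
w3 = 0.1 * rng.standard_normal((3, hidden, 3, 3))
print(enhance(image, w1, w2, w3).shape)   # (3, 20, 32)
```

Because the enhancement is additive, a zero final projection leaves the image untouched, so the layer can only add information on top of the original slices.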

3.4. Model Training

The experimental dataset is divided into a training set and a test set. The training set contains 10,000 images and the test set contains 6,128 images. During training, 500 images are randomly selected from the training set each time as the validation set. After each epoch, the model's accuracy on the validation set is calculated, and the parameters with the highest validation accuracy are retained. The input images have a shape of 3×166×256 pixels, and the batch size is 50. Every three epochs, the model's performance is measured on the test set, and training is stopped once the model begins to over-fit. Stochastic gradient descent is used as the optimizer, with an initial learning rate of 0.01 and a momentum of 0.9. Some samples may be particularly sensitive to label changes, causing abnormal increases in the loss function that make the model unstable. To increase stability, cross-entropy loss with label smoothing regularization is used, which preserves the generalization ability of the model and keeps it from being overly sensitive to the labels.
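Label smoothing replaces the one-hot target with 1 − ε on the true class plus ε/K spread over all K classes. The smoothing factor is not reported, so ε = 0.1 below is an assumption. In PyTorch (1.10 and later) the same loss is available as `torch.nn.CrossEntropyLoss(label_smoothing=...)`; the plain-Python sketch only spells out the arithmetic.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over a list of class scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]


def smoothed_cross_entropy(logits, target, epsilon=0.1):
    """Cross-entropy against a label-smoothed target distribution.

    The true class gets 1 - epsilon + epsilon / K, every other class epsilon / K.
    """
    k = len(logits)
    log_probs = log_softmax(logits)
    targets = [epsilon / k + (1.0 - epsilon if i == target else 0.0) for i in range(k)]
    return -sum(q * lp for q, lp in zip(targets, log_probs))


confident = [8.0, 0.0, 0.0]  # hypothetical scores for CN, MCI, AD
print(smoothed_cross_entropy(confident, 0, epsilon=0.0))  # plain cross-entropy
print(smoothed_cross_entropy(confident, 0, epsilon=0.1))  # smoothed version
```

With ε > 0, a very confident correct prediction is penalized slightly more than under plain cross-entropy, which discourages the network from pushing its logits to extremes.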

3.5. Performance Evaluation Indicators

To evaluate the classification performance of the model, the classification accuracy (ACC), sensitivity (SEN), specificity (SPE) and F1-score were calculated. ACC is the proportion of correctly classified samples among all samples. SEN is the proportion of correctly classified positive samples among all positive samples. SPE is the proportion of correctly classified negative samples among all negative samples. The F1-score is the harmonic mean of precision and recall, so it accounts for both the precision and the recall of the classification model.
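For the three-class CN/MCI/AD task, the text does not say how SEN, SPE and F1-score are aggregated across classes. The plain-Python sketch below assumes the common choice of one-vs-rest counts per class followed by a macro average; `evaluate` is a hypothetical helper that operates on a confusion matrix.

```python
def one_vs_rest(cm, k):
    """TP, FP, FN, TN for class k; cm[i][j] counts true class i predicted as j."""
    total = sum(map(sum, cm))
    tp = cm[k][k]
    fn = sum(cm[k]) - tp
    fp = sum(row[k] for row in cm) - tp
    return tp, fp, fn, total - tp - fp - fn


def safe_div(num, den):
    return num / den if den else 0.0


def evaluate(cm):
    """ACC plus macro-averaged one-vs-rest SEN, SPE and F1-score."""
    n = len(cm)
    acc = safe_div(sum(cm[i][i] for i in range(n)), sum(map(sum, cm)))
    sen = spe = f1 = 0.0
    for k in range(n):
        tp, fp, fn, tn = one_vs_rest(cm, k)
        recall = safe_div(tp, tp + fn)     # sensitivity for class k
        precision = safe_div(tp, tp + fp)
        sen += recall / n
        spe += safe_div(tn, tn + fp) / n
        f1 += safe_div(2 * precision * recall, precision + recall) / n
    return {"ACC": acc, "SEN": sen, "SPE": spe, "F1": f1}


# Hypothetical confusion matrix (rows: true CN, MCI, AD; columns: predicted).
cm = [[50, 1, 0],
      [2, 45, 3],
      [0, 2, 40]]
print(evaluate(cm))
```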

4. EXPERIMENTS AND DISCUSSIONS

The dataset is randomly divided into independent training and test sets before the experiments begin. The network is built with the PyTorch deep learning framework.

4.1. Ablation Experiments

This paper uses an 11-layer VGG network to extract MRI features, and attention modules are added during feature extraction to strengthen the representational ability of the network. To verify the predictive performance of the network, we compared the original VGG network with the VGG Advanced network and the VGG Attention network. As shown in Table 3, the average accuracy of the VGG Advanced network is 99.6%, about 0.4% higher than that of the original VGG network. Adding the attention mechanism raises the accuracy by a further 0.2% or so. Finally, the average prediction accuracy of our model is 99.8%, and its other performance indicators are also superior to those of the original VGG network.

Table 3. Experimental analysis of ablation

Model            ACC(%)   SEN(%)   SPE(%)   F1-score
VGG              99.2     99.5     99.4     0.989
VGG Advanced     99.6     99.9     99.7     0.994
VGG Attention    99.8     99.7     99.8     0.997
Proposed         99.8     99.9     99.8     0.996

Figure 5. Performance changes of ablation network


As shown in Figure 5, after the ninth training epoch the accuracy and specificity of the VGG network begin to decline: as training continues, the network over-fits and its performance drops. In contrast, the accuracy and specificity of the VGG Advanced and VGG Attention networks keep rising, although their performance appears to approach its maximum. The figure also shows that the attention mechanism enables the network to capture the key features of the MRI image faster: it clearly improves network performance and makes training more stable. Adding the cyclic convolution block in our proposed model slightly improves prediction accuracy and sensitivity. Therefore, this paper adds the attention mechanism and the cyclic convolution layer to the VGG network to predict AD.

4.2. Model Comparison Experiment

We chose classic CNN models for the model comparison experiment. VGG was proposed by Karen Simonyan and Andrew Zisserman and took second place in the classification task of the 2014 ImageNet Challenge (ILSVRC 2014). VGG uses small (3×3) convolution kernels, whereas earlier networks used larger kernels (11×11, 5×5, etc.). Small kernels have two main advantages: stacked small kernels obtain the same receptive field with much less computation, and two 3×3 convolution layers introduce more non-linearity than one 5×5 layer, which strengthens the fitting ability of the model. GoogLeNet, proposed by Christian Szegedy et al., not only increases network depth but also applies convolutions of several sizes in parallel within the same layer and concatenates their outputs. ResNet, proposed by Kaiming He et al., uses shortcut connections that allow very deep networks to be trained, alleviating the vanishing-gradient problem caused by increasing network depth. ResNet comes in a variety of depths, such as ResNet-18, ResNet-50 and ResNet-101.
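The receptive-field argument above can be made concrete. Assuming stride-1 convolutions with C input and C output channels and ignoring biases, two stacked 3×3 layers see a 5×5 region, just like a single 5×5 layer, but use 18C² instead of 25C² weights, and the activation between them supplies the extra non-linearity. The helper names are illustrative.

```python
def receptive_field(num_layers, kernel=3):
    """Receptive field of `num_layers` stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel - 1)


def conv_weights(num_layers, kernel, channels):
    """Weight count (no biases) of stacked kernel x kernel convs, C in and C out."""
    return num_layers * kernel * kernel * channels * channels


C = 64
print(receptive_field(2, 3), receptive_field(1, 5))  # 5 5
print(conv_weights(2, 3, C), conv_weights(1, 5, C))  # 73728 102400
```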

Table 4. Comparative experimental analysis of models

Model        ACC(%)   SEN(%)   SPE(%)   F1-score
AlexNet      91.5     87.3     93.6     0.873
VGG          99.2     99.5     99.4     0.989
GoogLeNet    97.4     96.1     98.0     0.961
ResNet-18    99.3     98.9     99.4     0.989
ResNet-50    99.5     99.2     99.6     0.992
Proposed     99.8     99.9     99.8     0.996


Figure 6. Model contrast experimental network performance changes

As shown in Table 4, our proposed method achieves the best results on every performance indicator: accuracy, sensitivity, specificity and F1-score. AlexNet shows very low sensitivity (87.3%) for AD prediction. GoogLeNet uses inception modules that combine convolution kernels of different sizes to extract features and is more complex than AlexNet and VGG, yet the experimental results show that its prediction performance is limited. ResNet achieves high specificity for AD prediction, and increasing network depth improves performance (every indicator of ResNet-50 is better than that of ResNet-18). Meanwhile, VGG is neither as deep nor as wide as GoogLeNet and ResNet, yet its performance is superior to GoogLeNet, comparable to ResNet-18 and close to ResNet-50. As shown in Figure 6, after the ninth epoch the accuracy and sensitivity of ResNet-50 decline significantly, VGG shows a small decline, and ResNet-18 fluctuates greatly during training. Our proposed model improves steadily during training and its indicators are consistently higher than those of the other networks, indicating that it identifies key features that allow AD to be predicted effectively and accurately. Moreover, compared with the ResNet networks, the proposed network greatly improves computational performance with a more concise structure while achieving the best prediction results.

4.3. Comparison of Methods

Table 5 shows the experimental results of predicting AD with different methods. All of them use MRI scans from the ADNI database, but the subjects selected in each experiment differ. Comparing these results, the proposed method is superior to existing mainstream methods in accuracy, sensitivity and specificity.

Asl et al. [22] used a 3D-CNN and transfer learning to predict AD, obtaining 97.6% accuracy in CN/AD classification. Bumshik et al. [19] calculated the entropy of each slice and then removed noise pixels from the sMRI image intensity histogram; the average accuracy was 97.0% for CN/AD classification and 98.0% for three-class classification. Jain et al. [18] applied transfer learning to a 16-layer VGG network to predict AD. Mehmood et al. [8] used a pre-trained VGG and achieved 98.73% accuracy in CN/AD classification. Raju et al. [13] used a 3D CNN and achieved 97.7% accuracy in three-class classification. Basaia et al. [21] used a 3D-CNN on an enhanced MRI dataset and achieved 99.2% accuracy in binary classification. Li et al. [20] used a 3D-ResNet and reached a classification accuracy of 95%. Zhang et al. [23] used a 3D residual attention deep neural network to capture local, global and spatial information in MRI images to improve diagnostic performance, achieving 91.7% accuracy in binary classification.

Our proposed CNN outperforms existing mainstream methods; in particular, its sensitivity to AD is very high. Pre-trained models can improve network efficiency, but they do not necessarily improve network performance. Increasing the scale of the dataset (e.g., through data augmentation or by using more slices) is beneficial to network training.

Table 5. Comparative experimental analysis of methods

Method                  ACC(%)   SEN(%)   SPE(%)   F1-score   Classification
Zhang et al. [6]        88.2     97.4     84.3     -          AD/MCI
Mehmood et al. [8]      98.7     98.1     99.0     -          CN/AD
Raju et al. [13]        97.7     -        -        -          CN/MCI/AD
Jain et al. [18]        95.7     -        -        -          CN/MCI/AD
Bumshik et al. [19]     98.7     97.6     96.7     0.983      CN/MCI/AD
Li Q et al. [20]        95.0     94.3     96.0     -          CN/AD
Basaia et al. [21]      99.2     98.9     99.5     -          CN/AD
Asl et al. [22]         97.6     -        -        -          CN/AD
Zhang et al. [23]       91.0     91.0     92.0     -          CN/AD
Proposed                99.8     99.9     99.8     0.996      CN/MCI/AD

4.4. Analysis of Experimental Results

As shown in Figure 7, as the number of training epochs increases, the accuracy curve approaches 100% and the loss approaches 0. About 90% of the errors arise because the model fails to distinguish MCI from AD correctly, owing to the unclear definition of MCI and to discrepancies between MRI findings and the actual clinical diagnosis of the patient's condition.


Figure 7. Loss and Accuracy curves

5. CONCLUSION AND FUTURE WORK

This paper presents an Alzheimer's disease (AD) prediction method based on multiple attention mechanisms, built on a deep convolutional neural network. The model consists of three parts. The first part uses cyclic convolution to capture feature information; the second part uses an improved VGG network to extract disease features and output feature vectors; and the third part uses the extracted feature vectors to predict AD. We add channel, spatial, and global attention mechanisms to the first and fourth layers of the backbone network so that it captures both global and local pathological features in the image, which benefits AD prediction. Ablation experiments show that the attention mechanisms improve the prediction performance of the network. To evaluate the proposed model objectively, we compare it with methods from the literature on the same ADNI database. The results show that the proposed model outperforms existing mainstream methods: accuracy, sensitivity, specificity, and F1-score on the test set are 99.8%, 99.9%, 99.8%, and 0.996, respectively. In future research, we will combine multi-modal data (sMRI, PET, fMRI, neuropsychological cognitive evaluation scores, etc.) for AD prediction and further explore the interpretability of the AD prediction model.
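The conclusion names the three attention mechanisms without restating how they compute their weights. A minimal pure-Python sketch follows, assuming they follow the squeeze-and-excitation [15], CBAM [16] and non-local [17] designs listed in the references. Several simplifications are assumptions, not the authors' stated implementation: CBAM's 7×7 convolution is replaced by a two-weight combination, the non-local embeddings are identity maps, and the channel, then spatial, then global order is a guess. All function and parameter names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]
Feature = List[Matrix]  # feature map indexed [channel][row][column]


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def channel_attention(x: Feature, w1: Matrix, w2: Matrix) -> Feature:
    """SE-style: squeeze (global average pool), excite (FC-ReLU-FC-sigmoid), rescale channels.

    w1 has shape (C/r) x C and w2 has shape C x (C/r) for a reduction ratio r.
    """
    z = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in x]
    hidden = [max(0.0, sum(w * zc for w, zc in zip(row, z))) for row in w1]
    s = [sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in w2]
    return [[[v * s[c] for v in row] for row in x[c]] for c in range(len(x))]


def spatial_attention(x: Feature, w_avg: float, w_max: float, bias: float = 0.0) -> Feature:
    """CBAM-style, simplified: per-pixel channel mean and max are mixed by two weights
    (CBAM itself uses a 7x7 convolution over these two maps), then a sigmoid mask is applied."""
    C, H, W = len(x), len(x[0]), len(x[0][0])
    mask = [[sigmoid(w_avg * sum(x[c][i][j] for c in range(C)) / C
                     + w_max * max(x[c][i][j] for c in range(C)) + bias)
             for j in range(W)] for i in range(H)]
    return [[[x[c][i][j] * mask[i][j] for j in range(W)] for i in range(H)] for c in range(C)]


def global_attention(x: Feature) -> Feature:
    """Non-local block with identity embeddings: each position aggregates every position,
    weighted by a softmax of dot-product similarity, and the result is added back (residual)."""
    C, H, W = len(x), len(x[0]), len(x[0][0])
    # Flatten to H*W position vectors of length C, in row-major order.
    pos = [[x[c][i][j] for c in range(C)] for i in range(H) for j in range(W)]
    out = []
    for q in pos:
        scores = [sum(a * b for a, b in zip(q, k)) for k in pos]
        m = max(scores)  # subtract the max for a stable softmax
        weights = [math.exp(sc - m) for sc in scores]
        total = sum(weights)
        y = [sum(w * k[c] for w, k in zip(weights, pos)) / total for c in range(C)]
        out.append([qc + yc for qc, yc in zip(q, y)])
    return [[[out[i * W + j][c] for j in range(W)] for i in range(H)] for c in range(C)]


def attention_block(x: Feature, w1: Matrix, w2: Matrix, w_avg: float, w_max: float) -> Feature:
    """Hypothetical composition; the channel -> spatial -> global order is an assumption."""
    return global_attention(spatial_attention(channel_attention(x, w1, w2), w_avg, w_max))
```

Setting every weight to zero makes each sigmoid gate equal 0.5, which is a quick way to check shapes; in a trained network, w1, w2, w_avg and w_max would be learned parameters.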

ACKNOWLEDGMENTS

This work was supported in part by the National Natural Science Foundation of China under Grant 61372137, in part by the Natural Science Foundation of Anhui Province under Grant 1908085MF209, and in part by the Natural Science Foundation for the Higher Education Institutions of Anhui Province under Grant KJ2019A0036.

Huabin Wang is the corresponding author. E-mail address: wanghuabin@ahu.edu.cn.


REFERENCES

[1] Lorenzetti, V., et al., "Structural brain abnormalities in major depressive disorder: a selective review of recent MRI studies," Journal of Affective Disorders 117(1-2), 1-17 (2009).

[2] Goethals, M., and P. Santens, "Posterior cortical atrophy. Two case reports and a review of the literature," Clin Neurol Neurosurg 103(2), 115-119 (2001).

[3] Dyrba, M., et al., "Multimodal analysis of functional and structural disconnection in Alzheimer's disease using multiple kernel SVM," Human Brain Mapping 36(6), 2118-2131 (2015).

[4] Hojjati, S. H., et al., "Predicting conversion from MCI to AD using resting-state fMRI, graph theoretical approach and SVM," Journal of Neuroscience Methods 282, 69-80 (2017).

[5] Ramirez, J., et al., "Ensemble of random forests One vs. Rest classifiers for MCI and AD prediction using ANOVA cortical and subcortical feature selection and partial least squares," Journal of Neuroscience Methods 302, 47-57 (2018).

[6] Zhang, F., et al., "Multi-modal Deep Learning Model for Auxiliary Diagnosis of Alzheimer's Disease," Neurocomputing 361 (2019).

[7] Venugopalan, J., et al., "Multimodal deep learning models for early detection of Alzheimer's disease stage," Scientific Reports 11(1) (2021).

[8] Mehmood, A., R. Khan, and M. Maqsood, "A Transfer Learning Approach for Early Diagnosis of Alzheimer's Disease on MRI Images," Neuroscience (2021).

[9] Sathiyamoorthi, V., et al., "A Deep Convolutional Neural Network based Computer Aided Diagnosis System for the Prediction of Alzheimer's Disease in MRI Images," Measurement 171 (2021).

[10] Abdulazeem, Y., W. M. Bahgat, and M. Badawy, "A CNN based framework for classification of Alzheimer's disease," Neural Computing and Applications, 1-14 (2021).

[11] Jiang, J., et al., "Deep Learning based Mild Cognitive Impairment Diagnosis Using Structure MR Images," Neuroscience Letters 730(2), 134971 (2020).

[12] Abrol, A., et al., "Deep Residual Learning for Neuroimaging: An application to Predict Progression to Alzheimer's Disease," Journal of Neuroscience Methods 339 (2020).

[13] Raju, M., et al., "Multi-class diagnosis of Alzheimer's disease using cascaded three dimensional-convolutional neural network," Physical and Engineering Sciences in Medicine 43(4) (2020).

[14] Jaderberg, M., et al., "Spatial Transformer Networks," NIPS (2015).

[15] Hu, J., et al., "Squeeze-and-Excitation Networks," IEEE Transactions on Pattern Analysis and Machine Intelligence PP(99) (2017).

[16] Woo, S., et al., "CBAM: Convolutional Block Attention Module," ECCV, Springer, Cham (2018).

[17] Wang, X., et al., "Non-local Neural Networks," CVPR (2018).

[18] Jain, R., et al., "Convolutional neural network based Alzheimer's disease classification from magnetic resonance brain images," Cognitive Systems Research 57, 147-159 (2019).

[19] Bumshik, et al., "Using Deep CNN with Data Permutation Scheme for Classification of Alzheimer's Disease in Structural Magnetic Resonance Imaging (sMRI)," IEICE Transactions on Information and Systems E102.D(7), 1384-1395 (2019).

[20] Li, Q., and M. Q. Yang, "Comparison of machine learning approaches for enhancing Alzheimer's disease classification," PeerJ 9(12), e10549 (2021).

[21] Basaia, S., et al., "Automated classification of Alzheimer's disease and mild cognitive impairment using a single MRI and deep neural networks," NeuroImage: Clinical 21, 101645 (2019).

[22] Asl, E. H., R. Keynton, and A. El-Baz, "Alzheimer's Disease Diagnostics by Adaptation of 3D Convolutional Network," Proc. IEEE International Conference on Image Processing (ICIP) (2016).

[23] Zhang, X., et al., "An Explainable 3D Residual Self-Attention Deep Neural Network For Joint Atrophy Localization and Alzheimer's Disease Diagnosis using Structural MRI," IEEE Journal of Biomedical and Health Informatics PP(99), 1-1 (2021).

Proc. of SPIE Vol. 12083 120830X-15

Downloaded From: https://www.spiedigitallibrary.org/conference-proceedings-of-spie on 23 Feb 2023

Terms of Use: https://www.spiedigitallibrary.org/terms-of-use

You might also like

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (589)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (401)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (842)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (897)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5806)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (345)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1091)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- M.H. Sloboda - 1961 - Design and Strength of Brazed JointsDocument16 pagesM.H. Sloboda - 1961 - Design and Strength of Brazed JointsPieter van der MeerNo ratings yet

- 24 Trig Identity ProblemsDocument4 pages24 Trig Identity ProblemsAldrean TinayaNo ratings yet

- CHE 321 Unit Operation 1 (3 Units) : 1: Drying, Conveying 2: Sedimentation, ClarificationDocument32 pagesCHE 321 Unit Operation 1 (3 Units) : 1: Drying, Conveying 2: Sedimentation, ClarificationGlory UsoroNo ratings yet

- FF68 Manual Check DepositDocument10 pagesFF68 Manual Check DepositvittoriojayNo ratings yet

- OSPF LabsDocument83 pagesOSPF LabsGulsah BulanNo ratings yet

- Expert Systems - 2022 - Abugabah - Health Care Intelligent System A Neural NetwDocument13 pagesExpert Systems - 2022 - Abugabah - Health Care Intelligent System A Neural NetwWided HechkelNo ratings yet

- Deep Learning 3D Convolutional Neural Networks For Predicting Alzheimer's Disease (ALD)Document12 pagesDeep Learning 3D Convolutional Neural Networks For Predicting Alzheimer's Disease (ALD)Wided HechkelNo ratings yet

- Alzheimer's Disease Classification Using Feed Forwarded Deep Neural Networks For Brain MRI ImagesDocument15 pagesAlzheimer's Disease Classification Using Feed Forwarded Deep Neural Networks For Brain MRI ImagesWided HechkelNo ratings yet

- Biceph-Net A Robust and Lightweight Framework For The Diagnosis of Alzheimers Disease Using 2D-MRI Scans and Deep Similarity LearningDocument9 pagesBiceph-Net A Robust and Lightweight Framework For The Diagnosis of Alzheimers Disease Using 2D-MRI Scans and Deep Similarity LearningWided HechkelNo ratings yet

- An Enhanced Deep Convolution Neural Network Model To Diagnose Alzheimer's Disease Using Brain Magnetic Resonance ImagingDocument11 pagesAn Enhanced Deep Convolution Neural Network Model To Diagnose Alzheimer's Disease Using Brain Magnetic Resonance ImagingWided HechkelNo ratings yet

- Neuroimaging (Anatomical MRI) - Based Classification of Alzheimer's Diseases and Mild Cognitive Impairment Using Convolution Neural NetworkDocument11 pagesNeuroimaging (Anatomical MRI) - Based Classification of Alzheimer's Diseases and Mild Cognitive Impairment Using Convolution Neural NetworkWided HechkelNo ratings yet

- 1 s2.0 S1746809422007662 MainDocument11 pages1 s2.0 S1746809422007662 MainWided HechkelNo ratings yet

- 1 s2.0 S1746809422003500 MainDocument16 pages1 s2.0 S1746809422003500 MainWided HechkelNo ratings yet

- 1 s2.0 S0169260721005770 MainDocument10 pages1 s2.0 S0169260721005770 MainWided HechkelNo ratings yet

- 1 s2.0 S1110016822005191 MainDocument11 pages1 s2.0 S1110016822005191 MainWided HechkelNo ratings yet

- 1 s2.0 S0031320322003065 MainDocument10 pages1 s2.0 S0031320322003065 MainWided HechkelNo ratings yet

- Partie1 These KarimDocument50 pagesPartie1 These KarimWided HechkelNo ratings yet

- Improving The Image Resolution in Diverging Wave Compounding Using The Sparse Arrays Method Combined With The Minimum VarianceDocument4 pagesImproving The Image Resolution in Diverging Wave Compounding Using The Sparse Arrays Method Combined With The Minimum VarianceWided HechkelNo ratings yet

- Combustion in Diesel EngineDocument108 pagesCombustion in Diesel EngineAmanpreet Singh100% (2)

- Chapter 10 - ElectrostaticsDocument8 pagesChapter 10 - ElectrostaticsMary Kate BacongaNo ratings yet

- Apti and Interview PreparationDocument12 pagesApti and Interview Preparationchaitalichoudhary20No ratings yet

- Biology June 2019 1BRDocument32 pagesBiology June 2019 1BRMohamedNo ratings yet

- 21711c PDFDocument24 pages21711c PDFAbdessamad EladakNo ratings yet

- Materials 12 03033 v2Document30 pagesMaterials 12 03033 v2GooftilaaAniJiraachuunkooYesusiinNo ratings yet

- Visual Aids Uses and ApplicationDocument32 pagesVisual Aids Uses and ApplicationTasneem AhmedNo ratings yet

- Hood Vents InstallationDocument12 pagesHood Vents Installationapi-26140644No ratings yet

- SBA5089ZDocument6 pagesSBA5089ZFrancisca Iniesta TortosaNo ratings yet

- Climatic Subdivisions in Saudi Arabia: An Application of Principal Component AnalysisDocument17 pagesClimatic Subdivisions in Saudi Arabia: An Application of Principal Component AnalysisirfanNo ratings yet

- PNP Assignment - Difference Between Phonetics and PhonologyDocument6 pagesPNP Assignment - Difference Between Phonetics and PhonologyMuhammad Jawwad Ahmed100% (1)

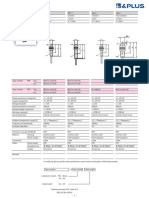

- B&Plus Proximity Sensor - 001.BES07e - Usm8-1Document1 pageB&Plus Proximity Sensor - 001.BES07e - Usm8-1Hussein RamzaNo ratings yet

- Solving ODEs Using Taylor Series ..Document25 pagesSolving ODEs Using Taylor Series ..asfimalikNo ratings yet

- Worksheet On Carboxylic AcidsDocument3 pagesWorksheet On Carboxylic AcidsmalisnotokNo ratings yet

- Optimal Design of Hybrid MSFRO Desalination PlantDocument185 pagesOptimal Design of Hybrid MSFRO Desalination PlantmohdnazirNo ratings yet

- Artificial Intelligence - Midterm ExamDocument7 pagesArtificial Intelligence - Midterm ExamMemo AlmalikyNo ratings yet

- Mlion-Catalogue (2020)Document28 pagesMlion-Catalogue (2020)M.ariefiryuqoriNo ratings yet

- Fractions Improper1 PDFDocument2 pagesFractions Improper1 PDFthenmoly100% (1)

- Electric PotentialDocument20 pagesElectric PotentialAllan Gabriel LariosaNo ratings yet

- 01 RationalNumbersDocument11 pages01 RationalNumbersSusana SalasNo ratings yet

- LP - MATH Gr7 Lesson2 Universal Set, Null Set, Cardinality of Set IDocument6 pagesLP - MATH Gr7 Lesson2 Universal Set, Null Set, Cardinality of Set ISarah AgunatNo ratings yet

- STM32 HTTP CameraDocument8 pagesSTM32 HTTP Cameraengin kavakNo ratings yet

- Projection of Planes: Problem 1Document2 pagesProjection of Planes: Problem 1Ravi ParkheNo ratings yet

- Operating Manual: (Shaker)Document43 pagesOperating Manual: (Shaker)Cardenas Cuito MarcoNo ratings yet