International Journal of Scientific Research and Review ISSN NO: 2279-543X.

A Comprehensive Analysis of Medical Image Segmentation using Deep Learning

R. Vinayagamoorthy (1), T. Arul Raj (2), T. Ilamparithi (3), Dr. R. Balasubramanian (4)

(1) vinayagamoorthy3@gmail.com, (2) arulraj121@gmail.com, (3) iparithy@gmail.com, (4) rbalus662002@yahoo.com

(1, 2) Research Scholar, Department of Computer Science & Engineering, Manonmaniam Sundaranar University, Tirunelveli-627 012

(3) Assistant Professor, Department of Computer Science, MSU College, Puliangudi, India

(4) Professor, Department of Computer Science & Engineering, Manonmaniam Sundaranar University, Tirunelveli-627 012

Abstract

Image segmentation has made important advances in recent years. Recent work builds to a great extent on Deep Learning techniques, which have brought groundbreaking improvements in segmentation accuracy. Because image segmentations are a mid-level representation, they have the potential to make major contributions across the wide field of visual understanding, from image classification to interactive search. Medical image segmentation is a sub-area of image segmentation with many essential applications in medical image analysis and diagnosis. In this paper, distinct medical image segmentation strategies are classified along with their sub-techniques and sub-fields. The paper presents useful insights into the field of medical image segmentation using Deep Learning and attempts to summarize the future scope of work.

I Introduction

In image segmentation, an image is partitioned into a series of non-overlapping regions. In medical images these regions correspond to human tissues with markedly different structures, and they are passed to a suitable technique for accurate clinical diagnosis. Automatic segmentation of medical images is difficult because of variations in structure, such as shape and size, among patients [1]. Moreover, poor contrast with the surrounding tissue makes automated segmentation troublesome. Recently, deep learning based methods have provided effective classification and learn features directly from the image. In particular, improvements in Convolutional Neural Networks (CNNs) have advanced the state of the art in medical image segmentation [2].

A CNN contains several convolutional layers interleaved with sub-sampling layers. The convolutional layers capture spatial correlation in the input image by constructing feature maps and sharing the kernel weights across the input [3]. CNNs are effective because the hierarchical feature representation of an image is learned in a purely data-driven manner. The challenges of using CNNs are as follows.

Volume 8, Issue 6, 2019 Page No: 613

1. CNNs do not generalize well to previously unseen objects that are not present in the training set. In medical image segmentation, annotations are expensive to gather because producing accurate ones requires both expertise and time. This restricts the ability of CNNs to segment images for which annotations are not present in the training set [4].

2. Recent methods are not adaptive to different test images and require image-specific learning to deal with the large context variations among images.

Deep learning is a family of machine learning algorithms [5] that can automatically and efficiently analyze medical images for disease diagnosis. In recent years, deep learning has become popular because it provides higher levels of abstraction, which improves prediction on a given data set. In this paper, deep learning based medical image segmentation techniques are compared in order to identify challenges for future research.

The rest of the paper is organized as follows. Section II describes deep learning architectures in detail. Section III addresses the application of deep learning in the medical field and briefly discusses the pros and cons of each algorithm. Finally, the conclusion is drawn with suggestions for future research.

II Deep Learning Architecture

This section analyzes the different deep learning architectures that have been developed and used for medical image segmentation. The aims of the survey are:

1. To show that deep learning techniques have permeated the entire field of medical image segmentation.

2. To identify the difficulties in applying deep learning effectively to medical image segmentation.

3. To highlight specific contributions that solve or circumvent these difficulties.

Medical image segmentation permits the quantitative analysis of clinical parameters associated with the shape and volume of organs and their substructures, for example in brain and cardiac analysis [6]. The task of medical image segmentation is to identify the set of voxels that make up either the contour or the interior of the objects of interest. Segmentation is the most common task to which deep learning is applied in medical imaging, and as such it has seen the widest variety of approaches. Some of the important existing deep learning architectures are analyzed below.

U-Net

The U-Net [7] architecture builds upon the FCN and is adapted so that it yields better segmentation of medical images. An important innovation of U-Net is that upsampling and downsampling layers are combined in equal number. U-Net differs from FCN-8 in two ways: (1) U-Net is symmetric, and (2) the skip connections between convolution and deconvolution layers apply a concatenation operator to features from the contracting and expanding paths. The aim of the skip connections is to provide both local and global information during upsampling. From the training viewpoint, U-Net produces the segmentation map directly by processing the image in a single forward pass. Because of its symmetry, U-Net has a large number of feature maps in the upsampling path, which permits the transfer of information. The U-Net architecture is shown in Fig. 1.

Fig 1. Architecture of U-Net.

In U-Net, the contracting path consists of 4 blocks, each of which has 3×3 convolutional layers with an activation function and batch normalization. U-Net starts with 64 feature maps and doubles the number of feature maps at each pooling step. To segment a medical image, the contracting path captures the context of the input image and transfers it to the expanding path.
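The channel progression described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `unet_channel_plan`, the extra doubling at the bottleneck and the halving of channels by each up-convolution are assumptions introduced for the example. Only the starting value of 64 feature maps, the doubling at each pooling step and the 4 contracting blocks come from the text.

```python
def unet_channel_plan(base_channels=64, num_blocks=4):
    """Return feature-map counts for a U-Net-style network.

    encoder:        channels produced by each contracting block
    bottleneck:     channels at the bottom of the "U" (assumed one more doubling)
    decoder_inputs: channels entering each expanding block after the
                    skip connection concatenates encoder features
    """
    # Contracting path: start at base_channels and double at every block.
    encoder = [base_channels * 2 ** i for i in range(num_blocks)]

    # Assumption: the bottleneck doubles the channel count once more.
    bottleneck = encoder[-1] * 2

    decoder_inputs = []
    upsampled = bottleneck
    for skip in reversed(encoder):
        # Assumption: each up-convolution halves the number of channels.
        upsampled //= 2
        # Concatenation stacks channels, so the widths add up.
        decoder_inputs.append(upsampled + skip)
        # Assumption: the block's convolutions reduce back to `skip` channels.
        upsampled = skip
    return encoder, bottleneck, decoder_inputs


encoder, bottleneck, decoder_inputs = unet_channel_plan()
print(encoder)         # [64, 128, 256, 512]
print(bottleneck)      # 1024
print(decoder_inputs)  # [1024, 512, 256, 128]
```

Under these assumptions, every expanding block receives the upsampled features concatenated with the skip features, which is why its input is wider than the output of the matching contracting block.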

V-Net

In medical image segmentation, diagnostic images are often 3D, so the ability to perform volumetric segmentation by considering the entire volume content directly has particular relevance. The aim of the V-Net [8] architecture is to segment prostate MRI volumes. This is a difficult task because the prostate can take on different appearances in different scans owing to deformation and variations in the intensity distribution. V-Net leverages the power of fully convolutional neural networks to process MRI volumes. In contrast to other recent approaches, V-Net refrains from processing the input volumes slice-wise and uses Dice coefficient maximization to obtain an accurate segmentation. V-Net segments MRI images more quickly and efficiently than other networks.

In training, the Dice overlap coefficient is computed between the predicted segmentation and the ground truth annotation. Fig. 3 shows a graphical representation of the V-Net architecture. The compression path is on the left side and the decompression path is on the right side. The network uses volumetric kernels of size 5×5×5 voxels to perform the convolution at each stage. The left side of the network is further divided into a number of stages that operate at different resolutions.
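The Dice overlap used as the training objective can be sketched as follows. The sketch assumes the squared-sum form of the denominator, D = 2 * sum(p_i * g_i) / (sum(p_i^2) + sum(g_i^2)), for a predicted probability map p and a binary ground truth g. The function names and the small smoothing term `eps` are illustrative choices made here, not details taken from this survey.

```python
def dice_coefficient(pred, truth, eps=1e-7):
    """Dice overlap between a predicted probability map and a binary
    ground truth, both given as flat sequences of equal length.

    Assumed form: 2 * sum(p * g) / (sum(p^2) + sum(g^2)).
    `eps` is a hypothetical smoothing term that avoids division by zero
    when both inputs are empty.
    """
    intersection = sum(p * g for p, g in zip(pred, truth))
    denominator = sum(p * p for p in pred) + sum(g * g for g in truth)
    return (2.0 * intersection + eps) / (denominator + eps)


def dice_loss(pred, truth):
    """Maximizing the Dice coefficient is the same as minimizing 1 - Dice."""
    return 1.0 - dice_coefficient(pred, truth)


truth = [0, 0, 1, 1]           # two foreground voxels
perfect = [0.0, 0.0, 1.0, 1.0]  # prediction identical to the ground truth
half = [0.0, 1.0, 1.0, 0.0]     # one of two foreground voxels recovered

print(dice_coefficient(perfect, truth))  # close to 1.0
print(dice_coefficient(half, truth))     # close to 0.5
print(dice_loss(half, truth))            # close to 0.5
```

Because the score is a ratio of overlap to total foreground mass, it does not depend on how many background voxels the volume contains, which is why no re-weighting of foreground and background samples is needed in this formulation.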

GoogleNet

GoogleNet [9] was developed by Google. It contains 22 layers and introduces the inception module. This module consists of a number of very small convolutional layers whose purpose is to reduce the number of required parameters.

Fig.2 GoogleNet Architecture

In GoogleNet, the number of parameters is reduced from 60 million to 4 million compared with AlexNet [10]. Many feature extractors exist within a single layer, which indirectly helps to improve the performance of the network. The inception modules are stacked in the final architecture. Unlike in other networks, most of the topmost layers have their own output layer. As a result, GoogleNet converges faster than other networks, because joint training and parallel training are performed for the layers themselves.
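The parameter saving of the inception module can be illustrated with a simple count. The sketch below assumes that the saving comes from placing a 1×1 convolution in front of a larger convolution in order to shrink the channel dimension first. The channel counts (192, 16, 32) and the helper `conv_params` are hypothetical values chosen for illustration, and biases are ignored.

```python
def conv_params(kernel, in_channels, out_channels):
    """Number of weights in a kernel x kernel convolution (biases ignored)."""
    return kernel * kernel * in_channels * out_channels


# Hypothetical channel counts, chosen only to illustrate the effect.
in_ch, reduce_ch, out_ch = 192, 16, 32

# A 5x5 convolution applied directly to all input channels.
direct = conv_params(5, in_ch, out_ch)

# The same 5x5 convolution preceded by a 1x1 convolution that first
# reduces the number of channels (the assumed inception-style bottleneck).
reduced = conv_params(1, in_ch, reduce_ch) + conv_params(5, reduce_ch, out_ch)

print(direct)   # 153600
print(reduced)  # 15872
```

With these illustrative numbers the bottlenecked branch needs roughly a tenth of the weights of the direct convolution while producing the same number of output channels.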

ResNet

ResNet [11] introduced an architecture built on skip connections and heavy use of batch normalization. It can train a network with 152 layers while having lower complexity than previous networks. ResNet is constructed from multiple residual modules. The residual module is illustrated as follows.

A residual module has two choices: it can either carry out a set of operations on its input or skip this step altogether. Similar to GoogleNet, these modules are stacked to construct a complete end-to-end network. Some additional novel techniques introduced by ResNet are described as follows.

ResNet uses standard SGD rather than an elaborate adaptive technique. This is carried out together with a reasonable initialization function that keeps the training intact.
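The two choices of a residual module can be made concrete with a small sketch. It assumes the usual formulation y = F(x) + x, in which the skip connection adds the input back to the output of the module. The helper names and the toy transforms are hypothetical; real residual modules use convolutional layers rather than the elementwise functions shown here.

```python
def residual_module(x, transform):
    """Apply y = F(x) + x elementwise to a flat feature vector.

    `transform` plays the role of F, the set of operations inside the
    module. The skip connection adds the unchanged input to its output.
    """
    fx = transform(x)
    return [f + xi for f, xi in zip(fx, x)]


def zero_transform(x):
    """F(x) = 0: the module effectively skips its operations."""
    return [0.0 for _ in x]


def scale_transform(x):
    """F(x) = 0.5 * x: a toy stand-in for learned layers."""
    return [0.5 * v for v in x]


def stack(x, transforms):
    """Stack residual modules one after another, end to end."""
    for transform in transforms:
        x = residual_module(x, transform)
    return x


x = [1.0, -2.0, 3.0]
print(residual_module(x, zero_transform))      # [1.0, -2.0, 3.0]
print(residual_module(x, scale_transform))     # [1.5, -3.0, 4.5]
print(stack(x, [zero_transform, zero_transform]))  # [1.0, -2.0, 3.0]
```

When F contributes nothing, the module passes its input through unchanged, so adding more modules does not by itself make the mapping harder to represent.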

RCNN (Region Based CNN)

RCNN [12] is a region-based method that carries out semantic segmentation based on the results of object detection. It solves the object detection problem by constructing bounding boxes for the objects present in the image and then recognizing the object in each box. The structure of RCNN is as follows.

Fig 3. RCNN architecture

RCNN uses selective search to extract a large number of object proposals and then computes CNN features for each of them. It uses class-specific SVM classifiers to classify each region in the image. RCNN can address the more complicated tasks of image segmentation and object detection. For image segmentation, two kinds of features are extracted for each region: foreground features and full-region features. These two features are combined to obtain good segmentation performance. At the testing stage, the region-based predictions are converted into pixel-based predictions: each pixel is labeled according to the highest-scoring region that contains it.
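The conversion from region-based to pixel-based predictions can be sketched as follows. The data layout (each region given as a set of pixel coordinates together with a class label and a score) and the function name are assumptions made for this illustration. The rule applied is that a pixel takes the label of the highest-scoring region that covers it.

```python
def regions_to_pixel_labels(height, width, regions, background=0):
    """Turn scored region predictions into a per-pixel label map.

    regions: iterable of (pixels, label, score), where `pixels` is a
             collection of (row, col) coordinates covered by the region.
    Pixels covered by no region keep the `background` label.
    """
    labels = [[background] * width for _ in range(height)]
    best_score = [[float("-inf")] * width for _ in range(height)]

    for pixels, label, score in regions:
        for row, col in pixels:
            # Keep the label of the highest-scoring region seen so far.
            if score > best_score[row][col]:
                best_score[row][col] = score
                labels[row][col] = label
    return labels


# Two hypothetical overlapping regions on a 2 x 3 image.
regions = [
    ({(0, 0), (0, 1), (1, 0), (1, 1)}, 1, 0.6),  # class 1, score 0.6
    ({(1, 1), (1, 2)}, 2, 0.9),                  # class 2, score 0.9
]

print(regions_to_pixel_labels(2, 3, regions))
# [[1, 1, 0], [1, 2, 2]]
```

The pixel at position (1, 1) is covered by both regions and receives class 2, because that region has the higher score.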

SegNet

SegNet [13] is a deep learning architecture for image segmentation that consists of an encoder, a corresponding decoder and a pixel-wise classification layer. The encoder generates feature maps by performing convolution with a filter bank. The decoder upsamples its input feature maps using the max-pooling indices stored from the corresponding encoder feature maps. As a result, sparse feature maps are generated.
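The role of the max-pooling indices can be illustrated with a one-dimensional sketch. It assumes that the encoder stores the position of each maximum during pooling and that the decoder writes the pooled values back to those positions, leaving zeros elsewhere. The function names and the 1D setting are simplifications chosen here.

```python
def max_pool_with_indices(values, window=2):
    """Non-overlapping 1D max pooling that also records where each
    maximum came from in the original sequence."""
    pooled, indices = [], []
    for start in range(0, len(values) - window + 1, window):
        block = values[start:start + window]
        offset = max(range(window), key=lambda i: block[i])
        pooled.append(block[offset])
        indices.append(start + offset)
    return pooled, indices


def max_unpool(pooled, indices, length):
    """Upsample by placing each pooled value at its stored index.
    All other positions stay zero, which yields a sparse map."""
    out = [0.0] * length
    for value, index in zip(pooled, indices):
        out[index] = value
    return out


signal = [0.1, 0.9, 0.4, 0.3, 0.7, 0.2]
pooled, indices = max_pool_with_indices(signal)

print(pooled)   # [0.9, 0.4, 0.7]
print(indices)  # [1, 2, 4]
print(max_unpool(pooled, indices, len(signal)))
# [0.0, 0.9, 0.4, 0.0, 0.7, 0.0]
```

The unpooled output has the original length but is mostly zero, which matches the sparse feature maps mentioned above. Only the indices need to be kept from the encoder, not the full feature maps.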

Fig 4. SegNet Architecture

These feature maps are then convolved with a trainable decoder filter bank to create dense feature maps. Finally, batch normalization is applied to the feature maps. The decoded feature maps are passed to a soft-max classifier, which generates class probabilities for every pixel independently. The output of the soft-max classifier is a K-channel image of probabilities, where K is the number of classes. SegNet retains high-frequency details in the segmented image by connecting the pooling indices of the encoder with those of the decoder. A comparison of the deep learning architectures is shown in Table 1.

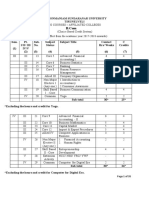

Table 1. Comparison of Deep Learning Architectures

| Deep Learning Architecture | No. of Layers | Advantages | Disadvantages |
|---|---|---|---|
| U-Net | 2 convolutional layers with max pooling and ReLU layer | 1. To obtain high-quality image segmentation, U-Net merges the local information from the deconvolutional layers with the context information from the convolutional layers. 2. Images of various sizes can be used as input, i.e. there is no dense layer. 3. In medical image segmentation, heavy use of data augmentation is important when the number of annotated samples is limited. | It takes a considerable amount of time to train on the data. |
| V-Net | 2 convolutional layers with 3*3*3 kernels | 1. The Dice loss layer does not require any sample re-weighting when the foreground and background pixels of the image are unbalanced. 2. V-Net reduces the resolution and extracts features from the data by using a suitable stride. | It is difficult to insert an unparameterised layer such as ReLU, or to delete one after an element has been added. |
| GoogleNet | 22 layers | 1. Training of GoogleNet is faster than VGG. 2. The pre-trained size of GoogleNet is relatively small compared with VGG. 3. GoogleNet has a size of only 96 MB, whereas VGG can exceed 500 MB. | 1. It still has less computational complexity with fewer parameters. 2. There is no use of fully connected convolutional neural networks. |
| ResNet | 152 layers | Hundreds or thousands of residual modules can be used to construct a network, unlike normal networks. | 1. The final performance of image segmentation is affected. 2. It takes more time to extract the features of specified objects. |
| RCNN | 13 sharable convolutional layers | RCNN attains a major improvement in image segmentation by using highly discriminative CNN features. | 1. The features are not compatible with the segmentation task. 2. The features do not contain sufficient spatial information for precise boundary generation. |
| SegNet | 13 encoder layers, 13 decoder layers | 1. SegNet is well suited to image segmentation. 2. It has fewer trainable parameters than other networks. 3. SegNet is more efficient in inference time and inference memory than other networks. | 1. It cannot fully separate the effect of the model from that of the optimization in achieving a particular result. 2. In training, this network relies on gradient back-propagation, which is inadequate for solving the image segmentation problem. |

III Deep Learning Algorithms for Image Segmentation

In computer vision, deep learning methods have become increasingly popular for image segmentation because of their efficiency across a number of tasks. An efficient segmentation method should obtain results quickly with a feasible amount of user interaction [14]. Two important factors should be considered with respect to the performance and quality of image segmentation: (i) the user interaction taken as input and (ii) the representations underlying the algorithm.

Convolutional networks are mainly used for computer vision problems that depend closely on user-defined aspects. However, deep learning methods provide an alternative by learning features from still or video images. Understanding which kind of deep learning method is appropriate for a given problem can therefore be a challenging task. In this section, some existing deep learning algorithms for image segmentation are discussed.

DeepLab

DeepLab [15] performs semantic image segmentation by applying atrous convolution, that is, convolution with upsampled filters, to extract dense features. Atrous convolution makes it possible to explicitly control the resolution at which feature responses are computed within the DCNN. DeepLab further extends this to atrous spatial pyramid pooling, which captures objects as well as image context at different scales. The localization of object boundaries is improved by combining a probabilistic graphical model with Deep Convolutional Neural Networks. Specifically, the DCNN is combined with a fully connected conditional random field (CRF) to produce accurate semantic predictions and segmentation maps along the boundary of each object.
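A minimal one-dimensional sketch of atrous convolution is given below. It assumes the common formulation in which the kernel taps are spaced `rate` samples apart, so that the field of view grows without adding weights. The function name and the toy signal are illustrative, and the operation is written as a correlation (no kernel flip), as is usual in deep learning libraries.

```python
def atrous_conv1d(signal, kernel, rate=1):
    """Valid-mode 1D atrous (dilated) convolution.

    The kernel taps are applied `rate` samples apart, so a kernel with
    k taps covers (k - 1) * rate + 1 input samples. With rate=1 this is
    an ordinary convolution (written as correlation, without a flip).
    """
    span = (len(kernel) - 1) * rate + 1
    output = []
    for start in range(len(signal) - span + 1):
        output.append(
            sum(k * signal[start + j * rate] for j, k in enumerate(kernel))
        )
    return output


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 0, -1]  # simple difference filter with three weights

print(atrous_conv1d(signal, kernel, rate=1))  # [-2, -2, -2, -2, -2]
print(atrous_conv1d(signal, kernel, rate=2))  # [-4, -4, -4]
```

With `rate=2` the same three weights compare samples four positions apart instead of two, which is how a larger context is covered without increasing the number of parameters. Applying several rates in parallel is the idea behind the pyramid pooling mentioned above.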

DeepIGeoS

DeepIGeoS, a deep interactive geodesic framework [16], was proposed for 2D and 3D image segmentation with deep learning based user interaction. To make better use of user interaction, DeepIGeoS uses a framework with two stages. In the first stage, an initial segmentation is obtained automatically using P-Net. In the second stage, the user indicates the mis-segmented regions through interactions and R-Net refines the result. The user interactions are filtered, converted and integrated into the input of R-Net. Features are captured without any loss of resolution by means of the resolution-preserving structure of R-Net and P-Net. Once R-Net has been trained on user interactions, retraining the convolutional neural network on a large training set takes a reasonable amount of time.

DeepCut

DeepCut [17] combines iterative graphical optimization with deep learning to obtain pixel-wise segmentations. Medical images are segmented from image data sets given their corresponding bounding box annotations. The training targets learned by the CNN are updated iteratively, and the segmentations are regularized with a fully connected conditional random field. The training targets are associated with the locations of voxels, each of which is described by an image patch. DeepCut is formulated in a generic form and is therefore readily applicable to any medical image.

DeepMedic

DeepMedic [18] presents a dual-pathway, eleven-layer 3D DCNN for segmenting brain lesions in multi-modal MRI. It has two important components: (1) a 3D Convolutional Neural Network that produces accurate segmentation maps, and (2) a fully connected 3D CRF that imposes regularization constraints on the output of the CNN and generates the final segmentation labels. The dual pathway analyzes the input images at different scales concurrently in order to integrate both local and global contextual information. DeepMedic is assessed on three brain MRI lesion segmentation tasks: (1) traumatic brain injury, (2) ischemic stroke and (3) brain tumors. The network is trained with a dense training scheme applied to image segments.

DeepOrgan

DeepOrgan [19] provides a probabilistic bottom-up approach for pancreas segmentation in abdominal CT scans using multi-level DCNNs. It proposes coarse-to-fine classification, from densely labeled image patches to regions and whole organs. It generates initial superpixels for the medical images, and these superpixels act as local contextual information with low precision. DeepOrgan provides a dense classification of local image patches through P-ConvNet and nearest neighbor fusion. Class probabilities are then assigned to each superpixel region of the image.

DCAN

Deep Contour-Aware Networks (DCAN) [20] explore multi-level contextual features from a layered architecture with auxiliary supervision for gland segmentation. The discriminative capability of intermediate features is improved when multi-level regularization is incorporated during training. DCAN addresses three important challenges of gland segmentation. First, the probability map is generated directly in a single forward propagation, which suits the analysis of large quantities of images. Second, glandular structures in biopsy samples, both benign and malignant, can be analyzed easily. Finally, gland contours and objects are investigated separately within a multi-task training framework. A comparison of the deep learning applications is shown in Table 2.

Table 2. Comparison of Deep Learning Applications in Medical Field

| DL Applications | Data sets used | Advantages | Disadvantages |
|---|---|---|---|
| DeepLab | PASCAL-Context, PASCAL-Person-Part and Cityscapes | It combines atrous convolution and CRF. | 1. Downsampling causes loss of information. 2. The input damages the pixel accuracy. |
| DeepIGeoS | 2D fetal MRI, brain tumors, 3D FLAIR images | 1. It uses a learning-based method to learn knowledge from a large training set. 2. It requires a smaller amount of user interaction, which is encoded as geodesic distance. | In testing, this method is not fully connected. |
| DeepCut | Fetal MRI, brain and lungs | It is fast and provides optimal results. | 1. In testing, it is not fully interactive. 2. There is no refinement through further user interaction. |
| DeepMedic | Brain MRI, BRATS and ISLES | It minimizes user interaction and provides good performance. | Loss of feature maps due to multilevel max pooling and downsampling. |
| DCAN | MICCAI gland | 1. It uses a joint multilevel learning method to improve the performance of gland segmentation. 2. It improves the robustness of gland segmentation. | The method fails to provide acceptable results in malignant cases. |

IV Conclusion

This paper provides a brief overview of deep learning architectures and their application to image segmentation in the medical field. The aim of this survey is to present the applications of deep learning in medical image analysis in a concise and accessible way. The medical field involves a number of issues in analyzing the diseases of different kinds of patients. Therefore, this paper concentrates on analyzing DL architectures and the application of DL in the medical field. Furthermore, the difficulties of analyzing particular diseases in specific organs are discussed in detail. The survey concludes that DL can provide meaningful support to specialists in the medical field. In future, applications in various other fields will be considered.

References

[1] I. Goodfellow, Y. Bengio, and A. Courville, “Deep Learning,” MIT Press, 2016.

[2] Y. Guo, Y. Liu, A. Oerlemans, S. Lao, S. Wu, and M. S. Lew, “Deep learning for visual understanding: a review,” Neurocomputing, vol. 187, pp. 27–48, 2016.

[3] S. Bahrampour et al., “Comparative study of deep learning software frameworks,” arXiv preprint arXiv:1511.06435, 2015.

[4] Q. Dou, L. Yu, H. Chen, Y. Jin, X. Yang, J. Qin, and P. A. Heng, “3D deeply supervised network for automated segmentation of volumetric medical images,” Med. Image Anal., vol. 41, pp. 40–54, 2017.

[5] M. Havaei, A. Davy, D. Warde-Farley, A. Biard, A. Courville, Y. Bengio, and H. Larochelle, “Brain tumor segmentation with Deep Neural Networks,” Med. Image Anal., vol. 35, pp. 18–31, 2017.

[6] R. Mehta, A. Majumdar, and J. Sivaswamy, “BrainSegNet: A convolutional neural network architecture for automated segmentation of human brain structures,” J. Med. Imaging (Bellingham), vol. 4, 024003, 2017.

[7] O. Ronneberger, P. Fischer, and T. Brox, “U-Net: Convolutional Networks for Biomedical,” Computer Vision and Pattern Recognition, 2015.

[8] F. Milletari, N. Navab, and S.-A. Ahmadi, “V-Net: Fully Convolutional Neural Networks for Volumetric Medical Image Segmentation.”

[9] C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, and A. Rabinovich, “Going deeper with convolutions,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015, pp. 1–9.

[10] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “ImageNet classification with deep convolutional neural networks,” in Advances in Neural Information Processing Systems, 2012, pp. 1097–1105.

[11] ResNet

[12] RCNN

[13] V. Badrinarayanan, A. Kendall, and R. Cipolla, “SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation,” arXiv:1511.00561v3 [cs.CV], 10 Oct. 2016.

[14] F. Isensee, P. Kickingereder, D. Bonekamp, M. Bendszus, W. Wick, H. P. Schlemmer, and K. Maier-Hein, “Brain Tumor Segmentation Using Large Receptive Field Deep Convolutional Neural Networks,” Bildverarb. Med., pp. 86–91, 2017.

[15] L. C. Chen et al., “DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs,” 2016. [Online]. Available: arXiv:1606.00915.

[16] G. Wang et al., “DeepIGeoS: A deep interactive geodesic framework for medical image segmentation.” [Online]. Available: https://arxiv.org/abs/1707.00652

[17] M. Rajchl et al., “DeepCut: Object segmentation from bounding box annotations using convolutional neural networks,” IEEE Trans. Med. Imag., vol. 36, no. 2, pp. 674–683, Feb. 2017.

[18] K. Kamnitsas et al., “Efficient multi-scale 3D CNN with fully connected CRF for accurate brain lesion segmentation,” Med. Image Anal., vol. 36, pp. 61–78, Feb. 2017.

[19] H. R. Roth et al., “DeepOrgan: Multi-level deep convolutional networks for automated pancreas segmentation,” in Proc. MICCAI, 2015, pp. 556–564.

[20] H. Chen, X. Qi, L. Yu, and P.-A. Heng, “DCAN: Deep contour-aware networks for accurate gland segmentation,” in Proc. CVPR, Jun. 2016, pp. 2487–2496.

[21] H. Choi and K. H. Jin, “Fast and robust segmentation of the striatum using deep convolutional neural networks,” J. Neurosci. Methods, vol. 274, pp. 146–153, 2016.

[22] Z. Akkus, A. Galimzianova, A. Hoogi, D. L. Rubin, and B. J. Erickson, “Deep Learning for Brain MRI Segmentation: State of the Art and Future Directions,” J. Digit. Imaging, vol. 30, pp. 449–459, 2017.

[23] P. Moeskops, M. A. Viergever, A. M. Mendrik, L. S. de Vries, M. J. Benders, and I. Isgum, “Automatic Segmentation of MR Brain Images With a Convolutional Neural Network,” IEEE Trans. Med. Imaging, vol. 35, pp. 1252–1261, 2016.


- Mri Brain Tumor Classification Using Artificial Neural NetworkDocument5 pagesMri Brain Tumor Classification Using Artificial Neural NetworkAnonymous w7llc3BDNo ratings yet

- Deep Learning Based Detection and Correction of Cardiac MR Motion Artefacts During Reconstruction For High-Quality SegmentationDocument10 pagesDeep Learning Based Detection and Correction of Cardiac MR Motion Artefacts During Reconstruction For High-Quality SegmentationJoseph FranklinNo ratings yet

- 筆記2Document3 pages筆記2盧旻瑋No ratings yet

- Deep Semantic Segmentation of Kidney and Space-Occupying Lesion Area Based On SCNN and Resnet Models Combined With Sift-Flow AlgorithmDocument12 pagesDeep Semantic Segmentation of Kidney and Space-Occupying Lesion Area Based On SCNN and Resnet Models Combined With Sift-Flow AlgorithmMuhamad Praja DewanataNo ratings yet

- A Survey On Deep Learning Techniques For Medical Image Analysis RiyajDocument20 pagesA Survey On Deep Learning Techniques For Medical Image Analysis Riyajdisha rawal100% (1)

- Eyeing The Human Brain'S Segmentation Methods: Lilian Chiru Kawala, Xuewen Ding & Guojun DongDocument10 pagesEyeing The Human Brain'S Segmentation Methods: Lilian Chiru Kawala, Xuewen Ding & Guojun DongTJPRC PublicationsNo ratings yet

- A Study of Segmentation Methods For Detection of Tumor in Brain MRIDocument6 pagesA Study of Segmentation Methods For Detection of Tumor in Brain MRItiaraNo ratings yet

- An Attention-Based Deep Convolutional Neural Network For Brain Tumor and Disorder Classification and Grading in Magnetic Resonance ImagingDocument14 pagesAn Attention-Based Deep Convolutional Neural Network For Brain Tumor and Disorder Classification and Grading in Magnetic Resonance Imagingpspcpspc7No ratings yet

- Diagnosis of Chronic Brain Syndrome Using Deep LearningDocument10 pagesDiagnosis of Chronic Brain Syndrome Using Deep LearningIJRASETPublicationsNo ratings yet

- 3 - Medical image fusion and noise suppression with fractional-order total variation and multi-scale decompositionDocument14 pages3 - Medical image fusion and noise suppression with fractional-order total variation and multi-scale decompositionNguyễn Nhật-NguyênNo ratings yet

- Attention U-Net Learning Where To Look For The PancreasDocument10 pagesAttention U-Net Learning Where To Look For The PancreasMatiur Rahman MinarNo ratings yet

- Diagnostics: Transmed: Transformers Advance Multi-Modal Medical Image ClassificationDocument15 pagesDiagnostics: Transmed: Transformers Advance Multi-Modal Medical Image ClassificationAhmedNo ratings yet

- Medical Image Dengan CNNDocument13 pagesMedical Image Dengan CNNarisNo ratings yet

- A Systematic Study of Deep Learning Architectures For Analysis of Glaucoma and Hypertensive RetinopathyDocument17 pagesA Systematic Study of Deep Learning Architectures For Analysis of Glaucoma and Hypertensive RetinopathyAdam HansenNo ratings yet

- Advances in Deep Learning Techniques For Medical Image AnalysisDocument7 pagesAdvances in Deep Learning Techniques For Medical Image AnalysisUsmaNo ratings yet

- Retraction: Retracted: Deep Neural Networks For Medical Image SegmentationDocument16 pagesRetraction: Retracted: Deep Neural Networks For Medical Image Segmentationsarahrrat16No ratings yet

- Irjet V10i214Document3 pagesIrjet V10i214tanzimreadsNo ratings yet

- Image Segmentation and Classification Using Neural NetworkDocument15 pagesImage Segmentation and Classification Using Neural NetworkAnonymous Gl4IRRjzNNo ratings yet

- 1 s2.0 S1746809422003500 MainDocument16 pages1 s2.0 S1746809422003500 MainWided HechkelNo ratings yet

- Multimodal Medical Image Fusionbasedon Deep Learning Neural Networkfor Clinical Treatment AnalysisDocument18 pagesMultimodal Medical Image Fusionbasedon Deep Learning Neural Networkfor Clinical Treatment AnalysisngontingtopyarnaurNo ratings yet
