Applications of Econometrics

Pooled Cross Sections and Panel Data

Wooldridge (2019) Chapter 13

Semester 2, 2023/24

Applications of Econometrics Ch. 13 PCS and PD Semester 2, 2023/24 1 / 65

We’d love to hear your feedback!

Mid-course survey link:

https://edinburgh.eu.qualtrics.com/jfe/form/SV_5thhRDVTmiKaMdw

The survey deadline is Thursday 15 February.


In this lecture

1 Pooled Cross Section and Difference-in-Differences

2 Simple Panel Data Analysis


PCS and DID

Pooled Cross Section and Difference-in-Differences


Pooled cross section in surveys

Data obtained by pooling cross sections are very useful for establishing trends and conducting policy analysis.

A pooled cross section (PCS) is available whenever a survey is repeated over time with new random samples obtained in each time period.

That is, the survey does not track the same individuals across survey waves.


Making use of PCS

Statistically, analysing pooled cross sections is similar to analysing a single cross section, provided we assume random sampling was used to collect each cross section.

From a policy perspective, PCSs are at the foundation of difference-in-differences (DID) estimation.

The typical DID setup is that data can be collected both before and after an intervention (or “treatment”), and there is (at least) one “control” group and (at least) one “treatment” group.

Often the intervention is of a yes/no form, but nonbinary treatments (such as class size) can be handled, too.


Independently Pooled Cross Sections

A special setup often arises with independently pooled cross sections, and it is often used to study the effects of policy interventions.

Outcomes are observed for two groups over two time periods.

One of the groups is exposed to a “treatment” in the second period but not in the first period.

The second group is not exposed to the treatment in either period.


The regression

Let A be the control group and B the treatment group. Let dB be a dummy equal to one for a unit in group B, and let d2 be a time-period dummy equal to one for a unit in the second time period. Write

y = β0 + β1 dB + δ0 d2 + δ1 d2 · dB + u,    (1)

where y is the outcome of interest.

Because the regression has a separate parameter for each of the four group/period combinations, the mean of u is zero within each combination (essentially by definition), so we can read off the mean of the response for each combination.


Treatment vs. Control

y = β0 + β1 dB + δ0 d2 + δ1 d2 · dB + u.

                       Before (1)    After (2)              After − Before
Control (A)            β0            β0 + δ0                δ0
Treatment (B)          β0 + β1       β0 + δ0 + β1 + δ1      δ0 + δ1
Treatment − Control    β1            β1 + δ1                δ1

What restrictions did we impose?

To move between before and after, we toggle d2.

To move between control and treatment, we toggle dB.
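The table can be checked mechanically. The short Python sketch below (an illustration only; the coefficient values are hypothetical) fills in the four cell means implied by the regression and recovers δ1 as the difference between the two before/after changes.

```python
def cell_means(b0, b1, d0, d1):
    """Mean of y in each (group, period) cell implied by
    y = b0 + b1*dB + d0*d2 + d1*d2*dB + u, with E(u) = 0 in every cell."""
    means = {}
    for dB, group in ((0, "A"), (1, "B")):
        for d2, period in ((0, 1), (1, 2)):
            means[(group, period)] = b0 + b1 * dB + d0 * d2 + d1 * d2 * dB
    return means

# Hypothetical coefficient values, chosen only for illustration.
m = cell_means(b0=1.0, b1=0.25, d0=0.1, d1=0.2)

change_A = m[("A", 2)] - m[("A", 1)]    # after - before, control: d0
change_B = m[("B", 2)] - m[("B", 1)]    # after - before, treatment: d0 + d1
gap_before = m[("B", 1)] - m[("A", 1)]  # treatment - control, before: b1

print(f"control change   = {change_A:.2f}")
print(f"treatment change = {change_B:.2f}")
print(f"DID              = {change_B - change_A:.2f}")
```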


Interpreting the Coefficients

y = β0 + β1 dB + δ0 d2 + δ1 d2 · dB + u

dB captures possible differences between the treatment and control groups prior to the policy change. Its coefficient, β1, is the difference between the treatment and control means before the intervention.

d2 captures aggregate factors that would cause changes in y over time even in the absence of an intervention. Notice that its coefficient, δ0, is the change in the mean of the control group across the two periods.

The change in the mean over time for the treatment group is δ0 + δ1.


Interpreting the Coefficients

y = β0 + β1 dB + δ0 d2 + δ1 d2 · dB + u

The coefficient of interest is δ1 = (δ0 + δ1) − δ0, the difference in the average changes over time for the treatment and control groups.

Conveniently, δ1 is the coefficient on the interaction d2 · dB, which is one if and only if the unit is in the treatment group in period 2.

δ1 is sometimes called the average treatment effect, because it captures the effect of the treatment on the average outcome of y.

If y is a logarithm then, as usual, δ1 is a proportionate effect (multiply by 100 to approximate the percentage effect).


Difference-in-Differences

The difference-in-differences (DD) estimate can be obtained by applying OLS to equation (1). Or, we can just use the averages directly, where ȳG,t is the sample average of y for group G in period t:

δ̂DD = δ̂1 = (ȳB,2 − ȳB,1) − (ȳA,2 − ȳA,1)

= (ȳB,2 − ȳA,2) − (ȳB,1 − ȳA,1)

Then why do we need OLS? OLS makes inference straightforward.

In the regression framework, heteroskedasticity-robust inference allows the variance of y to differ across group/time-period cells.
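To see that the two ways of writing δ̂DD give the same number, here is a minimal Python sketch that computes it directly from raw outcomes. The four small samples are made up for illustration; consistent with a pooled cross section, each group/period cell is a separate sample of a different size.

```python
from statistics import mean

def did_from_means(y):
    """y maps (group, period) -> list of outcomes, group in {"A", "B"} and
    period in {1, 2}. Returns the DD estimate computed both ways."""
    ybar = {cell: mean(vals) for cell, vals in y.items()}
    over_time = (ybar["B", 2] - ybar["B", 1]) - (ybar["A", 2] - ybar["A", 1])
    across_groups = (ybar["B", 2] - ybar["A", 2]) - (ybar["B", 1] - ybar["A", 1])
    return over_time, across_groups

# Hypothetical samples: a new random sample is drawn in each period.
y = {
    ("A", 1): [2.0, 3.0, 4.0],
    ("A", 2): [3.0, 4.0],
    ("B", 1): [5.0, 6.0, 7.0, 6.0],
    ("B", 2): [8.0, 9.0],
}
print(did_from_means(y))
```

Both orderings are just rearrangements of the same four averages, so they always agree. The regression route adds standard errors, not a different point estimate.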


Intuition

Just using ȳB,2 − ȳB,1, the difference over time in the means of the treatment group, attributes all change to the intervention.

Just using ȳB,2 − ȳA,2, the difference in treatment and control means post-treatment, attributes any difference between the groups to the treatment.

Writing

δ̂1 = (ȳB,2 − ȳB,1) − (ȳA,2 − ȳA,1)

shows that we are comparing the change in the mean over time for the treatment group to the change in the mean for the control group.


Example: Workers’ compensation, motivation

We study the relationship between the length of injury leave and changes to injury compensation.

Suppose a policy increased the cap on weekly earnings covered by workers’ compensation.

Low earners were not affected by this policy: they received the same compensation before and after the intervention.

High earners may stay on workers’ compensation for longer, because being on injury leave has become less costly.

We use injury.dta.


Example: Workers’ compensation, the set-up

Let’s set up the variables of interest.

. des ldurat afchnge highearn

Variable Storage Display Value

name type format label Variable label

-----------------------------------------------------------------------------

ldurat float %9.0g log(durat)

afchnge byte %9.0g =1 if after change in benefits

highearn byte %9.0g =1 if high earner

The dependent variable is ldurat, the log of the duration of benefits in weeks.

We use afchnge to indicate the period after the change. (It is the “time” variable.)

We use highearn to indicate the high earners, whose compensation was affected by the reform. (It is the “treatment” variable.)


Example: Workers’ compensation, single difference on time

What happens if we regress log duration on time only?

. reg ldurat afchnge

Source | SS df MS Number of obs = 5,626

-------------+---------------------------------- F(1, 5624) = 7.41

Model | 12.1728426 1 12.1728426 Prob > F = 0.0065

Residual | 9234.8331 5,624 1.64204002 R-squared = 0.0013

-------------+---------------------------------- Adj R-squared = 0.0011

Total | 9247.00594 5,625 1.64391217 Root MSE = 1.2814

------------------------------------------------------------------------------

ldurat | Coefficient Std. err. t P>|t| [95% conf. interval]

-------------+----------------------------------------------------------------

afchnge | .0931227 .034202 2.72 0.006 .0260736 .1601717

_cons | 1.233253 .023641 52.17 0.000 1.186907 1.279598

------------------------------------------------------------------------------

The afchnge coefficient shows that leave duration increased, on average, by about 9.3% after the policy.


Example: Workers’ compensation, single difference on treatment

What happens if we regress log duration on treatment only?

. reg ldurat highearn

Source | SS df MS Number of obs = 5,626

-------------+---------------------------------- F(1, 5624) = 103.77

Model | 167.520585 1 167.520585 Prob > F = 0.0000

Residual | 9079.48535 5,624 1.61441774 R-squared = 0.0181

-------------+---------------------------------- Adj R-squared = 0.0179

Total | 9247.00594 5,625 1.64391217 Root MSE = 1.2706

------------------------------------------------------------------------------

ldurat | Coefficient Std. err. t P>|t| [95% conf. interval]

-------------+----------------------------------------------------------------

highearn | .3490087 .0342618 10.19 0.000 .2818423 .416175

_cons | 1.129233 .0223497 50.53 0.000 1.085419 1.173047

------------------------------------------------------------------------------

The highearn coefficient shows that the average leave duration for high earners is roughly 35% longer than for low earners.


Example: Workers’ compensation, DID

Let’s run the DID.

. reg ldurat afchnge highearn afhigh

Source | SS df MS Number of obs = 5,626

-------------+---------------------------------- F(3, 5622) = 39.54

Model | 191.071442 3 63.6904807 Prob > F = 0.0000

Residual | 9055.9345 5,622 1.61080301 R-squared = 0.0207

-------------+---------------------------------- Adj R-squared = 0.0201

Total | 9247.00594 5,625 1.64391217 Root MSE = 1.2692

------------------------------------------------------------------------------

ldurat | Coefficient Std. err. t P>|t| [95% conf. interval]

-------------+----------------------------------------------------------------

afchnge | .0076573 .0447173 0.17 0.864 -.0800058 .0953204

highearn | .2564785 .0474464 5.41 0.000 .1634652 .3494918

afhigh | .1906012 .0685089 2.78 0.005 .0562973 .3249051

_cons | 1.125615 .0307368 36.62 0.000 1.065359 1.185871

------------------------------------------------------------------------------

afhigh is the interaction between afchnge and highearn (afhigh = afchnge × highearn).

The afhigh coefficient shows that the policy increased high earners’ leave duration by about 19.1%, controlling for existing differences between high and low earners and for the trend in leave duration.
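Because ldurat is a log, the slides read each coefficient times 100 as an approximate percentage effect. For a dummy regressor in a log-level model, the exact proportionate change is usually computed as exp(b) − 1. The short Python sketch below (an illustration, not part of the Stata session) compares the two conversions using the coefficients reported in the three regressions above.

```python
import math

def pct_effect(coef):
    """Exact percentage change in y implied by the coefficient on a dummy
    variable when the dependent variable is log(y): 100 * (exp(coef) - 1)."""
    return 100 * (math.exp(coef) - 1)

# Coefficients copied from the ldurat regressions above.
for name, b in [("afchnge (time only)", 0.0931227),
                ("highearn (treatment only)", 0.3490087),
                ("afhigh (DID)", 0.1906012)]:
    print(f"{name}: approx {100 * b:.1f}%, exact {pct_effect(b):.1f}%")
```

For small coefficients the two are close (9.3% against roughly 9.8%). For highearn the gap is larger (35% against roughly 42%), and the DID effect is roughly 21% rather than 19%.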


Extension: Control variables

We can add control variables to the simple DID specification.

In the workers’ compensation example, maybe the treated and control groups are not comparable because their injuries differ in nature. We can adjust for this by including injury types as explanatory variables.

Low-wage earners may experience injuries at a younger age than high-wage earners and, as a result, may require a shorter recovery period. We can control for age at injury.


Extension: Multiple control groups

A potential problem with using only two periods is that the control and treatment groups may be trending at different rates for reasons that have nothing to do with the intervention.

In the workers’ compensation example, what if high earners are taking up more compensation than low earners irrespective of the policy change?

We can find high earners in a different U.S. state, where the policy did not change, and use them as a second control group. (They resemble the treated group, but they are not subject to the treatment.)

Using the high and low earners in that state alongside the original groups, the resulting estimator is a triple difference (DDD) estimator.


Extension: General framework

A more general approach to policy analysis is to include multiple control and treatment groups as well as more than two time periods.

We control for aggregate time effects common to all groups, fixed effects specific to each group, group-specific time trends, and explanatory variables measured at the individual and group level.


Extension: Continuous treatment

In the workers’ compensation example, what if the policy had increased compensation proportionally to earnings (a continuous treatment) rather than raising the earnings cap (a binary treatment)?

We define treatment as log earnings, a continuous variable, and interact it with the time dummy (afchnge).


Simple Panel Data Analysis


What is a panel data set?

With a panel data set, the same units are sampled in two or more time periods. For each unit i (individual, school, city, and so on), we have multiple years of data.

At a minimum, statistical methods must recognise that the outcomes for a unit will be correlated over time.

By contrast, with pooled cross sections we have different units sampled in each period. If there is some overlap, we ignore it (and usually would not know there is overlap).


Balanced panel

The main benefit of panel data: with multiple years of data we can control for unobserved characteristics that do not change (or change only slowly) over time. This is very useful for policy analysis.

A balanced panel is one where we observe the same time periods for each unit. This is easier to achieve for larger units (such as schools and cities).

At a disaggregated level, such as individuals and families, following the same units over time can be challenging, and attrition can be a serious problem.

What is attrition? Units dropping out of the sample.


Notation

Notation here assumes a balanced panel. Econometric methods extend to unbalanced panels, and software takes care of the algebraic details.

But one should ask: why are some periods missing for some units? (For example, is reporting achievement scores by schools optional or not enforced?)

The notation we use is the following. For each cross-sectional unit i at time t, the response variable is yit and an explanatory variable is xit. With more than one explanatory variable we have xit1, xit2, ..., xitk.


Two-period panel data

We will start with the case of two periods, so t = 1, 2, and (hopefully) many cross-section observations, i = 1, 2, ..., n.

Along with the observed data (xit1, xit2, ..., xitk, yit), we draw unobserved factors.

Put these into two categories. (1) A component that does not change over time, ai, called an unobserved effect or unobserved heterogeneity. It varies by individual but not by time.

What is an example of ai at the individual level? “Ability”: something innate and subject to only slow change.


Two-period panel data

(2) There are also unobservables that change across time, uit. These are sometimes called “shocks”; we will call them idiosyncratic errors.

They are specific to unit i but vary over time, and they affect the outcome, yit.


Storing panel data

The best way to store panel data is to stack the time periods for each i on top of each other.

In particular, the time periods for each unit should be adjacent and stored in chronological order (from the earliest period to the most recent).

This is sometimes called the “long” storage format. It is by far the most common.


Long format

. use $data\GPA3.dta, clear

. des id term trmgpa sat

Variable Storage Display Value

name type format label Variable label

--------------------------------------------------------------------------------

id float %9.0g student identifier

term int %4.0f fall = 1, spring = 2

trmgpa float %9.0g term GPA

sat int %4.0f SAT score

. list id term trmgpa sat in 1/10

+----------------------------+

| id term trmgpa sat |

|----------------------------|

1. | 22 1 1.5 920 |

2. | 22 2 2.25 920 |

3. | 35 1 2.2 780 |

4. | 35 2 1.6 780 |

5. | 36 1 1.6 810 |

|----------------------------|

6. | 36 2 1.29 810 |

7. | 156 1 2 1080 |

8. | 156 2 2.73 1080 |

9. | 246 1 2.8 960 |

10. | 246 2 2.6 960 |

+----------------------------+

The same data structure is convenient for more than two years.


Declaring panel data

While not absolutely necessary for some procedures, it is best to tell Stata that you have a panel data set. In particular, what are i and t? In GPA3.DTA, i = id and t = term.

. xtset id term

panel variable: id (strongly balanced)

time variable: term, 1 to 2

delta: 1 unit

. tab term

fall = 1, |

spring = 2 | Freq. Percent Cum.

------------+-----------------------------------

1 | 366 50.00 50.00

2 | 366 50.00 100.00

------------+-----------------------------------

Total | 732 100.00


Sorting long panel data

Initially using xtset heads off most problems, but it is nice to have the data appropriately sorted. For example, with a panel of districts identified by distid and observed by year, sort the data using

sort distid year

(For GPA3.DTA, the analogue is sort id term.)

You do not want to sort by year and then district ID. (That would make the data set look more like independently pooled cross sections and mask the panel structure.)
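As a small Python analogue of sorting a long panel by unit and then time, the sketch below takes six records from the GPA3.DTA listing above, deliberately stored in term order (the layout to avoid). Sorting on the pair (id, term) restores the layout in which each student's two terms are adjacent.

```python
# (id, term, trmgpa) records from the GPA3 listing above, deliberately stored
# sorted by term first, which hides the panel structure.
records = [
    (22, 1, 1.5), (35, 1, 2.2), (36, 1, 1.6),
    (22, 2, 2.25), (35, 2, 1.6), (36, 2, 1.29),
]

# Sort by unit first, then by time: the analogue of Stata's `sort id term`.
long_panel = sorted(records, key=lambda r: (r[0], r[1]))
for r in long_panel:
    print(r)
```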


Wide format

Sometimes panel data sets (especially with two years) are stored with only n records (rather than 2n, as above), with the variables from different years given different suffixes to distinguish the years.

Generally, this makes the data harder to work with, especially if there are more than two years.

This is sometimes called the “wide” storage format.

Stata has a command, reshape, that converts data from wide to long format, and vice versa.


Wide format

VOTE2.DTA is a two-year panel data set stored in the wide format.

. des state vote90 vote88 inexp90 inexp88

Variable Storage Display Value

name type format label Variable label

-----------------------------------------------------------------------------------

state str2 %9s state postal code

vote90 byte %8.0g inc. share two-party vote, 1990

vote88 byte %8.0g inc. share two-party vote, 1988

inexp90 float %9.0g inc. camp. expends., 1990

inexp88 float %9.0g inc. camp. expends., 1988

. list state vote90 vote88 inexp90 inexp88 in 1/10

+---------------------------------------------+

| state vote90 vote88 inexp90 inexp88 |

|---------------------------------------------|

1. | AL 51 94 596096 234923 |

2. | AK 52 62 564759 626377 |

3. | AZ 66 73 112373 99607 |

4. | AR 71 75 105354 159221 |

5. | CA 64 59 515020 696748 |

|---------------------------------------------|

6. | CO 64 70 521500 217503 |

7. | CT 60 64 464500 727919 |

8. | DE 66 68 521336 371747 |

9. | FL 52 67 158280 210940 |

10. | GA 71 67 399035 337048 |

+---------------------------------------------+
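As a sketch of what reshape does, the Python below stacks the year-suffixed columns of the first two VOTE2.DTA records listed above into one record per state and year. In Stata itself, the corresponding call would be along the lines of reshape long vote inexp, i(state) j(year), which also stores the suffix (88 or 90) as the year. The helper wide_to_long here is hypothetical.

```python
# First two records of VOTE2.DTA as listed above (wide: one row per state).
wide = [
    {"state": "AL", "vote90": 51, "vote88": 94, "inexp90": 596096, "inexp88": 234923},
    {"state": "AK", "vote90": 52, "vote88": 62, "inexp90": 564759, "inexp88": 626377},
]

def wide_to_long(rows, stubs, years):
    """Turn year-suffixed columns (stub + yy) into one record per state-year,
    with the earliest year first within each state."""
    out = []
    for row in rows:
        for yy in sorted(years):
            rec = {"state": row["state"], "year": yy}
            for stub in stubs:
                rec[stub] = row[f"{stub}{yy}"]
            out.append(rec)
    return out

long_rows = wide_to_long(wide, stubs=["vote", "inexp"], years=[90, 88])
for rec in long_rows:
    print(rec)
```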


Two periods with one explanatory variable

Assume a balanced panel for units i. The units can be aggregated (schools or cities) or disaggregated (students or teachers).

We have time periods t = 1 and t = 2 for each unit i. These periods do not have to be adjacent years: they could be far apart in time, or close together.

First consider the case with a single explanatory variable, xit.


The model

The equation is

yit = β0 + δ0 d2t + β1 xit + ai + uit,    t = 1, 2.

We observe (xit, yit) for each of the two time periods. The variable d2t is a constructed time dummy for the second time period: d2t = 1 if t = 2 and d2t = 0 if t = 1.

The variable ai is the unobserved unit effect (or heterogeneity), and uit is the unobserved idiosyncratic error.

We are interested in estimating β1, the partial effect of x on y. Note that the model assumes this effect is constant over time.


The model

yit = β0 + δ0 d2t + β1 xit + ai + uit,    t = 1, 2.

The intercept in the first (base) period is β0, and that for the second period is β0 + δ0.

Allowing the intercept to change can be very important for getting a good estimate of a causal effect.

(For example, a policy, as measured by xit, might be implemented just as the aggregate economy is turning up or down, as captured by δ0 d2t.)

For policy analysis, xit is often a dummy variable.


Estimation

How should we estimate the slope β1 (and β0, δ0 along with it)?

One possibility is to just use a pooled OLS (POLS) analysis.

Effectively, define the composite error as

vit = ai + uit,    t = 1, 2

and write

yit = β0 + δ0 d2t + β1 xit + vit,    t = 1, 2.


POLS error dependence

Applying OLS, we obtain the pooled OLS estimator: we simply regress y on d2 and x. (Stata need not even know we have a panel data set; it looks like a regression with one long cross section.)

A few important issues arise with the POLS estimator.

1. Even if we assume random sampling across i (which we do), we cannot reasonably assume the observations for i across t = 1, 2 are independent. In fact,

vi1 = ai + ui1

vi2 = ai + ui2

must be correlated because of the presence of ai.
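To make the size of this correlation concrete, add an assumption the slide does not state: ai, ui1 and ui2 are mutually uncorrelated, Var(ai) = σa², and Var(uit) = σu² in both periods. Under that assumption, the covariance and correlation of the composite errors work out as follows.

```latex
\begin{aligned}
\operatorname{Cov}(v_{i1}, v_{i2})
  &= \operatorname{Cov}(a_i + u_{i1},\; a_i + u_{i2}) \\
  &= \operatorname{Var}(a_i) + \operatorname{Cov}(a_i, u_{i2})
     + \operatorname{Cov}(u_{i1}, a_i) + \operatorname{Cov}(u_{i1}, u_{i2}) \\
  &= \sigma_a^2, \\
\operatorname{Corr}(v_{i1}, v_{i2})
  &= \frac{\sigma_a^2}{\sigma_a^2 + \sigma_u^2}.
\end{aligned}
```

The larger the share of the error variance due to the unobserved effect, the stronger the correlation between a unit's two composite errors.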


POLS serial correlation

Correlation between vi1 and vi2 causes the usual OLS standard errors to be invalid, and using heteroskedasticity-robust standard errors does not solve the problem.

This is the familiar problem of serial correlation, or cluster correlation. (Each unit i is a cluster of two time periods.)

Obtaining “cluster-robust” standard errors and test statistics is very easy these days.
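A small Python sketch (the helper name ols_cluster_se is hypothetical) shows why clustering matters. For the slope in a simple regression with an intercept, the cluster-robust variance squares the within-cluster sums of (demeaned x) × residual, adds them up, and divides by (Σ x̃²)². Stata's cluster option also applies a small-sample adjustment, which this sketch omits, so its numbers would differ slightly from Stata's.

```python
import math

def ols_cluster_se(x, y, cluster):
    """Slope from regressing y on x (with an intercept) and its cluster-robust
    standard error, without any small-sample adjustment."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    xd = [xi - xbar for xi in x]                      # demeaned regressor
    sxx = sum(d * d for d in xd)
    b1 = sum(d * (yi - ybar) for d, yi in zip(xd, y)) / sxx
    b0 = ybar - b1 * xbar
    resid = [yi - b0 - b1 * xi for xi, yi in zip(x, y)]
    # Add up demeaned x times residual within each cluster, then square the sums.
    score = {}
    for g, d, e in zip(cluster, xd, resid):
        score[g] = score.get(g, 0.0) + d * e
    var_b1 = sum(s * s for s in score.values()) / sxx ** 2
    return b1, math.sqrt(var_b1)

# Hypothetical data for five units, then each record duplicated to mimic two
# periods whose errors are perfectly correlated (an extreme version of a_i).
x = [0.0, 1.0, 2.0, 3.0, 4.0]
y = [1.0, 2.5, 2.0, 4.5, 5.0]
ids = [0, 1, 2, 3, 4]
x2, y2, ids2 = x + x, y + y, ids + ids

_, se_one_period = ols_cluster_se(x, y, ids)
_, se_clustered = ols_cluster_se(x2, y2, ids2)            # cluster by unit
_, se_het_only = ols_cluster_se(x2, y2, list(range(10)))  # each row its own cluster
print(se_one_period, se_clustered, se_het_only)
```

With each row treated as its own cluster, the formula reduces to the heteroskedasticity-robust standard error. On the duplicated data, that figure is too small by a factor of √2, because it treats the copies as independent information. Clustering by unit gives back the standard error from the non-duplicated data.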


Consistency of POLS

2. A more serious issue is that consistency of POLS (as n gets large, as usual) requires that xit and vit be uncorrelated.

Because vit = ai + uit, we need

Cov(xit, ai) = 0

Cov(xit, uit) = 0

Suppose we are willing to assume the second of these. The first might be violated if xit is determined based on systematic differences across units.

For example, suppose yit is the regional employment rate and xit is the regional crime rate. The crime rate might depend partly on long-standing conditions in an area (e.g., age distribution, education level), captured by ai, but not on contemporaneous shocks to employment (in uit).


POLS heterogeneity bias

When Cov(xit, ai) ≠ 0, it is often said that (pooled) OLS suffers from heterogeneity bias.

If the explanatory variable changes over time (at least for some units in the population), heterogeneity bias can be removed by differencing away ai.


Differencing the two years

To remove the source of bias in POLS, ai, write the two time periods in reverse order for any unit i:

yi2 = (β0 + δ0) + β1 xi2 + ai + ui2

yi1 = β0 + β1 xi1 + ai + ui1

Subtract time period one from time period two to get

yi2 − yi1 = δ0 + β1 (xi2 − xi1) + (ui2 − ui1)


Differencing the two years

If we define ∆yi = yi2 − yi1, where ∆ denotes change (and similarly for ∆xi and ∆ui), we can write the cross-sectional equation as

∆yi = δ0 + β1 ∆xi + ∆ui

or, using the prefix c for “change”,

cyi = δ0 + β1 cxi + cui

Important: β1 is the original coefficient we are interested in. We have obtained an estimating equation by taking changes, or differencing.


Differencing the two years

Notice that the intercept in the differenced equation,

∆yi = δ0 + β1 ∆xi + ∆ui,

is the change in the intercept over the two time periods. It is sometimes interesting to study this change.

Differencing away the unobserved effect, ai, is simple but can be very powerful for isolating causal effects.

If ∆xi = 0 for all i, or even if ∆xi is the same nonzero constant for all i, this strategy does not work, because β1 ∆xi would then be indistinguishable from the intercept δ0. We need some variation in ∆xi across i.

In other words, differencing requires some within-unit variation in x.


First-difference estimator

The OLS estimator applied to

∆yi = δ0 + β1 ∆xi + ∆ui

is often called the first-difference estimator. (With more than two time periods, other orders of differencing are possible; hence the qualifier “first”.) We will refer to it as the FD estimator.
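The short, deterministic Python sketch below illustrates why differencing helps. All numbers are made up: the idiosyncratic errors are set to zero and ai = 2xi1, so ai is correlated with xit. Pooled OLS of y on d2 and x is computed by demeaning within each period, which partials out the intercept and d2 by the Frisch-Waugh theorem. That estimate is biased, while OLS on the differenced data recovers β1 = 3 and δ0 = 0.5 exactly.

```python
def simple_ols(x, y):
    """Intercept and slope from an OLS regression of y on x."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    sxy = sum((p - xbar) * (q - ybar) for p, q in zip(x, y))
    sxx = sum((p - xbar) ** 2 for p in x)
    slope = sxy / sxx
    return ybar - slope * xbar, slope

def demean(v):
    """Subtract the mean of v from each element."""
    m = sum(v) / len(v)
    return [e - m for e in v]

# Hypothetical two-period panel; u_it = 0, so any bias comes from a_i.
beta0, delta0, beta1 = 1.0, 0.5, 3.0
x1 = [0.0, 1.0, 2.0, 3.0, 4.0]            # x_i1
x2 = [1.0, 1.0, 4.0, 3.0, 6.0]            # x_i2 (the change varies across i)
a = [2.0 * v for v in x1]                 # a_i, correlated with x_it
y1 = [beta0 + beta1 * xv + av for xv, av in zip(x1, a)]
y2 = [beta0 + delta0 + beta1 * xv + av for xv, av in zip(x2, a)]

# Pooled OLS of y on d2 and x: demeaning within each period partials out the
# intercept and d2 (Frisch-Waugh), leaving a regression through the origin.
xt = demean(x1) + demean(x2)
yt = demean(y1) + demean(y2)
pols_slope = sum(p * q for p, q in zip(xt, yt)) / sum(p * p for p in xt)

# First differences: a_i cancels, so OLS of dy on dx recovers delta0 and beta1.
dx = [q - p for p, q in zip(x1, x2)]
dy = [q - p for p, q in zip(y1, y2)]
fd_intercept, fd_slope = simple_ols(dx, dy)

print(f"POLS slope {pols_slope:.3f}, FD slope {fd_slope:.3f}, FD intercept {fd_intercept:.3f}")
```

Because ai rises with xi1 here, pooled OLS attributes part of ai's effect to x and overstates β1. Differencing removes ai before the regression is run.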


Example: Training grant, set-up

We evaluate the effect of a job training grant on worker productivity using jtrain.dta.

Let scrapit denote the scrap rate of firm i in year t: the number of defective items per 100 that must be scrapped.

Let grantit be a dummy variable equal to 1 if firm i in year t received a job training grant.

Let y88t be a dummy variable for 1988.

For this exercise, we focus on the years 1987 and 1988.

The model is

scrapit = β0 + δ0 y88t + β1 grantit + ai + uit,    t = 1, 2.


Example: Training grant, data

. use jtrain

. des fcode year scrap grant

storage display value

variable name type format label variable label

----------------------------------------------------------------------------------------------

fcode float %9.0g firm code number

year int %9.0g 1987, 1988, or 1989

scrap float %9.0g scrap rate (per 100 items)

grant byte %9.0g = 1 if received grant

. keep if year <= 1988

(157 observations deleted)

. tab year if scrap != .

1987, 1988, |

or 1989 | Freq. Percent Cum.

------------+-----------------------------------

1987 | 54 50.00 50.00

1988 | 54 50.00 100.00

------------+-----------------------------------

Total | 108 100.00


Example: Training grant, firm fixed effects

What are some possible unobserved firm effects (ai)?

Average employee ability, capital, managerial skill (which can be considered constant over a two-year period).

These may be related to whether a firm receives a grant, meaning

Cov(ai, grantit) ≠ 0.

So we need to difference ai away to estimate the effect of the grant.


Example: Training grant, FD estimator

After first differencing, we have

∆scrapi = δ0 + β1 ∆granti + ∆ui.

. reg D.scrap cgrant if inlist(year, 1987, 1988)

Source | SS df MS Number of obs = 54

-------------+---------------------------------- F(1, 52) = 1.17

Model | 6.73345587 1 6.73345587 Prob > F = 0.2837

Residual | 298.400031 52 5.73846213 R-squared = 0.0221

-------------+---------------------------------- Adj R-squared = 0.0033

Total | 305.133487 53 5.7572356 Root MSE = 2.3955

------------------------------------------------------------------------------

D.scrap | Coefficient Std. err. t P>|t| [95% conf. interval]

-------------+----------------------------------------------------------------

cgrant | -.7394436 .6826276 -1.08 0.284 -2.109236 .6303488

_cons | -.5637143 .4049149 -1.39 0.170 -1.376235 .2488069

------------------------------------------------------------------------------

The estimate implies that receiving a job training grant reduced the scrap rate by about 0.739 (defective items per 100) on average, though the estimate is not statistically significant.


Example: Training grant, FD with log

We get stronger results by differencing log(scrap):

∆log(scrapi) = δ0 + β1 ∆granti + ∆ui.

. reg D.lscrap cgrant if inlist(year, 1987, 1988)

Source | SS df MS Number of obs = 54

-------------+---------------------------------- F(1, 52) = 3.74

Model | 1.23795567 1 1.23795567 Prob > F = 0.0585

Residual | 17.1971851 52 .330715099 R-squared = 0.0672

-------------+---------------------------------- Adj R-squared = 0.0492

Total | 18.4351408 53 .347832845 Root MSE = .57508

------------------------------------------------------------------------------

D.lscrap | Coefficient Std. err. t P>|t| [95% conf. interval]

-------------+----------------------------------------------------------------

cgrant | -.3170579 .1638751 -1.93 0.058 -.6458974 .0117816

_cons | -.0574357 .097206 -0.59 0.557 -.2524938 .1376224

------------------------------------------------------------------------------

The t statistic for ∆grant is only marginally significant (and even less significant if we specify robust standard errors).

We conclude that there is likely no statistically significant relationship between the scrap rate and the job training grant.


More than two periods of data

First differencing can be used with more than two periods of panel data, but we must be careful to account for serial correlation (and, as usual, possibly heteroskedasticity) in the FD equation.

This is because, with more than two periods, each unit contributes several differenced observations, so the FD equation is no longer just a single cross section.

Generally, we should also include a full set of time dummies for a convincing analysis.


The model

With T time periods, where now T ≥ 2, we can write

yit = δ1 + δ2 d2t + ... + δT dTt + β1 xit1 + β2 xit2 + ... + βk xitk + ai + uit

where δ1 now denotes the intercept in the first year and δt, t ≥ 2, is the difference between the intercept in period t and that in period 1.

The model still contains an unobserved effect, ai, and an idiosyncratic error, uit.


The model after differencing

Unless we are interested in the original $\delta_t$, it is easier to include an overall intercept and not difference the time dummies. We lose a time dummy because we lose the first time period:

$$\Delta y_{it} = \alpha_0 + \alpha_3 d3_t + \dots + \alpha_T dT_t + \beta_1 \Delta x_{it1} + \beta_2 \Delta x_{it2} + \dots + \beta_k \Delta x_{itk} + \Delta u_{it}.$$

Now we just use POLS on the changes for $t = 2, \dots, T$, and we can use the usual and adjusted R-squareds as goodness-of-fit measures in the FD equation.

Except for the way we allow for different time intercepts, this is the same model as before, and the estimates of the $\beta_j$ are identical.
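The claim that the $\beta_j$ estimates do not depend on how the time intercepts enter can be checked numerically. The pure-Python sketch below uses simulated, hypothetical data (8 units, 4 periods, one regressor x) and runs the FD regression two ways: (A) an overall intercept plus undifferenced dummies d3, ..., dT, and (B) differenced dummies D.d2, ..., D.dT with no intercept. The helper `ols` is our own small normal-equations solver, not a library routine.

```python
import random


def ols(X, y):
    """Least squares via the normal equations X'X b = X'y,
    solved by Gauss-Jordan elimination with partial pivoting."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                f = A[r][col] / A[col][col]
                A[r] = [a - f * c for a, c in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


rng = random.Random(42)
N, T = 8, 4
# Hypothetical levels data: x[i][p-1] and y[i][p-1] hold period p = 1..T.
x = [[rng.gauss(0, 1) for _ in range(T)] for _ in range(N)]
y = [[rng.gauss(0, 1) + 0.5 * x[i][t] for t in range(T)] for i in range(N)]

rows_a, rows_b, dy = [], [], []
for i in range(N):
    for p in range(2, T + 1):  # periods 2..T survive differencing
        dx = x[i][p - 1] - x[i][p - 2]
        dy.append(y[i][p - 1] - y[i][p - 2])
        # (A) overall intercept plus undifferenced dummies d3..dT
        rows_a.append([1.0] + [float(p == q) for q in range(3, T + 1)] + [dx])
        # (B) differenced dummies D.d2..D.dT and no intercept
        rows_b.append([float(p == q) - float(p - 1 == q) for q in range(2, T + 1)] + [dx])

beta_a = ols(rows_a, dy)[-1]  # coefficient on the differenced x
beta_b = ols(rows_b, dy)[-1]
print(beta_a, beta_b)
```

Both sets of time columns span every function of the period, so the fitted values, and hence the slope on $\Delta x$, coincide; only the time-effect coefficients change meaning.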


Cluster-robust standard errors

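The “cluster-robust” standard errors and test statistics are robust to heteroskedasticity of any kind and to arbitrary serial correlation within each unit (cluster), which is exactly what the FD equation with T ≥ 3 requires.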

xtset id year

gen cy = D.y

gen cx1 = D.x1

...

gen cxk = D.xk

reg cy d3 d4 ... dT cx1 cx2 ... cxk, cluster(id)
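To make the cluster(id) option less of a black box, here is a minimal pure-Python sketch of the cluster-robust (sandwich) variance for the slope in a regression of y on a constant and a single regressor x. It is not Stata's implementation. The helper name `cluster_se_slope` is hypothetical, and the finite-sample factor G/(G − 1) · (N − 1)/(N − K) is, to our understanding, the default adjustment Stata applies with clustered standard errors after regress.

```python
import math
from collections import defaultdict


def cluster_se_slope(x, y, cluster):
    """Slope and cluster-robust standard error in a regression of y on a
    constant and x, using the sandwich formula with the finite-sample
    factor G/(G-1) * (N-1)/(N-K), where K = 2 coefficients."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    xt = [xi - xbar for xi in x]          # demeaned x (partialling out the constant)
    sxx = sum(v * v for v in xt)
    b = sum(v * yi for v, yi in zip(xt, y)) / sxx
    a = ybar - b * xbar
    resid = [yi - a - b * xi for xi, yi in zip(x, y)]
    # Add up x~ * u-hat within each cluster, then square the cluster totals.
    # Cross-products within a cluster are kept, so any within-cluster
    # correlation (e.g. serial correlation over time for a unit) is allowed.
    score = defaultdict(float)
    for v, u, g in zip(xt, resid, cluster):
        score[g] += v * u
    meat = sum(s * s for s in score.values())
    G, K = len(score), 2
    adj = G / (G - 1) * (n - 1) / (n - K)
    return b, math.sqrt(adj * meat / sxx ** 2)


# Tiny made-up example: two clusters ("A", "B") of two observations each.
b, se = cluster_se_slope([0, 1, 2, 3], [0, 1, 1, 3], ["A", "A", "B", "B"])
print(b, se)
```

In this toy case the slope is 0.9, the cluster totals of x̃·û are −0.25 and 0.25, and the adjusted variance is 3 × 0.125 / 25 = 0.015.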


Some tricks

A simpler way is to apply the differencing operator, D., to the whole equation at once:

reg D.(y d2 d3 ... dT x1 x2 ... xk), cluster(id)

This gives different estimated time intercepts, because the time dummies are now differenced; the estimates of the βj are unchanged.

How many time dummies will be estimated? T − 2, because an intercept is included.

We can force the intercept to zero to get estimates on all T − 1 dummies:

reg D.(y d2 d3 ... dT x1 x2 ... xk), cluster(id) nocons
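The count of T − 2 follows from a short calculation. For $t = 2, \dots, T$, the differenced dummy $\Delta dq_t = dq_t - dq_{t-1}$ equals 1 when $t = q$, −1 when $t = q + 1$, and 0 otherwise. Weighting these by $q - 1$ reproduces a constant:

```latex
\sum_{q=2}^{T} (q-1)\,\Delta dq_t
  = \underbrace{(t-1)}_{q = t} \; - \; \underbrace{(t-2)}_{q = t-1}
  = 1, \qquad t = 2, \dots, T.
```

For $t = 2$ the $q = t - 1 = 1$ term is absent, but its weight would be 0 anyway, so the identity still holds. The intercept is therefore an exact linear combination of the T − 1 differenced dummies, and one of them must be dropped when the intercept is kept. With two dummies d88 and d89 (T = 3) the identity reads $1 = \Delta d88_t + 2\,\Delta d89_t$.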


Interpreting the differenced model

Reminder: when using FD methods, keep in mind that an equation such as

$$\Delta y_{it} = \alpha_0 + \alpha_3 d3_t + \dots + \alpha_T dT_t + \beta_1 \Delta x_{it1} + \beta_2 \Delta x_{it2} + \dots + \beta_k \Delta x_{itk} + \Delta u_{it}$$

is an estimating equation used to get rid of $a_i$.

The estimates should be interpreted in the context of the original “levels” equation

$$y_{it} = \delta_1 + \delta_2 d2_t + \dots + \delta_T dT_t + \beta_1 x_{it1} + \beta_2 x_{it2} + \dots + \beta_k x_{itk} + a_i + u_{it}.$$


Extensions

With the levels equation as the starting point, it is easy to choose explanatory variables for policy evaluation that allow, say, lagged effects.

We can also add quadratics, interactions, and so on. These are constructed in levels, before differencing is applied.
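The order matters because differencing does not commute with squaring or multiplying: $\Delta(x_{it}^2) = (x_{it} - x_{i,t-1})(x_{it} + x_{i,t-1})$, which is not $(\Delta x_{it})^2$. The pure-Python sketch below compares the two orders for a quadratic and an interaction, using made-up numbers for one unit (x and z are hypothetical variables):

```python
def first_diff(series):
    """Within-unit first differences: [z2 - z1, z3 - z2, ...]."""
    return [b - a for a, b in zip(series, series[1:])]


# Hypothetical levels data for one unit over three periods.
x = [1.0, 2.0, 4.0]
z = [3.0, 1.0, 2.0]

# Correct: build the quadratic and the interaction in levels, then difference.
d_x_sq = first_diff([v * v for v in x])            # Delta(x^2)
d_xz = first_diff([a * b for a, b in zip(x, z)])   # Delta(x*z)

# A different (wrong) regressor: transforming the differences instead.
dx_sq = [v * v for v in first_diff(x)]                          # (Delta x)^2
dx_dz = [a * b for a, b in zip(first_diff(x), first_diff(z))]   # Delta x * Delta z

print(d_x_sq, dx_sq)
print(d_xz, dx_dz)
```

Only the first pair of constructions corresponds to a levels equation that contains $x_{it}^2$ or $x_{it} z_{it}$.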


Example: Training grant, three years

We use jtrain.dta, this time for three years: 1987, 1988, and 1989.

No grants were awarded in 1987, and a firm that got a grant in 1988 could not get one in 1989.

Job training in a previous year could have an effect on this year's productivity. Hence, omitting the previous year's grant indicator could be a serious misspecification.


Example: Training grant, lagged effect

Remember that the response is lscrap = log(scrap), where scrap is the number of items out of 100 that must be discarded.

If the training grant improved productivity, lscrap should fall, on average.

Since we know that grant was zero prior to 1987, we have three years of data with which to add a lagged effect:

$$\text{lscrap}_{it} = \alpha_0 + \alpha_1 d88_t + \alpha_2 d89_t + \beta_1 \text{grant}_{it} + \beta_2 \text{grant}_{i,t-1} + a_i + u_{it}, \quad t = 1, 2, 3.$$

We can estimate this equation by pooled OLS if the grant designation is uncorrelated with $a_i$ (and $u_{it}$).
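For concreteness, here is a pure-Python sketch of how grant_1 and the differenced variables look under the rules stated for this example (no grants in 1987, no 1989 grant after a 1988 grant, grant zero before 1987). The three firm histories and names are hypothetical, not rows from jtrain.dta:

```python
# Hypothetical grant histories for 1987, 1988, 1989 that obey the stated rules.
grants = {
    "firm_a": [0, 1, 0],   # grant in 1988
    "firm_b": [0, 0, 1],   # grant in 1989
    "firm_c": [0, 0, 0],   # never receives a grant
}
years = [1987, 1988, 1989]


def lag_with_presample_zero(series):
    """grant_{t-1}; the 1987 value is 0 because grant was zero before 1987."""
    return [0] + series[:-1]


def first_diff(series):
    """Within-firm first differences (the first year is lost)."""
    return [b - a for a, b in zip(series, series[1:])]


rows = {}
for firm, g in grants.items():
    g1 = lag_with_presample_zero(g)
    # (cgrant, cgrant_1) exist only for 1988 and 1989
    for year, cg, cg1 in zip(years[1:], first_diff(g), first_diff(g1)):
        rows[(firm, year)] = (cg, cg1)

for key, val in sorted(rows.items()):
    print(key, val)
```

A 1988 recipient such as firm_a has cgrant = −1 and cgrant_1 = 1 in 1989, so the lagged variable carries information of its own in the FD regression.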


Example: Training grant, Pooled OLS

. * Pooled OLS with robust standard errors:

. reg lscrap grant grant_1 d88 d89, cluster(fcode)

Linear regression Number of obs = 162

F( 4, 53) = 3.90

Prob > F = 0.0075

R-squared = 0.0173

Root MSE = 1.4922

(Std. Err. adjusted for 54 clusters in fcode)

------------------------------------------------------------------------------

Robust

lscrap | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

grant | .2000197 .3226246 0.62 0.538 -.4470833 .8471227

grant_1 | .0489357 .4720909 0.10 0.918 -.8979588 .9958302

d88 | -.2393704 .1258802 -1.90 0.063 -.4918541 .0131133

d89 | -.4965236 .2331842 -2.13 0.038 -.9642318 -.0288154

_cons | .5974341 .2197527 2.72 0.009 .156666 1.038202

------------------------------------------------------------------------------

Pooled OLS actually shows a positive (but insignificant) grant effect.


Example: Training grant, estimation

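The differenced equation (where the prefix “c” stands for “change”; we avoid “d”, which could be confused with the “d” of a dummy variable) is

$$\text{clscrap}_{it} = \beta_1 \text{cgrant}_{it} + \beta_2 \text{cgrant}_{i,t-1} + \alpha_1 \text{cd88}_t + \alpha_2 \text{cd89}_t + \text{cu}_{it}, \quad t = 2, 3.$$

We estimate it by pooled OLS using the two differenced years. The firm effect $a_i$ has been removed.

Instead of cd88 and cd89, we can simply include a constant and a dummy for 1989.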


Example: Training grant, FD estimates

. reg clscrap cgrant cgrant_1 d89, cluster(fcode)

Linear regression Number of obs = 108

F( 3, 53) = 1.98

Prob > F = 0.1284

R-squared = 0.0365

Root MSE = .57672

(Std. Err. adjusted for 54 clusters in fcode)

------------------------------------------------------------------------------

Robust

clscrap | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

cgrant | -.222781 .1316461 -1.69 0.096 -.4868297 .0412676

cgrant_1 | -.3512459 .2709732 -1.30 0.201 -.8947493 .1922575

d89 | -.0962081 .1136492 -0.85 0.401 -.3241596 .1317434

_cons | -.0906072 .0901821 -1.00 0.320 -.2714895 .0902751

------------------------------------------------------------------------------

Differencing gives much stronger results: both grant coefficients are now negative and sizeable, but their statistical significance is only marginal (small sample size).


Example: Training grant, FD estimates

If we use the D. operator, we get estimated time effects directly:

. reg D.(lscrap grant grant_1 d88 d89), cluster(fcode)

note: _delete omitted because of collinearity

Linear regression Number of obs = 108

F( 3, 53) = 1.98

Prob > F = 0.1284

R-squared = 0.0365

Root MSE = .57672

(Std. Err. adjusted for 54 clusters in fcode)

------------------------------------------------------------------------------

| Robust

D.lscrap | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

grant |

D1. | -.222781 .1316461 -1.69 0.096 -.4868297 .0412676

|

grant_1 |

D1. | -.3512459 .2709732 -1.30 0.201 -.8947493 .1922575

|

d88 |

D1. | .0481041 .0568246 0.85 0.401 -.0658717 .1620798

|

d89 |

D1. | 0 (omitted)

|

_cons | -.1387113 .0953842 -1.45 0.152 -.3300278 .0526053

------------------------------------------------------------------------------

We can specify nocons to drop the intercept and get an estimate for d89.
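The two FD regressions fit the same time effects, only parametrized differently. The plain-Python arithmetic below uses nothing but the coefficients printed in the two outputs above. It recovers the time effect in each differenced year and the implied level shifts α1 (1988 vs 1987) and α2 (1989 vs 1987) that a nocons regression on D.d88 and D.d89 should report on the same sample. These are values we derived by hand, not Stata output.

```python
# Coefficients copied from the two FD regressions above.
# (1) reg clscrap cgrant cgrant_1 d89       -> intercept + 1989 dummy
cons_1, d89_1 = -0.0906072, -0.0962081
# (2) reg D.(lscrap grant grant_1 d88 d89)  -> intercept + D.d88 (D.d89 omitted)
cons_2, dd88_2 = -0.1387113, 0.0481041

# Time effect in the differenced equation, by differenced year.
eff88_1, eff89_1 = cons_1, cons_1 + d89_1
# D.d88 equals 1 in 1988 and -1 in 1989.
eff88_2, eff89_2 = cons_2 + dd88_2 * 1, cons_2 + dd88_2 * (-1)

# Level intercept shifts relative to 1987: alpha1 is the 1988 effect,
# alpha2 adds the 1989 change on top of it.
alpha1_1, alpha2_1 = eff88_1, eff88_1 + eff89_1
alpha1_2, alpha2_2 = eff88_2, eff88_2 + eff89_2

print(round(alpha1_1, 4), round(alpha2_1, 4))
print(round(alpha1_2, 4), round(alpha2_2, 4))
```

Both parametrizations imply the same shifts, about −0.091 for 1988 and −0.277 for 1989, which is why the grant coefficients are identical across the two outputs.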


General policy analysis with panel data

The two-period, before-after setting is a special case of a more general policy analysis framework with T ≥ 2:

$$y_{it} = \eta_1 + \alpha_2 d2_t + \dots + \alpha_T dT_t + \beta w_{it} + \mathbf{x}_{it}\boldsymbol{\psi} + a_i + u_{it}, \quad t = 1, \dots, T.$$

→ $w_{it}$ is the binary intervention indicator, and $\beta$ is the average treatment effect of the policy.

To allow $w_{it}$ to be systematically related to the unobserved fixed effect $a_i$, we estimate the equation by either First Differencing or Fixed Effects (after the flexible learning week), using cluster-robust standard errors.

We can also include lags of the policy intervention, $w_{i,t-1}, w_{i,t-2}, \dots$.
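A small simulation illustrates why differencing matters when $w_{it}$ is related to $a_i$. Everything here is hypothetical: 2,000 units, 3 periods, a true effect β = −0.3, no time effects or controls, and a selection rule under which units with high $a_i$ are more likely to be treated from period 2 on. The sketch compares the pooled OLS slope of y on w with the FD slope of Δy on Δw (both with an intercept). It only examines point estimates, not standard errors.

```python
import random


def slope(x, y):
    """OLS slope of y on a constant and x."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    sxy = sum((a - xbar) * (b - ybar) for a, b in zip(x, y))
    sxx = sum((a - xbar) ** 2 for a in x)
    return sxy / sxx


rng = random.Random(2024)
N, T, BETA = 2000, 3, -0.3  # hypothetical sample size, periods, true effect
pooled_w, pooled_y, fd_w, fd_y = [], [], [], []
for i in range(N):
    a_i = rng.gauss(0, 1)  # unobserved effect
    # Selection on a_i: high-a_i units are treated with probability 0.8, others 0.2.
    treated = rng.random() < (0.8 if a_i > 0 else 0.2)
    w = [0] + [int(treated)] * (T - 1)  # policy switches on in period 2
    y = [a_i + BETA * w_t + rng.gauss(0, 1) for w_t in w]
    pooled_w += w
    pooled_y += y
    for t in range(1, T):  # first differences within the unit
        fd_w.append(w[t] - w[t - 1])
        fd_y.append(y[t] - y[t - 1])

b_pooled = slope(pooled_w, pooled_y)  # contaminated by a_i
b_fd = slope(fd_w, fd_y)              # a_i differenced away
print(b_pooled, b_fd)
```

Under this selection rule pooled OLS even gets the sign wrong, while the FD slope lands close to −0.3.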

Applications of Econometrics Ch. 13 PCS and PD Semester 2, 2023/24 65 / 65

You might also like

- Lect - 10 - Difference-in-Differences Estimation PDFDocument19 pagesLect - 10 - Difference-in-Differences Estimation PDFأبوسوار هندسةNo ratings yet

- Wooldridge Slides 10 Diff in DiffsDocument31 pagesWooldridge Slides 10 Diff in DiffsAsrafuzzaman RobinNo ratings yet

- Chapter 2.Document24 pagesChapter 2.Roha CbcNo ratings yet

- 04 v4n2 00057Document13 pages04 v4n2 00057Mauricio Moyano CastilloNo ratings yet

- Lect 10 Diffindiffs 230305 014504Document20 pagesLect 10 Diffindiffs 230305 014504Silvio RosaNo ratings yet

- Pooled Cross Sections and Panel Data, Difference in DifferenceDocument35 pagesPooled Cross Sections and Panel Data, Difference in DifferenceDian HendrawanNo ratings yet

- Orton - Quantitative RadiobiologyDocument32 pagesOrton - Quantitative RadiobiologydptofisicaflorestaNo ratings yet

- Non-Traditional MSA With Continuous Data: - Binary: Only One of Two Answers Can Be Provided. For Many MSA StudiesDocument6 pagesNon-Traditional MSA With Continuous Data: - Binary: Only One of Two Answers Can Be Provided. For Many MSA Studiesapi-3744914No ratings yet

- A Representation and Economic Interpretation of A Two-Level Programming ProblemDocument11 pagesA Representation and Economic Interpretation of A Two-Level Programming Problems8nd11d UNINo ratings yet

- Tran Thanh Thao 31221021683Document13 pagesTran Thanh Thao 31221021683Thảo Trần ThanhNo ratings yet

- Solutions 5Document6 pagesSolutions 5xinyichen121No ratings yet

- Section 2 PDFDocument4 pagesSection 2 PDFGaoudam NatarajanNo ratings yet

- Difference in DifferencesDocument7 pagesDifference in DifferencesJean Eudes DEKPEMADOHANo ratings yet

- Intensity-Modulated Radiotherapy Optimization With gEUD-guided Dose-Volume ObjectivesDocument14 pagesIntensity-Modulated Radiotherapy Optimization With gEUD-guided Dose-Volume ObjectivessamuelfsjNo ratings yet

- Callaway & SantAnnaDocument31 pagesCallaway & SantAnnaVictor Maury SallucaNo ratings yet

- Measures of Dispersion (Variation)Document5 pagesMeasures of Dispersion (Variation)Bethelhem AshenafiNo ratings yet

- Regression Discntinue Paper PDFDocument21 pagesRegression Discntinue Paper PDFyanfikNo ratings yet

- Session 3Document28 pagesSession 3chang sunNo ratings yet

- Chapter-4 - Measures of DisperstionDocument21 pagesChapter-4 - Measures of DisperstionM BNo ratings yet

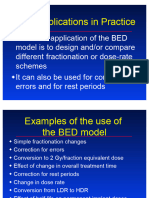

- BED Applications in Practice OrtonDocument44 pagesBED Applications in Practice OrtondptofisicaflorestaNo ratings yet

- Did (Y Y ) (Y Y ) : S Treatment, T After S Treatment, T Before S Control, T After S Control, T BeforeDocument2 pagesDid (Y Y ) (Y Y ) : S Treatment, T After S Treatment, T Before S Control, T After S Control, T BeforeAmna Noor FatimaNo ratings yet

- DID PrincetonDocument38 pagesDID PrincetoningNo ratings yet

- Introduction To The Difference-In-Differences Regression Model (2021)Document2 pagesIntroduction To The Difference-In-Differences Regression Model (2021)Bảo Ngọc TrầnNo ratings yet

- AIBSlide 15Document37 pagesAIBSlide 15Dimple RoheraNo ratings yet

- Pitfalls of DEADocument15 pagesPitfalls of DEAhind shririNo ratings yet

- Lee Wooldridge 20230720Document45 pagesLee Wooldridge 20230720basirunjie86No ratings yet

- Week 1Document75 pagesWeek 1xinyichen121No ratings yet

- Example Did Berkeley 1.11Document4 pagesExample Did Berkeley 1.11panosx87No ratings yet

- BA 1 Central Tendency DispersionDocument72 pagesBA 1 Central Tendency DispersionPrarthi DesaiNo ratings yet

- BFA MBA06201 AshutoshBhandekarDocument6 pagesBFA MBA06201 AshutoshBhandekarAshutosh BhandekarNo ratings yet

- Chapter 2 Simple Linear Regression - Jan2023Document66 pagesChapter 2 Simple Linear Regression - Jan2023Lachyn SeidovaNo ratings yet

- This Study Resource WasDocument7 pagesThis Study Resource WasAryaman JunejaNo ratings yet

- CT3 CMP Upgrade 11Document18 pagesCT3 CMP Upgrade 11Zaheer AhmadNo ratings yet

- SSau ExercisesDocument6 pagesSSau Exercisessweetie05No ratings yet

- 9 1507708731 - 11-10-2017 PDFDocument5 pages9 1507708731 - 11-10-2017 PDFRahul SharmaNo ratings yet

- Statistical Analysis of Method Comparison Studies: MorningDocument34 pagesStatistical Analysis of Method Comparison Studies: MorningAntonSurviyantoNo ratings yet

- Local Search Algorithm Direct Aperture OptimizationDocument6 pagesLocal Search Algorithm Direct Aperture OptimizationMauricio Moyano CastilloNo ratings yet

- Utad 016Document36 pagesUtad 016cao xiuzhenNo ratings yet

- Ch26 ExercisesDocument14 pagesCh26 Exercisesamisha2562585No ratings yet

- Diff PDFDocument4 pagesDiff PDFpcg20013793No ratings yet

- Faculty of Social Studies and HumantiesDocument16 pagesFaculty of Social Studies and HumantiesJeevesh AugunNo ratings yet

- DummyDocument20 pagesDummymushtaque61No ratings yet

- Multiple Linear Regression: y BX BX BXDocument14 pagesMultiple Linear Regression: y BX BX BXPalaniappan SellappanNo ratings yet

- Mitesh Kumar JenaDocument12 pagesMitesh Kumar JenaJayanti SahooNo ratings yet

- SET-1 Q1. Describe in Details The Different Scopes of Application of Operations Research. Ans.: Scope of Operations Research (OR)Document20 pagesSET-1 Q1. Describe in Details The Different Scopes of Application of Operations Research. Ans.: Scope of Operations Research (OR)shri_k76No ratings yet

- Mra HW2Document19 pagesMra HW2Melvin EstolanoNo ratings yet

- 4.0 Measures of Skewness - Dispersion - VariabilityDocument8 pages4.0 Measures of Skewness - Dispersion - VariabilityBridget MatutuNo ratings yet

- Assignment 1 - Time Dose Fractionation TDF ConceptsDocument3 pagesAssignment 1 - Time Dose Fractionation TDF Conceptsapi-299138743No ratings yet

- Multiple Regression Analysis: EstimationDocument50 pagesMultiple Regression Analysis: Estimationsanbin007No ratings yet

- Supply Chain Management: Forcasting Techniques and Value of InformationDocument46 pagesSupply Chain Management: Forcasting Techniques and Value of InformationSayan MitraNo ratings yet

- Econometrics 3A Supplementary Examination MemoDocument9 pagesEconometrics 3A Supplementary Examination MemomunaNo ratings yet

- Lecture 3 Differences in DifferencesDocument47 pagesLecture 3 Differences in DifferencesAlessandro Economia Uefs100% (1)

- TD-102. HAEFELY Technical DocumentDocument6 pagesTD-102. HAEFELY Technical DocumentTXEMANo ratings yet

- Solving DSGE Models Using DynareDocument11 pagesSolving DSGE Models Using DynarerudiminNo ratings yet

- Solving DSGE Models Using DynareDocument11 pagesSolving DSGE Models Using DynarerudiminNo ratings yet

- Medirad 1Document11 pagesMedirad 1Teresa PerezNo ratings yet

- Modeling Ordered Categorical Data: James J. DignamDocument27 pagesModeling Ordered Categorical Data: James J. DignamcdcdiverNo ratings yet

- Homework 3Document3 pagesHomework 3Cloud worldNo ratings yet

- Integer Optimization and its Computation in Emergency ManagementFrom EverandInteger Optimization and its Computation in Emergency ManagementNo ratings yet

- Neuroscientific based therapy of dysfunctional cognitive overgeneralizations caused by stimulus overload with an "emotionSync" methodFrom EverandNeuroscientific based therapy of dysfunctional cognitive overgeneralizations caused by stimulus overload with an "emotionSync" methodNo ratings yet

- Entropy (Information Theory)Document17 pagesEntropy (Information Theory)joseph676No ratings yet

- Effect of Social Economic Factors On Profitability of Soya Bean in RwandaDocument7 pagesEffect of Social Economic Factors On Profitability of Soya Bean in RwandaMarjery Fiona ReyesNo ratings yet

- FYP List 2020 21RDocument3 pagesFYP List 2020 21RSaif UllahNo ratings yet

- Grade 7 - R & C - Where Tigers Swim - JanDocument15 pagesGrade 7 - R & C - Where Tigers Swim - JanKritti Vivek100% (3)

- 1609 Um009 - en PDocument34 pages1609 Um009 - en PAnonymous VKBlWeyNo ratings yet

- D1 001 Prof Rudi STAR - DM in Indonesia - From Theory To The Real WorldDocument37 pagesD1 001 Prof Rudi STAR - DM in Indonesia - From Theory To The Real WorldNovietha Lia FarizymelinNo ratings yet

- ABM 221-Examples (ALL UNITS)Document10 pagesABM 221-Examples (ALL UNITS)Bonface NsapatoNo ratings yet

- CE 2812-Permeability Test PDFDocument3 pagesCE 2812-Permeability Test PDFShiham BadhurNo ratings yet

- Russian Sec 2023-24Document2 pagesRussian Sec 2023-24Shivank PandeyNo ratings yet

- Sculpture and ArchitectureDocument9 pagesSculpture and ArchitectureIngrid Dianne Luga BernilNo ratings yet

- Blaine Ray HandoutDocument24 pagesBlaine Ray Handoutaquilesanchez100% (1)

- Helena HelsenDocument2 pagesHelena HelsenragastrmaNo ratings yet

- CERADocument10 pagesCERAKeren Margarette AlcantaraNo ratings yet

- Under The SHODH Program For ResearchDocument3 pagesUnder The SHODH Program For ResearchSurya ShuklaNo ratings yet

- Pyrethroids April 11Document15 pagesPyrethroids April 11MadhumithaNo ratings yet

- Lac MapehDocument4 pagesLac MapehChristina Yssabelle100% (1)

- Working With Hierarchies External V08Document9 pagesWorking With Hierarchies External V08Devesh ChangoiwalaNo ratings yet

- High Performance ComputingDocument294 pagesHigh Performance Computingsorinbazavan100% (1)

- Australia Harvesting Rainwater For Environment, Conservation & Education: Some Australian Case Studies - University of TechnologyDocument8 pagesAustralia Harvesting Rainwater For Environment, Conservation & Education: Some Australian Case Studies - University of TechnologyFree Rain Garden ManualsNo ratings yet

- Cottrell Park Golf Club 710Document11 pagesCottrell Park Golf Club 710Mulligan PlusNo ratings yet

- Folktales Stories For Kids: Two Brothers StoryDocument1 pageFolktales Stories For Kids: Two Brothers StoryljNo ratings yet

- Can J Chem Eng - 2022 - Mocellin - Experimental Methods in Chemical Engineering Hazard and Operability Analysis HAZOPDocument20 pagesCan J Chem Eng - 2022 - Mocellin - Experimental Methods in Chemical Engineering Hazard and Operability Analysis HAZOPbademmaliNo ratings yet

- Commissioning 1. Commissioning: ES200 EasyDocument4 pagesCommissioning 1. Commissioning: ES200 EasyMamdoh EshahatNo ratings yet

- Arithmetic Mean PDFDocument29 pagesArithmetic Mean PDFDivya Gothi100% (1)

- NewspaperDocument2 pagesNewspaperbro nabsNo ratings yet

- B - ELSB - Cat - 2020 PDFDocument850 pagesB - ELSB - Cat - 2020 PDFanupamNo ratings yet

- PGT Computer Science Kendriya Vidyalaya Entrance Exam Question PapersDocument117 pagesPGT Computer Science Kendriya Vidyalaya Entrance Exam Question PapersimshwezNo ratings yet

- Sector San Juan Guidance For RepoweringDocument12 pagesSector San Juan Guidance For RepoweringTroy IveyNo ratings yet

- Motivational QuotesDocument39 pagesMotivational QuotesNarayanan SubramanianNo ratings yet

- Sap Business Objects Edge Series 3.1 Install Windows enDocument104 pagesSap Business Objects Edge Series 3.1 Install Windows enGerardoNo ratings yet