7 - Text Classification Naive Bayes

Text classification: example

Dear Prof. [ZZ]:

My name is [XX]. I am an ambitious applicant for the Ph.D program of Electrical Engineering and Computer Science

at your university. Especially being greatly attracted by your research projects and admiring for your achievements

via the school website, I cannot wait to write a letter to express my aspiration to undertake the Ph.D program under

your supervision.

I have completed the M.S. program in Information and Communication Engineering with a high GPA of 3.95/4.0 at

[YY] University. In addition to throwing myself into the specialized courses in [...] I took part in the research projects,

such as [...]. I really enjoyed taking the challenges in the process of the researches and tests, and I spent two years

on the research project [...]. We proved the effectiveness of the new method for [...] and published the result in [...].

Having read your biography, I found my academic background and research experiences indicated some possibility of

my qualification to join your team. It is my conviction that the enlightening instruction, cutting-edge research projects

and state of-the-art facilities offered by your team will direct me to make breakthroughs in my career development in

the arena of electrical engineering and computer science. Thus, I shall be deeply grateful if you could give me the

opportunity to become your student. Please do not hesitate to contact me, should you need any further information

about my scholastic and research experiences.

Yours sincerely, [XX].

Alex Lascarides FNLP Lecture 7 1

Text classification

We might want to categorize the content of the text:

• Spam detection (binary classification: spam/not spam)

• Sentiment analysis (binary or multiway)

– movie, restaurant, product reviews (pos/neg, or 1-5 stars)

– political argument (pro/con, or pro/con/neutral)

• Topic classification (multiway: sport/finance/travel/etc)

Or we might want to categorize the author of the text (authorship attribution):

• Native language identification (e.g., to tailor language tutoring)

• Diagnosis of disease (psychiatric or cognitive impairments)

• Identification of gender, dialect, educational background, political orientation

(e.g., in forensics [legal matters], advertising/marketing, campaigning,

disinformation).

N-gram models for classification?

N-gram models can sometimes be used for classification (e.g. identifying

specific authors). But

• For many tasks, sequential relationships between words are largely irrelevant:

we can just consider the document as a bag of words.

• On the other hand, we may want to include other kinds of features (e.g., part

of speech tags) that N-gram models don’t include.

Here we consider two alternative models for classification:

• Naive Bayes (this should be review)

• Maximum Entropy (aka multinomial logistic regression).

Bag of words

Figure from J&M 3rd ed. draft, sec 7.1

Naive Bayes: high-level formulation

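• Given document d and set of categories C (say, spam/not-spam), we want to assign d to the most probable category ĉ.

$$
\begin{aligned}
\hat{c} &= \operatorname*{argmax}_{c \in C} P(c \mid d) \\
        &= \operatorname*{argmax}_{c \in C} \frac{P(d \mid c)\,P(c)}{P(d)} \\
        &= \operatorname*{argmax}_{c \in C} P(d \mid c)\,P(c)
\end{aligned}
$$

• Just as in spelling correction, we need to define P (d|c) and P (c).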

How to model P (d|c)?

• First, define a set of features that might help classify docs.

– Here we’ll assume these are all the words in the vocabulary.

– But, we could just use some words (more on this later...).

– Or, use other info, like parts of speech, if available.

• We then represent each document d as the set of features (words) it contains: $f_1, f_2, \ldots, f_n$. So

$$P(d \mid c) = P(f_1, f_2, \ldots, f_n \mid c)$$

Naive Bayes assumption

• As in LMs, we can’t accurately estimate P (f1, f2, . . . fn|c) due to sparse data.

• So, make a naive Bayes assumption: features are conditionally independent given the class.

$$P(f_1, f_2, \ldots, f_n \mid c) \approx P(f_1 \mid c)\,P(f_2 \mid c) \cdots P(f_n \mid c)$$

• That is, the prob. of a word occurring depends only on the class.

– Not on which words occurred before or after (as in N-grams)

– Or even which other words occurred at all

• Effectively, we only care about the count of each feature in each document.

• For example, in spam detection:

        the  your  model  cash  Viagra  class  account  orderz
doc 1    12     3      1     0       0      2        0       0
doc 2    10     4      0     4       0      0        2       0
doc 3    25     4      0     0       0      1        1       0
doc 4    14     2      0     1       3      0        1       1
doc 5    17     5      0     2       0      0        1       1
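A minimal sketch of how one row of a count table like this could be produced from raw text. The whitespace tokenizer, the lowercasing, and the four-word `vocabulary` are simplifying assumptions for illustration, not part of the lecture.

```python
from collections import Counter

def bag_of_words(text, vocabulary):
    """Return the count of each vocabulary word in text, ignoring word order."""
    counts = Counter(text.lower().split())
    # Counter returns 0 for words that never occurred.
    return [counts[w] for w in vocabulary]

vocabulary = ["the", "your", "model", "cash"]   # hypothetical mini-vocabulary
row = bag_of_words("Send your cash so the model gets your cash", vocabulary)
print(row)
```

Shuffling the words of the input text leaves `row` unchanged, which is exactly the information the bag-of-words view throws away.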

Naive Bayes classifier

Putting together the pieces, our complete classifier definition:

• Given a document with features $f_1, f_2, \ldots, f_n$ and set of categories C, choose the class ĉ where

$$\hat{c} = \operatorname*{argmax}_{c \in C} P(c) \prod_{i=1}^{n} P(f_i \mid c)$$

– P (c) is the prior probability of class c before observing any data.

– P (fi|c) is the probability of seeing feature fi in class c.

Estimating the class priors

• P (c) normally estimated with MLE:

$$\hat{P}(c) = \frac{N_c}{N}$$

– $N_c$ = the number of training documents in class c

– N = the total number of training documents

• So, P̂ (c) is simply the proportion of training documents belonging to class c.

Learning the class priors: example

• Given training documents with correct labels:

        the  your  model  cash  Viagra  class  account  orderz  spam?
doc 1    12     3      1     0       0      2        0       0    -
doc 2    10     4      0     4       0      0        2       0    +
doc 3    25     4      0     0       0      1        1       0    -
doc 4    14     2      0     1       3      0        1       1    +
doc 5    17     5      0     2       0      0        1       1    +

• P̂ (spam) = 3/5
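The prior estimate is just a relative frequency over the training labels. A short sketch, where the function name `class_priors` is an illustrative choice and the label list is the spam? column of the five training documents:

```python
from collections import Counter

def class_priors(labels):
    """MLE of P(c): the fraction of training documents carrying each label."""
    n = len(labels)
    return {c: k / n for c, k in Counter(labels).items()}

labels = ["-", "+", "-", "+", "+"]   # doc 1 .. doc 5
priors = class_priors(labels)
print(priors["+"], priors["-"])
```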

Learning the feature probabilities

• P (fi|c) normally estimated with simple smoothing:

$$\hat{P}(f_i \mid c) = \frac{\mathrm{count}(f_i, c) + \alpha}{\sum_{f \in F} \left( \mathrm{count}(f, c) + \alpha \right)}$$

– count(fi, c) = the number of times fi occurs in class c

– F = the set of possible features

– α: the smoothing parameter, optimized on held-out data

Learning the feature probabilities: example

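        the  your  model  cash  Viagra  class  account  orderz  spam?
doc 1    12     3      1     0       0      2        0       0    -
doc 2    10     4      0     4       0      0        2       0    +
doc 3    25     4      0     0       0      1        1       0    -
doc 4    14     2      0     1       3      0        1       1    +
doc 5    17     5      0     2       0      0        1       1    +

$$\hat{P}(\text{your} \mid +) = \frac{4 + 2 + 5 + \alpha}{(\text{all words in } + \text{ class}) + \alpha|F|} = \frac{11 + \alpha}{68 + \alpha|F|}$$

$$\hat{P}(\text{your} \mid -) = \frac{3 + 4 + \alpha}{(\text{all words in } - \text{ class}) + \alpha|F|} = \frac{7 + \alpha}{49 + \alpha|F|}$$

$$\hat{P}(\text{orderz} \mid +) = \frac{2 + \alpha}{(\text{all words in } + \text{ class}) + \alpha|F|} = \frac{2 + \alpha}{68 + \alpha|F|}$$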
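The same arithmetic can be checked mechanically. This is a sketch only: it assumes that F consists of just the eight words in the table (so |F| = 8), which a real vocabulary would exceed, and the value of α passed in the usage line is arbitrary.

```python
VOCAB = ["the", "your", "model", "cash", "Viagra", "class", "account", "orderz"]
DOCS = [  # (word counts in VOCAB order, label), copied from the table
    ([12, 3, 1, 0, 0, 2, 0, 0], "-"),
    ([10, 4, 0, 4, 0, 0, 2, 0], "+"),
    ([25, 4, 0, 0, 0, 1, 1, 0], "-"),
    ([14, 2, 0, 1, 3, 0, 1, 1], "+"),
    ([17, 5, 0, 2, 0, 0, 1, 1], "+"),
]

def class_counts(docs, label):
    """Sum the per-word counts over all documents with the given label."""
    totals = [0] * len(VOCAB)
    for counts, doc_label in docs:
        if doc_label == label:
            totals = [t + c for t, c in zip(totals, counts)]
    return totals

def smoothed_prob(word, label, alpha, docs=DOCS):
    """Add-alpha estimate of P(word | label), assuming F == VOCAB."""
    totals = class_counts(docs, label)
    return (totals[VOCAB.index(word)] + alpha) / (sum(totals) + alpha * len(VOCAB))

print(sum(class_counts(DOCS, "+")), sum(class_counts(DOCS, "-")))  # class totals
print(smoothed_prob("your", "+", alpha=1.0))
```

The two class totals reproduce the 68 and 49 in the denominators above, and for any α > 0 the estimates for one class sum to 1 over the assumed vocabulary.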

Classifying a test document: example

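• Test document d:

get your cash and your orderz

• Suppose all features not shown earlier have $\hat{P}(f_i \mid +) = \frac{\alpha}{68 + \alpha|F|}$

$$
\begin{aligned}
P(+ \mid d) &\propto P(+) \prod_{i=1}^{n} P(f_i \mid +) \\
&= P(+) \cdot \frac{\alpha}{68 + \alpha|F|} \cdot \frac{11 + \alpha}{68 + \alpha|F|} \cdot \frac{7 + \alpha}{68 + \alpha|F|} \\
&\qquad \cdot \frac{\alpha}{68 + \alpha|F|} \cdot \frac{11 + \alpha}{68 + \alpha|F|} \cdot \frac{2 + \alpha}{68 + \alpha|F|}
\end{aligned}
$$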

• Do the same for P (−|d)

• Choose the one with the larger value
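A sketch of the whole decision for the test document. The per-class counts are the column sums of the training table and the priors are 3/5 and 2/5 as estimated earlier; α = 1 and |F| = 10 (the eight table words plus "get" and "and") are assumptions made only so the example can run.

```python
# Per-class word counts, summed over the training documents of each class.
COUNTS = {
    "+": {"the": 41, "your": 11, "model": 0, "cash": 7,
          "Viagra": 3, "class": 0, "account": 4, "orderz": 2},
    "-": {"the": 37, "your": 7, "model": 1, "cash": 0,
          "Viagra": 0, "class": 3, "account": 1, "orderz": 0},
}
PRIORS = {"+": 3 / 5, "-": 2 / 5}

def score(words, label, alpha, vocab_size):
    """P(c) times the product of smoothed P(w|c); proportional to P(c|d)."""
    counts = COUNTS[label]
    denom = sum(counts.values()) + alpha * vocab_size
    result = PRIORS[label]
    for w in words:
        # Words never seen with this class contribute alpha / denom.
        result *= (counts.get(w, 0) + alpha) / denom
    return result

def classify(words, alpha=1.0, vocab_size=10):
    """Return the label with the larger unnormalized posterior."""
    return max(PRIORS, key=lambda c: score(words, c, alpha, vocab_size))

doc = "get your cash and your orderz".split()
print(classify(doc))
```

Under these assumed values the spam class scores higher, mainly because "cash" and "orderz" never occur in the non-spam training documents.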

Alternative feature values and feature sets

• Use only binary values for fi: did this word occur in d or not?

• Use only a subset of the vocabulary for F

– Ignore stopwords (function words and others with little content)

– Choose a small task-relevant set (e.g., using a sentiment lexicon)

• Use more complex features (bigrams, syntactic features, morphological

features, ...)
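Two of these alternatives are simple transformations of a count vector. A small sketch, in which the three-word `STOPWORDS` set is a toy stand-in for a real stopword list and the helper names are illustrative:

```python
STOPWORDS = {"the", "your", "and"}   # tiny illustrative list, not a real lexicon

def binarize(counts):
    """Replace each count with 1 if the word occurred at all, else 0."""
    return {w: int(n > 0) for w, n in counts.items()}

def drop_stopwords(counts, stopwords=STOPWORDS):
    """Restrict the feature set F by discarding stopword features."""
    return {w: n for w, n in counts.items() if w not in stopwords}

# Counts for doc 4 from the spam example.
doc4 = {"the": 14, "your": 2, "model": 0, "cash": 1,
        "Viagra": 3, "class": 0, "account": 1, "orderz": 1}

print(binarize(doc4))
print(drop_stopwords(doc4))
```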

Very small numbers...

Multiplying large numbers of small probabilities together is problematic in practice

• Even in our toy example, $P(\text{class} \mid -)\,P(\text{account} \mid -)\,P(\text{Viagra} \mid -)$ with α = 0.01 is $5 \times 10^{-5}$

• So it would only take two dozen similar words to get down to $10^{-44}$, which cannot be represented as a single-precision floating-point number

• Even double precision fails once we get to around 175 words, with total probability around $10^{-310}$

So most actual implementations of Naive Bayes use costs

• Costs are negative log probabilities

• So we can sum them, thereby avoiding underflow

• And look for the lowest cost overall
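The underflow is easy to reproduce. In this sketch the 200 word probabilities of 0.001 each are made-up values chosen only to push the product below the range of a Python float (an IEEE double):

```python
import math

probs = [1e-3] * 200          # hypothetical per-word probabilities

product = 1.0
for p in probs:
    product *= p              # true value is 1e-600, far below double range

# Costs: negative log probabilities are summed instead of multiplied.
cost = sum(-math.log(p) for p in probs)

print(product)                # the product has underflowed to zero
print(cost)                   # the summed cost is an ordinary finite number
```

Because the logarithm is monotonic, the class with the lowest summed cost is the class that would have had the highest product, had the product been representable.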

Costs and linearity

Using costs, our Naive Bayes equation looks like this

$$\hat{c} = \operatorname*{argmin}_{c \in C} \left( -\log P(c) + \sum_{i=1}^{n} -\log P(f_i \mid c) \right)$$

We’re finding the lowest cost classification.

This amounts to classification using a linear function (in log space) of the input

features.

• So Naive Bayes is called a linear classifier

• As is Logistic Regression (to come)
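To make the linearity concrete: when a document is represented by feature counts, its cost for a class is a constant (the prior cost) plus a weighted sum of those counts. The two-feature model below uses invented probabilities and exists only to illustrate the shape of the computation.

```python
import math

def nb_cost(counts, prior, likelihoods):
    """-log P(c) plus the sum over features of count * -log P(f|c)."""
    cost = -math.log(prior)
    for n, p in zip(counts, likelihoods):
        cost += n * -math.log(p)   # each feature contributes linearly in its count
    return cost

counts = [3, 1]                    # feature counts in a hypothetical document
models = {                         # hypothetical (P(c), [P(f1|c), P(f2|c)])
    "+": (0.5, [0.7, 0.3]),
    "-": (0.5, [0.2, 0.8]),
}
costs = {c: nb_cost(counts, prior, lik) for c, (prior, lik) in models.items()}
best = min(costs, key=costs.get)   # lowest cost = most probable class
print(best, costs)
```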

Task-specific features

Example words from a sentiment lexicon:

Positive:                                Negative:
absolutely   beaming     calm            abysmal     bad           callous
adorable     beautiful   celebrated      adverse     banal         can’t
accepted     believe     certain         alarming    barbed        clumsy
acclaimed    beneficial  champ           angry       belligerent   coarse
accomplish   bliss       champion        annoy       bemoan        cold
achieve      bountiful   charming        anxious     beneath       collapse
action       bounty      cheery          apathy      boring        confused
active       brave       choice          appalling   broken        contradictory
admire       bravo       classic         atrocious                 contrary
adventure    brilliant   classical       awful                     corrosive
affirm       bubbly      clean                                     corrupt
...          ...         ...

From http://www.enchantedlearning.com/wordlist/

Choosing features can be tricky

• For example, sentiment analysis might need domain-specific non-sentiment

words

– Such as quiet, memory for computer product reviews.

• And for other tasks, stopwords might be very useful features

– E.g., People with schizophrenia use more 2nd-person pronouns (Watson et

al, 2012), those with depression use more 1st-person (Rude, 2004).

• Probably better to use too many irrelevant features than not enough relevant

ones.

Advantages of Naive Bayes

• Very easy to implement

• Very fast to train, and to classify new documents (good for huge datasets).

• Doesn’t require as much training data as some other methods (good for small

datasets).

• Usually works reasonably well

• This should be your baseline method for any classification task

Problems with Naive Bayes

• Naive Bayes assumption is naive!

• Consider categories Travel, Finance, Sport.

• Are the following features independent given the category?

beach, sun, ski, snow, pitch, palm, football, relax, ocean

– No! Ex: Given Travel, seeing beach makes sun more likely, but ski less

likely.

– Defining finer-grained categories might help (beach travel vs ski travel), but

we don’t usually want to.

Non-independent features

• Features are not usually independent given the class

• Adding multiple feature types (e.g., words and morphemes) often leads to even

stronger correlations between features

• Accuracy of classifier can sometimes still be ok, but it will be highly

overconfident in its decisions.

– Ex: NB sees 5 features that all point to class 1, treats them as five

independent sources of evidence.

– Like asking 5 friends for an opinion when some got theirs from each other.
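The overconfidence can be seen with a single piece of evidence counted five times. In this sketch the likelihoods 0.8 and 0.4 and the equal priors are invented; the five "features" are perfectly correlated copies of one another, the extreme case of non-independence.

```python
def nb_posterior(likelihoods_c1, likelihoods_c2, prior_c1=0.5):
    """P(class 1 | features) for a two-class Naive Bayes model."""
    s1, s2 = prior_c1, 1.0 - prior_c1
    for p1, p2 in zip(likelihoods_c1, likelihoods_c2):
        s1 *= p1
        s2 *= p2
    return s1 / (s1 + s2)

one = nb_posterior([0.8], [0.4])             # the evidence counted once
five = nb_posterior([0.8] * 5, [0.4] * 5)    # the same evidence counted 5 times
print(one, five)
```

The predicted class is the same in both cases, but the posterior rises from about 0.67 to about 0.97 even though no new information was added.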

A less naive approach

• Although Naive Bayes is a good starting point, often we have enough training

data for a better model (and not so much that slower performance is a

problem).

• We may be able to get better performance using loads of features and a model

that doesn’t assume features are conditionally independent.

• Namely, a Maximum Entropy model.

MaxEnt classifiers

• Used widely in many different fields, under many different names

• Most commonly, multinomial logistic regression

– multinomial if more than two possible classes

– otherwise (or if lazy) just logistic regression

• Also called: log-linear model, one-layer neural network, single neuron classifier,

etc ...

• The mathematical formulation here (and in the text) looks slightly different from standard presentations of multinomial logistic regression, but is ultimately equivalent.

Naive Bayes vs MaxEnt

• Like Naive Bayes, MaxEnt assigns a document d to class ĉ, where

ĉ = argmax P (c|d)

c∈C

• Unlike Naive Bayes, we do not apply Bayes’ Rule. Instead, we model P (c|d)

directly.

Alex Lascarides FNLP Lecture 7 31

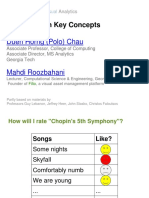

Example: classify by topic

• Given a web page document, which topic does it belong to?

– $\vec{x}$ are the words in the document, plus info about headers and links.

– c is the latent class. Assume three possibilities:

c =   class
1     Travel
2     Sport
3     Finance

Feature functions

• Like Naive Bayes, MaxEnt models use features we think will be useful for

classification.

• However, features are treated differently in the two models:

– NB: features are directly observed (e.g., words in doc): no difference

between features and data.

– MaxEnt: we will use $\vec{x}$ to represent the observed data. Features are functions that depend on both observations $\vec{x}$ and class c.

This way of treating features in MaxEnt is standard in NLP; in ML it’s often explained differently.

MaxEnt feature example

• If we have three classes, our features will always come in groups of three. For

example, we could have three binary features:

f1 : contains(‘ski’) & c = 1

f2 : contains(‘ski’) & c = 2

f3 : contains(‘ski’) & c = 3

– training docs from class 1 that contain ski will have f1 active;

– training docs from class 2 that contain ski will have f2 active;

– etc.

• Each feature fi has a real-valued weight wi (learned in training).

Classification with MaxEnt

Choose the class that has highest probability according to

$$P(c \mid \vec{x}) = \frac{1}{Z} \exp\left( \sum_i w_i f_i(\vec{x}, c) \right)$$

where the normalization constant $Z = \sum_{c'} \exp\left( \sum_i w_i f_i(\vec{x}, c') \right)$.

• Inside brackets is just a dot product: $\vec{w} \cdot \vec{f}$.

• And $P(c \mid \vec{x})$ is a monotonic function of this dot product.

• So, we will end up choosing the class for which $\vec{w} \cdot \vec{f}$ is highest.
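The last step can be spelled out as a short chain of equalities. The notation $\vec{f}(\vec{x}, c)$ for the vector of all feature values is introduced here for compactness and is an assumption of this sketch; the argument itself relies only on Z being the same for every class and on exp being strictly increasing.

```latex
\begin{aligned}
\hat{c} &= \operatorname*{argmax}_{c \in C} P(c \mid \vec{x}) \\
        &= \operatorname*{argmax}_{c \in C} \frac{1}{Z} \exp\left( \vec{w} \cdot \vec{f}(\vec{x}, c) \right) \\
        &= \operatorname*{argmax}_{c \in C} \exp\left( \vec{w} \cdot \vec{f}(\vec{x}, c) \right)
           && \text{($Z$ sums over all classes, so it does not depend on $c$)} \\
        &= \operatorname*{argmax}_{c \in C} \vec{w} \cdot \vec{f}(\vec{x}, c)
           && \text{($\exp$ is strictly increasing)}
\end{aligned}
```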

Classification example

f1 : contains(‘ski’) & c = 1 w1 = 1.2

f2 : contains(‘ski’) & c = 2 w2 = 2.3

f3 : contains(‘ski’) & c = 3 w3 = −0.5

f4 : link to(‘expedia.com’) & c = 1 w4 = 4.6

f5 : link to(‘expedia.com’) & c = 2 w5 = −0.2

f6 : link to(‘expedia.com’) & c = 3 w6 = 0.5

f7 : num links & c = 1 w7 = 0.0

f8 : num links & c = 2 w8 = 0.2

f9 : num links & c = 3 w9 = −0.1

• f7, f8, f9 are numeric features that count outgoing links.

• Suppose our test document contains ski and 6 outgoing links.

• We don’t know c for this doc, so we try out each possible value.

– Travel: $\sum_i w_i f_i(\vec{x}, c = 1) = 1.2 + (0.0)(6) = 1.2$.

– Sport: $\sum_i w_i f_i(\vec{x}, c = 2) = 2.3 + (0.2)(6) = 3.5$.

– Finance: $\sum_i w_i f_i(\vec{x}, c = 3) = -0.5 + (-0.1)(6) = -1.1$.

• We’d need to do further work to compute the probability of each class, but we

know already that Sport will be the most probable.
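The "further work" is exponentiating each score and dividing by their sum. A sketch using the nine weights from the example; the dictionary layout and the feature names `contains_ski`, `links_to_expedia` and `num_links` are illustrative choices, not notation from the lecture.

```python
import math

CLASSES = {1: "Travel", 2: "Sport", 3: "Finance"}

# Weights w1..w9 from the example, keyed by (feature template, class).
WEIGHTS = {
    ("contains_ski", 1): 1.2, ("contains_ski", 2): 2.3, ("contains_ski", 3): -0.5,
    ("links_to_expedia", 1): 4.6, ("links_to_expedia", 2): -0.2,
    ("links_to_expedia", 3): 0.5,
    ("num_links", 1): 0.0, ("num_links", 2): 0.2, ("num_links", 3): -0.1,
}

def score(x, c):
    """Sum of w_i * f_i(x, c); only the features paired with class c are active."""
    return sum(WEIGHTS[(name, c)] * value for name, value in x.items())

def posterior(x):
    """P(c|x): exponentiate each class score and normalize by Z."""
    scores = {c: score(x, c) for c in CLASSES}
    z = sum(math.exp(s) for s in scores.values())
    return {c: math.exp(s) / z for c, s in scores.items()}

# Test document: contains "ski", no link to expedia.com, 6 outgoing links.
doc = {"contains_ski": 1, "links_to_expedia": 0, "num_links": 6}
for c, p in posterior(doc).items():
    print(CLASSES[c], round(p, 3))
```

With these weights Sport receives roughly 0.90 of the probability mass, consistent with it having the highest score before normalization.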

Feature templates

• In practice, features are usually defined using templates

contains(w) & c

header contains(w) & c

header contains(w) & link in header & c

– instantiate with all possible words w and classes c

– usually filter out features occurring very few times

• NLP tasks often have a few templates, but 1000s or 10000s of features

Training the model

• Given annotated data, choose weights that make the class labels most probable

under the model.

• That is, given examples $x^{(1)} \ldots x^{(N)}$ with labels $c^{(1)} \ldots c^{(N)}$, choose

$$\hat{w} = \operatorname*{argmax}_{\vec{w}} \sum_j \log P(c^{(j)} \mid x^{(j)})$$

• called conditional maximum likelihood estimation (CMLE)

• Like MLE, CMLE will overfit, so we use tricks (regularization) to avoid that.

The downside to MaxEnt models

• Supervised MLE in generative models is easy: compute counts and normalize.

• Supervised CMLE in MaxEnt model not so easy

– requires multiple iterations over the data to gradually improve weights (using

gradient ascent).

– each iteration computes $P(c^{(j)} \mid x^{(j)})$ for all j, and each possible $c^{(j)}$.

– this can be time-consuming, especially if there are a large number of classes

and/or thousands of features to extract from each training example.
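A minimal sketch of such a training loop, written as batch gradient ascent on the conditional log-likelihood with an L2 penalty as the regularizer. The four-document dataset, the step count, the learning rate and the penalty strength are all invented for illustration, and real implementations typically use faster optimizers.

```python
import math

def posterior(w, x, classes):
    """P(c|x) under a MaxEnt model with one weight per (feature, class) pair."""
    scores = {c: sum(w[(f, c)] * v for f, v in x.items()) for c in classes}
    m = max(scores.values())                 # subtract the max for numerical stability
    exps = {c: math.exp(s - m) for c, s in scores.items()}
    z = sum(exps.values())
    return {c: e / z for c, e in exps.items()}

def train(data, classes, features, steps=200, rate=0.1, l2=0.01):
    """Gradient ascent on the L2-regularized conditional log-likelihood."""
    w = {(f, c): 0.0 for f in features for c in classes}
    for _ in range(steps):                   # multiple passes over the data
        grad = {k: -l2 * w[k] for k in w}    # gradient of the penalty term
        for x, gold in data:
            p = posterior(w, x, classes)     # needs P(c|x) for every class c
            for f, v in x.items():
                for c in classes:
                    # observed feature value minus its expectation under the model
                    grad[(f, c)] += v * ((1.0 if c == gold else 0.0) - p[c])
        for k in w:
            w[k] += rate * grad[k]
    return w

classes = ["Sport", "Finance"]
data = [                                     # hypothetical labeled documents
    ({"ski": 1, "bank": 0}, "Sport"),
    ({"ski": 1, "bank": 0}, "Sport"),
    ({"ski": 0, "bank": 1}, "Finance"),
    ({"ski": 0, "bank": 1}, "Finance"),
]
w = train(data, classes, features=["ski", "bank"])
print(posterior(w, {"ski": 1, "bank": 0}, classes))
```

Every step recomputes the posterior for every training example and every class, which is where the cost described above comes from; Naive Bayes needs only one counting pass.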
