5G Core 21.1 Product Reliability ISSUE 1.0

5G Core Product Reliability

www.huawei.com

Copyright © Huawei Technologies Co., Ltd. All rights reserved.

Foreword

The 5G Core follows the concept of "layered construction and cross-layer collaboration" to ensure high service availability and reliability.

Its reliability design has shifted from hardware-centric to software-centric E2E service availability.

Layered construction: A layered model is formulated for faulty objects, and the solution is designed based on failure mode and effects analysis (FMEA).

NFVI layer: Redundancy design and anti-affinity deployment are used to eliminate single points of failure. Shared and

distributed storage is used to ensure data reliability.

VNF layer: The cloud architecture and service processing unit stateless design are leveraged, and software is layered by

functions, paving the way for smooth service migration. The distributed database ensures 1+1 redundancy of service data.

Software-based fast fault detection and service migration within VNFs ensure quick service recovery in the case of a fault.

The VNFs inherit traditional network reliability characteristics, achieving remote disaster recovery.

Cross-layer collaboration: High availability is ensured at both the VNF and NFVI layers to ensure quick fault

recovery.


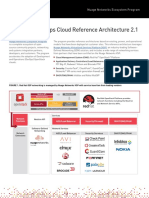

Logical Architecture of 5G Core

[Figure: logical architecture of the 5G Core. Labels recovered from the figure: U2020; VNFM; VNF; O&M layer: ACS MNT KAFKA MML Service sHA ...... VNFMAdaptor; Service layer: SM AM HTTP ......; Data & network access layer: CM CSDB CSLB; Platform layer: VNRS HAF SDR SCF ...... DCF; PaaS (FusionStage…); CloudOS (FusionSphere OpenStack…)]

Peripheral components:

U2020: interconnects with products, and provides a GUI for product O&M.

VNFM: provides life cycle and pod/VM management for products.

PaaS: provides container cluster management, and works with the MANO to provide instantiation and routine operations for containerized products.

Cloud OS: works with the MANO to provide VM-level entities and routine operations for products.

Architecture:

O&M layer: interconnects with the U2020 or VNFM to provide configuration, maintenance, and other routine operations for products.

Service layer: consists of the modules for service processing.

Data & network access layer: provides data storage & access functions for services.

Platform layer: provides communication, status awareness, token allocation, and resource monitoring functions for the upper layers.


Objectives

Upon completion of this course, you will be able to:

Describe the inter-VNF redundancy and VNF internal reliability solutions.

Understand the difference between the NFVI and VNF reliability features and the scopes of fault rectification.


Contents

1. NFVI Reliability Solution

2. VNF Internal Reliability Solution

3. Inter-VNF Redundancy


NFVI Reliability Solution

Different types of faults:

NFVI-layer fault: board restart or power-off; host OS, network port, or network adapter failure; packet loss or errors; full disk; bad disk sectors; storage controller or storage network failure

VNF-layer fault: VM or VM communication failure, slow VM I/O, or VM I/O failure

The VNF layer can quickly detect VM faults and initiate service migration and restoration when there is a

hardware failure.

The NFVI reliability solution is summarized as follows:

Computing reliability:

Redundant resources: racks, subracks, power modules, fans, boards, network ports, network adapters, and more

Redundant VM configuration, and anti-affinity deployment (pods of a type must be deployed on different VMs)

HA at both the VNF and NFVI levels


Storage reliability:

Shared storage (IP/FC SAN) is adopted on VMs. Dual-plane communication and RAID10 storage ensure data redundancy

and reliability.

Distributed storage is adopted on VMs where data is backed up with three replicas to enhance data reliability.

The anti-affinity mechanism is used for VM-level storage.

A pod can leverage the hostPath volume to have access to the hosted node (VM) directory, where the pod can store its data

produced during operation.

Multi-path access and storage status detection are supported.

Redundant devices, such as disk array controllers and switches, are deployed. Storage devices are equipped with built-in

batteries.


Network reliability:

Redundancy deployment is adopted for network ports, network adapters, switching devices, and routing devices to ensure

reliable communication.


VNF Reliability Solution

The NFVI layer can be provided by different vendors. The 5G Core must provide

VNF-level reliability solutions independent of the NFVI layer.

Different types of faults:

Process/Container/Pod/VM failure

Network communication failure

Slow or failed VM I/O

External traffic burst

Prevention: Every function is deployed as multiple instances to avoid a single point of failure. Service processing is

separated from data storage, so the service processing units are stateless.

Fault detection: Each layer provides the monitoring function, creating a complete monitoring chain at the VNF

layer and enabling faults to be discovered in a timely manner.

Service restoration or fault rectification: The faults are reported in a timely manner for quick service migration.

Different recovery policies are selected for different faults.


Contents

2. VNF Internal Reliability Solution

2.1 Stateless Design

2.2 Strong Anti-affinity Deployment

2.3 N-way Redundancy

2.4 Node Management Reliability

2.5 Multi-level Self-healing

2.6 VNF Fault Detection

2.7 Split Brain Avoidance


Stateless Design

The 5G Core divides VNF software into four layers (by service functions): O&M layer, service layer, data & network access

layer, and platform layer. This design separates service processing from data storage, so service processing units become

stateless. When some units fail and are isolated, other units can smoothly take over their services.

[Figure: stateless design for 5G Core, CloudMSE, and CloudUIC. Labels: Cloud Session Database, Service Process Unit, Cloud Session Load Balancer, Cloud OS, Data Center]


Strong Anti-affinity Deployment

The 5G Core leverages VM containers. The minimum unit for service processing is a pod. Multiple pods

are deployed on one VM, and multiple VMs are deployed on one blade server. Strong anti-affinity is

available at two levels:

Pod-level: Only one pod of the same type can be deployed on one VM.

VM-level: Only one VM of the same type can be deployed on a host.

The two-level strong anti-affinity deployment ensures that only one pod of the same type can be deployed on one

host.

VNF layer:

Pods for control services require strong anti-affinity deployment at two layers to ensure that only one pod of the

same type is allowed on one board. A VM- or board-level fault therefore affects at most one control service pod of

each type, so the control service pods of a type never all fail together.

Strong anti-affinity deployment is not mandatory for pods used for service processing. The 5G Core products

adopt the deployment for the pods but not for the VMs.
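
The two anti-affinity levels described above can be checked mechanically. The following is a minimal sketch, not the product's scheduler: the input shapes (a list of `(pod_type, vm)` placements and a `vm_to_host` mapping) and all names in it are assumptions made for illustration.

```python
from collections import Counter


def pod_level_violations(placements):
    """Return (vm, pod_type) pairs where one VM hosts several pods of a type."""
    counts = Counter((vm, pod_type) for pod_type, vm in placements)
    return sorted(key for key, n in counts.items() if n > 1)


def host_level_violations(placements, vm_to_host):
    """Return (host, pod_type) pairs where one host carries several pods of a type.

    This is the combined effect of pod-level and VM-level anti-affinity.
    """
    counts = Counter((vm_to_host[vm], pod_type) for pod_type, vm in placements)
    return sorted(key for key, n in counts.items() if n > 1)


# Hypothetical deployment: two control pods on different VMs of the same host.
placements = [("control", "vm1"), ("control", "vm2"), ("service", "vm1")]
vm_to_host = {"vm1": "host1", "vm2": "host1"}

pod_level = pod_level_violations(placements)
host_level = host_level_violations(placements, vm_to_host)
print(pod_level)
print(host_level)
```

In this hypothetical deployment the pod-level rule holds, but both control pods share one host, which is exactly the case that VM-level anti-affinity rules out for control services.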


N-way Redundancy

N-way redundancy:

A VNF has X+Y VMs or pods, among which X VMs/pods are used to meet service requirements and Y VMs/pods ensure

service continuity in case of multiple VM/pod faults. All X+Y pods/VMs handle services simultaneously, and user data is

stored in the CSDB in real time with multiple replicas. If Z (1 <= Z <= Y) pods/VMs become faulty, the VNF migrates services

and the CSDB pushes the services to the remaining X+Y-Z pods/VMs to mitigate the service impact.

The service switchover process after AMF/SMF 1 is faulty is as follows:

If AMF/SMF 1 is detected to be faulty, the information is reported to the token allocation service. The service then allocates the tokens of AMF/SMF 1 evenly to AMFs/SMFs 2 to 5.

The AMFs/SMFs 2 to 5 obtain service data from CSDBs 1 to 3 using the new tokens to take over the original services from AMF/SMF 1.

Using the new tokens, the AMFs/SMFs 2 to 5 update the service routing information of the original AMF/SMF 1 on the CSLBs 1 to 3.

[Figure: CSDB 1 to CSDB 3, AMF/SMF 1 to AMF/SMF 5, and CSLB 1 to CSLB 3]
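
The even reallocation of tokens in the first switchover step can be sketched as follows. This is an illustration only: the token representation (integers), the instance names, and the round-robin rule are assumptions, since the slides do not describe how the token allocation service is implemented.

```python
def redistribute_tokens(assignments, failed):
    """Hand the failed instance's tokens to the survivors in round-robin order.

    assignments maps an instance name to the list of tokens it holds.
    Round-robin gives an even split when the survivors start with equal loads.
    """
    survivors = sorted(name for name in assignments if name != failed)
    if not survivors:
        raise ValueError("no surviving instance can take over")
    result = {name: list(assignments[name]) for name in survivors}
    for i, token in enumerate(assignments[failed]):
        result[survivors[i % len(survivors)]].append(token)
    return result


# Hypothetical pool of five instances holding four tokens each.
assignments = {f"amf{i}": list(range((i - 1) * 4, i * 4)) for i in range(1, 6)}
after = redistribute_tokens(assignments, "amf1")
for name, tokens in after.items():
    print(name, tokens)
```

After the call, each of the four surviving instances holds five tokens. In the flow above, each survivor would then use its new tokens to fetch the matching service data from the CSDB.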


Node Management Reliability

Background:

The 5G Core products use pods as the entities to run services. The VNF layer does not use a VM (referred to as a

node at the PaaS layer) as a management object for fault monitoring and recovery. The NFVI layer checks NFVI

network and board faults to detect and recover from VM faults, and does not monitor internal VM status or

perform fault detection and restoration from the service perspective. As a result, the NFVI layer alone does not meet

the reliability requirements of the VNF layer.

The VNF layer provides node management services at the software level. It monitors intra- and inter-VM faults

and takes different recovery measures based on the monitoring results, ensuring quick VM fault detection and

restoration.


Working principle:

The server/client mode is used.

The client functionality is deployed on each node to detect faults.

Types of intra-node faults:

− Storage: storage read/write error (failure or suspension), slow disk response, I/O overload, partition/volume read-only fault,

partition exhaustion...

− Computing: CPU overload, memory overload or exhaustion, node overload, PID overload, system key service fault…

− Network: IP/route loss, network fault, IP address conflict, abnormal port status…

Types of inter-node faults:

− Communication link fault, or sub-health issue

The server functionality is deployed on control nodes. It diagnoses and rectifies faults reported by the client, detects node

faults (possibly caused by VM suspension, reboot, or repeated reboot) using the heartbeat mechanism, and provides

node-specific O&M commands (such as query, reboot, and rebuilding commands). On detecting a fault, the server functionality

interacts with services to determine whether to start node self-healing.
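
The server-side heartbeat check described above can be sketched as follows. This is a simplified illustration, not the node management service itself: the class name, the 6-second timeout, and the use of explicit timestamps (chosen so that the example is deterministic) are all assumptions.

```python
class HeartbeatMonitor:
    """Tracks the last heartbeat per node and flags nodes that went silent."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}

    def record(self, node, now):
        """Store the time at which a heartbeat from the node arrived."""
        self.last_seen[node] = now

    def suspected_faulty(self, now):
        """Return nodes whose last heartbeat is older than the timeout."""
        return sorted(
            node for node, seen in self.last_seen.items()
            if now - seen > self.timeout_s
        )


monitor = HeartbeatMonitor(timeout_s=6.0)  # assumed value for illustration
monitor.record("node-a", now=0.0)
monitor.record("node-b", now=0.0)
monitor.record("node-a", now=5.0)          # node-b sends nothing further
suspects = monitor.suspected_faulty(now=7.0)
print(suspects)
```

A silent node is only a suspect at this point. As the text notes, the server still consults the service status before it decides whether to start node self-healing.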


Fault rectification methods on the server:

Alarm reporting: The server reports alarms indicating the key file loss, disk partition overload, slow disk, key service fault,

IP address loss, IP address conflict, and other issues.

Direct rectification of deterministic faults: The server automatically restarts a network port that is found to be faulty.

Multi-level self-healing if a node fault is detected: The server determines whether to start self-healing based on the service

status. If self-healing is required, it performs different self-healing operations based on the VM status at the NFVI layer. The

operations include reboot, VM rebuilding, and OS rebuilding.

[Figure: node management service, showing the VNF HA service, the VNFM, the server, and the client. Steps: 1. Fault detection; 2a. Fault info report; 2b. Heartbeat detection + Ping; 3. Fault info report & service status/self-healing policy query; 4. Node info retrieval; 5. Self-healing policy issued]


Multi-level Self-healing

Self-healing can be achieved at two levels:

Multi-level self-healing operations for a faulty object: When rectifying a fault on an object, the system reboots the

object first. If the fault persists, the system rebuilds the object.

Fault escalation for the object: If the fault cannot be rectified using the self-healing operations, the system

considers escalating the fault to a higher level based on the service status. For example, it escalates the fault from

the pod level to the VM level and performs VM-level self-healing until the fault is rectified.
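
The two ideas above (try the cheaper operation first, then escalate to the next level) can be sketched as a nested loop. This is a minimal illustration under stated assumptions: the level and action names are taken from the text, `try_action` is a hypothetical callback, and the service-status check that gates escalation in the product is left out.

```python
LEVELS = ["pod", "vm"]            # escalation order used in the example above
ACTIONS = ["reboot", "rebuild"]   # cheaper operation first


def self_heal(try_action, levels=LEVELS, actions=ACTIONS):
    """Try each action at each level until one rectifies the fault.

    try_action(level, action) is a hypothetical callback that returns True
    when the fault is rectified. Returns the attempts made and the outcome.
    """
    attempts = []
    for level in levels:
        for action in actions:
            attempts.append((level, action))
            if try_action(level, action):
                return attempts, True
    return attempts, False


# Hypothetical fault that only a VM reboot clears.
attempts, healed = self_heal(lambda level, action: (level, action) == ("vm", "reboot"))
print(attempts, healed)
```

The attempt log shows the order in which the operations were tried, which is useful when diagnosing why a fault had to be escalated.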


Deployment hierarchy (from top to bottom): node, pod, container/RU, cell, actor

Self-healing hierarchy:

Node level: reboot/VM rebuilding/OS rebuilding

Pod level: repeated restarts. Repeated reboots/persistent faults/large-scale faults: escalation to the node level

Container/RU level: repeated restarts. Repeated reboots/persistent faults/large-scale faults: escalation to the pod level

Cell level: repeated restarts. Repeated reboots/persistent faults/large-scale faults: escalation to the container/RU level

Actor level: repeated restarts. Repeated reboots/persistent faults/large-scale faults: escalation to the cell level


VNF Fault Detection

[Figure: simplified VM container network. Labels: Host (7), Node (6), Pod (5), Container/RU (2), Cell (1), (3), Overlay, SR-IOV, (4), VF 1, VF 2, VF 3, (8), PF 1, PF 2, NFVI network (9)]

Faults on:

Running entities: (7) host; (6) node or VM; (5) pod; (2) container or RU; (1) cell or process

Networks: (3)(4) VM container network; (8)(9) NFVI network

There are two mechanisms to detect product faults:

Quick detection:

It must work with the proactive reporting or signal handling (interception) mechanisms.

For example, a running entity detects a normal exit and reports it to the remote server.

Scope: Running entities' normal exits can be detected.

Slow detection:

It must work with the heartbeat mechanism implemented between the remote server and running entities (deployed in processes).

Scope: Running entities' unexpected suspensions or exits as well as network faults can be detected.

Note: There are no nodes on a bare metal container network.
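
Quick detection relies on an entity that notices an exit immediately and reports it. One way to picture this is a parent process waiting on its child, as in the sketch below. The `report` callback stands in for the report to the remote server and is an assumption, as are the function name and the event fields.

```python
import subprocess
import sys


def run_and_report(child_code, report):
    """Start a child process, wait for it to exit, and report the exit.

    report is a hypothetical callback standing in for the remote server.
    """
    child = subprocess.Popen([sys.executable, "-c", child_code])
    exit_code = child.wait()  # returns as soon as the child has exited
    report({"event": "child_exit", "pid": child.pid, "exit_code": exit_code})
    return exit_code


reports = []
code = run_and_report("import sys; sys.exit(3)", reports.append)
print(reports)
```

This mechanism cannot notice a child that hangs without exiting, which is why the heartbeat-based slow detection is needed as well.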


Object: Child process (container)

Fault Detection & Diagnosis: Quick detection: When a child process exits, the parent process can immediately detect the exit. The parent process reports it to the HA-Server, as shown in steps 3 and 1 in the preceding figure. The process takes less than 1 second. Slow detection: The HA-Server and the SDK in the child process exchange heartbeat signaling. The process takes less than 6 seconds.

Fault Detection, Isolation, and Recovery: The HA-Server pushes the status change to the subscriber. The parent process reboots the child process.

Object: Parent process (container)

Fault Detection & Diagnosis: The first process periodically monitors the parent process, as shown in step 2.A in the preceding figure. The K8S livenessProbe mechanism is used to periodically check whether the parent process is alive. If the parent process is not alive for a long time, an error is returned, as shown in steps 1.B and 2.B in the preceding figure.

Fault Detection, Isolation, and Recovery: If the first process detects that the parent process is faulty, the process exits. If the livenessProbe detection fails, the Kubelet reboots the container.

Object: Initial process (container)

Fault Detection & Diagnosis: The Kubelet can detect container exit events. If the first process exits, it considers that the container exits. See step 1.A in the preceding figure.

Fault Detection, Isolation, and Recovery: The Kubelet reboots the container.

Object: Pod

Fault Detection & Diagnosis: Product layer: The HA-Server determines whether all processes in the same container are faulty based on the process fault detection result in step 1, summarizes the data, and reports the container faults to the HA-Governance (as shown in step 2 of the figure). Then the HAF-Governance determines whether the faults are pod faults. PaaS layer: The pod running status is monitored.

Fault Detection, Isolation, and Recovery: Product layer: If there is a pod fault, then pod self-healing operations (issued via the VNFM) are triggered. PaaS layer: If the number of operational instances is not equal to the configured number, pod self-healing is triggered.

Object: Node

Fault Detection & Diagnosis: Product layer: The NRS-Agent detects faults in the node and checks the network between nodes. If a fault occurs, the NRS-Agent notifies the NRS-Master of the fault. The NRS-Master notifies the HA-Governance of the fault. See steps 1 and 2 in the figure. PaaS layer: The node resource (CPU/memory/disk) usage is checked to see if it exceeds the threshold. The network connection is checked. These are the criteria for determining if a node is still available. NFVI layer: The NFVI layer status is monitored for VMs.

Fault Detection, Isolation, and Recovery: Product layer: If the fault is deterministic, the NRS-Master performs node-level self-healing. (This function is available.) For nondeterministic faults, the HA-Governance performs node-level self-healing based on service status diagnosis. (This function is planned.) PaaS layer: If a node is unavailable, the pods will be migrated. NFVI layer: HA is triggered at the NFVI layer.

Object: Host

Fault Detection & Diagnosis: Product layer: Faults are checked through heartbeat or network detection. If there are service instance, process, container, or node faults, the information will be released. PaaS layer: The nodes on the host are checked to see if they are faulty. NFVI layer: Hosts are checked.

Fault Detection, Isolation, and Recovery: Product layer: Node-level self-healing is triggered. PaaS layer: The pod is migrated. NFVI layer: HA is triggered at the NFVI layer.


Service migration:

[Figure: two panels, each showing the HA service, the token allocation service, services 1 to 3, and the database service, with numbered arrows matching the steps below. In the second panel, service 1 becomes faulty.]

After the system starts up:

(1) The HA service monitors services by interacting with service clients.

(2) The token allocation service subscribes to service status from the HA service.

(3) The HA service notifies the token allocation service of any service status change.

(4) The token allocation service allocates tokens to services that are in normal status.

(5) Services with tokens obtain service data from the database service.

After service 1 becomes faulty:

(1) The HA service detects that service 1 is faulty.

(2) The HA service notifies the token allocation service of the fault.

(3) The token allocation service detects this fault and then migrates the token of service 1 to service 2 or service 3.

(4) Service 2 or service 3 then obtains service data from the database service.
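
The subscribe-and-notify relationship between the HA service and the token allocation service can be sketched with a plain callback. This is an illustration under assumptions: the class and method names are hypothetical, tokens are modeled as integers, and the database read in the final step is omitted.

```python
class HAService:
    """Tracks service health and notifies subscribers of every status change."""

    def __init__(self):
        self._subscribers = []
        self._status = {}

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def report(self, service, healthy):
        if self._status.get(service) == healthy:
            return  # no change, so nothing is published
        self._status[service] = healthy
        for callback in self._subscribers:
            callback(service, healthy)


class TokenAllocator:
    """Gives tokens to healthy services and migrates them when one fails."""

    def __init__(self):
        self.healthy = set()
        self.owner = {}  # token -> service currently holding it

    def on_status_change(self, service, healthy):
        if healthy:
            self.healthy.add(service)
            return
        self.healthy.discard(service)
        orphaned = sorted(t for t, s in self.owner.items() if s == service)
        self._assign(orphaned)

    def allocate(self, tokens):
        self._assign(list(tokens))

    def _assign(self, tokens):
        targets = sorted(self.healthy)
        if tokens and not targets:
            raise RuntimeError("no healthy service can hold tokens")
        for i, token in enumerate(tokens):
            self.owner[token] = targets[i % len(targets)]


ha = HAService()
allocator = TokenAllocator()
ha.subscribe(allocator.on_status_change)       # startup step (2)
for name in ("service1", "service2", "service3"):
    ha.report(name, True)                      # startup steps (1) and (3)
allocator.allocate(range(6))                   # startup step (4)
ha.report("service1", False)                   # fault steps (1) to (3)
print(allocator.owner)
```

Because the allocator reacts only to notifications, it needs no fault detection logic of its own. That matches the split of responsibilities in the steps above.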


Split Brain Avoidance

Split brain:

A network is split into two separate partitions if multiple nodes in the cluster are not able to communicate anymore. The two

partitions may interfere with each other because each of them has an active node, affecting services across the network.

Principle:

To resolve the dual-active problem, an arbitration center serves as a lighthouse at the product layer. Once the network is split,

nodes in the partition well connected to the lighthouse keep running properly, whereas nodes in the other partition disconnected

from the lighthouse are isolated.

Isolation measures:

Active control node: demoted to the standby node via a reboot if it is disconnected from the arbitration center.

Non-control node: reboots or keeps running after being disconnected from the active control node.
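
The isolation measures above amount to a small decision rule per node. The sketch below encodes that rule under stated assumptions: the role strings, the returned action names, and the `non_control_policy` parameter (the text allows either a reboot or continued running, without saying what selects between them) are all hypothetical.

```python
def isolation_action(role, sees_arbitration_center, sees_active_control,
                     non_control_policy="reboot"):
    """Return the action a node takes after a suspected network split."""
    if role == "active_control":
        if sees_arbitration_center:
            return "keep_running"
        return "reboot_and_demote_to_standby"
    if role == "non_control":
        if sees_active_control:
            return "keep_running"
        # The source allows either outcome; the choice is an assumed setting.
        return non_control_policy
    raise ValueError(f"role not covered by this sketch: {role}")


cut_off_active = isolation_action("active_control", False, True)
cut_off_worker = isolation_action("non_control", True, False)
connected_worker = isolation_action("non_control", False, True)
print(cut_off_active, cut_off_worker, connected_worker)
```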


Network split:

[Figure, left: original network. Arbitration center as a lighthouse: ETCD 1 (leader), ETCD 2 (follower), ETCD 3 (follower). Service cluster, control nodes: active control node, standby control node 1, standby control node 2. Service cluster, non-control nodes: non-control nodes 1 to 3.]

[Figure, right: network split into subnet A and subnet B. Arbitration center as a lighthouse: ETCD 1 (follower), ETCD 2 (leader), ETCD 3 (follower). Service cluster, control nodes: standby control node, active control node, standby control node 2. Service cluster, non-control nodes: non-control nodes 1 to 3.]

Original network, shown in the left figure

Network split into partitions A and B, shown in the right figure

Arbitration center: ETCD 1 in partition A is demoted to a follower, and ETCD 2 in partition B is promoted to the leader.

Service cluster - control nodes: The active control node in partition A is disconnected from the arbitration center and is isolated after being demoted to a standby node via

a reboot. Standby control node 1 in partition B is promoted to the active control node.

Service cluster - non-control nodes: Non-control node 1 in partition A is disconnected from the active control node and then isolated. Non-control nodes 2 and 3 in partition B

keep running to interact with the new active control node.
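
Which partition keeps a leader follows from the majority rule that etcd inherits from Raft: a leader needs more than half of the cluster members. The sketch below applies that rule to the example. Placing ETCD 3 in partition B is an assumption, since the text names only ETCD 1 and ETCD 2 explicitly.

```python
def has_quorum(reachable_members, cluster_size):
    """A partition can elect a leader only with a strict majority of members."""
    return reachable_members > cluster_size // 2


# Assumed membership for the example: ETCD 1 in A, ETCD 2 and ETCD 3 in B.
partitions = {"A": ["etcd1"], "B": ["etcd2", "etcd3"]}
cluster_size = 3
with_leader = [
    name for name, members in partitions.items()
    if has_quorum(len(members), cluster_size)
]
print(with_leader)
```

With three members, only the partition holding two of them can elect a leader, which is consistent with ETCD 2 taking over in partition B.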


Inter-VNF Redundancy

Pool redundancy:

Two NRFs work in active/standby mode. The active NRF processes services.

Two NSSFs work in active/standby mode. The active NSSF processes services.

Multiple AMFs work in load-sharing mode.

Multiple SMFs work in load-sharing mode.

SMFs and UPFs are fully meshed.

[Figure: 1. Active/standby NRFs: NRF (active), NRF (standby). 2. Active/standby NSSFs: NSSF (active), NSSF (standby). Center: 3. Pooled AMFs; 4. Pooled SMFs. Edge: 5. Fully meshed SMFs and UPFs.]

Fault Scenario: Intra-NF fault

NRF, NSSF, AMF, SMF: Self-healing

Fault Scenario: Fault in a single NF, DC GW, or DC (CloudOS or equipment room)

NRF, NSSF: 16s

AMF: Subscribers need to be registered again.

SMF: PDU sessions need to be re-established.

The time in the table refers to the service interruption time.


Backup redundancy:

Multiple NRFs work in load sharing mode and store NF information in the distributed database cluster for mutual backup.

Multiple NSSFs work in load sharing mode and store slice information in the distributed database cluster for mutual backup.

Multiple AMFs work in load sharing mode and store subscriber information in the distributed database cluster for mutual backup.

Multiple SMFs work in load sharing mode and store PDU sessions in the distributed database cluster for mutual backup.

SMFs and UPFs are fully meshed.

[Figure: 1. Pooled NRFs and 2. Pooled NSSFs, each with a distributed database cluster. Center: 3. Pooled AMFs with a distributed database cluster; 4. Pooled SMFs with a distributed database cluster. Edge: 5. Fully meshed CU … (UPFs).]

Fault Scenario: Intra-NF fault

NRF, NSSF, AMF, SMF: Self-healing

Fault Scenario: Fault in a single NF, DC GW, or DC (CloudOS or equipment room)

NRF, NSSF: 10s

AMF: Subscribers are still online and do not need to be registered again. (< 14s)

SMF: PDU sessions do not need to be re-established. (< 14s)

The time in the table refers to the service interruption time.
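
The difference between the two modes for an AMF comes down to where the subscriber context lives. The sketch below contrasts them under stated assumptions: the `Amf` class, the dictionary standing in for the distributed database cluster, and the subscriber identifier are all hypothetical.

```python
class Amf:
    """Toy AMF that optionally mirrors subscriber context to a shared store."""

    def __init__(self, name, shared_db=None):
        self.name = name
        self.local = {}
        self.shared_db = shared_db  # stands in for the distributed database

    def register(self, subscriber, context):
        self.local[subscriber] = context
        if self.shared_db is not None:
            self.shared_db[subscriber] = context

    def lookup(self, subscriber):
        """Return the context, or None if the subscriber must register again."""
        if subscriber in self.local:
            return self.local[subscriber]
        if self.shared_db is not None and subscriber in self.shared_db:
            self.local[subscriber] = self.shared_db[subscriber]
            return self.local[subscriber]
        return None


# Pool redundancy: context exists only on the AMF that served the subscriber.
pool_a, pool_b = Amf("amf1"), Amf("amf2")
pool_a.register("subscriber-001", {"state": "registered"})
pool_result = pool_b.lookup("subscriber-001")      # amf1 has failed

# Backup redundancy: both AMFs share one store.
db = {}
backup_a, backup_b = Amf("amf1", db), Amf("amf2", db)
backup_a.register("subscriber-001", {"state": "registered"})
backup_result = backup_b.lookup("subscriber-001")  # amf1 has failed
print(pool_result, backup_result)
```

In the pool case the surviving AMF has no context, so the subscriber has to register again. In the backup case the context is recovered from the shared store and the subscriber stays online.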


Summary

NFVI reliability solution: NFVI reliability design from the aspects of compute, storage, and network

Intra-VNF reliability solution: Stateless service processing units, strong anti-affinity deployment, N-way redundancy,

node management reliability, multi-level self-healing, VNF fault detection, and split brain avoidance ensure timely

detection of VNF-layer faults and service migration. Comprehensive diagnosis is then performed and effective

recovery measures are taken.

Inter-VNF reliability solution: Remote disaster recovery is used. Different NFs use different disaster recovery modes.


Q&A

1. A split brain is discovered within a VNF, with the network split into partitions A and B. The ETCD cluster has

three nodes (1, 2, and 3), where nodes 1 and 2 are in partition A and node 3 is in partition B. How will the master

instance A in partition B respond in this case? ( )

A. Reboots

B. Continues running

2. An AMF keeps subscribers online using the ( ) mechanism if a single NF becomes faulty.

A. Backup redundancy

B. Pool redundancy


Future Plan

Feature: Disk array bypass

Description: When a disk array is faulty, services are not affected and limited O&M operations are allowed.

Feature: Enhanced communications reliability

Description:

• 5% packet loss allowed on the communication plane

• Support for priority settings for communication channels or partitions

• Subhealthy communication detection and comprehensive diagnosis

Feature: Flow control

Description: Flow control over 64x/100x traffic

Feature: Solution to no-master termination in case of a split brain

Description: Instances in a smaller partition will keep running, and services will not be affected during or after network consolidation.

Feature: Enhanced multi-level self-healing

Description: More fault or fault escalation scenarios will be considered.


- CCA 1st SchemeDocument7 pagesCCA 1st SchemegangambikaNo ratings yet

- 5g Core Guide Cloud Infrastructure PDFDocument8 pages5g Core Guide Cloud Infrastructure PDFrohitkamahi0% (1)

- 1.huawei CloudWAN Solution BrochureDocument6 pages1.huawei CloudWAN Solution Brochuremarcoant2287No ratings yet

- Redes 5G y Tecnologías Habilitadoras: Network SlicingDocument41 pagesRedes 5G y Tecnologías Habilitadoras: Network SlicingWalterLooKungVizurragaNo ratings yet

- Emulex Leaders in Fibre Channel Connectivity: Fibre Chanel Over EthernetDocument29 pagesEmulex Leaders in Fibre Channel Connectivity: Fibre Chanel Over Etherneteasyroc75No ratings yet

- Manage multi-vendor networks with SGRwin NMSDocument2 pagesManage multi-vendor networks with SGRwin NMSfonpereiraNo ratings yet

- ESGB5303 MX Series Scalable Fabric Architecture Technical Overview - FINAL - v2Document28 pagesESGB5303 MX Series Scalable Fabric Architecture Technical Overview - FINAL - v2Taha ZakiNo ratings yet

- Best Practices When Deploying VMware Vspehre 5.0 HP SWITCHESDocument21 pagesBest Practices When Deploying VMware Vspehre 5.0 HP SWITCHESrtacconNo ratings yet

- CL15-01 FusionSphere OverviewDocument30 pagesCL15-01 FusionSphere Overviewkaleemullah0987_9429No ratings yet

- Streamlining VNF On-Boarding Process: White PaperDocument7 pagesStreamlining VNF On-Boarding Process: White Papermore_nerdyNo ratings yet

- Nuage Networks OpenStack DevOps RedHatOSP Cloud Reference ArchitectureDocument4 pagesNuage Networks OpenStack DevOps RedHatOSP Cloud Reference ArchitectureroshanNo ratings yet

- Vmware Nokia Solution OverviewDocument4 pagesVmware Nokia Solution OverviewEduardoNo ratings yet

- Next-Generation switching OS configuration and management: Troubleshooting NX-OS in Enterprise EnvironmentsFrom EverandNext-Generation switching OS configuration and management: Troubleshooting NX-OS in Enterprise EnvironmentsNo ratings yet

- SCADA QuestionsDocument3 pagesSCADA QuestionsshreemantiNo ratings yet

- Config & Change Management - SHDocument7 pagesConfig & Change Management - SHSyed HammadNo ratings yet

- ERP Implementation Checklist for SuccessDocument2 pagesERP Implementation Checklist for SuccessNorth W.rawdNo ratings yet

- Mod 3Document60 pagesMod 3MUSLIMA MBA21-23No ratings yet

- Fortisandbox: Third-Generation Sandboxing Featuring Dynamic Ai AnalysisDocument4 pagesFortisandbox: Third-Generation Sandboxing Featuring Dynamic Ai AnalysisRudi Arif candraNo ratings yet

- Lorenzo Vasquez - JrPBIDocument1 pageLorenzo Vasquez - JrPBIAlvin CarreonNo ratings yet

- Jai Prakash Kumar (20423MBA020) Summer Internship ReportDocument32 pagesJai Prakash Kumar (20423MBA020) Summer Internship ReportDigital Marketing JPNo ratings yet

- CISSP SelfPaced MasterClassDocument20 pagesCISSP SelfPaced MasterClassAhmed Ali KhanNo ratings yet

- IBM DATASTAGE Online Training, Online Datastage TrainingDocument5 pagesIBM DATASTAGE Online Training, Online Datastage TrainingMatthew RyanNo ratings yet

- 2 Programming 1 - L1 - Module IntroductionDocument7 pages2 Programming 1 - L1 - Module IntroductionSamuel PlescaNo ratings yet

- Queen's Square PDFDocument44 pagesQueen's Square PDFSeinsu ManNo ratings yet

- Aswin Sundar - ResumeDocument4 pagesAswin Sundar - ResumeRadhika KantliNo ratings yet

- ISC Certified in Cybersecurity 1Document14 pagesISC Certified in Cybersecurity 1raspberries1No ratings yet

- Storage Migration - Hybrid Array To All-Flash Array: Victor WuDocument30 pagesStorage Migration - Hybrid Array To All-Flash Array: Victor WuVương NhânNo ratings yet

- Memory SystemDocument70 pagesMemory SystemritikNo ratings yet

- MSIT Distance Course CatalogDocument4 pagesMSIT Distance Course Catalogmurali_pmp1766No ratings yet

- Tracing file deletions in Windows Event LogsDocument6 pagesTracing file deletions in Windows Event LogssivextienNo ratings yet

- Gartner Reprint PAMDocument33 pagesGartner Reprint PAMOussèma SouissiNo ratings yet

- Lab No. Coverage Experiments: CN-LAB/socket-programming-slides PDFDocument3 pagesLab No. Coverage Experiments: CN-LAB/socket-programming-slides PDFTushar SrivastavaNo ratings yet

- Chapter 1 NotesDocument14 pagesChapter 1 NotesrahulNo ratings yet

- CN-Lab-6-Basic-Router-Configuration Mubashir Hussain 4979Document26 pagesCN-Lab-6-Basic-Router-Configuration Mubashir Hussain 4979Mubashir HussainNo ratings yet

- DNS CheatSheet V1.02 PDFDocument1 pageDNS CheatSheet V1.02 PDFJuanMateoNo ratings yet

- Sy0 601 11Document34 pagesSy0 601 11marwen hassenNo ratings yet

- Survey Tute 3CE - T3 - BCE-302 - Akhil PandeyDocument1 pageSurvey Tute 3CE - T3 - BCE-302 - Akhil Pandeyamansri035No ratings yet

- WPS70 DeploymentEnvironment Cluster Oracle11gDocument305 pagesWPS70 DeploymentEnvironment Cluster Oracle11gfofofNo ratings yet

- Ecrime and Cyber Security DXB 2017Document136 pagesEcrime and Cyber Security DXB 2017Cookie CluverNo ratings yet

- Teradata Administration TrackDocument2 pagesTeradata Administration TrackAmit SharmaNo ratings yet

- AJAX MCQDocument13 pagesAJAX MCQAbhinav DakshaNo ratings yet

- Diksha NDocument3 pagesDiksha NDiksha nawaleNo ratings yet

- Black Hat RustDocument357 pagesBlack Hat Rustjeffreydahmer420No ratings yet