Uploaded by rhesusfactor848

All content about CPU Scheduling

Copyright: © All Rights Reserved

CPU SCHEDULING

What to know about CPU scheduling

• Preemptive and non-preemptive scheduling

• CPU burst

• Process states

• Context switch

• Dispatcher and CPU/process scheduler

• Scheduling criteria

• Scheduling algorithms

Definitions

• CPU Burst:

• A CPU burst is a period during which a process or thread is actively executing instructions on the CPU. It is the time interval between the moment a process starts using the CPU and the moment it gives the CPU up, either voluntarily (e.g., it completes its task or blocks to wait for I/O) or involuntarily (e.g., it is preempted when its time slice expires).

• CPU Scheduler:

• The CPU scheduler is the component of the operating system responsible for selecting which process or thread executes next on the CPU. It determines the order in which processes are allocated CPU time based on scheduling policies and criteria.

• Dispatcher:

• The dispatcher is the component of the operating system that gives control of the CPU to the process or thread selected by the CPU scheduler. It performs the actual context switch, switches to user mode, and jumps to the proper location in the selected program. It is invoked every time the scheduler selects a new process for execution; the time it takes to stop one process and start another is called the dispatch latency.

• Context Switch:

• A context switch is the act of saving the state (context) of the currently running process or thread and loading the saved state of a different process or thread so that it can run on the CPU. It occurs whenever the CPU is taken from one process and given to another: when the running process voluntarily relinquishes the CPU (e.g., it blocks on I/O) or when the scheduler preempts it in favor of another process.
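To make the dispatcher and context-switch definitions concrete, here is a toy Python model. It is a sketch, not kernel code: the `PCB` and `CPU` classes and the `context_switch` function are hypothetical names, and the CPU state is reduced to a program counter and a dictionary of registers.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PCB:
    """Hypothetical process control block: where a process's CPU state is saved."""
    pid: int
    pc: int = 0                                    # saved program counter
    registers: dict = field(default_factory=dict)  # saved register values


@dataclass
class CPU:
    """Toy CPU whose entire state is a program counter and a register file."""
    pc: int = 0
    registers: dict = field(default_factory=dict)
    current: Optional[PCB] = None                  # process currently running


def context_switch(cpu: CPU, next_proc: PCB) -> None:
    """Save the running process's context, then load next_proc's context."""
    if cpu.current is not None:
        cpu.current.pc = cpu.pc                    # save state of the old process
        cpu.current.registers = dict(cpu.registers)
    cpu.pc = next_proc.pc                          # restore state of the new process
    cpu.registers = dict(next_proc.registers)
    cpu.current = next_proc


cpu = CPU()
p1, p2 = PCB(pid=1), PCB(pid=2, pc=100)
context_switch(cpu, p1)       # dispatch p1
cpu.pc += 5                   # p1 "executes" a few instructions...
cpu.registers["r0"] = 42      # ...and modifies a register
context_switch(cpu, p2)       # p1's context is saved in its PCB, p2's is loaded
print(p1.pc, p1.registers, cpu.pc)  # 5 {'r0': 42} 100
```

Switching back to `p1` restores exactly the program counter and register values it had when it was switched out. In a real kernel this save and restore is architecture-specific, and the time it takes does no useful work for any process, which is why very frequent context switches cost performance.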

Definitions

• Preemptive Scheduling:

• In preemptive scheduling, the operating system can interrupt a currently running process or thread (for example, when its time slice expires or a higher-priority process becomes ready) and allocate the CPU to another process or thread.

• Non-preemptive Scheduling (also known as Cooperative Scheduling):

• In non-preemptive scheduling, once a process or thread has been given the CPU, it keeps the CPU until it gives it up voluntarily, typically by blocking on an I/O operation, completing its execution, or yielding explicitly.

FCFS

• First-Come, First-Served (FCFS):

• Definition: FCFS is a non-preemptive scheduling algorithm in which processes are executed in the order they arrive in the ready queue. The process that arrives first is executed first.

• Advantages:

• Simple and easy to implement: the ready queue is a plain FIFO queue.

• Fairness: Processes are served strictly in arrival order, so no process is overtaken by a later arrival.

• Disadvantages:

• Poor turnaround time: Average waiting and turnaround times can be long, especially when short processes are queued behind long ones (the convoy effect).

• Inefficiency: When I/O-bound processes wait behind a long CPU-bound process, CPU and I/O device utilization can be lower than a different ordering would achieve.
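The following Python sketch computes FCFS waiting and turnaround times for processes given as `(name, arrival_time, burst_time)` tuples; `fcfs` and `average_waiting` are hypothetical names. The burst values are made up to reproduce the convoy effect: one 24-unit job and two 3-unit jobs, all arriving at time 0.

```python
def fcfs(processes):
    """Non-preemptive FCFS.

    processes: list of (name, arrival_time, burst_time) tuples.
    Returns {name: (waiting_time, turnaround_time)}.
    """
    time = 0
    results = {}
    # Serve strictly in arrival order; sorted() is stable, so ties keep list order.
    for name, arrival, burst in sorted(processes, key=lambda p: p[1]):
        start = max(time, arrival)   # CPU may sit idle until the process arrives
        finish = start + burst       # runs to completion (non-preemptive)
        results[name] = (start - arrival, finish - arrival)
        time = finish
    return results


def average_waiting(results):
    return sum(w for w, _ in results.values()) / len(results)


# Convoy effect: the same three jobs, long job first vs. short jobs first.
long_first = fcfs([("P1", 0, 24), ("P2", 0, 3), ("P3", 0, 3)])
short_first = fcfs([("P2", 0, 3), ("P3", 0, 3), ("P1", 0, 24)])
print(average_waiting(long_first))   # 17.0
print(average_waiting(short_first))  # 3.0
```

Only the arrival order changed, yet the average waiting time drops from 17 to 3 time units when the short jobs are not stuck behind the long one.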

SJF

• Shortest Job Next (SJN) or Shortest Job First (SJF):

• Definition: SJF is a scheduling algorithm in which the process with the shortest next CPU burst time is selected for execution next. In its basic form it is non-preemptive; the preemptive variant, which switches to a newly arrived process whose burst is shorter than the remaining time of the running process, is called Shortest Remaining Time First (SRTF).

• Advantages:

• Minimizes average waiting time and turnaround time by executing shorter processes first; when all processes are available at the same time, SJF is provably optimal for average waiting time.

• Improves system throughput by quickly completing short jobs.

• Disadvantages:

• Difficulty in prediction: Requires knowledge of the length of each process's next CPU burst, which is usually not known in advance and must be estimated.

• May lead to starvation: Long processes may wait indefinitely if shorter processes keep arriving.
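A minimal sketch of non-preemptive SJF in Python, assuming each process's burst time is known in advance (exactly the assumption the "difficulty in prediction" point says real systems cannot make). The function name `sjf` and the sample bursts are illustrative.

```python
def sjf(processes):
    """Non-preemptive SJF.

    processes: list of (name, arrival_time, burst_time) tuples, where the
    burst time is assumed to be known in advance.
    Returns {name: waiting_time}.
    """
    pending = sorted(processes, key=lambda p: p[1])  # ordered by arrival time
    time = 0
    waiting = {}
    while pending:
        ready = [p for p in pending if p[1] <= time]
        if not ready:
            time = pending[0][1]        # CPU idles until the next arrival
            continue
        # Pick the shortest burst among arrived processes; break ties by arrival.
        job = min(ready, key=lambda p: (p[2], p[1]))
        name, arrival, burst = job
        waiting[name] = time - arrival
        time += burst                   # non-preemptive: runs to completion
        pending.remove(job)
    return waiting


w = sjf([("P1", 0, 6), ("P2", 0, 8), ("P3", 0, 7), ("P4", 0, 3)])
print(w)                           # {'P4': 0, 'P1': 3, 'P3': 9, 'P2': 16}
print(sum(w.values()) / len(w))    # 7.0
```

For comparison, running the same four processes in FCFS order (P1, P2, P3, P4) gives waiting times 0, 6, 14 and 21, an average of 10.25.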

PRIORITY SCHEDULING

• Priority Scheduling:

• Definition: Priority scheduling is a preemptive or non-preemptive scheduling algorithm in which each process is assigned a priority and the scheduler selects the ready process with the highest priority. In preemptive priority scheduling, a newly arrived higher-priority process preempts the running process; in the non-preemptive version, it waits until the running process gives up the CPU.

• Advantages:

• Supports priority-based execution, giving higher-priority processes preferential treatment.

• Enables customization: Administrators can assign priorities based on process importance or criticality.

• Disadvantages:

• Possibility of starvation: Lower-priority processes may wait indefinitely if higher-priority processes keep arriving.

• Priority inversion: A high-priority process can be forced to wait for a low-priority process that holds a resource it needs, and the wait grows longer if medium-priority processes preempt the low-priority holder.
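Here is a small Python sketch of the non-preemptive version for processes that all arrive at time 0. The convention that a smaller number means a higher priority is an assumption (systems differ), and `priority_schedule` and the sample values are illustrative.

```python
def priority_schedule(processes):
    """Non-preemptive priority scheduling for processes that all arrive at time 0.

    processes: list of (name, burst_time, priority) tuples.
    Assumption: a smaller number means a higher priority.
    Returns (execution_order, {name: waiting_time}).
    """
    # sorted() is stable, so processes with equal priority keep FCFS order.
    order = sorted(processes, key=lambda p: p[2])
    time = 0
    waiting = {}
    for name, burst, _priority in order:
        waiting[name] = time
        time += burst
    return [p[0] for p in order], waiting


order, waiting = priority_schedule(
    [("P1", 10, 3), ("P2", 1, 1), ("P3", 2, 4), ("P4", 1, 5), ("P5", 5, 2)]
)
print(order)                                 # ['P2', 'P5', 'P1', 'P3', 'P4']
print(sum(waiting.values()) / len(waiting))  # 8.2
```

With continuous arrivals of higher-priority work, a process such as P4 here could be pushed back indefinitely, which is the starvation problem listed above.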

ROUND ROBIN

• Round Robin (RR):

• Definition: Round Robin is a preemptive scheduling algorithm in which each process is given a fixed time slice (quantum). The scheduler treats the ready queue as a circular queue and gives each process one time slice in turn; a process that has not finished when its quantum expires is preempted and placed at the back of the queue.

• Advantages:

• Fairness: Because every process gets the same fixed time slice (quantum) in turn, no process can monopolize the CPU.

• Responsive: Suitable for interactive systems because it provides short response times.

• Disadvantages:

• Higher overhead: Context switching overhead may reduce overall system performance, especially with small time slices.

• Poor performance with varying burst times: Average waiting time is often longer than with SJF, especially when burst times vary widely; with a very large quantum, RR degenerates into FCFS.
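A minimal Round Robin simulation in Python, assuming all processes arrive at time 0 and ignoring context-switch cost; `round_robin` is a hypothetical name and the bursts (24, 3, 3) with a quantum of 4 are illustrative.

```python
from collections import deque


def round_robin(processes, quantum):
    """RR for processes that all arrive at time 0 (context-switch time ignored).

    processes: list of (name, burst_time) tuples.
    Returns {name: completion_time}.
    """
    queue = deque(processes)            # circular ready queue
    time = 0
    completion = {}
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)   # run for one quantum or until done
        time += run
        if remaining > run:
            queue.append((name, remaining - run))  # quantum expired: back of queue
        else:
            completion[name] = time
    return completion


bursts = {"P1": 24, "P2": 3, "P3": 3}
done = round_robin(list(bursts.items()), quantum=4)
waiting = {n: done[n] - bursts[n] for n in bursts}  # arrival time is 0
print(done)     # {'P2': 7, 'P3': 10, 'P1': 30}
print(waiting)  # {'P1': 6, 'P2': 4, 'P3': 7}
```

Calling `round_robin(list(bursts.items()), quantum=100)` gives the FCFS completion times (24, 27, 30), which illustrates how a very large quantum turns RR into FCFS.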

MULTILEVEL QUEUE

• Multilevel Queue:

• Definition: Multilevel queue scheduling divides the ready queue into multiple separate queues based on priority or other criteria (for example, foreground/interactive and background/batch processes). Each process is permanently assigned to one queue, each queue has its own scheduling algorithm and priority level, and the queues themselves are scheduled by priority or by dividing CPU time among them.

• Advantages:

• Organizes processes into separate queues based on priority or other criteria, providing better management and control.

• Supports different scheduling algorithms for different queues, allowing customization based on process characteristics.

• Disadvantages:

• Complexity: Managing multiple queues and scheduling policies is more complex and requires careful design and implementation.

• Priority inversion: A process in a lower-priority queue may hold a resource needed by a process in a higher-priority queue, blocking the higher-priority process.
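The sketch below models a two-queue multilevel queue under simplifying assumptions: every process arrives at time 0, the foreground queue uses Round Robin, the background queue uses FCFS, and the queues are scheduled with fixed priority, so background processes run only when the foreground queue is empty. The function name and the two-queue layout are hypothetical.

```python
from collections import deque


def multilevel_queue(foreground, background, quantum):
    """Two fixed queues; all processes arrive at time 0 (simplifying assumption).

    foreground: list of (name, burst) scheduled Round Robin with `quantum`.
    background: list of (name, burst) scheduled FCFS.
    Between queues: fixed priority, so background runs only when foreground is empty.
    Returns {name: completion_time}.
    """
    fg, bg = deque(foreground), deque(background)
    time = 0
    completion = {}
    while fg or bg:
        if fg:                              # the higher-priority queue always wins
            name, remaining = fg.popleft()
            run = min(quantum, remaining)
            time += run
            if remaining > run:
                fg.append((name, remaining - run))
            else:
                completion[name] = time
        else:
            name, burst = bg.popleft()      # FCFS: run to completion
            time += burst
            completion[name] = time
    return completion


print(multilevel_queue([("A", 5), ("B", 3)], [("C", 4)], quantum=2))
# {'B': 7, 'A': 8, 'C': 12}
```

Processes never move between queues here. If new foreground processes kept arriving (not modeled in this sketch), process C would keep waiting.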

MULTILEVEL FEEDBACK QUEUE

• Multilevel Feedback Queue:

• Definition: Multilevel feedback queue scheduling is an extension of multilevel queue scheduling in which processes can move between queues based on their behavior. A process that uses too much CPU time is moved to a lower-priority queue, which keeps I/O-bound and interactive processes in the higher-priority queues; a process that waits too long in a lower-priority queue may be moved to a higher-priority queue (aging), which prevents starvation.

• Advantages:

• Provides flexibility: Processes can move between priority queues based on their behavior and resource requirements.

• Adaptive: Adapts to changing workload characteristics and process behavior, optimizing performance over time.

• Disadvantages:

• Complexity: More complex to implement than a simple multilevel queue, requiring sophisticated feedback mechanisms and policies (the number of queues, the algorithm for each queue, and the rules for promoting and demoting processes).

• Overhead: Increased overhead due to dynamic queue management and process migration between queues.
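A minimal multilevel feedback queue sketch in Python. The configuration is an assumption chosen for illustration: three levels with quanta of 8 and 16 time units and an FCFS bottom level, all processes arriving at time 0, no I/O, and only demotion (the aging promotion described above is not modeled). The function name `mlfq` is hypothetical.

```python
from collections import deque


def mlfq(processes, quanta=(8, 16, None)):
    """Multilevel feedback queue sketch.

    processes: list of (name, burst_time) tuples, all arriving at time 0.
    quanta[i] is the time slice for queue level i (0 = highest priority);
    None means FCFS, i.e. run to completion.
    Returns {name: completion_time}.
    """
    levels = [deque() for _ in quanta]
    levels[0].extend(processes)         # every process starts in the top queue
    time = 0
    completion = {}
    while any(levels):
        # Always serve the highest-priority non-empty queue.
        i = next(k for k, level in enumerate(levels) if level)
        name, remaining = levels[i].popleft()
        quantum = quanta[i]
        run = remaining if quantum is None else min(quantum, remaining)
        time += run
        remaining -= run
        if remaining == 0:
            completion[name] = time
        else:
            # Used its whole slice, so treat it as CPU-bound and demote it
            # (a process already at the lowest level stays there).
            levels[min(i + 1, len(levels) - 1)].append((name, remaining))
    return completion


print(mlfq([("P1", 30), ("P2", 6), ("P3", 20)]))  # {'P2': 14, 'P3': 50, 'P1': 56}
```

The short job P2 finishes within its first quantum and leaves early, while the long job P1 sinks to the FCFS level; this is how the feedback rule favors short, interactive work without knowing burst lengths in advance.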

THREADS

• Definition: A thread is the smallest unit of execution within a process. Unlike processes, which are independent entities with their own memory space, threads share the same memory space and resources within a process.

• Concurrency: Threads allow multiple tasks to be executed concurrently within the same process. Each thread has its own program counter, stack, and thread-specific register values, but all threads share the code segment, data segment, and other resources of the parent process.
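The short Python program below illustrates this sharing using the standard `threading` module: four threads all update one global `counter` that lives in the process's shared data, while each thread runs `worker` on its own stack. Because the memory is shared, the updates are protected with a `threading.Lock`. The names `counter` and `worker` are illustrative.

```python
import threading

counter = 0                  # shared by every thread in the process
lock = threading.Lock()


def worker(n):
    global counter
    for _ in range(n):       # loop variable lives on this thread's own stack
        with lock:           # shared memory, so updates must be synchronized
            counter += 1


threads = [threading.Thread(target=worker, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(counter)  # 40000
```

All four threads see and modify the same `counter`; separate processes would each have had their own copy.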

TYPES OF THREADS

• User-Level Threads (ULTs): Managed entirely by a user-space thread library within the application and not visible to the operating system. ULTs are cheap to create and switch and give the application more control over thread management, but because the kernel schedules the whole process as one unit, they cannot run in parallel on multiple cores, and a blocking system call in one thread can block every thread in the process.

• Kernel-Level Threads (KLTs): Managed by the operating system kernel. Each thread is represented by a separate kernel data structure. Because the kernel schedules each thread independently, KLTs can run in parallel across multiple processors and cores, and a blocking thread does not block the others, at the cost of more expensive thread creation and switching.

RELATIONSHIPS BETWEEN USER AND KERNEL THREADS

• Many-to-One Model:

• Definition: In the many-to-one model, multiple user-level threads are mapped to a single kernel-level thread. Thread management and scheduling are handled entirely by the user-level thread library, without kernel support.

• Advantages:

• Lightweight: User-level threads incur minimal overhead because thread management requires no kernel involvement.

• Easy to implement: A user-level threading library for the many-to-one model is straightforward to implement and requires no changes to the kernel.

• Disadvantages:

• Lack of parallelism: Because all user-level threads are mapped to a single kernel-level thread, only one of them can execute at a time, even on a multi-core system, which limits parallelism and scalability.

• Blocking system calls: If a user-level thread performs a blocking system call (e.g., I/O), it blocks the entire process, preventing other user-level threads from making progress.

• Non-preemptive: The kernel sees only one schedulable thread, so the operating system scheduler cannot preempt one user-level thread in favor of another; switching between user-level threads is typically cooperative, which can cause responsiveness issues.
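A minimal Python sketch of the many-to-one idea, assuming generators stand in for user-level threads; `user_thread` and `run_many_to_one` are hypothetical names. The whole "thread library" runs on the single OS thread that calls `run_many_to_one`, so the kernel sees only one thread, and switches happen only at `yield`, i.e. cooperatively.

```python
from collections import deque


def user_thread(name, steps, log):
    """A 'user-level thread': a generator that yields to give up the CPU."""
    for i in range(steps):
        log.append(f"{name}{i}")
        yield            # cooperative switch point; the kernel never sees it


def run_many_to_one(threads):
    """User-level scheduler: round-robins generators on the one OS thread running it."""
    ready = deque(threads)
    while ready:
        t = ready.popleft()
        try:
            next(t)          # run this user-level thread until its next yield
            ready.append(t)
        except StopIteration:
            pass             # thread finished; drop it


log = []
run_many_to_one([user_thread("A", 3, log), user_thread("B", 2, log)])
print(log)  # ['A0', 'B0', 'A1', 'B1', 'A2']
```

Switching costs only a generator resume, with no kernel involvement. A blocking call such as `time.sleep()` inside any one generator would, however, stall all of them, which is the blocking-system-call disadvantage of this model.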

ONE-TO-ONE

• One-to-One Model:

• Definition: In the one-to-one model, each user-level thread is mapped to a distinct kernel-level thread. Thread management and scheduling are handled by the kernel.

• Advantages:

• Parallelism: Multiple threads can execute in parallel on multiple CPU cores, maximizing CPU utilization and parallelism.

• Preemptive: Kernel-level threads can be preempted by the operating system scheduler, allowing for better responsiveness and fairness.

• Blocking system calls: A blocking system call does not affect other threads, because each user-level thread has its own kernel-level thread.

• Disadvantages:

• Overhead: Creating and managing a kernel-level thread for every user-level thread incurs more overhead than user-level threads alone, increasing resource consumption.

• Scalability: The one-to-one model may not scale well for applications with a very large number of threads, because each thread consumes kernel resources.

• Complexity: Implementing and managing a large number of kernel-level threads can be complex and may require careful tuning.
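As an illustration, CPython's `threading.Thread` is backed by a native OS thread, so it follows the one-to-one model. The sketch below relies on `threading.get_native_id()` (Python 3.8+), which returns the ID the kernel assigned to the calling thread; the `Barrier` keeps all three threads alive until each has recorded its ID. The helper names are illustrative. Note that in CPython the global interpreter lock still limits parallel execution of Python bytecode, so this demonstrates the mapping, not a speedup.

```python
import threading

native_ids = []
ids_lock = threading.Lock()
barrier = threading.Barrier(3)


def record_native_id():
    with ids_lock:
        # Kernel-assigned ID of the OS thread backing this Python thread.
        native_ids.append(threading.get_native_id())
    barrier.wait()   # no thread exits until all three have recorded their IDs


threads = [threading.Thread(target=record_native_id) for _ in range(3)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(len(set(native_ids)))                         # 3 distinct kernel threads
print(threading.get_native_id() not in native_ids)  # True: main thread has its own
```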

MANY-TO-MANY

• Many-to-Many Model:

• Definition: In the many-to-many model, multiple user-level threads are multiplexed onto a smaller or equal number of kernel-level threads. The user-level thread library schedules user-level threads onto kernel-level threads, and the kernel schedules kernel-level threads onto CPUs.

• Advantages:

• Flexibility: Combines lightweight user-level threads with kernel-level threads that the operating system can schedule, allowing for better resource utilization and scalability.

• Improved parallelism: User-level threads can execute in parallel on multiple CPU cores, up to the number of kernel-level threads.

• Blocking system calls: A blocking system call does not block the entire process, because other user-level threads can continue executing on other kernel-level threads.

• Disadvantages:

• Complexity: The many-to-many model is more complex to implement and manage than the other models, because it requires coordination between user-level and kernel-level thread management.

• Overhead: Multiplexing user-level threads onto kernel-level threads incurs additional overhead compared to the many-to-one model.

• Resource contention: Because there are usually more user-level threads than kernel-level threads, runnable user-level threads must compete for the available kernel-level threads, which can reduce performance, especially when some kernel-level threads are blocked.
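A rough Python sketch of many-to-many multiplexing, under stated assumptions: generators again play the role of user-level threads, and a fixed pool of OS threads (`kernel_worker`) repeatedly takes a task from a shared run queue, runs it to its next `yield`, and requeues it, so any task can resume on any OS thread. All names here are hypothetical; this is not how any particular OS or library implements M:N threading, and in CPython the global interpreter lock prevents the OS threads from running Python bytecode in parallel.

```python
import queue
import threading


def user_task(name, steps):
    """A 'user-level thread' written as a generator; each yield is a switch point."""
    for i in range(steps):
        yield f"{name}{i}"


def kernel_worker(run_queue, results, lock):
    """Body of one OS thread that runs user-level tasks taken from a shared run queue."""
    while True:
        task = run_queue.get()
        if task is None:               # shutdown sentinel
            return
        try:
            item = next(task)          # run this user task until its next yield
            with lock:
                results.append(item)
            run_queue.put(task)        # reschedule; any OS thread may resume it
        except StopIteration:
            pass                       # user task finished
        finally:
            run_queue.task_done()


def run_many_to_many(tasks, num_kernel_threads):
    """Multiplex len(tasks) user-level tasks onto num_kernel_threads OS threads."""
    run_queue = queue.Queue()
    results, lock = [], threading.Lock()
    for task in tasks:
        run_queue.put(task)
    workers = [
        threading.Thread(target=kernel_worker, args=(run_queue, results, lock))
        for _ in range(num_kernel_threads)
    ]
    for w in workers:
        w.start()
    run_queue.join()                   # returns once every user task has finished
    for _ in workers:
        run_queue.put(None)            # one sentinel per OS thread
    for w in workers:
        w.join()
    return results


out = run_many_to_many([user_task(n, 3) for n in "ABCDE"], num_kernel_threads=2)
print(len(out))   # 15: five user tasks x three steps, run on two OS threads
```

The interleaving across tasks varies from run to run, but each task's own steps stay in order because a task is in the run queue at most once at a time. If one OS thread blocked, the other could keep running the remaining user tasks.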

You might also like

- Unit-VI: Advance Tools and Technologies (And Problem Solving in The OS)Document76 pagesUnit-VI: Advance Tools and Technologies (And Problem Solving in The OS)NERO FERONo ratings yet

- Process Scheduling - 3Document28 pagesProcess Scheduling - 3StupefyNo ratings yet

- Chapter 10Document25 pagesChapter 10Fauzan PrasetyoNo ratings yet

- Operting System BookDocument48 pagesOperting System Bookbasit qamar100% (3)

- Operating System Chapter 4 - MaDocument41 pagesOperating System Chapter 4 - MaSileshi Bogale HaileNo ratings yet

- Mohamed Abdelrahman Anwar - 20011634 - Sheet 4Document15 pagesMohamed Abdelrahman Anwar - 20011634 - Sheet 4mohamed abdalrahmanNo ratings yet

- OS Unit - IIDocument74 pagesOS Unit - IIDee ShanNo ratings yet

- Scheduler Activations: Effective Kernel Support For The User-Level Management of ParallelismDocument29 pagesScheduler Activations: Effective Kernel Support For The User-Level Management of ParallelismsushmsnNo ratings yet

- Unit 2Document60 pagesUnit 2gdzqxjgomvnldysggkNo ratings yet

- CPU SchedulingDocument6 pagesCPU SchedulingharshimogalapalliNo ratings yet

- Elg 6171 Scheduling PresentationDocument20 pagesElg 6171 Scheduling PresentationMoulali SkNo ratings yet

- Lecture 4 - Process - CPU SchedulingDocument38 pagesLecture 4 - Process - CPU SchedulingtalentNo ratings yet

- Lecture 2Document17 pagesLecture 2Lets clear Jee mathsNo ratings yet

- CO4 CHAP 9 - (13-24) - PracticeDocument28 pagesCO4 CHAP 9 - (13-24) - PracticeSoumyajit HazraNo ratings yet

- Lecture 5 Unit 3Document15 pagesLecture 5 Unit 3Dharshana BNo ratings yet

- 2 Types of Operating SystemDocument26 pages2 Types of Operating Systemanshuman1006No ratings yet

- Processes: Lenmar T. Catajay, CoeDocument62 pagesProcesses: Lenmar T. Catajay, Coebraynat kobeNo ratings yet

- CPU Scheduling 2Document61 pagesCPU Scheduling 2kranthi chaitanyaNo ratings yet

- ES&RTOS Unit-2Document45 pagesES&RTOS Unit-2Sai KallemNo ratings yet

- Chapter - 1Document11 pagesChapter - 1Harshada BavaleNo ratings yet

- OS Assignment 2Document3 pagesOS Assignment 2Rizwan SannyNo ratings yet

- Os Unit - IiDocument80 pagesOs Unit - IiChandanaNo ratings yet

- ThreadsDocument47 pagesThreads21PC12 - GOKUL DNo ratings yet

- 3 - OS Process CPU SchedulingDocument38 pages3 - OS Process CPU SchedulingKaushal AnandNo ratings yet

- Parallel Programming PlatformsDocument109 pagesParallel Programming PlatformsKarthik LaxmikanthNo ratings yet

- OS Mini ProjectDocument21 pagesOS Mini ProjectDarrel Soo Pheng Kian50% (2)

- Process Mangement 2Document21 pagesProcess Mangement 2Glyn Sarita GalleposoNo ratings yet

- Scheduling: Covers Chapter#09 From TextbookDocument39 pagesScheduling: Covers Chapter#09 From TextbookFootball King'sNo ratings yet

- Multiprocessor and Real-Time SchedulingDocument57 pagesMultiprocessor and Real-Time Schedulingapi-3845765No ratings yet

- Module - 2 Cont..: Threads, Scheduling, SynchronizationDocument58 pagesModule - 2 Cont..: Threads, Scheduling, SynchronizationNarmatha ThiyagarajanNo ratings yet

- 04 Process Process MGT - 3Document34 pages04 Process Process MGT - 3Jacob AbrahamNo ratings yet

- Lecture 06Document16 pagesLecture 06api-3801184No ratings yet

- HWSW Co-Synthesis AlgorithmsDocument38 pagesHWSW Co-Synthesis AlgorithmsAbdur-raheem Ashrafee BeparNo ratings yet

- Multiprocessing: - ClassificationDocument14 pagesMultiprocessing: - Classificationdomainname9No ratings yet

- Week 1 - Class Orientation & Introduction To OSDocument26 pagesWeek 1 - Class Orientation & Introduction To OSCrisostomo CalitinaNo ratings yet

- 06 - CPU Scheduling - ConDocument31 pages06 - CPU Scheduling - Conعمرو جمالNo ratings yet

- L8 Processs Management PART IIDocument18 pagesL8 Processs Management PART IIProf. DEVIKA .RNo ratings yet

- Unit 2 - Process ManagementDocument118 pagesUnit 2 - Process ManagementARVIND HNo ratings yet

- Uniprocessor Scheduling: William StallingsDocument58 pagesUniprocessor Scheduling: William Stallingsnaumanahmed19No ratings yet

- Threads in Operating SystemDocument103 pagesThreads in Operating SystemMonika SahuNo ratings yet

- Operating SystemDocument29 pagesOperating SystemFrancine EspinedaNo ratings yet

- Module 2Document127 pagesModule 2LaxminathTripathyNo ratings yet

- Unit 5Document45 pagesUnit 5Abhinav JaiswalNo ratings yet

- Scheduling AlgorithmsDocument75 pagesScheduling AlgorithmsGowtam ReddyNo ratings yet

- Os Unit III 2018 ArsDocument102 pagesOs Unit III 2018 ArsAditiNo ratings yet

- Os Unit 2Document60 pagesOs Unit 2Anish Dubey SultanpurNo ratings yet

- Chapter No 4Document86 pagesChapter No 4CM 45 KADAM CHAITANYANo ratings yet

- Chapter 09Document26 pagesChapter 09Fauzan PrasetyoNo ratings yet

- Os - 5Document15 pagesOs - 5StupefyNo ratings yet

- Processes: Operating Systems: Internals and Design Principles, 6/EDocument52 pagesProcesses: Operating Systems: Internals and Design Principles, 6/EM Shahid KhanNo ratings yet

- Threads: Basic Theory and Libraries: Unit of Resource OwnershipDocument33 pagesThreads: Basic Theory and Libraries: Unit of Resource OwnershipSudheera PalihakkaraNo ratings yet

- Economics PPT FinalDocument10 pagesEconomics PPT FinalDebasis GaraiNo ratings yet

- Module 3 ConcurrencyDocument26 pagesModule 3 Concurrency202202214No ratings yet

- Multiprocessing SchedullingDocument22 pagesMultiprocessing SchedullingOscar LoorNo ratings yet

- Short Notes Operating Systems 1 11Document26 pagesShort Notes Operating Systems 1 11see thaNo ratings yet

- OS Unit 2Document6 pagesOS Unit 2Study WorkNo ratings yet

- Operating Systems Interview Questions You'll Most Likely Be Asked: Job Interview Questions SeriesFrom EverandOperating Systems Interview Questions You'll Most Likely Be Asked: Job Interview Questions SeriesNo ratings yet

- Digital Control Engineering: Analysis and DesignFrom EverandDigital Control Engineering: Analysis and DesignRating: 3 out of 5 stars3/5 (1)

- MVI56E MCMMCMXT User Manual PDFDocument199 pagesMVI56E MCMMCMXT User Manual PDFCarlos Andres Porras NinoNo ratings yet

- C2804x C/C++ Header Files and Peripheral Examples Quick StartDocument39 pagesC2804x C/C++ Header Files and Peripheral Examples Quick StartVo Thai Huy HoangNo ratings yet

- DWG CMP MX Mars CompressedAir 20210219 M 2Document1 pageDWG CMP MX Mars CompressedAir 20210219 M 2Edgardo MartinezNo ratings yet

- UML Distilled Second Edition A Brief Guide To The Standard Object Modeling LanguageDocument234 pagesUML Distilled Second Edition A Brief Guide To The Standard Object Modeling LanguageMichael Plexousakis100% (1)

- Spectre User ManualDocument20 pagesSpectre User ManualAaron SmithNo ratings yet

- VimDocument157 pagesVimManu CodjiaNo ratings yet

- Virtual Machines Provisioning and Migration ServicesDocument41 pagesVirtual Machines Provisioning and Migration ServicesShivam ChaharNo ratings yet

- MB Manual A520m-S2h e 1201Document40 pagesMB Manual A520m-S2h e 1201fernandes.angeljNo ratings yet

- Laura Mercado: Whoiam StudiesDocument1 pageLaura Mercado: Whoiam StudiesEdilson Laura MercadoNo ratings yet

- DJI L1 Operation Guidebook V1.1Document47 pagesDJI L1 Operation Guidebook V1.1Sebastian ChaconNo ratings yet

- Ict. MCQDocument14 pagesIct. MCQCommerce HoDNo ratings yet

- ZEDI Detailed User GuideDocument23 pagesZEDI Detailed User GuideSuresh ChandraNo ratings yet

- Dynamo in Civil 3D Introduction - Unlocking The Mystery of ScriptingDocument43 pagesDynamo in Civil 3D Introduction - Unlocking The Mystery of ScriptingDaniel Pasy SelekaNo ratings yet

- 4.2 Acitivity SoftwareModelingIntroduction - REVISED-2Document5 pages4.2 Acitivity SoftwareModelingIntroduction - REVISED-2Catherine RosenfeldNo ratings yet

- RDX Manager Manual 1022447Document41 pagesRDX Manager Manual 1022447Boopala KrishnanNo ratings yet

- Virtua-Fighter-2 Manual Win ENDocument21 pagesVirtua-Fighter-2 Manual Win ENDJ MOB CHANNELNo ratings yet

- University of The Visayas: Final Examination (1 SY: 2019-2020Document4 pagesUniversity of The Visayas: Final Examination (1 SY: 2019-2020ChaseWenn Del HortadoNo ratings yet

- VideoXpert VMS Sales Sheet-2021-V4Document4 pagesVideoXpert VMS Sales Sheet-2021-V4Shahid MehboobNo ratings yet

- GGR272 - W2022-Syllabus-1Document9 pagesGGR272 - W2022-Syllabus-1Ada LovelaceNo ratings yet

- CNC Programming: Profile No.: 272 NIC Code:62011Document10 pagesCNC Programming: Profile No.: 272 NIC Code:62011Sanyam BugateNo ratings yet

- Multimedia Course SyllabusDocument35 pagesMultimedia Course Syllabusartsraj80% (5)

- Why Blockchain Unit 1Document40 pagesWhy Blockchain Unit 1alfiyashajakhanNo ratings yet

- Rift TD Tutorial - Deposition ResultsDocument11 pagesRift TD Tutorial - Deposition ResultsmavinNo ratings yet

- MyDomino - EN - ManualsPortal - ScribingLaser - D Series Itech - English - D120i D320i D620i Pharma User Guide English L027971 4Document14 pagesMyDomino - EN - ManualsPortal - ScribingLaser - D Series Itech - English - D120i D320i D620i Pharma User Guide English L027971 4Nikola PerencevicNo ratings yet

- Lilypond Usage: The Lilypond Development TeamDocument73 pagesLilypond Usage: The Lilypond Development Teamm8rcjndgxNo ratings yet

- Autocad Training in AmeerpetDocument14 pagesAutocad Training in AmeerpetOmega caddNo ratings yet

- Cloud Hosting Solutions Built For Your BusinessDocument8 pagesCloud Hosting Solutions Built For Your BusinessOxtrys CloudNo ratings yet

- Recognition of Text in Textual Images Using Deep LearningDocument14 pagesRecognition of Text in Textual Images Using Deep LearningRishi keshNo ratings yet

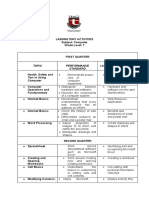

- Laboratory Activities - Computer 7Document2 pagesLaboratory Activities - Computer 7John Marvin CanariaNo ratings yet

- Text To Speech Conversion in MATLAB. Access Speech Properties of Windows From MATLAB. - ProgrammerworldDocument1 pageText To Speech Conversion in MATLAB. Access Speech Properties of Windows From MATLAB. - ProgrammerworldamanNo ratings yet