
Kode Vicious

Every Silver Lining Has a Cloud

Cache is king. And if your cache is cut, you’re going to feel it.

Dear KV,

My team and I have spent the past eight weeks debugging an application performance problem in a system that we moved to a cloud provider. Now, after a few drinks to celebrate, we thought we would tell you the story and see if you have any words of wisdom.

In 2016, our management decided that, to save money, we would move all our services from self-hosted servers in two racks in our small in-office data center into the cloud so that we could take advantage of the elastic pricing available from most cloud providers. Our system uses fairly generic, off-the-shelf, open-source components, including Postgres and memcached, to provide the back-end storage to our web service.

Over the past two years we built up a good deal of expertise in tuning the system for performance, so we thought we were in a good place to understand what we needed when we moved the service to the cloud. What we found was quite the opposite.

Our first problem was very inconsistent response times to queries. The long tail of slow database queries began to grow the moment we moved our systems into the cloud service, but each time we went to look for a root cause, the problem would disappear. The tools we would normally use to diagnose the issues we found on bare metal also gave far more varied results than expected.

acmqueue | march-april 2018 1


In the end, some of the systems could not be allocated elastically but had to be statically allocated, so the service would behave in a consistent manner. The savings that management expected were never realized. Perhaps the only bright side is that we no longer have to maintain our own deployment tools, because deployment is handled by the cloud provider.

As we sip our drinks, we wonder, is this really a common problem, or could we have done something to have made this transition less painful?

Rained on our Parade

Dear Rained,

Clearly, your management has never heard the phrase, “You get what you pay for.” Or perhaps they heard it and didn’t realize it applied to them. The savings in cloud computing come at the expense of a loss of control over your systems, which is summed up best in the popular nerd sticker that says, “The Cloud Is Just Other People’s Computers.”

All the tools you built during those last two years work only because they have direct knowledge of the system components down to the metal, or at least as close to the metal as possible. Once you move a system into the cloud, your application is sharing resources with other, competing systems, and if you’re taking advantage of elastic pricing, then your machines may not even be running until the cloud provider deems them necessary. Request latency is dictated by the immediate availability of resources to answer the incoming request. These resources include CPU cycles, data in memory, data in CPU caches, and data on storage. In a traditional server, all these resources are controlled by your operating system at the behest of the programs running on top of the operating system; but in a cloud, there is another layer, the virtual machine, which adds another turtle to the stack, and even when it’s turtles all the way down, that extra turtle is going to be the source of resource variation. This is one reason you saw inconsistent results after you moved your system to the cloud.
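One way to put numbers on that kind of variation is to compare the median request latency against the tail. The following is a minimal sketch, not a description of any tool from the letter: the function name latency_summary and both sample lists are hypothetical, made up purely for illustration.

```python
import statistics


def latency_summary(samples_ms):
    """Return the median, 99th percentile, and maximum of latencies in ms."""
    ordered = sorted(samples_ms)
    # quantiles(n=100) yields 99 cut points; index 98 is the 99th percentile.
    cuts = statistics.quantiles(ordered, n=100, method="inclusive")
    return {
        "p50": statistics.median(ordered),
        "p99": cuts[98],
        "max": ordered[-1],
    }


# Hypothetical samples: a quiet dedicated box versus a contended instance.
bare_metal = [10.0] * 98 + [12.0, 15.0]
shared_vm = [10.0] * 90 + [40.0] * 5 + [200.0] * 5

if __name__ == "__main__":
    print("bare metal:", latency_summary(bare_metal))
    print("shared VM: ", latency_summary(shared_vm))
```

In this made-up data the two medians are identical while the tails differ widely, which is the pattern the letter describes: the typical request looks fine, and the trouble hides in the slowest few percent.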

Let’s think only about the use of CPU caches for a moment. Modern CPUs gain quite a bit of their overall performance from having large, efficiently managed L1, L2, and sometimes L3 caches. The CPU caches are shared among all programs, but in the case of a virtualized system with several tenants, the amount of cache available to any one program, such as your database or memcached server, shrinks roughly in inverse proportion to the number of tenants. If you had a beefy server in your original colo, you were definitely gaining a performance boost from the large caches in those CPUs. The very same server running in a cloud provider is going to give your programs drastically less cache space with which to work.

With less cache, fewer things are kept in fast memory, meaning that your programs now need to go to regular RAM, which is often much slower than cache. Those accesses to memory are now competing with other tenants that are also squeezed for cache. Therefore, although the real server on which the instances are running might be much larger than your original hardware, perhaps holding nearly a terabyte of RAM, each tenant receives far worse performance in a virtual instance of the same memory size than it would if it had a real server with the same amount of memory.
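The cost of a lower cache hit rate can be sketched with the standard average-access-time calculation: hits are served at cache speed, and misses pay the price of a trip to RAM. The latencies and hit rates below are assumptions chosen only to show the shape of the effect, not measurements from any real system, and the function name is hypothetical.

```python
def average_access_time_ns(hit_rate, cache_ns, ram_ns):
    """Weighted average access time: hits cost cache_ns, misses cost ram_ns."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cache_ns + (1.0 - hit_rate) * ram_ns


# Assumed figures: 5 ns for a cache hit, 100 ns for an access that goes to RAM.
roomy = average_access_time_ns(0.95, cache_ns=5.0, ram_ns=100.0)
squeezed = average_access_time_ns(0.80, cache_ns=5.0, ram_ns=100.0)

if __name__ == "__main__":
    print(f"roomy cache:    {roomy:.2f} ns per access")
    print(f"squeezed cache: {squeezed:.2f} ns per access")
```

Under these assumed numbers, a drop in hit rate from 95 percent to 80 percent more than doubles the average access time, even though the RAM itself is no slower.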

Let’s imagine this with actual numbers. If your team owned a modern dual-processor server with 128 gigabytes of RAM, each processor would have 16 megabytes (not gigabytes) of L2 cache. If that server is running an operating system, a database, and memcached, then those three programs share that 16 megabytes. Taking the same server and increasing the memory to 512 gigabytes, and then having four tenants, means that the available cache space has now shrunk to one-fourth of what it was: each tenant now receives only four megabytes of L2 cache and has to compete with three other tenants for all the same resources it had before. In modern computing, cache is king, and if your cache is cut, you’re going to feel it, as you did when trying to fix your performance problems.
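The arithmetic above can be written out as a short sketch. It assumes the cache is split evenly among tenants, which is a simplification: real hardware does not necessarily enforce equal shares, and the actual split shifts with each tenant's workload. The function name is hypothetical.

```python
def cache_per_tenant_mb(total_cache_mb, tenants):
    """Even-split estimate of the cache each tenant can expect, in megabytes."""
    if tenants < 1:
        raise ValueError("tenants must be at least 1")
    return total_cache_mb / tenants


if __name__ == "__main__":
    # 16 MB is the per-processor figure used in the example above.
    for tenants in (1, 2, 4, 8):
        share = cache_per_tenant_mb(16, tenants)
        print(f"{tenants} tenant(s): {share:.1f} MB each")
```

With four tenants the even-split share is the four megabytes quoted above, and each additional tenant cuts it further.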

Most cloud providers offer systems that are nonelastic, as well as elastic, but having a server always available in a cloud service is more expensive than hosting one at a traditional colocation facility. Why is that? It’s because the economies of scale for cloud providers work only if everyone is playing the game and allowing the cloud provider to dictate how resources are consumed.

Some providers now have something called Metal-as-a-Service, which I really think ought to mean that an ’80s metal band shows up at your office, plays a gig, smashes the furniture, and urinates on the carpet, but alas, it’s just the cloud providers’ way of finally admitting that cloud computing isn’t really the right answer for all applications. For systems that require deterministic performance guarantees to work well, you really have to think very hard about whether or not a cloud-based system is the right answer, because providing deterministic guarantees requires quite a bit of control over the variables in the environment. Cloud systems are not about giving you control; they’re about the owner of the systems having the control.

KV

Related articles

Cloud Calipers
Kode Vicious
Naming the next generation and remembering that the cloud is just other people’s computers
https://queue.acm.org/detail.cfm?id=2993454

20 Obstacles to Scalability
Sean Hull
Watch out for these pitfalls that can prevent web application scaling.
https://queue.acm.org/detail.cfm?id=2512489

A Guided Tour through Data-center Networking
Dennis Abts, Bob Felderman
A good user experience depends on predictable performance within the data-center network.
https://queue.acm.org/detail.cfm?id=2208919

Kode Vicious, known to mere mortals as George V. Neville-Neil, works on networking and operating-system code for fun and profit. He also teaches courses on various subjects related to programming. His areas of interest are code spelunking, operating systems, and rewriting your bad code (OK, maybe not that last one). He earned his bachelor’s degree in computer science at Northeastern University in Boston, Massachusetts, and is a member of ACM, the Usenix Association, and IEEE. Neville-Neil is the co-author with Marshall Kirk McKusick and Robert N. M. Watson of The Design and Implementation of the FreeBSD Operating System (second edition). He is an avid bicyclist and traveler who currently lives in New York City.

Copyright © 2018 held by owner/author. Publication rights licensed to ACM.

You might also like

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (590)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (540)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (401)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5813)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (844)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (822)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (897)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (348)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1092)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- PC World Magazine MarchDocument72 pagesPC World Magazine MarchJames MrBoombastic WhyteNo ratings yet

- MindfulnessDocument14 pagesMindfulnessAnthony FelderNo ratings yet

- Programmable Logic Circuit PLCDocument79 pagesProgrammable Logic Circuit PLCRahul100% (7)

- Scheduling of Operating System Services: Stefan BonfertDocument2 pagesScheduling of Operating System Services: Stefan BonfertAnthony FelderNo ratings yet

- Boosting The Cloud Meta-Operating System With Heterogeneous Kernels. A Novel Approach Based On Containers and MicroservicesDocument6 pagesBoosting The Cloud Meta-Operating System With Heterogeneous Kernels. A Novel Approach Based On Containers and MicroservicesAnthony FelderNo ratings yet

- Frightening Small Children and Disconcerting Grown-Ups: Concurrency in The Linux KernelDocument29 pagesFrightening Small Children and Disconcerting Grown-Ups: Concurrency in The Linux KernelAnthony FelderNo ratings yet

- Theoretical Study Based Analysis On The Facets of MPI in Parallel ComputingDocument4 pagesTheoretical Study Based Analysis On The Facets of MPI in Parallel ComputingAnthony FelderNo ratings yet

- Understanding Fileless Attacks On Linux-Based Iot Devices With HoneycloudDocument12 pagesUnderstanding Fileless Attacks On Linux-Based Iot Devices With HoneycloudAnthony FelderNo ratings yet

- Top 10 Cloud Computing PapersDocument30 pagesTop 10 Cloud Computing PapersAnthony FelderNo ratings yet

- Linux KernelsDocument7 pagesLinux KernelsAnthony FelderNo ratings yet

- Net CloudfutureDocument19 pagesNet CloudfutureAnthony FelderNo ratings yet

- A Practical Guide To Scientific Data AnalysisDocument13 pagesA Practical Guide To Scientific Data AnalysisAnthony FelderNo ratings yet

- Linux EmdbedDocument36 pagesLinux EmdbedAnthony FelderNo ratings yet

- Algorithms: Modern SystemsDocument21 pagesAlgorithms: Modern SystemsAnthony FelderNo ratings yet

- 2018 TSPC ZouDocument20 pages2018 TSPC ZouAnthony FelderNo ratings yet

- PHD Positions at Technical University of MunichDocument1 pagePHD Positions at Technical University of MunichAnthony FelderNo ratings yet

- H Uman L Iberation: R Emoving B Iological and P Sychological B Arriers To F ReedomDocument15 pagesH Uman L Iberation: R Emoving B Iological and P Sychological B Arriers To F ReedomAnthony FelderNo ratings yet

- Good Things For Those Who Wait: Predictive Modeling Highlights Importance of Delay Discounting For Income AttainmentDocument10 pagesGood Things For Those Who Wait: Predictive Modeling Highlights Importance of Delay Discounting For Income AttainmentAnthony FelderNo ratings yet

- FPGAs in Data CentersDocument6 pagesFPGAs in Data CentersAnthony FelderNo ratings yet

- FLOSS Network EmulatorsDocument8 pagesFLOSS Network EmulatorsAnthony FelderNo ratings yet

- p10 Chisnall PDFDocument13 pagesp10 Chisnall PDFAnthony FelderNo ratings yet

- Models of Quantum Computation and Quantum Programming LanguagesDocument20 pagesModels of Quantum Computation and Quantum Programming LanguagesAnthony FelderNo ratings yet

- Th-xxpx80 v1.13 Firmware GuideDocument13 pagesTh-xxpx80 v1.13 Firmware Guideadmin323No ratings yet

- N428E02 2-Channel RS485 Relay ManualDocument7 pagesN428E02 2-Channel RS485 Relay ManualMirosław WalasikNo ratings yet

- TP Yoga14 Frubom 20151130Document12 pagesTP Yoga14 Frubom 20151130sdifghweiu wigufNo ratings yet

- RSLinx Classic Release Notes v2.59.00Document24 pagesRSLinx Classic Release Notes v2.59.00Luis Alberto RamosNo ratings yet

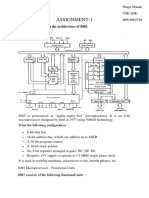

- MPMC Assignment-1Document7 pagesMPMC Assignment-113Panya CSE2No ratings yet

- Service Manual: Viewsonic Vp201B/S-1Document77 pagesService Manual: Viewsonic Vp201B/S-1Digital_defNo ratings yet

- DT-176CV2: True Rms Voltage & Current Data LoggerDocument2 pagesDT-176CV2: True Rms Voltage & Current Data LoggerAndrzej GomulaNo ratings yet

- C-Code Software Routines For Using The SPI Interface On The MAX7456 On-Screen DisplayDocument9 pagesC-Code Software Routines For Using The SPI Interface On The MAX7456 On-Screen DisplayVinay Ashwath100% (2)

- Review Your Precision 5860 TowerDocument2 pagesReview Your Precision 5860 TowerLuis Alberto Bernal AnotaNo ratings yet

- Coa CH4Document6 pagesCoa CH4vishalNo ratings yet

- ArduinoDocument77 pagesArduinoAntonio C. KeithNo ratings yet

- Boot Time Labs PDFDocument28 pagesBoot Time Labs PDFVaibhav DhinganiNo ratings yet

- LDM 03Document6 pagesLDM 03rasimNo ratings yet

- MPMC MCQDocument9 pagesMPMC MCQDhivyaManian83% (6)

- Graphtec CAMEODocument2 pagesGraphtec CAMEOLeandro ChaileNo ratings yet

- IOT Liquid Level Monitoring SystemDocument5 pagesIOT Liquid Level Monitoring SystemNegmNo ratings yet

- How To Create LVM Using Vgcreate, Lvcreate, and Lvextend lvm2 CommandsDocument4 pagesHow To Create LVM Using Vgcreate, Lvcreate, and Lvextend lvm2 CommandsssdsNo ratings yet

- Department of Computer Science and EngineeringDocument3 pagesDepartment of Computer Science and EngineeringVSBCETC EXAM CELLNo ratings yet

- Case Study On FujitsuDocument14 pagesCase Study On Fujitsuhimanisharma9No ratings yet

- Grade 10 - L2Document21 pagesGrade 10 - L2Jackielyn Canimo GaitanoNo ratings yet

- Alarm Clock MANUALDocument15 pagesAlarm Clock MANUALShankar ArunmozhiNo ratings yet

- Linx 7900 Spectrum Web Mp42143 03Document2 pagesLinx 7900 Spectrum Web Mp42143 03khoiminh8714No ratings yet

- Radisys Sc815e Datasheet 14083Document3 pagesRadisys Sc815e Datasheet 14083Marko AgassiNo ratings yet

- Estimate 1194 From H STRUCTURED SERVICESDocument2 pagesEstimate 1194 From H STRUCTURED SERVICESAnonpcNo ratings yet

- XUF Card Firmware Update 8412 InfosheetDocument2 pagesXUF Card Firmware Update 8412 InfosheetPaul MirtschinNo ratings yet

- Memory Management: Early SystemsDocument49 pagesMemory Management: Early Systemsrvaleth100% (1)

- Interpreting Esxtop Statistics - VMware CommunitiesDocument50 pagesInterpreting Esxtop Statistics - VMware CommunitiesFacer DancerNo ratings yet

- Assignment 2 QP MPMC - ITDocument1 pageAssignment 2 QP MPMC - ITProjectsNo ratings yet