International Journal of Science and Research (IJSR)

ISSN (Online): 2319-7064

Index Copernicus Value (2013): 6.14 | Impact Factor (2015): 6.391

Hadoop Ecosystem: An Introduction

Sneha Mehta1, Viral Mehta2

1 International Institute of Information Technology, Department of Information Technology, Pune, India

2 MasterCard Technology Pvt. Ltd., Pune, India

Abstract: Hadoop, a de facto industry standard, has become the kernel of the distributed operating system for Big Data. Hadoop has gained popularity because it can store, analyze, and access large amounts of data quickly and cost-effectively on clusters of commodity hardware. But no one uses a kernel alone. “Hadoop” is usually taken to mean the combination of HDFS and MapReduce. To complement these Hadoop modules, a variety of other projects provide specialized services that make Hadoop more accessible and more usable; collectively they are known as the Hadoop Ecosystem. Each component of the ecosystem is a distinct entity that addresses a particular need. The current Hadoop ecosystem consists of several layers, each performing a different kind of task: storing data, processing stored data, allocating resources, and supporting different programming languages for developing applications in the Hadoop ecosystem.

Keywords: HDFS, HBase, MapReduce, YARN, Hive, Pig, Mahout, Avro, Sqoop, Oozie, Chukwa, Flume, Zookeeper

1. Introduction

The Hadoop Ecosystem is a framework of complex and evolving tools and components, each well suited to solving particular problems. Some of the elements may be very dissimilar from each other in terms of their architecture; however, what keeps them all together under a single roof is that they all derive their functionality from the scalability and power of Hadoop. The Hadoop Ecosystem is divided into four layers: data storage, data processing, data access, and data management. Figure 1 depicts how the diverse elements of Hadoop [1] are involved at the various layers of processing data.

Each component of the Hadoop ecosystem is a distinct entity. A holistic view of the Hadoop architecture gives prominence to four modules: Hadoop Common, Hadoop YARN, the Hadoop Distributed File System (HDFS), and Hadoop MapReduce. Hadoop Common provides the Java libraries, utilities, OS-level abstractions, and the Java files and scripts needed to run Hadoop, while Hadoop YARN is a framework for job scheduling and cluster resource management. HDFS provides high-throughput access to application data, and Hadoop MapReduce provides YARN-based parallel processing of large data sets.

The Hadoop ecosystem [15] [18] [19] includes other tools that address particular needs. Hive is a SQL dialect and Pig is a dataflow language; both hide the tedium of creating MapReduce jobs behind higher-level abstractions more appropriate for user goals. HBase is a column-oriented database management system that runs on top of HDFS. Avro provides data serialization and data exchange services for Hadoop. Sqoop, whose name combines "SQL" and "Hadoop", transfers data between relational databases and Hadoop. Zookeeper is used for coordinating distributed services, and Oozie is a workflow scheduling system. In the absence of an ecosystem [11] [12], developers have to implement separate sets of technologies to create Big Data [20] solutions.

Figure 1: Hadoop Ecosystem

2. Data Storage Layer

Data Storage is the layer where the data is stored in a distributed file system; it consists of HDFS and HBase column-oriented storage. HBase is a scalable, distributed database that supports structured data storage for large tables.

2.1 HDFS

HDFS, the storage layer of Hadoop, is a distributed, scalable, Java-based file system adept at storing large volumes of data with high-throughput access to application data on commodity machines, providing very high aggregate bandwidth across the cluster. When data is pushed to HDFS, it is automatically split into multiple blocks, which are stored and replicated across the cluster, thus ensuring high availability and fault tolerance.
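The splitting and replication of a file in HDFS can be illustrated with a small Python sketch. It is only a toy model, not the HDFS implementation: the 128 MB block size and replication factor of 3 are assumed values chosen for illustration, the DataNode names are hypothetical, and the round-robin placement is a simplification of the placement policy a real cluster would use.

```python
MB = 1024 * 1024

def split_into_blocks(file_size, block_size):
    """Return the size in bytes of each block needed to hold the file."""
    full_blocks, remainder = divmod(file_size, block_size)
    return [block_size] * full_blocks + ([remainder] if remainder else [])

def place_replicas(num_blocks, datanodes, replication):
    """Map each block index to `replication` distinct DataNodes (round-robin)."""
    if replication > len(datanodes):
        raise ValueError("replication factor exceeds number of DataNodes")
    return {
        block: [datanodes[(block + i) % len(datanodes)] for i in range(replication)]
        for block in range(num_blocks)
    }

def unavailable_blocks(placement, failed_nodes):
    """Return the blocks whose replicas all sit on failed DataNodes."""
    failed = set(failed_nodes)
    return [block for block, nodes in placement.items() if set(nodes) <= failed]

# Assumed example: a 300 MB file, 128 MB blocks, four hypothetical DataNodes.
blocks = split_into_blocks(300 * MB, 128 * MB)
placement = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"], replication=3)

print([size // MB for size in blocks])         # block sizes in MB
print(placement)                               # block index -> DataNodes
print(unavailable_blocks(placement, ["dn2"]))  # blocks lost if dn2 fails
```

With three replicas per block, the loss of any single DataNode leaves every block readable from another node, which is the fault tolerance property described above.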

Volume 5 Issue 6, June 2016

www.ijsr.net

Licensed Under Creative Commons Attribution CC BY

Paper ID: NOV164121 http://dx.doi.org/10.21275/v5i6.NOV164121


HDFS comprises two important components (Fig. 2): the NameNode and the DataNodes. HDFS operates on a master-slave architecture in which the NameNode acts as the master node that keeps track of the storage cluster, and the DataNodes act as slave nodes, one on each of the machines that make up a Hadoop cluster.

Figure 2: HDFS Architecture

HDFS works on a write-once, read-many-times approach. Because files are not modified after they are written, replicas do not have to be kept in sync under updates, which keeps the risk of replication errors low even at very large data volumes. This replication of data across the cluster provides fault tolerance and resilience against server failure. Data replication, data resilience, and data integrity are the three key features of HDFS.

NameNode: It acts as the master of the system. It maintains the namespace, i.e., directories and files, and manages the blocks that are present on the DataNodes.

DataNodes: They are the slaves, deployed on each machine, that provide the actual storage and are responsible for serving read and write requests from clients. Additionally, DataNodes communicate with each other to co-operate and co-ordinate in file system operations.

2.2 HBase

HBase is a scalable, distributed database that supports structured data storage for large tables. Apache HBase provides Bigtable-like capabilities on top of Hadoop and HDFS. Its data model is similar to Google’s Bigtable and is designed to provide quick random access to huge amounts of structured data. HBase facilitates reading and writing Big Data randomly and efficiently in real time. It stores data in tables with rows and columns, as in an RDBMS. HBase tables have one key feature called “versioning”, which keeps track of the changes made to a cell and allows the retrieval of a previous version of the cell contents, if required.

HBase [2] also provides a variety of functional data processing features, such as consistency, sharding, high availability, a client API, and support for IT operations.

3. Data Processing Layer

Scheduling, resource management, and cluster management are handled here. YARN job scheduling and cluster resource management, together with MapReduce, are located in this layer.

3.1 MapReduce

MapReduce [8] is a software framework for distributed processing of large data sets that serves as the compute layer of Hadoop. It processes vast amounts of data (multi-terabyte data sets) in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.

A MapReduce job usually splits the input data set into independent chunks, which are processed by the map tasks in a completely parallel manner. The framework sorts the outputs of the maps, which are then input to the reduce tasks. The “Reduce” function aggregates the results of the “Map” function to determine the “answer” to the query. Typically, both the input and the output of the job are stored in a file system. The framework takes care of scheduling tasks, monitoring them, and re-executing any failed tasks.

The compute nodes and the storage nodes are the same, that is, the MapReduce framework and the Hadoop Distributed File System run on the same set of nodes. This configuration allows the framework to effectively schedule tasks on the nodes where data is already present, resulting in very high aggregate bandwidth across the cluster.

Figure 3: MapReduce Architecture: Workflow
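The map, sort, and reduce phases described in Section 3.1 can be sketched in a few lines of Python. This is a single-process illustration of the programming model under assumed inputs, not Hadoop's implementation: the function names and the sample chunks are hypothetical, and a real job would run the map and reduce tasks on different nodes.

```python
from itertools import groupby
from operator import itemgetter

def map_phase(chunk):
    """Map task: emit a (word, 1) pair for every word in one input chunk."""
    for word in chunk.lower().split():
        yield (word, 1)

def shuffle_and_sort(pairs):
    """Framework step: sort map output by key so equal keys are adjacent."""
    return sorted(pairs, key=itemgetter(0))

def reduce_phase(sorted_pairs):
    """Reduce task: aggregate all values that share a key."""
    for word, group in groupby(sorted_pairs, key=itemgetter(0)):
        yield (word, sum(count for _, count in group))

def run_job(chunks):
    """Run map over every chunk independently, then sort and reduce."""
    mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
    return dict(reduce_phase(shuffle_and_sort(mapped)))

# Each string stands in for one independent chunk of the input data set.
chunks = ["big data on hadoop", "hadoop stores big data", "data data"]
counts = run_job(chunks)
print(counts)
```

Because each chunk is mapped without reference to any other chunk, the map calls could run on separate machines; only the sort step needs to bring pairs with the same key together before reduction.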

The MapReduce framework (Fig. 3) [7] consists of a single master JobTracker and one slave TaskTracker per cluster node. The master is responsible for scheduling the jobs'


component tasks on the slaves, monitoring them, and re-executing the failed tasks. The slaves execute the tasks as directed by the master.

Although the Hadoop framework is implemented in Java, MapReduce [25] applications need not be written in Java. Hadoop Streaming is a utility that allows users to create and run jobs with any executables (e.g., shell utilities) as the mapper and/or the reducer. Hadoop Pipes is a SWIG-compatible C++ API for implementing MapReduce applications.

3.2 YARN

YARN (Yet Another Resource Negotiator) forms an integral part of Hadoop 2.0. YARN is a great enabler for dynamic resource utilization on the Hadoop framework, as users can run various Hadoop applications without having to worry about increasing workloads. The inclusion of YARN in Hadoop 2 also brings scalability to data processing applications.

YARN [19] [17] is a core Hadoop service that supports two major services:

--Global resource management (ResourceManager)
--Per-application management (ApplicationMaster)

Figure 4: YARN Architecture

Resource Manager: The Resource Manager in the YARN architecture (Figure 4) is the supreme authority that controls all decisions related to resource management and allocation. It has a Scheduler Application Programming Interface (API) that negotiates and schedules resources. However, the Scheduler API does not monitor or track the status of applications.

The main purpose of introducing the Resource Manager in YARN is to optimize the utilization of resources at all times by managing all the restrictions, which involve capacity guarantees, fairness in allocation of resources, etc. Thus, the YARN Resource Manager is responsible for almost all the tasks. The Resource Manager performs all its tasks in integration with the NodeManager and the Application Manager.

Application Manager: Every instance of an application running within YARN is managed by an Application Manager, which is responsible for the negotiation of resources with the Resource Manager. The Application Manager also keeps track of the availability and consumption of container resources, and provides fault tolerance for resources. Accordingly, it is responsible for negotiating appropriate resource containers from the Scheduler, monitoring their status, and checking their progress.

Node Manager: The NodeManager is the per-machine slave, which is responsible for launching the applications’ containers, monitoring their resource usage, and reporting the status of the resource usage to the Resource Manager. A NodeManager manages each node within a YARN cluster and provides per-node services within the cluster. These services range from managing a container to monitoring resources and tracking the health of its node.

YARN benefits include efficient resource utilization, high scalability, support for applications beyond Java, novel programming models and services, and agility.

4. Data Access Layer

This is the layer where requests from the Management layer are sent to the Data Processing Layer. Several projects have been set up for this layer, among them: Hive, a data warehouse infrastructure that provides data summarization and ad hoc querying; Pig, a high-level data-flow language and execution framework for parallel computation; Mahout, a scalable machine learning and data mining library; and Avro, a data serialization system.

4.1 Hive

Hive [5] is a Hadoop-based data-warehousing framework originally developed by Facebook; the Apache Software Foundation later took it up and developed it further as open source under the name Apache Hive. It resides on top of Hadoop to summarize Big Data and makes querying and analyzing easy. It allows users to write queries in a SQL-like language called HiveQL, which are then converted to MapReduce jobs. This allows SQL programmers with no MapReduce experience to use the warehouse and makes it easier to integrate with business intelligence and visualization tools.

Features of Hive:
• It stores the schema in a database and the processed data in HDFS.
• It is designed for OLAP.
• It provides a SQL-type language for querying called HiveQL or HQL.
• It is familiar, fast, scalable, and extensible.

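Section 4.1 states that HiveQL queries are converted to MapReduce. The Python sketch below gives a rough intuition for that conversion using a single GROUP BY aggregate. It rests on several assumptions: the `sales` table, its columns, and the sample rows are hypothetical; Python's built-in sqlite3 module merely stands in for a SQL engine so the query has something to run on; and the hand-written map and reduce steps are an illustration, not the plan Hive would actually generate.

```python
import sqlite3
from collections import defaultdict

# Hypothetical sample rows: (category, amount).
rows = [("books", 12.0), ("music", 5.0), ("books", 8.0),
        ("games", 20.0), ("music", 7.5)]

QUERY = "SELECT category, SUM(amount) FROM sales GROUP BY category"

def run_sql(rows):
    """Evaluate the aggregate with a SQL engine (sqlite3 as a stand-in)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (category TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    result = dict(conn.execute(QUERY).fetchall())
    conn.close()
    return result

def run_as_map_reduce(rows):
    """Evaluate the same aggregate as explicit map, shuffle, and reduce steps."""
    # Map: emit the GROUP BY column as the key and the summed column as the value.
    mapped = [(category, amount) for category, amount in rows]
    # Shuffle: collect all values that share a key.
    groups = defaultdict(list)
    for key, value in mapped:
        groups[key].append(value)
    # Reduce: apply the aggregate function to each group.
    return {key: sum(values) for key, values in groups.items()}

sql_result = run_sql(rows)
mr_result = run_as_map_reduce(rows)
print(sql_result == mr_result)
```

The GROUP BY column plays the role of the MapReduce key and the aggregate function plays the role of the reducer, which is why a SQL programmer can express such a job without writing map or reduce code.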

4.2 Apache Pig

Apache Pig [6] is a platform for analyzing large data sets that consists of a high-level language for expressing data analysis programs, coupled with infrastructure for evaluating these programs. The salient property of Pig programs is that their structure is amenable to substantial parallelization, which in turn enables them to handle very large data sets.

At the present time, Pig's infrastructure layer consists of a compiler that produces sequences of MapReduce programs, for which large-scale parallel implementations already exist (e.g., the Hadoop subproject). Pig's language layer currently consists of a textual language called Pig Latin, which has the following key properties:

• Ease of programming: It is trivial to achieve parallel execution of simple, "embarrassingly parallel" data analysis tasks. Complex tasks comprised of multiple interrelated data transformations are explicitly encoded as data flow sequences, making them easy to write, understand, and maintain.
• Optimization opportunities: The way in which tasks are encoded permits the system to optimize their execution automatically, allowing the user to focus on semantics rather than efficiency.
• Extensibility: Users can create their own functions to do special-purpose processing.

4.3 Apache Mahout

Apache Mahout [9] [14] is a project of the Apache Software Foundation to produce free implementations of distributed or otherwise scalable machine learning algorithms, focused primarily on collaborative filtering, clustering, and classification, and implemented using the MapReduce model.

The primary features of Apache Mahout include:
• The algorithms of Mahout are written on top of Hadoop, so they work well in a distributed environment.
• Mahout uses the Apache Hadoop library to scale effectively in the cloud.
• Mahout offers the coder a ready-to-use framework for doing data mining tasks on large volumes of data.
• Mahout lets applications analyze large sets of data effectively and quickly.
• It includes several MapReduce-enabled clustering implementations such as k-means, fuzzy k-means, Canopy, etc.
• It supports Distributed Naive Bayes and Complementary Naive Bayes classification implementations.
• It comes with distributed fitness function capabilities for evolutionary programming.
• It includes matrix and vector libraries.

4.4 Avro

Avro [10] is a data serialization system that allows for encoding the schema of Hadoop files. It is adept at parsing data and performing remote procedure calls. It was developed by Doug Cutting, the father of Hadoop. Since Hadoop Writable classes lack language portability, Avro has become quite helpful, as it deals with data formats that can be processed by multiple languages. Avro is a preferred tool to serialize data in Hadoop.

Avro has a schema-based system. A language-independent schema is associated with its read and write operations. Avro serializes data together with its schema into a compact binary format, which can be deserialized by any application.

Avro uses JSON format to declare the data structures. Presently, it supports languages such as Java, C, C++, C#, Python, and Ruby. Below are some of the prominent features of Avro:
• Avro is a language-neutral data serialization system. It can be processed by many languages (currently C, C++, C#, Java, Python, and Ruby).
• Avro creates a binary structured format that is both compressible and splittable. Hence it can be efficiently used as the input to Hadoop MapReduce jobs.
• Avro provides rich data structures. For example, you can create a record that contains an array, an enumerated type, and a sub-record. These data types can be created in any language, can be processed in Hadoop, and the results can be fed to a third language.
• Avro schemas defined in JSON facilitate implementation in the languages that already have JSON libraries.
• Avro creates a self-describing file named Avro Data File, in which it stores data along with its schema in the metadata section.

4.5 Apache Sqoop

“SQL to Hadoop and Hadoop to SQL”

Sqoop [11] is a connectivity tool for moving data from non-Hadoop data stores, such as relational databases and data warehouses, into Hadoop. It allows users to specify the target location inside Hadoop and instruct Sqoop to move data from Oracle, Teradata, or other relational databases to the target.

Sqoop is a tool designed to transfer data between Hadoop and relational database servers. It is used to import data from relational databases such as MySQL and Oracle into Hadoop HDFS, and to export from the Hadoop file system to relational databases. It is provided by the Apache Software Foundation.

--Sqoop Import
The import tool imports individual tables from an RDBMS to HDFS. Each row in a table is treated as a record in HDFS. All records are stored as text data in text files or as binary data in Avro and Sequence files.

--Sqoop Export
The export tool exports a set of files from HDFS back to an RDBMS. The files given as input to Sqoop contain records,


which are called rows in a table. Those are read and parsed into a set of records and delimited with a user-specified delimiter.

5. Data Management Layer

This is the layer that meets the user. Users access the system through this layer, which has components such as Chukwa, a data collection system for managing large distributed systems, and Zookeeper, a high-performance coordination service for distributed applications.

5.1 Oozie

Oozie is a workflow processing system that lets users define a series of jobs written in multiple languages, such as MapReduce, Pig, and Hive, and then intelligently link them to one another. Oozie allows users to specify, for example, that a particular query is only to be initiated after specified previous jobs on which it relies for data are completed. Oozie is a scalable, reliable, and extensible system.

An Oozie workflow is a collection of actions (i.e., Hadoop Map/Reduce jobs, Pig jobs) arranged in a control dependency DAG (Directed Acyclic Graph), specifying the sequence in which actions execute. This graph is specified in hPDL (an XML Process Definition Language).

Benefits of Oozie are as follows:
• Complex workflow action dependencies: The Oozie workflow contains actions and dependencies among these actions. At runtime, Oozie manages dependencies and executes actions when the dependencies identified in the DAG are satisfied.
• Frequency execution: The Oozie workflow specification supports both data and time triggers.
• Native Hadoop stack integration: Oozie supports all types of Hadoop jobs and is integrated with the Hadoop stack.
• Reduces Time-To-Market (TTM): The DAG specification enables users to specify the workflow, which saves time otherwise spent building and maintaining custom solutions for dependency and workflow management.
• Mechanism to manage a variety of complex data analysis: Oozie is integrated with the Yahoo! Distribution of Hadoop with security and is a primary mechanism to manage a variety of complex data analysis workloads across Yahoo!

5.2 Apache Chukwa

Chukwa [24] aims to provide a flexible and powerful platform for distributed data collection and rapid data processing. It is an open source data collection system for monitoring large distributed systems; it is built on top of the Hadoop Distributed File System (HDFS) and the Map/Reduce framework, and inherits Hadoop’s scalability and robustness.

Chukwa also includes a flexible and powerful toolkit for displaying, monitoring, and analyzing results to make the best use of the collected data. In order to maintain this flexibility, Chukwa is structured as a pipeline of collection and processing stages, with clean and narrow interfaces between stages.

Chukwa has four primary components:
• Agents that run on each machine and emit data.
• Collectors that receive data from the agents and write it to stable storage.
• MapReduce jobs for parsing and archiving the data.
• HICC, the Hadoop Infrastructure Care Center, a web-portal style interface for displaying data.

5.3 Apache Flume

Flume [23] is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of log data. It has a simple and flexible architecture based on streaming data flows. It is robust and fault tolerant, with tunable reliability mechanisms and many failover and recovery mechanisms. It uses a simple extensible data model that allows for online analytic applications.

Features of Flume
• Flume ingests log data from multiple web servers into a centralized store (HDFS, HBase) efficiently.
• Using Flume, we can get the data from multiple servers immediately into Hadoop.
• Along with log files, Flume is also used to import huge volumes of event data produced by social networking sites.
• Flume supports a large set of source and destination types and can be scaled horizontally.
• Flume supports multi-hop flows, fan-in and fan-out flows, contextual routing, etc.

5.4 Apache Zookeeper

Apache Zookeeper [22] is a coordination service for distributed applications that enables synchronization across a cluster. Zookeeper in Hadoop can be viewed as a centralized repository where distributed applications can put data and get data out of it. It is used to keep the distributed system functioning together as a single unit, using its synchronization, serialization, and coordination goals. For simplicity's sake, Zookeeper can be thought of as a file system where we have znodes that store data instead of files or directories storing data. Zookeeper is a Hadoop admin tool used for managing the jobs in the cluster.

Features of Zookeeper include managing configuration across nodes, implementing reliable messaging, implementing redundant services, and synchronizing process execution.

Benefits of Zookeeper
• Simple distributed coordination process.
• Synchronization, reliability, and ordered messages: Mutual exclusion and co-operation between server processes. This process helps in Apache HBase for configuration management.
• Serialization: Encode the data according to specific rules. Ensure your application runs consistently.


• Atomicity: Data transfers either succeed or fail completely; no transaction is partial.

6. Conclusion

With the rapid pace of evolution of Big Data, its processing frameworks are also evolving at full swing. The large web companies that adopted Apache Hadoop had to depend on the partnership of Hadoop HDFS with the resource management environment and MapReduce programming. The Hadoop ecosystem has introduced a new processing model that lends itself to common big data use cases, including interactive SQL over big data, machine learning at scale, and the ability to analyze big-data-scale graphs. Apache Hadoop is not actually a single product but instead a collection of several components. When all these components are combined, they make Hadoop very user friendly. The Hadoop ecosystem and its commercial distributions continue to evolve, with new or improved technologies and tools emerging all the time.

7. Acknowledgment

We express our gratitude to our parents, family members, Prof. (Dr). Vijay Patil (Principal) and Prof. Vilas Mankar (HOD, IT Dept.), I2IT, Pune, for their constant encouragement and motivation throughout this work.

References

[1] Hadoop - Apache Software Foundation project home page. http://hadoop.apache.org.

[2] HBase - Apache Software Foundation project home page. http://hbase.apache.org.

[3] Kiran Kumara Reddi and Dnvsl Indira, “Different Technique to Transfer Big Data: survey”, IEEE Transactions on 52(8) (Aug. 2013) 2348-2355.

[4] Konstantin Shvachko, et al., “The Hadoop Distributed File System”, Mass Storage Systems and Technologies (MSST), IEEE 26th Symposium on, IEEE, 2010. http://storageconference.org/2010/Papers/MSST/Shvachko.pdf.

[5] Hive - Apache Software Foundation project home page. http://hive.apache.org.

[6] Pig - Apache Software Foundation project home page. http://pig.apache.org.

[7] Jens Dittrich, Jorge-Arnulfo Quiane-Ruiz, “Efficient Big Data Processing in Hadoop MapReduce”, 2014.

[8] Jeffrey Dean, Sanjay Ghemawat, “MapReduce: simplified data processing on large clusters”, Communications of the ACM, v.51 n.1, January 2008 [DOI: 10.1145/1327452.1327492].

[9] Mahout - Apache Software Foundation project home page. http://mahout.apache.org.

[10] Avro - Apache Software Foundation project home page. http://avro.apache.org.

[11] J. Yates Monteith, John D. McGregor, and John E. Ingram, “Hadoop and its evolving ecosystem”, IWSECO@ICSOB, Citeseer, 2013.

[12] J. Y. Monteith, J. D. McGregor, “A three viewpoint model for software ecosystems”, in Proceedings of Software Engineering and Applications, 2012.

[13] Ivanilton Polato, Reginaldo Ré, Alfredo Goldman, Fabio Kon, “A comprehensive view of Hadoop research - A systematic literature review”, Journal of Network and Computer Applications, Volume 46, November 2014.

[14] Arantxa Duque Barrachina, Aisling O’Driscoll, “A big data methodology for categorising technical support requests using Hadoop and Mahout”, Journal of Big Data, February 2014 [DOI: 10.1186/2196-1115-1-1].

[15] Nilesh Vishwasrao Patil, Tanvir Patel, “Apache Hadoop: Resourceful Big Data Management”, IJRSET, Volume 3, Special Issue 4, April 2014.

[16] Shilpa Manjit Kaur, “BIG Data and Methodology - A review”, International Journal of Advanced Research in Computer Science and Software Engineering, Volume 3, Issue 10, October 2013.

[17] Varsha B. Bobade, “Survey Paper on Big Data and Hadoop”, IRJET, Volume 03, Issue 01, January 2016.

[18] A. Katal, M. Wazid, R. H. Goudar, “Big Data: Issues, Challenges, tools and Good practices”, August 2013.

[19] Deepika P, Anantha Raman G R, “A Study of Hadoop-Related Tools and Techniques”, IJARCSSE, Volume 5, Issue 9, September 2015.

[20] Anand Loganathan, Ankur Sinha, Muthuramakrishnan V., and Srikanth Natarajan, “A Systematic Approach to Big Data Exploration of the Hadoop Framework”, International Journal of Information & Computation Technology, Volume 4, 2014.

[21] Andrew Pavlo, “A comparison of approaches to large scale data Analysis”, SIGMOD, 2009.

[22] Zookeeper - Apache Software Foundation project home page. https://zookeeper.apache.org.

[23] Flume - Apache Software Foundation project home page. https://flume.apache.org.

[24] Chukwa - Apache Software Foundation project home page. https://chukwa.apache.org.

[25] J. Dean and S. Ghemawat, “MapReduce: A Flexible Data Processing Tool”, CACM, 53(1):72-77, 2010.


- Lovely Professional University (Lpu) : Mittal School of Business (Msob)Document10 pagesLovely Professional University (Lpu) : Mittal School of Business (Msob)FareedNo ratings yet

- Q1. Discuss Hadoop and Map Reduce Algorithm.: Data Is LocatedDocument7 pagesQ1. Discuss Hadoop and Map Reduce Algorithm.: Data Is LocatedHîмanî JayasNo ratings yet

- Hadoop Major ComponentsDocument10 pagesHadoop Major ComponentsaswagadaNo ratings yet

- 02 Unit-II Hadoop Architecture and HDFSDocument18 pages02 Unit-II Hadoop Architecture and HDFSKumarAdabalaNo ratings yet

- Design An Efficient Big Data Analytic Architecture For Retrieval of Data Based On Web Server in Cloud EnvironmentDocument10 pagesDesign An Efficient Big Data Analytic Architecture For Retrieval of Data Based On Web Server in Cloud EnvironmentAnonymous roqsSNZNo ratings yet

- Bda Bi Jit Chapter-4Document20 pagesBda Bi Jit Chapter-4Araarsoo JaallataaNo ratings yet

- BDA Presentations Unit-4 - Hadoop, EcosystemDocument25 pagesBDA Presentations Unit-4 - Hadoop, EcosystemAshish ChauhanNo ratings yet

- Improved Job Scheduling For Achieving Fairness On Apache Hadoop YARNDocument6 pagesImproved Job Scheduling For Achieving Fairness On Apache Hadoop YARNFrederik Lauro Juarez QuispeNo ratings yet

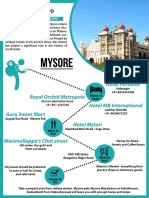
