solution brief

Cooperative Control Wireless LAN Architecture

Version 3.5

Table of Contents

Introduction
Cooperative Control® Architecture
Centralized Management, Distributed Control, and Distributed Forwarding
Key Concepts and Naming Conventions
Cooperative Control
HiveAP Auto Discovery & Self Organization
Roaming Issues with Autonomous APs
Fast/Secure Layer 3 Roaming
Tunnel Load Balancing in Large Scale Layer 3 Roaming Environments
Radio Resource Management (RRM)
Station Load Balancing
Policy Enforcement at the Edge
User Profiles and Identity-Based Policy
QoS at the Edge
QoS with Dynamic Airtime Scheduling
Service Level Agreements (SLAs) and Airtime Boost
HiveAP Security and Theft Protection
Wireless Intrusion Protection System (WIPS) - Rogue AP and Client Prevention
Voice- and Video-aware WIPS Scanning
Integrated Protocol Analyzer Integration and Client Monitoring
3rd Party WIPS and Protocol Analyzer Integration
Wireless Decryption at the HiveAP Allows Advanced Security at the Edge
RADIUS Server Built into HiveAPs
Built-in Captive Web Portal
Identity-Based Tunnels
Guest Access Policy Enforcement at the Edge
Private Preshared Key (PPSK) Security
Virtual Private Networking (VPN)
Best Path Forwarding and Wireless Mesh
Wireless Mesh
Scalable, Layer-2 Routing and Optimal Path Selection
Scalability with Best Path Forwarding

2 Copyright © 2011, Aerohive Networks, Inc.

Cooperative Control WLAN Architecture

High Availability
No Single Point of Failure
Smart Power-over-Ethernet (PoE)
Redundant and Aggregate Links
Self Healing by Dynamically Routing Around Failures
Dynamic Mesh Failover
AAA Credential Caching
Centralized WLAN Management
Simple, Scalable Management with the HiveManager WNMS
Versions of HiveManager
HiveManager Components and Communication
Simplified Configuration Management
Zero Configuration for Access Point Deployments
Auto-Provisioning
Simplified Monitoring, Troubleshooting, and Reporting
HiveAP Classification for Adaptive WLAN Policy Configuration
Guest Manager
Role-based Guest Administration
Guest Access with GuestManager
Guest Account Creation and Management
Credentials
Single Centralized Instance for Guest Authentication
Delivered as an Appliance
Conclusion


Introduction

The first generation of WLANs consisted of autonomous (standalone) access points. They were relatively simple to deploy, but they lacked the manageability, mobility, and security features that enterprises required, even for convenience networks. Centralized, controller-based architectures then emerged to address these issues and others, such as fast/secure roaming for mobile devices, radio resource management (RRM), and per-user or per-device security policies. Unfortunately, they also introduced opaque overlay networks, performance bottlenecks, single points of failure, increased latency, and substantially higher costs to enterprise networks. As Wi-Fi is increasingly embraced as a critical part of the enterprise network, and as enterprises deploy demanding applications (e.g. voice and video) over extremely high-speed Wi-Fi infrastructure, these drawbacks are magnified and are leading the industry to reexamine the validity of today’s centralized WLAN architecture.

Aerohive Networks has responded by pioneering a new WLAN architecture called the

Cooperative Control architecture. It is a controller-less architecture that eliminates the

downsides of controllers while providing the management, mobility, scalability, resiliency,

and security that enterprises require in their wireless infrastructure.

Cooperative Control® Architecture

Aerohive Networks has developed an innovative new class of wireless infrastructure equipment called a Cooperative Control Access Point (CC-AP). A CC-AP combines an enterprise-class access point with a suite of cooperative control protocols and functions to provide all of the benefits of a controller-based WLAN solution without requiring a controller or an overlay network. Aerohive Networks’ implementation of a CC-AP is called a HiveAP. This cooperative control functionality allows multiple HiveAPs to be organized into groups, called “Hives,” that share control information between HiveAPs and enable functions such as fast/secure layer 2/3 roaming, coordinated radio channel and power management, security, quality of service (QoS), and native mesh networking. This information sharing enables a next-generation WLAN architecture, the cooperative control architecture, which provides all of the benefits of a controller-based architecture but is easier to deploy and expand, lower in cost, more reliable, more scalable, more broadly deployable, higher performing, and better suited than controller-based architectures to demanding applications such as voice and video.

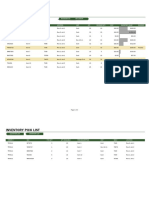

The diagram that follows outlines the building blocks of the cooperative control

architecture. It is implemented using two types of products:

• Cooperative Control Access Points (HiveAPs) with dual radios that support simultaneous use of the 2.4 GHz and 5 GHz spectrums for wireless access and/or wireless mesh connectivity. HiveAPs implement robust security such as WPA/WPA2-Enterprise, WPA/WPA2-Personal, de facto standards such as Opportunistic Key Caching, Private PSK, integrated WIPS, stateful firewall policies, and L2-L4 denial-of-service (DoS) prevention. Each HiveAP’s SLA capabilities are based on advanced QoS policies, Dynamic Airtime Scheduling, and Airtime Boost, all configured through an easy-to-use management application. A single-radio HiveAP is also available.

• A central management platform (HiveManager) that provides centralized user policy management and simplified HiveAP configuration, firmware updates, monitoring, and troubleshooting. HiveManager is available in several forms, including 1U and 2U appliances, a virtual appliance (virtual machine), and a SaaS delivery option called HiveManager Online.

The architecture is supported by three distinct, but tightly-interrelated technology

building blocks:

• Cooperative control: a set of control-plane protocols that provides dynamic

layer 2 (MAC-based) routing, automatic radio channel and power selection, and

fast/secure roaming without requiring controllers.

• Policy enforcement at the edge: the ability to enforce granular, user-based QoS,

security, and access policies at the edge of the network where the user

connects.

• Best-path forwarding: scalable wired/wireless mesh routing protocols allow traffic

to be securely forwarded via the highest performance and most available path

in the network. This includes both the ability to fail back when failed links are

reestablished and to dynamically transition access radios into mesh backhaul

mode as policy dictates.

Diagram 1. Building Blocks of Cooperative Control Architecture

Centralized Management, Distributed Control, and Distributed Forwarding


To better appreciate the Aerohive Networks cooperative control architecture, it is important to understand the three major functional areas, or logical network planes, that describe how a network operates: the management plane, the control plane, and the data plane.

By comparing the logical network planes of the most common networking devices, such as routers and switches, with those of HiveAPs, you can see striking similarities. For example:

• All of them can use a centralized management platform for configuration, monitoring, and troubleshooting. Because the management platform itself is not in the data path, it can be taken offline without affecting the functionality of the network.

• Each class of network device implements a distributed control plane that uses control protocols (e.g. OSPF, spanning tree) to share information between devices. This allows the devices to coordinate with each other so that the network functions properly and continuously adapts to changes. With the knowledge of network state provided by the distributed control plane, each device can then implement a distributed data plane, allowing it to quickly decide how traffic should be processed and forwarded along the optimal path.

This architecture has proven to be the winning architecture for switched and routed

networks for many years because it is scalable, high performance, and resilient while still

allowing for central management. As an example, the Internet uses this architecture.

Many enterprise WLANs today are implemented with a centralized controller-based

architecture that breaks from this proven network architecture by centralizing the control

plane and data plane in a controller hardware platform, which compromises scalability

and resilience.

Aerohive’s cooperative control architecture is the first architecture to bring these proven

network benefits to WLANs. The following chart shows the architectural parallels

between cooperative control and the proven architecture used in switched and routed

networks.

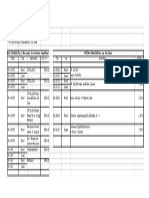

| Logical Network Plane | Routers | Switches | HiveAPs |
|---|---|---|---|
| Management Plane: responsible for configuration, monitoring, and troubleshooting | Centralized, using an NMS platform | Centralized, using an NMS platform | Centralized, using HiveManager, a centralized WNMS platform |
| Control Plane: responsible for making forwarding decisions and programming the data plane | Distributed, using protocols (OSPF, RIP, BGP, etc.) to determine how traffic should be forwarded | Distributed, using protocols such as spanning tree protocol (STP) to avoid loops and MAC address learning to determine where traffic should go | Distributed, using Aerohive Cooperative Control Protocols (AMRP, ACSP, DNXP, INXP) for dynamic RRM, fast/secure L2/L3 roaming, identity-based tunnels, and for determining how traffic should be forwarded |
| Data Plane: responsible for processing and forwarding data | Distributed, processed and forwarded by each router | Distributed, processed and forwarded by each switch | Distributed, processed and forwarded by each HiveAP |

Extending the proven architecture used in switched and routed infrastructures to WLANs through distributed control and data planes is especially important as enterprises require greater availability and increased performance with 802.11n, and as they seek to improve productivity in their regional and branch offices. Distributing the control and data planes (i.e., removing controllers) eliminates single points of failure and performance bottlenecks from the entire wireless network, allowing a remote site deployment to be as simple and as functional as a campus deployment.

Key Concepts and Naming Conventions

The diagram below shows that HiveAPs take on different roles, which are assigned automatically based on how each HiveAP is connected to the network. The following is a list of key terms used to describe the Aerohive Networks cooperative control architecture:

• HiveAP®: The product brand name for Aerohive’s CC-AP (Cooperative Control

Access Point). HiveAPs coordinate with each other using cooperative control

protocols to provide critical functions including seamless mobility, automatic radio

resource management (RRM), policy-based security, and best-path forwarding.

• HiveOS®: The firmware developed by Aerohive Networks that runs on HiveAPs.

• HiveManager®: A centralized wireless network management system (WNMS) that enables sophisticated identity-based policy management, simplified device configuration, HiveOS updates, and monitoring and troubleshooting of HiveAPs within a cooperative control WLAN infrastructure. HiveManager is available as an appliance, a virtual appliance, or a SaaS offering called HiveManager Online™.

• Hive: A Hive is a group of HiveAPs that share a common name and secret key that

permit them to securely communicate with each other using cooperative control

protocols. Within a Hive, clients can seamlessly roam among HiveAPs across layer 2

and layer 3 boundaries, while preserving their security state, QoS settings, IP settings,

and data connections.

• GuestManager™: A guest management platform that provides a simple web

interface for allowing administrators, such as receptionists or lobby ambassadors, to

create temporary user accounts that provide guests with access to the wireless

network.

• Wired Backhaul Link: An Ethernet connection from a HiveAP to the primary wired

network, typically called the distribution system (DS) in wireless standards, which is

used to bridge traffic between the wireless and wired LANs.

• Wireless Backhaul Link: A wireless connection between HiveAPs that is used to form a wireless mesh and that transports control and data traffic between HiveAPs.

• Bridge Link: An Ethernet connection from a HiveAP that allows a wired device or

network segment to be bridged over the WLAN onto the primary wired LAN.

• Wireless Access Link: The wireless connection between a wireless client and a

HiveAP.

• Portal: A HiveAP that is connected to the wired LAN via Ethernet and provides default MAC routes to mesh points within the Hive. This role is dynamically chosen: if the wired link is unplugged, the HiveAP can dynamically become a mesh point.

• Mesh Point: A HiveAP that is connected to the Hive via wireless backhaul links and

does not use a wired link for backhaul. This role is also dynamically chosen. If a wired

link is plugged in, the HiveAP dynamically becomes a portal, if permitted by the

configuration.

• Cooperative Control Signaling: The control-plane communication between HiveAPs using cooperative control protocols.
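The Portal and Mesh Point definitions amount to a simple rule that is re-evaluated whenever a HiveAP's wired link changes state. The following Python sketch models only that rule; the `HiveAP` class and its `portal_allowed` flag are hypothetical stand-ins for a HiveAP's link state and configuration, not HiveOS interfaces.

```python
from dataclasses import dataclass


@dataclass
class HiveAP:
    """Hypothetical model of the state that determines a HiveAP's role."""
    name: str
    wired_link_up: bool          # Ethernet link to the wired LAN (distribution system) is up
    portal_allowed: bool = True  # hypothetical config flag: may this HiveAP act as a portal?


def select_role(ap: HiveAP) -> str:
    """Return 'portal' or 'mesh point' per the Portal and Mesh Point definitions."""
    if ap.wired_link_up and ap.portal_allowed:
        # Wired backhaul: this HiveAP provides default MAC routes to mesh points.
        return "portal"
    # No usable wired backhaul: reach the Hive over wireless backhaul links.
    return "mesh point"


ap = HiveAP("hiveap-1", wired_link_up=True)
print(select_role(ap))    # portal
ap.wired_link_up = False  # the Ethernet cable is unplugged
print(select_role(ap))    # mesh point
```

Because the role is derived from current link state rather than stored, unplugging or plugging in the Ethernet cable changes the result the next time the rule is evaluated.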


Diagram 2. Aerohive Networks Naming Conventions

Cooperative Control

By utilizing cooperative control, HiveAPs cooperate with neighboring HiveAPs to support

control functions such as radio resource management, Layer 2/3 roaming, client load

balancing, and wireless mesh networking, eliminating the need for a centralized

controller.

Cooperative control is made possible with the following self-organizing and

automatically-operational cooperative control protocols:

• AMRP (Aerohive Mobility Routing Protocol) – Provides HiveAPs with the ability to

perform automatic neighbor discovery, MAC-layer best-path forwarding through a

wireless mesh, dynamic and stateful rerouting of traffic in the event of a failure, and

predictive identity information and key distribution to neighboring HiveAPs. This

provides clients with fast/secure roaming capabilities between HiveAPs while

maintaining their authentication state, encryption keys, firewall sessions, and QoS

enforcement settings.

• ACSP (Aerohive Channel Selection Protocol) – Used by HiveAPs to analyze the RF

environment on each channel within a regulatory domain and to work in conjunction

with each other to determine the best channel and power settings for wireless access

and mesh. ACSP minimizes co-channel and adjacent channel interference in order

to provide optimized application performance.

• DNXP (Dynamic Network Extension Protocol) – Dynamically creates tunnels on an as-needed basis between HiveAPs in different subnets, giving clients the ability to seamlessly roam between subnets while preserving their IP address settings, authentication state, encryption keys, firewall sessions, and QoS enforcement settings. Note that tunnels are not required for clients roaming among APs in the same subnet.

Cooperative control protocols allow HiveAPs to operate as a cohesive system in order to

provide the mobility, security, RF control, scalability, and resiliency that are essential for

supporting today’s and tomorrow’s demanding applications over a Wi-Fi infrastructure.

HiveAP Auto Discovery & Self Organization

Cooperative control simplifies the deployment of HiveAPs by enabling them to discover one another automatically and by proactively synchronizing network state. HiveAPs can discover each other whether they see each other over a wired network or a wireless network. When HiveAPs are powered on, they search for both wired and wireless HiveAP neighbors, and if they find neighbors with the same hive name and shared secret, they can build AES-secured connections to each other. Once neighbor relationships have been established between HiveAPs in a Hive, the HiveAPs run cooperative control protocols across wired and wireless links to provide fast/secure roaming, radio resource management, and resiliency. If HiveAPs discover neighboring HiveAPs in a different subnet, then as long as they are configured with the same hive name and hive shared secret, they exchange IP information with each other and establish communications over the routed network infrastructure to provide cooperative control functionality across layer 3 boundaries. A key benefit of cooperative control protocols is that they do not need to be configured, which greatly decreases the operational cost and complexity of deploying a modern wireless solution.
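The neighbor-formation rules described in this section can be sketched as follows: a HiveAP accepts a neighbor only if the hive name matches and the neighbor proves knowledge of the shared secret, and then treats it as a layer 2 or layer 3 neighbor depending on whether the two share a subnet. The message format, the HMAC-SHA256 proof, and all names here are assumptions for illustration; the document states only that matching neighbors build AES-secured connections and does not describe how AMRP authenticates them.

```python
import hashlib
import hmac
import ipaddress
import os
from dataclasses import dataclass
from typing import Optional


@dataclass
class Hello:
    """Hypothetical discovery message; NOT the real AMRP wire format."""
    hive_name: str
    interface: str  # sender's IP address and prefix, e.g. "10.1.1.5/24"
    nonce: bytes
    tag: bytes      # proof of the shared secret: HMAC-SHA256(secret, nonce || hive name)


def _tag(secret: bytes, nonce: bytes, hive_name: str) -> bytes:
    return hmac.new(secret, nonce + hive_name.encode(), hashlib.sha256).digest()


def make_hello(hive_name: str, interface: str, secret: bytes) -> Hello:
    nonce = os.urandom(16)
    return Hello(hive_name, interface, nonce, _tag(secret, nonce, hive_name))


def classify_neighbor(my_hive: str, my_interface: str, secret: bytes,
                      hello: Hello) -> Optional[str]:
    """Return 'layer 2' or 'layer 3' for a valid Hive member, or None to ignore it."""
    if hello.hive_name != my_hive:
        return None  # different Hive: no neighbor relationship
    if not hmac.compare_digest(hello.tag, _tag(secret, hello.nonce, hello.hive_name)):
        return None  # same hive name but a different shared secret
    mine = ipaddress.ip_interface(my_interface).network
    theirs = ipaddress.ip_interface(hello.interface).network
    # Same subnet: ordinary neighbor. Different subnet: exchange IP information
    # and peer over the routed network as a layer 3 neighbor.
    return "layer 2" if mine == theirs else "layer 3"


secret = b"example-hive-secret"
print(classify_neighbor("hive-a", "10.1.1.2/24", secret, make_hello("hive-a", "10.1.1.5/24", secret)))  # layer 2
print(classify_neighbor("hive-a", "10.1.1.2/24", secret, make_hello("hive-a", "10.2.1.5/24", secret)))  # layer 3
print(classify_neighbor("hive-a", "10.1.1.2/24", secret, make_hello("hive-a", "10.1.1.5/24", b"wrong")))  # None
```

No per-neighbor configuration appears in the sketch: only the hive name and shared secret are needed, which is the property the section highlights.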

Roaming Issues with Autonomous APs

Fast/secure roaming is most often defined as roaming that occurs in just a few tens of

milliseconds. Fast/secure roaming becomes very important when using real-time

applications like voice and video, where an interruption in a connection can cause

dead air, pops, or even dropped sessions.

With traditional autonomous APs, which operate without knowledge of each other, fast/secure roaming with IEEE 802.1X/EAP authentication is not possible. During authentication, the RADIUS server, wireless client, and AP exchange user authentication information and derive encryption keys among themselves. If the wireless client moves to another AP, the new AP has none of the keys that were created on the previous AP, so the client must repeat the entire authentication and key derivation process. During this process, time-sensitive sessions on the client, such as voice, video, or file transfers, can be terminated.


Diagram 3. Autonomous APs – No Fast/Secure Roaming with 802.1X/EAP

Aerohive Networks has solved this problem with AMRP. Whether connected via the wired LAN or a wireless mesh, HiveAPs use AMRP to predictively exchange client authentication state, identity information, and encryption key information with neighboring HiveAPs, allowing clients to perform fast/secure roaming. The following diagram lists the steps HiveAPs take to provide fast/secure roaming.

Diagram 4. HiveAPs - Authentication, Key Derivation, and Key Distribution

Step 1 – After a wireless client successfully authenticates with a RADIUS server using 802.1X/EAP authentication, the information exchanged between the RADIUS server and the client is used to derive a key called the pairwise master key (PMK). The client and the RADIUS server each derive the same PMK independently.

Step 2 – The RADIUS server transfers the PMK to the HiveAP so that the client and

HiveAP can build an encrypted connection between each other.

Step 3 – Using AMRP, the HiveAP proactively distributes encryption keys, identity

information, SIP voice session state information, firewall, and QoS policy

information to all neighboring HiveAPs. This, along with the de facto standard

Opportunistic Key Caching (OKC), permits clients to roam between HiveAPs

without having to repeat the 802.1X/EAP authentication process, enabling

fast/secure roaming.
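Step 3 relies on Opportunistic Key Caching. The Python sketch below shows why sharing the PMK lets a client skip 802.1X/EAP on a new HiveAP. The PMKID, defined in IEEE 802.11i as the first 128 bits of HMAC-SHA1(PMK, "PMK Name" || AA || SPA), binds the PMK to the AP's MAC address (AA) and the client's MAC address (SPA), so any AP that already holds the PMK can verify the PMKID the client presents when it reassociates. The `AP` class, its neighbor list, and the direct cache copy that stands in for AMRP key distribution are hypothetical; the AES encryption AMRP applies to this exchange is omitted.

```python
import hashlib
import hmac
import os
from typing import Dict, List


def pmkid(pmk: bytes, aa: bytes, spa: bytes) -> bytes:
    """IEEE 802.11i PMKID: HMAC-SHA1-128(PMK, "PMK Name" || AA || SPA)."""
    return hmac.new(pmk, b"PMK Name" + aa + spa, hashlib.sha1).digest()[:16]


def mac(addr: str) -> bytes:
    return bytes.fromhex(addr.replace(":", ""))


class AP:
    """Hypothetical HiveAP model that holds PMKs in memory only."""

    def __init__(self, bssid: str) -> None:
        self.bssid = mac(bssid)
        self.pmk_cache: Dict[bytes, bytes] = {}  # client MAC -> PMK
        self.neighbors: List["AP"] = []

    def install_pmk(self, client: bytes, pmk: bytes) -> None:
        # Step 2: the RADIUS server delivers the PMK to the AP the client authenticated through.
        self.pmk_cache[client] = pmk
        # Step 3: proactively push it to neighbors (stand-in for AMRP distribution).
        for neighbor in self.neighbors:
            neighbor.pmk_cache[client] = pmk

    def accepts_roam(self, client: bytes, presented_pmkid: bytes) -> bool:
        """True if the client can reassociate without a full 802.1X/EAP exchange."""
        pmk = self.pmk_cache.get(client)
        if pmk is None:
            return False  # no cached key: full authentication required
        return hmac.compare_digest(pmkid(pmk, self.bssid, client), presented_pmkid)


ap1, ap2, ap3 = AP("00:19:77:00:00:01"), AP("00:19:77:00:00:02"), AP("00:19:77:00:00:03")
ap1.neighbors.append(ap2)  # ap3 is not a neighbor of ap1
client = mac("a4:5e:60:11:22:33")
pmk = os.urandom(32)       # Step 1: stands in for the PMK derived from the EAP exchange
ap1.install_pmk(client, pmk)
# The client computes the PMKID for each target AP from its own copy of the PMK.
print(ap2.accepts_roam(client, pmkid(pmk, ap2.bssid, client)))  # True
print(ap3.accepts_roam(client, pmkid(pmk, ap3.bssid, client)))  # False
```

The roam to `ap3` fails only because `ap3` never received the PMK, which is the autonomous-AP situation described earlier in this section.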

Note: For security reasons, the key and identity information sent between HiveAPs is

encrypted with AES and is stored only in memory on the HiveAP. This way, the keys are

removed from the system with all user identity information when a HiveAP is powered off.

Furthermore, administrators do not have access to view the keys. These security measures

prevent the keys from being obtained if the wired network is analyzed or if a HiveAP is

stolen.

Along with the key information that is distributed among neighboring HiveAPs, AMRP also

distributes the user’s identity information so that HiveAPs can enforce the identity-based

firewall access policies and QoS settings as the user roams between HiveAPs.

Fast/Secure Layer 3 Roaming

Mobility in typical IP networks is challenging because, as a user moves from subnet to subnet, the user’s IP settings change, which usually causes IP-based sessions or applications to fail. To allow users to maintain their IP settings and network connections while roaming across subnets throughout a WLAN, Aerohive has developed the Dynamic Network Extension Protocol (DNXP). When a user roams to an AP in a different subnet, DNXP dynamically establishes a tunnel from the new AP back to an AP in the subnet the user roamed from. The user’s traffic is tunneled back to its original subnet, which allows clients to preserve their IP address settings, authentication state, encryption keys, firewall sessions, and QoS enforcement settings as they roam across HiveAPs in different subnets. This is especially important for clients using voice and video applications.

When layer 3 roaming is enabled, HiveAPs can automatically discover their layer 3

neighbors (neighboring HiveAPs on different subnets) by scanning radio channels. If

HiveAPs are within radio range of each other, are in the same hive, have layer 3 roaming

enabled, and are in different IP networks, the HiveAPs will build layer 3 neighbor

relationships with each other over the routed Ethernet network. HiveAPs will then

distribute tunnel and client information to their layer 3 neighbors. This way, when the user

roams across layer 3 boundaries, the tunnels can be built without delay.

In situations where HiveAPs cannot discover each other automatically over the air,

possibly due to being on opposite sides of an RF obstacle, you can manually configure

layer 3 neighbors for HiveAPs using HiveManager.


When layer 3 neighbors are discovered, either automatically or manually, HiveAPs in

different subnets will exchange lists of available HiveAP portals and client and roaming

cache information. This way, if a client does roam to a new subnet, the HiveAP in the

new subnet will be aware of the client and can dynamically build a tunnel back to any

one of the portal HiveAPs in the previous subnet. This allows for fast/secure layer 3

roaming.
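The decision a HiveAP makes when a known client arrives can be sketched in Python as below, assuming the HiveAP keeps the two pieces of state this section describes: a roaming cache recording each client's original subnet, and the lists of portal HiveAPs learned from layer 3 neighbors. The class and field names are hypothetical, and picking the first listed portal is a simplification; the actual DNXP logic is not described in this document.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Dict, List, Optional

Network = ipaddress.IPv4Network


@dataclass
class HiveAPState:
    """Hypothetical per-HiveAP state for layer 3 roaming."""
    subnet: Network
    # Learned from layer 3 neighbors: subnet -> portal HiveAPs in that subnet.
    remote_portals: Dict[Network, List[str]] = field(default_factory=dict)
    # Roaming cache learned from neighbors: client MAC -> client's original subnet.
    roaming_cache: Dict[str, Network] = field(default_factory=dict)


def tunnel_endpoint(ap: HiveAPState, client_mac: str) -> Optional[str]:
    """Return the portal to tunnel the client's traffic to, or None to forward locally."""
    home = ap.roaming_cache.get(client_mac)
    if home is None or home == ap.subnet:
        return None  # unknown client or same-subnet roam: no tunnel needed
    portals = ap.remote_portals.get(home)
    if not portals:
        raise LookupError(f"no known portal HiveAP in {home}")
    return portals[0]  # simplification: a real system could load-balance across portals


subnet_a = ipaddress.ip_network("10.1.0.0/24")
subnet_b = ipaddress.ip_network("10.2.0.0/24")
hiveap3 = HiveAPState(subnet=subnet_b,
                      remote_portals={subnet_a: ["10.1.0.10", "10.1.0.11"]},
                      roaming_cache={"a4:5e:60:11:22:33": subnet_a})
print(tunnel_endpoint(hiveap3, "a4:5e:60:11:22:33"))  # 10.1.0.10
```

Because both tables are populated before the client arrives, the decision is a pair of dictionary lookups, which is what allows the tunnel to be built without delay.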

The following diagram shows the basic steps performed by HiveAPs as clients roam within

their subnet and across subnet boundaries.

Diagram 5. The Process for Fast/Secure Layer 3 Roaming

Step 1 – The client performs seamless, fast/secure layer 2 roaming within subnet A.

Step 2 – After the client successfully roams to HiveAP 2, HiveAP 2 sends an encrypted control packet over the Ethernet infrastructure to its HiveAP neighbors in the neighboring subnet. At a minimum, the control packet contains the client’s identity, security and QoS information, SIP call state, and the client’s originating subnet.

Step 3 – Because the client’s identity and key information, including SIP call state, is proactively synchronized between neighboring HiveAPs, when the client roams to HiveAP 3, HiveAP 3 has all the information it needs to enforce policies and to tunnel permitted traffic over a GRE tunnel to a portal HiveAP in the client’s original subnet. This allows the client to keep its IP address and active sessions as it roams. HiveAP 3 also predictively forwards the client’s roaming information to HiveAP 4 in anticipation of further roaming.

The ability of a client to maintain its IP, QoS, firewall, and security settings while roaming across subnet boundaries ensures that client application sessions are not dropped while roaming. Based on a configurable idle time or number of packets per minute, HiveAPs can be set to disassociate these tunneled wireless clients so that they reconnect and receive an IP address in their new subnet, allowing their traffic to be forwarded locally. If a client roams across subnet boundaries when it has no active sessions in progress, it can be transitioned to the new subnet immediately, eliminating the need to tunnel its traffic.
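The idle-time and packets-per-minute policy can be expressed as a small predicate. This Python sketch assumes the two thresholds are alternatives (either one triggers disassociation), consistent with the "idle time or number of packets per minute" wording; the parameter names and default values are hypothetical, not HiveOS settings.

```python
from dataclasses import dataclass


@dataclass
class TunneledClient:
    mac: str
    idle_seconds: float       # time since the client last sent or received traffic
    packets_last_minute: int  # packets seen over the last 60 seconds


@dataclass
class L3RoamPolicy:
    # Hypothetical names and defaults, for illustration only.
    max_idle_seconds: float = 60.0
    min_packets_per_minute: int = 20


def should_disassociate(client: TunneledClient, policy: L3RoamPolicy) -> bool:
    """True if a tunneled client looks inactive enough to move to its new subnet.

    Disassociating makes the client reconnect and obtain an IP address in the
    new subnet, after which its traffic is forwarded locally instead of tunneled.
    """
    idle_too_long = client.idle_seconds >= policy.max_idle_seconds
    too_little_traffic = client.packets_last_minute < policy.min_packets_per_minute
    return idle_too_long or too_little_traffic


policy = L3RoamPolicy()
print(should_disassociate(TunneledClient("voip-phone", 0.02, 3000), policy))  # False: active call
print(should_disassociate(TunneledClient("laptop", 300.0, 0), policy))        # True
```

Keeping busy clients such as an active VoIP phone tunneled while moving idle ones preserves sessions without leaving tunnels up indefinitely.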

In summary, with HiveAPs and cooperative control, wireless clients have the ability to

perform fast/secure roaming between HiveAPs within the same or between different

subnets without impacting client data or voice connections.

Tunnel Load Balancing in Large Scale Layer 3 Roaming Environments

Aerohive’s layer 3 roaming feature provides unprecedented scalability by using tunnel load balancing to distribute tunnels across all portal HiveAPs within a subnet. This leverages the distributed processing power of the wireless network to support thousands of layer 3 roaming tunnels and multiple gigabits of cross-subnet throughput. When a HiveAP in a remote subnet attempts to establish a tunnel to a HiveAP in the original subnet, and that HiveAP has a high tunnel load (a very rare case), it can tell the remote HiveAP to tunnel to another portal HiveAP in the subnet instead. This prevents any single HiveAP from being over-utilized.
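The redirect behavior can be sketched as follows. The per-portal tunnel limit and the choice of the least-loaded alternative are assumptions made for illustration; the document says only that an overloaded portal can direct the remote HiveAP to another portal in the subnet.

```python
from typing import Dict, Optional


def accept_or_redirect(target: str, tunnel_load: Dict[str, int],
                       max_tunnels: int) -> Optional[str]:
    """Decide which portal HiveAP should terminate a new layer 3 roaming tunnel.

    tunnel_load maps each portal in the original subnet to its current tunnel count
    (hypothetical bookkeeping). Returns the requested portal if it has capacity,
    otherwise the least-loaded portal that does, or None if every portal is full.
    """
    if tunnel_load[target] < max_tunnels:
        return target
    candidates = [p for p, n in tunnel_load.items() if n < max_tunnels]
    if not candidates:
        return None
    return min(candidates, key=lambda p: tunnel_load[p])


load = {"portal-1": 500, "portal-2": 120, "portal-3": 300}
print(accept_or_redirect("portal-1", load, max_tunnels=500))  # portal-2
print(accept_or_redirect("portal-3", load, max_tunnels=500))  # portal-3
```

Because every portal can make this decision locally from its own load, the scheme needs no central element to spread tunnels across the subnet.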

Radio Resource Management (RRM)

To respond to changes in the RF environment, HiveAPs use the Aerohive Channel Selection Protocol (ACSP). ACSP allows HiveAPs to cooperate to automatically select the best channels and power settings for optimal network performance across the entire system. HiveAPs use ACSP to scan channels and build tables of discovered wireless devices. These tables, along with additional RF information such as channel utilization and retry counters, are used to identify and classify interference types and sources. HiveAPs exchange ACSP state information with each other and use it to select the appropriate channels and power levels for the network topology and configuration.

For each radio in access mode, ACSP will select a channel and power level to maximize

coverage while minimizing interference with its neighbors. This is accomplished by

ensuring that HiveAPs use different channels than their immediate neighbors, and that

they adjust their power to minimize co-channel interference with other, more distant,

HiveAPs. For radios in backhaul (mesh) mode, ACSP ensures that they use the same

channel throughout the mesh, while still minimizing interference with the access links.
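A rough way to picture the access-mode goal (different channels from immediate neighbors) is greedy channel assignment over a neighbor graph. This sketch is a stand-in technique, not ACSP's actual algorithm: the interference weights, the processing order, and the function name are hypothetical, and power control and mesh backhaul are not modeled.

```python
def select_channels(interference: dict[str, dict[str, float]],
                    channels: list[int]) -> dict[str, int]:
    """Greedy channel assignment (a stand-in technique, not ACSP itself).

    interference[ap][other] is an assumed weight for how strongly `ap` hears
    `other` (for example derived from RSSI); larger means a closer neighbor.
    APs with the most neighbors choose first, and each takes the channel with
    the lowest total weight from neighbors that already hold that channel.
    """
    assignment: dict[str, int] = {}
    order = sorted(interference, key=lambda ap: len(interference[ap]), reverse=True)
    for ap in order:
        def cost(ch: int) -> float:
            return sum(w for other, w in interference[ap].items()
                       if assignment.get(other) == ch)
        assignment[ap] = min(channels, key=cost)  # ties go to the first listed channel
    return assignment


# Three APs that all hear each other end up on three different channels.
mesh = {"A": {"B": 1.0, "C": 1.0}, "B": {"A": 1.0, "C": 1.0}, "C": {"A": 1.0, "B": 1.0}}
print(select_channels(mesh, [1, 6, 11]))
```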

To maintain optimal performance, ACSP constantly checks the radio power settings and

can automatically adjust radio power based on communication from neighboring

APs to give the maximum coverage possible while minimizing interference. This behavior

is highly beneficial in a failure state or when an AP is taken offline, because neighboring

APs can automatically adjust their power to the optimum state, essentially taking into

account the missing AP. ACSP can also be scheduled to recalibrate the radio channels

during a configurable daily time window and only when no more than a specified number of clients are

associated. This helps ensure that radio channels do not switch while the WLAN is being

utilized, preventing a disruption of service for wireless clients.
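The scheduling condition can be expressed as a simple gate. In this sketch the window may wrap past midnight, and the client-count rule (recalibrate only when at most `max_clients` clients are associated) is an assumed reading of the setting; the function and parameter names are hypothetical.

```python
from datetime import time


def may_recalibrate(now: time, window_start: time, window_end: time,
                    associated_clients: int, max_clients: int) -> bool:
    """Return True if a scheduled channel recalibration may run now.

    Assumed semantics: the current time must fall inside the daily window
    and no more than `max_clients` clients may be associated.
    """
    if window_start <= window_end:
        in_window = window_start <= now < window_end
    else:  # window wraps past midnight, e.g. 23:00 to 04:00
        in_window = now >= window_start or now < window_end
    return in_window and associated_clients <= max_clients


print(may_recalibrate(time(2, 30), time(23, 0), time(4, 0),
                      associated_clients=1, max_clients=5))
```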

Station Load Balancing

In a wireless network, many users are often unknowingly connected to the same

AP, or even to the same radio on the same AP, while neighboring APs are

underutilized. This can significantly degrade client performance and leave

users with an unsatisfactory experience. It is logical, therefore, to encourage clients

to move from more heavily loaded APs to lightly loaded ones. To

aid in the distribution of clients among HiveAPs in a cooperative control infrastructure,

Aerohive has implemented station load balancing.

HiveAP load is determined, at a minimum, by:

1) the overall load of the system

2) the load in a specific area on a specific channel

3) the voice traffic load of attached stations

4) the total number of attached stations

5) the signal quality of attached stations

HiveAPs can make decisions to offload stations from one radio to another within the

same HiveAP (Bandsteering) based on client capabilities and/or to offload stations to a

HiveAP that is better suited to handle the load in the immediate area. Transitioning

clients between radios and between APs is done without breaking the application

session.
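To make the load factors listed above concrete, here is a minimal sketch that folds several of them into one score and offloads a station only when another radio (on the same HiveAP or on a neighbor) is clearly lighter. The field names, weights, normalization constants, and the 0.1 hysteresis margin are illustrative assumptions, and client capability checks for band steering are omitted.

```python
from dataclasses import dataclass


@dataclass
class RadioLoad:
    """Load inputs for one radio; field names and scales are assumptions."""
    name: str
    channel_utilization: float  # 0.0-1.0, load in this area on this channel
    voice_stations: int
    stations: int
    avg_snr_db: float           # signal quality of attached stations


def load_score(r: RadioLoad) -> float:
    """Higher means more heavily loaded. Weights are arbitrary for the sketch."""
    snr_penalty = max(0.0, (25.0 - r.avg_snr_db) / 25.0)  # weak links use more airtime
    return (0.5 * r.channel_utilization
            + 0.2 * min(r.voice_stations / 10, 1.0)
            + 0.2 * min(r.stations / 50, 1.0)
            + 0.1 * snr_penalty)


def pick_target(current: RadioLoad, candidates: list[RadioLoad],
                margin: float = 0.1) -> RadioLoad:
    """Stay put unless a candidate radio is lighter by at least `margin`."""
    best = min(candidates, key=load_score, default=current)
    if load_score(best) + margin < load_score(current):
        return best
    return current


busy_24 = RadioLoad("ap1-2.4GHz", 0.9, voice_stations=4, stations=40, avg_snr_db=15)
idle_5 = RadioLoad("ap1-5GHz", 0.2, voice_stations=0, stations=5, avg_snr_db=30)
print(pick_target(busy_24, [idle_5]).name)
```

The margin adds hysteresis so that stations are not bounced between two radios whose scores are nearly equal.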

Use of admission control can prevent over-utilization by ensuring there is enough

headroom for stations that roam to the HiveAP. It also prevents overloading a single

HiveAP, especially when there are other HiveAPs nearby that can better handle the

load. This is useful with VoWiFi, because it helps ensure that a HiveAP has availability to

support new or roaming voice stations, and that there is enough airtime available for

excellent voice quality.
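Admission control with roaming headroom can be sketched as an airtime budget check. The 90% limit, the 10% reserve for roaming stations, and the per-frame airtime figure in the example are assumptions chosen for illustration, not HiveOS defaults.

```python
def voice_call_airtime(packets_per_second: float, frame_airtime_us: float) -> float:
    """Fraction of channel airtime one two-way call consumes (uplink + downlink)."""
    return 2 * packets_per_second * frame_airtime_us / 1_000_000


def admit_station(current_airtime: float, requested_airtime: float,
                  limit: float = 0.9, roam_reserve: float = 0.1) -> bool:
    """Admit only if the radio keeps `roam_reserve` of headroom below `limit`.

    Airtime values are fractions of the channel (0.0-1.0).
    """
    return current_airtime + requested_airtime <= limit - roam_reserve


# Assumed example: 50 packets/s each way (20 ms packetization), ~300 us per frame exchange.
call = voice_call_airtime(50, 300)  # 3% of the channel
print(admit_station(0.50, call))    # room to spare
print(admit_station(0.85, call))    # would eat into the roaming reserve
```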

Policy Enforcement at the Edge

Utilizing Policy Enforcement at the Edge, a key technology in Aerohive Networks’

cooperative control architecture, HiveAPs can enforce powerful and flexible identity-

based security, access control, and QoS policies at the edge of the network. Applying

those policies to traffic at the local HiveAP allows the denial-of-service (DoS) and firewall

engines to validate traffic at the point of entry before it is forwarded through the HiveAP.

Likewise, it allows QoS engines in the HiveAP to instantaneously respond to the real-time

variations (measured in microseconds) in wireless throughput inherent to a dynamic RF

environment. Enforcing policy at the edge provides substantially better control than

solutions that enforce policy on a centralized controller that is multiple hops and

several milliseconds away.

User Profiles and Identity-Based Policy

The Aerohive solution defines different sets of access policies for different classes of users

through the creation of user profiles (a set of connectivity parameters). Each user profile

defines a VLAN, QoS policy, MAC firewall policy, IP firewall policy, tunnel policy, and

layer 3 roaming policy, which is assigned to users when they connect to the WLAN.

On a HiveAP, user profile attributes (numbers associated with a set of connectivity

parameters) are used to identify a user profile. If a user authenticates with the HiveAP

using 802.1X/EAP authentication or MAC address authentication, the RADIUS server can

return a user profile attribute to the HiveAP, which binds the user to a user profile. The

user profile attribute returned by RADIUS can be defined based on the user’s group

settings in Active Directory, eDirectory, OpenDirectory, LDAP, or a RADIUS user group,

user, or realm. If the user does not use 802.1X/EAP or MAC address authentication, the

user profile can be assigned to the user based on the SSID’s default user profile attribute

setting.
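The profile-binding rule above can be sketched as a lookup keyed by the user profile attribute number. The `UserProfile` fields shown are a subset of those the text lists, the names and values are hypothetical, and falling back to the SSID default when RADIUS returns an unconfigured attribute is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UserProfile:
    """A subset of the connectivity parameters a user profile carries."""
    name: str
    attribute: int          # user profile attribute number
    vlan: int
    qos_policy: str
    ip_firewall_policy: str


def resolve_profile(profiles: dict[int, UserProfile],
                    returned_attribute: Optional[int],
                    ssid_default_attribute: int) -> UserProfile:
    """Bind a client to a user profile.

    Uses the attribute returned by RADIUS after 802.1X/EAP or MAC
    authentication when present and configured; otherwise falls back to the
    SSID's default user profile attribute (fallback rule assumed).
    """
    if returned_attribute is not None and returned_attribute in profiles:
        return profiles[returned_attribute]
    return profiles[ssid_default_attribute]


profiles = {
    10: UserProfile("Staff", 10, vlan=10, qos_policy="staff-qos", ip_firewall_policy="staff-fw"),
    20: UserProfile("Guest", 20, vlan=20, qos_policy="guest-qos", ip_firewall_policy="guest-only"),
}
print(resolve_profile(profiles, 10, ssid_default_attribute=20).name)
print(resolve_profile(profiles, None, ssid_default_attribute=20).name)
```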

The user profile attribute assigned to a user stays with the user as he/she roams

throughout the WLAN. Administrators can configure HiveAPs to use the same set of

policies for a user profile throughout a WLAN or they can make adjustments based on

specific HiveAPs. For example, clients associating to the same SSID in different locations

can be assigned to different VLANs and subnets. As a user roams between the two

locations, the attribute is used by the HiveAPs to identify the set of policies for the client

at the new location including: VLAN, firewall policy, QoS policy, and layer 3 roaming

policy. Depending on the policies in place for each location, the client’s traffic can be

tunneled back to a HiveAP in the client’s original location (if it’s on a different subnet),

which is useful for seamlessly maintaining active IP sessions, or the client can be forced to

obtain new IP settings for the VLAN in the new location.

QoS at the Edge

Any time you transmit data from a higher speed network, such as an Ethernet network, to

a lower speed network, such as a WLAN, there is a high potential for packet loss and

performance degradation. Think of traffic from a five-lane highway that narrows down

to a two-lane road, and imagine how an emergency vehicle, such as an ambulance,

would get through that traffic on a busy day.

Advanced QoS techniques are required in order to ensure optimal performance for high-

priority applications, such as voice and video, without adversely affecting the

performance of lower-priority traffic such as web-based applications and email, when

traffic is sent from the wired network to the WLAN. Each HiveAP has a sophisticated and

granular QoS engine, which provides linear and unlimited scalability of QoS services

across the WLAN system.

Most modern WLAN systems support the Wi-Fi Alliance WMM certification for QoS, which

was based on the IEEE 802.11e amendment. With WMM, traffic can be classified into

one of four access categories (ACs), which are bound to queues, for transmission onto

the wireless network. Higher-priority ACs have different (better) arbitration values than

lower-priority ACs, so higher-priority traffic experiences less delay in transitioning from

queue to wireless medium.
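For reference, the priority-to-access-category mapping used by 802.11e/WMM, and the default EDCA parameters for non-AP stations on an OFDM PHY, are shown below. The `mean_wait_slots` helper is an approximation added for illustration: it adds AIFSN to the average random back-off (CWmin / 2) and ignores SIFS, retries, and any parameters an AP advertises instead of the defaults.

```python
# 802.1D user priority (0-7) -> WMM access category, per 802.11e/WMM.
UP_TO_AC = {1: "AC_BK", 2: "AC_BK", 0: "AC_BE", 3: "AC_BE",
            4: "AC_VI", 5: "AC_VI", 6: "AC_VO", 7: "AC_VO"}

# Default EDCA parameters for non-AP stations on an OFDM PHY
# (aCWmin = 15, aCWmax = 1023). APs advertise the values actually in use.
EDCA = {
    "AC_BK": {"aifsn": 7, "cw_min": 15, "cw_max": 1023},
    "AC_BE": {"aifsn": 3, "cw_min": 15, "cw_max": 1023},
    "AC_VI": {"aifsn": 2, "cw_min": 7, "cw_max": 15},
    "AC_VO": {"aifsn": 2, "cw_min": 3, "cw_max": 7},
}


def access_category(user_priority: int) -> str:
    if user_priority not in UP_TO_AC:
        raise ValueError(f"user priority must be 0-7, got {user_priority}")
    return UP_TO_AC[user_priority]


def mean_wait_slots(ac: str) -> float:
    """Rough first-attempt wait in slot times: AIFSN plus mean back-off (CWmin / 2)."""
    p = EDCA[ac]
    return p["aifsn"] + p["cw_min"] / 2


for up in (1, 0, 5, 6):
    ac = access_category(up)
    print(up, ac, mean_wait_slots(ac))
```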

Though WMM does a reasonable job of ensuring that each traffic type gets an

appropriate amount of access to the wireless medium, if voice and video (high-priority)

traffic are being used on the WLAN, it is likely that there will be momentary periods of

congestion for lower priority queues. When queues get full, even momentarily, packets

get dropped.

Dropped packets may not sound like a big deal, especially for TCP traffic (since lost

segments will be retransmitted); however, because TCP's congestion avoidance algorithm

cuts the congestion window in half when a packet is dropped, TCP performance can be

severely affected by L2 frames that are dropped. Though applications classified in the

higher-priority queues may not be affected, applications in lower-priority queues,

including email, database, accounting, inventory, workforce automation, and SaaS

applications, may suffer significantly. By using advanced QoS techniques to augment

WMM, it is possible to mitigate L2 frame loss and to ensure higher performance for

lower-priority applications as well.
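The effect of a single dropped packet on TCP throughput can be illustrated with a simplified additive-increase/multiplicative-decrease (Reno-style) model. This sketch ignores slow start, timeouts, and fast-recovery details; the starting window and the loss timing are arbitrary example values.

```python
def cwnd_trace(rtts: int, drops_at: set[int], start_cwnd: float = 32.0) -> list[float]:
    """Simplified TCP congestion avoidance, window in segments per RTT.

    Each loss-free RTT grows the window by one segment (additive increase);
    an RTT in which a loss is detected halves it (multiplicative decrease).
    """
    cwnd = start_cwnd
    trace = []
    for rtt in range(rtts):
        trace.append(cwnd)
        if rtt in drops_at:
            cwnd = max(cwnd / 2, 1.0)
        else:
            cwnd += 1.0
    return trace


print(cwnd_trace(5, drops_at={1}))
```

In this model the window falls from 33 to 16.5 segments after one loss and needs about 17 loss-free round trips to climb back, which is why even brief queue overflows can depress TCP throughput.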

In order to provide highly-efficient voice and video transmission and to alleviate the

problems that occur when packets are dropped because of momentary congestion of

WMM queues, Aerohive has augmented WMM by implementing advanced

classification, policing (rate limiting), queuing, and packet scheduling mechanisms within

each HiveAP.

The following diagram shows a simple example and workflow of the QoS engines within a

HiveAP.

Diagram 6. QoS Policy Enforcement at the Edge – Downlink Example

Diagram 6 shows an example of how traffic arriving from the wired LAN is processed by a

HiveAP to ensure highly-effective QoS to the WLAN.

Step 1 – Traffic is sent from the wired LAN to a HiveAP.

Step 2 – When packets arrive from an Ethernet uplink, a wireless uplink, or an access

connection, the traffic is assigned to its appropriate user profile, which defines the QoS

policy.

Step 3 – The QoS packet classifier categorizes traffic into eight queues per user based on

QoS classification policies. Classification policies can be configured to map traffic to

queues based on MAC OUI (Organizationally Unique Identifier), network service, SSID and

interface, or priority markings on incoming packets using IEEE 802.1p or DSCP (DiffServ

Code Point).

Step 4 – The QoS traffic policer can then enforce QoS policy by performing rate-limiting

and marking. Traffic can be rate-limited per user profile, per user, and per user queue.

Step 5 – The marker is responsible for marking packets with DSCP and/or 802.11e for traffic

destined to the WLAN and with DSCP and/or 802.1p for traffic destined for the wired LAN.

Step 6 – Traffic to the WLAN is queued in eight queues per user and waits to be scheduled

for transmission by the scheduler.

Step 7 – The QoS packet scheduling engine uses two scheduling types for determining

how packets are sent from the user queues to the WMM hardware queues for

transmission onto the wireless medium.

• Strict priority – Packets in queues that are scheduled with strict priority are sent

ahead of packets in all other queues. Strict priority is typically configured for user

queues that are assigned to low latency traffic such as voice.

• Weighted round robin – The scheduler can allocate the amount of airtime or

bandwidth that can be transmitted by the user of a wireless client device based

on weights specified for a user profile, individual users within a user profile, and

the eight queues per user. Based on weighted preferences, the scheduler moves

packets from the individual user queues to the appropriate WMM access

categories for transmission onto the wireless network.

Because a QoS packet scheduling engine is built into every HiveAP, HiveAPs have the

ability to closely monitor the availability of the WMM access categories and to instantly

react to changing network conditions. The QoS packet scheduling engine only transmits

to WMM access categories when they are available, queuing packets in eight queues

per user in the meantime. This behavior prevents dropped packets and jitter, which

adversely affect time-sensitive applications such as voice. It also prevents TCP

performance degradation caused by TCP congestion-control back-off, which is

invoked when TCP packets are dropped.

Step 8 – Finally, WMM functionality transmits L2 frames from its four access categories

based on the availability of the wireless medium. Packets from higher-priority access

categories are transmitted with a smaller random back-off window to allow transmission

onto the wireless medium with less delay.
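Steps 3, 4, 6, and 7 can be sketched as a small pipeline: a classifier that picks one of eight per-user queues, a token-bucket policer, and a scheduler that serves strict-priority queues first and the remaining queues by weighted round robin. The DSCP-to-queue rule (top three DSCP bits), token-bucket policing, and all names here are assumptions for illustration. The HiveAP classifier also supports OUI, service, SSID, and 802.1p criteria, and the handoff to the WMM hardware queues is not modeled.

```python
from collections import deque

NUM_QUEUES = 8  # eight queues per user


def classify(dscp: int) -> int:
    """Assumed classifier rule: use the top three DSCP bits as the queue number."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be 0-63")
    return dscp >> 3


class TokenBucket:
    """Policer: a packet passes only if enough byte tokens have accrued."""

    def __init__(self, rate_bps: float, burst_bytes: float):
        self.rate = rate_bps / 8      # refill rate in bytes per second
        self.capacity = burst_bytes
        self.tokens = burst_bytes
        self.last = 0.0

    def allow(self, size_bytes: int, now: float) -> bool:
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False                  # over the rate: drop or re-mark


class UserScheduler:
    """Strict priority for selected queues, weighted round robin for the rest."""

    def __init__(self, weights: list[int], strict: set[int]):
        if len(weights) != NUM_QUEUES:
            raise ValueError("need one weight per queue")
        self.queues = [deque() for _ in range(NUM_QUEUES)]
        self.weights = list(weights)
        self.credits = list(weights)
        self.strict = strict

    def enqueue(self, queue: int, packet) -> None:
        self.queues[queue].append(packet)

    def dequeue(self):
        for q in sorted(self.strict, reverse=True):
            if self.queues[q]:
                return self.queues[q].popleft()
        wrr = [q for q in range(NUM_QUEUES - 1, -1, -1) if q not in self.strict]
        for _ in range(2):            # second pass runs after a credit refill
            for q in wrr:
                if self.queues[q] and self.credits[q] > 0:
                    self.credits[q] -= 1
                    return self.queues[q].popleft()
            self.credits = list(self.weights)
        return None                   # nothing waiting


voice_q = classify(46)                # EF lands in queue 5 under the assumed rule
sched = UserScheduler(weights=[1, 0, 3, 0, 0, 0, 0, 0], strict={voice_q})
for i in range(1, 5):
    sched.enqueue(0, f"a{i}")
for i in range(1, 7):
    sched.enqueue(2, f"b{i}")
sched.enqueue(voice_q, "v1")
print([sched.dequeue() for _ in range(12)])
```

The voice packet leaves first; after that, queue 2 sends three packets for every one from queue 0 until its backlog drains.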

QoS with Dynamic Airtime Scheduling

In WLANs, wireless performance can vary significantly based on the RF environment.

WLANs are a shared medium, which means that all clients and neighboring APs using the

same or overlapping channel(s) compete for the same bandwidth. In addition, each

client’s data rate set varies depending on the Physical Layer Specification (PHY) it is using

(802.11 a/b/g/n), signal strength, and interference and noise it is experiencing. Use of

low-speed PHYs, RF interference, inconsistent RF coverage, connecting at the fringe of

the network, and moving behind obstructions all contribute to low data rate

connections. Slow clients consume more airtime to transfer a given amount of data,

leaving less airtime for other clients, which decreases network capacity and significantly

degrades the performance of all clients on the network. Aerohive’s patent-pending

wireless Quality of Service (QoS) technology, Dynamic Airtime Scheduling, is designed

to solve these problems.

The benefits of Dynamic Airtime Scheduling are compelling both to the IT organization

and to the users of the WLAN, as it enables clients connected at higher data rates, in a

mixed data rate environment, to achieve up to 10 times more throughput than they

would get with traditional WLAN infrastructures, without penalizing low-speed clients.

This means that users see faster download times and improved application performance,

and it means that low-speed clients don’t destroy the performance of the WLAN for the

rest of the users. This allows IT to implement a phased upgrade to 802.11n and

immediately reap the benefits of the new 802.11n infrastructure, even if it takes years to

upgrade all of the clients. And, because a user connecting at the fringe of the WLAN

can no longer consume all of the airtime, the network impact of a bad client or a weak

coverage area is diminished. This allows IT to reduce their infrastructure investment,

saving IT time and increasing user satisfaction.

With bandwidth-based QoS scheduling, the AP calculates the bandwidth used by clients

based on the size and number of frames transmitted to or from a client. Bandwidth-

based scheduling does not take into account the time it takes for a frame to be

transmitted over the air. Clients connected at different data rates take different

amounts of airtime to transmit the same amount of data. By enabling Dynamic Airtime

Scheduling, the scheduler allocates airtime, instead of bandwidth, to each type of user,

user profile, and user queue, which can be given weighted preferences based on QoS

policy settings. When traffic is transmitted to or from a client, the HiveAP calculates the

airtime utilization based on intricate knowledge of the clients, user queues, per-packet

client data rates, and frame transmission times, ensuring that the appropriate amount of

airtime is provided to clients based on their QoS policy settings.
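The difference between the two scheduling bases comes down to per-frame airtime. This sketch uses an idealized airtime estimate (payload bits divided by PHY rate, ignoring preambles, ACKs, and contention) and splits a scheduling period by hypothetical per-user weights; it is not Aerohive's scheduler.

```python
def frame_airtime_us(payload_bytes: int, phy_rate_mbps: float) -> float:
    """Payload transmission time only; ignores preamble, MAC headers, ACKs, back-off."""
    return payload_bytes * 8 / phy_rate_mbps  # bits / (Mbit/s) = microseconds


def airtime_budget_us(weights: dict[str, float], period_us: float) -> dict[str, float]:
    """Split one scheduling period's airtime in proportion to per-user weights."""
    total = sum(weights.values())
    return {user: period_us * w / total for user, w in weights.items()}


def frames_per_period(budget_us: float, payload_bytes: int, phy_rate_mbps: float) -> int:
    """How many full frames fit in a user's airtime budget at its current data rate."""
    return int(budget_us // frame_airtime_us(payload_bytes, phy_rate_mbps))


budget = airtime_budget_us({"fast": 1, "slow": 1}, period_us=100_000)  # 100 ms period
print(frames_per_period(budget["fast"], 1500, 300))  # client at 300 Mbps
print(frames_per_period(budget["slow"], 1500, 6))    # client at 6 Mbps
```

With equal weights, a client at 300 Mbps fits 1250 1500-byte frames into its 50 ms share while a client at 6 Mbps fits 25. A bandwidth-based scheduler would instead give both the same number of frames, so each slow frame (2000 µs) would consume as much airtime as 50 fast ones (40 µs each).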

Dynamic Airtime Scheduling is made possible because it is performed directly on the

HiveAPs responsible for processing the wireless frames. This gives the scheduling engine

access to all the information needed, in real time (at the microsecond level), allowing

the HiveAP to react to instantaneous changes in client airtime utilization that occur when

the client is moving.

Managing airtime usage is critical and needs to be dynamically scheduled because it

affects all clients within the cell and varies drastically by client, based on distance from

the AP, PHY being used, signal strength, interference, and error rates. With Dynamic

Airtime Scheduling, airtime can be dynamically scheduled to increase aggregate

network and client performance and to allocate network capacity based on IT-specified

policies.

To demonstrate the advantages of Dynamic Airtime Scheduling, a series of tests has

been run and documented in the Aerohive whitepaper: Optimizing Network and Client

Performance Through Dynamic Airtime Scheduling.

The following test, which is an excerpt from the whitepaper, simulates a typical WLAN’s

transition to 802.11n – where 802.11n APs are servicing a mixture of 802.11n clients and

legacy (a/b/g) clients. These tests show how Dynamic Airtime Scheduling prevents a

high performance 802.11n WLAN from being hampered by clients connected at lower

data rates – even if some of those slow clients are slow 802.11n clients. Depending on

distance from the AP, position of the antenna, or even RF interference, the data rate of a

connected 802.11n client can range from 6.5 to 300 Mbps (depending on supported

features), so some 802.11n clients will be slower than other 802.11n clients. It is even

possible that some 802.11n clients could actually be slower than 802.11a/g clients.

To simulate a mixed 11n/a environment, we connect three 802.11n clients at 3 different

rates on the 5 GHz radio band of an AP. The rates used for the 802.11n clients are 270

Mbps, 108 Mbps, and 54 Mbps (with frame aggregation enabled for 802.11n-only data

rates). Simultaneously we connect three 802.11a clients at 54 Mbps, 12 Mbps, and 6

Mbps. This simulates a mixed-PHY environment where six clients, 3 of each type, are

connected to the same AP but at varying distances or interference levels, which cause

the data rate to vary. These tests transfer 10,000 good 1500-byte HTTP packets for each

client. The graph to the left shows the results without airtime scheduling. In this test, each

of the clients, though connected at different data rates, has the same throughput as the

client at the lowest rate, which in this case is 6 Mbps. The time it takes all 6 clients to finish

transferring 10,000 HTTP packets is between 90 and 110 seconds.

Diagram 7. Side-by-side comparison with and without Dynamic Airtime Scheduling

The graph to the right shows the same test with a HiveAP using Aerohive’s Dynamic

Airtime Scheduling. The transfer time at the 270 Mbps data rate is approximately 10

seconds – about 10 times faster than the 110 seconds seen in the previous test. Likewise,

the rest of the transfer times improved significantly. The 802.11n client at 108 Mbps was

over 6 times faster, the 802.11n client at 54 Mbps was over 3 times faster, the 802.11a

client at 54 Mbps was 2.5 times faster, the 802.11a client at 12 Mbps was 30% faster, and

the 802.11a client at 6 Mbps was slightly slower (by about 10%).

With Dynamic Airtime Scheduling, network performance is dramatically improved for

individual clients and for the network as a whole. All of the high data rate clients saw

substantial improvements in performance, while the slower clients saw almost no

negative impact. In an open-air network, the performance gain will be even

greater: once the high-speed clients finish, fewer clients are on the air, so contention

and retries decrease, further improving performance.
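An idealized model helps explain why the slowest client loses little while the fast clients gain a lot. Without airtime scheduling, the sketch assumes clients advance in lockstep (equal frames), so all finish together; with airtime fairness, active clients split airtime equally and a finished client's share passes to the clients still transferring. The model ignores protocol overhead, contention, and retries, so its absolute times (about 36 seconds) are far below the measured 90 to 110 seconds; only the pattern is meaningful.

```python
def completion_equal_frames(bits: float, rates_mbps: list[float]) -> list[float]:
    """Frame-fair (no airtime scheduling): clients advance in lockstep,
    so all finish together after the sum of everyone's airtime."""
    total_s = sum(bits / (r * 1e6) for r in rates_mbps)
    return [total_s] * len(rates_mbps)


def completion_equal_airtime(bits: float, rates_mbps: list[float]) -> list[float]:
    """Airtime-fair processor sharing: active clients split airtime equally,
    and a finished client's share goes to those still transferring."""
    need = [bits / (r * 1e6) for r in rates_mbps]  # airtime each client needs, seconds
    order = sorted(range(len(need)), key=lambda i: need[i])
    done = [0.0] * len(need)
    elapsed, served = 0.0, 0.0
    for k, i in enumerate(order):
        active = len(need) - k
        elapsed += (need[i] - served) * active
        served = need[i]
        done[i] = elapsed
    return done


BITS = 10_000 * 1500 * 8            # 10,000 x 1500-byte packets per client
RATES = [270, 108, 54, 54, 12, 6]   # 3 x 802.11n, 3 x 802.11a, in Mbps
print([round(t, 1) for t in completion_equal_frames(BITS, RATES)])
print([round(t, 1) for t in completion_equal_airtime(BITS, RATES)])
```

In this model the 270 Mbps client finishes in about 2.7 seconds instead of 36, while the 6 Mbps client finishes at 36 seconds either way, because the total airtime the clients need is unchanged.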

Service Level Agreements (SLAs) and Airtime Boost

Dynamic Airtime Scheduling gives HiveAPs a platform on which to build additional

capabilities. Such capabilities currently include Performance Sentinel, SLA, and Airtime

Boost. Performance Sentinel is a feature that compares client throughput and demand

with pre-defined SLA throughput levels. It uses client data statistics to determine client

throughput and uses buffer statistics in the HiveAP’s QoS engine to determine if a client is

trying to send more data. SLAs are easily configured within a user profile, as shown

below.

Diagram 8. SLA Parameter Configuration

If, for any reason, SLAs are not being met, actions can be taken, such as logging or use

of the Airtime Boost feature. Airtime Boost works in concert with

Dynamic Airtime Scheduling to provide additional airtime to a client that is not

meeting its SLA.

Diagram 9. SLA with Airtime Boost
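The Performance Sentinel check described above (throughput below the SLA while data is still waiting in the QoS engine) can be sketched like this. The function, field names, and action strings are hypothetical; they illustrate the decision, not Aerohive's configuration model.

```python
from dataclasses import dataclass


@dataclass
class ClientStats:
    mac: str
    throughput_kbps: float  # measured over the last reporting interval
    queued_bytes: int       # backlog for this client in the HiveAP's QoS queues


def sla_action(stats: ClientStats, sla_kbps: float, airtime_boost: bool) -> str:
    """Return "ok", "log", or "boost" (hypothetical action names).

    A client is treated as missing its SLA only if it is below the target and
    still has data waiting; a client that is simply idle is not flagged.
    """
    if stats.throughput_kbps >= sla_kbps or stats.queued_bytes == 0:
        return "ok"
    return "boost" if airtime_boost else "log"


laptop = ClientStats("00:11:22:33:44:55", throughput_kbps=800, queued_bytes=64_000)
print(sla_action(laptop, sla_kbps=2000, airtime_boost=True))
```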

The ability to assign and guarantee specific levels of throughput to individual users or

groups of users within a Wi-Fi network is another significant step, pioneered by Aerohive

Networks, toward making a Wi-Fi network as deterministic as Ethernet.

HiveAP Security and Theft Protection

Unlike firewalls, routers, and switches, which are typically locked away in a wiring closet,

APs are usually mounted on ceilings, above ceiling tiles, on walls, and in other publicly-

accessible locations. Because of this, Aerohive has developed mechanisms to prevent

sensitive information from being obtained from HiveAPs and to prevent HiveAPs from

functioning in the event of a HiveAP theft.

• Encrypted Configuration File

If a malicious user were to steal a HiveAP and then open it up and remove the flash

drive, they would find the HiveAP’s configuration encrypted and unreadable.

• Encrypted and/or Hashed Passwords and Shared Keys in Command Line Interface

If the bootstrap configuration is not set or if someone knows the admin name and

password and is able to gain access to the HiveOS command line interface, all

passwords and shared keys are hashed and/or encrypted so they cannot be

obtained.

• Secure Trusted Platform Module (TPM) device in HiveAP 300 series

The HiveAP 300 series HiveAPs are equipped with an integrated TPM chip, which is a

standards-based hardware device that provides capabilities for secure storage of

sensitive data. HiveAPs use the TPM chip to securely store cryptographic keys for

encrypting and decrypting the configuration file, shared secrets, and user databases

stored in flash, ensuring they cannot be viewed or altered.

• 802.1X/EAP Dynamically-Generated Keys Are Removed When A HiveAP Is Powered Off

All dynamically-generated keys, such as keys used by 802.1X/EAP authenticated

clients, including PMKs (Pairwise Master Keys) and PTKs (Pairwise Transient Keys),

are securely stored in memory and are not accessible via the command line

interface. Once the HiveAP is powered off, the keys are wiped from memory.

• HiveAP RADIUS Users Stored in DRAM Are Removed When HiveAP is Powered Off

If the HiveAP is configured as a RADIUS server and uses a local user database, this

database can optionally be stored in DRAM, which is cleared when a HiveAP is

powered off or rebooted.

• Bootstrap Configuration

If the hardware reset button functionality is enabled, you can set a bootstrap

configuration on a HiveAP so that when the HiveAP boots, after a hardware reset, it

will be configured with:

– a strong admin name and password for serial console or SSH access;

– disabled wireless interfaces;

– disabled console access;

– and optionally disabled Ethernet interfaces.

Note that if you do configure the bootstrap to disable console access, wireless

interfaces, and Ethernet interfaces, the HiveAP will essentially become a paperweight in

the event it is stolen.

In the unlikely and unfortunate event that a HiveAP is ever stolen, Aerohive has provided

these security mechanisms to prevent loss of secure data and to prevent the misuse of

the HiveAP.

Wireless Intrusion Protection System (WIPS) - Rogue AP and Client Prevention

Using WIPS functionality, each HiveAP has the ability to perform off-channel scanning to

identify and locate unauthorized (rogue) APs and clients, as well as misbehaving clients,

and report them to HiveManager. Administrators can configure rogue AP

detection/prevention policies that look for APs that have invalid BSSIDs, SSIDs,

authentication and encryption settings, and other incorrect configuration parameters.
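A minimal sketch of the over-the-air classification step: radios with unknown BSSIDs that advertise a corporate SSID are flagged as rogue, and known radios with unexpected security settings are flagged as misconfigured. The labels, policy fields, and security strings are assumptions; on-network detection and mitigation are not modeled.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ObservedAP:
    bssid: str
    ssid: str
    security: str  # e.g. "wpa2-802.1x", "open"


@dataclass
class RoguePolicy:
    """Hypothetical policy inputs; BSSIDs are stored lowercase."""
    known_bssids: set[str] = field(default_factory=set)
    corporate_ssids: dict[str, str] = field(default_factory=dict)  # SSID -> required security


def classify_ap(ap: ObservedAP, policy: RoguePolicy) -> str:
    """Label an AP heard over the air as managed, misconfigured, rogue, or neighbor."""
    if ap.bssid.lower() in policy.known_bssids:
        expected = policy.corporate_ssids.get(ap.ssid)
        return "managed" if expected == ap.security else "misconfigured"
    if ap.ssid in policy.corporate_ssids:
        return "rogue"      # unknown radio advertising a corporate SSID
    return "neighbor"       # foreign network: report it, do not mitigate


policy = RoguePolicy({"00:19:77:00:00:01"}, {"Corp": "wpa2-802.1x"})
print(classify_ap(ObservedAP("AA:BB:CC:DD:EE:FF", "Corp", "open"), policy))
```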

Along with searching for rogue APs over the airwaves, HiveAPs can be configured to use

on-network rogue AP detection functionality to probe VLANs using cooperative control

messages to detect if rogue APs are physically attached to the corporate switched

network. Once rogue APs are found, the administrator can use rogue mitigation

functionality (e.g. deauthentication) to prevent clients from associating with the rogue

APs. The administrator can then use HiveManager to locate the position of rogue APs on

a topology map so they can be removed.

The following diagram shows a list of rogue APs in HiveManager, Aerohive’s WLAN

management system. In the picture, you can see the detailed information displayed

about the rogue APs along with a link to a topology map displaying the location of the

rogue AP on the map if it was discovered by at least three HiveAPs.

Diagram 10. Rogue AP List

The following diagram shows an example of a discovered rogue AP on the topology

map and the channel mappings of HiveAPs. The rogue AP is highlighted in the circle.

Diagram 11. Rogue AP Location on Topology Map

Voice- and Video-aware WIPS Scanning

In order for HiveAPs to detect rogue APs or clients on channels other than the one

the HiveAP is using, the HiveAP must take a few milliseconds at a time to scan other

radio channels. This off-channel scanning can slightly affect voice and video quality. To

prevent degradation of voice and video quality, the off-channel scanning can be

configured to operate only when there is no traffic in voice queues.
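The voice-aware rule amounts to a gate in front of each scheduled scan. In this sketch the scan interval and the idea of checking per-radio voice queue depths are assumptions, and the function name is hypothetical.

```python
def should_scan_off_channel(voice_queue_depths: list[int], now_s: float,
                            last_scan_s: float, interval_s: float = 10.0) -> bool:
    """Allow an off-channel scan only if one is due and no voice traffic is queued."""
    scan_due = now_s - last_scan_s >= interval_s
    voice_idle = all(depth == 0 for depth in voice_queue_depths)
    return scan_due and voice_idle


# A due scan is deferred while a call has packets waiting, then runs once the queue is empty.
print(should_scan_off_channel([2], now_s=20.0, last_scan_s=5.0))
print(should_scan_off_channel([0], now_s=20.5, last_scan_s=5.0))
```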

Integrated Protocol Analyzer and Client Monitoring

Integrated into HiveManager is a Packet Capture tool. This tool allows the administrator

to capture filtered or unfiltered traffic from an AP’s radios and to store the capture file for

post-capture analysis.

Diagram 12. Integrated Protocol Analysis

Monitoring one or more clients in real-time within HiveManager is a snap with the Client

Monitor tool. You simply select the client you want to monitor, select one or more APs to

monitor in regard to this client, and start the process. Many clients can be monitored at

once, and everything that happens with monitored clients is logged to the display and

can be exported.

Diagram 13. Client Monitoring

3rd Party WIPS and Protocol Analyzer Integration

Aerohive has worked with leading WIPS vendors to integrate their systems with

HiveManager. This integration greatly simplifies configuration and deployment of the

WIPS system by white-listing all HiveAPs in one simple step.

Diagram 14. 3rd Party WIPS Integration

Aerohive has integrated HiveOS with Wireshark, one of the industry’s leading protocol

analyzers, for the purposes of remote troubleshooting and performance and security

analysis. Integration with other protocol analyzers is on the way, and with this kind of

integration, administrators can now see what the AP sees without disconnecting clients.

Each radio can capture data and send it to the analyzer (over the air or over the

Ethernet) while clients continue to operate normally. This revolutionary step enables

quick and easy monitoring across a distributed enterprise, or even large campus

installations, without an on-site visit.

Diagram 15. 3rd Party Protocol Analyzer Integration

Wireless Decryption at the HiveAP Allows Advanced Security at the Edge

Each HiveAP is responsible for encrypting and decrypting wireless frames and therefore

has the ability to view frame payloads, perform security checks, and encrypt again if

necessary before sending traffic out. This allows HiveAPs to enforce advanced security

policies locally, at the point of network entry, before traffic is sent to the wired network,

wirelessly through a mesh, or to another wireless client.

HiveAPs apply security policies to traffic based on the identity of a user or by the SSID

being used. Security policies can enforce MAC address filters, MAC (layer 2) firewall

policies, IP (layer-3/layer-4) stateful inspection firewall policies, and can prevent a

number of wireless MAC-layer and IP-layer denial of service (DoS) attacks. Because

each HiveAP is responsible for processing the security policies for its own traffic, the

distributed processing power of all HiveAPs in the WLAN system is harnessed. Given the

high-end processing power of each HiveAP, collective processing power far exceeds that of a

centralized, controller-based model, allowing virtually unlimited scalability.

HiveAPs decrypt wireless frames at the network edge (before they are transmitted onto

the wired network), making it possible to use the security systems currently in place in the

wired network to enforce security policies on wireless traffic as well. This way, wireless

and wired traffic alike can be forced to flow through the corporate security systems,

including firewalls, antivirus gateways, intrusion detection and prevention systems, and

network access control (NAC) devices.

RADIUS Server Built into HiveAPs

For locations that do not have local RADIUS servers or do not want to backhaul RADIUS

traffic over a WAN to a central office, HiveAPs can be configured to provide a full

RADIUS server implementation. This gives administrators the ability to implement a

centrally-managed, secure WLAN authentication solution using 802.1X/EAP without

having to purchase, configure/modify, or maintain corporate RADIUS servers. HiveAPs

with the RADIUS server enabled can terminate 802.1X/EAP authentication methods such

as PEAP, EAP-TTLS, EAP-TLS, and LEAP locally on the HiveAP.

HiveAPs can authenticate wireless users using a local user database on the HiveAP or

with external domain authentication to Directory Services (Active Directory, eDirectory,

OpenDirectory, LDAP, or LDAP/S). Because the 802.1X/EAP key processing and

distribution is performed in HiveAPs for wireless clients, these processes are offloaded from

the corporate RADIUS servers, preserving their performance. With the ability to use any

HiveAP as a RADIUS server, network administrators have the flexibility to design fail-safe

RADIUS server implementations anywhere within a WLAN. This is especially useful in the

branch office, where the 802.1X/EAP authentication process can occur locally on a

HiveAP without the need to traverse a WAN link.

The HiveManager management appliance makes configuring HiveAP RADIUS servers a

straightforward process. The RADIUS server settings, server certificates, and local users

can be managed from a simple, easy-to-use graphical interface.

Built-in Captive Web Portal

Another way to provide policy enforcement at the edge is by using captive web portal

functionality built into each HiveAP. Captive web portals are what you expect to see

when accessing a wireless network at a hotel or airport. When a user associates with an

SSID which has the captive web portal enabled, and then opens a web browser, the user

is redirected to a web-based registration screen hosted by the HiveAP itself.

Diagram 16. HiveAP Captive Web Portal

The administrator can customize the web page to authenticate guests whose accounts were created with GuestManager (Aerohive’s web-based guest management solution), a HiveAP RADIUS server, or a third-party RADIUS server. Additionally, the captive web portal can be configured to display a web-based self-registration form where users can fill in their details and agree to the company’s acceptable use policy.

Administrators can also customize the captive web portal by designing their own web pages. After a user completes the registration process, the HiveAP enforces policy on that user’s traffic based on the user profile assigned to the SSID, or on a user profile assigned by an attribute returned from GuestManager or a RADIUS server.
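The per-client flow described above can be sketched as a small state table keyed by client MAC address. This is a minimal sketch under stated assumptions: until a client registers, its web requests are answered with a redirect to the portal; afterwards, its traffic is handled under the profile returned by GuestManager or a RADIUS server, or else the SSID’s default profile. The portal URL, the class and method names, and the string return values are hypothetical stand-ins, not HiveAP internals.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

PORTAL_URL = "http://portal.example/register"  # hypothetical portal address


@dataclass
class Ssid:
    name: str
    default_profile: str  # user profile assigned to the SSID


@dataclass
class CaptivePortal:
    ssid: Ssid
    # client MAC -> assigned user profile; a missing entry means "not registered yet"
    sessions: Dict[str, str] = field(default_factory=dict)

    def handle_http(self, mac: str, url: str) -> str:
        """Redirect unregistered clients; pass registered clients through."""
        if mac not in self.sessions:
            return f"302 Location: {PORTAL_URL}"
        return f"forward {url} under profile {self.sessions[mac]}"

    def complete_registration(self, mac: str, returned_profile: Optional[str] = None) -> str:
        """Mark a client as registered.

        A profile returned as an attribute by GuestManager or a RADIUS
        server overrides the SSID's default profile.
        """
        profile = returned_profile or self.ssid.default_profile
        self.sessions[mac] = profile
        return profile


portal = CaptivePortal(Ssid("Guest-WiFi", default_profile="Guest"))
print(portal.handle_http("aa:bb:cc:00:00:01", "http://news.example/"))
# 302 Location: http://portal.example/register
portal.complete_registration("aa:bb:cc:00:00:01", returned_profile="Guest-DMZ")
print(portal.handle_http("aa:bb:cc:00:00:01", "http://news.example/"))
# forward http://news.example/ under profile Guest-DMZ
```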

Identity-Based Tunnels

When a client associates with an SSID and is assigned to a user profile, the profile can, in addition to applying firewall policies, QoS policies, and VLAN assignment, direct the client’s traffic to be tunneled to a HiveAP in a predefined location or subnet.

The following diagram shows a common use case for identity-based tunnels. An SSID called Guest-WiFi is configured on HiveAPs in the internal network. When a guest client associates with the SSID, it is assigned to the Guest-DMZ user profile, which has a policy to tunnel all of the guest client’s frames (layer 2 traffic) via L2-GRE to one of the HiveAPs in the DMZ. The client obtains its IP address from a DHCP server accessible from the DMZ, which can optionally run on a DMZ HiveAP.

When a guest attempts to access the network, the captive web portal on the local HiveAP restricts the guest’s traffic until the guest has either authenticated with a user account created using GuestManager or a RADIUS server, or completed a web-based self-registration form. Once access is granted, the local HiveAP enforces the guest-specific firewall policy, QoS policy, and tunnel policy configured in the Guest-DMZ user profile, and the permitted traffic is sent over an L2-GRE tunnel to a HiveAP in the DMZ, which permits access to the Internet. Guest traffic never appears on the internal network outside of the L2-GRE tunnel; the client is essentially an extended member of the remote network.
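Because the tunnel is L2-GRE, the DMZ HiveAP receives the guest’s complete Ethernet frame rather than just its IP packet. The sketch below assumes a plain GRE header as defined in RFC 2784 with protocol type 0x6558 (Transparent Ethernet Bridging). The outer IP header addressed to the DMZ HiveAP is omitted, the sample frame is made up, and whether HiveAPs use exactly this header layout is an assumption the document does not confirm.

```python
import struct

GRE_PROTO_TEB = 0x6558  # Transparent Ethernet Bridging: payload is a full Ethernet frame


def gre_encapsulate(ethernet_frame: bytes) -> bytes:
    """Prepend a minimal 4-byte GRE header (no checksum, key, or sequence fields)."""
    flags_and_version = 0x0000  # C bit clear, reserved bits zero, version 0
    return struct.pack("!HH", flags_and_version, GRE_PROTO_TEB) + ethernet_frame


def gre_decapsulate(gre_packet: bytes) -> bytes:
    """Strip the GRE header and return the inner Ethernet frame unchanged."""
    flags_and_version, proto = struct.unpack("!HH", gre_packet[:4])
    # This sketch only accepts the minimal header produced above.
    if flags_and_version != 0 or proto != GRE_PROTO_TEB:
        raise ValueError("not a minimal L2-GRE packet")
    return gre_packet[4:]


# Hypothetical guest frame: destination MAC, source MAC, EtherType IPv4, dummy payload.
frame = bytes.fromhex("ffffffffffff" "aabbcc000001" "0800") + b"payload"
packet = gre_encapsulate(frame)
print(packet[:4].hex())                 # 00006558
print(gre_decapsulate(packet) == frame)  # True: the DMZ HiveAP sees the original L2 frame
```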

Cooperative Control WLAN Architecture

Diagram 17. A Common Use for Identity-Based Tunnels - Placing Guests in a Firewalled DMZ for Internet-only Access

While this diagram shows identity-based tunnels used for guest access, they can serve a variety of other scenarios as well. With identity-based tunnels, network resources can be accessed from any location in the wireless network as if the user were physically there. For additional scalability, HiveAPs can use round-robin selection to build tunnels to a set of HiveAPs at the destination, and tunnels exist only while clients are using them.
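The round-robin, on-demand behavior can be sketched as a small tunnel manager on the local HiveAP. This is an illustration under assumptions, not the HiveOS algorithm: each new client is assigned to the next destination HiveAP in turn, a tunnel is considered open while at least one client uses it, and it is torn down when its last client leaves. The class, method names, and addresses are hypothetical.

```python
from collections import Counter
from itertools import cycle
from typing import Dict, List


class TunnelManager:
    def __init__(self, destinations: List[str]) -> None:
        self._next_dest = cycle(destinations)          # round-robin over destination HiveAPs
        self.client_dest: Dict[str, str] = {}          # client MAC -> destination HiveAP
        self.clients_per_tunnel: Counter = Counter()   # destination -> active client count

    @property
    def open_tunnels(self) -> List[str]:
        """Tunnels exist only while at least one client is using them."""
        return sorted(d for d, n in self.clients_per_tunnel.items() if n > 0)

    def client_joined(self, mac: str) -> str:
        dest = next(self._next_dest)
        self.client_dest[mac] = dest
        self.clients_per_tunnel[dest] += 1  # the first client implicitly builds the tunnel
        return dest

    def client_left(self, mac: str) -> None:
        dest = self.client_dest.pop(mac)
        self.clients_per_tunnel[dest] -= 1
        if self.clients_per_tunnel[dest] == 0:
            del self.clients_per_tunnel[dest]  # last client gone: tear the tunnel down


mgr = TunnelManager(["10.9.0.1", "10.9.0.2"])  # hypothetical DMZ HiveAPs
for mac in ["c1", "c2", "c3"]:
    mgr.client_joined(mac)
print(mgr.open_tunnels)  # ['10.9.0.1', '10.9.0.2']
mgr.client_left("c2")
print(mgr.open_tunnels)  # ['10.9.0.1']
```

Balancing per client rather than per frame is a deliberate choice in this sketch: all of a client’s frames follow one tunnel, which avoids reordering them across destinations.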

Guest Access Policy Enforcement at the Edge

Along with the captive web portal capabilities available within HiveAPs, a number of complementary features within Aerohive products can be used to provide a robust guest access solution. These features include:

1. GuestManager – a robust, appliance-based guest management solution with an easy-to-use web interface that allows a lobby ambassador to create time-limited user accounts with access to the WLAN. Because GuestManager supports RADIUS Dynamic Change of Authorization (CoA), guest users can be removed from the network when their temporary accounts expire. Accounts can have different user profiles, which assign different QoS settings, firewall policies, and VLANs. Access can be granted via username/password authentication or after the guest completes a simple registration form.

2. Guest segmentation/isolation – after a guest has been authenticated or registered with a HiveAP, the guest can be placed on a separate VLAN that blocks access to corporate resources while still allowing access to the Internet. If VLAN segmentation is not possible due to the network architecture at the access layer, guests can be tunneled, using the identity-based tunnel functionality, directly to one or more HiveAPs within a firewalled DMZ area, such as a lobby.

3. User Profile-based Firewall Policies – these policies allow a separate set of MAC and IP firewall rules to be applied to guest users, limiting their access to specific resources. They can be used as an alternative to VLANs or tunneling, or as an additional layer of control beyond VLANs and tunneling.

4. Per-SSID RADIUS server settings – allow a guest SSID to use a separate RADIUS server for authenticating guest users. This is important because GuestManager and most third-party guest management solutions use their own RADIUS servers, which provide a graphical interface that lets lobby administrators create temporary guest user accounts as guests register at the front desk. With RADIUS server settings configured per SSID, corporate users authenticate with the corporate RADIUS servers, while guest users authenticate with the RADIUS servers tied to the guest management solution.

5. Per-SSID DoS settings – allow stricter management-frame DoS settings and airtime flood prevention settings to be applied to guests.
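Items 4 and 5 amount to attaching authentication and DoS settings to each SSID rather than to the HiveAP as a whole. A minimal sketch, assuming a per-SSID policy table and a simple one-second counting window for management frames per client; the SSID names, server addresses, and thresholds are hypothetical values, not Aerohive defaults, and the detection method is an illustrative stand-in for HiveOS’s actual DoS logic.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class SsidPolicy:
    radius_servers: List[str]     # RADIUS servers used for this SSID only
    max_mgmt_frames_per_sec: int  # per-client management-frame flood threshold


# Hypothetical values: guests use the guest-management RADIUS server and a stricter limit.
POLICIES: Dict[str, SsidPolicy] = {
    "Corp": SsidPolicy(["10.0.0.10", "10.0.0.11"], max_mgmt_frames_per_sec=50),
    "Guest-WiFi": SsidPolicy(["10.0.5.20"], max_mgmt_frames_per_sec=10),
}


class MgmtFloodDetector:
    """Counts management frames per (SSID, client) in one-second windows.

    Old windows are never expired here; a real implementation would prune them.
    """

    def __init__(self) -> None:
        self.counts: Dict[Tuple[str, str, int], int] = defaultdict(int)

    def is_flooding(self, ssid: str, mac: str, timestamp: float) -> bool:
        window = (ssid, mac, int(timestamp))  # bucket by whole second
        self.counts[window] += 1
        return self.counts[window] > POLICIES[ssid].max_mgmt_frames_per_sec


detector = MgmtFloodDetector()
print(POLICIES["Guest-WiFi"].radius_servers)  # ['10.0.5.20']
guest = [detector.is_flooding("Guest-WiFi", "aa:01", 100.2) for _ in range(11)]
print(guest[-1])  # True: the 11th frame in one second exceeds the guest limit of 10
corp = [detector.is_flooding("Corp", "aa:01", 100.2) for _ in range(11)]
print(corp[-1])   # False: the corporate SSID tolerates up to 50
```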

The Aerohive guest access solution provides the flexibility of using features integrated into each HiveAP while allowing seamless interoperability with third-party guest access solutions.

Private Preshared Key (PPSK) Security

Although 802.1X/EAP is the most secure approach to Wi-Fi authentication, it is typically implemented only for devices managed by IT administrators who control the domain infrastructure, user accounts, and wireless clients being used. For temporary users such as contractors, students, or guests, the IT administrator may not have the access rights, the time, or the knowledge required to configure 802.1X/EAP clients on all the different wireless devices involved.

Complicating matters further, many legacy and SOHO-class wireless devices still in use in the enterprise do not support 802.1X/EAP, or WPA2 with Opportunistic Key Caching (OKC), which is required for fast/secure roaming between APs. The next best option has traditionally been to use a preshared key (PSK) for these devices; however, classic PSK gives up many of the advantages of 802.1X/EAP, such as the ability to revoke keys for wireless devices that are lost, stolen, or compromised, and the added security of unique keys per user or client device.

To draw on the strengths of both the preshared key and 802.1X/EAP mechanisms without incurring the significant shortcomings of either, Aerohive has introduced a new approach to WLAN authentication: Private PSKs (PPSKs). PPSKs are unique preshared keys created for individual users on the same SSID. They offer the key uniqueness and policy flexibility of 802.1X/EAP with the deployment simplicity of preshared keys.
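Why unique keys on one SSID matter can be shown with the standard WPA2-Personal key derivation from IEEE 802.11i: the pairwise master key (PMK) is PBKDF2-HMAC-SHA1 over the passphrase, salted with the SSID, with 4096 iterations and a 32-byte output. In the sketch below, the SSID, device names, user names, and passphrases are made up. With a classic PSK every device ends up with the same PMK, so the AP has nothing to tell them apart by; with private PSKs, each user gets a distinct PMK on the same SSID.

```python
import hashlib


def derive_pmk(passphrase: str, ssid: str) -> bytes:
    """WPA2-Personal PMK: PBKDF2-HMAC-SHA1(passphrase, SSID, 4096 iterations, 32 bytes)."""
    return hashlib.pbkdf2_hmac("sha1", passphrase.encode(), ssid.encode(), 4096, 32)


SSID = "Corp-Devices"  # hypothetical SSID

# Classic PSK: every device is configured with the same passphrase.
shared = {dev: derive_pmk("SharedSecret123", SSID) for dev in ["laptop", "scanner", "phone"]}
print(len(set(shared.values())))  # 1 -> the AP cannot distinguish these devices

# Private PSKs: each user receives a different passphrase on the same SSID.
private = {
    "alice": derive_pmk("k7Q-Wn2p-8fLz", SSID),
    "bob": derive_pmk("r4T-Zx9m-3hVc", SSID),
    "carol": derive_pmk("h2L-Pq5v-7yNd", SSID),
}
print(len(set(private.values())))  # 3 -> one distinct PMK per user
```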

The following diagram is a simple example comparing a WLAN that uses traditional PSKs with one that uses Aerohive’s PPSK functionality. With the traditional approach, all of the client devices use the same PSK and receive the same access rights, because the clients cannot be distinguished from one another.

Diagram 18. Private Preshared Key (PPSK)

By contrast, with PPSK, as shown on the right, every user is assigned his or her own unique or “private” PSK, which can be created manually or generated automatically by HiveManager and sent to the user via email, printout, or SMS. Each PPSK also identifies the user’s access policy, including VLAN, firewall policy, QoS policy, tunnel policy, access schedule, and key validity period. Because the keys are unique, one user’s key cannot be used to derive keys for other users. Furthermore, if a device is lost, stolen, or compromised, that user’s key can be revoked, preventing unauthorized access from any wireless device using it. For client users, configuration is the same as with a standard PSK.
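The document does not say how a HiveAP determines which private key a client is using. A common approach, assumed here, is for the AP to hold one PMK per user and, when message 2 of the WPA2 4-way handshake arrives, to test each candidate PMK until one reproduces the frame’s MIC; the matching entry then selects that user’s policy. The sketch uses the standard 802.11i derivations: the PRF with the label "Pairwise key expansion" to produce a 48-byte PTK, whose first 16 bytes (the KCK) key an HMAC-SHA1 MIC truncated to 16 bytes. The EAPOL frame is a placeholder byte string, and the table layout, user names, passphrases, VLANs, and expiry field are hypothetical.

```python
import hashlib
import hmac
import os
import time
from dataclasses import dataclass
from typing import Dict, Optional


def derive_pmk(passphrase: str, ssid: str) -> bytes:
    return hashlib.pbkdf2_hmac("sha1", passphrase.encode(), ssid.encode(), 4096, 32)


def prf(key: bytes, label: bytes, data: bytes, nbytes: int) -> bytes:
    """802.11i PRF: concatenated HMAC-SHA1(key, label || 0x00 || data || counter)."""
    out, counter = b"", 0
    while len(out) < nbytes:
        out += hmac.new(key, label + b"\x00" + data + bytes([counter]), hashlib.sha1).digest()
        counter += 1
    return out[:nbytes]


def eapol_mic(pmk: bytes, aa: bytes, spa: bytes, anonce: bytes, snonce: bytes, frame: bytes) -> bytes:
    """Derive the PTK, take its KCK (first 16 bytes), and compute the EAPOL-Key MIC."""
    data = min(aa, spa) + max(aa, spa) + min(anonce, snonce) + max(anonce, snonce)
    kck = prf(pmk, b"Pairwise key expansion", data, 48)[:16]
    return hmac.new(kck, frame, hashlib.sha1).digest()[:16]


@dataclass
class PpskEntry:
    pmk: bytes
    vlan: int          # hypothetical per-user policy attribute
    expires_at: float  # key validity period, as epoch seconds


def identify_user(table: Dict[str, PpskEntry], mic: bytes, now: float, **handshake) -> Optional[str]:
    """Return the user whose PMK reproduces the client's MIC, or None."""
    for user, entry in table.items():
        if now >= entry.expires_at:
            continue  # expired keys are skipped
        if hmac.compare_digest(eapol_mic(entry.pmk, **handshake), mic):
            return user
    return None


SSID = "Corp-Devices"
now = time.time()
table = {
    "alice": PpskEntry(derive_pmk("k7Q-Wn2p-8fLz", SSID), vlan=10, expires_at=now + 86400),
    "bob": PpskEntry(derive_pmk("r4T-Zx9m-3hVc", SSID), vlan=20, expires_at=now + 86400),
}

# Bob's device runs the handshake with its private passphrase.
hs = dict(aa=bytes.fromhex("001122334455"), spa=bytes.fromhex("66778899aabb"),
          anonce=os.urandom(32), snonce=os.urandom(32), frame=b"<EAPOL msg 2, MIC zeroed>")
client_mic = eapol_mic(derive_pmk("r4T-Zx9m-3hVc", SSID), **hs)

user = identify_user(table, client_mic, now, **hs)
print(user, table[user].vlan)  # bob 20

del table["bob"]  # revoke bob's key: his device can no longer authenticate
print(identify_user(table, client_mic, now, **hs))  # None
```

Trying every stored key costs one PRF and one HMAC per user per handshake, which is cheap because the PBKDF2 step is done once when each key is created, not at association time.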

Virtual Private Networking (VPN)

The Aerohive wireless VPN solution uses HiveAP 300s, with their powerful network processor and CPU, as VPN servers, and HiveAPs of any model as VPN clients. Because the wireless VPN solution is made up entirely of Aerohive components, you can use HiveManager’s web-based interface to create a VPN policy, server certificates, and user-based policies, and push them to all participating HiveAPs with a single click to establish a global VPN.

Aerohive’s wireless VPN solution was designed from the ground up to take advantage of layer 2 (L2) and layer 3 (L3) tunneling, making it easier to deploy a global VPN. Typical L3 IPSec VPN solutions require planning and implementing unique IP subnets for the remote offices, configuring DHCP relays/IP helpers on remote routers, and setting up IP routing, access control lists (ACLs), or firewall policies to send traffic through the VPN. Aerohive’s wireless VPN avoids these complexities because it uses GRE to encapsulate layer 2 traffic and IPSec to authenticate and encrypt that traffic so it can be passed securely through the Internet. To the client, it appears as though it is physically connected to the L2-switched network at the corporate office. For the IT administrator, the planning and implementation complexities of typical L3 VPN solutions are alleviated because the same subnet can be shared among many remote sites.

To deploy an Aerohive wireless VPN solution, HiveAPs configured as VPN servers are installed in the corporate network, typically in a DMZ. HiveAPs configured as VPN clients are installed at remote sites, where they can obtain IP address settings via DHCP and automatically establish an L2 VPN tunnel to the HiveAP acting as a VPN server at corporate headquarters. From that point on, traffic from corporate devices at the remote site, including DHCP, is encapsulated in GRE and sent encrypted through a VPN tunnel to the HiveAP VPN servers at headquarters. There the traffic is decrypted, decapsulated, and transmitted onto the corporate LAN with its full L2 MAC header and VLAN tag intact, just as if it had originated at the corporate office. This gives IT administrators the ability to allocate and share corporate IP subnets among many remote sites without creating a unique IP subnet for each office, which removes the need to configure IP routing at the corporate site or branch offices to route traffic through the VPN. HiveAPs use MAC-layer routing to determine whether traffic should be forwarded locally or sent through the VPN.
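The MAC-layer routing decision can be pictured as a lookup on the destination MAC address. A minimal sketch, assuming the VPN client HiveAP keeps a table of MAC addresses learned on its local ports and sends everything else from corporate devices through the tunnel, while broadcasts such as DHCP discovery go both to local ports and through the VPN. The table contents, port names, tunnel name, and the handling of unknown and broadcast destinations are illustrative assumptions, not documented HiveOS behavior; a real bridge would also exclude the ingress port when flooding.

```python
from typing import Dict, List

BROADCAST = "ff:ff:ff:ff:ff:ff"
VPN = "vpn-tunnel"  # hypothetical name for the GRE-over-IPSec tunnel interface


def forward(dst_mac: str, local_macs: Dict[str, str]) -> List[str]:
    """Return the egress interfaces for a frame from a corporate device.

    local_macs maps MAC addresses learned at this remote site to local ports.
    """
    dst = dst_mac.lower()
    if dst == BROADCAST:
        # Broadcasts (e.g. DHCP discovery) reach local devices and headquarters alike.
        return sorted(set(local_macs.values())) + [VPN]
    if dst in local_macs:
        return [local_macs[dst]]  # destination is at this site: forward locally
    return [VPN]  # anything else is assumed to be on the corporate LAN behind the VPN


site = {"aa:bb:cc:00:00:01": "eth1", "aa:bb:cc:00:00:02": "wifi0"}
print(forward("AA:BB:CC:00:00:02", site))  # ['wifi0']
print(forward("00:50:56:12:34:56", site))  # ['vpn-tunnel']
print(forward(BROADCAST, site))            # ['eth1', 'wifi0', 'vpn-tunnel']
```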

An example of Aerohive’s wireless VPN solution is displayed below, which shows the