
CHAPTER 8

VARIABLE SELECTION

8.1 The Model Building Problem

“Variable selection” means selecting which variables to include in our model (rather than some

sort of selection which is itself variable).

Variable selection also occurs when the competing models differ on which variables should be

included but agree on the mathematical form that will be used for each variable — e.g.,

temperature might or might not be included as a predictor, but there is no question about

whether, if it is, we’d use temperature, temperature², or log temperature.

Model Selection

The complete regression analysis depends on the explanatory variables present in the model. It

is understood in the regression analysis that only correct and important explanatory variables

appear in the model. In practice, after ensuring the correct functional form of the model, the

analyst usually has a pool of explanatory variables which possibly influence the process or

experiment.

However, in most practical problems not all of these candidate variables are used in the regression model; instead, a subset of explanatory variables is chosen from the pool. Determining an appropriate subset of explanatory variables for the regression is called the problem of variable selection.

While choosing a subset of explanatory variables, there are two possible options:

• To make the model as realistic as possible, the analyst may include as many explanatory variables as possible.

• To make the model as simple as possible, one may include only a few explanatory variables.

So, model building and subset selection have conflicting objectives.

• When a large number of variables is included in the model, all of these factors can influence the prediction of the study variable y.

• When a small number of variables is included, the predictive variance of $\hat{y}$ decreases.

• When observations on more variables have to be collected, it involves more cost, time, labour etc.

A compromise between these consequences is struck to select the “best regression equation”.

Regression Analysis | Chapter 8 | MTH5404| Mustafa, M. S (UPM) 1

Selecting the “best” regression model

• What is “best”?

• There are a number of ways we can choose the “best” - they will not all yield the same

results.

• What about the other potential problems with the model that might have been ignored

while selecting the “best” model?

The problem of variable selection is addressed assuming that the functional form of each explanatory variable, such as $x^2$, $\log x$, $1/x$ etc., is known and that no outliers or influential observations are present in the data.

Various statistical tools like residual analysis, identification of influential or high leverage

observations, model adequacy etc. are linked to variable selection. In fact, all these processes

should be solved simultaneously.

Usually, these steps are employed iteratively. In the first step, a strategy for variable selection is chosen and the model is fitted with the selected variables. The fitted model is then checked for functional form, outliers, influential observations etc. Based on the outcome, the model is re-examined and the selection of variables is reviewed again.

8.2 Strategy for Building a Regression Model

This strategy involves several phases.

Data Collection

The data collection requirements for building a regression model vary with the nature of the

study.

1. Controlled Experiments

The researcher controls the treatments by assigning them to experimental units and observes the response.

For example: a researcher studied the effects of the size of a graphic presentation and the time allowed for analysis on the accuracy with which the analysis of the presentation is carried out. The response variable is a measure of the accuracy of the analysis, and the explanatory variables are the size of the graphic presentation and the time allowed. A treatment consisted of a particular combination of size of presentation and length of time allowed.


2. Controlled Experiment with Covariates

In a controlled experiment, additional information, such as characteristics of the experimental units, is used in designing the experiment so as to reduce the variance of the experimental errors.

3. Confirmatory Observational Studies

These studies, based on observational rather than experimental data, are intended to test (i.e. to confirm or not to confirm) hypotheses derived from previous studies. For these studies, data are collected for explanatory variables that previous studies have shown to affect the response variable (controlled variables, or risk factors) as well as for the new variable or variables involved in the hypothesis (primary variables).

4. Exploratory Observational Studies

In the social, behavioral, and health sciences, management, and other fields, it is often

not possible to conduct controlled experiments. Furthermore, adequate knowledge for

conducting confirmatory observational studies may be lacking. As a result, many

studies in these fields are exploratory observational studies where investigators search

for explanatory variables that might be related to the response variable.

Data Preparation

Once the data have been collected, edit checks should be performed to identify gross errors as well as extreme outliers.

Difficulties with data errors are especially prevalent in large data sets and should be corrected

or resolved before the model building begins.

Preliminary Model Investigation

Once the data have been properly edited, the formal modelling process can begin. A variety of diagnostics should be employed to identify:

• the functional form in which each explanatory variable should enter the model

• important interactions that should be included in the model

Useful tools at this stage:

• scatter plots and residual plots, for determining relationships and their strength

• fitting selected explanatory variables in regression functions, to explore relationships, possible strong interactions and the need for transformations

Reduction of Predictor Variables

• A regression model with many explanatory variables may be difficult to maintain, while a regression model with a limited number of explanatory variables is easier to work with and to understand.

• The presence of many highly inter-correlated explanatory variables may increase the sampling variation of the regression coefficients, increase problems of round-off errors, and fail to improve, or even worsen, the model’s predictive ability.

• An actual worsening of the model’s predictive ability can occur when explanatory variables that are not related to the response variable are kept in the regression model.

• Once the functional form of the regression relation has been tentatively decided (whether a variable enters in linear form, quadratic form etc., and whether interaction terms are needed), the next step is to identify a few “good” subsets of X variables. These may include first-order, quadratic or other curvature terms, and interaction terms.


8.3 Consequences of Model Misspecification

Suppose there are K candidate regressors, $x_1, x_2, \ldots, x_K$, and $n \ge K + 1$ observations. The full model is

$$y_i = \beta_0 + \sum_{j=1}^{K} \beta_j x_{ij} + \varepsilon_i, \qquad i = 1, 2, \ldots, n \tag{8.1}$$

We will assume:

• The list of candidate regressors includes all the important variables (no unmeasured confounders).

• The intercept, $\beta_0$, will always be in the model.

Suppose we delete r regressors from the model and retain $p = K - r$ ($p + 1$ total terms including the intercept). The model may be rewritten as

$$y = X_{p+1} \beta_{p+1} + X_r \beta_r + \varepsilon \tag{8.2}$$

where the X matrix has been partitioned into $X_{p+1}$ and $X_r$, and $\beta$ has been partitioned into $\beta_{p+1}$ and $\beta_r$.

For the full model, the least squares estimate of $\beta$ is

$$\hat{\beta}^* = (X'X)^{-1} X'y \tag{8.3}$$

and an estimate of the variance is

$$\hat{\sigma}^{2*} = \frac{y'\left(I - X(X'X)^{-1}X'\right)y}{n - K - 1} \tag{8.4}$$

The components of $\hat{\beta}^*$ are denoted by $\hat{\beta}^*_{p+1}$ and $\hat{\beta}^*_r$, and $\hat{y}^*_i$ denotes the fitted values.

The subset model is denoted by

$$y = X_{p+1} \beta_{p+1} + \varepsilon \tag{8.5}$$

where the least squares estimate of $\beta_{p+1}$ is

$$\hat{\beta}_{p+1} = (X'_{p+1} X_{p+1})^{-1} X'_{p+1} y \tag{8.6}$$

and the estimate of the residual variance is

$$\hat{\sigma}^2 = \frac{y'\left(I - X_{p+1}(X'_{p+1}X_{p+1})^{-1}X'_{p+1}\right)y}{n - p - 1} \tag{8.7}$$

and the fitted values are $\hat{y}_i$.

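Some Properties of the Estimates $\hat{\beta}_{p+1}$ and $\hat{\sigma}^2$

1. $E(\hat{\beta}_{p+1}) = \beta_{p+1} + (X'_{p+1}X_{p+1})^{-1}X'_{p+1}X_r\beta_r$, so $\hat{\beta}_{p+1}$ is a biased estimate of $\beta_{p+1}$ unless $\beta_r = 0$ or $X'_{p+1}X_r = 0$.

2. $Var(\hat{\beta}_{p+1}) = \sigma^2 (X'_{p+1}X_{p+1})^{-1}$ and $Var(\hat{\beta}^*) = \sigma^2 (X'X)^{-1}$.

3. The estimate $\hat{\sigma}^{2*}$ from the full model is an unbiased estimate of $\sigma^2$. For the subset model

$$E(\hat{\sigma}^2) = \sigma^2 + \frac{\beta_r' X_r' \left(I - X_{p+1}(X'_{p+1}X_{p+1})^{-1}X'_{p+1}\right) X_r \beta_r}{n - p - 1}$$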
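Property 1 can be checked numerically. The following is a minimal sketch in Python with NumPy; the design matrix, the coefficient values and all variable names are made up for illustration and are not part of the notes. Because the least squares estimator is linear in y, applying the subset estimator (8.6) to the noise-free mean of model (8.2) gives $E(\hat{\beta}_{p+1})$ exactly, which can then be compared with $\beta_{p+1}$ plus the bias term.

```python
import numpy as np

# Hypothetical design: intercept + 2 retained regressors (X_p1) and 1 deleted regressor (X_r).
rng = np.random.default_rng(0)
n = 20
X_p1 = np.column_stack([np.ones(n), rng.normal(size=n), rng.normal(size=n)])
# The deleted regressor is built to be correlated with a retained one.
X_r = (0.5 * X_p1[:, 1] + rng.normal(size=n)).reshape(-1, 1)

beta_p1 = np.array([1.0, 2.0, -1.0])  # assumed true coefficients of the retained terms
beta_r = np.array([3.0])              # assumed true coefficient of the deleted regressor

# Mean response under the full model (8.2), i.e. without the error term.
mu = X_p1 @ beta_p1 + X_r @ beta_r

# Subset estimator (8.6) applied to the mean gives E(beta_hat_{p+1}).
expected_subset = np.linalg.solve(X_p1.T @ X_p1, X_p1.T @ mu)

# Bias term of property 1: (X'_{p+1} X_{p+1})^{-1} X'_{p+1} X_r beta_r.
bias = np.linalg.solve(X_p1.T @ X_p1, X_p1.T @ X_r) @ beta_r

print("E(beta_hat_subset):", expected_subset)
print("beta_p1 + bias    :", beta_p1 + bias)
```

The two printed vectors agree, and the bias is non-zero here because the deleted regressor is correlated with a retained one and has a non-zero coefficient.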

8.4 Criteria for Model Selection

Various criteria have been proposed in the literature to evaluate and compare the subset

regression models.

The coefficient of Multiple Determination

Identify several “good” subsets of X variables for which $R_p^2$ is high.

Let $R_p^2$ denote the $R^2$ for a subset regression model with p parameters (the intercept and $p - 1$ X variables), the convention used by the criteria in this section.

The $R_p^2$ criterion is equivalent to using the error sum of squares $SSE_p$ as the criterion: subsets for which $SSE_p$ is small are considered good.

$$R_p^2 = 1 - \frac{SSE_p}{SSTO} \tag{8.8}$$

Since $R_p^2$ does not take account of the number of parameters in the regression model and since $R_p^2$ can never decrease as p increases, the adjusted coefficient of multiple determination, $R_{a,p}^2$, has been suggested as an alternative criterion.

$$R_{a,p}^2 = 1 - \frac{MSE_p}{SSTO/(n - 1)} \tag{8.9}$$
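A small Python sketch of (8.8) and (8.9) may help to see why the adjusted version is preferred. It assumes that p counts parameters including the intercept, so that $MSE_p = SSE_p/(n - p)$ as in (8.14); the function names and the sums of squares are invented for illustration.

```python
def r2(sse_p: float, ssto: float) -> float:
    """Coefficient of multiple determination, equation (8.8)."""
    return 1.0 - sse_p / ssto


def adjusted_r2(sse_p: float, ssto: float, n: int, p: int) -> float:
    """Adjusted R^2, equation (8.9), with MSE_p = SSE_p / (n - p)."""
    mse_p = sse_p / (n - p)
    return 1.0 - mse_p / (ssto / (n - 1))


# Made-up sums of squares for two nested models fitted to n = 30 cases.
n, ssto = 30, 100.0
small = {"p": 3, "sse": 40.0}
large = {"p": 4, "sse": 39.5}  # one extra term, only a tiny drop in SSE

for m in (small, large):
    print(m["p"],
          round(r2(m["sse"], ssto), 4),
          round(adjusted_r2(m["sse"], ssto, n, m["p"]), 4))
```

With these numbers $R_p^2$ rises when the extra term is added, while $R_{a,p}^2$ falls, because the small reduction in $SSE_p$ does not make up for the lost degree of freedom.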


Mallows’ $C_p$ Criterion

This criterion is concerned with the total mean squared error of the fitted values for each subset regression model. The mean squared error concept involves the total error in each fitted value, $\hat{Y}_i - \mu_i$, where $\mu_i$ is the true mean response when the levels of the predictor variables $X_k$ are those for the ith case.

$$C_p = \frac{SSE_p}{MSE(X_1, \ldots, X_{P-1})} - (n - 2p) \tag{8.10}$$

where $SSE_p$ is the error sum of squares for the fitted subset regression model with p parameters (i.e. with $p - 1$ X variables), and $MSE(X_1, \ldots, X_{P-1})$ is the error mean square of the model containing all $P - 1$ potential X variables.

When there is no bias in the regression model with $p - 1$ X variables, so that $E(\hat{Y}_i) \equiv \mu_i$, the expected value of $C_p$ is approximately p:

$$E(C_p) \approx p \quad \text{when } E(\hat{Y}_i) \equiv \mu_i$$

In using the $C_p$ criterion, we seek to identify subsets of X variables for which (1) the $C_p$ value is small and (2) the $C_p$ value is near p. Subsets with small $C_p$ values have a small total mean squared error, and when the $C_p$ value is also near p, the bias of the regression model is small.
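The arithmetic of (8.10) can be sketched in a few lines of Python. The function name and all numbers below are hypothetical; the only inputs are the subset error sum of squares, the error mean square of the model with all potential X variables, n and p.

```python
def mallows_cp(sse_p: float, mse_full: float, n: int, p: int) -> float:
    """Mallows' C_p, equation (8.10): SSE_p / MSE(all variables) - (n - 2p)."""
    return sse_p / mse_full - (n - 2 * p)


# Made-up example: n = 30 cases, full model with P = 5 parameters and SSE = 50.
n, P, sse_full = 30, 5, 50.0
mse_full = sse_full / (n - P)  # 2.0

print(mallows_cp(sse_full, mse_full, n, P))  # full model
print(mallows_cp(52.5, mse_full, n, 4))      # subset with p = 4
print(mallows_cp(58.0, mse_full, n, 3))      # subset with p = 3
```

For the full model $C_P = P$ by construction, since $SSE_P / MSE = n - P$. In this made-up example the four-parameter subset has $C_p = 4.25$, close to p, while the three-parameter subset has $C_p = 5$, well above p = 3, which points to bias.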

$AIC_p$ and $SBC_p$ Criteria

Akaike’s information criterion (AIC) statistic is given as:

$$AIC_p = n \ln SSE_p - n \ln n + 2p \tag{8.11}$$

Schwarz’ Bayesian criterion (SBC) statistic is given as:

$$SBC_p = n \ln SSE_p - n \ln n + [\ln n]\,p \tag{8.12}$$
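Both criteria share the first two terms and differ only in the penalty for the number of parameters, $2p$ versus $[\ln n]\,p$. The sketch below, in Python with invented function names and invented sums of squares, shows how the two penalties can lead to different choices; models with smaller values of either criterion are preferred.

```python
import math


def aic(sse_p: float, n: int, p: int) -> float:
    """Akaike's information criterion, equation (8.11)."""
    return n * math.log(sse_p) - n * math.log(n) + 2 * p


def sbc(sse_p: float, n: int, p: int) -> float:
    """Schwarz' Bayesian criterion, equation (8.12)."""
    return n * math.log(sse_p) - n * math.log(n) + math.log(n) * p


# Made-up comparison for n = 30: a 3-parameter and a 4-parameter model.
n = 30
for p, sse in ((3, 40.0), (4, 37.0)):
    print(p, round(aic(sse, n, p), 3), round(sbc(sse, n, p), 3))
```

Here $AIC_p$ is smaller for the four-parameter model, while $SBC_p$ is smaller for the three-parameter model: since $\ln n > 2$ whenever $n \ge 8$, SBC penalizes additional parameters more heavily and tends to favour smaller models.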

PRESS Criterion Statistic

Just as the residuals and the residual sum of squares act as a criterion for subset model selection, the PRESS residuals and the prediction sum of squares can also be used as a basis for subset model selection.

The $PRESS_p$ (prediction sum of squares) criterion is a measure of how well the use of the fitted values for a subset model can predict the observed responses $Y_i$. The error sum of squares, $SSE = \sum (Y_i - \hat{Y}_i)^2$, is also such a measure.

The PRESS measure differs from SSE in that each fitted value $\hat{Y}_i$ for the PRESS criterion is obtained by deleting the ith case from the data set, estimating the regression function for the subset model from the remaining $n - 1$ cases, and then using the fitted regression function to obtain the predicted value $\hat{Y}_{i(i)}$:

$$PRESS_p = \sum_{i=1}^{n} \left(Y_i - \hat{Y}_{i(i)}\right)^2 \tag{8.13}$$

Models with small $PRESS_p$ values are considered good candidates.
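The deletion procedure behind (8.13) can be sketched directly in Python with NumPy. The data below are simulated and the function names are illustrative. The second function is not from the notes: it uses the standard identity that the deleted residual equals $e_i/(1 - h_{ii})$, where $h_{ii}$ is the ith diagonal element of the hat matrix, so that PRESS can be obtained from a single fit.

```python
import numpy as np


def press_loo(X, y):
    """PRESS by explicit deletion: refit without case i, then predict case i."""
    n = len(y)
    total = 0.0
    for i in range(n):
        keep = np.arange(n) != i
        b, *_ = np.linalg.lstsq(X[keep], y[keep], rcond=None)
        total += (y[i] - X[i] @ b) ** 2
    return float(total)


def press_hat(X, y):
    """Same quantity from one fit, using deleted residual = e_i / (1 - h_ii)."""
    H = X @ np.linalg.solve(X.T @ X, X.T)
    e = y - H @ y
    return float(np.sum((e / (1.0 - np.diag(H))) ** 2))


# Simulated data: intercept plus two X variables (values are arbitrary).
rng = np.random.default_rng(1)
n = 25
X = np.column_stack([np.ones(n), rng.normal(size=n), rng.normal(size=n)])
y = X @ np.array([2.0, 1.0, -0.5]) + rng.normal(scale=0.3, size=n)

b_all, *_ = np.linalg.lstsq(X, y, rcond=None)
sse = float(np.sum((y - X @ b_all) ** 2))
print(press_loo(X, y), press_hat(X, y), sse)
```

The two PRESS computations agree, and PRESS exceeds SSE because each case is predicted by a model that did not see it.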

Residual Mean Square

The residual mean square for a subset regression model with p parameters is

$$MSE_p = \frac{SSE_p}{n - p} \tag{8.14}$$

So, $MSE_p$ can be used as a criterion for model selection like $SSE_p$. $SSE_p$ decreases as p increases. As p increases, $MSE_p$ initially decreases, then stabilizes, and finally may increase if the reduction in $SSE_p$ is not sufficient to compensate for the loss of one degree of freedom in the factor $(n - p)$.

A model with smaller $SSE_p$ is preferable.

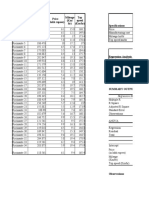

Example 1: A hospital surgical unit was interested in predicting survival in patients undergoing

a particular type of liver operation. A random selection of 108 patients was available for

analysis. From each patient record, the following information was extracted from the

preoperation evaluation:

$X_1$: blood clotting score

$X_2$: prognostic index

$X_3$: enzyme function test score

$X_4$: liver function test score

$X_5$: age, in years

$X_6$: indicator variable for gender (0 = male, 1 = female)

$X_7$ and $X_8$: indicator variables for history of alcohol use:

| Alcohol Use | $X_7$ | $X_8$ |
|---|---|---|
| None | 0 | 0 |
| Moderate | 1 | 0 |
| Severe | 0 | 1 |


8.5 Automatic Search Procedures for Model Selection

Questions in Model Selection:

1. How many variables to use?

• Smaller sets are more convenient, particularly for parameter interpretation.

• Larger sets may explain more of the variation in the response and be better for

prediction.

2. Which variables to use?

• F-tests, t-tests

• Statistical tools (e.g., $R^2$, Mallows’ $C_p$)

Automatic computer search procedures simplify the task of arriving at a subset of explanatory variables. Two common approaches are considered:

1. The “Best” Subsets Algorithms

− The algorithm determines the best subsets according to a specified criterion without requiring the fitting of all possible subsets.

− Only a small fraction of all possible regression models has to be calculated.

− Several regression models can be identified as "good" for final consideration, depending on which criteria we use.

− The surgical unit example:

max($R_{a,p}^2$) = 0.823: seven- or eight-parameter model

min($C_7$) = 5.541

min($AIC_7$) = −163.834

min($SBC_5$) = −153.406

min($PRESS_5$) = 2.738

− When the pool of potential X variables is very large, say greater than 30, the “best” subsets algorithms may require excessive processing time.

− Under these conditions, one of the stepwise regression procedures is suggested to assist in the selection of X variables.

2. Stepwise Regression Methods

− An automatic search procedure that develops the “best” subset of X variables sequentially; variants are forward and backward stepwise regression.

− Uses t-statistics to “search” for a model.

− Based on the t-statistic, one variable is chosen to add to (or delete from) the model.

− Repeat until no variables can be added or deleted (based on the alpha values you set initially).

− The stepwise search procedures end with the identification of a single regression model as "best".

− Weakness: only a single regression model is identified as “best”.

− Remedy: explore and identify other candidate models with approximately the same number of explanatory variables as the model identified by the automatic procedure.
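To make the idea of ranking subsets by a criterion concrete, here is a brute-force sketch in Python with NumPy. It fits every possible subset, which is exactly what the “best” subsets algorithms described in item 1 avoid, so it is only practical for a small pool of X variables. The simulated data, the function names and the choice of $SBC_p = n \ln SSE_p - n \ln n + [\ln n]\,p$ as the ranking criterion are assumptions made for the illustration.

```python
from itertools import combinations

import numpy as np


def sse_of(X, y):
    """Error sum of squares of the least squares fit of y on the columns of X."""
    b, *_ = np.linalg.lstsq(X, y, rcond=None)
    r = y - X @ b
    return float(r @ r)


def all_subsets(X, y):
    """Fit every subset of the columns of X (intercept always included).

    Returns (SBC_p, p, columns) tuples sorted from best to worst SBC_p.
    """
    n, K = X.shape
    ones = np.ones((n, 1))
    results = []
    for k in range(K + 1):
        for cols in combinations(range(K), k):
            Xs = np.hstack([ones, X[:, list(cols)]])
            p = k + 1  # parameters: intercept + k X variables
            sse = sse_of(Xs, y)
            sbc = float(n * np.log(sse) - n * np.log(n) + np.log(n) * p)
            results.append((sbc, p, cols))
    return sorted(results)


# Simulated pool of 4 candidate variables; only columns 0 and 2 affect y.
rng = np.random.default_rng(3)
n = 60
X = rng.normal(size=(n, 4))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 2] + rng.normal(scale=0.5, size=n)

ranking = all_subsets(X, y)
for sbc, p, cols in ranking[:3]:
    print(round(sbc, 2), p, cols)
```

Printing the top few subsets rather than only the first one follows the remedy above: several models with similar criterion values can be kept for final consideration.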

Forward Stepwise Regression

The forward stepwise regression search algorithm uses $t^*$ statistics and the associated P-values.

Step 1: Fit a simple linear regression model for each of the potential X variables. For each simple linear regression model, test whether or not the slope equals zero, using

$$t_k^* = \frac{\hat{\beta}_k}{s(\hat{\beta}_k)} \tag{8.15}$$

The X variable with the largest $|t_k^*|$ value (smallest P-value) is the candidate for the first addition. If this $|t_k^*|$ value exceeds a predetermined level, the X variable is added. Otherwise, the program terminates with no X variable considered to enter the regression model.

Step 2: Assume $X_7$ is the variable entered at Step 1. The stepwise regression routine now fits all regression models with two X variables, where $X_7$ is one of the pair. For each such regression model, the $t_k^*$ statistic corresponding to the newly added predictor $X_k$ is obtained. The X variable with the largest $|t_k^*|$ value (smallest P-value) is the candidate for addition at the second stage. If this value exceeds a predetermined level, the second X variable is added. Otherwise, the program terminates.

Step 3: Suppose $X_3$ is added at the second stage. Now the stepwise regression routine examines whether any of the other variables already in the model should be dropped. At this step, there is only one other variable in the model, $X_7$, so that only one $t^*$ test statistic is obtained:

$$t_7^* = \frac{\hat{\beta}_7}{s(\hat{\beta}_7)} \tag{8.16}$$

At later stages, there would be a number of these $t^*$ statistics, one for each of the variables in the model besides the one last added. The variable for which $|t^*|$ is smallest is the candidate for deletion. If this value falls below a predetermined limit, the variable is dropped from the model; otherwise, it is retained.

Step 4: Suppose $X_7$ is retained so that both $X_3$ and $X_7$ are now in the model. The stepwise regression routine now examines which variable is the next candidate for addition, then examines whether any of the variables already in the model should now be dropped, and so on until no further X variables can either be added or deleted, at which point the search terminates.

NOTE: The maximum acceptable α limit for adding a variable is 0.10, and the minimum acceptable α limit for removing a variable is 0.15.
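Steps 1 to 4 can be sketched in Python with NumPy as follows. This is an illustration under stated assumptions, not the routine of any particular package: the entry and removal rules are expressed as hypothetical cut-offs on $|t^*|$ (`t_enter`, `t_remove`) rather than as α limits on P-values, which avoids needing the t distribution; the data are simulated; and the iteration cap is a safeguard added for the sketch.

```python
import numpy as np


def t_stats(X, y):
    """Least squares fit; returns t* = b_k / s(b_k) for every column of X."""
    n, p = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    b = xtx_inv @ X.T @ y
    resid = y - X @ b
    mse = resid @ resid / (n - p)
    return b / np.sqrt(mse * np.diag(xtx_inv))


def forward_stepwise(X, y, t_enter=2.0, t_remove=1.9):
    """Forward stepwise search over the columns of X (intercept always kept)."""
    n, K = X.shape
    ones = np.ones((n, 1))
    selected = []
    for _ in range(2 * K):  # cap on iterations, a safeguard for this sketch
        # Addition step: |t*| of each candidate when added to the current model.
        best, best_t = None, t_enter
        for k in range(K):
            if k in selected:
                continue
            Xs = np.hstack([ones, X[:, selected + [k]]])
            t_new = abs(t_stats(Xs, y)[-1])
            if t_new > best_t:
                best, best_t = k, t_new
        if best is None:
            break  # nothing can be added, so the search terminates
        selected.append(best)
        # Deletion step: test the variables other than the one just added.
        if len(selected) > 1:
            Xs = np.hstack([ones, X[:, selected]])
            t_old = np.abs(t_stats(Xs, y)[1:-1])
            weakest = int(np.argmin(t_old))
            if t_old[weakest] < t_remove:
                del selected[weakest]
    return selected


# Simulated pool of 4 candidate variables; only columns 0 and 2 affect y.
rng = np.random.default_rng(3)
n = 60
X = rng.normal(size=(n, 4))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 2] + rng.normal(scale=0.5, size=n)

chosen = forward_stepwise(X, y)
print("selected columns:", chosen)
```

Setting `t_remove` below `t_enter` mirrors the NOTE above, where the α limit for removal (0.15) is more lenient than the α limit for entry (0.10); this keeps a variable that has just qualified for entry from being dropped straight away.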

Example 2: The forward stepwise regression procedure for the surgical unit example.


8.6 Model Validation

The final step in the model-building process is the validation of the selected regression models. Model validation usually involves checking a candidate model against independent data.

Three basic ways of validating a regression model:

1. Collection of new data to check the model and its predictive ability.

2. Comparison of results with theoretical expectations, earlier empirical results and simulation results.

3. Holdout sample to check the model and its predictive ability.

Collection of New Data to Check Model

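A means of measuring the actual predictive capability of the selected regression model is to use this model to predict each case in the new data set and then to calculate the mean of the squared prediction errors, denoted by MSPR (mean squared prediction error):

$$MSPR = \frac{\sum_{i=1}^{n^*} (Y_i - \hat{Y}_i)^2}{n^*} \tag{8.17}$$

where $Y_i$ is the value of the response variable in the ith validation case, $\hat{Y}_i$ is the predicted value for the ith validation case based on the model-building data set, and $n^*$ is the number of cases in the validation data set.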

• If the mean squared prediction error MSPR is fairly close to MSE based on the regression

fit to the model-building data set, then the error mean square MSE for the selected

regression model is not seriously biased and gives an appropriate indication of the

predictive ability of the model.

• If the mean squared prediction error is much larger than MSE, one should rely on the mean

squared prediction error as an indicator of how well the selected regression model will

predict in the future.
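As a small worked illustration of the MSPR calculation and the comparison with MSE, consider the following Python sketch. The four validation responses, the predictions and the MSE value are invented; the function name is illustrative.

```python
def mspr(y_new, y_pred):
    """Mean squared prediction error over the n* validation cases."""
    n_star = len(y_new)
    return sum((y - yh) ** 2 for y, yh in zip(y_new, y_pred)) / n_star


# Invented validation data: observed responses and predictions from the
# model fitted to the model-building data set.
y_new = [10.0, 12.0, 9.0, 15.0]
y_pred = [11.0, 11.5, 9.5, 13.0]
mse_model_building = 1.2  # hypothetical MSE from the model-building fit

value = mspr(y_new, y_pred)
print(value, value / mse_model_building)
```

The squared errors are 1, 0.25, 0.25 and 4, so MSPR = 5.5 / 4 = 1.375, which is fairly close to the hypothetical MSE of 1.2. How close counts as "fairly close" is a judgment call that the notes leave to the analyst.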

Difficulty in Replicating a Study

• There may be difficulties in replicating an earlier study in identical fashion.

• Repetition of an observational study usually involves different conditions; the differences

being related to changes in setting and/or time.

• For example, a study relating consumer purchases of a product to special promotional incentives was repeated in another year when the business climate differed substantially from that during the initial study.

• If the conditions of setting in a replication study differ slightly or substantially and the regression results are still similar, this indicates that the regression results can be generalized to apply under substantially varying conditions.


Comparison with Theory, Empirical Evidence or Simulation Results

Comparison of regression coefficients and predictions with theoretical expectations, previous empirical results or simulation results should be made.

Data Splitting

• The preferred method to validate a regression model is through the collection of new data; often this is neither practical nor feasible.

• If the data set is large enough, an alternative is to split the data into two sets. The first set, called the model-building set or training sample, is used to develop the model. The second set, called the validation or prediction set, is used to evaluate the reasonableness and predictive ability of the selected model.

• This validation procedure is often called cross-validation.

• Data splitting in effect is an attempt to simulate replication of the study.

• The validation data set is used for validation in the same way as when new data are collected.

• The regression coefficients can be re-estimated for the selected model and then compared for consistency with the coefficients obtained from the model-building data set.

• When splitting the data, it is important that the model-building data set be sufficiently large so that a reliable model can be developed.

• Splits of the data can be made at random.

• A possible drawback of data splitting is that the variances of the estimated regression coefficients developed from the model-building data set will usually be larger than those that would have been obtained from the fit to the entire data set.

• If the model-building data set is reasonably large, these variances generally will not be that much larger than those for the entire data set.

• Once the model has been validated, it is customary practice to use the entire data set for estimating the final regression model.
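The data-splitting workflow in the list above can be sketched in Python with NumPy. Everything specific here is an assumption for illustration: the data are simulated, the 70/30 split is an arbitrary choice, and the model is taken as already selected (intercept plus two X variables).

```python
import numpy as np

# Simulated data set of 100 cases: intercept plus two X variables.
rng = np.random.default_rng(2)
n = 100
X = np.column_stack([np.ones(n), rng.normal(size=n), rng.normal(size=n)])
y = X @ np.array([1.0, 2.0, -1.0]) + rng.normal(scale=0.5, size=n)

# Random split into a model-building set (70 cases) and a validation set (30 cases).
idx = rng.permutation(n)
train, valid = idx[:70], idx[70:]

# Fit the selected model on the model-building set and compute its MSE.
b_train, *_ = np.linalg.lstsq(X[train], y[train], rcond=None)
p = X.shape[1]
mse_train = float(np.sum((y[train] - X[train] @ b_train) ** 2) / (len(train) - p))

# Mean squared prediction error on the validation cases.
mspr = float(np.mean((y[valid] - X[valid] @ b_train) ** 2))

# Re-estimate the same model on the validation set to check consistency.
b_valid, *_ = np.linalg.lstsq(X[valid], y[valid], rcond=None)

# After validation, refit on the entire data set for the final model.
b_all, *_ = np.linalg.lstsq(X, y, rcond=None)

print("MSE (model-building):", round(mse_train, 3), " MSPR:", round(mspr, 3))
print("coefficients, model-building:", np.round(b_train, 3))
print("coefficients, validation    :", np.round(b_valid, 3))
print("coefficients, entire data   :", np.round(b_all, 3))
```

If MSPR is close to the model-building MSE and the two sets of coefficients are similar in sign and magnitude, the split gives no reason to doubt the selected model, and the fit to the entire data set is reported as the final model.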


Example 3: In the surgical unit example, three models were favoured by the various model-selection criteria. The $SBC_p$ and $PRESS_p$ criteria favoured the four-predictor model

Regression Analysis | Chapter 8 | MTH5404| Mustafa, M. S (UPM) 18

You might also like

- Integer Optimization and its Computation in Emergency ManagementFrom EverandInteger Optimization and its Computation in Emergency ManagementNo ratings yet

- Variable Selection 8.1 The Model Building ProblemDocument18 pagesVariable Selection 8.1 The Model Building ProblemMon LuffyNo ratings yet

- Chapter No. 03 Experiments With A Single Factor - The Analysis of Variance (Presentation)Document81 pagesChapter No. 03 Experiments With A Single Factor - The Analysis of Variance (Presentation)Sahib Ullah MukhlisNo ratings yet

- Q. 1 How Is Mode Calculated? Also Discuss Its Merits and Demerits. AnsDocument13 pagesQ. 1 How Is Mode Calculated? Also Discuss Its Merits and Demerits. AnsGHULAM RABANINo ratings yet

- Estimation Strategies For The Regression Coefficient Parameter Matrix in Multivariate Multiple RegressionDocument20 pagesEstimation Strategies For The Regression Coefficient Parameter Matrix in Multivariate Multiple RegressionRonaldo SantosNo ratings yet

- TestDocument4 pagesTestamanjots01No ratings yet

- 1 Nonlinear RegressionDocument9 pages1 Nonlinear RegressioneerneeszstoNo ratings yet

- Balazsi-Matyas: The Estimation of Multi-Dimensional FixedEffects Panel Data ModelsDocument38 pagesBalazsi-Matyas: The Estimation of Multi-Dimensional FixedEffects Panel Data Modelsrapporter.netNo ratings yet

- Between-Study Heterogeneity: Doing Meta-Analysis in RDocument23 pagesBetween-Study Heterogeneity: Doing Meta-Analysis in RNira NusaibaNo ratings yet

- TN06 - Time Series Technical NoteDocument8 pagesTN06 - Time Series Technical NoteRIDDHI SHETTYNo ratings yet

- Mane-4040 Mechanical Systems Laboratory (MSL) Lab Report Cover SheetDocument13 pagesMane-4040 Mechanical Systems Laboratory (MSL) Lab Report Cover Sheetjack ryanNo ratings yet

- Estimation For Multivariate Linear Mixed ModelsDocument6 pagesEstimation For Multivariate Linear Mixed ModelsFir DausNo ratings yet

- Parameter Statistic Parameter Population Characteristic Statistic Sample CharacteristicDocument9 pagesParameter Statistic Parameter Population Characteristic Statistic Sample Characteristic30049fahmida alam mahiNo ratings yet

- Paper MS StatisticsDocument14 pagesPaper MS Statisticsaqp_peruNo ratings yet

- Partial Least Squares Regression A TutorialDocument17 pagesPartial Least Squares Regression A Tutorialazhang576100% (1)

- Estimation For Multivariate Linear Mixed ModelsDocument7 pagesEstimation For Multivariate Linear Mixed Modelsilkom12No ratings yet

- Development of Robust Design Under Contaminated and Non-Normal DataDocument21 pagesDevelopment of Robust Design Under Contaminated and Non-Normal DataVirojana TantibadaroNo ratings yet

- Press (1972)Document6 pagesPress (1972)cbisogninNo ratings yet

- Maruo Etal 2017Document15 pagesMaruo Etal 2017Andre Luiz GrionNo ratings yet

- Clay 2017Document13 pagesClay 2017everst gouveiaNo ratings yet

- 1-Nonlinear Regression Models in AgricultureDocument9 pages1-Nonlinear Regression Models in AgricultureJaka Pratama100% (1)

- Outros Métodos de InferenciasDocument13 pagesOutros Métodos de Inferenciasjoao lucio de souza júniorNo ratings yet

- Statistics 1Document97 pagesStatistics 1BJ TiewNo ratings yet

- Regression Techniques for Dependence AnalysisDocument13 pagesRegression Techniques for Dependence AnalysisQueenie Marie Obial AlasNo ratings yet

- Unit 3 Experimental Designs: ObjectivesDocument14 pagesUnit 3 Experimental Designs: ObjectivesDep EnNo ratings yet

- Variable Selection in Multivariable Regression Using SAS/IMLDocument20 pagesVariable Selection in Multivariable Regression Using SAS/IMLbinebow517No ratings yet

- Simulation OptimizationDocument6 pagesSimulation OptimizationAndres ZuñigaNo ratings yet

- 05 Diagnostic Test of CLRM 2Document39 pages05 Diagnostic Test of CLRM 2Inge AngeliaNo ratings yet

- Answers Review Questions EconometricsDocument59 pagesAnswers Review Questions EconometricsZX Lee84% (25)

- CLRM assumptions near multicollinearityDocument3 pagesCLRM assumptions near multicollinearityAna-Maria BadeaNo ratings yet

- Design of Experiments For ScreeningDocument33 pagesDesign of Experiments For ScreeningWaleed AwanNo ratings yet

- One-Way Analysis of Covariance-ANCOVADocument55 pagesOne-Way Analysis of Covariance-ANCOVAswetharajinikanth01No ratings yet

- Use of Sensitivity Analysis To Assess Reliability of Mathematical ModelsDocument13 pagesUse of Sensitivity Analysis To Assess Reliability of Mathematical ModelsaminNo ratings yet

- Imbens Wooldridge NotesDocument473 pagesImbens Wooldridge NotesAlvaro Andrés PerdomoNo ratings yet

- The Method of Paired ComparisonsDocument23 pagesThe Method of Paired Comparisons김정민No ratings yet

- Lesson Plans in Urdu SubjectDocument10 pagesLesson Plans in Urdu SubjectNoor Ul AinNo ratings yet

- BS Unit 2Document37 pagesBS Unit 2Sneha DavisNo ratings yet

- Econometrics OraDocument30 pagesEconometrics OraCoca SevenNo ratings yet

- PLS Regression TutorialDocument17 pagesPLS Regression TutorialElsa GeorgeNo ratings yet

- Design of ExperimentsDocument5 pagesDesign of ExperimentsPhelelaniNo ratings yet

- Checking Model AssumptionsDocument4 pagesChecking Model AssumptionssmyczNo ratings yet

- XI Lecture 2020Document47 pagesXI Lecture 2020Ahmed HosneyNo ratings yet

- Chapter-4 StatDocument34 pagesChapter-4 StatJanelle Dela CruzNo ratings yet

- Application of Statistical Concepts in The Determination of Weight Variation in SamplesDocument6 pagesApplication of Statistical Concepts in The Determination of Weight Variation in SamplesRaffi IsahNo ratings yet

- Chapter 1Document22 pagesChapter 1Adilhunjra HunjraNo ratings yet

- Chen10011 NotesDocument58 pagesChen10011 NotesTalha TanweerNo ratings yet

- Aea Cookbook Econometrics Module 1Document117 pagesAea Cookbook Econometrics Module 1shadayenpNo ratings yet

- Solving Quadratic Equations using Genetic AlgorithmDocument4 pagesSolving Quadratic Equations using Genetic AlgorithmsudhialamandaNo ratings yet

- Mathematical ModelingDocument33 pagesMathematical ModelingIsma OllshopNo ratings yet

- Forecasting Cable Subscriber Numbers with Multiple RegressionDocument100 pagesForecasting Cable Subscriber Numbers with Multiple RegressionNilton de SousaNo ratings yet

- 125.785 Module 2.2Document95 pages125.785 Module 2.2Abhishek P BenjaminNo ratings yet

- EXPERIMENT 1-Error and Data Analysis: (I) Significant FiguresDocument13 pagesEXPERIMENT 1-Error and Data Analysis: (I) Significant FiguresKenya LevyNo ratings yet

- Integral UtilityDocument35 pagesIntegral UtilityPino BacadaNo ratings yet

- Chapter 1. Introduction and Review of Univariate General Linear ModelsDocument25 pagesChapter 1. Introduction and Review of Univariate General Linear Modelsemjay emjayNo ratings yet

- LECTURE Notes On Design of ExperimentsDocument31 pagesLECTURE Notes On Design of Experimentsvignanaraj88% (8)

- Optimization Using The Gradient and Simplex MethodDocument8 pagesOptimization Using The Gradient and Simplex Methodion firdausNo ratings yet

- And Estimation Sampling Distributions: Learning OutcomesDocument12 pagesAnd Estimation Sampling Distributions: Learning OutcomesDaniel SolhNo ratings yet

- Statistical Inference in Financial and Insurance Mathematics with RFrom EverandStatistical Inference in Financial and Insurance Mathematics with RNo ratings yet

- Why Do We Study Physics - Socratic PDFDocument1 pageWhy Do We Study Physics - Socratic PDFMon LuffyNo ratings yet
