
Moravec's paradox - Wikipedia

Moravec's paradox

Moravec's paradox is the observation by artificial intelligence and robotics researchers that, contrary to traditional assumptions, reasoning (which is high-level in humans) requires very little computation, but sensorimotor skills (comparatively low-level in humans) require enormous computational resources. The principle was articulated by Hans Moravec, Rodney Brooks, Marvin Minsky and others in the 1980s. As Moravec writes, "it is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility".[1]

Similarly, Minsky emphasized that the most difficult human skills to reverse engineer are those that are unconscious. "In general, we're least aware of what our minds do best", he wrote, and added "we're more aware of simple processes that don't work well than of complex ones that work flawlessly".[2]

Contents

The biological basis of human skills

Historical influence on artificial intelligence

Reception

See also

Notes

References

Bibliography

External links

The biological basis of human skills

One possible explanation of the paradox, offered by Moravec, is based on evolution. All human skills are implemented biologically, using machinery designed by the process of natural selection. In the course of their evolution, natural selection has tended to preserve design improvements and optimizations. The older a skill is, the more time natural selection has had to improve the design. Abstract thought developed only very recently, and consequently, we should not expect its implementation to be particularly efficient.

As Moravec writes:

Encoded in the large, highly evolved sensory and motor portions of the human brain is a billion years of experience about the nature of the world and how to survive in it. The deliberate process we call reasoning is, I believe, the thinnest veneer of human thought, effective only because it is supported by this much older and much more powerful, though usually unconscious, sensorimotor knowledge. We are all prodigious olympians in perceptual and motor areas, so good that we make the difficult look easy. Abstract thought, though, is a new trick, perhaps less than 100 thousand years old. We have not yet mastered it. It is not all that intrinsically difficult; it just seems so when we do it.[3]

A compact way to express this argument would be:

- We should expect the difficulty of reverse-engineering any human skill to be roughly proportional to the amount of time that skill has been evolving in animals.
- The oldest human skills are largely unconscious and so appear to us to be effortless.
- Therefore, we should expect skills that appear effortless to be difficult to reverse-engineer, but skills that require effort may not necessarily be difficult to engineer at all.

Some examples of skills that have been evolving for millions of years: recognizing a face, moving around in space, judging people's motivations, catching a ball, recognizing a voice, setting appropriate goals, paying attention to things that are interesting; anything to do with perception, attention, visualization, motor skills, social skills and so on.

Some examples of skills that have appeared more recently: mathematics, engineering, human games, logic and scientific reasoning. These are hard for us because they are not what our bodies and brains were primarily evolved to do. These are skills and techniques that were acquired recently, in historical time, and have had at most a few thousand years to be refined, mostly by cultural evolution.[note 1]

Historical influence on artificial intelligence

In the early days of artificial intelligence research, leading researchers often predicted that they would be able to create thinking machines in just a few decades (see history of artificial intelligence). Their optimism stemmed in part from the fact that they had been successful at writing programs that used logic, solved algebra and geometry problems and played games like checkers and chess. Logic and algebra are difficult for people and are considered a sign of intelligence. Many prominent researchers[4] assumed that, having (almost) solved the "hard" problems, the "easy" problems of vision and commonsense reasoning would soon fall into place. They were wrong, and one reason is that these problems are not easy at all, but incredibly difficult. The fact that they had solved problems like logic and algebra was irrelevant, because these problems are extremely easy for machines to solve.[note 2]

Rodney Brooks explains that, according to early AI research, intelligence was "best characterized as the things that highly educated male scientists found challenging", such as chess, symbolic integration, proving mathematical theorems and solving complicated word algebra problems. "The things that children of four or five years could do effortlessly, such as visually distinguishing between a coffee cup and a chair, or walking around on two legs, or finding their way from their bedroom to the living room were not thought of as activities requiring intelligence."[5]

This would lead Brooks to pursue a new direction in artificial intelligence and robotics research. He decided to build intelligent machines that had "No cognition. Just sensing and action. That is all I would build and completely leave out what traditionally was thought of as the intelligence of artificial intelligence."[5] This new direction, which he called "Nouvelle AI", was highly influential on robotics research and AI.[6][7]

Reception


Linguist and cognitive scientist Steven Pinker considers this the main lesson uncovered by AI researchers. In his 1994 book The Language Instinct, he wrote:

The main lesson of thirty-five years of AI research is that the hard problems are easy and the easy problems are hard. The mental abilities of a four-year-old that we take for granted - recognizing a face, lifting a pencil, walking across a room, answering a question - in fact solve some of the hardest engineering problems ever conceived... As the new generation of intelligent devices appears, it will be the stock analysts and petrochemical engineers and parole board members who are in danger of being replaced by machines. The gardeners, receptionists, and cooks are secure in their jobs for decades to come.[8]

See also

- AI effect
- Embodied cognition
- History of artificial intelligence
- Subsumption architecture

Notes

1. Even given that cultural evolution is faster than genetic evolution, the difference in development time between these two kinds of skills is five or six orders of magnitude, and (Moravec would argue) there hasn't been nearly enough time for us to have "mastered" the new skills.
2. These are not the only reasons that their predictions did not come true: see the problems.

References

1. Moravec 1988, p. 15.
2. Minsky 1986, p. 29.
3. Moravec 1988, pp. 15-16.
4. Zador, Anthony (2019-08-21). "A critique of pure learning and what artificial neural networks can learn from animal brains" (https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6704116). Nature Communications. 10 (1): 3770. Bibcode:2019NatCo..10.3770Z (https://ui.adsabs.harvard.edu/abs/2019NatCo..10.3770Z). doi:10.1038/s41467-019-11786-6 (https://doi.org/10.1038%2Fs41467-019-11786-6). PMC 6704116 (https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6704116). PMID 31434893 (https://pubmed.ncbi.nlm.nih.gov/31434893). "Herbert Simon, a pioneer of artificial intelligence (AI), famously predicted in 1965 that "machines will be capable, within twenty years, of doing any work a man can do" - to achieve general AI."
5. Brooks (2002), quoted in McCorduck (2004, p. 456).
6. McCorduck 2004, p. 456.
7. Brooks 1986.
8. Pinker 2007, pp. 190-91.

Bibliography

- Brooks, Rodney (1986), Intelligence Without Representation (http://people.csail.mit.edu/brooks/papers/representation.ps.Z), MIT Artificial Intelligence Laboratory
- Brooks, Rodney (2002), Flesh and Machines, Pantheon Books
- Minsky, Marvin (1986), The Society of Mind, Simon and Schuster, p. 29
- Moravec, Hans (1988), Mind Children, Harvard University Press
- McCorduck, Pamela (2004), Machines Who Think (http://www.pamelamc.com/html/machines_who_think.html) (2nd ed.), Natick, MA: A. K. Peters, Ltd., ISBN 1-56881-205-1, p. 456.
- Nilsson, Nils (1998). Artificial Intelligence: A New Synthesis (https://archive.org/details/artificialintell0000nils). Morgan Kaufmann. p. 7 (https://archive.org/details/artificialintell0000nils/page/7). ISBN 978-1-55860-467-4
- Pinker, Steven (September 4, 2007) [1994], The Language Instinct, Perennial Modern Classics, Harper, ISBN 978-0-06-133646-1

External links

- "explanation" (https://www.explainxkcd.com/wiki/index.php/1425:_Tasks) of the XKCD comic (https://xkcd.com/1425/) about this "paradox"

Retrieved from "https://en.wikipedia.org/w/index.php?title=Moravec%27s_paradox&oldid=947936122"

This page was last edited on 29 March 2020, at 08:04 (UTC).

Text is available under the Creative Commons Attribution-ShareAlike License; additional terms may apply. By using this site, you agree to the Terms of Use and Privacy Policy. Wikipedia® is a registered trademark of the Wikimedia Foundation, Inc., a non-profit organization.

hitpsson.wikipodta orghwkiMoravect27s_paradox 46

You might also like

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5807)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1091)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (842)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (590)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (897)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (345)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (401)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- VESC ProjectDocument5 pagesVESC ProjectNicholas FeatherstonNo ratings yet

- Why Do Your Wings Have Dihedral - BoldmethodDocument9 pagesWhy Do Your Wings Have Dihedral - BoldmethodNicholas FeatherstonNo ratings yet

- Lect 32Document16 pagesLect 32Nicholas FeatherstonNo ratings yet

- Grillage WestDocument56 pagesGrillage WestNicholas FeatherstonNo ratings yet

- Irc Gov in SP 071 2018Document24 pagesIrc Gov in SP 071 2018Nicholas FeatherstonNo ratings yet

- HyerPeterson Mines 0052N 11781Document147 pagesHyerPeterson Mines 0052N 11781Nicholas FeatherstonNo ratings yet

- Glue Ear - Symptoms, Causes, Treatment, and PreventionDocument11 pagesGlue Ear - Symptoms, Causes, Treatment, and PreventionNicholas FeatherstonNo ratings yet

- Steel-Concrete Composite Bridges: Design, Life Cycle Assessment, Maintenance, and Decision-MakingDocument14 pagesSteel-Concrete Composite Bridges: Design, Life Cycle Assessment, Maintenance, and Decision-MakingNicholas FeatherstonNo ratings yet

- Electrohemical Study of Corrosion Rate of Steel in Soil Barbalat2012Document8 pagesElectrohemical Study of Corrosion Rate of Steel in Soil Barbalat2012Nicholas FeatherstonNo ratings yet

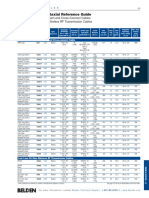

- RG Coaxial and Triaxial Reference GuideDocument13 pagesRG Coaxial and Triaxial Reference GuideNicholas FeatherstonNo ratings yet

- Sukhoi Su-35Document30 pagesSukhoi Su-35Nicholas FeatherstonNo ratings yet

- Aluminum Alloys For AerospaceDocument2 pagesAluminum Alloys For AerospaceNicholas Featherston100% (1)

- Thesis Ault ReportDocument167 pagesThesis Ault ReportNicholas FeatherstonNo ratings yet

- Jesus Nut - WikipediaDocument2 pagesJesus Nut - WikipediaNicholas FeatherstonNo ratings yet

- Dihedral (Aeronautics) - WikipediaDocument7 pagesDihedral (Aeronautics) - WikipediaNicholas FeatherstonNo ratings yet

- Excel Intermediate ManualDocument35 pagesExcel Intermediate ManualNicholas FeatherstonNo ratings yet