
International Journal of Computer Sciences and Engineering Open Access

Review Paper Vol.-6, Issue-12, Dec 2018 E-ISSN: 2347-2693

Analysis of Naïve Bayes Classification for Diabetes Mellitus

S. Sankaranarayanan1*, T. Pramananda Perumal2

1 Research and Development Centre, Bharathiar University, Coimbatore-46, India

2 (Retd), Presidency College (Autonomous), Chennai-5, India

*Corresponding author: profsankaranarayanan1970@gmail.com, Tel.: +91-7904051822 / 9443651545

Available online at: www.ijcseonline.org

Accepted: 21/Dec/2018, Published: 31/Dec/2018

Abstract- Data mining plays a major role in the decision-making process of applications in health care, artificial intelligence, the military and weather forecasting. In particular, classification is used to implement real-time Clinical Decision Support Systems (CDSS) in the health care industry. A CDSS can thus be viewed as predicting decisions from the supervised learning instances in a training dataset. A distinct set of algorithms and techniques is in use for classification through supervised learning, and these are termed classification algorithms. Among them, Naïve Bayes is the most familiar; it uses historical data as supervised learning instances. This paper surveys the application of Naïve Bayes classification in health care, with specific reference to analyzing Diabetes Mellitus. It also focuses on the implementation of this algorithm in the diabetes domain to build an expert application.

Keywords— Classification, Text Classification, Naïve Bayes, Semantic Analysis, Health Care

I. INTRODUCTION

In machine learning, classification is mainly used for decision making in critical situations and when predictive observations are needed from sample data. Supervised learning instances serve as historical data, or a training dataset, for predicting decisions in applications that do not warrant human intervention. Classification deals with both text-based and semantic content for decision-making analysis. Text classification is obtained through data processing based on Natural Language Processing (NLP). The text classification process consists of five main phases: data preprocessing, feature extraction, feature selection, classification and performance analysis.

The data preprocessing step is performed as warranted by the available content and the type of data, so that the text or sentence is made nominal. Here, NLP is used for the preprocessing phase. The whole document or sentence is first tokenized. The tokens are then processed with tasks such as stop-word identification and word sense disambiguation. Finally, stemming is applied to obtain the processed data that forms the input to the next phase. After preprocessing, feature extraction is used to obtain the key terms for the analysis. The most essential of all the steps is feature selection, which selects the important features or words in the whole document or sentence based on the significance of those keywords to the classification task. This is done even though a range of effective feature selection methods is available.

The output of these initial phases is then directed to the final classification phase, where classification algorithms, specifically machine learning classifiers, are used. The last step measures the effectiveness and performance of the classification task, based on the selected classifier and the feature selection method used.

Section I introduces data mining in the context of classification, for both text data and semantic inputs. Because the machine learning classifier selected for the classification process dictates the success rate, models implementing various types of classifiers for specific domains are introduced in Section II. Section III gives special emphasis to the Naïve Bayesian algorithm, which is important to classification for its simplicity and for its performance in almost all application domains, including Clinical Decision Support Systems. It also sets out the background of Naïve Bayesian classification. Section IV surveys the application of the Naïve Bayesian process to various medical sub-domains. Since a comparative survey of classification algorithms for the medical domain is proposed, no numerical inputs have been taken and hence no results have been obtained or discussed.

II. BACKGROUND

There have been many classifiers, namely Naïve Bayes [1], Rocchio's algorithm [2], Support Vector Machines [3], K-Nearest Neighbor [4], Maximum Entropy classifier [5], etc.

© 2018, IJCSE All Rights Reserved 520


The Naïve Bayes classifier is a probabilistic graphical model, also called the Bayesian classifier or Bayesian classification algorithm, which is based on Bayes' Theorem [6]. Bayesian classification works on the principle that the class predicts the feature values of the objects in that class. If the agent knows the class, finding the feature values is simpler. If instead the class itself is unknown and the only information available is the values of some of the features, then the corresponding class can be predicted by using the Bayes rule and the probabilistic model of the observed features.

A learning agent can therefore build a probabilistic model of the available features and use it to predict the classification. Naïve Bayes classification can also be described in terms of latent variables, i.e. probabilistic variables that are not observed. The class is then a latent variable that is probabilistically related to the observed features, and classification becomes inference in the probabilistic model. The simplest of all the associated classifiers is the Naïve Bayes classifier. It rests entirely on the independence assumption: the input features are taken to be conditionally independent of one another given the class. As long as this assumption holds, the Naïve Bayes classifier performs well, and its accuracy depends on how closely the features of a class satisfy the assumption. Compared with other algorithms, it is very simple and easy to implement. The independence assumption does not always hold in real-world scenarios, however. The Naïve Bayes classifier is also referred to as a linear classifier.

This paper concentrates on the classification of medical data for the prediction of diabetes mellitus from supervised learning over historical patient data patterns. Diabetes mellitus is a disease with a genetic predisposition, so its likelihood can be predicted from genetic history. The Clinical Decision Support System is proposed with data mining techniques such as clustering and classification. In this regard, this survey identifies the importance of classification algorithms and makes a critical comparison of various algorithms, including Naïve Bayes classification, for this medical application. The major contribution of this survey is to set out the preliminaries of classification, its techniques and the prediction of diabetes mellitus. It mainly concentrates on illustrating the methodology of the recommended classification algorithm, which offers high accuracy and is easy to deploy in a medical environment.

III. PRELIMINARIES

A. Classification and Prediction

Data mining is the knowledge discovery technique used to analyze data and predict class labels. Also called knowledge discovery from databases (KDD), it is a fully or semi-automated strategy for extracting patterns from the training dataset. Data mining techniques predict behavior patterns or future trends and are used to make knowledge-driven, proactive business decisions that improve business strategies for higher productivity. The data mining techniques are clustering, classification, generalization and prediction.

Classification techniques are mainly used for assigning data to one of an assortment of classes. Classification is widely used to classify the tuples of an industry dataset and identify their type based on similarities in their properties. Classification involves two important processes: model construction (building the classifier model) and model usage. The constructed classifier model extracts the class features.

Prediction is the process of estimating future trends based on historical data. It is widely used for forecasting critical weather situations, predicting future values in stock trading, and identifying the possibility of disease from historical patient medical data. Priyanka et al. have devised a smart health care system using data mining strategies [7].

In medical health care systems, diabetes mellitus can be identified and predicted from the patient dataset.

B. Naïve Bayes Classification Algorithm

Naïve Bayes classification is among the most widely applied classification algorithms because it scales with the dimensionality of the input and of the training dataset. Moreover, it is simple, yet it can perform comparably to more sophisticated classification methods. It expresses the probability of each input attribute conditioned on the class state. It derives from the Bayes rule to create models with predictive capabilities.

1. Naïve Bayes Rule:

A conditional probability is the likelihood of some conclusion C given some evidence or observation E, where a dependence relationship exists between C and E. This probability is denoted P(C|E), where

$$P(C \mid E) = \frac{P(E \mid C)\,P(C)}{P(E)}$$

2. Naïve Bayesian Classification Algorithm:

The Naïve Bayes classifier, or linear Bayesian classifier, works as follows:

Step 1: Let D be a training set of tuples and their associated class labels Ca and Cp. As usual, each record is represented by an n-dimensional attribute vector, X = (x1, x2, …, xn-1, xn), representing n measurements made on the tuple from n attributes, A1 to An.

Step 2: Suppose that there are m classes for prediction, C1, C2, …, Cm-1, Cm. Given a record X, the classifier predicts that X belongs to the class having the highest posterior probability conditioned on X. That is, the Naïve Bayes rule predicts that the tuple X belongs to the class Ci if and only if

$$P(C_i \mid X) > P(C_j \mid X) \quad \text{for } 1 \le j \le m,\ j \ne i$$

Thus we maximize P(Ci|X). The class Ci for which P(Ci|X) is maximum is called the maximum a posteriori hypothesis. By Bayes' Theorem,

$$P(C_i \mid X) = \frac{P(X \mid C_i)\,P(C_i)}{P(X)}$$

Step 3: As P(X) is constant for all classes, only P(X|Ci)P(Ci) need be maximized. If the class prior probabilities are not known, it is often assumed that the classes are equally likely, that is, P(C1) = P(C2) = … = P(Cm-1) = P(Cm), and we would therefore maximize P(X|Ci). Otherwise, we maximize P(X|Ci)P(Ci). Note that the class prior probabilities may be estimated by P(Ci) = |Ci,D|/|D|, where |Ci,D| is the number of training tuples of class Ci in dataset D.

Step 4: Given datasets with many attributes, it would be extremely computationally expensive to compute P(X|Ci). To reduce the computation in evaluating P(X|Ci), the naïve assumption of class conditional independence is made. This presumes that the values of the attributes are conditionally independent of one another, given the class label of the tuple. Thus,

$$P(X \mid C_i) = \prod_{k=1}^{n} P(x_k \mid C_i) = P(x_1 \mid C_i) \times P(x_2 \mid C_i) \times \cdots \times P(x_n \mid C_i)$$

We can estimate the probabilities P(x1|Ci), P(x2|Ci), …, P(xn|Ci) from the database tuples. For instance, to find P(X|Ci), consider that if Ak is categorical, then P(xk|Ci) is the number of tuples of class Ci in dataset D having the value xk for Ak, divided by |Ci,D|, the number of tuples of class Ci in D.

Step 5: In order to find the class label of tuple X, P(X|Ci)P(Ci) is evaluated for each class Ci. The classifier finds that the class label of tuple X is the class Ci if and only if

$$P(X \mid C_i)\,P(C_i) > P(X \mid C_j)\,P(C_j) \quad \text{for } 1 \le j \le m,\ j \ne i$$

The predicted class label for the tuple is the class Ci for which P(X|Ci)P(Ci) is the maximum.

If required for accuracy, smoothing techniques for Naïve Bayes are also used. Smoothing builds an approximating function that captures the important patterns in the data while leaving out noise and other fine-scale structure.

C. Methodology Illustration of Naïve Bayes classifier

Thus medical disease prediction can be based on the Naïve Bayes classification algorithm, applied to the test patient data using the patient medical data history. The illustration of the CDSS using Naïve Bayes classification is as follows:

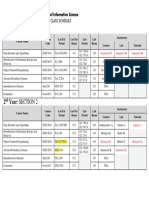

Fig.1. Naive Bayes Classification Methodology
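
Steps 1 to 5 of Section III.B can be sketched in Python for categorical attributes as shown here. This is a minimal illustration under stated assumptions, not the implementation of any surveyed system: the function names (`train`, `likelihood`, `scores`, `predict`), the `alpha` parameter for additive (Laplace) smoothing, and the toy patient records with their attribute values are all hypothetical and chosen only for the example.

```python
from collections import Counter


def train(rows, labels, alpha=0.0):
    """Collect the counts needed for P(Ci) and P(xk|Ci) (Steps 1 and 3)."""
    n_attrs = len(rows[0])
    class_counts = Counter(labels)  # |Ci,D| for each class Ci
    # Class priors: P(Ci) = |Ci,D| / |D|
    priors = {c: n / len(labels) for c, n in class_counts.items()}
    # value_counts[c][k][v] = number of class-c tuples with value v for attribute Ak
    value_counts = {c: [Counter() for _ in range(n_attrs)] for c in class_counts}
    domains = [set() for _ in range(n_attrs)]  # distinct values seen per attribute
    for row, c in zip(rows, labels):
        for k, v in enumerate(row):
            value_counts[c][k][v] += 1
            domains[k].add(v)
    return {"priors": priors, "class_counts": class_counts,
            "value_counts": value_counts, "domains": domains, "alpha": alpha}


def likelihood(model, c, k, v):
    """P(xk|Ci) for a categorical attribute (Step 4).

    With alpha == 0 this is the plain relative frequency from the text;
    alpha > 0 applies additive (Laplace) smoothing, an assumption of this sketch.
    """
    alpha = model["alpha"]
    count = model["value_counts"][c][k][v]
    total = model["class_counts"][c]
    return (count + alpha) / (total + alpha * len(model["domains"][k]))


def scores(model, x):
    """P(X|Ci) * P(Ci) for every class Ci, using class conditional independence."""
    result = {}
    for c, prior in model["priors"].items():
        p = prior
        for k, v in enumerate(x):
            p *= likelihood(model, c, k, v)
        result[c] = p
    return result


def predict(model, x):
    """Return the class that maximizes P(X|Ci) * P(Ci) (Step 5)."""
    s = scores(model, x)
    return max(s, key=s.get)


# Hypothetical toy records: (glucose level, family history of diabetes)
rows = [("high", "yes"), ("high", "yes"), ("high", "no"), ("normal", "yes"),
        ("normal", "no"), ("normal", "no"), ("normal", "yes"), ("high", "no")]
labels = ["diabetic"] * 4 + ["non-diabetic"] * 4

model = train(rows, labels)                # unsmoothed relative frequencies
smoothed = train(rows, labels, alpha=1.0)  # Laplace-smoothed variant

patient = ("high", "yes")
s = scores(model, patient)
# Dividing by P(X) = sum of the scores recovers the posterior P(Ci|X)
posterior = {c: p / sum(s.values()) for c, p in s.items()}
print(predict(model, patient), posterior)
```

Setting `alpha=0` reproduces the relative-frequency estimate of Step 4. A positive `alpha` keeps an attribute value that never occurs in some class from forcing the whole product P(X|Ci) to zero, which is one common reason smoothing is applied.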

IV. NAÏVE BAYES AND MEDICAL DOMAIN

Borkar and Deshmukh [8] proposed using a Naïve Bayes classifier for the detection of swine flu. The process starts by finding the probability of each swine flu attribute against every output class. The probabilities of the attributes are then multiplied. The maximum of the resulting probabilities is selected, and the attributes are assigned to the class variable with that maximum value. Given its promising results, the proposed scheme can be used to investigate swine flu in patients further using information technology.

Patil [9] worked on diagnosing whether a patient, given information such as age, sex, blood pressure, blood sugar, chest pain and ECG reports, is likely to develop heart disease later in life. The experiments take the parameters of the medical tests as inputs. The proposal is effective enough to be used by nurses and medical students for training purposes. The data mining technique used is Naïve Bayes classification, for the development of a decision support system called the Heart Disease Prediction System (HDPS). The performance of the proposal is further improved using a smoothing operation. HDPS is implemented as a MATLAB application able to detect and extract hidden knowledge related to heart disease from a historical heart disease database.

Kharya et al. [10] proposed detecting a patient's chances of developing breast cancer later in life. Attention to breast cancer is necessary, as it is becoming the second leading cause of death among women. A Graphical User Interface (GUI) is designed for entering the patient's record for the prediction. The records are mined through the data repository. The Naïve Bayes classifier, being simple and efficient, is chosen for the prediction. The results obtained by the Naïve Bayes classifier are accurate, require low computational effort and are fast. The proposal is implemented in Java, and training is done using datasets from the UCI Machine Learning Repository [11]. Another advantage of the proposed system is that it expands according to the dataset used.

Stephanie J. Hickey [12] proposed using a Naïve Bayes classifier for the public health domain, combined with greedy feature selection. The input was a public health dataset, and the objective was to identify one or several attributes that best predict a selected target attribute without searching the input space exhaustively. The proposal achieved its goal with an increase in classification accuracy. The target attributes were related to diagnosis or procedure codes.

Ambica et al. [13] proposed using Naïve Bayes for an efficient decision support system for diabetes. The proposed classification system is divided into two steps. The first step analyzes how optimal the dataset is and accordingly extracts the optimal feature set from the training data. The second step forms the new dataset as the optimal training dataset, and the proposed classification scheme is then applied to the optimal feature set. Mismatched and unavailable features in the training and testing datasets are ignored, and the remaining dataset attributes are used to calculate the posterior probability. The proposed procedure therefore eliminates unavailable features and performs document-wise filtering.

V. CONCLUSION

Classification algorithms can be selected based on their usage and working principle. However, the implementation of the algorithm must be simple while still supporting analysis over a large number of input parameters. Here, the Naïve Bayes classification algorithm has been explained in detail, with its methodology illustrated and its algorithmic procedure set out. CDSS implementations using classification on various domains of medical data were surveyed. A similar approach applies to medical data mining of diabetes mellitus data. In future, the health care system can be implemented for medical data with enhancements through clustering and prediction.

REFERENCES

[1] D. Lewis, "Naive Bayes at Forty: The Independence Assumption in Information Retrieval", Proceedings of the 10th European Conference on Machine Learning (ECML-98), 1998.

[2] Christopher D. Manning, Prabhakar Raghavan and Hinrich Schütze, "An Introduction to Information Retrieval", Cambridge University Press, page 181, 2009.

[3] A. Basu, C. Waters and M. Shepherd, "Support Vector Machines for Text Categorization", Proceedings of the 36th Annual Hawaii International Conference on System Sciences, 2003.

[4] Gongde Guo, Hui Wang, David Bell, Yaxin Bi and Kieran Greer, "KNN Model-Based Approach in Classification", Proceedings of the ODBASE, pp. 986-996, 2003.

[5] Kamal Nigam, John Lafferty and Andrew McCallum, "Using Maximum Entropy for Text Classification", IJCAI-99 Workshop on Machine Learning for Information Filtering, pp. 61-67, 1999.

[6] A. Stuart and K. Ord, "Kendall's Advanced Theory of Statistics: Volume I: Distribution Theory", Edward Arnold, §8.7, 1994.

[7] Priyanka, Sana Khan and Tulsi Kaur, "Investigation on Smart Health Care using Data Mining Methods", International Journal of Scientific Research in Computer Sciences and Engineering (IJSRCSE), Vol. 4, Issue 2, pp. 31-36, ISSN 2320-088X, 2016.

[8] Ankita R. Borkar and Prashant R. Deshmukh, "Naïve Bayes Classifier for Prediction of Swine Flu Disease", International Journal of Advanced Research in Computer Science and Software Engineering, Vol. 5, Issue 4, pp. 120-123, ISSN 2277 128X, 2015.

[9] Rupali R. Patil, "Heart Disease Prediction System using Naive Bayes and Jelinek-Mercer smoothing", International Journal of Advanced Research in Computer and Communication Engineering, Vol. 3, Issue 5, pp. 6787-6792, ISSN 2278-1021, 2014.

[10] Shweta Kharya, Shika Agrawal and Sunita Soni, "Naive Bayes Classifiers: A Probabilistic Detection Model for Breast Cancer", International Journal of Computer Applications (0975-8887), Vol. 92, No. 10, pp. 26-31, 2014.

[11] UCI Machine Learning Repository, http://ics.uci.edu/~mlearn/MLRepository.html.

[12] Stephanie J. Hickey, "Naive Bayes Classification of Public Health Data with Greedy Feature Selection", Communications of the IIMA, Vol. 13, Issue 2, Article 7, pp. 87-98, 2013.

[13] A. Ambica, Satyanarayana Gandi and Amarendra Kothalanka, "An Efficient Expert System For Diabetes By Naïve Bayesian Classifier", International Journal of Engineering Trends and Technology (IJETT), Vol. 4, Issue 10, pp. 4634-4639, ISSN 2231-5381, 2013.

[14] Pablo Gamallo, Marcos Garcia and Santiago Fernández-Lanza, "TASS: A Naive-Bayes strategy for sentiment analysis on Spanish tweets", Workshop on Sentiment Analysis at SEPLN (TASS2013), pp. 126-132, 2013.


Authors Profile

Mr. S. Sankaranarayanan received his Master's degree in Computer Science from Bharathidasan University, Tiruchirappalli, in 1991 and his Master of Philosophy in Computer Science from Manonmaniam Sundaranar University, Tirunelveli, in 2003. He is currently pursuing his Ph.D. at Bharathiar University, Coimbatore, and has been working as Associate Professor in the Department of Computer Science, Government Arts College, Kumbakonam, since 1999. He has published more than 10 research papers in reputed international journals and conferences, including IEEE, and they are also available online. His main research work focuses on Medical Data Mining, Natural Language Processing and Cloud Computing systems. He has more than 20 years of teaching experience and 5 years of research experience.

Mr. T. Pramanandaperumal received his Master of Science in Physics from Madurai Kamaraj University, India, in 1981 and his Master of Philosophy in Computer Science from the University of Madras in 1986. He completed his Ph.D. at the University of Madras in 2008 and worked as Associate Professor and Head of the Department of Computer Science in various Government Colleges of Tamilnadu. He also served as Principal in four Government Colleges of Tamilnadu, including the prestigious Presidency College of Chennai. He was a Member of the Board of Studies in various universities and colleges and, during his period of service, once served as Chairman of the Board of Studies in Computer Science at the University of Madras. He has published more than 20 research papers in reputed international journals, including Elsevier, and conferences, including IEEE, and they are also available online. His main research work focuses on Computer Simulation Modelling, Computer Graphics, Image Processing and Data Mining. He has more than 35 years of teaching experience and 18 years of research experience.

© 2018, IJCSE All Rights Reserved 524

You might also like

- Performance Analysis of Data Mining Classification Method Using Naïve Bayes Algorithm To Predict Student Graduation TimelinessDocument4 pagesPerformance Analysis of Data Mining Classification Method Using Naïve Bayes Algorithm To Predict Student Graduation TimelinessInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- 5 Unit NotesDocument166 pages5 Unit NotesYokesh R100% (1)

- Data Mining and Visualization Question BankDocument11 pagesData Mining and Visualization Question Bankghost100% (1)

- A Study On Prediction Techniques of Data Mining in Educational DomainDocument4 pagesA Study On Prediction Techniques of Data Mining in Educational DomainijcnesNo ratings yet

- DP-203T00 Microsoft Azure Data Engineering-02Document23 pagesDP-203T00 Microsoft Azure Data Engineering-02Javier MadrigalNo ratings yet

- Chowdhury 2020Document6 pagesChowdhury 2020Fantanesh TegegnNo ratings yet

- D04-Prediction Analysis Techniques of Data Mining A ReviewDocument6 pagesD04-Prediction Analysis Techniques of Data Mining A ReviewHendra Nusa PutraNo ratings yet

- Prediction Analysis Techniques of Data Mining: A ReviewDocument7 pagesPrediction Analysis Techniques of Data Mining: A ReviewEdwardNo ratings yet

- 2887-Article Text-5228-1-10-20180103Document6 pages2887-Article Text-5228-1-10-20180103Vijay ManiNo ratings yet

- Hybrid Decision Tree and Naive Bayes Model Predicts Student GraduationDocument6 pagesHybrid Decision Tree and Naive Bayes Model Predicts Student GraduationNurul RenaningtiasNo ratings yet

- 2013 Selection of The Best Classifier From Different Datasets Using WEKA PDFDocument8 pages2013 Selection of The Best Classifier From Different Datasets Using WEKA PDFpraveen kumarNo ratings yet

- Various Sequence Classification Mechanisms For Knowledge DiscoveryDocument4 pagesVarious Sequence Classification Mechanisms For Knowledge DiscoveryAnonymous lPvvgiQjRNo ratings yet

- An Overview of Data Mining in Medical Field: Reema Arora, Sandeep JaglanDocument4 pagesAn Overview of Data Mining in Medical Field: Reema Arora, Sandeep JaglanerpublicationNo ratings yet

- Share BATCH 8 JOURNAL PAPERDocument12 pagesShare BATCH 8 JOURNAL PAPEROm namo Om namo SrinivasaNo ratings yet

- Student Performance Evaluation in EducatDocument3 pagesStudent Performance Evaluation in EducatAli Asghar Pourhaji KazemNo ratings yet

- A N M F C R - B N B C: Ovel Ethodology OR Onstructing ULE Ased Aïve Ayesian LassifiersDocument13 pagesA N M F C R - B N B C: Ovel Ethodology OR Onstructing ULE Ased Aïve Ayesian LassifiersIves RodríguezNo ratings yet

- Review of Data Mining Classification Techniques: Shraddha Sharma & Ankita SaxenaDocument8 pagesReview of Data Mining Classification Techniques: Shraddha Sharma & Ankita SaxenaTJPRC PublicationsNo ratings yet

- Survey of Classification Techniques in Data Mining: Open AccessDocument10 pagesSurvey of Classification Techniques in Data Mining: Open AccessFahri Alfiandi StsetiaNo ratings yet

- Applied Sciences: Outlier Detection Based Feature Selection Exploiting Bio-Inspired Optimization AlgorithmsDocument28 pagesApplied Sciences: Outlier Detection Based Feature Selection Exploiting Bio-Inspired Optimization AlgorithmsAyman TaniraNo ratings yet

- Comparative Study of K-NN, Naive Bayes and Decision Tree Classification TechniquesDocument4 pagesComparative Study of K-NN, Naive Bayes and Decision Tree Classification TechniquesElrach CorpNo ratings yet

- Model-Based Book Recommender Systems Using Naïve Bayes Enhanced With Optimal Feature SelectionDocument7 pagesModel-Based Book Recommender Systems Using Naïve Bayes Enhanced With Optimal Feature Selectionmohammed moaidNo ratings yet

- Big Data-Classification SurveyDocument11 pagesBig Data-Classification Surveyஷோபனா கோபாலகிருஷ்ணன்No ratings yet

- Ijctt V48P126Document11 pagesIjctt V48P126omsaiboggala811No ratings yet

- Chi-Square Feature Selection Effect On Naive Bayes Classifier Algorithm Performance For Sentiment Analysis DocumentDocument8 pagesChi-Square Feature Selection Effect On Naive Bayes Classifier Algorithm Performance For Sentiment Analysis Documentsumina soNo ratings yet

- NFL Machine Learning Classification ModelsDocument8 pagesNFL Machine Learning Classification Modelssimpang unitedNo ratings yet

- Pattern Recognition Techniques in AIDocument6 pagesPattern Recognition Techniques in AIksumitkapoorNo ratings yet

- Accuracies and Training Times of Data Mining Classification Algorithms: An Empirical Comparative StudyDocument8 pagesAccuracies and Training Times of Data Mining Classification Algorithms: An Empirical Comparative StudyQaisar HussainNo ratings yet

- Sentiment Analysis on Tweets using Machine Learning: An SEO-Optimized TitleDocument5 pagesSentiment Analysis on Tweets using Machine Learning: An SEO-Optimized TitleRicha GargNo ratings yet

- Pattern recognition techniques reviewDocument6 pagesPattern recognition techniques reviewLuis GongoraNo ratings yet

- Data Mining: A Prediction of Performer or Underperformer Using ClassificationDocument5 pagesData Mining: A Prediction of Performer or Underperformer Using ClassificationArdian SyahNo ratings yet

- Literature Review CCSIT205Document9 pagesLiterature Review CCSIT205Indri LisztoNo ratings yet

- An Overview of Anomalous Sub Population: Mukti, Hari SinghDocument4 pagesAn Overview of Anomalous Sub Population: Mukti, Hari SingherpublicationNo ratings yet

- Ijcrt 195700Document7 pagesIjcrt 195700woxiko1688No ratings yet

- Data Warehouse and Mining NotesDocument12 pagesData Warehouse and Mining Notesbadal.singh07961No ratings yet

- Classification and PredictionDocument41 pagesClassification and Predictionkolluriniteesh111No ratings yet

- News Classification Using Machine LearningDocument5 pagesNews Classification Using Machine LearningInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Prediksi Nilai Proyek Akhir Mhs Menggunakan Algoritma Klasifikasi DataMiningDocument8 pagesPrediksi Nilai Proyek Akhir Mhs Menggunakan Algoritma Klasifikasi DataMiningteh tarekNo ratings yet

- Comparative Analysis of Classification Algorithms Using WekaDocument12 pagesComparative Analysis of Classification Algorithms Using WekaEditor IJTSRDNo ratings yet

- Swarm IntelligenceDocument16 pagesSwarm Intelligencewehiye438No ratings yet

- Ensemble Based Learning With Stacking, Boosting and Bagging For Unimodal Biometric Identification SystemDocument6 pagesEnsemble Based Learning With Stacking, Boosting and Bagging For Unimodal Biometric Identification SystemsuppiNo ratings yet

- Comparative Analysis of Classification Models On Income PredictionDocument5 pagesComparative Analysis of Classification Models On Income PredictionEditor IJRITCCNo ratings yet

- Doceng2012 Submission 19Document10 pagesDoceng2012 Submission 19Thiago SallesNo ratings yet

- Data Analysis in Qualitative Research: A Brief Guide To Using NvivoDocument7 pagesData Analysis in Qualitative Research: A Brief Guide To Using NvivoYola MaulidaNo ratings yet
