Professional Documents

Culture Documents

WWW - Rle.mit - Edu Reduced

Uploaded by

br4bnavrck0v80 ratings0% found this document useful (0 votes)

4 views1 pageaa2 int

Original Title

www.rle.mit.edu_reduced

Copyright

© © All Rights Reserved

Available Formats

PDF, TXT or read online from Scribd

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this Documentaa2 int

Copyright:

© All Rights Reserved

Available Formats

Download as PDF, TXT or read online from Scribd

0 ratings0% found this document useful (0 votes)

4 views1 pageWWW - Rle.mit - Edu Reduced

Uploaded by

br4bnavrck0v8aa2 int

Copyright:

© All Rights Reserved

Available Formats

Download as PDF, TXT or read online from Scribd

You are on page 1of 1

Home News About People Research Media Services Contact Search

RLE Recent Papers

NetAdaptV2: Efficient Neural Architecture Search Related RLE Recent Papers

with Fast Super-Network Training and

SysML: The New Frontier of

Architecture Optimization Machine Learning Systems

posted November 18, 2022 at 3:08 pm

Visual-Inertial Odometry on Chip:

T.-J. Yang, Y.-L. Liao, V. An Algorithm-and-Hardware Co-

Sze, “NetAdaptV2: design Approach

"The work was both theoretical and

Efficient neural

experimental, as well as basic and

architecture search with An Architecture-Level Energy and

applied...This mix of the exploration of

fast super-network Area Estimator for Processing-In-

new ideas and their reduction to practice training and Memory Accelerator Designs

remains the hallmark of the present-day architecture

RLE." optimization,” IEEE

Conference on Computer

—Jerome Wiesner

Vision and Pattern

Third Director of RLE

Recognition ( CVPR), June

2021. [ paper PDF |

poster PDF | slides PDF | project website LINK ]

Abstract:

Neural architecture search (NAS) typically consists of three main

steps: training a super-network, training and evaluating sampled

deep neural networks (DNNs), and training the discovered DNN.

Most of the existing efforts speed up some steps at the cost of a

significant slowdown of other steps or sacrificing the support of

non-differentiable search metrics. The unbalanced reduction in the

time spent per step limits the total search time reduction, and the

inability to support non-differentiable search metrics limits the

performance of discovered DNNs. In this paper, we present

NetAdaptV2 with three innovations to better balance the time

spent for each step while supporting non-differentiable search

metrics. First, we propose channel-level bypass connections that

merge network depth and layer width into a single search

dimension to reduce the time for training and evaluating sampled

DNNs. Second, ordered dropout is proposed to train multiple

DNNs in a single forward-backward pass to decrease the time for

training a super-network. Third, we propose the multi-layer

coordinate descent optimizer that considers the interplay of

multiple layers in each iteration of optimization to improve the

performance of discovered DNNs while supporting non-

differentiable search metrics. With these innovations, NetAdaptV2

reduces the total search time by up to 5.8× on ImageNet and 2.4×

on NYU Depth V2, respectively, and discovers DNNs with better

accuracy-latency/accuracy-MAC trade-offs than state-of-the-art NAS

works. Moreover, the discovered DNN outperforms NAS-

discovered MobileNetV3 by 1.8% higher top‑1 accuracy with the

same latency.1

CONNECT WITH US!

Copyright © 2022 RLE at MIT

Accessibility

You might also like

- Development_of_Deep_Residual_Neural_Networks_for_Gear_Pitting_Fault_Diagnosis_Using_Bayesian_OptimizationDocument15 pagesDevelopment_of_Deep_Residual_Neural_Networks_for_Gear_Pitting_Fault_Diagnosis_Using_Bayesian_OptimizationCarel N'shimbiNo ratings yet

- IEEE SIGNAL PROCESSING MAGAZINE SURVEY OF MODEL COMPRESSION AND ACCELERATION FOR DEEP NEURAL NETWORKSDocument10 pagesIEEE SIGNAL PROCESSING MAGAZINE SURVEY OF MODEL COMPRESSION AND ACCELERATION FOR DEEP NEURAL NETWORKSirfanwahla786No ratings yet

- Auto-DeepLab Hierarchical Neural Architecture Search For Semantic Image SegmentationDocument12 pagesAuto-DeepLab Hierarchical Neural Architecture Search For Semantic Image SegmentationMatiur Rahman MinarNo ratings yet

- Electronics 11 00945Document23 pagesElectronics 11 00945cthfmzNo ratings yet

- Accelerated Deep Learning Inference From Constrained Embedded DevicesDocument5 pagesAccelerated Deep Learning Inference From Constrained Embedded DevicesBhargav BhatNo ratings yet

- CNN Algorithms Survey on Edge Computing with Reconfigurable HardwareDocument24 pagesCNN Algorithms Survey on Edge Computing with Reconfigurable Hardwarearania313No ratings yet

- On Network Design Spaces For Visual RecognitionDocument11 pagesOn Network Design Spaces For Visual RecognitionWelcome To UsrNo ratings yet

- Learning Transferable Architectures For Scalable Image RecognitionDocument14 pagesLearning Transferable Architectures For Scalable Image RecognitionMaria PalancaresNo ratings yet

- Temporal Neural Network A Rchitecture For Online LearningDocument13 pagesTemporal Neural Network A Rchitecture For Online LearninghelbakouryNo ratings yet

- GhostNet: Cheap Operations Generate More FeaturesDocument10 pagesGhostNet: Cheap Operations Generate More FeaturesSSCPNo ratings yet

- Neural Architecture Search For Transformers A SurvDocument39 pagesNeural Architecture Search For Transformers A SurvSalima OUADFELNo ratings yet

- NoC Based DNN AcceleratorsDocument8 pagesNoC Based DNN AcceleratorslokeshNo ratings yet

- Real Time Object Detection Using CNNDocument5 pagesReal Time Object Detection Using CNNyevanNo ratings yet

- A Compressed Deep Convolutional Neural Networks For Face RecognitionDocument6 pagesA Compressed Deep Convolutional Neural Networks For Face RecognitionSiddharth KoliparaNo ratings yet

- Auto CNNDocument11 pagesAuto CNNirbankhusus2021No ratings yet

- Convolutional Neural Networks For Image ClassificationDocument5 pagesConvolutional Neural Networks For Image ClassificationInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Simple Is Good Investigation of History State Ensemble Deep Neu - 2023 - NeurocDocument15 pagesSimple Is Good Investigation of History State Ensemble Deep Neu - 2023 - NeurocSumeet MitraNo ratings yet

- Mobiledets: Searching For Object Detection Architectures For Mobile AcceleratorsDocument11 pagesMobiledets: Searching For Object Detection Architectures For Mobile AcceleratorsBubble LineNo ratings yet

- Mobilenetv2: Inverted Residuals and Linear BottlenecksDocument14 pagesMobilenetv2: Inverted Residuals and Linear Bottlenecksrineeth mNo ratings yet

- Cbnetv2: A Composite Backbone Network Architecture For Object DetectionDocument11 pagesCbnetv2: A Composite Backbone Network Architecture For Object DetectionMih VicentNo ratings yet

- A Real-Time Object Detection Processor With Xnor-BDocument13 pagesA Real-Time Object Detection Processor With Xnor-BcattiewpieNo ratings yet

- 1602.07360v1 - SqueezeNet: AlexNet-level Accuracy With 50x Fewer Parameters and 1MB Model Size - 2016Document5 pages1602.07360v1 - SqueezeNet: AlexNet-level Accuracy With 50x Fewer Parameters and 1MB Model Size - 2016ajgallegoNo ratings yet

- A Survey of Quantization Methods For Efficient Neural Network InferenceDocument33 pagesA Survey of Quantization Methods For Efficient Neural Network Inferencex moodNo ratings yet

- Transfer Learning For Object Detection Using State-of-the-Art Deep Neural NetworksDocument7 pagesTransfer Learning For Object Detection Using State-of-the-Art Deep Neural NetworksArebi MohamdNo ratings yet

- Deep Tensor Convolution On MulticoresDocument10 pagesDeep Tensor Convolution On MulticoresTrần Văn DuyNo ratings yet

- Image based classificationDocument8 pagesImage based classificationMuhammad Sami UllahNo ratings yet

- Feng Liang et al_2021_Efficient neural network using pointwise convolution kernels with linear phaseDocument8 pagesFeng Liang et al_2021_Efficient neural network using pointwise convolution kernels with linear phasebigliang98No ratings yet

- LinkNet - Exploiting Encoder Representations For Efficient Semantic SegmentationDocument5 pagesLinkNet - Exploiting Encoder Representations For Efficient Semantic SegmentationMario Galindo QueraltNo ratings yet

- P C N N R E I: Runing Onvolutional Eural Etworks FOR Esource Fficient NferenceDocument17 pagesP C N N R E I: Runing Onvolutional Eural Etworks FOR Esource Fficient NferenceAnshul VijayNo ratings yet

- Node2vec: Scalable Feature Learning For NetworksDocument10 pagesNode2vec: Scalable Feature Learning For NetworksKevin LevroneNo ratings yet

- Node2vec: Scalable Feature Learning For NetworksDocument10 pagesNode2vec: Scalable Feature Learning For Networksjavier veraNo ratings yet

- Deep Learning Architectures for Volumetric SegmentationDocument7 pagesDeep Learning Architectures for Volumetric Segmentationakmalulkhairi16No ratings yet

- YOLOPv2 bdd100kDocument8 pagesYOLOPv2 bdd100knedra benletaiefNo ratings yet

- 186187_1Document5 pages186187_1Pablo Peña TorresNo ratings yet

- 2004 10934v1 PDFDocument17 pages2004 10934v1 PDFKSHITIJ SONI 19PE10041No ratings yet

- Squeeze NetDocument13 pagesSqueeze NetpukkapadNo ratings yet

- Research Article: A Model For Surface Defect Detection of Industrial Products Based On Attention AugmentationDocument12 pagesResearch Article: A Model For Surface Defect Detection of Industrial Products Based On Attention AugmentationUmar MajeedNo ratings yet

- HAST-IDS: Learning Hierarchical Spatial-Temporal Features Using Deep Neural Networks To Improve Intrusion DetectionDocument15 pagesHAST-IDS: Learning Hierarchical Spatial-Temporal Features Using Deep Neural Networks To Improve Intrusion DetectionRavindra SinghNo ratings yet

- DSLOT-NN: Digit-Serial Left-to-Right Neural Network AcceleratorDocument7 pagesDSLOT-NN: Digit-Serial Left-to-Right Neural Network AcceleratorGJNo ratings yet

- Optimizing Performance of Convolutional Neural Network Using Computing TechniqueDocument4 pagesOptimizing Performance of Convolutional Neural Network Using Computing TechniqueNguyen Van ToanNo ratings yet

- Multiple Object Tracking For Video Analysis and Surveillance A Literature SurveyDocument10 pagesMultiple Object Tracking For Video Analysis and Surveillance A Literature SurveyInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Transformer-Based Visual Segmentation - A SurveyDocument23 pagesTransformer-Based Visual Segmentation - A SurveycvalprhpNo ratings yet

- CNN Model For Image Classification Using Resnet: Dr. Senbagavalli M & Swetha Shekarappa GDocument10 pagesCNN Model For Image Classification Using Resnet: Dr. Senbagavalli M & Swetha Shekarappa GTJPRC PublicationsNo ratings yet

- Mining User Navigation Patterns For Efficient Relevance Feedback For CbirDocument3 pagesMining User Navigation Patterns For Efficient Relevance Feedback For CbirJournalNX - a Multidisciplinary Peer Reviewed JournalNo ratings yet

- Can Neural Networks Be Easily Interpreted in Software Cost Estimation?Document6 pagesCan Neural Networks Be Easily Interpreted in Software Cost Estimation?jagannath_singhNo ratings yet

- Deep NN - Theory, Tutorial and SurveyDocument32 pagesDeep NN - Theory, Tutorial and SurveyDejanNo ratings yet

- S-NN: Stacked Neural Networks: Milad Mohammadi Stanford University Subhasis Das Stanford UniversityDocument8 pagesS-NN: Stacked Neural Networks: Milad Mohammadi Stanford University Subhasis Das Stanford UniversityfsdsNo ratings yet

- A Study On Detecting Drones Using Deep Convolutional Neural NetworksDocument6 pagesA Study On Detecting Drones Using Deep Convolutional Neural NetworksHarshitha SNo ratings yet

- Deep Learning For Intelligent Wireless Networks: A Comprehensive SurveyDocument25 pagesDeep Learning For Intelligent Wireless Networks: A Comprehensive SurveynehaNo ratings yet

- Generalizability of Semantic Segmentation Techniques: Keshav Bhandari Texas State University, San Marcos, TXDocument6 pagesGeneralizability of Semantic Segmentation Techniques: Keshav Bhandari Texas State University, San Marcos, TXKeshav BhandariNo ratings yet

- Neural NetworkDocument10 pagesNeural NetworkganeshNo ratings yet

- LightNets The Concept of Weakening LayersDocument7 pagesLightNets The Concept of Weakening LayersDaniel Muharom A SNo ratings yet

- CNN object detection helps blind with real-time scene analysisDocument7 pagesCNN object detection helps blind with real-time scene analysisHarsh ModiNo ratings yet

- Guided Evolution for Neural Architecture Search Using Zero-Proxy EstimationDocument9 pagesGuided Evolution for Neural Architecture Search Using Zero-Proxy EstimationFranco CapuanoNo ratings yet

- Deep and EvolutionaryDocument19 pagesDeep and EvolutionaryTayseer AtiaNo ratings yet

- Feature Fusion Based On Convolutional Neural Netwo PDFDocument8 pagesFeature Fusion Based On Convolutional Neural Netwo PDFNguyễn Thành TânNo ratings yet

- Phase 2Document13 pagesPhase 2Harshitha RNo ratings yet

- Deep Iris Network Design with Computation and Model ConstraintsDocument10 pagesDeep Iris Network Design with Computation and Model ConstraintspedroNo ratings yet

- DATA MINING and MACHINE LEARNING. CLASSIFICATION PREDICTIVE TECHNIQUES: NAIVE BAYES, NEAREST NEIGHBORS and NEURAL NETWORKS: Examples with MATLABFrom EverandDATA MINING and MACHINE LEARNING. CLASSIFICATION PREDICTIVE TECHNIQUES: NAIVE BAYES, NEAREST NEIGHBORS and NEURAL NETWORKS: Examples with MATLABNo ratings yet

- Embedded Deep Learning: Algorithms, Architectures and Circuits for Always-on Neural Network ProcessingFrom EverandEmbedded Deep Learning: Algorithms, Architectures and Circuits for Always-on Neural Network ProcessingNo ratings yet

- Quality TestDocument22 pagesQuality TestUmair Shaikh100% (1)

- Barrafer ADocument2 pagesBarrafer ADoby YuniardiNo ratings yet

- Abalos I Aesthetics and SustainabilityDocument4 pagesAbalos I Aesthetics and SustainabilityAna AscicNo ratings yet

- Understanding OPNFV Ebook PDFDocument145 pagesUnderstanding OPNFV Ebook PDFRafik Kafel100% (1)

- ERP ComparisonDocument33 pagesERP ComparisonsuramparkNo ratings yet

- SIA Format For The FE100 Digital Receiver: Rev Date CommentsDocument15 pagesSIA Format For The FE100 Digital Receiver: Rev Date CommentsGabi DimaNo ratings yet

- Vde 2015 0091Document60 pagesVde 2015 0091Rakesh KRNo ratings yet

- Sun Control and Shading DevicesDocument10 pagesSun Control and Shading DevicesNerinel CoronadoNo ratings yet

- 3-Bts3900 v100r009c00spc230 (GBTS) Upgrade Guide (Smt-Based)Document59 pages3-Bts3900 v100r009c00spc230 (GBTS) Upgrade Guide (Smt-Based)samNo ratings yet

- Nr-420504 Advanced DatabasesDocument6 pagesNr-420504 Advanced DatabasesSrinivasa Rao GNo ratings yet

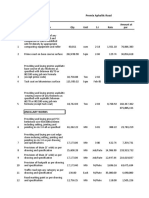

- Premix Aphaltic Road: # Description Qty Unit S.I Rate Amount at ParDocument8 pagesPremix Aphaltic Road: # Description Qty Unit S.I Rate Amount at ParadnanliaquatNo ratings yet

- Knighthayes CourtDocument11 pagesKnighthayes Courtathaya respatiNo ratings yet

- UsenetDocument22 pagesUsenetmaulik4191No ratings yet

- Deep Basement Structures and Temporary Earth Retaining SystemsDocument103 pagesDeep Basement Structures and Temporary Earth Retaining SystemsMelinda GordonNo ratings yet

- Concrete and Masonry: Section 13 Unit 39Document78 pagesConcrete and Masonry: Section 13 Unit 39mbvyassNo ratings yet

- PP API ReferenceDocument272 pagesPP API Referencetestsrs123No ratings yet

- Goddess Hathor-ICONOGRAPHY PDFDocument133 pagesGoddess Hathor-ICONOGRAPHY PDFRevitarom Oils Srl100% (7)

- Approach Methodology-Sharavati Bridge-CSBDocument10 pagesApproach Methodology-Sharavati Bridge-CSBSK SwainNo ratings yet

- Deck Slab Concreting - Aug 2011Document11 pagesDeck Slab Concreting - Aug 2011AlsonChinNo ratings yet

- Some Economic Facts of The Prefabricated HousingDocument20 pagesSome Economic Facts of The Prefabricated Housingchiorean_robertNo ratings yet

- GroffDocument268 pagesGroffJason SinnamonNo ratings yet

- Sailor 500 Fleetbroadband: Second Generation Maritime Broadband CommunicationsDocument2 pagesSailor 500 Fleetbroadband: Second Generation Maritime Broadband Communicationsjeffry.firmansyahNo ratings yet

- Albany MaxiDocument10 pagesAlbany MaxiRaúl Marcelo Blanco ZambranaNo ratings yet

- Osman Hamdi Bey and The Historiophile Mo PDFDocument6 pagesOsman Hamdi Bey and The Historiophile Mo PDFRebecca378No ratings yet

- JNDocument120 pagesJNanon_314007968No ratings yet

- CHAPTER 6 - Design of Knuckle Joint, Sleeve and Cotter JointDocument34 pagesCHAPTER 6 - Design of Knuckle Joint, Sleeve and Cotter JointVignesan MechNo ratings yet

- Concrete - The Basic MixDocument2 pagesConcrete - The Basic MixMZJNo ratings yet

- Full Report - Dry SievingDocument12 pagesFull Report - Dry SievingDini NoordinNo ratings yet

- F - Ceiling DiffusersDocument25 pagesF - Ceiling DiffusersTeoxNo ratings yet

- Progress ReportDocument45 pagesProgress ReportAnonymous Of0C4d100% (1)