Evidence-Based Medicine - Wikipedia

"Science-based medicine" redirects here. For the website, see Science-Based Medicine.

definition

Evidence-based medicine (EBM) is "the conscientious, explicit and judicious use of current best evidence

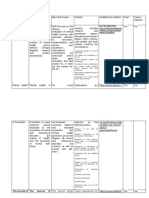

Clinical decision-making Part of a series on

in making decisions about the care of individual patients."[1] The aim of EBM is to integrate the experience

Evidence-based guidelines

Evidence-based practices

of the clinician, the values of the patient, and the best available scientific information to guide decision- Assessment · Design · Management ·

and policies

making about clinical management. The term was originally used to describe an approach to teaching the Research · Scheduling

Medical education practice of medicine and improving decisions by individual physicians about individual patients.[2] Dentistry · Medical ethics · Medicine · Nursing

Progress · Pharmacy in developing countries ·

The EBM Pyramid is a tool that helps in visualizing the hierarchy of evidence in medicine, from least Toxicology

Current practice authoritative, like expert opinions, to most authoritative, like systematic reviews.[3] Conservation · Education · Legislation ·

Methods Library and information practice · Policy ·

Policing · Prosecution

Steps Background, history, and definition [ edit ] · ·

Evidence reviews Medicine has a long history of scientific inquiry about the prevention, diagnosis, and treatment of human

Assessing the quality of disease.[4][5] In the 11th century AD, Avicenna, a Persian physician and philosopher, developed an approach to EBM that was mostly similar to current

evidence ideas and practises.[6][7]

Categories of

The concept of a controlled clinical trial was first described in 1662 by Jan Baptist van Helmont in reference to the practice of bloodletting.[8] Wrote Van

recommendations

Helmont:

Statistical measures

Quality of clinical trials Let us take out of the Hospitals, out of the Camps, or from elsewhere, 200, or 500 poor People, that have fevers or Pleuritis. Let us divide

Limitations and criticism them in Halfes, let us cast lots, that one halfe of them may fall to my share, and the others to yours; I will cure them without blood-letting and

sensible evacuation; but you do, as ye know ... we shall see how many Funerals both of us shall have...

Application of evidence in

clinical settings

The first published report describing the conduct and results of a controlled clinical trial was by James Lind, a Scottish naval surgeon who conducted

Education research on scurvy during his time aboard HMS Salisbury in the Channel Fleet, while patrolling the Bay of Biscay. Lind divided the sailors participating in

See also his experiment into six groups, so that the effects of various treatments could be fairly compared. Lind found improvement in symptoms and signs of

scurvy among the group of men treated with lemons or oranges. He published a treatise describing the results of this experiment in 1753.[9]

An early critique of statistical methods in medicine was published in 1835.[10]

The term 'evidence-based medicine' was introduced in 1990 by Gordon Guyatt of McMaster University.[11][12][13][14]

Clinical decision-making

Alvan Feinstein's publication of Clinical Judgment in 1967 focused attention on the role of clinical reasoning and identified biases that can affect it.[15] In

1972, Archie Cochrane published Effectiveness and Efficiency, which described the lack of controlled trials supporting many practices that had previously

been assumed to be effective.[16] In 1973, John Wennberg began to document wide variations in how physicians practiced.[17] Through the 1980s, David

M. Eddy described errors in clinical reasoning and gaps in evidence.[18][19][20][21] In the mid-1980s, Alvan Feinstein, David Sackett and others published

textbooks on clinical epidemiology, which translated epidemiological methods to physician decision-making.[22][23] Toward the end of the 1980s, a group

at RAND showed that large proportions of procedures performed by physicians were considered inappropriate even by the standards of their own

experts.[24]

Evidence-based guidelines and policies

Main article: Medical guideline

David M. Eddy first began to use the term 'evidence-based' in 1987 in workshops and a manual commissioned by the Council of Medical Specialty

Societies to teach formal methods for designing clinical practice guidelines. The manual was eventually published by the American College of

Physicians.[25][26] Eddy first published the term 'evidence-based' in March 1990, in an article in the Journal of the American Medical Association that laid

out the principles of evidence-based guidelines and population-level policies, which Eddy described as "explicitly describing the available evidence that

pertains to a policy and tying the policy to evidence instead of standard-of-care practices or the beliefs of experts. The pertinent evidence must be

identified, described, and analyzed. The policymakers must determine whether the policy is justified by the evidence. A rationale must be written."[27] He

discussed evidence-based policies in several other papers published in JAMA in the spring of 1990.[27][28] Those papers were part of a series of 28

published in JAMA between 1990 and 1997 on formal methods for designing population-level guidelines and policies.[29]

Medical education

The term 'evidence-based medicine' was introduced slightly later, in the context of medical education. In the autumn of 1990, Gordon Guyatt used it in an

unpublished description of a program at McMaster University for prospective or new medical students.[30] Guyatt and others first published the term two

years later (1992) to describe a new approach to teaching the practice of medicine.[2]

In 1996, David Sackett and colleagues clarified the definition of this tributary of evidence-based medicine as "the conscientious, explicit and judicious use

of current best evidence in making decisions about the care of individual patients. ... [It] means integrating individual clinical expertise with the best

available external clinical evidence from systematic research."[1] This branch of evidence-based medicine aims to make individual decision making more

structured and objective by better reflecting the evidence from research.[31][32] Population-based data are applied to the care of an individual patient,[33]

while respecting the fact that practitioners have clinical expertise reflected in effective and efficient diagnosis and thoughtful identification and

compassionate use of individual patients' predicaments, rights, and preferences.[1]

Between 1993 and 2000, the Evidence-Based Medicine Working Group at McMaster University published the methods to a broad physician audience in

a series of 25 "Users' Guides to the Medical Literature" in JAMA. In 1995 Rosenberg and Donald defined individual-level, evidence-based medicine as

"the process of finding, appraising, and using contemporaneous research findings as the basis for medical decisions."[34] In 2010, Greenhalgh used a

definition that emphasized quantitative methods: "the use of mathematical estimates of the risk of benefit and harm, derived from high-quality research

on population samples, to inform clinical decision-making in the diagnosis, investigation or management of individual patients."[35][1]

The two original definitions (of evidence-based guidelines and policies, and of evidence-based medicine for individual patients) highlight important differences in how evidence-based medicine is applied to populations versus individuals. When

designing guidelines applied to large groups of people in settings with relatively little opportunity for modification by individual physicians, evidence-based

policymaking emphasizes that good evidence should exist to document a test's or treatment's effectiveness.[36] In the setting of individual decision-

making, practitioners can be given greater latitude in how they interpret research and combine it with their clinical judgment.[1][37] In 2005, Eddy offered

an umbrella definition for the two branches of EBM: "Evidence-based medicine is a set of principles and methods intended to ensure that to the greatest

extent possible, medical decisions, guidelines, and other types of policies are based on and consistent with good evidence of effectiveness and

benefit."[38]

Progress

In the area of evidence-based guidelines and policies, the explicit insistence on evidence of effectiveness was introduced by the American Cancer

Society in 1980.[39] The U.S. Preventive Services Task Force (USPSTF) began issuing guidelines for preventive interventions based on evidence-based

principles in 1984.[40] In 1985, the Blue Cross Blue Shield Association applied strict evidence-based criteria for covering new technologies.[41] Beginning

in 1987, specialty societies such as the American College of Physicians, and voluntary health organizations such as the American Heart Association,

wrote many evidence-based guidelines. In 1991, Kaiser Permanente, a managed care organization in the US, began an evidence-based guidelines

program.[42] In 1991, Richard Smith wrote an editorial in the British Medical Journal and introduced the ideas of evidence-based policies in the UK.[43] In

1993, the Cochrane Collaboration created a network of 13 countries to produce systematic reviews and guidelines.[44] In 1997, the US Agency for

Healthcare Research and Quality (AHRQ, then known as the Agency for Health Care Policy and Research, or AHCPR) established Evidence-based

Practice Centers (EPCs) to produce evidence reports and technology assessments to support the development of guidelines.[45] In the same year, a

National Guideline Clearinghouse that followed the principles of evidence-based policies was created by AHRQ, the AMA, and the American Association

of Health Plans (now America's Health Insurance Plans).[46] In 1999, the National Institute for Clinical Excellence (NICE) was created in the UK.[47]

In the area of medical education, medical schools in Canada, the US, the UK, Australia, and other countries[48][49] now offer programs that teach

evidence-based medicine. A 2009 study of UK programs found that more than half of UK medical schools offered some training in evidence-based

medicine, although the methods and content varied considerably, and EBM teaching was restricted by lack of curriculum time, trained tutors and teaching

materials.[50] Many programs have been developed to help individual physicians gain better access to evidence. For example, UpToDate was created in

the early 1990s.[51] The Cochrane Collaboration began publishing evidence reviews in 1993.[42] In 1995, BMJ Publishing Group launched Clinical

Evidence, a 6-monthly periodical that provided brief summaries of the current state of evidence about important clinical questions for clinicians.[52]

Current practice

By 2000, use of the term evidence-based had extended to other levels of the health care system. An example is evidence-based health services, which

seek to increase the competence of health service decision makers and the practice of evidence-based medicine at the organizational or institutional

level.[53]

The multiple tributaries of evidence-based medicine share an emphasis on the importance of incorporating evidence from formal research in medical

policies and decisions. However, because they differ on the extent to which they require good evidence of effectiveness before promoting a guideline or

payment policy, a distinction is sometimes made between evidence-based medicine and science-based medicine, which also takes into account factors

such as prior plausibility and compatibility with established science (as when medical organizations promote controversial treatments such as

acupuncture).[54] Differences also exist regarding the extent to which it is feasible to incorporate individual-level information in decisions. Thus, evidence-

based guidelines and policies may not readily "hybridise" with experience-based practices orientated towards ethical clinical judgement, and can lead to

contradictions, contest, and unintended crises.[21] The most effective "knowledge leaders" (managers and clinical leaders) use a broad range of

management knowledge in their decision making, rather than just formal evidence.[22] Evidence-based guidelines may provide the basis for

governmentality in health care, and consequently play a central role in the governance of contemporary health care systems.[23]

Methods

Steps

The steps for designing explicit, evidence-based guidelines were described in the late 1980s: formulate the question (population, intervention,

comparison intervention, outcomes, time horizon, setting); search the literature to identify studies that inform the question; interpret each study to

determine precisely what it says about the question; if several studies address the question, synthesize their results (meta-analysis); summarize the

evidence in evidence tables; compare the benefits, harms and costs in a balance sheet; draw a conclusion about the preferred practice; write the

guideline; write the rationale for the guideline; have others review each of the previous steps; implement the guideline.[20]

For the purposes of medical education and individual-level decision making, five steps of EBM in practice were described in 1992[55] and the experience

of delegates attending the 2003 Conference of Evidence-Based Health Care Teachers and Developers was summarized into five steps and published in

2005.[56] This five-step process can broadly be categorized as follows:

1. Translation of uncertainty to an answerable question; includes critical questioning, study design and levels of evidence[57]

2. Systematic retrieval of the best evidence available[58]

3. Critical appraisal of evidence for internal validity that can be broken down into aspects regarding:[33]

    Systematic errors as a result of selection bias, information bias and confounding

    Quantitative aspects of diagnosis and treatment

    The effect size and aspects regarding its precision

    Clinical importance of results

    External validity or generalizability

4. Application of results in practice[59]

5. Evaluation of performance[60]

Evidence reviews

Systematic reviews of published research studies are a major part of the evaluation of particular treatments. The Cochrane Collaboration is one of the

best-known organisations that conduct systematic reviews. Like other producers of systematic reviews, it requires authors to provide a detailed study

protocol as well as a reproducible plan of their literature search and evaluations of the evidence.[61] After the best evidence is assessed, treatment is

categorized as (1) likely to be beneficial, (2) likely to be harmful, or (3) without evidence to support either benefit or harm.[citation needed]

A 2007 analysis of 1,016 systematic reviews from all 50 Cochrane Collaboration Review Groups found that 44% of the reviews concluded that the

intervention was likely to be beneficial, 7% concluded that the intervention was likely to be harmful, and 49% concluded that evidence did not support

either benefit or harm. 96% recommended further research.[62] In 2017, a study assessed the role of systematic reviews produced by Cochrane

Collaboration to inform US private payers' policymaking; it showed that although the medical policy documents of major US private payers were informed

by Cochrane systematic reviews, there was still scope to encourage their further use.[63]

Assessing the quality of evidence

Main article: Levels of evidence

Evidence-based medicine categorizes different types of clinical evidence and rates or grades them[64] according to the strength of their freedom from the

various biases that beset medical research. For example, the strongest evidence for therapeutic interventions is provided by systematic review of

randomized, well-blinded, placebo-controlled trials with allocation concealment and complete follow-up involving a homogeneous patient population and

medical condition. In contrast, patient testimonials, case reports, and even expert opinion have little value as proof because of the placebo effect, the

biases inherent in observation and reporting of cases, and difficulties in ascertaining who is an expert (however, some critics have argued that expert

opinion "does not belong in the rankings of the quality of empirical evidence because it does not represent a form of empirical evidence" and continue

that "expert opinion would seem to be a separate, complex type of knowledge that would not fit into hierarchies otherwise limited to empirical evidence

alone.").[65]

Several organizations have developed grading systems for assessing the quality of evidence. For example, in 1989 the U.S. Preventive Services Task

Force (USPSTF) put forth the following system:[66]

Level I: Evidence obtained from at least one properly designed randomized controlled trial.

Level II-1: Evidence obtained from well-designed controlled trials without randomization.

Level II-2: Evidence obtained from well-designed cohort studies or case-control studies, preferably from more than one center or research group.

Level II-3: Evidence obtained from multiple time series designs with or without the intervention. Dramatic results in uncontrolled trials might also be

regarded as this type of evidence.

Level III: Opinions of respected authorities, based on clinical experience, descriptive studies, or reports of expert committees.

Another example is the Oxford CEBM Levels of Evidence published by the Centre for Evidence-Based Medicine. First released in September 2000, the

Levels of Evidence provide a way to rank evidence for claims about prognosis, diagnosis, treatment benefits, treatment harms, and screening, which

most grading schemes do not address. The original CEBM Levels were first released for Evidence-Based On Call to make the process of finding evidence feasible and its

results explicit. In 2011, an international team redesigned the Oxford CEBM Levels to make them more understandable and to take into account recent

developments in evidence ranking schemes. The Oxford CEBM Levels of Evidence have been used by patients and clinicians, as well as by experts to

develop clinical guidelines, such as recommendations for the optimal use of phototherapy and topical therapy in psoriasis[67] and guidelines for the use of

the BCLC staging system for diagnosing and monitoring hepatocellular carcinoma in Canada.[68]

In 2000, a system was developed by the Grading of Recommendations Assessment, Development and Evaluation (GRADE) working group. The GRADE

system takes into account more dimensions than just the quality of medical research.[69] It requires users who are performing an assessment of the

quality of evidence, usually as part of a systematic review, to consider the impact of different factors on their confidence in the results. Authors of GRADE

tables assign one of four levels to evaluate the quality of evidence, on the basis of their confidence that the observed effect (a numeric value) is close to

the true effect. The confidence value is based on judgments assigned in five different domains in a structured manner.[70] The GRADE working group

defines 'quality of evidence' and 'strength of recommendations' (which builds on that quality) as two different concepts that are commonly confused with each

other.[70]

Systematic reviews may include randomized controlled trials that have low risk of bias, or observational studies that have high risk of bias. In the case of

randomized controlled trials, the quality of evidence is high but can be downgraded in five different domains.[71]

Risk of bias: A judgment made on the basis of the chance that bias in included studies has influenced the estimate of effect.

Imprecision: A judgment made on the basis of the chance that the observed estimate of effect could change completely.

Indirectness: A judgment made on the basis of the differences in characteristics of how the study was conducted and how the results are actually

going to be applied.

Inconsistency: A judgment made on the basis of the variability of results across the included studies.

Publication bias: A judgment made on the basis of the question whether all the research evidence has been taken into account.[72]

In the case of observational studies per GRADE, the quality of evidence starts off lower and may be upgraded in three domains in addition to being

subject to downgrading.[71]

Large effect: Methodologically strong studies show that the observed effect is so large that the probability of it changing completely is less likely.

Plausible confounding would change the effect: Despite the presence of a possible confounding factor that is expected to reduce the observed effect,

the effect estimate still shows a significant effect.

Dose response gradient: The intervention used becomes more effective with increasing dose. This suggests that a further increase will likely bring

about more effect.

Meaning of the levels of quality of evidence as per GRADE:[70]

High Quality Evidence: The authors are very confident that the presented estimate lies very close to the true value. In other words, the probability is

very low that further research will completely change the presented conclusions.

Moderate Quality Evidence: The authors are confident that the presented estimate lies close to the true value, but it is also possible that it may be

substantially different. In other words, further research may completely change the conclusions.

Low Quality Evidence: The authors are not confident in the effect estimate, and the true value may be substantially different. In other words, further

research is likely to change the presented conclusions completely.

Very Low Quality Evidence: The authors do not have any confidence in the estimate and it is likely that the true value is substantially different from it.

In other words, new research will probably change the presented conclusions completely.
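The GRADE starting points and adjustments described above can be sketched in a few lines of Python. This is only an illustrative simplification, not the GRADE working group's procedure: the function name `grade_quality`, the integer "levels" arithmetic, and the choice of "low" as the starting point for observational studies (the text says only that it "starts off lower") are assumptions made for the example.

```python
# The four GRADE quality levels, ordered from lowest to highest.
LEVELS = ["very low", "low", "moderate", "high"]


def grade_quality(study_design: str, downgrades: int = 0, upgrades: int = 0) -> str:
    """Return a GRADE-style quality level (hypothetical helper).

    study_design: "rct" or "observational".
    downgrades:   total levels removed across the five downgrading domains
                  (risk of bias, imprecision, indirectness, inconsistency,
                  publication bias).
    upgrades:     total levels added across the three upgrading domains
                  (large effect, plausible confounding, dose response).
    """
    if downgrades < 0 or upgrades < 0:
        raise ValueError("downgrades and upgrades must be non-negative")

    if study_design == "rct":
        start = LEVELS.index("high")
        # Following the text, upgrading is described only for observational
        # studies, so it is ignored here for randomized trials.
        upgrades = 0
    elif study_design == "observational":
        # Assumption: observational evidence starts at "low".
        start = LEVELS.index("low")
    else:
        raise ValueError(f"unknown study design: {study_design!r}")

    # Clamp the result so it always stays within the four defined levels.
    index = max(0, min(len(LEVELS) - 1, start - downgrades + upgrades))
    return LEVELS[index]


if __name__ == "__main__":
    print(grade_quality("rct", downgrades=1))
    print(grade_quality("observational", upgrades=1))
```

In real GRADE assessments each domain is a structured judgment rather than a simple count, so a sketch like this is useful mainly for seeing how the starting level and the adjustments combine.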

Categories of recommendations

In guidelines and other publications, recommendation for a clinical service is classified by the balance of risk versus benefit and the level of evidence on

which this information is based. The U.S. Preventive Services Task Force uses the following system:[73]

Level A: Good scientific evidence suggests that the benefits of the clinical service substantially outweigh the potential risks. Clinicians should discuss

the service with eligible patients.

Level B: At least fair scientific evidence suggests that the benefits of the clinical service outweigh the potential risks. Clinicians should discuss the

service with eligible patients.

Level C: At least fair scientific evidence suggests that the clinical service provides benefits, but the balance between benefits and risks is too close for

general recommendations. Clinicians need not offer it unless individual considerations apply.

Level D: At least fair scientific evidence suggests that the risks of the clinical service outweigh potential benefits. Clinicians should not routinely offer

the service to asymptomatic patients.

Level I: Scientific evidence is lacking, of poor quality, or conflicting, such that the risk versus benefit balance cannot be assessed. Clinicians should

help patients understand the uncertainty surrounding the clinical service.

GRADE guideline panelists may make strong or weak recommendations on the basis of further criteria. Some of the important criteria are the balance

between desirable and undesirable effects (not considering cost), the quality of the evidence, values and preferences and costs (resource utilization).[71]

Despite the differences between systems, the purposes are the same: to guide users of clinical research information on which studies are likely to be

most valid. However, the individual studies still require careful critical appraisal.[citation needed]

Statistical measures

Evidence-based medicine attempts to express clinical benefits of tests and treatments using mathematical methods. Tools used by practitioners of

evidence-based medicine include:

Likelihood ratio

Main article: Likelihood ratios in diagnostic testing

The pre-test odds of a particular diagnosis, multiplied by the likelihood ratio, determine the post-test odds. (Odds can be calculated from, and then

converted to, the [more familiar] probability.) This reflects Bayes' theorem. The differences in likelihood ratio between clinical tests can be used to

prioritize clinical tests according to their usefulness in a given clinical situation.

AUC-ROC: The area under the receiver operating characteristic curve (AUC-ROC) reflects the relationship between sensitivity and specificity for a

given test. High-quality tests will have an AUC-ROC approaching 1, and high-quality publications about clinical tests will provide information about the

AUC-ROC. Cutoff values for positive and negative tests can influence specificity and sensitivity, but they do not affect AUC-ROC.

Number needed to treat (NNT) / Number needed to harm (NNH): NNT and NNH are ways of expressing the effectiveness and safety, respectively, of

interventions in a way that is clinically meaningful. NNT is the number of people who need to be treated in order to achieve the desired outcome (e.g.

survival from cancer) in one additional patient. For example, if a treatment increases the absolute chance of survival by 5 percentage points, then 20 people (1/0.05) need to be treated in order

for 1 additional patient to survive because of the treatment. The concept can also be applied to diagnostic tests. For example, if 1,339 women aged

50–59 need to be invited for breast cancer screening over a ten-year period in order to prevent one woman from dying of breast cancer,[74] then the

NNT for being invited to breast cancer screening is 1,339.
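The three measures above can be illustrated with a short Python sketch. The function names and the example numbers are hypothetical and chosen only for illustration. The AUC-ROC function uses the rank-based interpretation (the probability that a randomly chosen diseased patient scores higher than a randomly chosen healthy one), which is one common way to compute it and involves no cutoff value.

```python
def post_test_probability(pre_test_probability: float, likelihood_ratio: float) -> float:
    """Convert a pre-test probability to a post-test probability via odds."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must be strictly between 0 and 1")
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    # Convert the odds back to the more familiar probability.
    return post_test_odds / (1.0 + post_test_odds)


def number_needed_to_treat(absolute_risk_difference: float) -> float:
    """NNT (or NNH) as the reciprocal of the absolute risk difference."""
    if absolute_risk_difference == 0:
        raise ValueError("no difference between arms: NNT is undefined")
    return 1.0 / abs(absolute_risk_difference)


def auc_roc(scores_diseased, scores_healthy) -> float:
    """Rank-based AUC-ROC: fraction of (diseased, healthy) pairs in which
    the diseased patient has the higher test score; ties count as half."""
    if not scores_diseased or not scores_healthy:
        raise ValueError("both groups must contain at least one score")
    wins = 0.0
    for d in scores_diseased:
        for h in scores_healthy:
            if d > h:
                wins += 1.0
            elif d == h:
                wins += 0.5
    return wins / (len(scores_diseased) * len(scores_healthy))


if __name__ == "__main__":
    # Hypothetical test with a positive likelihood ratio of 6 applied to a
    # patient with a 20% pre-test probability.
    print(post_test_probability(0.20, 6.0))
    # The text's example: a 5 percentage point absolute improvement.
    print(number_needed_to_treat(0.05))
    # Hypothetical test scores for diseased and healthy patients.
    print(auc_roc([0.9, 0.8, 0.7], [0.6, 0.4, 0.8]))
```

Because `auc_roc` compares scores directly rather than thresholding them, it reflects the point made above that moving the cutoff changes sensitivity and specificity but not the AUC-ROC.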

Quality of clinical trials

Evidence-based medicine attempts to objectively evaluate the quality of clinical research by critically assessing techniques reported by researchers in

their publications.

Trial design considerations: High-quality studies have clearly defined eligibility criteria and have minimal missing data.[75][76]

Generalizability considerations: Studies may only be applicable to narrowly defined patient populations and may not be generalizable to other clinical

contexts.[75]

Follow-up: Sufficient time for defined outcomes to occur can influence the prospective study outcomes and the statistical power of a study to detect

differences between a treatment and control arm.[77]

Power: A mathematical calculation can determine whether the number of patients is sufficient to detect a difference between treatment arms. A

negative study may reflect a lack of benefit, or simply too few patients to detect a difference.[77][75][78]
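The power calculation mentioned above can be sketched for the simple case of comparing event rates between two arms. This is a minimal example under stated assumptions, not a method taken from the article: it uses the standard normal-approximation formula for two independent proportions with a two-sided test, and the function name, default values (alpha of 0.05, power of 0.80) and example event rates are illustrative choices.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_control: float, p_treatment: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate number of patients needed in each arm to detect the
    difference between two event rates (two-sided test, normal approximation).
    """
    if p_control == p_treatment:
        raise ValueError("the two event rates must differ")
    # Critical values of the standard normal distribution.
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_beta = NormalDist().inv_cdf(power)
    # Sum of the binomial variances in the two arms.
    variance = p_control * (1.0 - p_control) + p_treatment * (1.0 - p_treatment)
    n = (z_alpha + z_beta) ** 2 * variance / (p_control - p_treatment) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Hypothetical trial: 20% event rate on control, 15% on treatment.
    print(sample_size_per_arm(0.20, 0.15))
    # A larger expected difference needs far fewer patients.
    print(sample_size_per_arm(0.20, 0.10))
```

With these hypothetical rates the formula calls for roughly 900 patients per arm, which illustrates why a small trial that finds no difference may simply have been underpowered.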

Limitations and criticism

There are a number of limitations and criticisms of evidence-based medicine.[79][80][81] Two widely cited categorization schemes for the various published

critiques of EBM include the three-fold division of Straus and McAlister ("limitations universal to the practice of medicine, limitations unique to evidence-

based medicine and misperceptions of evidence-based-medicine")[82] and the five-point categorization of Cohen, Stavri and Hersh (EBM is a poor

philosophic basis for medicine, defines evidence too narrowly, is not evidence-based, is limited in usefulness when applied to individual patients, or

reduces the autonomy of the doctor/patient relationship).[83]

In no particular order, some published objections include:

Research produced by EBM, such as from randomized controlled trials (RCTs), may not be relevant for all treatment situations.[84] Research tends to

focus on specific populations, but individual persons can vary substantially from population norms. Because certain population segments have been

historically under-researched (due to reasons such as race, gender, age, and co-morbid diseases), evidence from RCTs may not be generalizable to

those populations.[85] Thus, EBM applies to groups of people, but this should not preclude clinicians from using their personal experience in deciding

how to treat each patient. One author advises that "the knowledge gained from clinical research does not directly answer the primary clinical question

of what is best for the patient at hand" and suggests that evidence-based medicine should not discount the value of clinical experience.[65] Another

author stated that "the practice of evidence-based medicine means integrating individual clinical expertise with the best available external clinical

evidence from systematic research."[1]

Use of evidence-based guidelines often fits poorly for complex, multimorbid patients. This is because the guidelines are usually based on clinical

studies focused on single diseases. In reality, the recommended treatments in such circumstances may interact unfavorably with each other and

often lead to polypharmacy.[86][87]

The theoretical ideal of EBM (that every narrow clinical question, of which hundreds of thousands can exist, would be answered by meta-analysis and

systematic reviews of multiple RCTs) faces the limitation that research (especially the RCTs themselves) is expensive; thus, in reality, for the

foreseeable future, the demand for EBM will always be much higher than the supply, and the best humanity can do is to triage the application of

scarce resources.

Research can be influenced by biases such as publication bias and conflict of interest in academic publishing. For example, studies with conflicts due

to industry funding are more likely to favor their product.[88][89] It has been argued that contemporary evidence-based medicine is an illusion, since

it has been corrupted by corporate interests, failed regulation, and commercialisation of academia.[90]

Systematic review methodologies are open to bias and abuse in respect of (i) choice of inclusion criteria, (ii) choice of outcome measures,

comparisons and analyses, and (iii) the subjectivity inevitable in risk-of-bias assessments, even when codified procedures and criteria are

observed.[91][92][93] An example of all these problems can be seen in a Cochrane Review,[94] as analyzed by Edmund J. Fordham et al. in their

review.[91]

A lag exists between when the RCT is conducted and when its results are published.[95]

A lag exists between when results are published and when they are properly applied.[96]

Hypocognition (the absence of a simple, consolidated mental framework into which new information can be placed) can hinder the application of

EBM.[97]

Values: while patient values are considered in the original definition of EBM, the importance of values is not commonly emphasized in EBM training, a

potential problem under current study.[98][99][100]

A 2018 study, "Why all randomised controlled trials produce biased results", assessed the 10 most cited RCTs and argued that trials face a wide range of

biases and constraints, from trials only being able to study a small set of questions amenable to randomisation and generally only being able to assess

the average treatment effect of a sample, to limitations in extrapolating results to another context, among many others outlined in the study.[79]

Application of evidence in clinical settings

Despite the emphasis on evidence-based medicine, unsafe or ineffective medical practices continue to be applied, because of patient demand for tests

or treatments, because of failure to access information about the evidence, or because of the rapid pace of change in the scientific evidence.[101] For

example, between 2003 and 2017, the evidence shifted on hundreds of medical practices, including whether hormone replacement therapy was safe,

whether babies should be given certain vitamins, and whether antidepressant drugs are effective in people with Alzheimer's disease.[102] Even when the

evidence unequivocally shows that a treatment is either not safe or not effective, it may take many years for other treatments to be adopted.[101]

There are many factors that contribute to lack of uptake or implementation of evidence-based recommendations.[103] These include lack of awareness at

the individual clinician or patient (micro) level, lack of institutional support at the organisation (meso) level, or lack of support at the policy (macro)

level.[104][105] In other cases, significant change can require a generation of physicians to retire or die and be replaced by physicians who were trained

with more recent evidence.[101]

Physicians may also reject evidence that conflicts with their anecdotal experience or because of cognitive biases – for example, a vivid memory of a rare

but shocking outcome (the availability heuristic), such as a patient dying after refusing treatment.[101] They may overtreat to "do something" or to address

a patient's emotional needs.[101] They may worry about malpractice charges based on a discrepancy between what the patient expects and what the

evidence recommends.[101] They may also overtreat or provide ineffective treatments because the treatment feels biologically plausible.[101]

It is the responsibility of those developing clinical guidelines to include an implementation plan to facilitate uptake.[106] The implementation process

includes an implementation plan, an analysis of the context, identification of barriers and facilitators, and the design of strategies to address them.[106]

Education

Training in evidence-based medicine is offered across the continuum of medical education.[56] Educational competencies have been created for the

education of health care professionals.[107][56][108]

The Berlin questionnaire and the Fresno Test[109][110] are validated instruments for assessing the effectiveness of education in evidence-based

medicine.[111][112] These questionnaires have been used in diverse settings.[113][114]

A Campbell systematic review that included 24 trials examined the effectiveness of e-learning in improving evidence-based health care knowledge and

practice. The review found that e-learning, compared to no learning, improves evidence-based health care knowledge and skills but not attitudes and

behaviour. No difference in outcomes was found when comparing e-learning with face-to-face learning. Combining e-learning and face-to-face learning

(blended learning) has a positive impact on evidence-based knowledge, skills, attitude and behaviour.[115] As a form of e-learning, some medical school

students engage in editing Wikipedia to increase their EBM skills,[116] and some students construct EBM materials to develop their skills in

communicating medical knowledge.[117]

See also

Anecdotal evidence

Clinical decision support system (CDSS)

Clinical epidemiology

Consensus (medical)

Epidemiology

Evidence-based dentistry

Evidence-based design

Evidence-based nursing

Evidence-based policy

Evidence-based practices

Evidence-based management

Evidence-based research (Metascience)

Hierarchy of evidence

Medical algorithm

Paradigm shift – the social process by which scientific evidence is eventually adopted

Personalized medicine

Policy-based evidence making

Precision medicine

Scientific evidence

References

1. Sackett DL, Rosenberg WM, Gray JA, Haynes RB, Richardson WS (January 1996). "Evidence based medicine: what it is and what it isn't". BMJ. 312 (7023): 71–72. doi:10.1136/bmj.312.7023.71. PMC 2349778. PMID 8555924.

2. Evidence-Based Medicine Working Group (November 1992). "Evidence-based medicine. A new approach to teaching the practice of medicine". JAMA. 268 (17): 2420–2425. CiteSeerX 10.1.1.684.3783. doi:10.1001/JAMA.1992.03490170092032. PMID 1404801.

3. "Evidence-Based Medicine Pyramid". Med Scholarly. Retrieved 28 September 2023.

4. Brater DC, Daly WJ (May 2000). "Clinical pharmacology in the Middle Ages: principles that presage the 21st century". Clinical Pharmacology and Therapeutics. 67 (5): 447–450. doi:10.1067/mcp.2000.106465. PMID 10824622. S2CID 45980791.

5. Daly WJ, Brater DC (2000). "Medieval contributions to the search for truth in clinical medicine". Perspectives in Biology and Medicine. 43 (4): 530–540. doi:10.1353/pbm.2000.0037. PMID 11058989. S2CID 30485275.

6. Shoja MM, Rashidi MR, Tubbs RS, Etemadi J, Abbasnejad F, Agutter PS (August 2011). "Legacy of Avicenna and evidence-based medicine". International Journal of Cardiology. 150 (3): 243–246. doi:10.1016/j.ijcard.2010.10.019. PMID 21093081.

7. Akhondzadeh S (January 2014). "Avicenna and evidence based medicine". Avicenna Journal of Medical Biotechnology. 6 (1): 1–2. PMC 3895573. PMID 24523951.

8. John Baptista Van Helmont (1662). Oriatrike, or Physick Refined (English translation of Ortus medicinae). Translated by John Chandler.

9. Lind J (2018). Treatise on the scurvy. Gale Ecco. ISBN 978-1-379-46980-3.

10. "Statistical research on conditions caused by calculi by Doctor Civiale. 1835". International Journal of Epidemiology. 30 (6): 1246–1249. December 2001 [1835]. doi:10.1093/ije/30.6.1246. PMID 11821317. Archived from the original on 29 April 2005.

11. Guyatt GH. Evidence-Based Medicine [editorial]. ACP Journal Club 1991:A-16. (Annals of Internal Medicine; vol. 114, suppl. 2).

12. "Development of evidence-based medicine explored in oral history video, AMA, Jan 27, 2014". 27 January 2014.

13. Sackett DL, Rosenberg WM (November 1995). "The need for evidence-based medicine". Journal of the Royal Society of Medicine. 88 (11): 620–624. doi:10.1177/014107689508801105. PMC 1295384. PMID 8544145.

14. The history of evidence-based medicine. Cologne, Germany: Institute for Quality and Efficiency in Health Care (IQWiG). 2016. NBK390299.

15. Alvan R. Feinstein (1967). Clinical Judgement. Williams & Wilkins.

16. Cochrane A.L. (1972). Effectiveness and Efficiency: Random Reflections on Health Services. Nuffield Provincial Hospitals Trust.

17. Wennberg J (December 1973). "Small area variations in health care delivery". Science. 182 (4117): 1102–1108. Bibcode:1973Sci...182.1102W. doi:10.1126/science.182.4117.1102. PMID 4750608. S2CID 43819003.

18. Eddy DM (1982). "18 Probabilistic Reasoning in Clinical Medicine: Problems and Opportunities". In Kahneman D, Slovic P, Tversky A (eds.). Judgment Under Uncertainty: Heuristics and Biases. Cambridge University Press. pp. 249–267. ISBN 978-0-521-28414-1.

19. Eddy DM (August 1982). "Clinical policies and the quality of clinical practice". The New England Journal of Medicine. 307 (6): 343–347. doi:10.1056/nejm198208053070604. PMID 7088099.

20. Eddy DM (1984). "Variations in physician practice: the role of uncertainty". Health Affairs. 3 (2): 74–89. doi:10.1377/hlthaff.3.2.74. PMID 6469198.

21. Eddy DM, Billings J (1988). "The quality of medical evidence: implications for quality of care". Health Affairs. 7 (1): 19–32. doi:10.1377/hlthaff.7.1.19. PMID 3360391.

22. Feinstein AR (1985). Clinical Epidemiology: The Architecture of Clinical Research. W.B. Saunders Company. ISBN 978-0-7216-1308-6.

23. Sackett D (2006). Haynes BR (ed.). Clinical Epidemiology: How to Do Clinical Practice Research. Lippincott Williams & Wilkins. ISBN 978-0-7817-4524-6.

24. Chassin MR, Kosecoff J, Solomon DH, Brook RH (November 1987). "How coronary angiography is used. Clinical determinants of appropriateness". JAMA. 258 (18): 2543–2547. doi:10.1001/jama.258.18.2543. PMID 3312657.

25. Eddy DM (1992). A Manual for Assessing Health Practices and Designing Practice Policies. American College of Physicians. ISBN 978-0-943126-18-0.

26. Institute of Medicine (1990). Field MJ, Lohr KN (eds.). Clinical Practice Guidelines: Directions for a New Program. Washington, DC: National Academy of Sciences Press. p. 32. doi:10.17226/1626. ISBN 978-0-309-07666-1. PMC 5310095. PMID 25144032.

27. Eddy DM (April 1990). "Clinical decision making: from theory to practice. Practice policies—guidelines for methods". JAMA. 263 (13): 1839–1841. doi:10.1001/jama.263.13.1839. PMID 2313855.

28. Eddy DM (April 1990). "Clinical decision making: from theory to practice. Guidelines for policy statements: the explicit approach". JAMA. 263 (16): 2239–40, 2243. doi:10.1001/jama.1990.03440160101046. PMID 2319689.

29. Eddy DM (1996). Clinical Decision Making: From Theory to Practice. A Collection of Essays. American Medical Association. ISBN 978-0-7637-0143-7.

30. Howick JH (23 February 2011). The Philosophy of Evidence-based Medicine. Wiley. p. 15. ISBN 978-1-4443-4266-6.

31. Katz DL (2001). Clinical Epidemiology & Evidence-Based Medicine: Fundamental Principles of Clinical Reasoning & Research. Sage. ISBN 978-0-7619-1939-1.

32. Grobbee DE, Hoes AW (2009). Clinical Epidemiology: Principles, Methods, and Applications for Clinical Research. Jones & Bartlett Learning. ISBN 978-0-7637-5315-3.

33. Doi SA (2012). Understanding Evidence in Health Care: Using Clinical Epidemiology. South Yarra, VIC, Australia: Palgrave Macmillan. ISBN 978-1-4202-5669-7.

34. Rosenberg W, Donald A (April 1995). "Evidence based medicine: an approach to clinical problem-solving". BMJ. 310 (6987): 1122–1126. doi:10.1136/bmj.310.6987.1122. PMC 2549505. PMID 7742682.

35. Greenhalgh T (2010). How to Read a Paper: The Basics of Evidence-Based Medicine (4th ed.). John Wiley & Sons. p. 1. ISBN 978-1-4443-9036-0.

36. Eddy DM (March 1990). "Practice policies: where do they come from?". JAMA. 263 (9): 1265, 1269, 1272 passim. doi:10.1001/jama.263.9.1265. PMID 2304243.

37. Tonelli MR (December 2001). "The limits of evidence-based medicine". Respiratory Care. 46 (12): 1435–1440. PMID 11728302.

38. Eddy DM (2005). "Evidence-based medicine: a unified approach". Health Affairs. 24 (1): 9–17. doi:10.1377/hlthaff.24.1.9. PMID 15647211.

39. Eddy D (1980). "ACS report on the cancer-related health checkup". CA. 30 (4): 193–240. doi:10.3322/canjclin.30.4.194. PMID 6774802. S2CID 221546339.

40. "About the USPSTF". Archived from the original on 15 August 2014. Retrieved 21 August 2014.

41. Rettig RA, Jacobson PD, Farquhar CM, Aubry WM (2007). False Hope: Bone Marrow Transplantation for Breast Cancer: Bone Marrow Transplantation for Breast Cancer. Oxford University Press. p. 183. ISBN 978-0-19-974824-2.

42. Davino-Ramaya C, Krause LK, Robbins CW, Harris JS, Koster M, Chan W, Tom GI (2012). "Transparency matters: Kaiser Permanente's National Guideline Program methodological processes". The Permanente Journal. 16 (1): 55–62. doi:10.7812/tpp/11-134. PMC 3327114. PMID 22529761.

43. Smith R (October 1991). "Where is the wisdom...?". BMJ. 303 (6806): 798–799. doi:10.1136/bmj.303.6806.798. PMC 1671173. PMID 1932964.

44. "The Cochrane Collaboration". Retrieved 21 August 2014.

45. "Agency for Health Care Policy and Research". Retrieved 21 August 2014.

46. "National Guideline Clearinghouse". Archived from the original on 19 August 2014. Retrieved 21 August 2014.

47. "National Institute for Health and Care Excellence". Retrieved 21 August 2014.

48. Ilic D, Maloney S (February 2014). "Methods of teaching medical trainees evidence-based medicine: a systematic review". Medical Education. 48 (2): 124–135. doi:10.1111/medu.12288. PMID 24528395. S2CID 12765787.

49. Maggio LA, Tannery NH, Chen HC, ten Cate O, O'Brien B (July 2013). "Evidence-based medicine training in undergraduate medical education: a review and critique of the literature published 2006–2011". Academic Medicine. 88 (7): 1022–1028. doi:10.1097/ACM.0b013e3182951959. PMID 23702528.

50. Meats E, Heneghan C, Crilly M, Glasziou P (April 2009). "Evidence-based medicine teaching in UK medical schools". Medical Teacher. 31 (4): 332–337. doi:10.1080/01421590802572791. PMID 19404893. S2CID 21133182.

51. "UpToDate". Retrieved 21 August 2014.

52. "Clinical Evidence". Retrieved 21 August 2014.

53. Gray, J. A. Muir (2009). Evidence-based Health Care & Public Health. Churchill Livingstone. ISBN 978-0-443-10123-6.

54. "AAFP promotes acupuncture". Science-Based Medicine. 9 October 2018. Retrieved 12 January 2019.

55. Cook DJ, Jaeschke R, Guyatt GH (1992). "Critical appraisal of therapeutic interventions in the intensive care unit: human monoclonal antibody treatment in sepsis. Journal Club of the Hamilton Regional Critical Care Group". Journal of Intensive Care Medicine. 7 (6): 275–282. doi:10.1177/088506669200700601. PMID 10147956. S2CID 7194293.

56. Dawes M, Summerskill W, Glasziou P, Cartabellotta A, Martin J, Hopayian K, et al. (January 2005). "Sicily statement on evidence-based practice". BMC Medical Education. 5 (1): 1. doi:10.1186/1472-6920-5-1. PMC 544887. PMID 15634359.

57. Richardson WS, Wilson MC, Nishikawa J, Hayward RS (1995). "The well-built clinical question: a key to evidence-based decisions". ACP Journal Club. 123 (3): A12–A13. doi:10.7326/ACPJC-1995-123-3-A12. PMID 7582737.

58. Rosenberg WM, Deeks J, Lusher A, Snowball R, Dooley G, Sackett D (1998). "Improving searching skills and evidence retrieval". Journal of the Royal College of Physicians of London. 32 (6): 557–563. PMC 9662986. PMID 9881313.

59. Epling J, Smucny J, Patil A, Tudiver F (October 2002). "Teaching evidence-based medicine skills through a residency-developed guideline". Family Medicine. 34 (9): 646–648. PMID 12455246.

60. Ivers N, Jamtvedt G, Flottorp S, Young JM, Odgaard-Jensen J, French SD, et al. (June 2012). "Audit and feedback: effects on professional practice and healthcare outcomes". The Cochrane Database of Systematic Reviews. 6 (6): CD000259. doi:10.1002/14651858.CD000259.pub3. PMID 22696318.

61. Tanjong-Ghogomu E, Tugwell P, Welch V (2009). "Evidence-based medicine and the Cochrane Collaboration". Bulletin of the NYU Hospital for Joint Diseases. 67 (2): 198–205. PMID 19583554. Archived from the original on 1 June 2013.

62. El Dib RP, Atallah AN, Andriolo RB (August 2007). "Mapping the Cochrane evidence for decision making in health care". Journal of Evaluation in Clinical Practice. 13 (4): 689–692. doi:10.1111/j.1365-2753.2007.00886.x. PMID 17683315.

63. Singh A, Hussain S, Najmi AK (November 2017). "Role of Cochrane Reviews in informing US private payers' policies". Journal of Evidence-Based Medicine. 10 (4): 293–331. doi:10.1111/jebm.12278. PMID 29193899. S2CID 22796658.

64. "EBM: Levels of Evidence". Essential Evidence Plus. Retrieved 23 February 2012.

65. Tonelli MR (November 1999). "In defense of expert opinion". Academic Medicine. 74 (11): 1187–1192. doi:10.1097/00001888-199911000-00010. PMID 10587679.

66. U.S. Preventive Services Task Force (August 1989). Guide to clinical preventive services: report of the U.S. Preventive Services Task Force. DIANE Publishing. pp. 24–. ISBN 978-1-56806-297-6.

67. OCEBM Levels of Evidence Working Group (May 2016). "The Oxford Levels of Evidence 2". Archived from the original on 5 December 2013. Retrieved 9 December 2013.

68. Paul C, Gallini A, Archier E, Castela E, Devaux S, Aractingi S, et al. (May 2012). "Evidence-based recommendations on topical treatment and phototherapy of psoriasis: systematic review and expert opinion of a panel of dermatologists". Journal of the European Academy of Dermatology and Venereology. 26 (Suppl 3): 1–10. doi:10.1111/j.1468-3083.2012.04518.x. PMID 22512675. S2CID 36103291.

69. "Welcome to the GRADE working group". www.gradeworkinggroup.org. Archived from the original on 7 February 2006. Retrieved 24 September 2007.

70. Balshem H, Helfand M, Schünemann HJ, Oxman AD, Kunz R, Brozek J, et al. (April 2011). "GRADE guidelines: 3. Rating the quality of evidence". Journal of Clinical Epidemiology. 64 (4): 401–406. doi:10.1016/j.jclinepi.2010.07.015. PMID 21208779.

71. Schünemann H, Brożek J, Oxman A, eds. (2009). GRADE handbook for grading quality of evidence and strength of recommendation (Version 3.2 ed.). "GRADEPro". Cochrane Informatics and Knowledge Management Department. Archived from the original on 5 March 2016. Retrieved 1 March 2016. Schünemann H, Brożek J, Guyatt G, Oxman A, eds. (2013). GRADE handbook for grading quality of evidence and strength of recommendations. Updated October 2013. The GRADE Working Group. Retrieved 3 September 2019.

72. DeVito, Nicholas J.; Goldacre, Ben (April 2019). "Catalogue of bias: publication bias". BMJ Evidence-based Medicine. 24 (2): 53–54. doi:10.1136/bmjebm-2018-111107. ISSN 2515-4478. PMID 30523135.

73. Sherman M, Burak K, Maroun J, Metrakos P, Knox JJ, Myers RP, et al. (October 2011). "Multidisciplinary Canadian consensus recommendations for the management and treatment of hepatocellular carcinoma". Current Oncology. 18 (5): 228–240. doi:10.3747/co.v18i5.952. PMC 3185900. PMID 21980250.

74. "Patient Compliance with statins". Bandolier Review. 2004. Archived 12 May 2015 at archive.today.

75. Bellomo, Rinaldo; Bagshaw, Sean M. (2006). "Evidence-based medicine: classifying the evidence from clinical trials—the need to consider other dimensions". Critical Care. 10 (5): 232. doi:10.1186/cc5045. ISSN 1466-609X. PMC 1751050. PMID 17029653.

76. Greeley, Christopher (December 2016). "Demystifying the Medical Literature". Academic Forensic Pathology. 6 (4): 556–567. doi:10.23907/2016.055. ISSN 1925-3621. PMC 6474497. PMID 31239931.

77. Akobeng, A. K. (August 2005). "Understanding randomised controlled trials". Archives of Disease in Childhood. 90 (8): 840–844. doi:10.1136/adc.2004.058222. ISSN 1468-2044. PMC 1720509. PMID 16040885.

78. "Statistical Power". Bandolier. 2007.

79. Krauss A (June 2018). "Why all randomised controlled trials produce biased results". Annals of Medicine. 50 (4): 312–322. doi:10.1080/07853890.2018.1453233. PMID 29616838. S2CID 4971775.

80. Timmermans S, Mauck A (2005). "The promises and pitfalls of evidence-based medicine". Health Affairs. 24 (1): 18–28. doi:10.1377/hlthaff.24.1.18. PMID 15647212.

81. Jureidini J, McHenry LB (March 2022). "The illusion of evidence based medicine". BMJ. 376: o702. doi:10.1136/bmj.o702. PMID 35296456. S2CID 247475472.

82. Straus SE, McAlister FA (October 2000). "Evidence-based medicine: a commentary on common criticisms" (PDF). CMAJ. 163 (7): 837–841. PMC 80509. PMID 11033714.

83. Cohen AM, Stavri PZ, Hersh WR (February 2004). "A categorization and analysis of the criticisms of Evidence-Based Medicine" (PDF). International Journal of Medical Informatics. 73 (1): 35–43. CiteSeerX 10.1.1.586.3699. doi:10.1016/j.ijmedinf.2003.11.002. PMID 15036077. Archived from the original (PDF) on 3 July 2010.

84. Upshur RE, VanDenKerkhof EG, Goel V (May 2001). "Meaning and measurement: an inclusive model of evidence in health care". Journal of Evaluation in Clinical Practice. 7 (2): 91–96. doi:10.1046/j.1365-2753.2001.00279.x. PMID 11489034.

85. Rogers WA (April 2004). "Evidence based medicine and justice: a framework for looking at the impact of EBM upon vulnerable or disadvantaged groups". Journal of Medical Ethics. 30 (2): 141–145. doi:10.1136/jme.2003.007062. PMC 1733835. PMID 15082806.

86. Greenhalgh, Trisha; Howick, Jeremy; Maskrey, Neal (13 June 2014). "Evidence based medicine: a movement in crisis?". BMJ. 348: g3725. doi:10.1136/bmj.g3725. PMC 4056639. PMID 24927763.

87. Sheridan, Desmond J.; Julian, Desmond G. (July 2016). "Achievements and Limitations of Evidence-Based Medicine". Journal of the American College of Cardiology. 68 (2): 204–213. doi:10.1016/j.jacc.2016.03.600. PMID 27386775.

88. Every-Palmer S, Howick J (December 2014). "How evidence-based medicine is failing due to biased trials and selective publication". Journal of Evaluation in Clinical Practice. 20 (6): 908–914. doi:10.1111/jep.12147. PMID 24819404.

89. Friedman LS, Richter ED (January 2004). "Relationship between conflicts of interest and research results". Journal of General Internal Medicine. 19 (1): 51–56. doi:10.1111/j.1525-1497.2004.30617.x. PMC 1494677. PMID 14748860.

90. Jureidini J, McHenry LB (March 2022). "The illusion of evidence based medicine". BMJ. 376: o702. doi:10.1136/bmj.o702. PMID 35296456.

91. Fordham Edmund J, et al. (October 2021). "The uses and abuses of systematic reviews". ResearchGate.

92. Kicinski M, Springate DA, Kontopantelis E (September 2015). "Publication bias in meta-analyses from the Cochrane Database of Systematic Reviews". Statistics in Medicine. 34 (20): 2781–2793. doi:10.1002/sim.6525. PMID 25988604. S2CID 25560005.

93. Egger M, Smith GD, Sterne JA (November–December 2001). "Uses and abuses of meta-analysis". Clinical Medicine. 1 (6): 478–484. doi:10.7861/clinmedicine.1-6-478. PMC 4953876. PMID 11792089.

94. Popp M, Reis S, Schießer S, Hausinger RI, Stegemann M, Metzendorf MI, et al. (June 2022). "Ivermectin for preventing and treating COVID-19". The Cochrane Database of Systematic Reviews. 2022 (6): CD015017. doi:10.1002/14651858.CD015017.pub3. PMC 9215332. PMID 35726131.

95. Yitschaky O, Yitschaky M, Zadik Y (May 2011). "Case report on trial: Do you, Doctor, swear to tell the truth, the whole truth and nothing but the truth?". Journal of Medical Case Reports. 5 (1): 179. doi:10.1186/1752-1947-5-179. PMC 3113995. PMID 21569508.

96. "Knowledge Transfer in the ED: How to Get Evidence Used". Best Evidence Healthcare Blog. Archived from the original on 8 October 2013. Retrieved 8 October 2013.

97. Mariotto A (January 2010). "Hypocognition and evidence-based medicine". Internal Medicine Journal. 40 (1): 80–82. doi:10.1111/j.1445-5994.2009.02086.x. PMID 20561370. S2CID 24519238.

98. Yamada S, Slingsby BT, Inada MK, Derauf D (1 June 2008). "Evidence-based public health: a critical perspective". Journal of Public Health. 16 (3): 169–172. doi:10.1007/s10389-007-0156-7. ISSN 0943-1853. S2CID 652725.

99. Kelly MP, Heath I, Howick J, Greenhalgh T (October 2015). "The importance of values in evidence-based medicine". BMC Medical Ethics. 16 (1): 69. doi:10.1186/s12910-015-0063-3. PMC 4603687. PMID 26459219.

100. Fulford KW, Peile H, Carroll H (March 2012). Essential Values-Based Practice. Cambridge University Press. ISBN 978-0-521-53025-5.

101. Epstein D (22 February 2017). "When Evidence Says No, But Doctors Say Yes". ProPublica. Retrieved 24 February 2017.

102. Herrera-Perez D, Haslam A, Crain T, Gill J, Livingston C, Kaestner V, et al. (June 2019). "A comprehensive review of randomized clinical trials in three medical journals reveals 396 medical reversals". eLife. 8: e45183. doi:10.7554/eLife.45183. PMC 6559784. PMID 31182188.

103. Frantsve-Hawley J, Rindal DB (January 2019). "Translational Research: Bringing Science to the Provider Through Guideline Implementation". Dental Clinics of North America. 63 (1): 129–144. doi:10.1016/j.cden.2018.08.008. PMID 30447788. S2CID 53950224.

104. Sharp CA, Swaithes L, Ellis B, Dziedzic K, Walsh N (August 2020). "Implementation research: making better use of evidence to improve healthcare". Rheumatology. 59 (8): 1799–1801. doi:10.1093/rheumatology/keaa088. PMID 32252071.

105. Carrier J (December 2017). "The challenges of evidence implementation: it's all about the context". JBI Database of Systematic Reviews and Implementation Reports. 15 (12): 2830–2831. doi:10.11124/JBISRIR-2017-003652. PMID 29219863.

106. Loza E, Carmona L, Woolf A, Fautrel B, Courvoisier DS, Verstappen S, et al. (October 2022). "Implementation of recommendations in rheumatic and musculoskeletal diseases: considerations for development and uptake". Annals of the Rheumatic Diseases. 81 (10): 1344–1347. doi:10.1136/ard-2022-223016. PMID 35961760. S2CID 251540204.

107. Albarqouni L, Hoffmann T, Straus S, Olsen NR, Young T, Ilic D, et al. (June 2018). "Core Competencies in Evidence-Based Practice for Health Professionals: Consensus Statement Based on a Systematic Review and Delphi Survey". JAMA Network Open. 1 (2): e180281. doi:10.1001/jamanetworkopen.2018.0281. PMID 30646073. S2CID 58637188.

108. Shaughnessy AF, Torro JR, Frame KA, Bakshi M (May 2016). "Evidence-based medicine teaching requirements in the USA: taxonomy and themes". Journal of Evidence-Based Medicine. 9 (2): 53–58. doi:10.1111/jebm.12186. PMID 27310370. S2CID 2898612.

109. Fritsche L, Greenhalgh T, Falck-Ytter Y, Neumayer HH, Kunz R (December 2002). "Do short courses in evidence based medicine improve knowledge and skills? Validation of Berlin questionnaire and before and after study of courses in evidence based medicine". BMJ. 325 (7376): 1338–1341. doi:10.1136/bmj.325.7376.1338. PMC 137813. PMID 12468485.

110. Ramos KD, Schafer S, Tracz SM (February 2003). "Validation of the Fresno test of competence in evidence based medicine". BMJ. 326 (7384): 319–321. doi:10.1136/bmj.326.7384.319. PMC 143529. PMID 12574047.

111. Shaneyfelt T, Baum KD, Bell D, Feldstein D, Houston TK, Kaatz S, et al. (September 2006). "Instruments for evaluating education in evidence-based practice: a systematic review". JAMA. 296 (9): 1116–1127. doi:10.1001/jama.296.9.1116. PMID 16954491.

112. Straus SE, Green ML, Bell DS, Badgett R, Davis D, Gerrity M, et al. (October 2004). "Evaluating the teaching of evidence based medicine: conceptual framework". BMJ. 329 (7473): 1029–1032. doi:10.1136/bmj.329.7473.1029. PMC 524561. PMID 15514352.

113. Kunz R, Wegscheider K, Fritsche L, Schünemann HJ, Moyer V, Miller D, et al. (2010). "Determinants of knowledge gain in evidence-based medicine short courses: an international assessment". Open Medicine. 4 (1): e3–e10. doi:10.2174/1874104501004010003 (inactive 31 January 2024). PMC 3116678. PMID 21686291.

114. West CP, Jaeger TM, McDonald FS (June 2011). "Extended evaluation of a longitudinal medical school evidence-based medicine curriculum". Journal of General Internal Medicine. 26 (6): 611–615. doi:10.1007/s11606-011-1642-8. PMC 3101983. PMID 21286836.

115. Rohwer A, Motaze NV, Rehfuess E, Young T (2017). "E-learning of evidence-based health care (EBHC) to increase EBHC competencies in healthcare professionals: a systematic review". Campbell Systematic Reviews. 4: 1–147. doi:10.4073/csr.2017.4.

116. Azzam A, Bresler D, Leon A, Maggio L, Whitaker E, Heilman J, et al. (February 2017). "Why Medical Schools Should Embrace Wikipedia: Final-Year Medical Student Contributions to Wikipedia Articles for Academic Credit at One School". Academic Medicine. 92 (2): 194–200. doi:10.1097/ACM.0000000000001381. PMC 5265689. PMID 27627633.

117. Maggio LA, Willinsky JM, Costello JA, Skinner NA, Martin PC, Dawson JE (December 2020). "Integrating Wikipedia editing into health professions education: a curricular inventory and review of the literature". Perspectives on Medical Education. 9 (6): 333–342. doi:10.1007/s40037-020-00620-1. PMC 7718341. PMID 33030643.

Bibliography

Doi SA (2012). Understanding evidence in health care: Using clinical epidemiology. South Yarra, VIC, Australia: Palgrave Macmillan. ISBN 978-1-4202-5669-7.

Grobbee DE, Hoes AW (2009). Clinical Epidemiology: Principles, Methods, and Applications for Clinical Research. Jones & Bartlett Learning. ISBN 978-0-7637-5315-3.

Howick JH (2011). The Philosophy of Evidence-based Medicine. Wiley. ISBN 978-1-4051-9667-3.

Katz DL (2001). Clinical Epidemiology & Evidence-Based Medicine: Fundamental Principles of Clinical Reasoning & Research. Sage. ISBN 978-0-7619-1939-1.

Stegenga J (2018). Care and Cure: An Introduction To Philosophy of Medicine. University of Chicago Press. ISBN 978-0-226-59517-7.

External links

Evidence-Based Medicine – An Oral History, JAMA and the BMJ, 2014.

Centre for Evidence-based Medicine at the University of Oxford.

Evidence-Based Medicine at Curlie


This page was last edited on 12 February 2024, at 20:11 (UTC).

Text is available under the Creative Commons Attribution-ShareAlike License 4.0; additional terms may apply. By using this site, you agree to the Terms of Use and Privacy Policy. Wikipedia® is a registered trademark of the Wikimedia Foundation, Inc., a non-profit

organization.


- Core Module 3: Information Technology, Clinical Governance and ResearchDocument9 pagesCore Module 3: Information Technology, Clinical Governance and ResearchKaushika KalaiNo ratings yet

- Medsurg Lecture Evidence-Based Practice: Evidence Can Be A Policy or A Standard Used by A Certain PopulationDocument5 pagesMedsurg Lecture Evidence-Based Practice: Evidence Can Be A Policy or A Standard Used by A Certain PopulationDada Tin GoTanNo ratings yet

- PT 304 Topic 1 - Guide To PT Practice - 2of4Document4 pagesPT 304 Topic 1 - Guide To PT Practice - 2of4Lynx Heera Legaspi QuiamcoNo ratings yet

- Evidence Based Dietetics PracticeDocument11 pagesEvidence Based Dietetics PracticeLinaTrisnawati100% (1)

- Clinical Reasoning in Neurological Physiotherapy: A Framework For The Management of Patients With Movement DisordersDocument12 pagesClinical Reasoning in Neurological Physiotherapy: A Framework For The Management of Patients With Movement DisordersPredrag NikolicNo ratings yet

- Nursing Theories & EBP SV 2023Document23 pagesNursing Theories & EBP SV 2023Just MeNo ratings yet

- التلخيص العام بحث علمي PDFDocument12 pagesالتلخيص العام بحث علمي PDFBenben LookitandI'mNo ratings yet

- S2 T1 FMCH Introduction To Research Methodology PDFDocument3 pagesS2 T1 FMCH Introduction To Research Methodology PDFKwinee Haira BautistaNo ratings yet

- SR NO Time Specific Objective Content Teaching Learning Activity AV Aids EvaluationDocument22 pagesSR NO Time Specific Objective Content Teaching Learning Activity AV Aids EvaluationAkshata BansodeNo ratings yet

- The Role of The Nurse in Research Some Themes in Nursing Ethics Code of EthicsDocument13 pagesThe Role of The Nurse in Research Some Themes in Nursing Ethics Code of EthicsAries BautistaNo ratings yet

- Nursing and Euthanasia: A Narrative Review of The Nursing Ethics LiteratureDocument16 pagesNursing and Euthanasia: A Narrative Review of The Nursing Ethics LiteratureFirda ApriyantiNo ratings yet

- Module 5 Evidence Based Nursing PracticeDocument15 pagesModule 5 Evidence Based Nursing PracticeALAS MichelleNo ratings yet

- Research 1 ModuleDocument23 pagesResearch 1 ModuleNina Anne ParacadNo ratings yet

- Purple and Green Modern ICT Computer Parts Classroom QuizDocument17 pagesPurple and Green Modern ICT Computer Parts Classroom QuizKRIZIELL KATE ALIGANNo ratings yet

- Ce610 enDocument24 pagesCe610 enChawan SanaanNo ratings yet

- Strategies For The Implementation of Palliative Care Education and Organizational Interventions in Long-Term Care FacilitiesDocument13 pagesStrategies For The Implementation of Palliative Care Education and Organizational Interventions in Long-Term Care FacilitiescharmyshkuNo ratings yet

- Evidence Based Practice in Nursing ResearchDocument3 pagesEvidence Based Practice in Nursing ResearchLorenz Jude CańeteNo ratings yet

- 3 NCM119A Evidence Based Practice Sept. 3 2021Document5 pages3 NCM119A Evidence Based Practice Sept. 3 2021Ghianx Carlox PioquintoxNo ratings yet

- Evidence Based PracticeDocument28 pagesEvidence Based PracticeSathish Rajamani100% (7)

- Introduction To Nursing Research and Importance of The Course in NursingDocument2 pagesIntroduction To Nursing Research and Importance of The Course in NursingAdiel CalsaNo ratings yet

- 2 Evidence-Based NursingDocument14 pages2 Evidence-Based NursingGeoffrey KimaniNo ratings yet

- EVIDENCE BASED PRACTICE Tools and TechniDocument16 pagesEVIDENCE BASED PRACTICE Tools and Technihassan mahmoodNo ratings yet

- Matrix Worksheet TemplateDocument4 pagesMatrix Worksheet TemplateDanny ClintonNo ratings yet

- EBM Self Instructional Manual 2008Document127 pagesEBM Self Instructional Manual 2008Adrian Oscar Z. Bacena100% (2)

- Clinical FocusDocument6 pagesClinical FocusSheryll Almira HilarioNo ratings yet

- Historical Perspectives On Evidence-Based Nursing: Suzanne C. Beyea, RN PHD Faan and Mary Jo Slattery, RN MsDocument4 pagesHistorical Perspectives On Evidence-Based Nursing: Suzanne C. Beyea, RN PHD Faan and Mary Jo Slattery, RN MsrinkuNo ratings yet

- Ms. Chithra Chandran A. First Year MSC Nursing Govt. College of Nursing KottayamDocument54 pagesMs. Chithra Chandran A. First Year MSC Nursing Govt. College of Nursing Kottayamchithrajayakrishnan100% (1)

- 6-Evidence Based For Hinari UsersDocument58 pages6-Evidence Based For Hinari UsersMohmmed Abu MahadyNo ratings yet

- Introduction To Nursing ResearchDocument44 pagesIntroduction To Nursing ResearchpremaNo ratings yet

- Advanced Practice and Leadership in Radiology NursingFrom EverandAdvanced Practice and Leadership in Radiology NursingKathleen A. GrossNo ratings yet

- 16th Amendment Judgement PDF - Google SearchDocument1 page16th Amendment Judgement PDF - Google SearchBadhon Chandra SarkarNo ratings yet

- Unnatural Offences - Decrypting The Phrase, Against The Order of Nature'Document1 pageUnnatural Offences - Decrypting The Phrase, Against The Order of Nature'Badhon Chandra SarkarNo ratings yet

- Cricket Scorecard - India Vs England, 2nd Test, England Tour of India, 2024Document1 pageCricket Scorecard - India Vs England, 2nd Test, England Tour of India, 2024Badhon Chandra SarkarNo ratings yet

- Age-Old Law Restricts Women's Right To Abortion in BangladeshDocument1 pageAge-Old Law Restricts Women's Right To Abortion in BangladeshBadhon Chandra SarkarNo ratings yet

- Abortion Is (Again) A Criminal-Justice Issue - The Texas ObserverDocument1 pageAbortion Is (Again) A Criminal-Justice Issue - The Texas ObserverBadhon Chandra SarkarNo ratings yet

- Trial Steps (Criminal Procedure) - Law Help BDDocument1 pageTrial Steps (Criminal Procedure) - Law Help BDBadhon Chandra SarkarNo ratings yet

- When Someone Dies in JapanDocument1 pageWhen Someone Dies in JapanBadhon Chandra SarkarNo ratings yet

- What Is Necrophilia - Mental Illness Where Individual Find Pleasure in Having Physical Relation With Dead BodiesDocument1 pageWhat Is Necrophilia - Mental Illness Where Individual Find Pleasure in Having Physical Relation With Dead BodiesBadhon Chandra SarkarNo ratings yet

- Hanskhali Rape Case - WikipediaDocument1 pageHanskhali Rape Case - WikipediaBadhon Chandra SarkarNo ratings yet

- Why Mumbai Indians Took Away Captaincy From Rohit SharmaDocument1 pageWhy Mumbai Indians Took Away Captaincy From Rohit SharmaBadhon Chandra SarkarNo ratings yet

- Category - Murder in India - WikipediaDocument1 pageCategory - Murder in India - WikipediaBadhon Chandra SarkarNo ratings yet

- What Is Putrefaction - Process of Putrefaction and Factors Affecting ItDocument1 pageWhat Is Putrefaction - Process of Putrefaction and Factors Affecting ItBadhon Chandra SarkarNo ratings yet

- What Is Harassment and What Is Not - Google SearchDocument1 pageWhat Is Harassment and What Is Not - Google SearchBadhon Chandra SarkarNo ratings yet

- Women's Health - Macquarie-St-ClinicDocument1 pageWomen's Health - Macquarie-St-ClinicBadhon Chandra SarkarNo ratings yet

- 4 Types of Hypoxia That Trauma Nurses Should Understand - Trauma System NewsDocument1 page4 Types of Hypoxia That Trauma Nurses Should Understand - Trauma System NewsBadhon Chandra SarkarNo ratings yet

- CMCH Morgue Guard Arrested On Charge of NecrophiliaDocument1 pageCMCH Morgue Guard Arrested On Charge of NecrophiliaBadhon Chandra SarkarNo ratings yet

- Animal - WikipediaDocument1 pageAnimal - WikipediaBadhon Chandra SarkarNo ratings yet

- All You Need To Know About The Priyadarshini Mattoo Case - IpleadersDocument1 pageAll You Need To Know About The Priyadarshini Mattoo Case - IpleadersBadhon Chandra SarkarNo ratings yet

- Crime in India - WikipediaDocument1 pageCrime in India - WikipediaBadhon Chandra SarkarNo ratings yet

- 2009 Shopian Rape and Murder Case - WikipediaDocument1 page2009 Shopian Rape and Murder Case - WikipediaBadhon Chandra SarkarNo ratings yet

- Act 5 April 1998Document21 pagesAct 5 April 1998Badhon Chandra SarkarNo ratings yet

- Hazard - WikipediaDocument1 pageHazard - WikipediaBadhon Chandra SarkarNo ratings yet

- All About The Hathras Case - IpleadersDocument1 pageAll About The Hathras Case - IpleadersBadhon Chandra SarkarNo ratings yet

- Lactic Acid - WikipediaDocument1 pageLactic Acid - WikipediaBadhon Chandra SarkarNo ratings yet

- Multicellular Organism - WikipediaDocument1 pageMulticellular Organism - WikipediaBadhon Chandra SarkarNo ratings yet

- Carboxylic Acid - WikipediaDocument1 pageCarboxylic Acid - WikipediaBadhon Chandra SarkarNo ratings yet

- Means of Transport - WikipediaDocument1 pageMeans of Transport - WikipediaBadhon Chandra SarkarNo ratings yet

- Derailment - WikipediaDocument1 pageDerailment - WikipediaBadhon Chandra SarkarNo ratings yet

- Trier of Fact - WikipediaDocument1 pageTrier of Fact - WikipediaBadhon Chandra SarkarNo ratings yet

- Horse-Drawn Vehicle - WikipediaDocument1 pageHorse-Drawn Vehicle - WikipediaBadhon Chandra SarkarNo ratings yet

- PSY 408 - Unit Exam #3 With Answers (7-13-2023)Document3 pagesPSY 408 - Unit Exam #3 With Answers (7-13-2023)Ashley TaylorNo ratings yet

- MS Renal NCLEX QuestionsDocument6 pagesMS Renal NCLEX Questionsmrmr92No ratings yet

- TOB - Al Mana CDocument6 pagesTOB - Al Mana CJasim SulaimanNo ratings yet

- Initial Management of The Critically Ill Adult With An Unknown Overdose - UpToDateDocument17 pagesInitial Management of The Critically Ill Adult With An Unknown Overdose - UpToDateDANIELA BOHÓRQUEZ ORTEGANo ratings yet

- Sato 2012Document6 pagesSato 2012triNo ratings yet

- Mini-Review: Neonatal PolycythaemiaDocument3 pagesMini-Review: Neonatal PolycythaemiaElizabeth HendersonNo ratings yet

- Lapjag 13 OktDocument24 pagesLapjag 13 OktMartha SimonaNo ratings yet

- What Causes Abdominal Pain?Document5 pagesWhat Causes Abdominal Pain?Charticha PatrisindryNo ratings yet

- STI Guidelines 1Document30 pagesSTI Guidelines 1Faye TuscanoNo ratings yet