Evaluacion Triptico Ingles (English Tri-fold Brochure Assessment)

Uploaded by

ever rodriguez

HOW TO IMPROVE CYBERSECURITY FOR ARTIFICIAL INTELLIGENCE

INSTITUTO UNIVERSITARIO DE TECNOLOGÍA DE ADMINISTRACIÓN INDUSTRIAL (IUTA)

Program: Informatics

Section: 282A3

Subject: Basic English

Instructor: Henry Abraham

Student: Ever Rodríguez, C.I. V-27.903.202

Caracas, April 2021

SECURING AI DECISION-MAKING SYSTEMS

One of the major security risks to AI systems is the potential for adversaries to compromise the integrity of their decision-making processes so that they do not make choices in the manner that their designers would expect or desire. One way to achieve this would be for adversaries to directly take control of an AI system so that they can decide what outputs the system generates and what decisions it makes. Alternatively, an attacker might try to influence those decisions more subtly and indirectly by delivering malicious inputs or training data to an AI model.

POLICY PROPOSALS FOR AI SECURITY

In the past four years there has been a rapid acceleration of government interest and policy proposals regarding artificial intelligence and security, with 27 governments publishing official AI plans or initiatives by 2019.

EDITOR'S NOTE

This report from The Brookings Institution’s Artificial Intelligence and Emerging Technology (AIET) Initiative is part of “AI Governance,” a series that identifies key governance and norm issues related to AI and proposes policy remedies to address the complex challenges associated with emerging technologies.

HOW TO IMPROVE CYBERSECURITY FOR ARTIFICIAL INTELLIGENCE

In January 2017, a group of artificial intelligence researchers gathered at the Asilomar Conference Grounds in California and developed 23 principles for artificial intelligence, which were later dubbed the Asilomar AI Principles. The sixth principle states that “AI systems should be safe and secure throughout their operational lifetime, and verifiably so where applicable and feasible.” Thousands of people in both academia and the private sector have since signed on to these principles, but more than three years after the Asilomar conference, many questions remain about what it means to make AI systems safe and secure.

Much of the discussion to date has centered on how beneficial machine learning algorithms may be for identifying and defending against computer-based vulnerabilities and threats by automating the detection of and response to attempted attacks. Conversely, concerns have been raised that using AI for offensive purposes may make cyberattacks increasingly difficult to block or defend against by enabling rapid adaptation of malware to adjust to restrictions imposed by countermeasures and security controls.

A related but distinct set of issues deals with the question of how AI systems can themselves be secured, not just how they can be used to augment the security of our data and computer networks. The push to implement AI security solutions to respond to rapidly evolving threats makes the need to secure AI itself even more pressing; if we rely on machine learning algorithms to detect and respond to cyberattacks, it is all the more important that those algorithms be protected from interference, compromise, or misuse. Increasing dependence on AI for critical functions and services will not only create greater incentives for attackers to target those algorithms, but also the potential for each successful attack to have more severe consequences.

You might also like

- Morphology of Flowering Plants Mind MapDocument4 pagesMorphology of Flowering Plants Mind MapAstha Agrawal89% (9)

- Implementing Generative Ai With Speed and SafetyDocument10 pagesImplementing Generative Ai With Speed and SafetyromainNo ratings yet

- Fluent Japanese From Anime and Manga How To Learn Japanese Vocabulary, Grammar, and Kanji The Easy and Fun Way (Eric Bodnar) (Z-Library)Document88 pagesFluent Japanese From Anime and Manga How To Learn Japanese Vocabulary, Grammar, and Kanji The Easy and Fun Way (Eric Bodnar) (Z-Library)ificianaNo ratings yet

- SANS - 5 Critical ControlsDocument19 pagesSANS - 5 Critical ControlsAni MNo ratings yet

- ICE3001A RoutestoMembership WebDocument20 pagesICE3001A RoutestoMembership Websam_antony2005No ratings yet

- Artificial Intelligence and Cybersecurity A Comprehensive Review of Recent DevelopmentsDocument4 pagesArtificial Intelligence and Cybersecurity A Comprehensive Review of Recent DevelopmentsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- DLL in General Mathematics (Week A)Document4 pagesDLL in General Mathematics (Week A)Mardy Nelle Sanchez Villacura-Galve100% (2)

- Humans To Machines: The Shift in Security Strategy With Machine LearningDocument8 pagesHumans To Machines: The Shift in Security Strategy With Machine LearningAlessandro BocchinoNo ratings yet

- Machine Learning and CybersecurityDocument58 pagesMachine Learning and CybersecuritydouglasgoianiaNo ratings yet

- AI in Cybersecurity - Report PDFDocument28 pagesAI in Cybersecurity - Report PDFTaniya Faisal100% (1)

- Threat Defense Cyber Deception ApproachDocument25 pagesThreat Defense Cyber Deception ApproachAndrija KozinaNo ratings yet

- ESOL Literacies Access 2 Alphabet Numbers PhonicsDocument144 pagesESOL Literacies Access 2 Alphabet Numbers Phonicsclbbb100% (3)

- SYE AI and Cyber Security WP 190925Document6 pagesSYE AI and Cyber Security WP 190925JhonathanNo ratings yet

- Machine Learning-Based Adaptive Intelligence: The Future of CybersecurityDocument11 pagesMachine Learning-Based Adaptive Intelligence: The Future of CybersecurityJorge VargasNo ratings yet

- My Demo Reflection in MathDocument2 pagesMy Demo Reflection in MathBilly Daliyong KiosiNo ratings yet

- The Influence of AI's Brain On CybersecurityDocument8 pagesThe Influence of AI's Brain On CybersecurityInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Economics of Artificial Intelligence in CybersecurityDocument5 pagesEconomics of Artificial Intelligence in CybersecurityFakrudhinNo ratings yet

- Report State of Ai Cyber Security 2024 1714045778Document32 pagesReport State of Ai Cyber Security 2024 1714045778dresses.wearier.0nNo ratings yet

- F-23-149.1 PresentationDocument5 pagesF-23-149.1 PresentationmonimonikamanjunathNo ratings yet

- Enhancing Cybersecurity: The Power of Artificial Intelligence in Threat Detection and PreventionDocument6 pagesEnhancing Cybersecurity: The Power of Artificial Intelligence in Threat Detection and PreventionIJAERS JOURNALNo ratings yet

- The AI Security Pyramid of PainDocument10 pagesThe AI Security Pyramid of Painvofariw199No ratings yet

- Chapter Two For MieteDocument35 pagesChapter Two For MieteoluwaseunNo ratings yet

- Cyber Security Management of Indutrial Automation and Control Systems IACSDocument15 pagesCyber Security Management of Indutrial Automation and Control Systems IACSHussainNo ratings yet

- Incorporating AI-Driven Strategies in DevSecOps For Robust Cloud SecurityDocument7 pagesIncorporating AI-Driven Strategies in DevSecOps For Robust Cloud SecurityInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Arfath ReportDocument12 pagesArfath ReportMohammed ArfathNo ratings yet

- A S A I C: Urvey of Rtificial Ntelligence in YbersecurityDocument7 pagesA S A I C: Urvey of Rtificial Ntelligence in YbersecurityVinayNo ratings yet

- Artificial Intelligence in Information SecurityDocument13 pagesArtificial Intelligence in Information Securitylailamohd33No ratings yet

- AI and Cybersecurity, Enhancing Digital Defense in The Age of Advanced Threats 2Document4 pagesAI and Cybersecurity, Enhancing Digital Defense in The Age of Advanced Threats 2m.rqureshi11223No ratings yet

- Cyber Snapshot Issue 4Document39 pagesCyber Snapshot Issue 4Bekim KrasniqiNo ratings yet

- Engineering Trustworthy Systems (2109)Document7 pagesEngineering Trustworthy Systems (2109)sahibNo ratings yet

- Artificial Intelligence Trust Risk and SDocument14 pagesArtificial Intelligence Trust Risk and SnayeyrnaffeyNo ratings yet

- Enhancing Anomaly Detection and Intrusion Detection Systems in Cybersecurity Through .Machine Learning TechniquesDocument8 pagesEnhancing Anomaly Detection and Intrusion Detection Systems in Cybersecurity Through .Machine Learning TechniquesInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- F - DR - Deep Instinct - How-Machine-Learning-AI - Deep Learning-Improve-Cybersecurity - Sept2022Document19 pagesF - DR - Deep Instinct - How-Machine-Learning-AI - Deep Learning-Improve-Cybersecurity - Sept2022ashwinNo ratings yet

- Breach & Attack Simulation 101: Expert Tips InsideDocument20 pagesBreach & Attack Simulation 101: Expert Tips InsideRikrdo BarcoNo ratings yet

- Prof - Lazicpaper BISEC2019Ver.2 PDFDocument9 pagesProf - Lazicpaper BISEC2019Ver.2 PDFAmitNo ratings yet

- Developing Intelligent Cyber Threat Detection Systems Through Advanced Data AnalyticsDocument10 pagesDeveloping Intelligent Cyber Threat Detection Systems Through Advanced Data AnalyticsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- 154-Article Text-229-3-10-20230813Document24 pages154-Article Text-229-3-10-20230813lailamohd33No ratings yet

- Easychair Preprint: Suyash Srivastava and Bejoy BennyDocument12 pagesEasychair Preprint: Suyash Srivastava and Bejoy BennyGarima SharmaNo ratings yet

- AI ImpactDocument17 pagesAI ImpactIsrael BeyeneNo ratings yet

- 2Document42 pages2crenitepkNo ratings yet

- X Artificial Intelligence For Cybersecurity - Literature Review and Future Research DirectionsDocument29 pagesX Artificial Intelligence For Cybersecurity - Literature Review and Future Research Directionsmaxsp759No ratings yet

- SeminarCyber Automation and AutonomyDocument24 pagesSeminarCyber Automation and AutonomyMarwan CompNo ratings yet

- An Outlook On The Status of Security Performance in The Light of IntelligenceDocument7 pagesAn Outlook On The Status of Security Performance in The Light of IntelligenceIJRASETPublicationsNo ratings yet

- Artificial Intelligence For Cybersecurity, A Systematic Mapping of LiteratureDocument6 pagesArtificial Intelligence For Cybersecurity, A Systematic Mapping of Literatureefraim.purwanto01No ratings yet

- 1 s2.0 S0140366419306693 MainDocument8 pages1 s2.0 S0140366419306693 MainGrazzy MayambalaNo ratings yet

- Cyber Autonomy: Automating The HackerDocument15 pagesCyber Autonomy: Automating The HackerMarwan CompNo ratings yet

- The Confluence of Cybersecurity and AI Shaping The Future of Digital DefenseDocument3 pagesThe Confluence of Cybersecurity and AI Shaping The Future of Digital DefenserajeNo ratings yet

- Ai in CyberDocument4 pagesAi in CyberRashmi SBNo ratings yet

- Ai Concepts AND Applications: Presented BYDocument10 pagesAi Concepts AND Applications: Presented BYkejalNo ratings yet

- Study of Artificial Intelligence in Cyber Security and The Emerging Threat of Ai-Driven Cyber Attacks and ChallengeDocument14 pagesStudy of Artificial Intelligence in Cyber Security and The Emerging Threat of Ai-Driven Cyber Attacks and Challengebalestier235No ratings yet

- Nomios - The Rise of AI in CybersecurityDocument13 pagesNomios - The Rise of AI in CybersecurityNico MorarNo ratings yet

- Securing Artificial Intelligence Systems Tda Cyber SnapshotDocument10 pagesSecuring Artificial Intelligence Systems Tda Cyber SnapshottonykwannNo ratings yet

- Ai For Beyond 5g NetworksDocument8 pagesAi For Beyond 5g NetworksINDER SINGHNo ratings yet

- The Ethics and Norms of Artificial Intelligence (AI)Document3 pagesThe Ethics and Norms of Artificial Intelligence (AI)International Journal of Innovative Science and Research TechnologyNo ratings yet

- Artificial Intelligence For Cybersecurity: A Systematic Mapping of LiteratureDocument15 pagesArtificial Intelligence For Cybersecurity: A Systematic Mapping of LiteratureRajNo ratings yet

- Paper PDocument8 pagesPaper Plocalhost54322No ratings yet

- The Horizon of Cyber ThreatsDocument2 pagesThe Horizon of Cyber Threatsseek4secNo ratings yet

- A Novel Framework For Smart Cyber Defence A Deep-Dive Into Deep Learning Attacks and DefencesDocument22 pagesA Novel Framework For Smart Cyber Defence A Deep-Dive Into Deep Learning Attacks and DefencesnithyadheviNo ratings yet

- ROBOTICS ResearchDocument6 pagesROBOTICS Researchtenc.klabandiaNo ratings yet

- SABILLON Et Al (2017) A Comprehensive Cybersecurity Audit Model To Improve Cybersecurity AssuranceDocument7 pagesSABILLON Et Al (2017) A Comprehensive Cybersecurity Audit Model To Improve Cybersecurity AssuranceRobertoNo ratings yet

- I Jcs It 20150604107Document6 pagesI Jcs It 20150604107Abel EkwonyeasoNo ratings yet

- Industrial Cybersecurity Improving Security Through Access Control Policy ModelsDocument12 pagesIndustrial Cybersecurity Improving Security Through Access Control Policy Modelsrakesh kumarNo ratings yet

- McKinsey "Getting To Know-And Manage-Your Biggest AI Risks"Document6 pagesMcKinsey "Getting To Know-And Manage-Your Biggest AI Risks"Jaime Luis MoncadaNo ratings yet

- Hardware and Embedded Security in The Context of Internet of ThingsDocument5 pagesHardware and Embedded Security in The Context of Internet of ThingsTeddy IswahyudiNo ratings yet

- Ai November Report V2Document9 pagesAi November Report V2Harman iNo ratings yet

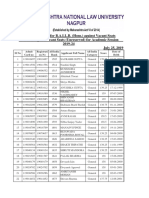

- Second Merit List For B.A.LL.B. (Hons.) Against Vacant SeatsDocument2 pagesSecond Merit List For B.A.LL.B. (Hons.) Against Vacant Seatssourabh rajpurohitNo ratings yet

- Recommended BooksDocument1 pageRecommended BooksJocel SangalangNo ratings yet

- COMMUNICATIONDocument4 pagesCOMMUNICATIONChenee KonghopNo ratings yet

- Resume 3Document2 pagesResume 3api-375026755No ratings yet

- Rizal Family Tree:: Life A ND Works of RizalDocument5 pagesRizal Family Tree:: Life A ND Works of RizalMary Ann GuillermoNo ratings yet

- Internship Program at IDL IMFDocument2 pagesInternship Program at IDL IMFsakshi srivastavaNo ratings yet

- Tingkat Kesadaran para Mahasiswi Remaja Dari Berbagai Perguruan Tinggi Di Indonesia Terhadap Gejala Keputihan Normal Dan AbnormalDocument13 pagesTingkat Kesadaran para Mahasiswi Remaja Dari Berbagai Perguruan Tinggi Di Indonesia Terhadap Gejala Keputihan Normal Dan AbnormalYana DoankNo ratings yet

- Weekly Accomplishment Report On The Monitoring of StudentsDocument6 pagesWeekly Accomplishment Report On The Monitoring of StudentsJamielor BalmedianoNo ratings yet

- Assignment On Essay Introduction To Sociology: Name: Faisal Farooqui ID: 11113 Program: Bba-HDocument13 pagesAssignment On Essay Introduction To Sociology: Name: Faisal Farooqui ID: 11113 Program: Bba-HFaisal FarooquiNo ratings yet

- Psychiatric Nursing CE Week 1 NotesDocument2 pagesPsychiatric Nursing CE Week 1 NotesMeryville JacildoNo ratings yet

- Optional Literature Course-Vii GradeDocument6 pagesOptional Literature Course-Vii GradeIulia AlexandraNo ratings yet

- Geology - Lab 1Document7 pagesGeology - Lab 1Rebwar FakherNo ratings yet

- Questions LTDocument10 pagesQuestions LTeowenshieldmaidenNo ratings yet

- Qual Table 1 APADocument4 pagesQual Table 1 APAAlexis BelloNo ratings yet

- Interference and Diffraction LABDocument5 pagesInterference and Diffraction LABAnvar Pk100% (1)

- DR Bharat Singh GehlotDocument4 pagesDR Bharat Singh GehlotR Pavan SenNo ratings yet

- Q1 G 9 Selection Test (2) AnswersDocument6 pagesQ1 G 9 Selection Test (2) AnswersMARO BGNo ratings yet

- Melc Based Eapp DLP Q1 Week 3Document3 pagesMelc Based Eapp DLP Q1 Week 3kimbeerlyn doromasNo ratings yet

- University of Lagos Slides LG DesignLab in The MakingDocument32 pagesUniversity of Lagos Slides LG DesignLab in The MakingOmotayo FakinledeNo ratings yet

- Cef GuideDocument12 pagesCef GuideAndres Eliseo Lima100% (1)

- Mera Baba BookDocument15 pagesMera Baba BookSuryasai RednamNo ratings yet

- Ancient Mesopotamian Stories by Assia Kaab A Historian and Classical ArchitectDocument1 pageAncient Mesopotamian Stories by Assia Kaab A Historian and Classical ArchitectAssia KaabNo ratings yet

- Tos Mapeh 10 1STQDocument3 pagesTos Mapeh 10 1STQDianne Mae DagaNo ratings yet

- Jordan F Boyd ResumeDocument1 pageJordan F Boyd Resumeapi-240673325No ratings yet