Silhouette-Based Emotion Recognition from Modern Dance Performance

Hanhoon Park, Jong-Il Park, Un-Mi Kim, and Woontack Woo

Abstract: We present a vision-based method that directly recognizes human emotion from monocular image sequences of modern dance. The method exploits only the visual information within the image sequences and does not require cumbersome attachments such as sensors, which makes it easy to use and human-friendly. The procedure is as follows. First, we compute binary silhouette images from the input dance images. Next, based on Laban's movement theory, we extract quantitative features that represent the quality of the dance motion from the binary silhouette images, and apply consolidation algorithms to them to improve their classifiability. Then, using SVD, we statistically analyze the features and find meaningful low-dimensional structures, removing redundant information while retaining essential information with high discrimination power. Finally, we classify the low-dimensional features into 4 predefined emotional categories (happy, surprised, angry, sad) using TDMLP. Experimental results show a recognition rate above 70% outside the training sequence and thus confirm that it is feasible to directly recognize human emotion using not physical entities but approximated features based on Laban's movement theory.

Index Terms: Emotion recognition, modern dance analysis, noncontacting, Laban's movement theory

I. INTRODUCTION

THE ability to recognize, interpret, and express emotions, commonly referred to as "emotional intelligence" [1], plays a key role in human communication. Human-computer interaction follows the same principles, i.e., we have an inherent tendency to respond to media and systems in the ways that are common in human-human interaction [2]. Since emotions are an integral part of human-computer communication, computers with emotion recognition skills will allow a more natural and thus improved human-computer interaction.

For the last couple of years, many researchers have focused on developing emotion recognition technology. It is understood that emotion is expressed through many channels, such as one's face, voice, and body movements. While emotion recognition methods that extract emotional information from speech or facial expressions have been extensively investigated [3-6] (although this is still a challenge [6]), those associated with body movements have been less explored. This may be because body movement is too high-dimensional, dynamic, and ambiguous to analyze. There is no doubt that the need for a framework for emotion recognition from body movements has been increasing.

H. Park and J.-I. Park are with the Department of Electrical and Computer Engineering, Hanyang University, Seoul, South Korea (e-mail: {hanuni, jipark}@mr.hanyang.ac.kr).
U.-M. Kim is with the Department of Dance, Hanyang University, Seoul, South Korea (e-mail: kimunmi@hanyang.ac.kr).
W. Woo is with the Department of Information and Communications, Kwangju Institute of Science & Technology, Kwangju, South Korea (e-mail: wwoo@kjist.ac.kr).

A. Related Work

1) Vision Based Human Motion Analysis: Most work in this area has concentrated on body parts such as hands [28] and on gait [26, 27] because they are relatively simple or well-regulated and thus easy to quantify and analyze. Such methods are not readily applicable to other full-body movements (neither body part nor gait) that change unexpectedly or dynamically. Methods that try to analyze more general human motions exist in the literature. However, most of them rely on motion-capture systems or are model-based approaches [29, 30, 31], both of which need a troublesome initialization step.

Some methods in the literature try to analyze body movements in the time domain. Kojima et al. defined the term "rhythm points," which indicate the times of the start and the end of a movement, so that the whole motion can be represented as a collection of partial movements (units of motion), each with a period [7]. Wilson et al. also tried to identify temporal aspects of body movement [8]. They proposed a method that detects candidate rest states and motion phases from the movements spontaneously generated by a person telling a story. Kojima's and Wilson's methods are appropriate for analyzing simple and regular motions such as small baton-like movements or swing strokes. In general, however, human body motions (especially dance, as in this paper) are not so simple or regular, so we cannot easily determine the start and end of a unit of motion or a rest state. Thus, their otherwise effective methods do not seem to be applicable to general and natural human motions.

All the methods explained in this section aim only at analyzing human motions and do not pay attention to the emotion communicated.

2) Emotion Recognition from Body Movement: Several

methods aiming at recognizing human emotion from body

movements exist in the literature.

Darwin described the motions associated with emotion and theorized on the relationship between emotions and their expression [9]. Montepare et al. described the emotional cues that can be determined from the way someone walks [10]. For example, they found that a heavy-footed gait and longer strides indicate anger while a faster pace indicates happiness. Barrientos suggested that some expression of emotion should be present in writing, since writing is merely a stylized human movement [33], and described an interaction technique in which pen gestures control avatar gestures. Picard et al. tried to recognize affective state by capturing physiological signals, e.g., electromyogram (EMG), blood volume pressure, galvanic skin response, and respiration [11]. They treated emotion recognition as physiological pattern recognition. The aforementioned methods have limitations: Darwin's is heuristic, Montepare's and Barrientos's techniques are not applicable to common human motions, and Picard's requires complicated devices to capture the physiological signals and thus is not human-friendly.

Recently, efforts have been made to recognize emotion from dance. Dance is close to common human motion, which is the most typical basis of natural bodily expression, and at the same time it has been further developed as a means of expressing human emotion. Dittrich et al. tried to recognize emotion from dynamic point-light displays, attached to the joints, of dance [12]. They concluded that biological-motion displays permit a large amount of emotional encoding to take place. However, their method was based on subjective evaluation using a questionnaire and was not an automatic one based on vision techniques.

Figure 1. Rectangle surrounding human body.

Some fully vision-based methods that can automatically recognize human emotion from modern dance performance have been introduced [13-15, 17, 18, 32]. To represent the high-dimensional and dynamic change of body movements, they simplified a dynamic dance to the movement of a rectangle surrounding the human body and exploited several features related to the motion of the rectangle on the basis of Laban's movement theory [16] (see Fig. 1). They then analyzed the features heuristically [13, 14] or statistically [15] and tried to find the relationship between the features and human emotion. Similar research using two or more cameras has also been done by Camurri et al. [17, 18]. These bounding-box-based methods showed satisfactory results in most cases. However, they encountered difficulties in some cases. For example, when the bounding box information from one emotional category is similar to that from another, they cannot discriminate between the two.

To resolve this problem, Park et al. proposed a method that exploits a new form of feature for representing human motion in more detail [32]. They appended contour-based shape information (concerned with the silhouette boundary) to the region-based shape information (concerned with the bounding box). Thus, they could discriminate the subtle differences between the shapes of silhouettes that have the same bounding box information. As a result, the recognition rate of each emotion category was improved and balanced.

B. Content of This Paper

In this paper, we present a new framework for noncontacting

emotion recognition from modern dance performance. We

consider emotion recognition as a direct 1-to-1 mapping

problem between low-level features and high-level information

(emotions). Notice that its feasibility has been verified in the

previous works [13-15].

Human motions possess emotive meaning together with their descriptive meaning (Camurri et al. [18] used the terms "propositional" and "non-propositional" instead). Descriptive motions are a kind of sign used to transmit meaning, such as shaking one's head to say "no." In contrast, emotive motions are embodied in the direct and natural emotional expression of body movement based on fundamental elements such as tempo and force, which can be combined in a vast range of movement possibilities [16]. In this sense, emotive motions do not rely on specific motions but build on the quality of movements, i.e., how motions are carried through, for example whether they are fast or slow. In this paper, we pay attention to this property of emotive movements.

The rest of this paper is organized as follows. In the next section, we explain in detail the method for recognizing human emotion from monocular dance image sequences. In Section III, we provide the experimental results, and we conclude in Section IV.

II. METHOD

The overall procedure consists of four parts:

- Foreground (silhouette) extraction from each frame in dance image sequence (see Fig. 1);
- Reliable feature extraction from silhouette image;
- Transformation of the features into hidden space using Singular Value Decomposition (SVD);
- Classification of the hidden space features into 4 predefined emotional categories, i.e. happy, surprised, angry, and sad, using Time Delayed Multi-Layer Perceptron (TDMLP).

A. Foreground (Silhouette) Extraction

We remove the background and the shadows from the dance images using the difference keying and normalized difference keying techniques, respectively, and create binary silhouette images (see Fig. 1).

1) Difference Keying: In general, the background region in an image is unnecessary and thus must be removed. Chroma-keying has been widely used for this purpose. While it performs well when the color of the background is uniform, it cannot be applied to natural (or cluttered) backgrounds.

We use an approach that separates objects from a static but natural background [19]. It relies on motion information, which has been widely accepted as a crucial cue for separating moving objects from the background. The basic idea is to subtract the current image from a reference image. The reference image is acquired by averaging the images of a static background over a period of time in the well-known (R,G,B) color space. The subtraction leaves only non-stationary objects. For details, see [19].
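The subtraction step described above can be sketched as follows. This is a minimal illustration in NumPy, not the implementation of [19]: the function names, the per-channel test, and the `threshold` value are assumptions made for the example.

```python
import numpy as np

def build_reference(background_frames):
    """Average a stack of static-background frames (N, H, W, 3) into one reference image."""
    return np.mean(np.asarray(background_frames, dtype=np.float64), axis=0)

def difference_key(frame, reference, threshold=30.0):
    """Return a boolean mask (H, W) that is True where the frame differs from the reference.

    `threshold` is a hypothetical tuning value, not taken from the paper.
    """
    diff = np.abs(frame.astype(np.float64) - reference)
    # A pixel counts as foreground if any of its R, G, B channels changed noticeably.
    return np.any(diff > threshold, axis=-1)

# Tiny synthetic example: a gray background with a bright 2x2 "dancer" patch.
background = [np.full((4, 4, 3), 100, dtype=np.uint8) for _ in range(5)]
reference = build_reference(background)
frame = background[0].copy()
frame[1:3, 1:3] = 200
mask = difference_key(frame, reference)
```

In this toy example only the four pixels of the bright patch end up in `mask`.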

2) Normalized Difference Keying: Conventional object separation techniques based on static background modeling are susceptible to both global and local illumination changes. Normally, moving objects cast shadows according to the lighting conditions. The (R,G,B) color space is not a proper space for handling shadows. Thus, we introduce a new color space, called the normalized (R,G,B) color space, which is able to cope with slight changes of illumination conditions [19]. Using the normalized (R,G,B) color space means that we consider only the chromaticity of pixels. It is based on the observation that a shadow pixel has similar chromaticity to, but slightly different brightness from, the same pixel in the background. For details, see [19].

3) Combining Two Keying Techniques: The binary image computed in 1) is used as a mask image in 2). Fig. 2 (c) shows the separation result using both difference keying and normalized difference keying.
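A rough sketch of how the two keying steps might be combined is given below. It assumes that normalized (R,G,B) means dividing each channel by R+G+B, and it uses made-up thresholds; the details of [19] may differ.

```python
import numpy as np

def normalize_rgb(image, eps=1e-6):
    """Map (R, G, B) to chromaticity (r, g, b) = (R, G, B) / (R + G + B)."""
    image = image.astype(np.float64)
    return image / (image.sum(axis=-1, keepdims=True) + eps)

def remove_shadow(frame, reference, mask, chroma_threshold=0.05):
    """Keep only the masked pixels whose chromaticity differs from the background.

    `chroma_threshold` is a hypothetical value chosen for this example.
    """
    chroma_diff = np.abs(normalize_rgb(frame) - normalize_rgb(reference))
    changed = np.any(chroma_diff > chroma_threshold, axis=-1)
    return mask & changed

reference = np.full((4, 4, 3), 100.0)
reference[..., 0] = 150.0                 # reddish background
frame = reference.copy()
frame[0, 0] = reference[0, 0] * 0.6       # shadow: darker, same chromaticity
frame[2, 2] = (50.0, 50.0, 200.0)         # dancer: different chromaticity
# Difference keying as in 1), here with an assumed threshold of 30.
mask = np.any(np.abs(frame - reference) > 30.0, axis=-1)
silhouette = remove_shadow(frame, reference, mask)
```

The shadow pixel passes the plain difference test but is rejected by the chromaticity test, so only the dancer pixel remains in `silhouette`.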

(a) (b) (c)

Figure 2. Moving silhouette extraction. (a) Image when 4 directional lights were

used, (b) only using difference keying, (c) after shadow elimination. Shadow

was clearly eliminated.

B. Reliable Feature Extraction

After we create binary silhouette images, we extract the features representing the quality of the motion of a dance (Table I) based on Laban's movement theory (see Appendix for details).

Many researchers have used features based on Laban's movement theory to analyze human motion and have supported the theory over the years [13-15, 17, 18, 20, 32, 33]. Among them, Suzuki et al. redefined the time-space-weight concept introduced by Laban so that it is as generally and easily extractable from video images as possible [13].

Time : Human body movement speed

Space : Openness of human body

Weight(Energy) : Acceleration of human body movement

They redefined space as the wideness, or amount, of space instead of design or form, and omitted flow because they considered it a minor feature that could be derived from the redefined time-space-weight features. Afterward, Woo et al. introduced TDMLP to take flow into consideration [14]. Suzuki and Woo used features similar to those shown in Table I, except $N_d$.

TABLE I
FEATURES EXTRACTED FROM BINARY SILHOUETTE IMAGES

Feature                                                 Symbol
The aspect ratio of rectangle                           $H/W$
The coordinates of centroid                             $(C_x, C_y)$
The coordinates of the center of rectangle              $(R_x, R_y)$
The ratio between silhouette area and rectangle area    $S_s/S_r$
The number of dominant points on boundary               $N_d$
The velocity of each feature                            $f(\cdot)$
The acceleration of each feature                        $g(\cdot)$

$f(x_n) = x_n - x_{n-1}$, $g(x_n) = x_n - 2x_{n-1} + x_{n-2}$

$W$, $H$, $C_x$, $C_y$, $R_x$, $R_y$, $S_s$, and $S_r$ represent the width and height of the bounding box, the x- and y-coordinates of the centroid, the x- and y-coordinates of the center of the rectangle, the area of the silhouette, and the area of the rectangle, respectively. $f(\cdot)$ represents the velocity of each feature, and $g(\cdot)$ represents the acceleration of each feature.
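As an illustration of the definitions in Table I, the following sketch computes the region-based features and the finite differences $f$ and $g$ from a boolean silhouette mask. The function names are hypothetical, and $N_d$ is left out because it requires a dominant-point detector.

```python
import numpy as np

def silhouette_features(mask):
    """Return (H/W, Cx, Cy, Rx, Ry, Ss/Sr) for a non-empty boolean silhouette mask."""
    ys, xs = np.nonzero(mask)
    x0, x1, y0, y1 = xs.min(), xs.max(), ys.min(), ys.max()
    W, H = x1 - x0 + 1, y1 - y0 + 1          # bounding-box width and height
    Cx, Cy = xs.mean(), ys.mean()            # centroid of the silhouette
    Rx, Ry = (x0 + x1) / 2, (y0 + y1) / 2    # center of the bounding box
    Ss, Sr = mask.sum(), W * H               # silhouette area, rectangle area
    return np.array([H / W, Cx, Cy, Rx, Ry, Ss / Sr], dtype=np.float64)

def velocity(x):
    """f(x_n) = x_n - x_{n-1}, defined from n = 1 on; x has shape (frames, features)."""
    return x[1:] - x[:-1]

def acceleration(x):
    """g(x_n) = x_n - 2 x_{n-1} + x_{n-2}, defined from n = 2 on."""
    return x[2:] - 2 * x[1:-1] + x[:-2]

mask = np.zeros((6, 6), dtype=bool)
mask[1:5, 2:4] = True        # 4 rows x 2 columns, fully filled
mask[1, 3] = False           # remove one pixel
feats = silhouette_features(mask)
track = np.array([[0.0], [1.0], [4.0], [9.0]])   # one feature over four frames
vel, acc = velocity(track), acceleration(track)
```

For this mask the aspect ratio is 2 and the area ratio is 7/8; for the toy track the velocities are 1, 3, 5 and the accelerations are 2, 2.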

(a) (b)

Figure 3. Similar but contextually different motions. (a) With only a bounding

box, we cannot discriminate between two motions. (b) On the right, a little

motion of her left leg gives rise to new dominant points. Then we can

discriminate between the two motions.

Without the feature $N_d$, the difference between similar but contextually different human motions cannot be detected, as shown in Fig. 3. Park et al. introduced $N_d$ to resolve this problem [32]. The improvement resulting from using $N_d$ is clear in their experimental results. For the same purpose, we use $N_d$ as a feature. We use Teh-Chin's algorithm to compute $N_d$ because it has shown reliable results even when the object is dynamically scaled or changed [21].

Dominant points are the points at which the curvature changes significantly. This means that an object with many dominant points is star-like, whereas an object with few dominant points is circular. In other words, the number of dominant points represents the shape complexity of an object (see Fig. 4). Needless to say, the shape complexity of an object is related to space in Laban's movement theory.

At this point, a question arises: "Why is the number of dominant points used as a feature, and not the dominant points themselves or more detailed representations?" The answer is that rough contour information may suffice, because we do not aim to answer the question "What gesture did he/she make?" but to catch the overall mood, i.e., the emotion.

In general, the features extracted from dynamically changing dance images include some outliers. Outliers are a main cause of decreased recognition rates. To remove outliers, we calculate the mean and deviation of each feature in advance and clamp the values that deviate from the mean by more than the allowed deviation. The values of the features in Table I do not have the same scale. To alleviate this scale problem, we normalize the features so that they are distributed evenly between their minimum and maximum values. At the same time, this normalization procedure can resolve the problem resulting from the physical differences between dancers [15].
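One possible reading of this consolidation step is sketched below. The allowed deviation (`k` standard deviations) and the use of linear min-max scaling are assumptions made for the example; the paper does not state either choice.

```python
import numpy as np

def clamp_outliers(x, k=2.0):
    """Clamp each feature column to mean +/- k standard deviations.

    `k` (the allowed deviation) is a hypothetical choice.
    """
    mean, std = x.mean(axis=0), x.std(axis=0)
    return np.clip(x, mean - k * std, mean + k * std)

def min_max_normalize(x):
    """Rescale each feature column linearly to [0, 1]; constant columns map to 0."""
    lo, hi = x.min(axis=0), x.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)
    return (x - lo) / span

# One feature over ten frames, with a single outlier in the last frame.
x = np.array([1, 2, 3, 2, 1, 2, 3, 2, 2, 100], dtype=np.float64).reshape(-1, 1)
clamped = clamp_outliers(x)
normalized = min_max_normalize(clamped)
```

Clamping first keeps a single extreme value from compressing all other values toward zero in the subsequent scaling.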

(a) (b)

Figure 4. Dominant point detection on (a) real images and (b) synthetic images.

The number of dominant points is associated with the complexity of an object.

The features are inherently noisy and need to be smoothed to obtain a reliable result. Thus, we apply frame-by-frame median filtering. The window size (the number of frames) for median filtering heavily affects the recognition rate [15]. If the window size is too small, the result tends to be noisy. On the contrary, if the window size is too large, valid information for effective recognition is blurred (deteriorated). We set the window size to 10.
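A minimal sketch of the smoothing step follows. It assumes non-overlapping windows of 10 frames, which is one reading consistent with the statement in Section III that 3 recognition results are obtained per second at 30 Hz; a sliding window would be an equally plausible variant.

```python
import numpy as np

def block_median(features, window=10):
    """Median of each feature over consecutive, non-overlapping windows of frames."""
    n_blocks = features.shape[0] // window       # drop an incomplete trailing window
    blocks = features[: n_blocks * window].reshape(n_blocks, window, -1)
    return np.median(blocks, axis=1)

frames = np.arange(30, dtype=np.float64).reshape(30, 1)   # one second at 30 Hz
frames[5, 0] = 1000.0                                     # a noise spike
smoothed = block_median(frames)
```

The spike at frame 5 does not propagate into the smoothed value of its window, which is the property that motivates a median rather than a mean.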

C. Hidden Space Transform

After the preceding algorithms are applied to the low-level features, the features are still high-dimensional and hard to classify. We therefore take into account the statistical properties of the features. We apply SVD to the features and select the eigen-vectors having large eigen-values. This removes redundant information from the features while maintaining a high recognition rate, since they are classified in hidden space [22]. In this section, we give details of our hidden space transform for readers with little experience in computer science. These details can be skipped by readers in the field of computer science.

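In the case of extracting $n$ features from a dance sequence having $m$ frames, we apply SVD to the matrix $F$ having $m \times n$ features as its elements. That is:

$$F = U \Sigma V^T \tag{5}$$

where

$$F = \begin{bmatrix} f_1^1 & f_2^1 & f_3^1 & \cdots & f_n^1 \\ f_1^2 & f_2^2 & f_3^2 & \cdots & f_n^2 \\ f_1^3 & f_2^3 & f_3^3 & \cdots & f_n^3 \\ \vdots & \vdots & \vdots & & \vdots \\ f_1^m & f_2^m & f_3^m & \cdots & f_n^m \end{bmatrix}, \quad \Sigma = \begin{bmatrix} D & 0 \\ 0 & 0 \end{bmatrix}, \quad D = \begin{bmatrix} \sigma_1 & & 0 \\ & \ddots & \\ 0 & & \sigma_r \end{bmatrix},$$

$$\sigma_1 \ge \sigma_2 \ge \cdots \ge \sigma_r > 0, \quad r \le m, n.$$

Here, $f_i^j$ represents the value of the $i$-th feature in the $j$-th frame, so that the $i$-th column of $F$ is the feature vector $\mathbf{f}_i$. $\sigma_k$ represents the $k$-th singular value, and the $k$-th column of $U$ is the eigen-vector $\mathbf{u}_k$ associated with $\sigma_k$. The $r$ eigen-vectors ($\mathbf{u}_1, \mathbf{u}_2, \ldots, \mathbf{u}_r$) associated with large $\sigma$ are represented by linear combinations of the feature vectors ($\mathbf{f}_1, \mathbf{f}_2, \ldots, \mathbf{f}_n$). That is: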

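$$\begin{aligned} \mathbf{u}_1 &= \alpha_{11}\mathbf{f}_1 + \alpha_{12}\mathbf{f}_2 + \cdots + \alpha_{1n}\mathbf{f}_n, \\ \mathbf{u}_2 &= \alpha_{21}\mathbf{f}_1 + \alpha_{22}\mathbf{f}_2 + \cdots + \alpha_{2n}\mathbf{f}_n, \\ &\;\;\vdots \\ \mathbf{u}_r &= \alpha_{r1}\mathbf{f}_1 + \alpha_{r2}\mathbf{f}_2 + \cdots + \alpha_{rn}\mathbf{f}_n. \end{aligned} \tag{6}$$

Equation (6) is rewritten as follows:

$$F \boldsymbol{\alpha}_i = \mathbf{u}_i, \quad \text{for } i = 1, 2, \ldots, r, \tag{7}$$

where

$$\boldsymbol{\alpha}_i = [\alpha_{i1} \; \alpha_{i2} \; \cdots \; \alpha_{in}]^T, \quad F = [\mathbf{f}_1 \; \mathbf{f}_2 \; \cdots \; \mathbf{f}_n].$$

Here, $\boldsymbol{\alpha}_i$ represents the contribution coefficient vector and it is solved as follows:

$$\boldsymbol{\alpha}_i = (F^T F)^{-1} F^T \mathbf{u}_i. \tag{8}$$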

Given $\boldsymbol{\alpha}_i$, the hidden space features are represented by weighted sums of the original features extracted from the dance image sequence:

$$\begin{aligned} p_1 &= \alpha_{11} f'_1 + \alpha_{12} f'_2 + \cdots + \alpha_{1n} f'_n, \\ p_2 &= \alpha_{21} f'_1 + \alpha_{22} f'_2 + \cdots + \alpha_{2n} f'_n, \\ &\;\;\vdots \\ p_r &= \alpha_{r1} f'_1 + \alpha_{r2} f'_2 + \cdots + \alpha_{rn} f'_n, \end{aligned} \tag{9}$$

where $f'$ represents the original features extracted from each frame of the dance sequence.

In practice, we calculated the contribution coefficients for the 12 eigen-vectors having the largest eigen-values and extracted the 12 hidden space features associated with these eigen-vectors in every frame. The number of eigen-vectors can be changed according to the characteristics of the data. We used 12 eigen-vectors because their eigen-values were much larger than the others. In addition, the hidden space features associated with only the few largest eigen-values cannot account for all three kinds of features, i.e., space-time-weight; those associated with smaller eigen-values are needed to account for the velocity and acceleration features (see Fig. 5). Finally, these 12 hidden space features are used as the input of the TDMLP, which is explained in the next section.
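The hidden space transform of Equations (5) to (9) can be sketched with NumPy as follows. The random matrix stands in for real feature data, and the sizes are assumptions: $n = 21$ is inferred from 7 scalar features together with their velocities and accelerations, and $m = 200$ is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, r = 200, 21, 12            # frames, features (assumed), retained vectors
F = rng.normal(size=(m, n))      # stand-in for the real m x n feature matrix

# Equation (5): F = U diag(sigma) V^T, singular values in decreasing order.
U, sigma, Vt = np.linalg.svd(F, full_matrices=False)
U_r = U[:, :r]                   # the r vectors u_1..u_r with the largest sigma

# Equations (7)-(8): solve F alpha_i = u_i in the least-squares sense.
alpha, *_ = np.linalg.lstsq(F, U_r, rcond=None)   # shape (n, r); column i is alpha_i

# Equation (9): hidden space features of one new frame f' (length n).
f_new = rng.normal(size=n)
p = alpha.T @ f_new              # shape (r,)
```

When $F$ has full column rank, the least-squares solution coincides with $\boldsymbol{\alpha}_i = \mathbf{v}_i / \sigma_i$, where $\mathbf{v}_i$ is the $i$-th column of $V$, so the coefficients can also be read directly from the SVD.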

D. Classification of Hidden Space Features

The distribution of the features is very complicated and over-populated. It is almost impossible to classify them linearly. We introduce a neural network to classify the features nonlinearly. A Multi-Layer Perceptron (MLP) is a feedforward neural network that has hidden layers between the input layer and the output layer. An MLP can be used to classify arbitrary data because its internal neurons have nonlinear properties [23].

[Figure 5 plots: three bar charts titled Space, Time, and Weight. In each chart, the horizontal axis lists the 12 hidden space features in order of decreasing eigen-value, (1) > (2) > ... > (12), and the vertical axis is the contribution coefficient of the features H/W, Cx, Cy, Rx, Ry, Ss/Sr, and Nd.]

Figure 5. The contribution coefficients associated with 12 largest eigen-values.

Only the ones associated with smaller eigen-values can account for the velocity

and acceleration features.

The number of neurons in the hidden layer is not fixed; we determine it by multiplying the number of inputs by the number of outputs. We obtain satisfactory performance in this way.

When a sigmoid function is used as the activation function, the output values are not rounded off to 0 or 1 but are distributed between 0 and 1. If we use these values as they are, the sum of errors becomes very large. By applying the Winner-Take-All (WTA) rule, the output values are rounded and we can reduce the resulting error sum.
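The WTA rule can be illustrated in a few lines. The output values below are made up, and the order of the emotion labels is an assumption for the example.

```python
import numpy as np

EMOTIONS = ("happy", "surprised", "angry", "sad")   # assumed output-node order

def winner_take_all(outputs):
    """Set the largest output to 1 and all others to 0."""
    outputs = np.asarray(outputs, dtype=np.float64)
    one_hot = np.zeros_like(outputs)
    one_hot[np.argmax(outputs)] = 1.0
    return one_hot

raw = [0.62, 0.35, 0.71, 0.10]          # made-up sigmoid outputs of the 4 nodes
decision = winner_take_all(raw)
label = EMOTIONS[int(np.argmax(decision))]
```

Here the third node wins, so the frame is labeled "angry" even though no raw output is close to 1.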

In the case of dynamically changing data like dance, it is of great importance to find out how the data vary temporally (Laban introduced the factor "flow" to explain this). However, an MLP cannot deal with such changing patterns of data. As shown in Fig. 6, a TDMLP stores the input data in a buffer for some period and uses all the delayed data as input. Because combining data acquired at different times makes it possible to capture the change among them, a TDMLP can effectively analyze not only the instantaneous value but also the changing pattern of the input data [14].

It is necessary to find the optimal number of delays because the performance of a TDMLP is influenced by the number of delays [14, 15]. Through experiments, we confirmed that a zero-delay TDMLP (simply an MLP) cannot cope with temporal changes of data, while a TDMLP with many delays loses its separability for data with different characteristics in the spatial domain. In practice, we used a TDMLP with one delay.

We used the 12 hidden space features as the input of the TDMLP, as explained in the previous section. Thus, the TDMLP used in our experiments has 24 (=12+12) input nodes, 96 hidden nodes, and 4 output nodes.
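To make the layer sizes concrete, the sketch below builds the delayed input and runs an untrained forward pass. The weights are random placeholders, training is omitted, and the function names and the sigmoid on both layers are assumptions for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def delayed_inputs(hidden_feats, delays=1):
    """Concatenate each frame's features with those of the `delays` previous frames."""
    T = hidden_feats.shape[0]
    parts = [hidden_feats[delays - d : T - d] for d in range(delays + 1)]
    return np.concatenate(parts, axis=1)

def forward(x, W1, b1, W2, b2):
    """One hidden layer, with sigmoid activations on the hidden and output layers."""
    return sigmoid(sigmoid(x @ W1 + b1) @ W2 + b2)

rng = np.random.default_rng(0)
n_feat, n_out = 12, 4
n_in = n_feat * 2                 # current frame + 1 delayed frame = 24
n_hidden = n_in * n_out           # inputs x outputs = 96
W1, b1 = rng.normal(scale=0.1, size=(n_in, n_hidden)), np.zeros(n_hidden)
W2, b2 = rng.normal(scale=0.1, size=(n_hidden, n_out)), np.zeros(n_out)

feats = rng.normal(size=(50, n_feat))        # 50 time steps of hidden space features
x = delayed_inputs(feats, delays=1)          # shape (49, 24)
y = forward(x, W1, b1, W2, b2)               # shape (49, 4), untrained outputs
```

With one delay, the first time step has no predecessor, so 50 time steps yield 49 input vectors.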

Figure 6. The structure of our TDMLP. It has three layers and a buffer in input

layer.

III. EXPERIMENTAL RESULTS

To obtain experimental sequences, we captured the dance motions of 4 professional dancers using a static video camera (Canon MV1). The images have 320×240 pixels. The dancers freely performed various dance movements related to the predefined emotional categories, keeping within a space of about 5 m × 5 m with a static background, within a given period of time. Fig. 7 shows examples of the dance images. Some sequences were used for training the TDMLP and the others, with no overlap, for testing it.

The frame rate of each sequence used in our experiments was 30 Hz. We obtained 3 recognition results per second because we applied the frame-by-frame median filtering every 10 frames, as explained before. Strictly speaking, 1/3 sec is too short to estimate an exact emotion. Therefore, the recognition results were saved into a buffer of a given size (see footnote 1) and we took the majority one as the final result.
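The buffering step amounts to a majority vote over recent recognition results. The sketch below assumes a sliding buffer that emits a decision once it is full; whether the paper slides the buffer or processes it in disjoint blocks is not stated, and the labels are made up.

```python
from collections import Counter, deque

def majority_vote(results, buffer_size=6):
    """Yield the most frequent label among the last `buffer_size` recognition results."""
    buffer = deque(maxlen=buffer_size)
    for label in results:
        buffer.append(label)
        if len(buffer) == buffer_size:
            # Counter.most_common breaks ties by first-seen order within the buffer.
            yield Counter(buffer).most_common(1)[0][0]

per_window = ["sad", "sad", "angry", "sad", "happy", "sad", "angry", "angry"]
final = list(majority_vote(per_window))
```

At 3 results per second, a buffer of 6 results corresponds to about 2 seconds of dance.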

We implemented most of the algorithms using the functions in

OpenCV library [24] that aims at real-time computer vision.

Figure 7. Dance images.

Because insufficient training data may result in erroneous recognition, we tried to capture long dance image sequences that include various dance motions. If the sequence is too short, it contains only a few specific patterns. A TDMLP trained with such a sequence can recognize only those specific patterns and thus does not have a good recognition rate. As shown in Fig. 8, satisfactory performance was obtained when the number of frames was greater than 5000.

[Figure 8 plot: recognition rate (%), from 0% to 100%, versus the number of frames in the training sequence (×1000, from 1 to 8), with one curve per emotion: happy, surprised, angry, sad.]

Figure 8. Recognition rate according to the number of frames in training

sequence. When the number of frames is greater than 5000, satisfactory

performance was obtained.

TABLE II
RECOGNITION RATE INSIDE THE TRAINING SEQUENCE

Input \ Output   Happy   Surprised   Angry   Sad
Happy            88%     2%          10%     0%
Surprised        2%      80%         12%     6%
Angry            12%     4%          84%     0%
Sad              0%      12%         2%      86%

Footnote 1: We set the buffer size to 6.

TABLE III
RECOGNITION RATE OUTSIDE THE TRAINING SEQUENCE

Input \ Output   Happy   Surprised   Angry   Sad
Happy            75%     14%         11%     0%
Surprised        14%     70%         11%     5%
Angry            14%     15%         60%     11%
Sad              0%      11%         2%      87%

Tables II and III show the cross-recognition rates of the four emotions for the dance image sequences inside and outside the training sequence, respectively. In both cases, we obtained better performance than the previous methods [13, 14] and more balanced performance than the previous method [15].

The recognition rate outside the training sequence is lower than that inside the training sequence because each professional dancer expresses his/her emotion in a different way. This can be alleviated if we acquire sample sequences from more dancers and have the TDMLP learn to adapt to the various methods of expression they include.

We obtained an average recognition rate above 70% outside the training sequence using the proposed method. This is an encouraging result: even people cannot recognize another person's emotion with that precision when they watch only his/her natural motion. In practice, we confirmed through a subjective evaluation with college students that people achieve an accuracy of approximately 50-60%.
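As a quick arithmetic check of the rates quoted above, the average of the correctly recognized percentages can be computed from the two recognition-rate tables. The row and column layout below (rows as input emotion, columns as recognized emotion) is an assumption based on each row summing to 100%; the diagonal entries are the same under either reading.

```python
import numpy as np

# Rows: input emotion; columns: recognized emotion (happy, surprised, angry, sad).
inside = np.array([[88, 2, 10, 0],
                   [2, 80, 12, 6],
                   [12, 4, 84, 0],
                   [0, 12, 2, 86]], dtype=np.float64)
outside = np.array([[75, 14, 11, 0],
                    [14, 70, 11, 5],
                    [14, 15, 60, 11],
                    [0, 11, 2, 87]], dtype=np.float64)

def mean_recognition_rate(confusion):
    """Average of the diagonal (correctly recognized) percentages."""
    return float(np.mean(np.diag(confusion)))

rate_inside = mean_recognition_rate(inside)     # 84.5
rate_outside = mean_recognition_rate(outside)   # 73.0
```

The mean outside the training sequence is 73%, which is consistent with the "above 70%" figure, although the rate for "angry" alone is 60%.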

IV. CONCLUDING REMARKS

We presented a framework to recognize human emotion from modern dance performance based on Laban's movement theory. Through experiments, we obtained acceptable performance and confirmed that it is feasible to directly recognize human emotion using not physical entities but approximated features based on Laban's movement theory.

We expect that the proposed method can be practically used in entertainment and education fields that need humanlike, real-time human-computer interaction, which we are intensively investigating.

Currently, we are trying to recognize human emotion from traditional dance performance, which differs from modern dance. As interesting future work, it is necessary to focus on how to categorize emotions. In this paper, we categorized human emotions into just four common ones. However, these are not sufficient for describing all emotional categories and might not be familiar to people from countries other than Korea. Anthropologists report that there are enormous differences in the ways that different cultures categorize emotions, and that the words used to name or describe an emotion can influence what emotion is experienced [25].

APPENDIX: LABAN'S MOVEMENT THEORY [16]

While it is abstract, human movement connotes a physical law and a psychological meaning. Making use of this characteristic, Laban tried to observe, analyze, and classify human movement. While the movement of animals is instinctive and normally responds to external stimuli, human movement is filled with human temperament. People express themselves and transmit something that arises in the heart (named "effort" by Laban) through their movement. To analyze the effort, Laban adopted the following elements:

1) Weight: The element of effort "firm" consists of a powerful resistance to the weight, or depressed motion, or heaviness as a sense of motion. The element of effort "soft" consists of a weak resistance to the weight, or light feeling, or lightness as a sense of motion.

2) Time: The element of effort "sudden" consists of a rapid speed, or a short time, or a short length of time as a sense of motion. The element of effort "consistent" consists of a slow speed, or endlessness, or a long length of time as a sense of motion.

3) Space: The element of effort "straight" consists of a straight direction, or narrowness, or droop like a thread as a sense of motion. The element of effort "pliable" consists of a wavelike direction, or curved shape, or a bend toward a different direction as a sense of motion.

Additionally, Laban adopted "flow" to represent the temporally changing pattern of human movement. This means that he tried to observe the tendency of human movement within a specific period as well as instantaneous human movement.

Laban's movement theory has been used by many groups of researchers, dance performers, etc., to observe and analyze how human movement changes in weight, time, and space.

REFERENCES

[1] D. Goleman, Emotional Intelligence, Bantam Books, New York, 1995.

[2] B. Reeves and C. Nass, The Media Equation. How People Treat

Computers, Television, and New Media Like Real People and Places,

Cambridge University Press, Cambridge, 1996.

[3] K. Lee and Y. Xu, Real-time estimation of facial expression intensity,

Proc. of ICRA03, vol. 2, 2003, pp.2567-2572.

[4] R. Cowie, E. Douglas-Cowie, N. Tsapatsoulis, G. Votsis, S. Kollias, W.

Fellenz, and J. G. Taylor, Emotion recognition in human-computer

interaction, IEEE Signal Processing Magazine, 2001, pp. 32-80.

[5] I. Cohen, N. Sebe, F. Cozman, M. Cirelo, and T. Huang, Learning

Bayesian network classifier for facial expression recognition using both

labeled and unlabeled data, Proc. of CVPR03, vol. 1, 2003, pp. 595-601.

[6] C. C. Chibelushi and F. Bourel, Facial Expression Recognition: a brief

tutorial overview, 2002. Available:

homepages.inf.ed.ac.uk/rbf/CVonline/LOCAL_COPIES/CHIBELUSHI1/

CCC_FB_FacExprRecCVonline.pdf

[7] K. Kojima, T. Otobe, M. Hironaga, and S. Nagae, Human motion

analysis using the rhythm, Proc. of Intl Workshop on Robot and Human

Interactive Communication, 2001, pp. 194-199.

[8] A. Wilson, A. Bobick, and J. Cassell, Temporal classification of natural

gesture and application to video coding, Proc. of CVPR97, 1997, pp.

948-954.

[9] C. Darwin, The Expression of the Emotions in Man and Animals, Oxford

University Press, 1998.

[10] J. M. Montepare, S. B. Goldstein, and A. Clausen, The identification of

emotions from gait information, Journal of Nonverbal Behavior, vol. 11,

1987, pp. 33-42.

[11] R. Picard, E. Vyzas, and J. Healey, Toward machine emotional

intelligence: analysis of affective physiological state, IEEE Transactions

on Pattern Analysis and Machine Intelligence, vol. 23, no. 10, 2001, pp.

1175-1191.

[12] W. H. Dittrich, T. Troscianko, S. E. G. Lea, and D. Morgan, Perception of

emotion from dynamic point-light displays represented in dance,

Perception, vol. 25, 1996, pp. 727-738.

[13] R. Suzuki, Y. Iwadate, M. Inoue, and W. Woo, MIDAS: MIC Interactive

Dance System, Proc. of IEEE Int'l Conf. on Systems, Man and

Cybernetics, vol. 2, 2000, pp. 751-756.

[14] W. Woo, J.-I. Park, and Y. Iwadate, Emotion analysis from dance

performance using time-delay neural networks, Proc. of CVPRIP'00, vol.

2, 2000, pp. 374-377.

[15] H. Park, J.-I. Park, U.-M. Kim, and W. Woo, A statistical approach for

recognizing emotion from dance sequence, Proc. of ITC-CSCC'02, vol. 2,

2002, pp. 1161-1164.

[16] R. Laban, Modern Educational Dance, Trans-Atlantic Publications, 1988.

[17] A. Camurri, M. Ricchetti, and R. Trocca, Eyeweb-toward gesture and

affect recognition in dance/music interactive system, Proc. of IEEE

Multimedia Systems, 1999.

[18] A. Camurri, I. Lagerlöf, and G. Volpe, Recognizing emotion from dance

movement: comparison of spectator recognition and automated

techniques, Int'l Journal of Human-Computer Studies, 2003, pp.

213-225.

[19] N. Kim, W. Woo, and M. Tadenuma, Photo-realistic interactive virtual

environment generation using multiview cameras, Proc. of SPIE

PW-EI-VCIP'01, vol. 4310, 2001.

[20] L. Zhao, Synthesis and Acquisition of Laban Movement Analysis

Qualitative Parameters for Communicative Gestures, Technical Report,

MS-CIS-01-24, 2001.

[21] C.-H. Teh and R. T. Chin, On the detection of dominant points on digital

curves, IEEE Transactions on Pattern Analysis and Machine

Intelligence, vol. 11, no. 8, 1989, pp. 859-872.

[22] G. Strang, Linear Algebra and Its Applications, Harcourt Brace

Jovanovich, Inc., 1988.

[23] E. Micheli-Tzanakou, Supervised and Unsupervised Pattern Recognition,

CRC Press, 2000.

[24] Open Source Computer Vision (OpenCV) Library. Available:

http://www.intel.com

[25] S. Vaknin, The Manifold of Sense. Available:

http://samvak.tripod.com/sense.html

[26] L. Wang, H. Ning, T. Tan, and W. Hu, Fusion of static and dynamic body

biometrics for gait recognition, IEEE Transactions on Circuits and

Systems for Video Technology, vol. 14, no. 2, 2004, pp. 149-158.

[27] R. D. Green and L. Guan, Quantifying and recognizing human movement

patterns from monocular video images, IEEE Transactions on Circuits

and Systems for Video Technology, vol. 14, no. 2, 2004, pp. 191-198.

[28] J. MacCormick and M. Isard, Partitioned sampling, articulated objects,

and interface-quality hand tracking, Proc. of ECCV'00, 2000.

[29] A. Nakazawa, S. Nakaoka, T. Shiratori, and K. Ikeuchi, Analysis and

synthesis of human dance motions, Proc. of IEEE Conf. on Multisensor

Fusion and Integration for Intelligent Systems, 2003, pp. 83-88.

[30] J. K. Aggarwal and Q. Cai, Human motion analysis: a review, Proc. of

IEEE Workshop on Nonrigid and Articulated Motion, 1997, pp. 90-102.

[31] T. B. Moeslund and E. Granum, A survey of computer vision-based

human motion capture, Computer Vision and Image Understanding,

2001, pp. 231-268.

[32] H. Park, J.-I. Park, U.-M. Kim, and W. Woo, Emotion recognition from

dance image sequences using contour approximation, Proc. of

S+SSPR'04, 2004, pp. 547-555.

[33] F. A. Barrientos, Controlling Expressive Avatar Gesture, University of

California, Berkeley, Ph.D. Dissertation, 2002. Available:

http://www.sonic.net/~fbarr/Dissertation/index.html

You might also like

- Glowinski, D., Dael, N., Camurri, A., Volpe, G., Mortillaro, M., & Scherer, K. R. (2011). Towards a minimal representation of affective gestures. IEEE Transactions on Affective Computing, 2(2), 106–118..pdfDocument8 pagesGlowinski, D., Dael, N., Camurri, A., Volpe, G., Mortillaro, M., & Scherer, K. R. (2011). Towards a minimal representation of affective gestures. IEEE Transactions on Affective Computing, 2(2), 106–118..pdfMaria MiñoNo ratings yet

- FaceDocument11 pagesFaceMarriam AkhtarNo ratings yet

- On Posture As A Modality For Expressing and Recognizing EmotionsDocument8 pagesOn Posture As A Modality For Expressing and Recognizing EmotionsAndra BaddNo ratings yet

- Acii 185Document8 pagesAcii 185BelosnezhkaNo ratings yet

- Reflex Movements for Virtual HumansDocument10 pagesReflex Movements for Virtual HumansVignesh RajaNo ratings yet

- Body Movements For Affective ExpressionDocument19 pagesBody Movements For Affective ExpressionSinan SonluNo ratings yet

- IJETR032336Document5 pagesIJETR032336erpublicationNo ratings yet

- Recognizing Emotions From Videos by Studying Facial Expressions, Body Postures and Hand GesturesDocument4 pagesRecognizing Emotions From Videos by Studying Facial Expressions, Body Postures and Hand GesturesFelice ChewNo ratings yet

- Delsarte CameraReadyDocument14 pagesDelsarte CameraReadydaveNo ratings yet

- Planificacion e Imitacion de Movimientos CorporalesDocument19 pagesPlanificacion e Imitacion de Movimientos CorporalesJc BTorresNo ratings yet

- Neural Mechanisms SubservingDocument7 pagesNeural Mechanisms SubservingRandy HoweNo ratings yet

- Dance Accion and Social Cognition Brain and CognitionDocument6 pagesDance Accion and Social Cognition Brain and CognitionrorozainosNo ratings yet

- An Exploration of The Perception of Dance and Its Relation To Biomechanical MotionDocument10 pagesAn Exploration of The Perception of Dance and Its Relation To Biomechanical MotionMaria Elizabeth Acosta BarahonaNo ratings yet

- Body Motion and Emotions DMPDocument6 pagesBody Motion and Emotions DMPAnonymous wTvi61tjANo ratings yet

- NeuroaestheticsDocument6 pagesNeuroaestheticsIvereck IvereckNo ratings yet

- Emotion Classification in Arousal Valence Model Using MAHNOB-HCI DatabaseDocument6 pagesEmotion Classification in Arousal Valence Model Using MAHNOB-HCI DatabaseParantapDansanaNo ratings yet

- Recognizing and Regulating Emotions Through Body PostureDocument7 pagesRecognizing and Regulating Emotions Through Body PostureAlexOO7No ratings yet

- NotesDocument14 pagesNotesjohn awaNo ratings yet

- Analysis of Emotions From Body Postures Based On Digital ImagingDocument6 pagesAnalysis of Emotions From Body Postures Based On Digital ImagingPhùng Ngọc TânNo ratings yet

- The Human Equation: The Constant in Human Development and Culture from Pre-Literacy to Post-Literacy -- Book 1, The Human Equation ToolkitFrom EverandThe Human Equation: The Constant in Human Development and Culture from Pre-Literacy to Post-Literacy -- Book 1, The Human Equation ToolkitRating: 5 out of 5 stars5/5 (1)

- Designing Unstable Landscapes Improvisational Dance Within Cognitive Systems by Marlon Barrios SolanoDocument12 pagesDesigning Unstable Landscapes Improvisational Dance Within Cognitive Systems by Marlon Barrios SolanoMarlon Barrios SolanoNo ratings yet

- ACII HandbookDocument21 pagesACII HandbookJimmygadu007No ratings yet

- The Role of Attention Perception and Mem PDFDocument13 pagesThe Role of Attention Perception and Mem PDFMarian ChiraziNo ratings yet

- Embodiment For BehaviorDocument28 pagesEmbodiment For BehaviorSuzana LuchesiNo ratings yet

- Combining Facial and Postural ExpressionDocument14 pagesCombining Facial and Postural ExpressionSterling PliskinNo ratings yet

- Koch 2011 BodyRhythmsDocument18 pagesKoch 2011 BodyRhythmsLuminita HutanuNo ratings yet

- Going Viral Thesis FinalDocument74 pagesGoing Viral Thesis FinalNathan AndaryNo ratings yet

- A Set of Full Body Movement Features For Emotion Recognition To Help Children Affected by Autism Spectrum ConditionDocument7 pagesA Set of Full Body Movement Features For Emotion Recognition To Help Children Affected by Autism Spectrum Conditionnabeel hasanNo ratings yet

- EmotionSense Emotion Recognition Based onDocument10 pagesEmotionSense Emotion Recognition Based ongabrielaNo ratings yet

- Ballistic Hand Movements: Abstract. Common Movements Like Reaching, Striking, Etc. Observed DuringDocument12 pagesBallistic Hand Movements: Abstract. Common Movements Like Reaching, Striking, Etc. Observed DuringpsundarusNo ratings yet

- ButohDocument10 pagesButohShilpa AlaghNo ratings yet

- Beffara 2012Document4 pagesBeffara 2012April ShedyakNo ratings yet

- Automatic ECG-Based Emotion Recognition in Music ListeningDocument16 pagesAutomatic ECG-Based Emotion Recognition in Music ListeningmuraliNo ratings yet

- 3D Skeleton-Based Human Action Classification: A SurveyDocument18 pages3D Skeleton-Based Human Action Classification: A Surveyhvnth_88No ratings yet

- Emotion Recognition Through Multiple Modalities: Face, Body Gesture, SpeechDocument13 pagesEmotion Recognition Through Multiple Modalities: Face, Body Gesture, SpeechAndra BaddNo ratings yet

- Nonlinear dynamics of the brain: emotion and cognitionDocument17 pagesNonlinear dynamics of the brain: emotion and cognitionbrinkman94No ratings yet

- A Motion Capture Library For The Study of Identity, Gender, and Emotion Perception From Biological MotionDocument8 pagesA Motion Capture Library For The Study of Identity, Gender, and Emotion Perception From Biological MotionStanko.Stuhec8307No ratings yet

- Perception of MotionDocument32 pagesPerception of MotionJoão Dos Santos MartinsNo ratings yet

- Tracking The Origin of Signs in Mathematical Activity: A Material Phenomenological ApproachDocument35 pagesTracking The Origin of Signs in Mathematical Activity: A Material Phenomenological ApproachWolff-Michael RothNo ratings yet

- Experiência Extra CorporalDocument10 pagesExperiência Extra CorporalINFO100% (2)

- TR 2009 02Document8 pagesTR 2009 02RichieDaisyNo ratings yet

- EmotionsDocument14 pagesEmotionsz mousaviNo ratings yet

- Binding Action and Emotion in Social UnderstandingDocument9 pagesBinding Action and Emotion in Social UnderstandingJoaquin OlivaresNo ratings yet

- Berson2016 Chapter CartographiesOfRestTheSpectralDocument8 pagesBerson2016 Chapter CartographiesOfRestTheSpectralNAILA ZAQIYANo ratings yet

- Aesthetic Preferences For Prototypical Movements in Human ActionsDocument13 pagesAesthetic Preferences For Prototypical Movements in Human ActionsAndrésNo ratings yet

- The Use of Semantic Human Description As A Soft Biometric: Sina Samangooei Baofeng Guo Mark S. NixonDocument7 pagesThe Use of Semantic Human Description As A Soft Biometric: Sina Samangooei Baofeng Guo Mark S. NixonmalekinezhadNo ratings yet

- Body Segment Parameters - Drillis Contini BluesteinDocument23 pagesBody Segment Parameters - Drillis Contini BluesteinJavier VillavicencioNo ratings yet

- From The Lab To The Real World Affect Recognition Using Multiple Cues and ModalitiesDocument37 pagesFrom The Lab To The Real World Affect Recognition Using Multiple Cues and Modalitiessherif.m.osamaNo ratings yet

- tmp5D49 TMPDocument8 pagestmp5D49 TMPFrontiersNo ratings yet

- Entrevista Hubert GodardDocument8 pagesEntrevista Hubert GodardKk42bNo ratings yet

- RoboticsDocument23 pagesRoboticschotumotu131111No ratings yet

- Visual Perception of Human MovementDocument14 pagesVisual Perception of Human MovementClau OrellanoNo ratings yet

- Vol9 Art4 Gomes-Cochet-Guyon DanceDocument23 pagesVol9 Art4 Gomes-Cochet-Guyon DanceNorma GomesNo ratings yet

- Recognise People by The Way They Walk.Document7 pagesRecognise People by The Way They Walk.Béllà NoussaNo ratings yet

- Gale Researcher Guide for: Experimental and Behavioral PsychologyFrom EverandGale Researcher Guide for: Experimental and Behavioral PsychologyNo ratings yet

- Claudio Garuti, Isabel Spencer: Keywords: ANP, Fractals, System Representation, Parallelisms, HistoryDocument10 pagesClaudio Garuti, Isabel Spencer: Keywords: ANP, Fractals, System Representation, Parallelisms, HistoryjegosssNo ratings yet

- Pollick2002 PDFDocument11 pagesPollick2002 PDFedvinas6valikonisNo ratings yet

- Geometric Translations of The Body in MotionDocument8 pagesGeometric Translations of The Body in Motionarch.samermabroukNo ratings yet

- Department of Exercise and Sport Science Manchester Metropolitan University, UKDocument24 pagesDepartment of Exercise and Sport Science Manchester Metropolitan University, UKChristopher ChanNo ratings yet

- Zaini 2012 BehavResMethDocument16 pagesZaini 2012 BehavResMethE PNo ratings yet

- Cla IdmaDocument160 pagesCla Idmacurotto1953No ratings yet

- TM T70 BrochureDocument2 pagesTM T70 BrochureNikhil GuptaNo ratings yet

- Psych 1xx3 Quiz AnswersDocument55 pagesPsych 1xx3 Quiz Answerscutinhawayne100% (4)

- Classification of AnimalsDocument6 pagesClassification of Animalsapi-282695651No ratings yet

- Greek Myth WebquestDocument9 pagesGreek Myth Webquesthollyhock27No ratings yet

- 2008-14-03Document6 pages2008-14-03RAMON CALDERONNo ratings yet

- Nigeria Emergency Plan NemanigeriaDocument47 pagesNigeria Emergency Plan NemanigeriaJasmine Daisy100% (1)

- Atlas Ci30002Tier-PropanDocument3 pagesAtlas Ci30002Tier-PropanMarkus JeremiaNo ratings yet

- Search Engine Marketing Course Material 2t4d9Document165 pagesSearch Engine Marketing Course Material 2t4d9Yoga Guru100% (2)

- All Creatures Great and SmallDocument4 pagesAll Creatures Great and SmallsaanviranjanNo ratings yet

- X-Ray Generator Communication User's Manual - V1.80 L-IE-4211Document66 pagesX-Ray Generator Communication User's Manual - V1.80 L-IE-4211Marcos Peñaranda TintayaNo ratings yet

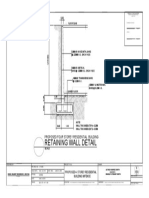

- Retaining Wall DetailsDocument1 pageRetaining Wall DetailsWilbert ReuyanNo ratings yet

- Desk PiDocument21 pagesDesk PiThan LwinNo ratings yet

- FSRE SS AppendixGlossariesDocument27 pagesFSRE SS AppendixGlossariessachinchem020No ratings yet

- Finding The Answers To The Research Questions (Qualitative) : Quarter 4 - Module 5Document39 pagesFinding The Answers To The Research Questions (Qualitative) : Quarter 4 - Module 5Jernel Raymundo80% (5)

- Spare Parts List: WarningDocument5 pagesSpare Parts List: WarningÃbdøū Èqúípmeńť MédîcàlNo ratings yet

- Orion C.M. HVAC Case Study-07.25.23Document25 pagesOrion C.M. HVAC Case Study-07.25.23ledmabaya23No ratings yet

- SocorexDocument6 pagesSocorexTedosNo ratings yet

- Tax1 Course Syllabus BreakdownDocument15 pagesTax1 Course Syllabus BreakdownnayhrbNo ratings yet

- Measurement of Mass and Weight by NPLDocument34 pagesMeasurement of Mass and Weight by NPLN.PalaniappanNo ratings yet

- MNCs-consider-career-development-policyDocument2 pagesMNCs-consider-career-development-policySubhro MukherjeeNo ratings yet

- 21st Century Literary GenresDocument2 pages21st Century Literary GenresGO2. Aldovino Princess G.No ratings yet

- LTG 04 DD Unit 4 WorksheetsDocument2 pagesLTG 04 DD Unit 4 WorksheetsNguyễn Kim Ngọc Lớp 4DNo ratings yet

- Aka GMP Audit FormDocument8 pagesAka GMP Audit FormAlpian BosixNo ratings yet

- A New Aftercooler Is Used On Certain C9 Marine Engines (1063)Document3 pagesA New Aftercooler Is Used On Certain C9 Marine Engines (1063)TASHKEELNo ratings yet

- Curriculam VitaeDocument3 pagesCurriculam Vitaeharsha ShendeNo ratings yet

- LINDA ALOYSIUS Unit 6 Seminar Information 2015-16 - Seminar 4 Readings PDFDocument2 pagesLINDA ALOYSIUS Unit 6 Seminar Information 2015-16 - Seminar 4 Readings PDFBence MagyarlakiNo ratings yet

- Why it's important to guard your free timeDocument2 pagesWhy it's important to guard your free timeLaura Camila Garzón Cantor100% (1)

- Public OpinionDocument7 pagesPublic OpinionSona Grewal100% (1)

- The Completely Randomized Design (CRD)Document16 pagesThe Completely Randomized Design (CRD)Rahul TripathiNo ratings yet