Linear Algebra Notes

Uploaded by Angela Zhou

MAT 204: Definitions and Theorems (Midterm Prep)

Vector Space: has a zero element and is closed under addition and scalar multiplication; addition is commutative and associative, scalar multiplication is associative and distributes over addition, etc.
- i.e. vector spaces include the set of polynomials of degree at most n, R^n, M(m,n) (the set of m by n matrices), etc.
- ** do not include sets that fail closure: the vectors of R^n with positive entries are closed under addition but not under subtraction or multiplication by negative scalars

Gaussian Elimination and such:
- Basically write out the augmented matrix (coefficients + constants) and row reduce it

Echelon form:
- The first nonzero entry in any nonzero row occurs to the right of the first nonzero entry in the row DIRECTLY above it
- All zero rows are grouped together at the bottom

Row-Reduced Echelon form:
- A is in echelon form
- All pivot entries are 1
- All entries above pivots are 0

Theorem (More Unknowns): If there are more unknowns than equations (m by n coefficient matrix with m < n, so rank <= m < n), there are either no solutions or infinitely many. If the system is consistent, it has nonpivot variables that can be set arbitrarily.

Theorem: a linear system is solvable IFF the constant vector B belongs to the column space of the coefficient matrix.

Subspace:
1) is the span of a set of vectors (a basis can always be chosen, so the spanning vectors may be taken linearly independent)
2) is a collection of elements that contains the zero vector and is closed under linear combinations (for x, y in the collection and scalars a, b, ax + by is also in it)
- Higher dimensional analogues of lines and planes through the origin

The fundamental subspaces include:

Rowspace: the subspace spanned by the rows of the matrix M; the pivot (nonzero) rows of an echelon form of M are a basis for it

Column Space: the subspace spanned by the columns of A; the pivot columns of the original matrix are a basis for it
- For an m x n matrix A, it is the set of vectors B for which AX = B is solvable (the constant vector B is in the column space of A exactly when the equation is solvable)
- Subspace of R^m
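The elimination steps and the row-reduced echelon form described above can be sketched in Python. This is only an illustrative sketch: the function name `rref` and the sample system are assumptions made for this example, not part of the notes. Exact fractions are used so that no rounding hides a pivot.

```python
from fractions import Fraction

def rref(rows):
    """Return (R, pivot_cols): the row-reduced echelon form and its pivot columns."""
    A = [[Fraction(x) for x in row] for row in rows]
    m, n = len(A), len(A[0])
    pivot_cols = []
    r = 0  # index of the row that will hold the next pivot
    for c in range(n):
        # look for a row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, m) if A[i][c] != 0), None)
        if p is None:
            continue                      # no pivot in this column
        A[r], A[p] = A[p], A[r]           # row swap
        piv = A[r][c]
        A[r] = [x / piv for x in A[r]]    # scale so the pivot entry is 1
        for i in range(m):                # clear the rest of the pivot column
            if i != r and A[i][c] != 0:
                f = A[i][c]
                A[i] = [a - f * b for a, b in zip(A[i], A[r])]
        pivot_cols.append(c)
        r += 1
        if r == m:
            break
    return A, pivot_cols

# Hypothetical system with more unknowns than equations:
#   x + 2y +  z = 3
#  2x + 4y + 3z = 8
aug = [[1, 2, 1, 3],
       [2, 4, 3, 8]]
R, pivots = rref(aug)
print(R)       # rows of the RREF, as Fractions
print(pivots)  # pivot columns (0-indexed)
```

Here the constants column is not a pivot column, so the system is consistent, and the second unknown is a nonpivot variable that can be set arbitrarily, which matches the More Unknowns theorem.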

Nullspace or Kernel: the subspace of solutions to the equation AX = 0; for a linear transformation, consists of all inputs that get mapped to the zero vector
- subspace of the domain space (R^n for an m x n matrix A), not of the column space
- if the nullspace is {0}, a solution to AX = B is unique whenever one exists (and the matrix has full column rank)
- given by the spanning vectors in the general solution
- if the kernel of a linear transformation is {0}, then the map is injective (one-to-one); this alone does not make it surjective (onto)
- general solution to the system: translate of the nullspace
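The idea that the general solution is a translate of the nullspace can be checked numerically. The following is a minimal sketch under stated assumptions: the matrix `A`, the constant vector `B`, the particular solution `T`, and the nullspace vector `Z` are all hypothetical values chosen for illustration.

```python
def matvec(A, x):
    """Multiply matrix A (list of rows) by vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

# Hypothetical 2 x 3 system AX = B
A = [[1, 2, 1],
     [2, 4, 3]]
B = [3, 8]

T = [1, 0, 2]    # one particular solution: A T = B
Z = [-2, 1, 0]   # a nonzero nullspace vector: A Z = 0 (the nullspace here is 1-dimensional)

def general_solution(t):
    """Particular solution plus a multiple of the nullspace vector."""
    return [ti + t * zi for ti, zi in zip(T, Z)]

for t in (-1, 0, 5):
    X = general_solution(t)
    print(t, X, matvec(A, X))  # the last list equals B for every t
```

Because the nullspace contains nonzero vectors, the solution is not unique; every choice of `t` gives another solution.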

(t) translation theorem
- A is an m x n matrix; T is any solution to AX = B
- General solution: X = T + Z, where Z belongs to the nullspace
- T can be any arbitrary solution to the system
- Solution to AX = B is unique IFF the zero vector is the only element of the nullspace

(t) nullspace of an m x n matrix is a subspace of R^n

def: LINEAR INDEPENDENCE
- a set of vectors { v1, v2, v3, v4 } is linearly dependent if there exist scalars a, b, c, d with a*v1 + b*v2 + c*v3 + d*v4 = 0 and a, b, c, d are not all 0
- a set of n linearly independent vectors in an n-dimensional space is a basis for the space and spans it
- i.e. a set is linearly dependent if one of its vectors can be written as a linear combination of the others
- i.e. any set containing the 0 vector is dependent
- testing for linear independence: consider the dependency equation x1*A1 + x2*A2 + ... + xk*Ak = 0, multiply it out into a homogeneous system, and row reduce; the vectors are independent IFF the only solution is x1 = ... = xk = 0

RANK of a matrix: can be found as
- the number of pivot variables
- the number of nonzero rows in an echelon form of matrix A
- dimension of row space = dimension of column space = rank

Notes on rank: rank A = rank A^t
corollary: for an m x n matrix A, rank A <= m and rank A <= n
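Counting pivots gives a direct way to compute rank, and the same count gives a test for linear independence. The sketch below is illustrative only; the helper names `rank`, `transpose`, and `independent` and the sample matrix are assumptions made for this example.

```python
from fractions import Fraction

def rank(rows):
    """Rank = number of pivots found by forward elimination to echelon form."""
    A = [[Fraction(x) for x in row] for row in rows]
    m, n = len(A), len(A[0])
    r = 0  # number of pivots found so far
    for c in range(n):
        p = next((i for i in range(r, m) if A[i][c] != 0), None)
        if p is None:
            continue
        A[r], A[p] = A[p], A[r]
        for i in range(r + 1, m):          # clear entries below the pivot
            f = A[i][c] / A[r][c]
            A[i] = [a - f * b for a, b in zip(A[i], A[r])]
        r += 1
        if r == m:
            break
    return r

def transpose(rows):
    return [list(col) for col in zip(*rows)]

def independent(vectors):
    """Vectors are independent IFF the matrix with them as columns has rank = number of vectors."""
    return rank(transpose(vectors)) == len(vectors)

A = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]
print(rank(A), rank(transpose(A)))           # row rank and column rank agree
print(independent([[1, 0, 1], [0, 1, 1]]))   # neither vector is a multiple of the other
print(independent([[1, 2], [2, 4]]))         # second vector is twice the first
```

The second row of `A` is twice the first, so one row is lost during elimination and the rank drops below 3.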

Notes on solvability and rank, given A the m x n matrix:
1) if rank A = n (full column rank): the nullspace is {0}, so a solution to AX = B is unique whenever it exists
2) if rank A = m (full row rank): system is always solvable
   a. there are m linearly independent columns, so they are a basis for R^m and every B is in the column space
3) if rank A = n and A is square, n by n (n unknowns and n equations):
   a. system is always solvable and solution is always unique
   b. null A = 0
   c. A is invertible (rank n, n x n matrix)

Rank-nullity theorem: rank A + null A = n (# columns), where null A is the dimension of the nullspace

LINEARITY PROPERTIES:
- transformation T: takes elements X from U, the domain, and produces T(X) in V (target space)
- T: U -> V is represented by an m x n matrix A when U = R^n and V = R^m
- if S is a subset of the domain of T, the image of S under T is the set of all T(X) where X is in S
- Linear transformations: transformations of linear combinations = linear combinations of transformations, i.e. T(aX + bY) = aT(X) + bT(Y)
- **GIVEN: linear transformation T, find its matrix: column j of the matrix is T applied to the jth standard basis vector

MATRIX MULTIPLICATION: really the process of forming linear combinations of the columns of the coefficient matrix
- Leads to composition of transformations
- (S o T)(X) = S(T(X)); the matrix of S o T is the product of the matrix of S and the matrix of T

INVERSES:
- If we say multiplying by the inverse B of A solves AX = Y, then X = BY:
  o A(BY) = Y -> (AB)Y = Y for every Y, so AB = I
- A square matrix A is invertible IFF A is nonsingular (AX = 0 has only the solution X = 0)

LU Factorization:
- you go through a series of EROs (no row swaps, though) to arrive at an upper triangular matrix [echelon]. That's your U.
- The inverse of your other matrix (the one that used to be the identity) will be lower triangular; that's your L
- Diagonal matrix: nonzero entries only along the diagonal
- By the way, when only row additions are used, the L matrix is unipotent (1s on the diagonal)

If you need a row swap, permute A first
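The LU procedure (row additions only, no row swaps) can be sketched as follows. This is a minimal sketch, not a production routine: the function names `lu_no_swaps` and `matmul` and the sample matrix are assumptions made for this example, and the code simply raises an error when a row swap would be needed.

```python
from fractions import Fraction

def lu_no_swaps(rows):
    """Factor a square matrix A as L U using only row additions."""
    n = len(rows)
    U = [[Fraction(x) for x in row] for row in rows]
    # L starts as the identity and collects the multipliers
    L = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    for c in range(n - 1):
        if U[c][c] == 0:
            raise ValueError("zero pivot: permute the rows of A first")
        for i in range(c + 1, n):
            f = U[i][c] / U[c][c]
            U[i] = [a - f * b for a, b in zip(U[i], U[c])]  # row_i -= f * row_c
            L[i][c] = f   # storing f in L undoes that row addition
    return L, U

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

A = [[2, 1, 1],
     [4, 3, 3],
     [8, 7, 9]]
L, U = lu_no_swaps(A)
print(L)                   # lower triangular with 1s on the diagonal
print(U)                   # upper triangular
print(matmul(L, U) == A)   # True: the product recovers A
```

Storing each multiplier directly in L works because the inverse of "subtract f times row c from row i" is "add f times row c to row i", and the product of those inverses is lower triangular with the multipliers below the diagonal.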

BASES: a set of linearly independent vectors that spans an n-dimensional subspace; has n elements
- Minimally spanning set = maximally linearly independent set
1) finding the first basis from RREF
   a. second basis can just come from any echelon form (EROs are fair game)

LINEAR TRANSFORMATIONS:
- Def of linearity: T(X) + T(Y) = T(X + Y) and T(cX) = cT(X)
- Maps from a vector space to another vector space, e.g. from R^n to R^m; this can be written as a matrix A, which is m x n: T(X) = AX

Coordinate vector: X = [x, y] is the coordinate vector of the point x*b1 + y*b2 (just a linear combination of the basis vectors b1, b2)
- Note this is dependent on the order of the basis vectors
- Uniqueness of the coordinates depends on linear independence of the basis

Facts: the product of two lower triangular matrices is lower triangular; similarly for upper triangular
- pf (lower triangular A, B): the dot product of the ith row of A and the jth column of B is 0 for i < j, since row i of A is zero past column i and column j of B is zero above row j

Theorem/prop: A is an m x n matrix, I is the m x m identity. [A | I] is row-equivalent to [U | B], where U is upper triangular/in echelon form and B is m x m. B is invertible and A = B^-1 U; with B^-1 = L, A = LU

Getting this L matrix: each elementary matrix is the identity matrix except for one element
- Linear combinations of rows (add c times row j to row i): the identity with c in entry (i, j); its inverse is the identity with -c in entry (i, j)
- Scalar multiples (multiply row i by c != 0): the identity with c in entry (i, i); its inverse is the identity with 1/c in entry (i, i)

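Determinant:
- Laplace/cofactor expansion: expanding along any row/column [EXAMPLE]
- Expanding along an even-numbered row/column with the first-row sign pattern (+, -, +, ...) gives -det(A), by the row-exchange property; the cofactor signs (-1)^(i+j) account for this
- General formula (expansion along row i): det A = sum over j = 1..n of (-1)^(i+j) * a_ij * det(A_ij), where A_ij is A with row i and column j deleted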

Scalar property: If you take the same matrix but multiply a row by a scalar c, you need to multiply the determinant by the same factor c (follows by Laplace expansion)

Row Exchange Property: exchanging 2 rows of a matrix gives the negative of the determinant

(The determinant of an upper triangular matrix is the product of the entries on the main diagonal.)

Additive Property: det [U+V, a2, a3] = det [U, a2, a3] + det [V, a2, a3]

Reduction of determinants:
- Any n x n matrix with 2 equal rows has determinant 0 (by row exchange: swapping the equal rows negates the determinant but leaves the matrix unchanged)
- * adding a scalar multiple of one row to another does not change the determinant (decompose by the additive property, factor out the scalar, and the extra term has 2 equal rows, so it is 0); row swaps and row scalings change it only by a nonzero factor
- ** an n x n matrix is invertible IFF det A != 0, i.e. its echelon form has no zero rows (full rank n x n matrix)
  proof: the determinant of any matrix is a nonzero multiple of the determinant of its RREF; if the RREF is not I, it has a zero row, so det = 0
- ** the determinant function is unique

Theorem 4 (product theorem): det(AB) = det(A) det(B)

Theorem 5: for all n x n matrices, det A = det A^t
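The determinant properties listed above can be checked on a small example with a direct cofactor expansion. This is a sketch for illustration: the function names and the two sample matrices are assumptions, and the recursive expansion is only practical for small matrices.

```python
def minor(A, i, j):
    """A with row i and column j deleted."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]

def det(A, row=0):
    """Laplace expansion along the given row (0-indexed), using signs (-1)^(i+j)."""
    n = len(A)
    if n == 1:
        return A[0][0]
    return sum((-1) ** (row + j) * A[row][j] * det(minor(A, row, j))
               for j in range(n))

def transpose(A):
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    return [[sum(a * b for a, b in zip(r, c)) for c in zip(*B)] for r in A]

A = [[2, 1, 1],
     [4, 3, 3],
     [8, 7, 9]]
B = [[1, 2, 0],
     [0, 1, 4],
     [3, 0, 1]]

print(det(A), det(A, row=1), det(A, row=2))   # same value along every row
print(det(transpose(A)))                       # det A = det A^t
print(det(matmul(A, B)), det(A) * det(B))      # product theorem
print(det([A[1], A[0], A[2]]))                 # row exchange flips the sign
print(det([[3 * x for x in A[0]], A[1], A[2]]))  # scaling a row by 3 scales det by 3
```

With the cofactor signs in place, the expansion gives the same value along every row, which is the point of the sign pattern.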

Point matrix and coordinate matrix:
- X = Pb * (coordinate vector of X), where the point matrix Pb is the n x n matrix whose columns are the basis vectors
- Multiplying the coordinate vector of X by Pb generates the point X
- Pb is invertible; its inverse is Cb, the coordinate matrix; multiplying a point by Cb produces the coordinates of that point
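A small 2 x 2 case makes the roles of the point matrix and the coordinate matrix concrete. The basis, the point, and the helper names below are assumptions chosen for illustration; the 2 x 2 inverse formula is used only to keep the sketch short.

```python
from fractions import Fraction

def matvec(A, x):
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def inverse_2x2(M):
    """Inverse of a 2 x 2 matrix via the adjugate formula."""
    (a, b), (c, d) = M
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("columns are not a basis")
    return [[d / det, -b / det], [-c / det, a / det]]

# Hypothetical ordered basis b1 = (1, 1), b2 = (1, -1), stored as the columns of Pb
Pb = [[1, 1],
      [1, -1]]
Cb = inverse_2x2(Pb)          # coordinate matrix

point = [3, 1]
coords = matvec(Cb, point)    # coordinates of the point relative to (b1, b2)
print(coords)
print(matvec(Pb, coords))     # multiplying by Pb recovers the point
```

Swapping the order of the basis vectors swaps the columns of Pb and therefore swaps the coordinates, which is why the coordinate vector depends on the order of the basis.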

You might also like

- Emerging Technology Workshop COLADocument3 pagesEmerging Technology Workshop COLARaj Daniel Magno58% (12)

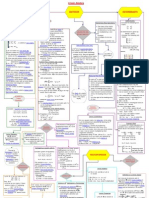

- Linear Algebra Flow Diagram 1Document1 pageLinear Algebra Flow Diagram 1stansmith1100% (1)

- BCA - 1st - SEMESTER - MATH NotesDocument25 pagesBCA - 1st - SEMESTER - MATH Noteskiller botNo ratings yet

- Inventor ReportDocument7 pagesInventor Report2cekal50% (2)

- Linear Algebra Cheat SheetDocument2 pagesLinear Algebra Cheat SheetBrian WilliamsonNo ratings yet

- MATH2101 Cheat SheetDocument3 pagesMATH2101 Cheat SheetWong NgNo ratings yet

- Linear Algebra Cheat SheetDocument3 pagesLinear Algebra Cheat SheetMiriam Quan100% (1)

- Linear Algebra (Bretscher) Chapter 3 Notes: 1 Image and Kernel of A Linear TransformationDocument4 pagesLinear Algebra (Bretscher) Chapter 3 Notes: 1 Image and Kernel of A Linear TransformationAlex NosratNo ratings yet

- Kashif Khan Assignment of Linear Algebra.Document10 pagesKashif Khan Assignment of Linear Algebra.engineerkashif97No ratings yet

- Eigenvalues of GraphsDocument29 pagesEigenvalues of GraphsDenise ParksNo ratings yet

- Linear AlgebraDocument7 pagesLinear Algebrataych03No ratings yet

- Lin Agebra RevDocument18 pagesLin Agebra RevMustafa HaiderNo ratings yet

- Linear Algebra HintsDocument17 pagesLinear Algebra Hintsgeetharaman1699No ratings yet

- Math C1 - Matrices and VectorsDocument7 pagesMath C1 - Matrices and VectorsIvetteNo ratings yet

- Math C1 - Matrices and VectorsDocument14 pagesMath C1 - Matrices and VectorsIvetteNo ratings yet

- Gate Mathematics BasicsDocument11 pagesGate Mathematics Basicsprabhu81No ratings yet

- 2.7.3 Example: 2.2 Matrix AlgebraDocument8 pages2.7.3 Example: 2.2 Matrix Algebrafredy8704No ratings yet

- Rf4002a50 MTH101Document20 pagesRf4002a50 MTH101Abhishek SharmaNo ratings yet

- Vector Spaces: All R Is X-Y Plane R Is A LineDocument6 pagesVector Spaces: All R Is X-Y Plane R Is A LineMahesh AbnaveNo ratings yet

- Questions CoprihensiveVivaDocument3 pagesQuestions CoprihensiveVivapalas1991-1No ratings yet

- Mce371 13Document19 pagesMce371 13Abul HasnatNo ratings yet

- 1 Linear AlgebraDocument5 pages1 Linear AlgebraSonali VasisthaNo ratings yet

- Linear Algebra - Module 1Document52 pagesLinear Algebra - Module 1TestNo ratings yet

- Linear Algebra Review Sheet - Chapter 1: by Vincent FiorentiniDocument2 pagesLinear Algebra Review Sheet - Chapter 1: by Vincent FiorentiniMichika MaedaNo ratings yet

- Math PrimerDocument13 pagesMath Primertejas.s.mathaiNo ratings yet

- Linear Equations Linear AlgebraDocument4 pagesLinear Equations Linear AlgebraSrijit PaulNo ratings yet

- cs530 12 Notes PDFDocument188 pagescs530 12 Notes PDFyohanes sinagaNo ratings yet

- Rank of A MatrixDocument4 pagesRank of A MatrixSouradeep J ThakurNo ratings yet

- Eigenvector and Eigenvalue ProjectDocument10 pagesEigenvector and Eigenvalue ProjectIzzy ConceptsNo ratings yet

- Mit18 06scf11 Ses1.5sumDocument3 pagesMit18 06scf11 Ses1.5sumZille SubhaniNo ratings yet

- Mat133 Reveiw NotesDocument27 pagesMat133 Reveiw NotesDimitre CouvavasNo ratings yet

- S10 Prodigies PPTDocument33 pagesS10 Prodigies PPTKrish GuptaNo ratings yet

- For A Given Vector in 2D Space, Stretching It by A Value of 2 Is CalledDocument23 pagesFor A Given Vector in 2D Space, Stretching It by A Value of 2 Is CalledIgorJales100% (1)

- MathematicsDocument66 pagesMathematicsTUSHIT JHANo ratings yet

- 11 Linearmodels 3Document13 pages11 Linearmodels 3SCRBDusernmNo ratings yet

- Engg AnalysisDocument117 pagesEngg AnalysisrajeshtaladiNo ratings yet

- Linear Algebra Nut ShellDocument6 pagesLinear Algebra Nut ShellSoumen Bose100% (1)

- Solution For Linear SystemsDocument47 pagesSolution For Linear Systemsshantan02No ratings yet

- Hanilen G. Catama February 5, 2014 Bscoe 3 Emath11Document5 pagesHanilen G. Catama February 5, 2014 Bscoe 3 Emath11Hanilen CatamaNo ratings yet

- The Four Fundamental SubspacesDocument4 pagesThe Four Fundamental SubspacesGioGio2020No ratings yet

- Mathematics For Economists Slides - 3Document30 pagesMathematics For Economists Slides - 3HectorNo ratings yet

- Systems of Linear EquationsDocument10 pagesSystems of Linear EquationsDenise ParksNo ratings yet

- Differential Calculus Made Easy by Mark HowardDocument178 pagesDifferential Calculus Made Easy by Mark HowardLeonardo DanielliNo ratings yet

- Week-5 Session 2Document21 pagesWeek-5 Session 2Vinshi JainNo ratings yet

- Vector Space PresentationDocument26 pagesVector Space Presentationmohibrajput230% (1)

- Assignment 2 Kashif KhanDocument17 pagesAssignment 2 Kashif Khanengineerkashif97No ratings yet

- Fom Assignment 1Document23 pagesFom Assignment 11BACS ABHISHEK KUMARNo ratings yet

- On The Topic: Submitted byDocument10 pagesOn The Topic: Submitted byAkshay KumarNo ratings yet

- Vectors and MatricesDocument8 pagesVectors and MatricesdorathiNo ratings yet

- 4 Pictures of The Same Thing: Picture (I) : Systems of Linear EquationsDocument4 pages4 Pictures of The Same Thing: Picture (I) : Systems of Linear EquationsthezackattackNo ratings yet

- MATHEMATICS, Lecture 1: Carmen HerreroDocument28 pagesMATHEMATICS, Lecture 1: Carmen HerreroHun HaiNo ratings yet

- Chapter 26 - Remainder - A4Document9 pagesChapter 26 - Remainder - A4GabrielPaintingsNo ratings yet

- Vectors and MatricesDocument8 pagesVectors and MatricesdorathiNo ratings yet

- Linear Algebra IDocument43 pagesLinear Algebra IDaniel GreenNo ratings yet

- 8 Rank of A Matrix: K J N KDocument4 pages8 Rank of A Matrix: K J N KPanji PradiptaNo ratings yet

- Left Right Pseudo-Inverse PDFDocument4 pagesLeft Right Pseudo-Inverse PDFYang CaoNo ratings yet

- WEEK 5-StudentDocument47 pagesWEEK 5-Studenthafiz patahNo ratings yet

- Rank of A Matrix: M × N M × NDocument4 pagesRank of A Matrix: M × N M × NDevenderNo ratings yet

- An Introduction to Linear Algebra and TensorsFrom EverandAn Introduction to Linear Algebra and TensorsRating: 1 out of 5 stars1/5 (1)

- Basic Traffic Shaping Based On Layer-7 Protocols - MikroTik WikiDocument14 pagesBasic Traffic Shaping Based On Layer-7 Protocols - MikroTik Wikikoulis123No ratings yet

- 16CS3123-Java Programming Course File-AutonomousDocument122 pages16CS3123-Java Programming Course File-AutonomousSyed WilayathNo ratings yet

- Subfiles by Michael CataliniDocument508 pagesSubfiles by Michael Catalinignani123No ratings yet

- Techniques of Integration Thomas FinneyDocument6 pagesTechniques of Integration Thomas FinneyVineet TannaNo ratings yet

- Advanced Excel FormulasDocument318 pagesAdvanced Excel FormulasRatana KemNo ratings yet

- MultiplexersDocument23 pagesMultiplexersAsim WarisNo ratings yet

- Design of An Efficient FIFO Buffer For Network On Chip RoutersDocument4 pagesDesign of An Efficient FIFO Buffer For Network On Chip RoutersAmityUniversity IIcNo ratings yet

- Lab Manuals DDBSDocument67 pagesLab Manuals DDBSzubair100% (1)

- 1617 s4 Mat Whole (Eng) Fe (p2) QusDocument7 pages1617 s4 Mat Whole (Eng) Fe (p2) QusCHIU KEUNG OFFICIAL PRONo ratings yet

- Ma102intro PDFDocument9 pagesMa102intro PDFSarit BurmanNo ratings yet

- Tutorial 7 Introduction To Airbag FoldingDocument12 pagesTutorial 7 Introduction To Airbag Foldingarthurs9792100% (1)

- Mindtree Annual Report 2016 17Document272 pagesMindtree Annual Report 2016 17janpath3834No ratings yet

- SAP Gioia Tauro Terminal ProblemDocument26 pagesSAP Gioia Tauro Terminal Problemapi-3750011No ratings yet

- Pt855tadm b112016Document406 pagesPt855tadm b112016viktorNo ratings yet

- Central Processing Unit: From Wikipedia, The Free EncyclopediaDocument18 pagesCentral Processing Unit: From Wikipedia, The Free EncyclopediaJoe joNo ratings yet

- Monte Carlo Method For Solving A Parabolic ProblemDocument6 pagesMonte Carlo Method For Solving A Parabolic ProblemEli PaleNo ratings yet

- Quick Help For EDI SEZ IntegrationDocument2 pagesQuick Help For EDI SEZ IntegrationsrinivasNo ratings yet

- 135 To 150 Mcqs Word Best McqsDocument3 pages135 To 150 Mcqs Word Best McqsSaadNo ratings yet

- OFDMADocument29 pagesOFDMAterryNo ratings yet

- Resume Writing Tips For FreshersDocument5 pagesResume Writing Tips For FreshersAnshul Tayal100% (1)

- Chi-Square, Student's T and Snedecor's F DistributionsDocument20 pagesChi-Square, Student's T and Snedecor's F DistributionsASClabISBNo ratings yet

- Brall 2007Document6 pagesBrall 2007ronaldNo ratings yet

- DDR3 VsDocument3 pagesDDR3 VsMichael NavarroNo ratings yet

- Arcswat Manual PDFDocument64 pagesArcswat Manual PDFguidoxlNo ratings yet

- As 400Document162 pagesAs 400avez4uNo ratings yet

- Mc-Simotion Scout ConfiguringDocument298 pagesMc-Simotion Scout ConfiguringBruno Moraes100% (1)

- FC1 625Document50 pagesFC1 625LOL1044No ratings yet

- 26 Time-Management, Productivity TricksDocument28 pages26 Time-Management, Productivity TricksShahzeb AnwarNo ratings yet