Uploaded by priya shashikumar

92 views1 pageThe document outlines 12 assignment questions related to machine learning classification algorithms including decision trees, candidate generation and pruning in decision trees, expressing attribute conditions, Hunt's algorithm, constructing a decision tree on a sample data set, the Apriori algorithm and frequent item set generation, alternative frequent item set generation methods, constructing a hash tree and identifying leaf nodes for a transaction, constructing an FP-tree in the FP-Growth algorithm from a transaction data set, explaining classification problems and approaches, measures for selecting the best split in decision trees, overfitting in models and how to address it, and characteristics of decision tree induction.

Original Description:

Original Title

DM Assignment questions

Copyright

© © All Rights Reserved

Available Formats

DOCX, PDF, TXT or read online from Scribd

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentThe document outlines 12 assignment questions related to machine learning classification algorithms including decision trees, candidate generation and pruning in decision trees, expressing attribute conditions, Hunt's algorithm, constructing a decision tree on a sample data set, the Apriori algorithm and frequent item set generation, alternative frequent item set generation methods, constructing a hash tree and identifying leaf nodes for a transaction, constructing an FP-tree in the FP-Growth algorithm from a transaction data set, explaining classification problems and approaches, measures for selecting the best split in decision trees, overfitting in models and how to address it, and characteristics of decision tree induction.

Copyright:

© All Rights Reserved

Available Formats

Download as DOCX, PDF, TXT or read online from Scribd

0 ratings0% found this document useful (0 votes)

92 views1 pageAssignment Questions: 4. Construct Decision Tree For The Following Data Set

Uploaded by

priya shashikumarThe document outlines 12 assignment questions related to machine learning classification algorithms including decision trees, candidate generation and pruning in decision trees, expressing attribute conditions, Hunt's algorithm, constructing a decision tree on a sample data set, the Apriori algorithm and frequent item set generation, alternative frequent item set generation methods, constructing a hash tree and identifying leaf nodes for a transaction, constructing an FP-tree in the FP-Growth algorithm from a transaction data set, explaining classification problems and approaches, measures for selecting the best split in decision trees, overfitting in models and how to address it, and characteristics of decision tree induction.

Copyright:

© All Rights Reserved

Available Formats

Download as DOCX, PDF, TXT or read online from Scribd

You are on page 1of 1

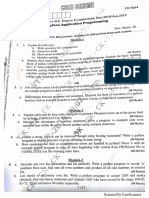

Assignment questions

1. Describe the different methods of candidate generation and pruning.
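
One common scheme for question 1 is the F(k-1) × F(k-1) method used by Apriori. Two frequent (k-1)-itemsets are merged only if their first k-2 items (in sorted order) agree. A candidate is then pruned if any of its (k-1)-subsets is infrequent. The sketch below assumes itemsets are given as frozensets of mutually comparable items. The function name and the example itemsets are hypothetical.

```python
from itertools import combinations


def generate_candidates(frequent_prev):
    """F(k-1) x F(k-1) candidate generation followed by Apriori-style pruning.

    frequent_prev: set of frozensets, each a frequent (k-1)-itemset.
    Returns the candidate k-itemsets that survive pruning.
    """
    # Sort every itemset so that "the first k-2 items" is well defined.
    ordered = sorted(tuple(sorted(s)) for s in frequent_prev)
    candidates = set()
    for i in range(len(ordered)):
        for j in range(i + 1, len(ordered)):
            a, b = ordered[i], ordered[j]
            # Candidate generation: merge only pairs sharing their first k-2 items.
            if a[:-1] == b[:-1]:
                candidates.add(frozenset(a + b))
    # Candidate pruning: every (k-1)-subset of a candidate must itself be frequent.
    return {
        c for c in candidates
        if all(frozenset(sub) in frequent_prev for sub in combinations(c, len(c) - 1))
    }


# Hypothetical frequent 2-itemsets.
F2 = {frozenset(s) for s in [(1, 2), (1, 3), (2, 3), (2, 4)]}
print(generate_candidates(F2))  # {frozenset({1, 2, 3})}; {2, 3, 4} is pruned since {3, 4} is infrequent
```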

2. Explain the various methods for expressing attribute test conditions.
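
For a nominal attribute, one option is a multiway split with one branch per value. Another is a binary split that groups the values into two subsets. With k distinct values there are 2^(k-1) - 1 such binary groupings. The sketch below enumerates them. The function name and the car-type values are hypothetical. For ordinal attributes, groupings that break the value order are normally not allowed, and this sketch does not check for that.

```python
from itertools import combinations


def binary_groupings(values):
    """Enumerate the distinct two-way partitions of a nominal attribute's values."""
    values = sorted(set(values))
    first, rest = values[0], values[1:]
    groupings = []
    # Keep the first value on the left so mirror-image splits are not counted twice;
    # r stops before len(rest) so the right-hand side is never empty.
    for r in range(len(rest)):
        for combo in combinations(rest, r):
            left = {first, *combo}
            right = set(values) - left
            groupings.append((left, right))
    return groupings


for left, right in binary_groupings(["Family", "Sports", "Luxury"]):
    print(sorted(left), "vs", sorted(right))  # 3 groupings = 2**(3-1) - 1
```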

3. How are decision trees used for classification? Write Hunt's algorithm and illustrate its working.
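
Hunt's algorithm grows the tree recursively. If all records at a node belong to the same class, the node becomes a leaf with that class. Otherwise, an attribute test condition partitions the records, and the procedure is applied to each child. Below is a minimal Python sketch, run on the question 4 data. It picks attributes in a fixed order purely as a placeholder; a real implementation would choose the test with an impurity measure. It labels an empty child with the parent's majority class and creates a majority-class leaf when no attributes remain. The names `hunt`, `majority` and `domains` are hypothetical.

```python
from collections import Counter

# Data set from question 4: (Age, Competition, Type, Profit).
ROWS = [
    ("old", "yes", "s/w", "Down"), ("old", "no", "s/w", "Down"),
    ("old", "no", "h/w", "Down"), ("mid", "yes", "s/w", "Down"),
    ("mid", "yes", "h/w", "Down"), ("mid", "no", "h/w", "Up"),
    ("mid", "no", "s/w", "Up"), ("new", "yes", "s/w", "Up"),
    ("new", "no", "h/w", "Up"), ("new", "no", "s/w", "Up"),
]
NAMES = ["Age", "Competition", "Type", "Profit"]
RECORDS = [dict(zip(NAMES, r)) for r in ROWS]


def majority(labels):
    """Most frequent class label (ties go to the label seen first)."""
    return Counter(labels).most_common(1)[0][0]


def hunt(records, attributes, target, domains, default=None):
    """Return a class label (leaf) or (attribute, {value: subtree}) (internal node)."""
    if not records:                       # empty child: use the parent's majority class
        return default
    labels = [r[target] for r in records]
    if len(set(labels)) == 1:             # pure node: leaf labelled with that class
        return labels[0]
    if not attributes:                    # no test left: majority-class leaf
        return majority(labels)
    attr, remaining = attributes[0], attributes[1:]   # placeholder attribute choice
    node_majority = majority(labels)
    children = {}
    for value in domains[attr]:           # one child per possible value (multiway split)
        subset = [r for r in records if r[attr] == value]
        children[value] = hunt(subset, remaining, target, domains, node_majority)
    return (attr, children)


attributes = ["Age", "Competition", "Type"]
domains = {a: list(dict.fromkeys(r[a] for r in RECORDS)) for a in attributes}
tree = hunt(RECORDS, attributes, "Profit", domains)
print(tree)
# ('Age', {'old': 'Down', 'mid': ('Competition', {'yes': 'Down', 'no': 'Up'}), 'new': 'Up'})
```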

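4. Construct a decision tree for the following data set.

| Age | Competition | Type | Profit |
|-----|-------------|------|--------|
| old | yes | s/w | Down |
| old | no | s/w | Down |
| old | no | h/w | Down |
| mid | yes | s/w | Down |
| mid | yes | h/w | Down |
| mid | no | h/w | Up |
| mid | no | s/w | Up |
| new | yes | s/w | Up |
| new | no | h/w | Up |
| new | no | s/w | Up |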
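
The question does not specify a splitting criterion. The sketch below assumes information gain (entropy reduction, as in ID3) and scores each attribute on the data set above. Working it by hand: the parent has 5 Down and 5 Up records, so its entropy is 1. Age gives a gain of 1 - 0.4 × 1 = 0.6, Competition about 0.125, and Type 0, so Age becomes the root. Within Age = mid, Competition separates the classes perfectly (gain 1), while the old and new branches are already pure. The resulting tree is: Age = old gives Down, Age = new gives Up, and Age = mid splits on Competition (yes gives Down, no gives Up).

```python
from collections import Counter
from math import log2

# Data set from question 4: (Age, Competition, Type, Profit).
ROWS = [
    ("old", "yes", "s/w", "Down"), ("old", "no", "s/w", "Down"),
    ("old", "no", "h/w", "Down"), ("mid", "yes", "s/w", "Down"),
    ("mid", "yes", "h/w", "Down"), ("mid", "no", "h/w", "Up"),
    ("mid", "no", "s/w", "Up"), ("new", "yes", "s/w", "Up"),
    ("new", "no", "h/w", "Up"), ("new", "no", "s/w", "Up"),
]
ATTRS = ["Age", "Competition", "Type"]


def entropy(labels):
    """Entropy (base 2) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def info_gain(rows, col):
    """Parent entropy minus the size-weighted entropy of the children of column `col`."""
    groups = {}
    for r in rows:
        groups.setdefault(r[col], []).append(r[-1])
    weighted = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([r[-1] for r in rows]) - weighted


for i, name in enumerate(ATTRS):
    print(f"{name:<12} gain = {info_gain(ROWS, i):.3f}")
# Age 0.600, Competition 0.125, Type 0.000 -> split on Age first

mid = [r for r in ROWS if r[0] == "mid"]
print("Age = mid:", [round(info_gain(mid, i), 3) for i in (1, 2)])
# [1.0, 0.0] -> split the mid branch on Competition
```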

5. Describe frequent itemset generation in the Apriori algorithm with an example.
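
Apriori generates frequent itemsets level by level. It first counts the 1-itemsets. It then repeatedly builds candidate k-itemsets from the frequent (k-1)-itemsets, prunes any candidate that has an infrequent subset, and counts the survivors in one pass over the data. It stops when a level produces no frequent itemsets. Below is a compact Python sketch, run on the transactions from question 8 with an assumed minimum support count of 3 (the assignment does not give one). Each transaction is written as a string of single-letter items for brevity.

```python
from itertools import combinations

# Transactions from question 8 (TIDs 1-10).
TRANSACTIONS = ["ab", "bcd", "acde", "ade", "abc", "abcd", "a", "abc", "abd", "bce"]


def apriori(transactions, minsup_count):
    """Return {frequent itemset: support count} using level-wise Apriori search."""
    transactions = [frozenset(t) for t in transactions]
    # Level 1: count single items.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    frequent = {s: c for s, c in counts.items() if c >= minsup_count}
    result = dict(frequent)
    k = 2
    while frequent:
        # Candidate generation: merge (k-1)-itemsets sharing their first k-2 items.
        prev = sorted(tuple(sorted(s)) for s in frequent)
        candidates = set()
        for i in range(len(prev)):
            for j in range(i + 1, len(prev)):
                if prev[i][:-1] == prev[j][:-1]:
                    cand = frozenset(prev[i] + prev[j])
                    # Candidate pruning: all (k-1)-subsets must be frequent.
                    if all(frozenset(s) in frequent for s in combinations(cand, k - 1)):
                        candidates.add(cand)
        # Support counting: one pass over the transactions per level.
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        frequent = {s: c for s, c in counts.items() if c >= minsup_count}
        result.update(frequent)
        k += 1
    return result


frequent = apriori(TRANSACTIONS, 3)
for itemset in sorted(frequent, key=lambda s: (len(s), sorted(s))):
    print("".join(sorted(itemset)), frequent[itemset])
# a 8, b 7, c 6, d 5, e 3, ab 5, ac 4, ad 4, bc 5, bd 3, cd 3, abc 3
```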

6. Describe alternative methods for generating frequent itemsets.
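
One such alternative changes how the transactions are represented. Instead of a horizontal layout, it stores for each item the set of TIDs that contain it (a vertical layout). The support of a larger itemset is then the size of the intersection of TID sets, explored with a depth-first search instead of level by level. The sketch below follows this ECLAT-style idea on the question 8 transactions, with an assumed minimum support count of 3. With these settings it reports 12 frequent itemsets, the largest being {a, b, c}. The function name is hypothetical.

```python
# Transactions from question 8 (TIDs 1-10).
TRANSACTIONS = ["ab", "bcd", "acde", "ade", "abc", "abcd", "a", "abc", "abd", "bce"]


def eclat(transactions, minsup_count):
    """Depth-first frequent itemset mining over a vertical (item -> TID set) layout."""
    tidsets = {}
    for tid, items in enumerate(transactions, start=1):
        for item in items:
            tidsets.setdefault(item, set()).add(tid)
    result = {}

    def extend(prefix, members):
        # members: (item, tidset) pairs that are frequent when added to prefix.
        for i, (item, tids) in enumerate(members):
            itemset = prefix | {item}
            result[itemset] = len(tids)
            # Support of itemset + other is the size of the TID-set intersection.
            suffix = [(other, tids & other_tids) for other, other_tids in members[i + 1:]]
            suffix = [(other, t) for other, t in suffix if len(t) >= minsup_count]
            if suffix:
                extend(itemset, suffix)

    start = sorted((item, tids) for item, tids in tidsets.items() if len(tids) >= minsup_count)
    extend(frozenset(), start)
    return result


frequent = eclat(TRANSACTIONS, 3)
print(len(frequent), frequent[frozenset("abc")])  # 12 3
```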

7. Consider the following set of candidate 3-itemsets: {1,2,3}, {1,2,6}, {1,2,7}, {1,3,4}, {2,3,4}, {2,4,6}, {2,7,8}, {1,7,8}, {3,4,8}, {3,7,9}, {4,7,6}, {4,5,9}, {6,8,9}, {3,6,7}, {3,7,6}, {4,5,6}. Assume the hash function h(p) = p mod 3.

(i) Construct a hash tree.

(ii) Given a transaction that contains the items {1,2,5,6,8}, which of the hash tree leaf nodes will be visited when finding the candidates of the transaction?
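
The question does not state how many itemsets a leaf may hold before it is split. The sketch below assumes 3 (`MAX_LEAF`) and inserts the candidates in the order listed. At depth d it hashes the d-th item with h(p) = p mod 3 to choose one of three branches. Since {3,6,7} and {3,7,6} are the same itemset, only 15 distinct candidates are stored. For part (ii), it enumerates the 3-item subsets of the sorted transaction, follows only the branches those subsets can reach, and records each leaf it touches. Each leaf is identified by the hash values on its path from the root. A different leaf capacity or insertion order gives a different tree, so the printed leaves hold only under these assumptions.

```python
K = 3          # size of the candidate itemsets
MAX_LEAF = 3   # assumed leaf capacity (not given in the question)

RAW = [{1, 2, 3}, {1, 2, 6}, {1, 2, 7}, {1, 3, 4}, {2, 3, 4}, {2, 4, 6},
       {2, 7, 8}, {1, 7, 8}, {3, 4, 8}, {3, 7, 9}, {4, 7, 6}, {4, 5, 9},
       {6, 8, 9}, {3, 6, 7}, {3, 7, 6}, {4, 5, 6}]
# Store each candidate as a sorted tuple; the duplicate {3, 7, 6} collapses into {3, 6, 7}.
CANDIDATES = list(dict.fromkeys(tuple(sorted(c)) for c in RAW))


def h(p):
    return p % 3


class Node:
    def __init__(self, path):
        self.path = path        # hash values on the way down from the root
        self.children = None    # {hash value: Node} once the node is internal
        self.itemsets = []      # candidates stored while the node is a leaf


def insert(node, itemset):
    depth = len(node.path)
    if node.children is not None:         # internal node: hash the item at this depth
        key = h(itemset[depth])
        if key not in node.children:
            node.children[key] = Node(node.path + (key,))
        insert(node.children[key], itemset)
        return
    node.itemsets.append(itemset)
    if len(node.itemsets) > MAX_LEAF and depth < K:
        # Overflowing leaf: convert it to an internal node and redistribute.
        old, node.itemsets, node.children = node.itemsets, [], {}
        for s in old:
            insert(node, s)


def visit(node, transaction, start, leaves):
    """Walk the branches reachable by K-item subsets of a sorted transaction."""
    if node.children is None:
        if all(leaf is not node for leaf in leaves):
            leaves.append(node)
        return
    needed = K - len(node.path)           # items still to pick, including this one
    for i in range(start, len(transaction) - needed + 1):
        child = node.children.get(h(transaction[i]))
        if child is not None:
            visit(child, transaction, i + 1, leaves)


root = Node(())
for c in CANDIDATES:
    insert(root, c)

transaction = (1, 2, 5, 6, 8)
visited = []
visit(root, transaction, 0, visited)
for leaf in visited:
    print("leaf", leaf.path, leaf.itemsets)
# With MAX_LEAF = 3: leaves (1, 2, 0), (1, 0) and (2,); only (1, 2, 6) is contained in the transaction.
```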

8. Consider the following transaction data set. Describe the construction of the FP-tree in the FP-Growth algorithm.

| TID | Items |
|-----|-------|
| 1 | {a,b} |
| 2 | {b,c,d} |
| 3 | {a,c,d,e} |
| 4 | {a,d,e} |
| 5 | {a,b,c} |
| 6 | {a,b,c,d} |
| 7 | {a} |
| 8 | {a,b,c} |
| 9 | {a,b,d} |
| 10 | {b,c,e} |
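
Below is a minimal sketch of FP-tree construction on the data above. It assumes a minimum support count of 2; the question gives none, and with this data every item is frequent at that threshold. The first pass counts item supports (a:8, b:7, c:6, d:5, e:3). The second pass inserts each transaction with its items sorted by decreasing support. Each transaction shares a path with earlier ones that have the same prefix and increments the counts along it. A header table links all nodes for the same item. The class and function names are hypothetical.

```python
from collections import Counter

# Transactions from question 8 (TIDs 1-10).
TRANSACTIONS = ["ab", "bcd", "acde", "ade", "abc", "abcd", "a", "abc", "abd", "bce"]


class FPNode:
    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}


def build_fp_tree(transactions, minsup_count):
    # Pass 1: support counts; keep frequent items ordered by decreasing support.
    support = Counter(item for t in transactions for item in set(t))
    order = sorted((i for i in support if support[i] >= minsup_count),
                   key=lambda i: (-support[i], i))
    rank = {item: r for r, item in enumerate(order)}
    root = FPNode(None, None)
    header = {item: [] for item in order}      # node-links for each item
    # Pass 2: insert each transaction along a shared-prefix path.
    for t in transactions:
        node = root
        for item in sorted((i for i in set(t) if i in rank), key=rank.get):
            child = node.children.get(item)
            if child is None:
                child = FPNode(item, node)
                node.children[item] = child
                header[item].append(child)
            child.count += 1
            node = child
    return root, header


def show(node, depth=0):
    for child in node.children.values():
        print("  " * depth + f"{child.item}:{child.count}")
        show(child, depth + 1)


root, header = build_fp_tree(TRANSACTIONS, 2)
show(root)
# Top level: a:8 (children b:5, c:1, d:1) and b:2 (child c:2)
```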

9. What is classification? Explain the general approach for solving a classification problem with an example.
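
The general approach has two phases. First, a learning algorithm induces a model from a training set whose class labels are known. The model is then applied to a test set, and its performance is summarised with a confusion matrix and accuracy (or error rate). The toy sketch below uses the question 4 data with a hypothetical hold-out split, taking rows 3, 6 and 9 as the test set. A deliberately simple "majority class per Age value" rule stands in for a real learning algorithm.

```python
from collections import Counter, defaultdict

# Data set from question 4: (Age, Competition, Type, Profit).
ROWS = [
    ("old", "yes", "s/w", "Down"), ("old", "no", "s/w", "Down"),
    ("old", "no", "h/w", "Down"), ("mid", "yes", "s/w", "Down"),
    ("mid", "yes", "h/w", "Down"), ("mid", "no", "h/w", "Up"),
    ("mid", "no", "s/w", "Up"), ("new", "yes", "s/w", "Up"),
    ("new", "no", "h/w", "Up"), ("new", "no", "s/w", "Up"),
]


def learn_rule(train, col):
    """Induction: map each value of one attribute to the majority class seen in training."""
    by_value = defaultdict(list)
    for r in train:
        by_value[r[col]].append(r[-1])
    return {v: Counter(labels).most_common(1)[0][0] for v, labels in by_value.items()}


test = ROWS[2::3]                                      # rows 3, 6 and 9 held out
train = [r for i, r in enumerate(ROWS) if i % 3 != 2]  # the remaining 7 rows
model = learn_rule(train, col=0)                       # rule on Age
# Deduction: apply the model to unseen records (fall back to "Down" for unseen values).
actual = [r[-1] for r in test]
predicted = [model.get(r[0], "Down") for r in test]
confusion = Counter(zip(actual, predicted))            # (actual, predicted) -> count
accuracy = sum(a == p for a, p in zip(actual, predicted)) / len(test)
print(model)
print(dict(confusion))
print(f"accuracy = {accuracy:.3f}, error rate = {1 - accuracy:.3f}")
```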

10. Explain the various measures for selecting the best split, with an example.
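
Three common impurity measures for a node with class proportions p_i are entropy (-Σ p_i log2 p_i), the Gini index (1 - Σ p_i²), and classification error (1 - max p_i). A split is scored by the size-weighted impurity of its children, and the gain is the parent impurity minus that weighted value. The small Python sketch below checks these measures on the Age split of the question 4 data. There, the child class counts [Down, Up] are [3, 0], [2, 2] and [0, 3]. The function names are hypothetical, and the sketch assumes no child is empty.

```python
from math import log2


def entropy(p):
    return -sum(x * log2(x) for x in p if x > 0)


def gini(p):
    return 1 - sum(x * x for x in p)


def classification_error(p):
    return 1 - max(p)


def weighted_impurity(children, measure):
    """Size-weighted impurity of a split; children is a list of per-class count lists."""
    n = sum(sum(c) for c in children)
    return sum(sum(c) / n * measure([x / sum(c) for x in c]) for c in children)


parent = [[5, 5]]                       # 5 Down, 5 Up before splitting
age_split = [[3, 0], [2, 2], [0, 3]]    # Age = old, mid, new
for measure in (entropy, gini, classification_error):
    gain = weighted_impurity(parent, measure) - weighted_impurity(age_split, measure)
    print(f"{measure.__name__:<22} gain = {gain:.3f}")
# entropy 0.600, gini 0.300, classification_error 0.300
```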

11. Explain model overfitting. What are the reasons for overfitting? How can overfitting problems be addressed?
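
One way to address overfitting is to estimate generalization error with a penalty for model complexity and to use that estimate when pruning. A common form is the pessimistic error estimate, (training errors + Ω × number of leaves) / number of training records, where Ω is a per-leaf penalty often set to 0.5. The sketch below compares two trees and makes a post-pruning decision for a subtree. The function names and all counts are hypothetical.

```python
def pessimistic_error(errors, leaves, records, penalty=0.5):
    """Training errors plus a per-leaf complexity penalty, divided by the record count."""
    return (errors + penalty * leaves) / records


def should_prune(subtree_errors, subtree_leaves, leaf_errors, records, penalty=0.5):
    """Post-pruning test: replace the subtree by one leaf unless that raises the estimate."""
    keep = pessimistic_error(subtree_errors, subtree_leaves, records, penalty)
    prune = pessimistic_error(leaf_errors, 1, records, penalty)
    return prune <= keep


# Hypothetical trees trained on 24 records: a larger one (7 leaves, 4 errors)
# and a smaller one (4 leaves, 6 errors).
for penalty in (0.5, 1.0):
    big = pessimistic_error(4, 7, 24, penalty)
    small = pessimistic_error(6, 4, 24, penalty)
    print(f"penalty {penalty}: big = {big:.3f}, small = {small:.3f}")

# A 3-leaf subtree making 2 errors versus a single leaf making 2 or 4 errors (20 records).
print(should_prune(2, 3, 2, 20), should_prune(2, 3, 4, 20))  # True False
```

With Ω = 0.5 the larger tree has the lower estimate (7.5/24 versus 8/24). With Ω = 1 the smaller tree wins (10/24 versus 11/24). This shows how the penalty trades training error against tree size.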

12. List characteristics of decision tree induction.
