Bayesian Wage Modeling Lab in R

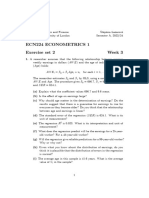

This document gives instructions for completing the exercises and submitting answers for Week 4 of a Coursera lab course on modeling wages. It introduces wage modeling in labor economics and explains that Bayesian model selection criteria such as BIC, together with Bayesian Model Averaging (BMA), are used to construct parsimonious predictive models from cross-sectional wage data. The lab introduces the R packages dplyr and ggplot2 for exploring the data, the lm function for fitting simple linear regressions, and the stepAIC and bas.lm functions for model selection and averaging.
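As a rough illustration of the BIC-based selection step described above, the sketch below runs backward elimination with `stepAIC` from the MASS package, setting `k = log(n)` so that the penalty matches BIC. The data are simulated, and the names `sim_wage`, `lwage`, `educ`, `exper`, and `noise` are hypothetical stand-ins, not the lab's actual dataset or variables.

```r
library(MASS)  # provides stepAIC

set.seed(42)
n <- 200

# Simulated stand-in for cross-sectional wage data (all names are hypothetical)
educ  <- rnorm(n, mean = 13, sd = 2)   # years of education
exper <- rnorm(n, mean = 10, sd = 4)   # years of experience
noise <- rnorm(n)                      # unrelated to wage by construction
lwage <- 1 + 0.08 * educ + 0.02 * exper + rnorm(n, sd = 0.3)
sim_wage <- data.frame(lwage, educ, exper, noise)

# Start from the full linear model
full_model <- lm(lwage ~ educ + exper + noise, data = sim_wage)

# k = log(n) replaces the default AIC penalty (k = 2) with the BIC penalty
bic_model <- stepAIC(full_model, direction = "backward",
                     k = log(n), trace = FALSE)

# Inspect which predictors survive and compare BIC values
print(formula(bic_model))
print(c(full = BIC(full_model), selected = BIC(bic_model)))
```

Backward elimination only drops a term when doing so lowers the criterion, so the selected model's BIC is never higher than that of the full model. Bayesian Model Averaging with `bas.lm` goes a step further by weighting many candidate models rather than committing to one.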

Uploaded by thakkarraj99

Copyright: © All Rights Reserved

