
DR Tran Anh Tuan - Mathematics For AI


Uploaded by Tư Mã Trọng Đạt

45 views4 pagesOriginal Title

Dr Tran Anh Tuan - Mathematics for AI

Copyright

© © All Rights Reserved

Available Formats

DOCX, PDF, TXT or read online from Scribd

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

© All Rights Reserved

Available Formats

Download as DOCX, PDF, TXT or read online from Scribd

0 ratings0% found this document useful (0 votes)

45 views4 pagesDR Tran Anh Tuan - Mathematics For AI

DR Tran Anh Tuan - Mathematics For AI

Uploaded by

Tư Mã Trọng ĐạtCopyright:

© All Rights Reserved

Available Formats

Download as DOCX, PDF, TXT or read online from Scribd

You are on page 1of 4

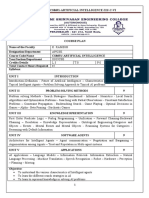

Mathematics for AI (Dr Tran Anh Tuan)

Week Title Content

Week 1: Introduction to Mathematics for AI

- Overview of AI Applications

- Overview of Applied Mathematics

- Introduction to Algebra

- Introduction to Calculus

- Introduction to Statistics and Probabilities

- Introduction to Graph Theory

- Introduction to Information Theory

- Introduction to Optimization

Weeks 2-3: Linear Algebra

- Matrices and Vectors

- Inner Products

- Matrix Multiplication

- Orthogonal and Diagonal Matrix

- Transpose Matrix and Inverse Matrix in the Normal Equation

- Solving Systems of Linear Equations

- L1 norm or Manhattan Norm

- L2 norm or Euclidean Norm

- Regularization in Machine Learning

- Lasso and Ridge

- Feature Extraction and Feature Selection

- Covariance Matrix

- Eigenvalues and Eigenvectors

- Orthogonality and Orthonormal Set

- Span, Vector Spaces, Rank and Basis

- Determinant and Trace

- Principal Component Analysis (PCA)

- Singular Value Decomposition

- Matrix Decomposition or Matrix Factorization

- Affine Spaces
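As a rough illustration of how the norm, normal-equation, and ridge topics above connect, here is a minimal sketch in plain Python. The function names (`l1_norm`, `l2_norm`, `fit_line`) and the toy data are assumptions made for illustration and are not part of the syllabus. The sketch fits a line y ≈ a + b·x by solving the 2×2 system (XᵀX + λI)w = Xᵀy in closed form; for simplicity it also penalizes the intercept, which ridge implementations often avoid.

```python
import math


def l1_norm(v):
    """Manhattan (L1) norm: sum of absolute values."""
    return sum(abs(x) for x in v)


def l2_norm(v):
    """Euclidean (L2) norm: square root of the sum of squares."""
    return math.sqrt(sum(x * x for x in v))


def fit_line(xs, ys, lam=0.0):
    """Fit y ~ a + b*x by solving (X^T X + lam*I) w = X^T y.

    X has columns [1, x]. lam = 0 gives ordinary least squares;
    lam > 0 gives a ridge-style fit (intercept penalized too, as a
    simplification for this sketch).
    """
    n = len(xs)
    sx = sum(xs)
    sxx = sum(x * x for x in xs)
    sy = sum(ys)
    sxy = sum(x * y for x, y in zip(xs, ys))

    # Entries of the symmetric 2x2 matrix X^T X + lam*I.
    a00, a01, a11 = n + lam, sx, sxx + lam
    det = a00 * a11 - a01 * a01
    if det == 0:
        raise ValueError("singular system: need at least two distinct x values")

    # Apply the explicit 2x2 inverse to the right-hand side [sy, sxy].
    a = (a11 * sy - a01 * sxy) / det
    b = (a00 * sxy - a01 * sy) / det
    return a, b


if __name__ == "__main__":
    xs, ys = [0, 1, 2, 3], [1, 3, 5, 7]   # toy data on the line y = 1 + 2x
    print(fit_line(xs, ys))               # least-squares coefficients
    print(fit_line(xs, ys, lam=1.0))      # ridge shrinks the coefficients
    print(l1_norm([3, -4]), l2_norm([3, -4]))
```

With λ = 0 the fit recovers the intercept and slope of the toy line; increasing λ pulls both coefficients toward zero, which is the effect the regularization topic refers to.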

Weeks 4-5: Calculus

- Derivative

- Partial derivative

- Second derivative

- Hessian matrix

- Gradient

- Gradient descent

- Critical points

- Stationary points

- Local maximum

- Global minimum

- Saddle points

- Jacobian matrix
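To make the gradient and gradient-descent topics above concrete, the following is a small sketch under stated assumptions: the objective f(x, y) = (x − 3)² + 2(y + 1)², the learning rate, and the step count are all chosen here for illustration and do not come from the syllabus. The Hessian of this f is the constant diagonal matrix diag(2, 4), which is positive definite, so the single stationary point (3, −1) is the global minimum.

```python
def f(x, y):
    """Illustrative convex objective with its minimum at (3, -1)."""
    return (x - 3) ** 2 + 2 * (y + 1) ** 2


def grad_f(x, y):
    """Gradient of f: the vector of partial derivatives (df/dx, df/dy)."""
    return 2 * (x - 3), 4 * (y + 1)


def gradient_descent(x, y, lr=0.1, steps=200):
    """Repeatedly step against the gradient with a fixed learning rate."""
    for _ in range(steps):
        gx, gy = grad_f(x, y)
        x -= lr * gx
        y -= lr * gy
    return x, y


if __name__ == "__main__":
    x_min, y_min = gradient_descent(0.0, 0.0)
    print(x_min, y_min, f(x_min, y_min))
```

A fixed learning rate works here because the objective is a well-conditioned quadratic; on other functions the step size usually needs tuning or a schedule.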

Week 6: Statistics and Probabilities

- What Is Data?

- Categories Of Data

- What Is Statistics?

- Basic Terminologies In Statistics

- Sampling Techniques

- Types Of Statistics

Descriptive Statistics

- Measures Of Centre

- Measures Of Spread

- Information Gain And Entropy

- Confusion Matrix

Probability

- What Is Probability?

- Terminologies In Probability

- Probability Distribution

- Types Of Probability

- Bayes’ Theorem

- Gaussian Distribution

- Conjugacy and the Exponential Family

Inferential Statistics

- Point Estimation

- Interval Estimation

- Estimating Level Of Confidence

- Hypothesis Testing

Week 7: Graph Theory

- Directed and Undirected Graphs

- Path analysis

- Connectivity analysis

- Community analysis

- Centrality analysis

Week 8: Information Theory

- Information

- Self-Information

- Entropy

- Mutual Information (Joint Entropy, Conditional Entropy)

- Kullback–Leibler Divergence

- Cross Entropy

- Cross Entropy as an Objective Function of Multi-class Classification
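The quantities listed for this week are linked by the identity H(p, q) = H(p) + KL(p ‖ q). The sketch below, with function names and example distributions chosen here purely for illustration, computes each term in bits (base-2 logarithms) and assumes that q is strictly positive wherever p is. For a one-hot target, cross entropy reduces to the negative log of the probability assigned to the true class, which is why it serves as a multi-class classification loss.

```python
import math


def entropy(p):
    """Shannon entropy H(p) in bits; terms with p_i = 0 contribute 0."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """Cross entropy H(p, q) in bits; assumes q_i > 0 wherever p_i > 0."""
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    """Kullback-Leibler divergence KL(p || q) in bits."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


if __name__ == "__main__":
    p = [0.5, 0.25, 0.25]        # illustrative "true" distribution
    q = [0.4, 0.4, 0.2]          # illustrative model distribution
    print(entropy(p), kl_divergence(p, q), cross_entropy(p, q))

    # One-hot target: the loss is -log2 of the predicted true-class probability.
    target = [0.0, 1.0, 0.0]
    predicted = [0.2, 0.7, 0.1]
    print(cross_entropy(target, predicted))
```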

Week 9: Continuous Optimization

- Optimization using Gradient Descent

- Constrained Optimization and Lagrange Multipliers

- Convex Optimization

Week 10: Linear Regression

- Problem Formulation

- Parameter Estimation

- Bayesian Linear Regression

- Maximum Likelihood as Orthogonal Projection

Week 11: Dimensionality Reduction with PCA

- Maximum Variance Perspective

- Projection Perspective

- Eigenvector Computation and Low-Rank Approximations

- PCA in High Dimensions

- Latent Variable Perspective

Week 12: Density Estimation with Gaussian Mixture Models

- Gaussian Mixture Model

- Parameter Learning via Maximum Likelihood

- EM Algorithm

- Latent-Variable Perspective

Week 13: Classification with Support Vector Machine

- Separating Hyperplanes

- Primal Support Vector Machine

- Dual Support Vector Machine

- Kernel

- Numerical Solution

Week 14: Outlier Detection

- Interquartile Range (IQR)

- Isolation Forest

- Minimum Covariance Determinant

- Local Outlier Factor

- One-Class SVM
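Of the outlier-detection methods listed above, the interquartile range rule is the simplest to show in a few lines. The sketch below is an illustration only: the function name `iqr_outliers`, the conventional multiplier k = 1.5, and the sample data are assumptions, not material from the syllabus. It uses the standard-library `statistics.quantiles` with its default quantile method; other quantile conventions can move the fences slightly.

```python
import statistics


def iqr_outliers(data, k=1.5):
    """Return the values lying outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _median, q3 = statistics.quantiles(data, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [x for x in data if x < lower or x > upper]


if __name__ == "__main__":
    readings = [10, 12, 11, 13, 12, 11, 10, 95]   # illustrative data
    print(iqr_outliers(readings))
```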

Week 15: Recommendation System

- Singular value decomposition (SVD) techniques

- Hybrid and context-aware recommender systems

- Factorization machines
