
Generalizability Theory: Overview

NOREEN M. WEBB AND RICHARD J. SHAVELSON

Volume 2, pp. 717–719

in

Encyclopedia of Statistics in Behavioral Science

ISBN-13: 978-0-470-86080-9

ISBN-10: 0-470-86080-4

Editors

Brian S. Everitt & David C. Howell

John Wiley & Sons, Ltd, Chichester, 2005

Generalizability (G) theory is a statistical theory for evaluating the dependability (‘reliability’) of behavioral measurements [2]; see also [1], [3], and [4]. G theory pinpoints the sources of measurement error, disentangles them, and estimates each one. In G theory, a behavioral measurement (e.g., a test score) is conceived of as a sample from a universe of admissible observations, which consists of all possible observations that decision makers consider to be acceptable substitutes for the observation in hand. Each characteristic of the measurement situation that a decision maker would be indifferent to (e.g., test form, item, occasion, rater) is a potential source of error and is called a facet of a measurement. The universe of admissible observations, then, is defined by all possible combinations of the levels (called conditions) of the facets. In order to evaluate the dependability of behavioral measurements, a generalizability (G) study is designed to isolate and estimate as many facets of measurement error as is reasonably and economically feasible.

Consider a two-facet crossed person × item × occasion G study design where items and occasions have been randomly selected. The object of measurement, here persons, is not a source of error and, therefore, is not a facet. In this design with generalization over all admissible test items and occasions taken from an indefinitely large universe, an observed score for a particular person on a particular item and occasion is decomposed into an effect for the grand mean, plus effects for the person, the item, the occasion, each two-way interaction (see Interaction Effects), and a residual (three-way interaction plus unsystematic error). The distribution of each component or ‘effect’, except for the grand mean, has a mean of zero and a variance $\sigma^2$ (called the variance component). The variance component for the person effect is called the universe-score variance. The variance components for the other effects are considered error variation. Each variance component can be estimated from a traditional analysis of variance (or other methods such as maximum likelihood). The relative magnitudes of the estimated variance components provide information about sources of error influencing a behavioral measurement. Statistical tests are not used in G theory; instead, standard errors for variance component estimates provide information about sampling variability of estimated variance components.

The decision (D) study deals with the practical application of a measurement procedure. A D study uses variance component information from a G study to design a measurement procedure that minimizes error for a particular purpose. In planning a D study, the decision maker defines the universe that he or she wishes to generalize to, called the universe of generalization, which may contain some or all of the facets and their levels in the universe of admissible observations. In the D study, decisions usually will be based on the mean over multiple observations (e.g., test items) rather than on a single observation (a single item).

G theory recognizes that the decision maker might want to make two types of decisions based on a behavioral measurement: relative (‘norm-referenced’) and absolute (‘criterion- or domain-referenced’). A relative decision focuses on the rank order of persons; an absolute decision focuses on the level of performance, regardless of rank. Error variance is defined differently for each kind of decision. To reduce error variance, the number of conditions of the facets may be increased in a manner analogous to the Spearman–Brown prophecy formula in classical test theory and the standard error of the mean in sampling theory. G theory distinguishes between two reliability-like summary coefficients: a Generalizability (G) Coefficient for relative decisions and an Index of Dependability (Phi) for absolute decisions.

Generalizability theory allows the decision maker to use different designs in G and D studies. Although G studies should use crossed designs whenever possible to estimate all possible variance components in the universe of admissible observations, D studies may use nested designs for convenience or to increase estimated generalizability.

G theory is essentially a random effects theory. Typically, a random facet is created by randomly sampling levels of a facet. A fixed facet arises when the decision maker: (a) purposely selects certain conditions and is not interested in generalizing beyond them, (b) finds it unreasonable to generalize beyond the levels observed, or (c) when the entire universe of levels is small and all levels are included in the measurement design (see Fixed and Random Effects). G theory typically treats fixed facets by averaging over the conditions of the fixed facet and examining
2 Generalizability Theory: Overview

the generalizability of the average over the random 2

occasions. Finally, the large σ̂pio,e (36%) reflects the

facets. Alternatives include conducting a separate G varying relative standing of persons across occasions

study within each condition of the fixed facet, or a and items and/or other sources of error not systemat-

multivariate analysis with the levels of the fixed facet ically incorporated into the G study.

comprising a vector of dependent variables. Because more of the error variability in science

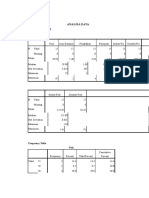

As an example, consider a G study in which per- achievement scores came from items than from

sons responded to 10 randomly selected science items occasions, changing the number of items will have

on each of 2 randomly sampled occasions. Table 1 a larger effect on the estimated variance components

gives the estimated variance components from the G and generalizability coefficients than will changing

study. The large σ̂p2 (1.376, 32% of the total varia- the number of occasions. For example, the estimated

tion) shows that, averaging over items and occasions, G and Phi coefficients for 4 items and 2 occasions

persons in the sample differed systematically in their are 0.72 and 0.69, respectively; the coefficients for 2

science achievement. The other estimated variance items and 4 occasions are 0.67 and 0.63, respectively.

components constitute error variation; they concern Choosing the number of conditions of each facet in

the item facet more than the occasion facet. The non- a D study, as well as the design (nested vs. crossed,

negligible σ̂i2 (5% of total variation) shows that items fixed vs. random facet), involves logistical and cost

varied somewhat in difficulty level. The large σ̂pi2 considerations as well as issues of dependability.

(20%) reflects different relative standings of persons

across items. The small σ̂o2 (1%) indicates that per-

formance was stable across occasions, averaging over

References

2

persons and items. The nonnegligible σ̂po (6%) shows

that the relative standing of persons differed some- [1] Brennan, R.L. (2001). Generalizability Theory, Springer-

Verlag, New York.

what across occasions. The zero σ̂io2 indicates that the [2] Cronbach, L.J., Gleser, G.C., Nanda, H. & Rajaratnam, N.

rank ordering of item difficulty was similar across (1972). The Dependability of Behavioral Measurements,

Wiley, New York.

Table 1 Estimated variance components in a generaliz- [3] Shavelson, R.J. & Webb, N.M. (1981). Generalizability

ability study of science achievement (p × i × o design) theory: 1973–1980, British Journal of Mathematical and

Statistical Psychology 34, 133–166.

Total [4] Shavelson, R.J. & Webb, N.M. (1991). Generalizability

Variance variability Theory: A Primer, Sage Publications, Newbury Park.

Source component Estimate (%)

Person (p) σp2 1.376 32 (See also Generalizability Theory: Basics; Gener-

Item (i) σi2 0.215 05 alizability Theory: Estimation)

Occasion (o) σo2 0.043 01

p×i σpi2 0.860 20 NOREEN M. WEBB AND RICHARD J.

p×o σpo2

0.258 06 SHAVELSON

i×o σio2 0.001 00

p × i × o,e 2

σpio,e 1.548 36
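The D-study coefficients quoted in the science achievement example can be checked against the Table 1 estimates. The sketch below assumes the standard formulas for a fully crossed random p × i × o design, which the article does not spell out: relative error variance sums the person-interaction components, each divided by the number of conditions averaged over, and absolute error variance adds the item, occasion, and item × occasion components on the same basis. The names `VarianceComponents`, `TABLE_1`, and `d_study` are illustrative, not taken from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VarianceComponents:
    """Estimated variance components for a crossed p x i x o design."""
    p: float      # person (universe-score variance)
    i: float      # item
    o: float      # occasion
    pi: float     # person x item
    po: float     # person x occasion
    io: float     # item x occasion
    pio_e: float  # residual: three-way interaction plus unsystematic error


# Estimates copied from Table 1 of the article.
TABLE_1 = VarianceComponents(
    p=1.376, i=0.215, o=0.043, pi=0.860, po=0.258, io=0.001, pio_e=1.548
)


def d_study(vc: VarianceComponents, n_i: int, n_o: int) -> tuple[float, float]:
    """Return (G, Phi) for a D study averaging over n_i items and n_o occasions.

    Assumes both facets are random and fully crossed with persons.
    """
    # Relative error: components that change the rank order of persons.
    rel_error = vc.pi / n_i + vc.po / n_o + vc.pio_e / (n_i * n_o)
    # Absolute error: relative error plus components that shift score level.
    abs_error = rel_error + vc.i / n_i + vc.o / n_o + vc.io / (n_i * n_o)
    g = vc.p / (vc.p + rel_error)
    phi = vc.p / (vc.p + abs_error)
    return g, phi


for n_i, n_o in [(4, 2), (2, 4)]:
    g, phi = d_study(TABLE_1, n_i, n_o)
    print(f"{n_i} items, {n_o} occasions: G = {g:.2f}, Phi = {phi:.2f}")
```

Under these assumptions the script prints 0.72 and 0.69 for 4 items and 2 occasions, and 0.67 and 0.63 for 2 items and 4 occasions, matching the values reported in the text. Adding items lowers error more than adding occasions because the item-related components are the larger ones.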

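The score decomposition described for the two-facet crossed design can also be written out explicitly. The notation here is an assumption for illustration: the article names the effects in words only, so the symbols $X_{pio}$, $\mu$, and $\nu$ are not the authors' own. The second line relies on the usual random-effects assumption that the effects are uncorrelated.

$$
\begin{aligned}
X_{pio} &= \mu + \nu_p + \nu_i + \nu_o + \nu_{pi} + \nu_{po} + \nu_{io} + \nu_{pio,e} \\
\sigma^2(X_{pio}) &= \sigma^2_p + \sigma^2_i + \sigma^2_o + \sigma^2_{pi} + \sigma^2_{po} + \sigma^2_{io} + \sigma^2_{pio,e}
\end{aligned}
$$

Here $\sigma^2_p$ is the universe-score variance and the remaining six terms are the error-related components whose estimates appear in Table 1.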