Citation 298606994

Uploaded by Siri Reddy

This paper discusses ensemble methods in machine learning, which combine multiple learning algorithms to obtain better predictive performance than could be obtained from any of the constituent learning algorithms alone. The paper reviews algorithmic methods for generating multiple models and methods for combining these models, including bagging, boosting, stacking, and various other techniques. The paper also discusses theoretical reasons why ensembles of models often perform better than single models.
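As a rough illustration of why combining models can help, the sketch below computes the error rate of an unweighted majority vote under the simplifying assumption that every model errs independently with the same probability. The function name `majority_vote_error` and the chosen numbers are hypothetical and are not taken from the paper; real ensembles rarely have fully independent errors, so this is an idealized bound rather than a description of any specific method.

```python
from math import comb


def majority_vote_error(n_models: int, error_rate: float) -> float:
    """Probability that a strict majority of n_models is wrong.

    Assumes (for illustration only) that each model errs independently
    with the same probability error_rate, and that n_models is odd so
    that ties cannot occur.
    """
    min_wrong = n_models // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(
        comb(n_models, k) * error_rate**k * (1 - error_rate) ** (n_models - k)
        for k in range(min_wrong, n_models + 1)
    )


if __name__ == "__main__":
    # Compare a single model with larger (odd-sized) voting ensembles.
    for n in (1, 5, 21):
        print(f"{n:>2} models: majority-vote error = {majority_vote_error(n, 0.3):.4f}")
```

Under these assumptions the vote improves on a single model only when the individual error rate is below 0.5; above that threshold, voting makes the combined prediction worse.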


Dietterich, T. G. (2000). Ensemble methods in machine learning. Multiple Classifier Systems: First International Workshop, MCS 2000, Lecture Notes in Computer Science, 1-15.

You might also like

- COMPUTER STUDIES LESSON PLANS G8 by Phiri D PDFDocument45 pagesCOMPUTER STUDIES LESSON PLANS G8 by Phiri D PDFchisanga cliveNo ratings yet

- COMPUTER STUDIES LESSON PLANS G8 by Phiri D PDFDocument64 pagesCOMPUTER STUDIES LESSON PLANS G8 by Phiri D PDFChishale Friday100% (1)

- Test Bank For Using Mis 9th Edition 01Document31 pagesTest Bank For Using Mis 9th Edition 01Quỳnh NguyễnNo ratings yet

- (Bsc. Agriculture) End Semester Examination 2018 3Rd SemesterDocument1 page(Bsc. Agriculture) End Semester Examination 2018 3Rd Semesteratul211988No ratings yet

- Logica DigitalDocument5 pagesLogica DigitalLUIS PEÑANo ratings yet

- Tribhuvan University: Institute of Science and TechnologyDocument1 pageTribhuvan University: Institute of Science and TechnologyvikashNo ratings yet

- r20 4-1 Open Elective III Syllabus Final WsDocument29 pagesr20 4-1 Open Elective III Syllabus Final WsDharmik Pawan KumarNo ratings yet

- Knowledge ManagementDocument3 pagesKnowledge Managementdagy36444No ratings yet

- Tribhuvan University: Long QuestionsDocument60 pagesTribhuvan University: Long QuestionsArpoxonNo ratings yet

- MIS ElectiveDocument1 pageMIS ElectivejrgtonyNo ratings yet

- Reg. No NameDocument1 pageReg. No NamejrgtonyNo ratings yet

- MID Edu Uni 21-24Document2 pagesMID Edu Uni 21-24Muhammad IrfanNo ratings yet

- 08Mtcs042-Design and Analysis of Algorithms: Answer ALL Questions (10×2 20)Document1 page08Mtcs042-Design and Analysis of Algorithms: Answer ALL Questions (10×2 20)chayav88No ratings yet

- Tribhuvan University: Candidates Are Required To Give Their Answers in Their Own Words As For As PracticableDocument1 pageTribhuvan University: Candidates Are Required To Give Their Answers in Their Own Words As For As PracticablevikashNo ratings yet

- 136 - 72707 PDFDocument2 pages136 - 72707 PDFAmarjeet Singh RanaNo ratings yet

- 2nd PUC Computer Science Paper 2Document2 pages2nd PUC Computer Science Paper 2Mohammed Afzal KhaziNo ratings yet

- MCA 101: Information Technology Unit - I Business and InformationDocument22 pagesMCA 101: Information Technology Unit - I Business and InformationGosukondaBalavenkataAnanthBharadwajNo ratings yet

- Introduction To Information Technology 2073Document1 pageIntroduction To Information Technology 2073vinenet112No ratings yet

- Fun With ElectronicsDocument1 pageFun With ElectronicsRatsihNo ratings yet

- It 2066 PDFDocument1 pageIt 2066 PDFvikashNo ratings yet

- PMC211Document1 pagePMC211SwapnilNo ratings yet

- ICDL ExamDocument2 pagesICDL ExamJean Paul Ize100% (1)

- Mca 201Document2 pagesMca 201kola0123No ratings yet

- Gujarat Technological UniversityDocument1 pageGujarat Technological Universitynayan bhowmickNo ratings yet

- Institute of Science and TechnologyDocument6 pagesInstitute of Science and TechnologyyalahubjiNo ratings yet

- Comp Sci emDocument2 pagesComp Sci emMalathi RajaNo ratings yet

- PGD An31n PDFDocument8 pagesPGD An31n PDFHaresh KNo ratings yet

- Open Source DocumentDocument2 pagesOpen Source DocumentPandiya RajanNo ratings yet

- Computer Studies Lesson Plans g8 by Phiri D-1 1Document45 pagesComputer Studies Lesson Plans g8 by Phiri D-1 1Wilfred Muthali100% (1)

- Computer 8Document2 pagesComputer 8PRASHANT DHUNGANANo ratings yet

- Mira Chandra Kirana: Informatics Engineering Politeknik Negeri BatamDocument11 pagesMira Chandra Kirana: Informatics Engineering Politeknik Negeri BatamMuhammad RehanNo ratings yet

- Informatics Practices (Code No. 065) : Course DesignDocument11 pagesInformatics Practices (Code No. 065) : Course DesignSonika DograNo ratings yet

- Mca 101Document2 pagesMca 101kola0123No ratings yet

- (M2-TECHNICAL) Introduction To ComputerDocument3 pages(M2-TECHNICAL) Introduction To ComputerJoshua Miguel NaongNo ratings yet

- Introduction To Information Technology 2069Document1 pageIntroduction To Information Technology 2069Sushant BhattaraiNo ratings yet

- CSE303 Hw2J2801Document1 pageCSE303 Hw2J2801himanchalsingh93No ratings yet

- Computer Science: June/July, 2009Document4 pagesComputer Science: June/July, 2009bkvuvce8170No ratings yet

- Introduction To Machine LearningDocument2 pagesIntroduction To Machine LearningsirishaksnlpNo ratings yet

- #V$%& #%VV'% (V# #%V) V%%+,$%& - % (& #V#V & (#%V: C C C C C CDocument11 pages#V$%& #%VV'% (V# #%V) V%%+,$%& - % (& #V#V & (#%V: C C C C C CSunny SiaNo ratings yet

- Informatics Practices (Code No. 065) : Course DesignDocument11 pagesInformatics Practices (Code No. 065) : Course DesignAnurag BhartiNo ratings yet

- EDU 03 New SyllabusDocument2 pagesEDU 03 New SyllabusPratheesh PkNo ratings yet

- 1st Year Chapter 1 (1st Half) 01Document1 page1st Year Chapter 1 (1st Half) 01Engr Naveed AhmedNo ratings yet

- Science Academy Saddar Gogera Okara: Chapter 1: Basics of Information TechnologyDocument1 pageScience Academy Saddar Gogera Okara: Chapter 1: Basics of Information TechnologyEngr Naveed AhmedNo ratings yet

- BSC It Syllabus Mumbai UniversityDocument81 pagesBSC It Syllabus Mumbai UniversitysanNo ratings yet

- Bachelor of Computer Applications (BCA) Degree Programme: I Semester BCA - Blown Up SyllabusDocument13 pagesBachelor of Computer Applications (BCA) Degree Programme: I Semester BCA - Blown Up Syllabusthanvishetty2703No ratings yet

- MMPC 008Document2 pagesMMPC 008Vikash KumarNo ratings yet

- Answer All Questions. Each Carries 1 Mark: Section ADocument2 pagesAnswer All Questions. Each Carries 1 Mark: Section ANaveen Jacob JohnNo ratings yet

- BMS 400 AssignmentDocument1 pageBMS 400 AssignmentRuth NyawiraNo ratings yet

- Computer Science P&ODocument5 pagesComputer Science P&OARUL MOZHI SELVAN VNo ratings yet

- 2nd Puc Computer Science Question Papers-2006 To 2011Document32 pages2nd Puc Computer Science Question Papers-2006 To 2011Mohan Kumar PNo ratings yet

- 15 Informatics Practices OLDDocument11 pages15 Informatics Practices OLDSumit TiroleNo ratings yet

- Ert 167 WK 15 - Lect - Review - 15.2 28 Jan 2021 - ClassDocument11 pagesErt 167 WK 15 - Lect - Review - 15.2 28 Jan 2021 - ClassLEE YI JIE STUDENTNo ratings yet

- Fundamentals of ComputersDocument3 pagesFundamentals of ComputersShameem JazirNo ratings yet

- Mis ExamDocument3 pagesMis ExamchisomoNo ratings yet

- S2 Bca 2021 Reg - SupplyDocument53 pagesS2 Bca 2021 Reg - SupplyLeslie QwerNo ratings yet

- Bharati Vidyapeeth (Deemed To Be University), Pune: School of Distance EducationDocument1 pageBharati Vidyapeeth (Deemed To Be University), Pune: School of Distance EducationYogesh KhataleNo ratings yet

- Egov-2067 9 PDFDocument3 pagesEgov-2067 9 PDFBishnu K.C.No ratings yet

- Object Oriented Programming Using C++ 2020Document2 pagesObject Oriented Programming Using C++ 2020SRUJAN KALYANNo ratings yet

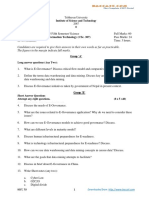

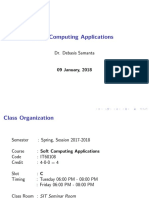

- Soft Computing Applications: Dr. Debasis SamantaDocument16 pagesSoft Computing Applications: Dr. Debasis SamantaEr Farzan UllahNo ratings yet

- Computer Science: March, 2009Document4 pagesComputer Science: March, 2009bkvuvce8170No ratings yet