AIX Troy Protocol Performance Over RoCE v0.2

IBM Power Systems™

IBM Systems & Technology Group

AIX Troy performance over DDR IB, QDR IB and RoCE

Bernie King-Smith

IBM Systems & Technology Group

AIX Performance and analysis

Power your planet © 2011 IBM Corporation


Overview

Goal: Measure how the three fabrics supported by Troy compare to each other on POWER/AIX.

How well do Troy/pureScale operations scale on DDR IB, QDR IB and RoCE?

How well do QDR IB and RoCE perform compared to DDR IB using a simulated DTW operation mix?

How well does the CF scale going from 1 to 2 ports?

This is just a beginning, with questions yet to be answered.


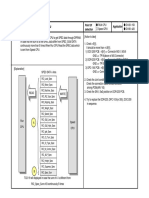

System Configuration

[Figure: four members (Member 1 to Member 4) and one CF, each a P730-IOC IBM server, connected through three networks: DDR IB (SilverStorm 9024 switch), QDR IB (Mellanox 5030 switch) and RoCE (BNT G8124 switch).]

● 4 member configuration.

● Five P730-IOC CECs, 16 core, 128 GB memory.

● DDR IB: Galaxy2 adapter.

● QDR IB: Travis-IB adapter.

● RoCE: Travis-EN adapter (PRQ).

● Only the CF used both ports in the adapters for the 2 port measurements.


Microb single port latency

● Latency was expected to be about 1 usec higher for QDR IB and RoCE than for DDR IB due to PCIe bus overhead vs. GX++. However, we currently see about a 2.2 usec increase.

● RoCE latency was expected to be about 1 usec higher than QDR IB even though they use the same ConnectX chip. We currently see about a 2 usec increase.

● Findings comparing DDR IB to RoCE ctraces:

  ● Instruction counts for SLS, RAR and WARM are higher by 3.99%, 4.80% and 3.79% respectively.

  ● All increases in instruction counts are in RoCE specific code, specifically mxibQpPostSend.

  ● Of the increased instruction counts, 30% are from the stamp_wqe routine. This is used to place an end of list pattern at the end of the WQE list for the adapter read ahead function. We are working with RoCE development on improving this routine.

  ● RoCE currently uses up to 3 lwsync() calls per mxibQpPostSend call, which may be unnecessary. At the end of the routine we ring the doorbell, which does a full sync() anyway.

[Figure: Troy operation single connection latency, build 1146A_61ps111. Latency (usec, 0 to 60) by operation type for DDR IB, QDR IB and RoCE.]

| Latency (usec) | DDR IB (Galaxy2) | QDR IB | RoCE |
|---|---|---|---|
| DIAGPTEST | 6.91 | 9.11 | 11.08 |
| RARPTEST | 18.27 | 19.36 | 25.82 |
| RARPTESTND | 7.76 | 9.93 | 12.02 |
| REGPTEST | 23.22 | 25.65 | 30.82 |
| SLSPTEST | 8.77 | 10.68 | 12.95 |
| WARMOPTEST | 34.10 | 34.37 | 48.90 |
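The latency increases quoted on this slide can be checked against the measured values. The following is a minimal Python sketch, not part of the original deck: the numbers are copied from the latency figures on this slide, and the names `LATENCY_USEC` and `delta_vs` are chosen here for illustration only.

```python
# Single connection latency in usec, copied from the figures on this slide.
LATENCY_USEC = {
    "DIAGPTEST":  {"DDR IB": 6.91,  "QDR IB": 9.11,  "RoCE": 11.08},
    "RARPTEST":   {"DDR IB": 18.27, "QDR IB": 19.36, "RoCE": 25.82},
    "RARPTESTND": {"DDR IB": 7.76,  "QDR IB": 9.93,  "RoCE": 12.02},
    "REGPTEST":   {"DDR IB": 23.22, "QDR IB": 25.65, "RoCE": 30.82},
    "SLSPTEST":   {"DDR IB": 8.77,  "QDR IB": 10.68, "RoCE": 12.95},
    "WARMOPTEST": {"DDR IB": 34.10, "QDR IB": 34.37, "RoCE": 48.90},
}


def delta_vs(baseline, fabric, table=LATENCY_USEC):
    """Return {operation: latency increase in usec of `fabric` over `baseline`}."""
    return {op: round(row[fabric] - row[baseline], 2) for op, row in table.items()}


if __name__ == "__main__":
    qdr_over_ddr = delta_vs("DDR IB", "QDR IB")
    roce_over_qdr = delta_vs("QDR IB", "RoCE")
    for op in LATENCY_USEC:
        print(f"{op:11s} QDR-DDR {qdr_over_ddr[op]:6.2f} usec   "
              f"RoCE-QDR {roce_over_qdr[op]:6.2f} usec")
```

The 2.2 usec and roughly 2 usec increases quoted in the bullets correspond to the DIAGPTEST row; the increase varies by operation type.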


Microb single port operation rates

Small messages

● QDR IB operation rates are 9.6% to 14.6% lower than DDR IB.

● RoCE operation rates are 7.3% to 8.5% lower than QDR IB, using the same adapter chip in different modes.

● The RegisterPage operation is not too critical to DB2 transaction performance; however, it is much slower on QDR IB and RoCE adapters.

[Figure: Troy single port small message rates, build 1146A_61ps111. Operations per second (0 to 2,500,000) by operation type (DIAGPTEST, RARPTESTND, REGPTEST, SLSPTEST) for DDR IB, QDR IB and RoCE.]

Data operations

● DDR IB and RoCE write multiple page rates are link limited.

● Read rates for DDR IB and RoCE are lower than writes because of additional per page overhead.

● QDR IB read and write rates are well below link limits. QDR IB is not considered strategic/tactical for Troy on POWER.

[Figure: Troy single port data pages per second rates, build 1146A_61ps111. 4K pages per second (0 to 600,000) for Read and Write on DDR IB, QDR IB and RoCE, with the DDR IB, QDR IB and RoCE link limits marked.]
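To give a feel for what "link limited" means in 4K pages per second, the sketch below converts a link data rate into an upper bound on the page rate. The link data rates are assumptions that the slides do not state: 4x DDR IB at 16 Gb/s and 4x QDR IB at 32 Gb/s after 8b/10b encoding, and RoCE over 10 Gb/s Ethernet. Header and protocol overhead is ignored, so real limits are somewhat lower. The names `ASSUMED_DATA_RATE_BPS` and `page_rate_limit` are illustrative.

```python
PAGE_BYTES = 4096  # the slide reports data rates in 4K pages per second

# ASSUMED usable data rates in bits per second (not stated in the slides):
#   4x DDR IB: 20 Gb/s signalling, 16 Gb/s after 8b/10b encoding
#   4x QDR IB: 40 Gb/s signalling, 32 Gb/s after 8b/10b encoding
#   RoCE:      10 Gb/s Ethernet
ASSUMED_DATA_RATE_BPS = {"DDR IB": 16e9, "QDR IB": 32e9, "RoCE": 10e9}


def page_rate_limit(data_rate_bps, page_bytes=PAGE_BYTES):
    """Upper bound on pages per second, ignoring header and protocol overhead."""
    return data_rate_bps / (8 * page_bytes)


if __name__ == "__main__":
    for fabric, bps in ASSUMED_DATA_RATE_BPS.items():
        print(f"{fabric:6s} about {page_rate_limit(bps):,.0f} 4K pages per second")
```

Under these assumptions the bounds come out at roughly 488,000 pages per second for DDR IB and 305,000 for RoCE, which appears broadly consistent with where the chart places those two limit lines.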


Microb DTW paced rate traffic simulation results

● DTW rate generation is based on member tuning to generate 215,000 operations per second on pureScale FP2 with DDR IB adapters.

  DTW simulation:

  30 SLS connections

  14 RAR connections

  1 WARMO connection

  Interoperation gap 175 usec.

● RoCE 1 port operation rate is 11.47% lower than DDR IB, 199994 vs. 225907 operations per second.

● For 2 ports RoCE is 12.68% lower than DDR IB, 379937 vs. 435904 operations per second.

● Average latency for RoCE on 1 port is 1.4x higher than DDR IB (about 2.4 times the DDR IB value): 43.66 vs. 17.93 usec.

● For 2 ports RoCE latency is about 115% higher than DDR IB: 54.92 vs. 25.50 usec.

● 2 port scaling is 94% for DDR IB but only 90% for RoCE.

[Figure: Microb DTW profile performance operation rates, build 1146A_61ps111. Total operations per second (0 to 1,400,000) for 1 port and 2 ports on DDR, QDR and RoCE.]

[Figure: Microb DTW profile performance latency, build 1146A_61ps111. Average operation latency (usec, 0 to 160) for 1 port and 2 ports on DDR, QDR and RoCE.]
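One way to read the paced profile is as a closed loop: each connection issues an operation, waits for it to complete, then idles for the interoperation gap. The sketch below estimates the resulting 1 port operation rate from the connection counts, the 175 usec gap and the average latencies on this slide. This closed-loop model is an assumption made here for illustration; the slides do not describe how Microb paces its connections, and `paced_rate` is a hypothetical helper.

```python
GAP_USEC = 175.0             # interoperation gap from the slide
CONNECTIONS = 30 + 14 + 1    # SLS + RAR + WARMO connections from the slide

# (average latency in usec, measured operations per second), 1 port, from the slide.
MEASURED_1_PORT = {"DDR IB": (17.93, 225907), "RoCE": (43.66, 199994)}


def paced_rate(avg_latency_usec, connections=CONNECTIONS, gap_usec=GAP_USEC):
    """Estimated operations per second for a closed loop of paced connections.

    ASSUMPTION: every connection has one operation outstanding at a time and
    then idles for the gap, so one cycle takes latency + gap microseconds.
    """
    return connections * 1e6 / (avg_latency_usec + gap_usec)


if __name__ == "__main__":
    for fabric, (latency, measured) in MEASURED_1_PORT.items():
        estimate = paced_rate(latency)
        print(f"{fabric:6s} estimated {estimate:,.0f} vs. measured {measured:,} ops/s")
```

With the 1 port figures above, the estimates land within about 5% of the measured rates, which suggests that most of the paced rate difference between RoCE and DDR IB follows from the higher per operation latency.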


Microb DTW peak rate traffic simulation results

● Peak rate generation is based on the same number of connections running SLS, RAR and WARMO operations unthrottled. Current results do not necessarily match the DTW profile percentages.

● Peak rates give an indication of operation rate and latency differences when increasing the load above 215,000 DTW mix operations per second.

● RoCE 1 port operation rate is 42.37% lower than DDR IB: 464843 vs. 828927 operations per second.

● For 2 ports RoCE is 46.74% lower than DDR IB: 640531 vs. 1202565 operations per second.

● Average latency for RoCE on 1 port is 43.73% higher than DDR IB: 78.51 vs. 53.60 usec.

● For 2 ports RoCE latency is about 87% higher than DDR IB: 138.65 vs. 74.28 usec.

● 2 port scaling is only 45% for DDR IB at peak traffic rates and 38% for RoCE.

[Figure: Microb peak profile performance operation rates, build 1146A_61ps111. Total operations per second (0 to 1,400,000) for 1 port and 2 ports on DDR, QDR and RoCE.]

[Figure: Microb peak profile performance latency, build 1146A_61ps111. Average operation latency (usec, 0 to 160) for 1 port and 2 ports on DDR, QDR and RoCE.]
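The relative differences and the 2 port scaling on this slide are simple ratios of the quoted rates and latencies. The sketch below recomputes them, taking "2 port scaling" to mean the percentage rate gain from adding the second port, which is an interpretation made here. The helper names are illustrative, and because the slide quotes rounded figures, a recomputed percentage may differ by a point or two from the one printed on the slide.

```python
# Peak profile results from the slide: (1 port, 2 ports).
PEAK = {
    "DDR IB": {"rate": (828927, 1202565), "latency_usec": (53.60, 74.28)},
    "RoCE":   {"rate": (464843, 640531),  "latency_usec": (78.51, 138.65)},
}


def percent_change(value, baseline):
    """Signed percentage difference of `value` relative to `baseline`."""
    return (value - baseline) / baseline * 100.0


def two_port_gain(one_port_rate, two_port_rate):
    """Percent rate increase from the second port (100 would be a perfect doubling)."""
    return percent_change(two_port_rate, one_port_rate)


if __name__ == "__main__":
    for ports in (0, 1):
        label = f"{ports + 1} port"
        rate = percent_change(PEAK["RoCE"]["rate"][ports], PEAK["DDR IB"]["rate"][ports])
        lat = percent_change(PEAK["RoCE"]["latency_usec"][ports],
                             PEAK["DDR IB"]["latency_usec"][ports])
        print(f"{label}: RoCE rate {rate:+.2f}%, latency {lat:+.2f}% vs. DDR IB")
    for fabric, data in PEAK.items():
        print(f"{fabric}: 2 port gain {two_port_gain(*data['rate']):.1f}%")
```

With this definition the 2 port gain comes out at about 45% for DDR IB and 38% for RoCE, matching the scaling figures in the last bullet.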


Summary

● Current assessment:

  ● Currently DDR IB using the Galaxy2 adapter in POWER performs best.

  ● RoCE is the worst performing option due to much higher latency and limited link bandwidth.

● Yet to be assessed:

  ● In-depth analysis of the RoCE driver vs. the GX++ Galaxy driver.

  ● Scaling up to 4 ports on the CF.

  ● Comparison to Intel hardware for RoCE.


Backup charts

