
ARTIFICIAL INTELLIGENCE 101 SERIES:

ChatGPT

for Internal

Auditors

Use cases, sample prompts, and key

considerations when using Natural

Language Processing tools.

Check out our

“Artificial Intelligence 101

for Internal Auditors” for a

primer on AI technology

Table of Contents

Introduction

What Is Natural Language Processing?

• Definition

• Use in the Marketplace

• ChatGPT Alternatives

Common Risks Associated With the Use of Publicly Available AI Tools

Use Cases

• Example 1: General Audit Plan

• Example 2: General Audit Plan

• Example 3: Structure Unstructured Data

• Example 4: Write Your Audit Report

Introduction

By November 2023, over 100 million people globally were regularly using ChatGPT. Businesses and individuals have praised the AI tool for its ability to save time spent on manual, time-consuming activities, using it for everything from writing emails and summarizing documents to developing code, Excel shortcuts, and PowerPoint presentations. Because of these and other benefits, organizations across all professions and industries are using ChatGPT and other Natural Language Processing (NLP) tools, and so can internal auditors.

This AI 101 guide will provide novice AI users with use cases and recommendations for how they can incorporate ChatGPT-style tools into their practice. However, as with any technology, there are both risks and rewards, and the potential risks associated with publicly available tools cannot be overlooked. As always, internal auditors should remain vigilant about the inherent risks and diligent about the controls in place to avoid, share, accept, or mitigate those risks.

Artificial Intelligence 101 Series: ChatGPT for Internal Auditors 2

What Is Natural Language Processing?

Natural Language Processing (NLP) is a type of artificial intelligence that gives a machine the ability to understand written and spoken words in the same way that humans do. While each specific type of NLP may be programmed to focus on different skills, in general this type of AI is capable of:

• Speech recognition – identifying when a human is speaking and what words are being said

• Grammatical tagging – identifying parts of speech, such as nouns, verbs, or adjectives

• Sentiment analysis – determining themes in data, such as whether customer responses are positive, negative, or neutral

• Text summarization – reducing the number of words in data without changing its meaning, or providing a synopsis of other data (a video, for example)

• Natural language generation – putting information into new text or speech, such as the content produced by ChatGPT or Apple Siri

In addition to being a type of NLP, ChatGPT is more specifically a Generative Pre-trained Transformer (GPT). It is designed to handle sequential data, such as language, and will generate text based on the input it receives. GPT models don’t only copy and paste; they can create brand new content. This content is based on all the data the model was initially trained on, as well as new data it receives. This means that the model will learn from, and “keep,” any information you give it. Internal auditors and the businesses they represent must be cautious not to provide proprietary data in “conversations” with NLP models like ChatGPT.

Some organizations are beginning to develop private, or closed, GPT models. In those cases, the information entered into the system does not become publicly available.

How are NLP tools like ChatGPT used today?

Natural Language Processing-based tools are already integrated into many aspects of our everyday lives. For example, NLP is used in:

• Google Translate

• Email filters (Gmail’s primary, social, or promotions categories)

• Chatbots and virtual agents like Apple Siri and Amazon Alexa

• Search results and predictive text

• Speech recognition software (voice-to-text)

Do alternatives to OpenAI’s ChatGPT exist?

The use cases in this resource relied on ChatGPT 4.0. However, there are numerous alternatives to ChatGPT, each with unique features and capabilities. Below is a small sample of commonly used ones:

1. Google Bard – Bard is a conversational AI chatbot that ties into the popular search engine.

2. Microsoft Bing AI – Bing’s AI-slash-search engine integrates with and has support for other Microsoft applications, allowing it to integrate items such as search and chat histories into its responses.

3. Amazon Lex – This chatbot by Amazon Web Services allows users to build conversational interfaces into applications using voice and text. It is adept at automatic speech recognition (ASR) for converting speech to text and natural language understanding (NLU) to recognize user intent.

4. IBM Watson Assistant – This tool is designed to understand and respond to customer inquiries. It can be trained to provide information specific to an industry or company.

5. Jasper – This AI is used often to develop marketing and communications content, including blogs, social media profiles, and websites.

6. CopyAI – CopyAI is another tool often used for developing marketing and communications content for organizations.


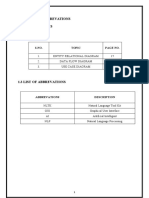

Common Risks Associated With the Use of Publicly Available AI Tools

| RISK | EXPLANATION | SAMPLE MITIGATION STEPS |
| --- | --- | --- |
| Data is inaccurate due to a biased algorithm | The algorithm has been trained on data that was collected, classified, or otherwise subjected to biases | Don’t accept AI-generated responses as completely accurate; apply professional skepticism and verify all information |
| Data has been “poisoned” | An AI tool has been deliberately given inaccurate, biased, or otherwise bad information, which it then uses in developing responses | Don’t accept AI-generated responses as completely accurate; apply professional skepticism and verify all information |
| Users place too much reliance on AI-generated content | Internal auditors or other staff blindly “copy and paste” information from the tool | Don’t accept AI-generated responses as completely accurate; apply professional skepticism and verify all information |
| Confidential information is provided in the prompts | Users enter information into the chat that is not meant for public consumption | Remove any potential identifying information from prompts |
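The last mitigation step, removing identifying information from prompts, can be partly supported by a small pre-submission check. The sketch below is illustrative only: the regular expressions, the `redact_prompt` helper, and the sample company name are assumptions made for this example, and simple pattern matching will not catch every identifier, so a human review of each prompt is still needed.

```python
import re

# Hypothetical patterns for illustration; a real list would be reviewed and
# tailored to the organization's own identifiers.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_prompt(prompt, sensitive_terms):
    """Replace e-mail addresses, phone-like numbers, and listed terms with placeholders."""
    redacted = EMAIL.sub("[EMAIL]", prompt)
    redacted = PHONE.sub("[PHONE]", redacted)
    for term in sensitive_terms:
        # Case-insensitive match on each organization-specific term.
        redacted = re.sub(re.escape(term), "[REDACTED]", redacted, flags=re.IGNORECASE)
    return redacted


# Made-up draft prompt containing identifying details.
draft = "Acme Pets (contact jane.doe@acmepets.example, 555-123-4567) needs an audit plan."
clean = redact_prompt(draft, ["Acme Pets"])
print(clean)
```

A check like this works best as a prompt to stop and reread, not as a guarantee that the text is safe to share.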


USE CASES:

Examples of How to Safely Incorporate

ChatGPT-Style Tools in Your Practice

EXAMPLE 1: Use it to help you think through your general audit plan

Prompt: Given The Institute of Internal Auditors’ existing standards for internal auditing, what would you recommend a Chief Audit Executive include in an audit plan designed to audit the appropriate use of artificial intelligence within their organization?

PRO TIP! Ask generalized questions. Never give identifiable or proprietary information to an NLP tool available for public use. If you think it could be used to identify your company, remove it.

PRO TIP! Throughout the entire engagement, make sure you are scrutinizing the responses and applying reasonable skepticism. The responses provided should be used as a general guide and to help provoke your own thoughts; they should not be used as authoritative text.

Actual ChatGPT 4.0 Response: Auditing the appropriate use of artificial intelligence (AI) within an organization is a relatively new but increasingly important frontier. Given The IIA’s standards for internal auditing, a Chief Audit Executive (CAE) should consider the following components when developing an audit plan for AI:

1. Risk Assessment:
• Identification of AI Systems: Catalog all AI-driven systems and applications in the organization.
• Risk Prioritization: Evaluate the criticality and potential impact of each AI system on the organization. Systems with higher potential impact (financial, operational, reputational) should receive priority.

2. Governance and Oversight:
• AI Strategy: Review the organization’s AI strategy and ensure alignment with organizational goals.
• Roles and Responsibilities: Check that there are clear delineations of roles and responsibilities concerning AI development, implementation, and monitoring.

3. Data Integrity and Quality:
• Source Data: Assess the accuracy, completeness, and timeliness of the data feeding into the AI models.
• Data Privacy and Security: Ensure that the AI systems comply with data protection laws and that sensitive data is appropriately protected.
• Bias Detection: Audit for biases in the data that might result in unfair or discriminatory outcomes.

4. AI Model Validation:
• Model Development and Training: Assess the methodologies used to develop and train AI models for appropriateness and scientific validity.
• Testing and Calibration: Ensure rigorous testing and calibration of models before deployment and periodically thereafter.
• Model Documentation: Review documentation for thoroughness, including assumptions made, data sources, algorithms used, and decision rationales.

5. Transparency and Explainability:
• Interpretability: Check if the AI model’s decisions can be explained and understood by stakeholders. This is especially crucial for AI systems used in decision-making that affects individuals, like hiring or loan approvals.


• Reporting: Review reporting mechanisms for AI decisions, ensuring they are transparent and provide appropriate information to relevant stakeholders.

6. Operational Controls:
• Change Management: Assess the processes for updating or altering AI models and systems.
• Incident Management: Ensure there are mechanisms for capturing, reporting, and addressing anomalies or errors in AI-driven decisions.

7. Ethical Considerations:
• Ethical Guidelines: Ensure AI deployments align with ethical guidelines and principles, both internally set and recognized globally.
• Stakeholder Impact Analysis: Analyze potential adverse impacts of AI decisions on stakeholders and ensure that there are mitigation strategies.

8. Performance Monitoring:
• Ongoing Monitoring: Audit processes for continuous monitoring of AI systems’ performance against defined metrics.
• Feedback Loops: Ensure that there are mechanisms for learning from inaccuracies or failures and that these insights are used to improve AI systems.

9. Regulatory and Compliance:
• Legal Requirements: Ensure AI applications are in compliance with any sector-specific or jurisdiction-specific regulations.
• External Reporting: Review processes for external reporting, if required, ensuring accuracy and timeliness.

10. Stakeholder Communication:
• Training and Awareness: Assess training programs for employees and stakeholders affected by AI decisions to ensure understanding and effective use.
• Feedback Channels: Ensure stakeholders have clear channels to provide feedback or raise concerns about AI-driven decisions.

11. Third-Party Management (if the AI system is outsourced or built in partnership):
• Vendor Due Diligence: Audit third-party providers for their AI capabilities, data management practices, and compliance with relevant standards.
• Contractual Safeguards: Ensure contracts with third parties have clear terms regarding data usage, AI model transparency, and other critical factors.

Regularly updating the audit plan to reflect advances in AI technology and methodologies will also be crucial. The rapid evolution of AI means that what is considered a best practice now might be outdated in just a few years.

Prompt: You suggest that the audit plan should audit for biases in data that might result in unfair or discriminatory outcomes. How might the Chief Audit Executive audit for such biases? What specific steps should the auditor take?

PRO TIP! Ask a clarifying question or request a more detailed response based upon the information given (or not given).

Actual ChatGPT 4.0 Response: Auditing for biases in data and AI models is a challenging yet essential task, especially as businesses and organizations increasingly rely on AI for decision-making. Biased decisions can lead to significant financial, reputational, and legal risks. Here’s a step-by-step approach a Chief Audit Executive (CAE) might take:

1. Define What Bias Looks Like:
• Understand the business and regulatory context. Different industries or sectors might have specific definitions or concerns related to bias.
• Consult with stakeholders, including legal, ethical, and diversity and inclusion experts, to define clear benchmarks or indicators of bias.

2. Identify High-Risk Areas:
• Prioritize AI systems and data sets that have a more significant impact on individuals or groups, such as hiring systems, lending algorithms, or healthcare diagnostics.


• Understand the historical context: Some industries or sectors have historical biases that might seep into AI models if not corrected.

3. Assess Data Collection Methods:
• Review the sources of the data. Is it from a diverse and representative sample, or does it disproportionately represent certain groups?
• Examine how data was cleaned and pre-processed. Data cleaning can sometimes unintentionally introduce biases.

4. Conduct Statistical Analysis:
• Use statistical tools to identify patterns in the data. For instance, disproportionate outcomes for certain groups might indicate bias.
• Consider using fairness-enhancing interventions and techniques to mitigate identified biases in data.

5. Test the Model:
• Use adversarial testing: Intentionally input data that challenges the AI model to see if it produces biased outcomes.
• Compare model decisions across different groups to identify any disproportionate impacts. For instance, compare approval rates between different ethnic or gender groups.

6. Review Model Training and Development:
• Understand the objectives and optimization criteria of the AI model. If a model is optimized for profit maximization without fairness constraints, it may produce biased outcomes.
• Examine the model’s feedback loops. If a model is continually learning from biased decisions, it can reinforce and exacerbate those biases.

7. Engage External Experts:
• Consider bringing in third-party experts specializing in AI fairness and bias to review the model and its outcomes. This can provide an objective perspective and validate internal findings.

8. Evaluate Mitigation Measures:
• Review any fairness interventions or corrective measures the organization has already implemented in the AI model.
• Check if these measures align with best practices and industry standards.

9. Review Documentation and Transparency:
• Ensure that there’s comprehensive documentation about the AI model’s development, training, objectives, and any known limitations. This can help provide context and might highlight areas where bias was introduced.
• Check if explanations for AI decisions (especially those impacting individuals) are clear, understandable, and free from bias.

10. Stakeholder Feedback:
• Engage with end-users and those affected by the AI decisions. Feedback from these groups can provide valuable insights into perceived and actual biases.

11. Continuous Monitoring:
• Bias detection is not a one-off activity. Regularly audit and monitor the AI model’s decisions to ensure that biases do not creep in over time.

12. Report and Recommend:
• Document findings, potential risks, and provide recommendations for rectification. Ensure that senior management is aware of any significant risks related to bias.
• Advocate for training and awareness programs within the organization to ensure everyone understands the importance of unbiased AI systems.

Remember, while statistical and technical measures are valuable, they must be combined with a contextual understanding of the AI system’s impact on individuals and groups to effectively audit for biases.
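The suggestion to compare approval rates between groups can be illustrated with a short calculation. This is a minimal sketch under stated assumptions: the group labels and decisions are made-up sample data, the function names are hypothetical, and the 0.8 cut-off follows the commonly cited "four-fifths" screening convention, which is a rough flag for further review rather than proof of bias.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's approval rate divided by the highest group's rate."""
    best = max(rates.values())
    return {g: (r / best if best else 0.0) for g, r in rates.items()}


# Made-up sample: group A approved 8 of 10 times, group B approved 5 of 10 times.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = approval_rates(decisions)
ratios = impact_ratios(rates)

THRESHOLD = 0.8  # assumption: four-fifths screening convention
flagged = [g for g, r in ratios.items() if r < THRESHOLD]
print(rates, ratios, flagged)
```

A flagged group is a starting point for the contextual review described above, not a conclusion in itself.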


Prompt: What questions should I ask or what steps should I take to analyze the data for inherent biases?

PRO TIP! Continue to ask clarifying and/or refining questions.

Actual ChatGPT 4.0 Response: Analyzing data for inherent biases is a crucial step in ensuring fairness and ethical use of AI systems. Here are questions to ask and steps to take for a thorough analysis:

Questions to Ask

1. Is the Data Representative?
• Does the dataset adequately represent all groups, especially those that are historically marginalized or underrepresented?
• Are there any groups that are overrepresented or underrepresented?

2. Are There Proxy Variables?
• Does the dataset contain variables that could act as proxies for sensitive attributes (like race, gender, age)?
• How might these proxies influence the outcomes of the AI system?

3. How Was the Data Collected?
• What was the process of data collection?
• Could the collection method have introduced any biases (e.g., self-selection bias, sampling bias)?

4. What Is the Historical Context?
• Does the historical data contain biases or reflect past discriminatory practices?
• How might historical biases affect current and future AI decisions?

5. Data Labeling:
• Who labeled the data, and what criteria were used?
• Could there be subjective biases in how data was categorized or labeled?

Steps to Take

1. Statistical Analysis:
• Conduct statistical tests to identify any disparities in data distribution across different groups.
• Look for correlations that might indicate biases, especially involving sensitive attributes.

2. Data Segmentation:
• Segment the data based on various demographics and analyze each segment to identify disparities.
• This helps in understanding how different groups are represented and treated in the dataset.

3. Bias Detection Algorithms:
• Use specialized algorithms designed to detect biases in datasets.
• Tools and frameworks for fairness assessment (like IBM’s AI Fairness 360, Google’s What-If Tool) can be helpful.

4. Consultation With Subject Matter Experts:
• Engage with domain experts and data scientists to understand potential sources of bias.
• Experts can provide insights into nuances of data that might not be evident through statistical analysis alone.


5. Review Data Collection and Curation Processes:
• Examine how data was collected, processed, and curated.
• Look for any stages in the data pipeline where biases could have been introduced.

6. External Benchmarks and Standards:
• Compare your data with external benchmarks or standards to identify any deviations.
• This can help in understanding if the dataset is skewed compared to real-world distributions.

7. Feedback Loops:
• Analyze whether the AI system’s outputs are creating feedback loops that reinforce biases.
• Assess how data generated by the AI system is fed back into the system, potentially amplifying initial biases.

8. Historical Data Review:
• Critically evaluate historical data for biases and consider how historical trends might influence current data.

9. Impact Assessment:
• Conduct impact assessments to understand how biases in data might affect various groups.
• Evaluate the potential consequences of biased decisions on different communities.

10. Document Findings and Recommendations:
• Thoroughly document the analysis process, findings, and any recommendations for addressing identified biases.
• This documentation is crucial for transparency and accountability.

By asking these questions and following these steps, you can conduct a comprehensive analysis of your data for inherent biases, which is essential for building fair and ethical AI systems.
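The segmentation and external-benchmark steps can be sketched as a simple comparison of each group's share of the dataset against an expected share. Everything specific here is an assumption for illustration: the `age_band` attribute, the sample records, the benchmark percentages, and the `representation_gaps` helper are hypothetical, and a real review would use a benchmark from an authoritative source.

```python
from collections import Counter


def representation_gaps(records, attribute, benchmark):
    """Return observed share minus benchmark share for each benchmark group.

    Positive values mean the group is overrepresented in the dataset;
    negative values mean it is underrepresented.
    """
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in benchmark.items():
        observed = counts.get(group, 0) / total if total else 0.0
        gaps[group] = observed - expected
    return gaps


# Made-up dataset of ten records segmented by a hypothetical age band.
records = (
    [{"age_band": "18-34"}] * 6
    + [{"age_band": "35-54"}] * 3
    + [{"age_band": "55+"}] * 1
)

# Hypothetical external benchmark (shares of the relevant population).
benchmark = {"18-34": 0.3, "35-54": 0.4, "55+": 0.3}

gaps = representation_gaps(records, "age_band", benchmark)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.0%}")
```

Large gaps do not prove bias on their own, but they show where the questions about collection methods and historical context deserve the closest attention.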


EXAMPLE 2: Use it to help you think through your general audit plan

Prompt: Using The Institute of Internal Auditors’ existing standards for internal auditing, draft an audit program with the objective of validating whether an organization’s website search functionality and the processes supporting website search capabilities are effective. The scope of the audit includes the organization’s online content library, its current taxonomy, tagging processes, and website. The organization in question is a small, for-profit organization specializing in the sale of pet products. Its website governance process is defined; the entire organization has standardized and documented its processes; and cross-team collaboration thrives.

PRO TIP! Be specific in your questions. The more nuanced you are (without giving out private information!), the better your responses will be.

Actual ChatGPT 4.0 Response: Creating an audit program using The Institute of Internal Auditors’ existing standards for internal auditing to evaluate the effectiveness of an organization’s website search functionality and related processes involves several steps. Here’s a draft audit program tailored to a small, for-profit organization specializing in pet products:

1. Preliminary Planning:
• Understand the Business: Gain an in-depth understanding of the organization’s business model, focusing on online sales and content dissemination strategies.
• Review Existing Documentation: Examine the organization’s website governance process, standardized documentation, and collaboration protocols.
• Identify Key Stakeholders: Determine who will be involved in the audit (e.g., IT, marketing, content management teams).

2. Define Audit Objective and Scope:
• Objective: To validate the effectiveness of the organization’s website search functionality and the processes supporting it.
• Scope:
  • Examination of the online content library.
  • Assessment of the current taxonomy and tagging processes.
  • Evaluation of the website’s search functionality.

3. Risk Assessment:
• Conduct a risk assessment to identify potential issues that could impact the effectiveness of the website’s search functionality.

4. Develop Audit Criteria:
• Benchmarking: Compare the organization’s practices against best practices in website search functionality.
• Compliance: Ensure adherence to any relevant laws, regulations, and internal policies.

5. Conducting the Audit:
• Test Website Search Functionality: Conduct tests to assess the accuracy, speed, and relevance of search results.
• Evaluate Taxonomy and Tagging Processes: Review how content is categorized and tagged, and how this influences search results.
• Assess Content Library: Determine if the content library is up to date, relevant, and adequately indexed for search.
• Website Technical Evaluation: Examine technical aspects like website coding, metadata, and search algorithms.
• Stakeholder Interviews: Engage with team members across different departments to understand their role in maintaining and improving search functionality.
• Document Review: Analyze the standardization and documentation of processes related to website search.

6. Reporting:
• Draft Report: Prepare a draft audit report detailing findings, risks identified, and areas for improvement.


• Management Review: Present the draft report to management for feedback.
• Final Report: Issue a final report with recommendations and an action plan.

7. Follow-Up:
• Action Plan Monitoring: Monitor the implementation of recommendations.
• Follow-Up Audit: Plan a follow-up audit, if necessary, to evaluate the implementation of the action plan.

8. Continuous Improvement:
• Feedback Loop: Establish a feedback mechanism to continuously improve website search functionality and related processes.

This audit program is designed to comprehensively evaluate the effectiveness of the organization’s website search functionality, ensuring it aligns with its objectives and the needs of its customers in the pet products industry.

Prompt: Given the information already provided about the organization’s size and type, as well as the maturity of the website governance process, what risks should I be looking for that could impact the effectiveness of the website’s search functionality?

PRO TIP! Biased questions or data will give biased answers. Try to remove all bias in your prompts.

Actual ChatGPT 4.0 Response: Given the nature of the small, for-profit organization specializing in pet products, with a well-defined website governance process and standardized, documented processes, several specific risks could impact the effectiveness of the website’s search functionality. Here are some key risks to consider:

1. Technological Limitations:
• Outdated Technology: The website might be running on outdated technology that limits search functionality capabilities.
• Inadequate Search Algorithms: The algorithms used for search might not be sophisticated enough to handle complex queries or filter results effectively.

2. Content Management Risks:
• Poorly Managed Taxonomy and Tagging: Inaccurate or inconsistent taxonomy and tagging can lead to irrelevant search results.
• Outdated or Irrelevant Content: If the content library is not regularly updated or curated, it can result in outdated or irrelevant search results.

3. User Experience Issues:
• Ineffective Search Interface: A non-intuitive or complicated search interface can hinder user experience and reduce the effectiveness of searches.
• Lack of Customization: Inability to tailor search results to individual user preferences or search history.

4. Data and Analytics Challenges:
• Inadequate Data Analysis: Failure to effectively analyze user search patterns and behaviors to improve search functionality.
• Limited Feedback Mechanisms: Lack of effective channels for receiving user feedback on search functionality.

5. Security and Privacy Concerns:
• Data Breaches: Risks associated with the security of user data and search histories.
• Compliance Issues: Non-compliance with data protection regulations (like GDPR) in handling user data.

6. Operational and Governance Risks:
• Insufficient Cross-Team Collaboration: Even with thriving collaboration, there could be gaps in communication or understanding between different teams (like IT, content, marketing) impacting search efficiency.
• Change Management Issues: Challenges in implementing changes or updates to the search functionality due to rigid governance structures.

7. Resource Constraints:
• Limited IT Resources: Being a small organization, there might be constraints in IT support and resources for ongoing maintenance and improvement of search functionality.


• Budget Limitations: Financial constraints may limit the ability to invest in advanced search technology or hire specialized talent.

8. Scalability and Growth Challenges:
• Scalability of Search Function: As the business grows, the search functionality may not be scalable to handle increased traffic or expanded content.

Identifying and addressing these risks during the audit will help ensure that the organization’s website search functionality remains effective and aligned with its business objectives and user needs.

Prompt: For each of the risks identified above, what are some potential internal controls that could help the organization avoid, share, accept, or mitigate those risks?

Actual ChatGPT 4.0 Response: For each of the risks identified in the effectiveness of the website’s search functionality, implementing specific internal controls can help the organization manage these risks effectively. Here’s a breakdown of potential controls for each risk category:

1. Technological Limitations:
• Regular Technology Audits: Conduct periodic reviews of the website’s technology stack to ensure it’s up to date.
• Investment in Advanced Search Technologies: Allocate budget for upgrading search algorithms and software.

2. Content Management Risks:
• Standardized Tagging Procedures: Implement and enforce strict guidelines for tagging and categorizing content.
• Regular Content Reviews: Schedule periodic audits of the content library to ensure relevance and accuracy.

3. User Experience Issues:
• User Interface Testing: Regularly test and update the search interface based on user feedback and usability studies.
• Personalization Features: Develop capabilities for customized search experiences based on user preferences.

4. Data and Analytics Challenges:
• Data Analysis Tools: Use advanced data analytics tools to monitor and analyze search patterns.
• Feedback Mechanisms: Establish channels for collecting and analyzing user feedback on search functionality.

5. Security and Privacy Concerns:
• Data Security Protocols: Implement robust data security measures, including encryption and access controls.
• Compliance Audits: Regularly audit data handling practices for compliance with relevant data protection laws.

6. Operational and Governance Risks:
• Cross-Functional Teams: Form dedicated teams comprising members from IT, content, and marketing to oversee search functionality.
• Change Management Processes: Develop a structured approach for implementing changes to the search system.

7. Resource Constraints:
• Resource Allocation Plan: Develop a strategic plan for allocating IT resources efficiently.
• Budget Planning: Set aside funds specifically for maintaining and upgrading search functionality.

8. Scalability and Growth Challenges:
• Scalability Testing: Regularly test the search system for scalability as part of the ongoing maintenance routine.
• Growth Planning: Incorporate scalability considerations in long-term planning for the website’s infrastructure.

By implementing these controls, the organization can effectively manage the risks associated with its website search functionality. It’s important to periodically review and update these controls to ensure they remain effective as the organization and its online presence grow and evolve.


EXAMPLE 3: Use It to Help You Structure Unstructured Data

Prompt: Access the attached, publicly available minutes of the New York City Municipal Water Finance Authority Board of Directors for 2023. Based on the information listed in the files, identify all of the times that the Board of Directors discussed contracts. List the Date, Contract Name, and Status of each contract.

PRO TIP! ChatGPT-style tools can structure many types of unstructured data, but avoid uploading any proprietary information into a public tool. For example, an organization would not want minutes from a private meeting, accounts payable reports, or bank statements uploaded into a public tool.

Actual ChatGPT 4.0 Response: Based on the information from the minutes of the New York City Municipal Water Finance Authority Board of Directors meetings for 2023, here are the times the Board discussed contracts:

• Date: February 2, 2023
• Contract Name: Amendment of Consulting Engineer Contracts with AECOM and Macan Deve
• Status: Approved

• Date: February 24, 2023
• Contract Name: Approval of Minutes of Previous Meeting and Approval of Supplemental Resolution No. 180 Authorizing the Issuance of Fiscal 2023 Series DD Water and Sewer System Second General Resolution Revenue Bonds
• Status: Approved

• Date: March 28, 2023
• Contract Name: Extension of Bond Counsel Contracts with Nixon Peabody LLP, Hardwick LLC, and Orrick Herrington & Sutcliffe LLP
• Status: Approved

• Date: May 18, 2023
• Contract Name: Procurement of Directors and Officers Liability Insurance from AIG, Zurich American Insurance Company, Liberty Mutual Insurance Company, and Aspen Insurance
• Status: Approved

• Date: August 3, 2023
• Contract Name: Rate Consultant Contracts with Amawalk Consulting Group LLC and Carollo Engineers
• Status: Approved

• Date: September 22, 2023
• Contract Name: Ratification of Rate Consultant Contracts with Amawalk Consulting Group LLC and Carollo Engineers
• Status: Ratified

Prompt: Put the above information into a CSV file.

Actual ChatGPT 4.0 Response: The information about the contracts discussed by the New York City Municipal Water Finance Authority Board of Directors in 2023 has been structured into a CSV file.

PRO TIP! Be skeptical and verify. The responses an NLP tool gives you should be fact-checked and used to provoke further thinking, but should not be treated as authoritative statements.
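The CSV step in Example 3 can also be done locally, which keeps the structured data out of a public tool once the list has been reviewed. The following is a minimal Python sketch, not the method ChatGPT used: the records are transcribed from the response in this example and have not been checked against the minutes, and the names `CONTRACTS`, `to_iso`, and `contracts_to_csv` are hypothetical helpers introduced only for illustration. Converting dates to ISO format is an assumption made here to simplify sorting; the original date text could be kept instead.

```python
import csv
import io
from datetime import datetime

# Records transcribed from the ChatGPT response in Example 3.
# They are illustrative and should be verified against the minutes.
CONTRACTS = [
    ("February 2, 2023",
     "Amendment of Consulting Engineer Contracts with AECOM and Macan Deve",
     "Approved"),
    ("February 24, 2023",
     "Approval of Minutes of Previous Meeting and Approval of Supplemental "
     "Resolution No. 180 Authorizing the Issuance of Fiscal 2023 Series DD "
     "Water and Sewer System Second General Resolution Revenue Bonds",
     "Approved"),
    ("March 28, 2023",
     "Extension of Bond Counsel Contracts with Nixon Peabody LLP, "
     "Hardwick LLC, and Orrick Herrington & Sutcliffe LLP",
     "Approved"),
    ("May 18, 2023",
     "Procurement of Directors and Officers Liability Insurance from AIG, "
     "Zurich American Insurance Company, Liberty Mutual Insurance Company, "
     "and Aspen Insurance",
     "Approved"),
    ("August 3, 2023",
     "Rate Consultant Contracts with Amawalk Consulting Group LLC and "
     "Carollo Engineers",
     "Approved"),
    ("September 22, 2023",
     "Ratification of Rate Consultant Contracts with Amawalk Consulting "
     "Group LLC and Carollo Engineers",
     "Ratified"),
]

FIELDNAMES = ["Date", "Contract Name", "Status"]


def to_iso(date_text):
    """Convert a date such as 'May 18, 2023' to '2023-05-18'."""
    return datetime.strptime(date_text, "%B %d, %Y").date().isoformat()


def contracts_to_csv(rows):
    """Return the rows as CSV text with a header line."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES)
    writer.writeheader()
    for date_text, name, status in rows:
        writer.writerow(
            {"Date": to_iso(date_text), "Contract Name": name, "Status": status}
        )
    return buffer.getvalue()


csv_text = contracts_to_csv(CONTRACTS)
print(csv_text)
```

Contract names that contain commas are quoted automatically by the `csv` module, so the file still opens as three columns in a spreadsheet. In line with the "be skeptical and verify" tip, each row should still be compared with the source minutes before it is relied on.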


EXAMPLE 4: Use It to Help You Write Your Audit Report

Prompt: Make the following information more concise and action-oriented:

• Expanded Background Information: The concept of website governance is an essential best practice that is crucial for ensuring that this digital asset significantly supports the organization’s overarching strategic goals. It is instrumental in delivering the anticipated value from the substantial investment placed in the website’s creation and ongoing maintenance. This comprehensive approach includes a well-defined set of policies, processes, roles, and responsibilities. Moreover, it comprehensively addresses all aspects of website maintenance and operation, ensuring that every facet of the website’s performance and functionality aligns seamlessly with the organization’s objectives and expectations.

• Detailed Observations: Currently, there is a notable absence of a structured governance plan specifically targeting the operation and maintenance of the website. This gap was somewhat addressed in October 2022, when the Web Team presented key performance indicators (KPIs) for the website, along with a plan outlining potential future improvements. This presentation was a commendable initial step towards establishing a framework for website governance. However, the situation has evolved since then due to a turnover in leadership, which has somewhat stalled these initial efforts. When the Board of Directors recently inquired about the state of website governance, the response provided was rather simplistic and insufficient. It was stated that “the Web Team owns the website,” which, while factually correct, does not constitute a robust governance plan. This response fails to address critical aspects of website governance, such as compliance and other key operational components, which may not fall under the direct purview of the Web Team.

• Enhanced Recommendations: It is imperative that the management takes a proactive stance in this matter. The first step should be to establish, document, and effectively communicate a comprehensive plan. This plan must explicitly address the strategic direction of the website, ensuring that it aligns with and supports the organization’s broader strategic goals. In addition to setting the strategic direction, it is equally important to delineate clear policies, processes, and roles/responsibilities. These should be geared towards ensuring the continued operation and maintenance of the website at an optimal level. Establishing such a governance framework will not only provide clear guidelines for current operations, but will also lay a solid foundation for future growth and adaptation of the website in alignment with the evolving objectives of the organization.

PRO TIP! ChatGPT-type tools excel at producing short, concise, and compelling text. However, there are significant limitations with report writing, as internal auditors should not include any proprietary information about their organization in their prompts. Remember: ChatGPT stores everything that is typed into it.

Actual ChatGPT 4.0 Response: Concise Summary and Action Plan

• Issue: The organization lacks a structured website governance plan, essential for aligning the website with strategic goals. Initial efforts stalled due to leadership changes, and the current understanding of website governance is inadequate.

• Immediate Action: Management must establish and document a comprehensive website governance plan. This plan should:

    • Clearly define the strategic direction of the website, ensuring alignment with the organization’s broader goals.

    • Detail policies, processes, roles, and responsibilities for ongoing website operation and maintenance.

    • Address all aspects of website performance and functionality.

• Long-Term Benefits: Implementing this governance framework will provide clear operational guidelines, support the website’s optimal functioning, and facilitate future growth and alignment with organizational objectives.


About The IIA

The Institute of Internal Auditors (IIA) is the internal audit profession’s most widely recognized advocate,

educator, and provider of standards, guidance, and certifications. Established in 1941, The IIA today

serves more than 230,000 members from more than 170 countries and territories. The association’s

global headquarters is in Lake Mary, Fla., USA. For more information, visit www.theiia.org.

Disclaimer

The IIA publishes this document for informational and educational purposes. This material is not

intended to provide definitive answers to specific individual circumstances and as such is only intended

to be used as a guide. The IIA recommends seeking independent expert advice relating directly to any

specific situation. The IIA accepts no responsibility for anyone placing sole reliance on this material.

Copyright

Copyright © 2023 The Institute of Internal Auditors, Inc. All rights reserved. For permission to reproduce,

please contact copyright@theiia.org.

IIA Headquarters

1035 Greenwood Blvd., Suite 401

Lake Mary, FL 32746 USA
