
Pneumonia Detection from CT scan using CNN Models

CHAPTER-1

INTRODUCTION

Pneumonia, a prevalent respiratory infection, poses a significant threat to public health, particularly in densely populated and polluted areas. It is characterized by inflammation and congestion in the lungs, which obstruct normal breathing and often lead to severe respiratory distress. In contemporary healthcare, the diagnosis of pneumonia typically relies on imaging techniques such as X-rays and CT scans. X-rays offer a conventional means of detection and provide fundamental insight into pneumonia. CT scans deliver more intricate and detailed assessments that enhance diagnostic accuracy, at the cost of a higher radiation dose.

In recent years, Deep Learning has transformed medical diagnostics, including pneumonia detection. Using Convolutional Neural Networks (CNNs) and transfer learning techniques, researchers have developed predictive models that analyze CT scans quickly and accurately. These models extract salient features from CT images through convolutional layers and then use dense layers for classification. Their primary objective is to determine whether a given CT scan indicates the presence of pneumonia, thereby facilitating prompt and accurate diagnosis.

In this context, this project aims to explore the application of Deep Learning methodologies

in pneumonia detection from CT scans. Through the utilization of CNNs and transfer

learning, we endeavour to construct predictive models that exhibit superior performance in

distinguishing pneumonia-affected CT scans from healthy ones. By harnessing the power of

advanced computational techniques, we aspire to contribute to the advancement of medical

diagnostics, ultimately improving patient outcomes and mitigating the burden of pneumonia

on global healthcare systems.

SIR C R REDDY COLLEGE OF ENGINEERING 1


CHAPTER-2

LITERATURE SURVEY

2.1. Pneumonia detection in chest X-ray images using an ensemble of deep learning models:

Pneumonia detection using chest X-ray images has been a subject of extensive research in recent years, driven by the need for accurate and efficient diagnostic tools to combat this prevalent respiratory infection. The literature on this topic covers several aspects of pneumonia detection, including image analysis techniques, deep learning models, and ensemble methods. Some of the key findings and approaches in the existing literature are [1]:

2.1.1 Key findings:

2.1.1.1. Image Analysis Techniques:

Traditional image processing techniques have been utilized for pneumonia detection

in chest X-ray images, including thresholding, edge detection, and texture analysis.

However, these methods often lack robustness and may struggle to handle the

complexities and variations present in medical images.

2.1.1.2. Deep Learning Models:

Deep learning models, particularly convolutional neural networks (CNNs), have

emerged as powerful tools for pneumonia detection. Studies have demonstrated the

effectiveness of CNNs in automatically extracting relevant features from chest X-ray

images and accurately classifying pneumonia cases.

Popular CNN architectures such as GoogLeNet, ResNet, and DenseNet have been

widely used in pneumonia detection tasks. These models offer strong performance

and scalability, making them suitable for real-world applications.

2.1.1.3. Transfer Learning:

Transfer learning has been extensively employed to address the challenge of limited

training data in medical image analysis tasks. Pre-trained CNN models trained on

large-scale image datasets (e.g., ImageNet) are fine-tuned on pneumonia-specific

datasets to leverage learned features and improve model generalization.


2.1.1.4. Ensemble Methods:

Ensemble methods combine predictions from multiple base models to achieve

superior performance compared to individual models. Studies have explored various

ensemble techniques, including simple averaging, weighted averaging, and stacking,

to enhance pneumonia detection accuracy.

Weighted averaging, where the weights assigned to base models are determined based

on their performance, has shown promise in improving ensemble performance and

robustness.
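The difference between simple and weighted averaging can be seen in a small sketch. The probabilities, the weights, and the 0.5 decision threshold below are hypothetical values chosen for illustration, not results from the cited studies.

```python
def average_ensemble(probs):
    """Simple averaging: every base model gets equal priority."""
    n_models = len(probs)
    return [sum(column) / n_models for column in zip(*probs)]


def weighted_average_ensemble(probs, weights):
    """Weighted averaging: weights are normalized so they sum to 1."""
    total = sum(weights)
    norm = [w / total for w in weights]
    return [sum(w * p for w, p in zip(norm, column)) for column in zip(*probs)]


# Hypothetical pneumonia probabilities from three base models for two images
probs = [
    [0.90, 0.30],  # model A
    [0.80, 0.40],  # model B
    [0.30, 0.95],  # model C
]
weights = [0.5, 0.3, 0.2]  # hypothetical, e.g. derived from validation performance

avg = average_ensemble(probs)
wavg = weighted_average_ensemble(probs, weights)

simple_labels = [int(p >= 0.5) for p in avg]  # 0.5 threshold (assumption)
weighted_labels = [int(p >= 0.5) for p in wavg]
print(simple_labels, weighted_labels)  # [1, 1] [1, 0]
```

On the second image, the low-weight model C alone pushes the simple average to 0.55, while the weighted average stays at 0.46.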

2.1.1.5. Evaluation Metrics:

Evaluation metrics commonly used in pneumonia detection studies include accuracy, precision, and recall (sensitivity). Together, these metrics give a more complete assessment of model performance than accuracy alone: precision reflects false positives, while recall reflects false negatives.
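These metrics can be computed from the four confusion-matrix counts, as in the sketch below. The counts are hypothetical. The F1-score, used later in this report, is included as the harmonic mean of precision and recall.

```python
def classification_metrics(tp, fp, fn, tn):
    """Return accuracy, precision, recall (sensitivity) and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0  # lowered by false positives
    recall = tp / (tp + fn) if tp + fn else 0.0     # lowered by false negatives
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return accuracy, precision, recall, f1


# Hypothetical test-set counts: 90 true positives, 10 false positives,
# 5 false negatives, 95 true negatives
acc, prec, rec, f1 = classification_metrics(tp=90, fp=10, fn=5, tn=95)
print(f"accuracy={acc:.3f} precision={prec:.3f} recall={rec:.3f} f1={f1:.3f}")
```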

2.2. A Deep Learning based model for the Detection of Pneumonia from Chest X-Ray Images using VGG-16 and Neural Networks:

Pneumonia is an infection (bacterial, viral, or fungal) that affects a significant proportion of individuals. This is especially true in developing and poor countries, where pollution, overcrowding, and unsanitary living conditions are widespread and healthcare infrastructure is lacking. Pneumonia can cause pleural effusion, a condition in which fluid accumulates in the chest and causes breathing problems. Recognizing pneumonia quickly is difficult, yet it is essential for patients to receive treatment and improve their chances of survival. Deep learning is a field of artificial intelligence that has been used successfully to develop prediction models. Pneumonia can be detected in various ways, such as CT scans and pulse oximetry, but the most common method is chest X-ray imaging. However, examining chest X-rays (CXR) is a demanding process susceptible to subjective variability. In this work, a deep learning (DL) model based on VGG16 is used to detect and classify pneumonia in two CXR image datasets. On the first dataset, VGG16 with Neural Networks (NN) achieves an accuracy of 92.15%, a recall of 0.9308, a precision of 0.9428, and an F1-score of 0.937. The VGG16 with NN experiment was also performed on a second CXR dataset of 6,436 images covering pneumonia, normal, and COVID-19 cases. On this second dataset, the accuracy, recall, precision, and F1-score are 95.4%, 0.954, 0.954, and 0.954, respectively. The results show that VGG16 with NN outperforms VGG16 with Support Vector Machine (SVM), VGG16 with K-Nearest Neighbor (KNN), VGG16 with Random Forest (RF), and VGG16 with Naïve Bayes (NB) on both datasets.[2]
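As a consistency check, the F1-score reported for the first dataset follows from the reported precision P and recall R through their harmonic mean:

```latex
F_1 = \frac{2PR}{P + R}
    = \frac{2 \times 0.9428 \times 0.9308}{0.9428 + 0.9308}
    = \frac{1.7551}{1.8736}
    \approx 0.937
```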

2.3. Detection of Pneumonia in Chest X-ray Images Using Neural Networks:

This study investigates the application of neural networks to detecting pneumonia in chest X-ray images. Pneumonia is a prevalent and potentially life-threatening condition, and early detection is crucial for effective treatment. With the advent of deep learning techniques, particularly convolutional neural networks (CNNs), automated detection systems have shown promising results. The authors propose an approach that uses CNNs to classify chest X-ray images as either pneumonia-positive or pneumonia-negative. Their methodology involves preprocessing, data augmentation, and fine-tuning of pre-trained models to enhance classification performance. They evaluate the approach on a large dataset of chest X-ray images and compare its performance with existing methods. The results demonstrate the efficacy and potential of neural networks in pneumonia detection, providing a foundation for future research and clinical applications in medical imaging.[3]


CHAPTER-3

EXISTING SYSTEM

In the existing system, the authors developed a computer-aided diagnosis system for automatic pneumonia detection using chest X-ray images. They employed deep transfer learning to handle the scarcity of available data and designed an ensemble of three convolutional neural network models: GoogLeNet, ResNet-18, and DenseNet-121. They adopted a weighted average ensemble technique and determined the weights assigned to the base learners with a novel approach.

The scores of four standard evaluation metrics (precision, recall, f1-score, and the area under the curve) are fused to form the weight vector. Earlier studies frequently set these weights experimentally, an approach that is prone to error. The existing approach was evaluated on two publicly available pneumonia X-ray datasets, provided by Kermany et al. and by the Radiological Society of North America (RSNA), using a five-fold cross-validation scheme. The existing system achieved accuracy rates of 98.81% and 86.85% and sensitivity rates of 98.80% and 87.02% on the Kermany and RSNA datasets, respectively.

Ensemble learning is a popular strategy in which the decisions of multiple classifiers are fused to obtain the final prediction for a test sample. It captures the discriminative information from all the base classifiers and therefore produces more accurate predictions. The ensemble techniques most frequently used in the literature are average probability, weighted average probability, and majority voting. The average probability-based ensemble assigns equal priority to each constituent base learner. However, for a particular problem, one base classifier may capture information better than the others. A more effective strategy is therefore to assign a weight to each base classifier. The performance of such an ensemble depends critically on the values of these weights, and most approaches set them based on experimental results. In that study, the authors devised a novel strategy for weight allocation. Four evaluation metrics (precision, recall, f1-score, and the area under the receiver operating characteristic (ROC) curve, AUC) were used to assign the optimal weight to three base CNN models: GoogLeNet, ResNet-18, and DenseNet-121. Earlier studies generally considered only classification accuracy when assigning weights to the base learners. Accuracy alone may be an inadequate measure, particularly when the datasets are class-imbalanced, and other metrics may provide better information for prioritizing the base learners.

Early detection of pneumonia is crucial for determining the appropriate treatment and for preventing the disease from threatening the patient's life. Chest radiographs are the most widely used tool for diagnosing pneumonia. However, they are subject to inter-class variability, and the diagnosis depends on the clinicians' expertise in detecting early pneumonia traces. To assist medical practitioners, that study developed an automated CAD system that uses deep transfer learning to classify chest X-ray images into two classes, "Pneumonia" and "Normal." The decision scores of three CNN models (GoogLeNet, ResNet-18, and DenseNet-121) were combined into a weighted average ensemble. The classifier weights were calculated with a novel strategy that fuses four evaluation metrics (precision, recall, f1-score, and AUC) using the hyperbolic tangent function. The framework was evaluated on two publicly available pneumonia chest X-ray datasets using a five-fold cross-validation scheme. On the Kermany dataset, it obtained an accuracy rate of 98.81%, a sensitivity rate of 98.80%, a precision rate of 98.82%, and an f1-score of 98.79%. On the RSNA challenge dataset, it obtained an accuracy rate of 86.86%, a sensitivity rate of 87.02%, a precision rate of 86.89%, and an f1-score of 86.95%. It outperformed state-of-the-art methods on these two datasets. Statistical analyses of the model using McNemar's and ANOVA tests indicate the viability of the approach. Furthermore, the ensemble model is domain-independent and can therefore be applied to a wide variety of computer vision tasks.
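The exact fusion formula is not given here, so the sketch below assumes one simple reading of metric fusion with the hyperbolic tangent. Each model's raw weight is taken as the sum of tanh over its four validation scores, and the raw weights are then normalized. Both the formula and the scores are hypothetical.

```python
import math


def tanh_fused_weight(precision, recall, f1, auc):
    """Fuse four validation scores into one raw model weight.

    Assumption: raw weight = sum of tanh(score) over the four metrics;
    the text only states that the metrics are fused with tanh.
    """
    return sum(math.tanh(s) for s in (precision, recall, f1, auc))


# Hypothetical validation scores (precision, recall, f1, AUC) per base learner
scores = {
    "GoogLeNet":    (0.95, 0.96, 0.955, 0.97),
    "ResNet-18":    (0.96, 0.97, 0.965, 0.98),
    "DenseNet-121": (0.97, 0.98, 0.975, 0.99),
}
raw = {name: tanh_fused_weight(*s) for name, s in scores.items()}
total = sum(raw.values())
weights = {name: w / total for name, w in raw.items()}  # normalized to sum to 1

for name, w in weights.items():
    print(f"{name}: {w:.4f}")
```

Because tanh is increasing, a model with uniformly better scores always receives a larger weight. Unlike accuracy-only weighting, all four metrics contribute.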


Fig. 3.1 Architecture of pneumonia detection from X-rays


3.1. Advantages:

Availability of Dataset: X-ray datasets are more widely available than datasets obtained from other imaging modalities such as CT scans or MRI. This availability stems from the routine use of X-rays in clinical practice for diagnosing various thoracic conditions, including pneumonia.

Monitoring Progress: X-rays can be used to monitor the progression of pneumonia and assess the effectiveness of treatment over time.

Limited Radiation Exposure: X-rays involve lower radiation exposure compared to

other imaging techniques like CT scans. This reduced radiation makes X-rays safer,

especially for vulnerable populations such as children and pregnant women. Since

pneumonia is a condition that may require frequent monitoring and follow-up

imaging, using X-rays mitigates concerns regarding cumulative radiation exposure

over multiple scans.

Cost-effectiveness: X-ray imaging is generally more cost-effective compared to other

modalities such as CT scans or MRI. This affordability makes X-rays accessible in

various healthcare settings, including resource-constrained environments where

advanced imaging modalities may not be readily available.

3.2. Disadvantages:

Limited Sensitivity and Specificity: While X-rays can detect structural abnormalities

in the lungs, they may lack sensitivity and specificity in distinguishing between

different types of pulmonary conditions. This can lead to false positives or false

negatives in pneumonia diagnosis, potentially resulting in misdiagnosis or delayed

treatment.

Radiation Exposure: X-ray imaging involves exposure to ionizing radiation, albeit at

relatively low levels. While the risk of radiation-induced harm from a single X-ray is

minimal, repeated exposure over time may increase the cumulative risk, particularly

in vulnerable populations such as children and pregnant women.

False Positives and Negatives: Like any diagnostic tool, chest X-rays are not

infallible. False positives (indicating pneumonia when it is not present) and false

negatives (missing pneumonia when it is present) can occur. This highlights the

importance of considering clinical symptoms and other diagnostic information.


CHAPTER-4

PROPOSED SYSTEM

4.1. Problem Statement:

Building a web application that takes CT scan images of lungs as input and predicts whether

pneumonia is present or not.

4.2. Objective:

To overcome the limitations of the existing system, we developed a computer-aided diagnosis system for automatic pneumonia detection from CT scan images. We employed deep transfer learning to handle the scarcity of available data. We compared seven convolutional neural network models as candidates for an ensemble: EfficientNetV2B1, InceptionV3, VGG16, DenseNet121, ResNet50V2, MobileNetV2, and a custom model.

Fig. 4.1 Architecture of pneumonia detection from CT scan

The proposed model takes the three CNN models with the highest accuracy and combines them using the bagging technique. Higher detection accuracy means fewer missed or delayed diagnoses, which can help reduce deaths from pneumonia.
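The implementation of the bagging step is not shown in this report. The sketch below illustrates the standard technique under that assumption: each of the three selected models is trained on its own bootstrap sample, and their 0/1 predictions are combined by majority vote. The training-set size, model names, and predictions are hypothetical.

```python
import random
from collections import Counter


def bootstrap_indices(n_samples, rng):
    """Draw n_samples training indices with replacement (one bootstrap sample)."""
    return [rng.randrange(n_samples) for _ in range(n_samples)]


def majority_vote(predictions):
    """Combine per-model 0/1 predictions for each image by majority vote."""
    return [Counter(votes).most_common(1)[0][0] for votes in zip(*predictions)]


rng = random.Random(123)
n_train = 1000  # hypothetical training-set size
# One bootstrap sample per base model; each model would be trained on its own sample
samples = {name: bootstrap_indices(n_train, rng)
           for name in ("model_1", "model_2", "model_3")}

# Hypothetical 0/1 test predictions from the three trained models
preds = [
    [1, 0, 1, 1],
    [1, 1, 0, 1],
    [0, 0, 1, 1],
]
final = majority_vote(preds)
print(final)  # [1, 0, 1, 1]
```

With three voters and two classes a tie cannot occur, which is one reason an odd number of base models is convenient for majority voting.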

The proposed approach was evaluated on a publicly available pneumonia CT scan dataset hosted on Kaggle. Public medical datasets are scarce because patients' medical data are generally not disclosed, for privacy reasons.


4.3. Models:

4.3.1 EfficientNetV2B1:

EfficientNetV2 is an extension of the EfficientNet architecture, designed to train faster and with better parameter efficiency than its predecessor.

It builds on EfficientNet's compound scaling method, which uniformly scales network width, depth, and resolution with a set of fixed scaling coefficients. It also adds Fused-MBConv blocks found through training-aware neural architecture search.
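For reference, the compound scaling rule introduced with the original EfficientNet ties network depth d, width w, and input resolution r to a single compound coefficient φ. The constants α, β, and γ are found by a small grid search on the baseline network:

```latex
d = \alpha^{\phi}, \qquad w = \beta^{\phi}, \qquad r = \gamma^{\phi},
\qquad \text{subject to } \alpha \cdot \beta^{2} \cdot \gamma^{2} \approx 2,
\quad \alpha \ge 1,\ \beta \ge 1,\ \gamma \ge 1
```

The FLOPS of a convolutional network grow roughly in proportion to d · w² · r². Under this constraint, each unit increase in φ therefore approximately doubles the computational cost.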

4.3.2. InceptionV3:

InceptionV3 is a deep convolutional neural network architecture developed by

Google.

It is known for its Inception modules, which consist of multiple parallel convolutional

branches with different filter sizes.

InceptionV3 utilizes deep convolutional neural networks with inception modules to

efficiently extract features from images for tasks such as image classification and

object detection.

4.3.3. VGG16:

VGG16 is a convolutional neural network architecture developed by the Visual

Geometry Group (VGG) at the University of Oxford.

It consists of 16 weight layers (13 convolutional and 3 fully connected) with a simple and uniform architecture: stacks of 3x3 convolutional layers separated by max-pooling layers, followed by the fully connected layers.
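The 16 weight layers, and the size of the network, can be checked by counting parameters. The sketch below assumes the standard VGG16 configuration: thirteen 3x3 convolutions in five blocks, followed by three fully connected layers for 224x224 ImageNet inputs. With include_top=False only the convolutional part is loaded.

```python
# Output channels of the 13 convolutional layers (all 3x3 kernels, five blocks)
VGG16_CONV = [64, 64, 128, 128, 256, 256, 256, 512, 512, 512, 512, 512, 512]
VGG16_FC = [4096, 4096, 1000]  # fully connected layers of the ImageNet classifier


def conv_params(c_in, c_out, k=3):
    """Weights plus biases of a k x k convolution."""
    return k * k * c_in * c_out + c_out


def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


conv_total = 0
c_in = 3  # RGB input
for c_out in VGG16_CONV:
    conv_total += conv_params(c_in, c_out)
    c_in = c_out

fc_total = 0
n_in = 7 * 7 * 512  # five 2x2 max-pools shrink 224x224 feature maps to 7x7
for n_out in VGG16_FC:
    fc_total += dense_params(n_in, n_out)
    n_in = n_out

print(len(VGG16_CONV) + len(VGG16_FC), "weight layers")  # 16 weight layers
print(f"conv: {conv_total:,}  total: {conv_total + fc_total:,}")
```

Almost 90% of the roughly 138 million parameters sit in the fully connected layers. Dropping them with include_top=False leaves about 14.7 million.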

4.3.4. DenseNet121:

DenseNet (Densely Connected Convolutional Networks) is a neural network architecture proposed by researchers from Cornell University, Tsinghua University, and Facebook AI Research.

DenseNet121 is a specific variant with 121 layers, characterized by dense

connectivity patterns between layers.

In DenseNet, each layer receives feature maps from all preceding layers as input,

promoting feature reuse and alleviating the vanishing gradient problem.
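The effect of dense connectivity on channel counts can be traced numerically. The sketch assumes the standard DenseNet-121 configuration: 64 initial feature maps, growth rate 32, dense blocks of 6, 12, 24 and 16 layers, and transition layers that halve the channel count.

```python
def densenet_channels(init_channels=64, growth_rate=32,
                      block_layers=(6, 12, 24, 16), compression=0.5):
    """Return the channel count at the end of each dense block.

    Inside a block, every layer receives all earlier feature maps and
    appends growth_rate new ones (dense connectivity). Between blocks,
    a transition layer compresses the channel count.
    """
    channels = init_channels
    history = []
    for b, n_layers in enumerate(block_layers):
        channels += n_layers * growth_rate  # each layer adds growth_rate maps
        history.append(channels)
        if b < len(block_layers) - 1:       # no transition after the last block
            channels = int(channels * compression)
    return history


print(densenet_channels())  # [256, 512, 1024, 1024]

# Where the "121" comes from: stem convolution, two convolutions
# (1x1 bottleneck + 3x3) per dense layer, three transition convolutions,
# and the final classifier layer
n_layers = 1 + 2 * sum((6, 12, 24, 16)) + 3 + 1
print(n_layers)  # 121
```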

4.3.5. ResNet50V2:


ResNet (Residual Neural Network) is a deep learning architecture introduced by

Microsoft Research.

ResNet50V2 is a variant with 50 layers, incorporating residual connections that

enable the training of very deep neural networks.

Residual connections facilitate the flow of gradients during training, mitigating the

degradation problem associated with training deep networks.
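The effect of the identity shortcut is visible in one step of backpropagation through a residual block y = x + F(x), where F is the learned residual mapping, I the identity, and L the training loss:

```latex
\frac{\partial \mathcal{L}}{\partial x}
  = \frac{\partial \mathcal{L}}{\partial y}\,\frac{\partial y}{\partial x}
  = \frac{\partial \mathcal{L}}{\partial y}\left(I + \frac{\partial F}{\partial x}\right)
```

The identity term passes the upstream gradient through unchanged even when ∂F/∂x is small. This is why stacking many residual blocks does not make the gradient vanish the way it can in a plain deep network.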

4.3.6. MobileNetV2:

MobileNetV2 is a convolutional neural network architecture optimized for mobile and embedded devices, designed to achieve high efficiency and accuracy in image classification tasks.

It is built from inverted residual blocks with linear bottlenecks and uses depthwise separable convolutions to reduce the computational cost while maintaining accuracy.
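The saving from depthwise separable convolutions can be quantified by counting weights. The sketch below compares a standard convolution with its depthwise separable replacement for a hypothetical layer (3x3 kernel, 32 input channels, 64 output channels); biases are ignored.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights of a k x k convolution mapping c_in to c_out channels (no biases)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """One k x k depthwise filter per input channel, then a 1x1 pointwise convolution."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


k, c_in, c_out = 3, 32, 64  # hypothetical layer sizes
std = standard_conv_params(k, c_in, c_out)
sep = depthwise_separable_params(k, c_in, c_out)
print(std, sep, round(sep / std, 3))  # 18432 2336 0.127
```

In general the ratio equals 1/c_out + 1/k². For 3x3 kernels, a depthwise separable layer therefore needs roughly 8 to 9 times fewer weights (and multiply-adds) than a standard convolution.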

4.3.7. Custom Model:

This custom model is a convolutional neural network (CNN) designed for binary image

classification. Here's a brief summary of its architecture:

Input Rescaling: The input images are rescaled so that the pixel values range from 0

to 1.

Convolutional Layers: The model consists of three convolutional layers, each

followed by a rectified linear unit (ReLU) activation function. These layers use a 3x3

kernel to extract features from the input images.

Max Pooling Layers: After each convolutional layer, a max-pooling layer is applied

to reduce the spatial dimensions of the feature maps and capture the most important

information.

Flatten Layer: A Flatten layer converts the 2D feature maps into a 1D vector that can be fed into the fully connected layers.

Fully Connected Layers: There are two dense (fully connected) layers in the model.

The first dense layer consists of 128 neurons with ReLU activation, allowing the

model to learn complex patterns from the flattened feature maps. The second dense

layer has a single neuron with a sigmoid activation function, which produces the final

binary classification output.


Compilation: The model is compiled using the Adam optimizer and binary cross-

entropy loss function. It is optimized to classify binary labels, and accuracy is used as

the evaluation metric.
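The layer sizes above determine the feature-map shapes and the parameter count. The sketch below assumes the 180x180x1 input described in Section 7.1.2, 32 filters per convolution as in the custom model sample code, and Keras defaults for Conv2D ('valid' padding, stride 1) and MaxPooling2D (2x2 pool, stride 2).

```python
def conv_out(size, kernel=3):
    """Spatial size after a 'valid' (unpadded) convolution with stride 1."""
    return size - kernel + 1


def pool_out(size, pool=2):
    """Spatial size after max pooling with stride equal to the pool size (floored)."""
    return size // pool


size, channels, filters = 180, 1, 32  # 180x180 grayscale input, 32 filters per conv
params = 0
for _ in range(3):  # three Conv2D + MaxPooling2D stages
    params += 3 * 3 * channels * filters + filters  # 3x3 kernel weights + biases
    size = pool_out(conv_out(size))
    channels = filters

flat = size * size * channels  # length of the Flatten output
params += flat * 128 + 128     # Dense(128, relu)
params += 128 * 1 + 1          # Dense(1, sigmoid)
print(size, flat, params)  # 20 12800 1657473
```

Nearly all of the roughly 1.66 million parameters belong to the first dense layer, which reads the 12,800-value flattened vector. If images are loaded with three channels instead of one, only the first convolution changes, from 320 to 896 parameters.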


Fig. 4.2. Architecture of custom model

CHAPTER-5

REQUIREMENT ANALYSIS

5.1. FUNCTIONAL REQUIREMENTS:

Input Data Compatibility: The system should be capable of accepting CT scan

images in standard formats such as DICOM (Digital Imaging and Communications in

Medicine) or other common image formats.

Preprocessing: Preprocessing steps such as resizing, normalization, and data

augmentation should be applied to the input CT scan images to prepare them for

feature extraction.

Feature Extraction: The system should employ convolutional neural network (CNN)

models for feature extraction from CT scan images. Transfer learning techniques

should be used to leverage pre-trained models for better performance.

Classification: Extracted features should be fed into dense neural networks for

classification, distinguishing between pneumonia-affected and unaffected CT scans.

Model Evaluation: The system should evaluate the performance of trained CNN

models using appropriate metrics such as accuracy, precision, recall, and F1 score to

assess their effectiveness in pneumonia detection.

Real-time Processing: The system should be capable of processing CT scan images

in real-time to provide timely diagnosis and treatment recommendations.

Scalability: The system should be scalable to accommodate a large volume of CT

scan images and support concurrent processing to meet the demands of healthcare

facilities.


5.2. NON-FUNCTIONAL REQUIREMENTS:

Accuracy: The system should achieve a high level of accuracy in pneumonia

detection to minimize false positives and false negatives, ensuring reliable diagnosis.

Performance: The system should be efficient in terms of computational resources

and processing time, providing timely results to healthcare professionals.

Security: The system should adhere to strict security standards to protect patient data

and ensure confidentiality, integrity, and availability.

Usability: The system should have an intuitive user interface that is easy to navigate,

facilitating seamless interaction for healthcare professionals.

Robustness: The system should be resilient to variations in input data quality, noise,

and artifacts commonly encountered in medical imaging.

Compliance: The system should comply with relevant regulations and standards for

medical devices and software, ensuring legal and ethical compliance in healthcare

settings.

Interoperability: The system should be compatible with existing healthcare

information systems and interoperable with other medical imaging tools and devices.

Maintainability: The system should be designed with modularity and extensibility in

mind, allowing for easy maintenance, updates, and integration of new features or

improvements.


CHAPTER-6

DESIGN AND METHODOLOGY

6.1. UML DIAGRAMS:

Unified Modeling Language (UML):

UML diagrams are visual representations used to design and model software systems.

They are standardized diagrams used in software engineering to communicate design

decisions and system architecture.

UML diagrams can help developers, stakeholders, and team members understand the

structure and behaviour of a system before it is implemented.

6.1.1 Use case diagram:

Use case diagrams illustrate the interactions between users (actors) and a system as they accomplish specific tasks or goals. They help to identify and define the functional requirements of a system from the user's perspective.


Fig. 6.1. Use case diagram

6.1.2. Sequence diagram:

Sequence diagrams visualize the interactions between objects in a particular scenario or use

case. They show the sequence of messages exchanged between objects over time, helping to

understand the dynamic behaviour of the system.


Fig. 6.2. Sequence diagram

6.1.3. Activity diagram:

Activity diagrams represent the flow of control within a system, showing the sequence of

activities and decision points. They are useful for modeling business processes, workflow,

and the logic of complex operations.


Fig. 6.3. Activity diagram

6.1.4. Class diagram:

Class diagrams depict the static structure of a system by showing classes, their attributes,

methods, and relationships between classes. They provide a blueprint for the implementation

of the system's objects and their interactions.


Fig. 6.4. Class diagram

6.2. Methodology:

Data Collection: Gather a dataset of CT scans containing both pneumonia-positive

and pneumonia-negative cases.


Data Preprocessing: Preprocess the CT scan images, including resizing,

normalization, and augmentation techniques to improve model generalization.

Transfer Learning: Utilize pre-trained CNN models such as MobileNetV2 or

ResNet50 as feature extractors. Fine-tune these models on the pneumonia dataset to

adapt them to the specific task.

Model Architecture: Design the CNN architecture, comprising convolutional layers

for feature extraction and dense layers for classification. Incorporate techniques such

as dropout and batch normalization to prevent overfitting.

Training: Train the CNN models on the preprocessed CT scan dataset using

supervised learning. Use techniques like cross-validation and hyperparameter tuning

to optimize model performance.

Evaluation: Evaluate the trained models using metrics such as accuracy, precision,

recall, and F1-score on a separate test set. Perform error analysis to identify areas for

improvement.

Deployment: Deploy the trained models in a clinical setting for pneumonia detection

from CT scans. Ensure seamless integration with existing healthcare systems and

compliance with regulatory standards.

Monitoring and Maintenance: Continuously monitor the performance of deployed

models and update them as needed based on new data and advancements in deep

learning techniques.
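The cross-validation mentioned in the Training step can be sketched as follows. The sketch assumes k = 5, matching the five-fold scheme used by the existing system, and a hypothetical dataset of 10 samples. Each model would be trained on train_idx and scored on val_idx, and the k scores averaged.

```python
import random


def k_fold_indices(n_samples, k=5, seed=123):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    # Spread any remainder over the first folds so fold sizes differ by at most 1
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = idx[start:start + size]
        train_idx = idx[:start] + idx[start + size:]
        yield train_idx, val_idx
        start += size


folds = list(k_fold_indices(n_samples=10, k=5))
for train_idx, val_idx in folds:
    print(len(train_idx), len(val_idx))  # 8 2 on every fold
```

Every sample lands in exactly one validation fold, so each image is used for validation once and for training k - 1 times.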

CHAPTER-7

IMPLEMENTATION

7.1. DATA PREPROCESSING:


Fig. 7.1. Data preprocessing

Sample Code:

# Importing libraries
import tensorflow as tf
import cv2
import os
import numpy as np
import matplotlib.pyplot as plt
import pandas as pd
import random

7.1.1. Data Collection and Cleaning:

The CT scan dataset was collected from Kaggle.

src: https://www.kaggle.com/datasets/anaselmasry/covid19normalpneumonia-ct-images?select=COVID2_CT

The dataset has around 8,000 images belonging to 3 classes. We then cleaned the data by removing images whose labels are not needed for this task.

def load_images_from_folder(folder):
    """Read every image under folder/<class_name>/ and label it with its folder name."""
    images = []
    labels = []
    for class_name in os.listdir(folder):
        count = 0
        for filename in os.listdir(os.path.join(folder, class_name)):
            img = cv2.imread(os.path.join(folder, class_name, filename))
            if img is not None:  # skip files OpenCV cannot decode
                images.append(img)
                labels.append(class_name)
                count += 1
        print("no of images in", class_name, "is", count)
    return images, labels

imgs, labels = load_images_from_folder('G:/Engg/Project/CTDataset')

# One row per image: the pixel array and its class label
data = {'images': imgs, 'labels': labels}
dataF = pd.DataFrame(data)

7.1.2. Gray Scale Conversion and Image resizing:

The 3-channel color images are then converted to grayscale using cv2. The original images are 512x512; they are resized to 160x160 for the transfer learning models and to 180x180 for the custom model.

Fig 7.2. Gray scale conversion and image resizing: (512, 512, 3) to (180, 180, 1) for the custom model and (160, 160, 1) for the transfer learning models

# cv2 loads color images in BGR order, so convert from BGR to a single gray channel
dataF['images'] = dataF['images'].apply(lambda x: cv2.cvtColor(x, cv2.COLOR_BGR2GRAY))
# Resize to a 256x256 working size (the shape the balancing code in 7.1.3 expects)
# and restore an explicit channel axis
dataF['images'] = dataF['images'].apply(lambda x: cv2.resize(x, (256, 256)))
dataF['images'] = dataF['images'].apply(lambda x: x.reshape((256, 256, 1)))

7.1.3. Balancing:


As the dataset is imbalanced, we balanced it by applying data augmentation to the minority class (Normal CTs) and random undersampling to the majority class (Pneumonia CTs).

Fig. 7.3.1 Class distribution diagram Before Oversampling and Down sampling

Fig. 7.3.2 Class distribution diagram After Oversampling and Down sampling

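from tensorflow.keras.preprocessing.image import ImageDataGenerator

# Random horizontal flips are the only augmentation applied
datagen = ImageDataGenerator(horizontal_flip=True)
# Example: an iterator that yields augmented versions of the first image
aug_iter = datagen.flow(dataF['images'][0].reshape(1, 256, 256, 1), batch_size=1)

def DataAug(data, min_class, datagen, size, target_size):
    """Append augmented min_class images until the class has target_size rows.

    size is the current number of min_class rows; data is modified in place.
    """
    data_min = data[data['labels'] == min_class]
    i = 0
    while size < target_size:
        # Select by position (.iloc) and cycle if more copies than originals are needed
        src = data_min['images'].iloc[i % len(data_min)]
        aug_iter = datagen.flow(src.reshape(1, 256, 256, 1), batch_size=1)
        image = next(aug_iter)[0].astype('uint8')
        # Use a fresh index label so no existing row is overwritten
        data.loc[data.index.max() + 1] = [image, min_class]
        size += 1
        i += 1

def DownSampling(data, maj_class, size, target_size):
    """Randomly drop maj_class rows (in place) until only target_size remain.

    size is the current number of maj_class rows.
    """
    data_maj_indexes = list(data[data['labels'] == maj_class].index)
    while target_size < size:
        t = random.randrange(len(data_maj_indexes))  # pick a remaining row at random
        data.drop(data_maj_indexes.pop(t), inplace=True)
        size -= 1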

7.1.4. Label Encoding and Normalization:

The images are labelled Normal_CT and Pneumonia_CT.

These labels are converted to 0 and 1 using scikit-learn's LabelEncoder.

Normalization rescales pixel values from the range 0 to 255 to the range 0 to 1, which keeps input magnitudes small and helps training converge.

from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

dataF['labels'] = le.fit_transform(dataF['labels'])
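Which class becomes 1 matters when reading precision and recall, since the class encoded as 1 is treated as positive. scikit-learn's LabelEncoder assigns integers in sorted order of the class names. The dependency-free sketch below mimics that behavior with example labels, and shows the pixel normalization as plain arithmetic.

```python
def encode_labels(labels):
    """Map class names to integers in sorted order, as LabelEncoder does."""
    classes = sorted(set(labels))
    mapping = {name: i for i, name in enumerate(classes)}
    return [mapping[name] for name in labels], classes


encoded, classes = encode_labels(["Pneumonia_CT", "Normal_CT", "Pneumonia_CT"])
print(classes, encoded)  # ['Normal_CT', 'Pneumonia_CT'] [1, 0, 1]

# Normalization: scale 8-bit pixel values from 0..255 to 0..1
pixels = [0, 128, 255]
scaled = [p / 255 for p in pixels]
print(scaled)
```

Because "Normal_CT" sorts before "Pneumonia_CT", pneumonia is encoded as 1, which makes it the positive class for the sigmoid output.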

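7.1.5. Splitting of Dataset:

The dataset is split in a 4:1 ratio: 80% of the data for training and 20% for validation.

The test set is stored in a separate directory and is roughly 10% of the size of the training set.

# Placeholder values (assumptions, not from the original code)
data_dir = 'path/to/train_images'  # hypothetical folder with one sub-folder per class
img_height, img_width = 180, 180   # 180x180 for the custom model, 160x160 for transfer learning
batch_size = 32                    # assumed; 32 is the Keras default

# Using the same seed in both calls keeps the training and validation subsets disjoint
train_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, validation_split=0.2, subset="training", seed=123,
    image_size=(img_height, img_width), batch_size=batch_size)

val_ds = tf.keras.utils.image_dataset_from_directory(
    data_dir, validation_split=0.2, subset="validation", seed=123,
    image_size=(img_height, img_width), batch_size=batch_size)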

7.2. Training the models:

Transfer learning with Keras leverages pre-trained models to address new tasks efficiently. Keras makes it easy to import popular architectures such as VGG, ResNet, or Inception that were trained on large datasets like ImageNet. By removing the top layers and adding new ones tailored to the task at hand, such as image classification or object detection, one can retrain the model on a smaller dataset. This approach significantly reduces training time and resource requirements while often achieving strong performance. These advantages make Keras transfer learning models a go-to choice for many machine learning practitioners.

From all the Keras transfer learning models, we selected the six with the best accuracy and a reasonable number of parameters.


Table 7.1. Keras transfer learning models

Out of the 27 models in Table 7.1, the following were selected based on performance and number of parameters:

1. ResNet50V2
2. EfficientNetV2B1
3. VGG16
4. DenseNet121
5. MobileNetV2
6. InceptionV3

Custom Model Sample code:

import tensorflow as tf

model = tf.keras.Sequential([
    tf.keras.layers.Rescaling(1./255),                # scale pixels to [0, 1]
    tf.keras.layers.Conv2D(32, 3, activation='relu'),
    tf.keras.layers.MaxPooling2D(),
    tf.keras.layers.Conv2D(32, 3, activation='relu'),
    tf.keras.layers.MaxPooling2D(),
    tf.keras.layers.Conv2D(32, 3, activation='relu'),
    tf.keras.layers.MaxPooling2D(),
    tf.keras.layers.Flatten(),
    tf.keras.layers.Dense(128, activation='relu'),
    tf.keras.layers.Dense(1, activation='sigmoid')    # probability of pneumonia
])
model.compile(
    optimizer='adam',
    # The sigmoid output is already a probability, so from_logits must be False
    loss=tf.keras.losses.BinaryCrossentropy(from_logits=False),
    metrics=['accuracy'])
history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=20
)
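The sketch below traces the feature-map size and parameter count through the custom model. It assumes 160x160 RGB inputs, the size used for the transfer-learning models; the custom model's `img_height` and `img_width` are not stated in this section. Each 3x3 convolution with Keras's default `'valid'` padding trims two pixels. Each `MaxPooling2D()` with its default 2x2 window halves the size, rounding down. The function name `custom_cnn_summary` is hypothetical.

```python
def custom_cnn_summary(size=160, channels=3, filters=32, dense_units=128):
    """Trace output size and parameter count of the custom model:
    3 x [Conv2D(filters, 3, valid) -> MaxPooling2D(2)] -> Flatten
    -> Dense(dense_units) -> Dense(1)."""
    total = 0
    in_ch = channels
    for _ in range(3):
        total += 3 * 3 * in_ch * filters + filters   # kernel weights + biases
        size = size - 2                              # 3x3 'valid' convolution
        size = size // 2                             # 2x2 max pooling, stride 2
        in_ch = filters
    flat = size * size * in_ch                       # Flatten
    total += flat * dense_units + dense_units        # Dense(128)
    total += dense_units * 1 + 1                     # Dense(1) output
    return size, flat, total


print(custom_cnn_summary())   # (18, 10368, 1346753)
```

Under this assumption, the Dense(128) layer holds 1,327,232 of the 1,346,753 parameters (about 98.5%), so the Flatten-to-Dense transition is where most of the model's capacity lies.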

Transfer Learning:

import tensorflow as tf

IMG_SHAPE = (160, 160, 3)

# Each backbone is paired with its own preprocessing function:
# ResNet50V2 expects pixels scaled to [-1, 1], while EfficientNetV2B1
# rescales internally and expects raw [0, 255] pixels.
BACKBONES = {
    'ResNet50V2': (tf.keras.applications.ResNet50V2,
                   tf.keras.applications.resnet_v2.preprocess_input),
    'EfficientNetV2B1': (tf.keras.applications.EfficientNetV2B1,
                         tf.keras.applications.efficientnet_v2.preprocess_input),
}

backbone, preprocess_input = BACKBONES['EfficientNetV2B1']   # or 'ResNet50V2'
base_model = backbone(input_shape=IMG_SHAPE,
                      include_top=False,
                      weights='imagenet')
base_model.trainable = False   # freeze the ImageNet weights

inputs = tf.keras.Input(shape=IMG_SHAPE)
x = preprocess_input(inputs)
x = base_model(x, training=False)                 # keep backbone BatchNorm in inference mode
x = tf.keras.layers.GlobalAveragePooling2D()(x)   # average pooling
x = tf.keras.layers.BatchNormalization()(x)       # introduce batch norm
x = tf.keras.layers.Dropout(0.2)(x)               # regularize with dropout
outputs = tf.keras.layers.Dense(1, activation='sigmoid')(x)
model = tf.keras.Model(inputs, outputs)

model.compile(
    optimizer='adam',
    loss=tf.keras.losses.BinaryCrossentropy(from_logits=False),
    metrics=['accuracy'])

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=20
)
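The `from_logits` argument tells `BinaryCrossentropy` whether the model's output is a raw score (logit) or already a probability. Because this head ends in a sigmoid, `from_logits=False` is the matching choice. With `from_logits=True`, the loss would apply a second sigmoid internally. The plain-Python sketch below shows that the two formulations agree when used consistently and diverge when the sigmoid is applied twice. It mirrors the math, not Keras's numerically stabilized implementation, and the helper names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def bce_from_probability(y, p):
    """Binary cross-entropy when the model outputs a probability p."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def bce_from_logit(y, z):
    """Binary cross-entropy when the model outputs a raw logit z;
    the sigmoid is applied inside the loss."""
    return bce_from_probability(y, sigmoid(z))


z, y = 3.0, 1                       # a confident, correct prediction
p = sigmoid(z)                      # what a sigmoid output layer emits
print(bce_from_probability(y, p))   # consistent use: about 0.049
print(bce_from_logit(y, p))         # sigmoid applied twice: about 0.326
```

The mismatched version reports a much larger loss for the same correct prediction and squeezes the gradients, which is why the flag must agree with the output activation.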


CHAPTER-8

TESTING

8.1. Evaluation on validation sets:

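While training the models, 20% of the training data was held out as a validation set to check each model's performance on unseen data.

The training accuracies are slightly lower than the validation accuracies. This is most likely because dropout and the other regularization techniques used to prevent overfitting are active only during training and are switched off when the validation set is evaluated. In addition, Keras reports training accuracy as an average over all batches of an epoch, computed while the weights are still improving. Validation accuracy, by contrast, is measured once at the end of the epoch.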
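The dropout effect comes from the layer behaving differently in the two phases. Keras uses inverted dropout. During training, each activation is zeroed with probability `rate` and the survivors are scaled by `1 / (1 - rate)`. At inference time, including validation, the layer passes its input through unchanged. The plain-Python sketch below mimics that behaviour with a hypothetical `dropout` helper. It is an illustration, not the Keras source. The rate of 0.2 matches the `Dropout(0.2)` layer in the transfer-learning head.

```python
import random


def dropout(values, rate, training, rng):
    """Inverted dropout: zero each value with probability `rate` during
    training and rescale the survivors; identity at inference time."""
    if not training:
        return list(values)
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


rng = random.Random(0)
activations = [1.0] * 10_000
train_out = dropout(activations, rate=0.2, training=True, rng=rng)
val_out = dropout(activations, rate=0.2, training=False, rng=rng)

print(sum(v == 0.0 for v in train_out) / len(train_out))   # roughly 0.2 of units dropped
print(sum(train_out) / len(train_out))                     # mean stays close to 1.0
print(val_out == activations)                              # True: nothing dropped at validation
```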

Ranked by validation performance, the models are:

1. Custom model

2. EfficientNetV2B1

3. ResNet50V2

4. DenseNet121

5. VGG16

6. MobileNetV2

7. InceptionV3

Of the seven models, the custom model, EfficientNetV2B1 and ResNet50V2 achieved the highest accuracy.

Table 8.1. Training and validation accuracy of various CNN methods


Sample Code:

import matplotlib.pyplot as plt

# Per-epoch metrics recorded by model.fit()
acc = history.history['accuracy']
val_acc = history.history['val_accuracy']
loss = history.history['loss']
val_loss = history.history['val_loss']

plt.figure(figsize=(8, 8))
plt.subplot(2, 1, 1)             # top panel: accuracy curves
plt.plot(acc, label='Training Accuracy')
plt.plot(val_acc, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.ylabel('Accuracy')
plt.ylim([min(plt.ylim()), 1])
plt.title('Training and Validation Accuracy')

plt.subplot(2, 1, 2)             # bottom panel: loss curves
plt.plot(loss, label='Training Loss')
plt.plot(val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.ylabel('Cross Entropy')
plt.ylim([0, 1.0])
plt.title('Training and Validation Loss')
plt.xlabel('epoch')
plt.show()


import os

import cv2
import numpy as np
from sklearn.metrics import confusion_matrix


def preprocess_image(image_path):
    img = cv2.imread(image_path)                 # OpenCV loads images in BGR order
    img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)   # match the RGB images used in training
    img = cv2.resize(img, (160, 160))
    img = np.expand_dims(img.astype('float32'), axis=0)   # add a batch dimension
    return img


# Function to make predictions on a single image
def predict_single_image(image_path):
    img = preprocess_image(image_path)
    prediction = model2.predict(img)   # model2: the trained model being evaluated
    return float(prediction[0][0])     # sigmoid probability of pneumonia


def evaluate_folder(folder_path):
    predictions = []
    image_paths = [os.path.join(folder_path, f) for f in os.listdir(folder_path)
                   if os.path.isfile(os.path.join(folder_path, f))]
    for image_path in image_paths:
        prediction = predict_single_image(image_path)
        predictions.append(prediction)
    return predictions


test_folder_normal = 'G:/Engg/Project/Test/Normal'
test_folder_pneumonic = 'G:/Engg/Project/Test/Pneumonic'
predictions_normal = evaluate_folder(test_folder_normal)
predictions_pneumonic = evaluate_folder(test_folder_pneumonic)

# Ground truth: 0 = normal, 1 = pneumonia
ground_truth_normal = [0] * len(predictions_normal)
ground_truth_pneumonic = [1] * len(predictions_pneumonic)
actual_labels = ground_truth_normal + ground_truth_pneumonic

predicted_labels = []
predicted_labels.extend(predictions_normal)
predicted_labels.extend(predictions_pneumonic)
# Threshold the predicted probabilities at 0.5
predicted_labels = [1 if pred > 0.5 else 0 for pred in predicted_labels]

conf_matrix = confusion_matrix(actual_labels, predicted_labels)

8.1.1. ResNet50V2:

ResNet50V2 reached 96% accuracy on the training set and 98% on the validation set.


Fig.8.1. Accuracy and loss of training and validation of ResNet50V2

8.1.2. EfficientNetV2B1:

EfficientNetV2B1 reached 97.41% accuracy on the training set and 99% on the validation set.


Fig.8.2. Accuracy and loss of training and validation of EfficientNetV2B1

8.1.3. Custom model:

The custom model performed best, with 99.3% training accuracy and 99.4% validation accuracy.


Fig.8.3. Accuracy and loss of training and validation of Custom model

8.2. Model evaluation:

8.2.1 Custom model:

Accuracy: 98.78%
Precision: 99.70%
Recall: 98.25%

Fig.8.4. Confusion matrix of custom model

8.2.2. ResNet50V2:

Accuracy: 96.88%
Precision: 96.03%
Recall: 98.83%

Fig.8.5. Confusion matrix of ResNet50V2

8.2.3. EfficientNetV2B1:

Accuracy: 98.27%
Precision: 97.17%
Recall: 100.0%


Fig.8.6. Confusion matrix of EfficientNetV2B1
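The accuracy, precision and recall above follow directly from the confusion matrix. With scikit-learn's `confusion_matrix` and labels 0 (normal) and 1 (pneumonia), rows are true classes and columns are predicted classes, so the matrix is `[[TN, FP], [FN, TP]]`. The sketch below computes the three metrics from such a matrix in plain Python. The function name is hypothetical, and the counts in the example are made up; they are not the project's results.

```python
def metrics_from_confusion(cm):
    """Compute accuracy, precision and recall (as percentages) from a
    2x2 confusion matrix laid out as [[TN, FP], [FN, TP]]."""
    (tn, fp), (fn, tp) = cm
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp)   # share of predicted-pneumonia scans that are pneumonia
    recall = tp / (tp + fn)      # share of pneumonia scans that were detected
    return {name: round(100 * value, 2)
            for name, value in [('accuracy', accuracy),
                                ('precision', precision),
                                ('recall', recall)]}


example_cm = [[90, 10], [5, 95]]   # hypothetical counts
print(metrics_from_confusion(example_cm))
# {'accuracy': 92.5, 'precision': 90.48, 'recall': 95.0}
```

In the evaluation code, `confusion_matrix(actual_labels, predicted_labels).ravel()` returns the same four counts in the order TN, FP, FN, TP.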

ROC curve, custom model:
ROC curve, ResNet50V2 model:
ROC curve, EfficientNetV2B1 model:

Fig.8.7. ROC curves of the EfficientNetV2B1, ResNet50V2 and custom models
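A ROC curve is traced by sweeping the decision threshold (fixed at 0.5 in the evaluation code) over the model's predicted probabilities and plotting the true-positive rate against the false-positive rate. The area under the curve (AUC) summarizes the curve in one number. The plain-Python sketch below builds the curve points and integrates them with the trapezoidal rule. The function names are hypothetical; in practice `sklearn.metrics.roc_curve` and `sklearn.metrics.roc_auc_score` provide the same quantities.

```python
def roc_curve_points(labels, scores):
    """Return (fpr, tpr) lists for binary labels (1 = pneumonia) and
    predicted probabilities, sweeping the threshold from high to low.
    Assumes both classes are present."""
    pos = sum(labels)
    neg = len(labels) - pos
    # Sort by descending score; each distinct score acts as a threshold
    pairs = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    for i, (score, label) in enumerate(pairs):
        if label == 1:
            tp += 1
        else:
            fp += 1
        # Emit a point only after the last sample with this score (handles ties)
        if i == len(pairs) - 1 or pairs[i + 1][0] != score:
            fpr.append(fp / neg)
            tpr.append(tp / pos)
    return fpr, tpr


def auc_trapezoid(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((fpr[i] - fpr[i - 1]) * (tpr[i] + tpr[i - 1]) / 2
               for i in range(1, len(fpr)))


labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
fpr, tpr = roc_curve_points(labels, scores)
print(auc_trapezoid(fpr, tpr))   # 0.75
```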

CHAPTER-9

RESULTS AND DISCUSSION

The user is given an interface for uploading a CT scan of the lungs and viewing the result.

The interface is built with Streamlit, a popular Python library for building web apps for machine learning and deep learning applications. After the user uploads a scan, the image is passed to three models: ResNet50V2, EfficientNetV2B1 and the custom model. Their outputs are averaged to give a more robust prediction of the disease.


Fig. 9.1.1. Output for a pneumonia scan
Fig. 9.1.2. Output for a normal scan
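The averaging step can be sketched as follows. Each model is assumed to return the pneumonia probability from its sigmoid output for the same uploaded scan. The three probabilities are averaged, and the mean is compared with the 0.5 threshold used in the evaluation code. The functions `ensemble_predict` and `fixed_probability_model` and the example probabilities are hypothetical stand-ins for the trained ResNet50V2, EfficientNetV2B1 and custom models, and the Streamlit upload widget is omitted.

```python
from statistics import mean


def ensemble_predict(models, image, threshold=0.5):
    """Average the pneumonia probabilities returned by several models and
    return (mean_probability, label)."""
    probabilities = [float(model(image)) for model in models]
    avg = mean(probabilities)
    label = 'Pneumonia' if avg > threshold else 'Normal'
    return avg, label


def fixed_probability_model(p):
    """Hypothetical stand-in for a trained model: ignores the image and
    always returns probability p."""
    return lambda image: p


# Stand-ins for ResNet50V2, EfficientNetV2B1 and the custom model
models = [fixed_probability_model(p) for p in (0.75, 0.875, 0.25)]
print(ensemble_predict(models, image=None))   # (0.625, 'Pneumonia')
```

In this example the custom model alone would classify the scan as normal, but the averaged probability exceeds 0.5. With real Keras models, the scalar would first have to be extracted from the batched output, for example `float(model.predict(image)[0][0])`.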

CHAPTER-10

CONCLUSION

Ensembling models, in particular a custom model combined with established architectures such as ResNet50V2 and EfficientNetV2B1, offers a powerful strategy for pneumonia detection from CT scans.

Firstly, the custom model can be tailored specifically to the dataset at hand. Because its architecture is designed for, and trained only on, this dataset, it can capture subtle features that are particularly relevant to pneumonia detection from CT scans. This customization allows the model to adapt closely to the characteristics of the images and to the specific patterns indicative of pneumonia.

On the other hand, pre-trained models such as ResNet50V2 and EfficientNetV2B1 bring the knowledge learned from large datasets like ImageNet. Trained on diverse visual recognition tasks, they have learned hierarchical feature representations that are useful across a wide range of image classification tasks. They therefore already extract meaningful general-purpose features, such as edges, textures and shapes, which can be transferred to pneumonia detection.

Ensembling these models combines the strengths of both approaches. The custom model

provides domain-specific insights and fine-tuned features, while the pre-trained models offer

a solid foundation of generalizable features. By aggregating the predictions of these models,

either through averaging their outputs or more sophisticated techniques like stacking, the

ensemble can effectively smooth out individual model biases and uncertainties. This results

in a more robust and reliable prediction, less susceptible to errors or noise in any single

model's predictions.
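The smoothing effect can be quantified under a simplifying assumption introduced here, not measured in this project. Suppose each of the $n$ models' predicted probabilities $\hat{p}_i$ has error variance $\sigma^2$ and every pair of models has error correlation $\rho$. Then the variance of the averaged prediction is

```latex
\operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n}\hat{p}_i\right)
  = \frac{1}{n^2}\left(n\sigma^2 + n(n-1)\rho\sigma^2\right)
  = \rho\sigma^2 + \frac{1-\rho}{n}\,\sigma^2
```

With the three models used here ($n = 3$), uncorrelated errors ($\rho = 0$) would cut the variance to one third, while perfectly correlated errors ($\rho = 1$) would leave it unchanged. This is why pairing a custom network with architecturally different pretrained backbones, whose mistakes are less likely to coincide, is expected to help. Averaging reduces variance; an error shared by all three models is not removed.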


Furthermore, ensembling reduces the risk of overfitting by lowering the reliance on any single model's predictions. The ensemble combines multiple viewpoints, leading to more balanced and accurate predictions across the different cases and variations within the dataset. Higher diagnostic accuracy can support earlier and more reliable treatment decisions, which may in turn help reduce deaths from pneumonia.

CHAPTER-11

REFERENCES

[1] Daniel Joseph Alapat, Malavika Venu Menon, Sharmila Ashok, "Detection of Pneumonia in Chest X-ray Images Using Neural Networks", Vellore Institute of Technology, Tamil Nadu, India, 2022.

[2] Rohit Kundu, Ritacheta Das, Zong Woo Geem, Gi-Tae Han, Ram Sarkar, "Pneumonia detection in chest X-ray images using an ensemble of deep learning models", Jadavpur University, Kolkata, India and Gachon University, Seongnam, South Korea, 2021.

[3] Shagun Sharma, Kalpna Guleria, "A Deep Learning based model for the Detection of Pneumonia from Chest X-Ray Images using VGG-16 and Neural Networks", Chitkara University, Rajpura, 140401, Punjab, India, 2023.

SIR C R REDDY COLLEGE OF ENGINEERING 40

You might also like

- BaddiDocument43 pagesBaddi01copy100% (3)

- Chest X Ray Detection Project ReportDocument45 pagesChest X Ray Detection Project ReportNikhil Sharma100% (1)

- Pneumonia Lung Opacity Detection and Segmentation in Chest X-Rays by Using Transfer Learning of The Mask R-CNNDocument9 pagesPneumonia Lung Opacity Detection and Segmentation in Chest X-Rays by Using Transfer Learning of The Mask R-CNNWeb ResearchNo ratings yet

- A Survey Paper On Pneumonia Detection in Chest X-Ray Images Using An Ensemble of Deep LearningDocument9 pagesA Survey Paper On Pneumonia Detection in Chest X-Ray Images Using An Ensemble of Deep LearningIJRASETPublicationsNo ratings yet

- Decoding Pneumonia: Leveraging CNNS For Accurate Chest X-Ray ClassificationDocument7 pagesDecoding Pneumonia: Leveraging CNNS For Accurate Chest X-Ray ClassificationInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Covid 19Document18 pagesCovid 19AISHWARYA PANDITNo ratings yet

- Diagnosis of Pneumonia From Chest X-Ray Images Using Deep LearningDocument5 pagesDiagnosis of Pneumonia From Chest X-Ray Images Using Deep LearningShoumik MuhtasimNo ratings yet

- 2004 06578 PDFDocument19 pages2004 06578 PDFSumaNo ratings yet

- ChapterDocument22 pagesChapterAparna MittalNo ratings yet

- 2023 An Improved Faster R-CNN Algorithm For Assisted Detection of Lung NodulesDocument9 pages2023 An Improved Faster R-CNN Algorithm For Assisted Detection of Lung NodulesgraythomasinNo ratings yet

- Automatic Detection of Pneumonia On Compressed Sensing Images Using Deep LearningDocument4 pagesAutomatic Detection of Pneumonia On Compressed Sensing Images Using Deep LearningRahul ShettyNo ratings yet

- Development of Hybrid Convolutional Neural Network and Autoregressive Integrated Moving Average On Computed Tomography Image ClassificationDocument9 pagesDevelopment of Hybrid Convolutional Neural Network and Autoregressive Integrated Moving Average On Computed Tomography Image ClassificationIAES IJAINo ratings yet

- Paper2 15pagesDocument15 pagesPaper2 15pagesCharlène Béatrice Bridge NduwimanaNo ratings yet

- ICMR - Reproducible AI in Medicine and HealthDocument9 pagesICMR - Reproducible AI in Medicine and Healthvignesh16vlsiNo ratings yet

- Ies50839 2020 9231540Document5 pagesIes50839 2020 9231540AhdiatShinigamiNo ratings yet

- Detecting Tuberculosis in Chest X-Ray Images UsingDocument5 pagesDetecting Tuberculosis in Chest X-Ray Images UsingShoumik MuhtasimNo ratings yet

- Anapub Paper TemplateDocument10 pagesAnapub Paper Templatebdhiyanu87No ratings yet

- Jurnal CNN PneumoniaDocument5 pagesJurnal CNN PneumoniadaffaNo ratings yet

- Detecting Pneumonia Using Convolutions and Dynamic Capsule Routing For Chest X-Ray ImagesDocument30 pagesDetecting Pneumonia Using Convolutions and Dynamic Capsule Routing For Chest X-Ray ImagesShoumik MuhtasimNo ratings yet

- 项目论文2:美国有线电视新闻网医学影像诊断新冠肺炎感染综述Document8 pages项目论文2:美国有线电视新闻网医学影像诊断新冠肺炎感染综述lujunNo ratings yet

- Prediction of Pneumonia Using CNNDocument9 pagesPrediction of Pneumonia Using CNNIJRASETPublicationsNo ratings yet

- Measurement: Amit Kumar Jaiswal, Prayag Tiwari, Sachin Kumar, Deepak Gupta, Ashish Khanna, Joel J.P.C. RodriguesDocument8 pagesMeasurement: Amit Kumar Jaiswal, Prayag Tiwari, Sachin Kumar, Deepak Gupta, Ashish Khanna, Joel J.P.C. RodriguesDiego Alejandro Betancourt PradaNo ratings yet

- Deep Convolutional Neural Networks For Lung Nodule Detection: Improvement in Small Nodule IdentificationDocument9 pagesDeep Convolutional Neural Networks For Lung Nodule Detection: Improvement in Small Nodule Identificationdreadrebirth2342No ratings yet

- Research Paper On Lung DetecionDocument5 pagesResearch Paper On Lung DetecionHirdesh KumarNo ratings yet

- Medical Image Classification Using CNN ReportDocument19 pagesMedical Image Classification Using CNN ReportSameer Thadimarri AP20110010028No ratings yet

- 1 s2.0 S0957417422016372 MainDocument14 pages1 s2.0 S0957417422016372 MainMd NahiduzzamanNo ratings yet

- (IJCST-V11I2P5) :Dr.S.Selvakani, K.Vasumathi, M.GopiDocument5 pages(IJCST-V11I2P5) :Dr.S.Selvakani, K.Vasumathi, M.GopiEighthSenseGroupNo ratings yet

- IJEET TemplateDocument5 pagesIJEET TemplateIgt SuryawanNo ratings yet

- An Efficient CNN Model For COVID-19 Disease DetectDocument12 pagesAn Efficient CNN Model For COVID-19 Disease Detectnasywa rahmatullailyNo ratings yet

- Deep Neural Network Ensemble For Pneumonia LocalizationDocument12 pagesDeep Neural Network Ensemble For Pneumonia LocalizationAmílcar CáceresNo ratings yet

- Lung Tumor Localization and Visualization in ChestDocument17 pagesLung Tumor Localization and Visualization in ChestAida Fitriyane HamdaniNo ratings yet

- 2023 Detection and Classification of COVID-19 by Using Faster R-CNN and Mask R-CNN On CT ImagesDocument15 pages2023 Detection and Classification of COVID-19 by Using Faster R-CNN and Mask R-CNN On CT ImagesgraythomasinNo ratings yet

- Identifying Drug-Resistant Tuberculosis in Chest Radiographs Evaluation of CNN Architectures and Training StrategiesDocument4 pagesIdentifying Drug-Resistant Tuberculosis in Chest Radiographs Evaluation of CNN Architectures and Training StrategiesManya K MNo ratings yet

- Chest X-Ray Outlier Detection Model Using Dimension Reduction and Edge DetectionDocument11 pagesChest X-Ray Outlier Detection Model Using Dimension Reduction and Edge DetectionHardik AgrawalNo ratings yet

- 1 s2.0 S138650562030959X MainDocument9 pages1 s2.0 S138650562030959X Main1No ratings yet

- Lung and Pancreatic Tumor Characterization in The Deep Learning Era: Novel Supervised and Unsupervised Learning ApproachesDocument11 pagesLung and Pancreatic Tumor Characterization in The Deep Learning Era: Novel Supervised and Unsupervised Learning ApproachesVipin GeorgeNo ratings yet

- Deep Learning Approach For Unprecedented Lung Disease PrognosisDocument5 pagesDeep Learning Approach For Unprecedented Lung Disease PrognosisMulla Abdul FaheemNo ratings yet

- Survey PneumoniaDocument7 pagesSurvey Pneumonia20220804039No ratings yet

- Pneumonia Disease Detection Using Deep LearningDocument6 pagesPneumonia Disease Detection Using Deep LearningIJRASETPublicationsNo ratings yet

- Lung CancerDocument10 pagesLung Cancerbvkarthik2711No ratings yet

- Comparison of Deep Learning Algorithms For Pneumonia DetectionDocument5 pagesComparison of Deep Learning Algorithms For Pneumonia DetectionInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Enhancing Early Detection of Lung Cancer With An Advanced ALCDC System Utilizing Convolutional Neural NetworkDocument5 pagesEnhancing Early Detection of Lung Cancer With An Advanced ALCDC System Utilizing Convolutional Neural NetworkInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Research Article An Improved Covid-19 Detection Using Gan-Based Data Augmentation and Novel Qunet-Based ClassificationDocument9 pagesResearch Article An Improved Covid-19 Detection Using Gan-Based Data Augmentation and Novel Qunet-Based ClassificationNaoual NassiriNo ratings yet

- Pneumonia Detection in X-Ray Chest Images BasedDocument7 pagesPneumonia Detection in X-Ray Chest Images BasedyuniNo ratings yet

- Resume Paper AgisDocument2 pagesResume Paper AgisAulia AgistaNo ratings yet

- Zhang Et Al. - 2022 - A Multi-Channel Deep Convolutional Neural NetworkDocument11 pagesZhang Et Al. - 2022 - A Multi-Channel Deep Convolutional Neural NetworkJamesLeeNo ratings yet

- Document3 TexDocument4 pagesDocument3 TexNavin M ANo ratings yet

- Artificial Intelligence 1Document8 pagesArtificial Intelligence 1Andy BaiNo ratings yet

- Artificial Intelligence in Respirato - 2021 - Archivos de Bronconeumolog A EnglDocument2 pagesArtificial Intelligence in Respirato - 2021 - Archivos de Bronconeumolog A EnglMomoh GaiusNo ratings yet

- Identifying Pulmonary Nodules or Masses On Chest Radiography Using Deep Learning: External Validation and Strategies To Improve Clinical PracticeDocument8 pagesIdentifying Pulmonary Nodules or Masses On Chest Radiography Using Deep Learning: External Validation and Strategies To Improve Clinical PracticeYuriansyah Dwi Rahma PutraNo ratings yet

- 10 1109@iccsp48568 2020 9182258Document4 pages10 1109@iccsp48568 2020 9182258mindaNo ratings yet

- SirishKaushik2020 Chapter PneumoniaDetectionUsingConvoluDocument14 pagesSirishKaushik2020 Chapter PneumoniaDetectionUsingConvoluMatiqul IslamNo ratings yet

- Pneumonia Detection Using Convolutional Neural Networks (CNNS)Document14 pagesPneumonia Detection Using Convolutional Neural Networks (CNNS)shekhar1405No ratings yet

- Ensemble Deep Learning For Tuberculosis Detection Using Chest X-Ray and Canny Edge Detected ImagesDocument7 pagesEnsemble Deep Learning For Tuberculosis Detection Using Chest X-Ray and Canny Edge Detected ImagesIAES IJAINo ratings yet

- Breast Cancer Classification in Ultrasound ImagesDocument4 pagesBreast Cancer Classification in Ultrasound ImagesTefeNo ratings yet

- CNN Architecture Optimization Using Bio Inspired Algor - 2022 - Computers in BioDocument13 pagesCNN Architecture Optimization Using Bio Inspired Algor - 2022 - Computers in BioFarhan MaulanaNo ratings yet

- Hybrid Deep Learning For Detecting Lung Diseases From X-Ray ImagesDocument23 pagesHybrid Deep Learning For Detecting Lung Diseases From X-Ray ImagesLK AnhDungNo ratings yet

- Application of Deep Learning Techniques For Detection of COVID-19 Casesusing Chest X-Ray Images A Comprehensive StudyDocument12 pagesApplication of Deep Learning Techniques For Detection of COVID-19 Casesusing Chest X-Ray Images A Comprehensive StudyHarshini N BNo ratings yet

- Deep Learning Assisted Predict of Lung Cancer On Computed Tomography Images Using The Adaptive Hierarchical Heuristic Mathematical ModelDocument11 pagesDeep Learning Assisted Predict of Lung Cancer On Computed Tomography Images Using The Adaptive Hierarchical Heuristic Mathematical Modelswathi sNo ratings yet

- 2D3D Clasification 01Document11 pages2D3D Clasification 01sadNo ratings yet

- Course: EC2P001 Introduction To Electronics Lab: Indian Institute of Technology Bhubaneswar School of Electrical ScienceDocument5 pagesCourse: EC2P001 Introduction To Electronics Lab: Indian Institute of Technology Bhubaneswar School of Electrical ScienceAnik ChaudhuriNo ratings yet

- Earning and Stock Split - Asquith Et Al 1989Document18 pagesEarning and Stock Split - Asquith Et Al 1989Fransiskus ShaulimNo ratings yet

- Women's Safety Measures Through Sensor Device Using Iot: T.Sathyapriya, R.Auxilia Anitha MaryDocument3 pagesWomen's Safety Measures Through Sensor Device Using Iot: T.Sathyapriya, R.Auxilia Anitha MaryCSE ROHININo ratings yet

- Report On: Course: MKT 634, Section-1Document35 pagesReport On: Course: MKT 634, Section-1wasifNo ratings yet

- OSY - Chapter1Document11 pagesOSY - Chapter1Rupesh BavgeNo ratings yet

- Nokia Lumia 925 RM-892 - 893 - 910 L1L2 Service ManualDocument63 pagesNokia Lumia 925 RM-892 - 893 - 910 L1L2 Service ManualCretu PaulNo ratings yet

- General Purpose Engine: GX100T GX100UT GX100RTDocument66 pagesGeneral Purpose Engine: GX100T GX100UT GX100RTLupin GonzalezNo ratings yet

- A Covid-19 Based Temperature Detection and Contactless Attendance Monitoring System Using Iris RecognitionDocument18 pagesA Covid-19 Based Temperature Detection and Contactless Attendance Monitoring System Using Iris Recognitionshreya tripathiNo ratings yet

- English Theses PDFDocument630 pagesEnglish Theses PDFshakhy azadNo ratings yet

- Credential Harvestor FacebookDocument23 pagesCredential Harvestor FacebookJ Anthony GreenNo ratings yet

- Databricks Data Processing Addendum 25 Sept 2021 FINALDocument12 pagesDatabricks Data Processing Addendum 25 Sept 2021 FINALVaibhav AntilNo ratings yet

- Konica Minolta Bizhub C 6501 CatalogDocument0 pagesKonica Minolta Bizhub C 6501 CatalogDiscountCopierCenterNo ratings yet

- AC43-18 CHG 1-2 Fabrication of Parts by Maintenance Personnel PDFDocument20 pagesAC43-18 CHG 1-2 Fabrication of Parts by Maintenance Personnel PDFJesseNo ratings yet

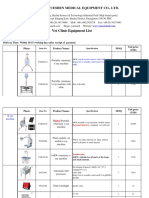

- 2017 Vet Clinic Equipment Pricelist From YuesenmedDocument24 pages2017 Vet Clinic Equipment Pricelist From YuesenmedVictor TomalaNo ratings yet

- Huawei GPON HG8247H WithRouter v2 PDFDocument4 pagesHuawei GPON HG8247H WithRouter v2 PDFJose Norton DoriaNo ratings yet

- CIVL 4750 Numerical Solutions To Geotechnical Problems: I: TA: T V: Tuesday/ C ODocument3 pagesCIVL 4750 Numerical Solutions To Geotechnical Problems: I: TA: T V: Tuesday/ C OChoffo YannickNo ratings yet

- Installation Guide: EV Power Chargers 3kW HEDocument12 pagesInstallation Guide: EV Power Chargers 3kW HEnikhom_dk1565No ratings yet

- HP Compac 4000 Pro Small PCDocument4 pagesHP Compac 4000 Pro Small PCHebert Castañeda FloresNo ratings yet

- Gmail - NMAT by GMAC Exam Appointment ConfirmationDocument2 pagesGmail - NMAT by GMAC Exam Appointment ConfirmationPankti BaxiNo ratings yet

- Moduino ENDocument4 pagesModuino ENaabejaroNo ratings yet

- GFK2749 - RX3i PSM PDFDocument100 pagesGFK2749 - RX3i PSM PDFgabsNo ratings yet

- MT 720 Transfer of A Documentary CreditDocument3 pagesMT 720 Transfer of A Documentary CreditA. T. M. Anisur Rabbani100% (1)

- Problem StatementDocument4 pagesProblem Statementjanardhan gortiNo ratings yet

- Diaphragm Pressure Gauge Guard MDM 902: Corrosion-Free Pressure Transmission For Aggressive MediaDocument4 pagesDiaphragm Pressure Gauge Guard MDM 902: Corrosion-Free Pressure Transmission For Aggressive Mediathiago weniskleyNo ratings yet

- JellDocument1 pageJellMuhammad DanuNo ratings yet

- Certificacion ORCA PDFDocument42 pagesCertificacion ORCA PDFedmuarizt7078No ratings yet

- Green ComputingDocument7 pagesGreen Computingerwin.dee.cicsNo ratings yet

- Kendriya Vidyalaya Sangathan Jaipur Region: Sample Question Paper (Term-I)Document7 pagesKendriya Vidyalaya Sangathan Jaipur Region: Sample Question Paper (Term-I)Samira FarooquiNo ratings yet

- Feed Manufacturing-GrindingDocument33 pagesFeed Manufacturing-GrindingDr Anais AsimNo ratings yet