Chapter 4

The Processor

[Adapted from Computer Organization and Design, 4th Edition, Patterson & Hennessy, © 2008, MK]

[Also adapted from lecture slides by Mary Jane Irwin, www.cse.psu.edu/~mji]

§4.1 Introduction

Introduction

CPU performance factors

Instruction count

Determined by ISA and compiler

CPI and Cycle time

Determined by CPU hardware

We will examine two MIPS implementations

A simplified version

A more realistic pipelined version

Simple subset, shows most aspects

Memory reference: lw, sw

Arithmetic/logical: add, sub, and, or, slt

Control transfer: beq, j

Chapter 4 — The Processor — 2

Instruction Execution

For every instruction, the first two steps are identical

PC → instruction memory, fetch instruction

Register numbers → register file, read registers

Depending on instruction class

Use ALU to calculate

Arithmetic and logical operations execution

Memory address calculation for load/store

Comparison for branch

Access data memory for load/store

PC ← target address (for branch) or PC + 4
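The steps above can be sketched as a small Python model. This is only an illustration under stated assumptions, not the hardware design from the slides: the tuple instruction format, the `step` and `run` functions, and the dictionary-based register file and memories are all hypothetical names chosen for the example.

```python
def step(pc, regs, imem, dmem):
    """Execute the instruction at pc and return the next PC value."""
    op, a, b, c = imem[pc // 4]                # PC -> instruction memory, fetch
    if op in ("add", "sub"):                   # tuple layout: (op, rd, rs, rt)
        x, y = regs[b], regs[c]                # register numbers -> register file
        regs[a] = x + y if op == "add" else x - y   # ALU: arithmetic result
    elif op == "lw":                           # tuple layout: (op, rt, offset, base)
        regs[a] = dmem[regs[c] + b]            # ALU: address, then read data memory
    elif op == "sw":                           # tuple layout: (op, rt, offset, base)
        dmem[regs[c] + b] = regs[a]            # ALU: address, then write data memory
    elif op == "beq":                          # tuple layout: (op, rs, rt, word_offset)
        if regs[a] - regs[b] == 0:             # ALU: subtract and test for zero
            return pc + 4 + 4 * c              # PC <- target address
    else:
        raise ValueError(f"unsupported instruction: {op}")
    return pc + 4                              # PC <- PC + 4


def run(imem, regs, dmem):
    """Run until the PC moves past the last instruction; return the final PC."""
    pc = 0
    while pc // 4 < len(imem):
        pc = step(pc, regs, imem, dmem)
    return pc


program = [
    ("lw", "$t1", 0, "$t0"),       # $t1 = mem[$t0 + 0]
    ("add", "$t2", "$t1", "$t1"),  # $t2 = $t1 + $t1
    ("beq", "$t2", "$t2", 1),      # always taken: skip one instruction
    ("add", "$t2", "$t2", "$t2"),  # skipped
    ("sw", "$t2", 4, "$t0"),       # mem[$t0 + 4] = $t2
]
regs = {"$t0": 100, "$t1": 0, "$t2": 0}
dmem = {100: 21}
final_pc = run(program, regs, dmem)
```

Every instruction passes through the same fetch and register-read steps; only the use of the ALU, the data memory access, and the PC update differ by instruction class.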

CPU Overview

[Figure: CPU overview datapath; paths annotated: branch, load, store, immediate]

Multiplexers

Can’t just join wires together

Use multiplexers

Control

[Figure: datapath with control; annotation: (beq)]

§4.2 Logic Design Conventions

Logic Design Basics

Information encoded in binary

Low voltage = 0, High voltage = 1

One wire per bit

Multi-bit data encoded on multi-wire buses

Combinational elements

Operate on data

Output is a function of input

State (sequential) elements

Store information

Combinational Elements

AND-gate: Y = A & B

Adder: Y = A + B

Multiplexer: Y = S ? I1 : I0

Arithmetic/Logic Unit: Y = F(A, B)
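The four combinational elements can be written as pure functions, since each output depends only on the current inputs. The sketch below is a minimal illustration; the function names, the 32-bit width, and the string-valued function select `f` are assumptions made for the example.

```python
MASK32 = 0xFFFFFFFF  # assumed 32-bit datapath width


def and_gate(a, b):
    """Y = A & B"""
    return a & b


def adder(a, b):
    """Y = A + B, truncated to 32 bits."""
    return (a + b) & MASK32


def mux(s, i0, i1):
    """Y = S ? I1 : I0"""
    return i1 if s else i0


def alu(f, a, b):
    """Y = F(A, B); f selects the function (hypothetical string encoding)."""
    results = {"and": a & b, "or": a | b, "add": a + b, "sub": a - b}
    return results[f] & MASK32
```

For example, `mux(1, 5, 9)` selects input I1 and returns 9, and `adder(0xFFFFFFFF, 1)` wraps around to 0 because the result is truncated to 32 bits.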

Sequential Elements

Register: stores data in a circuit

Uses a clock signal to determine when to update the stored value

Edge-triggered

rising edge: update when Clk changes from 0 to 1

falling edge: update when Clk changes from 1 to 0

[Figure: register symbol and timing diagram; signals Clk, D, Q]

Sequential Elements

Register with write control

Only updates on clock edge when write control input is 1

Used when stored value is required later

[Figure: register with write control, symbol and timing diagram; signals Clk, D, Write, Q]
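A rising-edge-triggered register with write control can be modeled in a few lines. This is a behavioral sketch only; the class name `Register` and the `tick` method are hypothetical, and a real register is built from flip-flops rather than software state.

```python
class Register:
    """Rising-edge-triggered register with a write-control input."""

    def __init__(self, value=0):
        self.q = value      # stored value (output Q)
        self._clk = 0       # previous clock level, used to detect edges

    def tick(self, clk, d, write=1):
        """Present inputs Clk, D and Write; return the output Q."""
        rising_edge = self._clk == 0 and clk == 1
        if rising_edge and write:
            self.q = d      # update only on a rising edge with Write = 1
        self._clk = clk
        return self.q


r = Register()
trace = [
    r.tick(0, 5),            # clock low: Q keeps its initial value
    r.tick(1, 5),            # rising edge: Q takes D
    r.tick(1, 7),            # clock stays high: no edge, no update
    r.tick(0, 7),            # falling edge: ignored by this register
    r.tick(1, 7, write=0),   # rising edge but Write = 0: no update
    r.tick(0, 9),
    r.tick(1, 9, write=1),   # rising edge with Write = 1: Q takes D
]
```

Changes on D between edges have no effect on Q, which is what lets combinational logic settle during the clock cycle.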

Clocking Methodology

Edge-triggered clocking

Combinational logic transforms data during clock cycles, between clock edges

Input from state elements, output to state element

Longest delay determines clock period

The clock cycle must be long enough that the inputs are stable

[Figure: edge-triggered clocking]

§4.3 Building a Datapath

Building a Datapath

Datapath

Elements that process data and addresses in the CPU

Registers, ALUs, muxes, memories, …

We will build a MIPS datapath incrementally

Refining the overview design

Instruction Fetch

[Figure: instruction fetch datapath. PC: 32-bit register. Adder: increment by 4 for next instruction]

R-Format Instructions

Read two register operands

Perform arithmetic/logical operation

Write register result

Ex.: add $t0, $s2, $t0

[Figure: register file and ALU; bus widths annotated (32-bit data, 1-bit signal)]

Load/Store Instructions

lw $t1, offset_value($t2)

offset_value: 16-bit signed offset

Read register operands

Calculate address using 16-bit offset

Use ALU, but sign-extend offset

Load: Read memory and update register

Store: Write register value to memory
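The address calculation for lw and sw can be sketched as follows. The helper names `sign_extend16` and `effective_address` are hypothetical; the arithmetic itself (sign-extend the 16-bit offset, then add it to the base register with the ALU) follows the steps listed above.

```python
def sign_extend16(imm16):
    """Interpret the low 16 bits as a signed two's-complement value."""
    imm16 &= 0xFFFF
    return imm16 - 0x10000 if imm16 & 0x8000 else imm16


def effective_address(base, imm16):
    """ALU add of the base register and the sign-extended offset (32-bit)."""
    return (base + sign_extend16(imm16)) & 0xFFFFFFFF
```

With a base register value of 0x1000, an offset field of 0x0008 addresses 0x1008, while an offset field of 0xFFFC (that is, -4) addresses 0x0FFC.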

Branch Instructions

beq $t1, $t2, offset

if (t1 == t2) branch to instruction labeled offset

Target address = (PC + 4) + offset × 4

Read register operands

Compare operands

Use ALU, subtract and check Zero output

Calculate target address

Sign-extend displacement

Shift left 2 places (word displacement)

Add to PC + 4

Already calculated by instruction fetch
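The branch steps above can be sketched in Python. The function names are hypothetical, and the model assumes 32-bit addresses; the sequence (sign-extend, shift left 2, add to PC + 4, select on the ALU Zero output) is the one listed on the slide.

```python
MASK32 = 0xFFFFFFFF


def sign_extend16(imm16):
    """Sign-extend the 16-bit displacement field."""
    imm16 &= 0xFFFF
    return imm16 - 0x10000 if imm16 & 0x8000 else imm16


def branch_target(pc, imm16):
    """Target = (PC + 4) + (sign-extended displacement << 2)."""
    return (pc + 4 + (sign_extend16(imm16) << 2)) & MASK32


def next_pc_beq(pc, rs_value, rt_value, imm16):
    """Subtract in the ALU and branch when the Zero output is set."""
    zero = ((rs_value - rt_value) & MASK32) == 0
    return branch_target(pc, imm16) if zero else (pc + 4) & MASK32
```

For a beq at address 0x40 with a displacement of 3 words, the target is 0x44 + 12 = 0x50; a displacement of -1 (field 0xFFFF) branches back to 0x40 itself.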

Branch Instructions

[Figure: branch datapath, with annotations:]

Shift left 2: just re-routes wires

ALU: used here only to implement the equal test of branches

Sign-extend: sign-bit wire replicated

Composing the Elements

First-cut data path does an instruction in one clock cycle

Each datapath element can only do one function at a time

Hence, we need separate instruction and data memories

To share a datapath element between two different instruction classes, use multiplexers and control signals

R-Type/Load/Store Datapath

Full Datapath

§4.4 A Simple Implementation Scheme

ALU Control

ALU used for

Load/Store: F = add

Branch: F = subtract

R-type: F depends on funct field

ALU control Function

0000 AND

0001 OR

0010 add

0110 subtract

0111 set-on-less-than

1100 NOR

ALU Control

Assume 2-bit ALUOp derived from opcode

Combinational logic derives ALU control

opcode  ALUOp  Operation         funct   ALU function      ALU control
lw      00     load word         XXXXXX  add               0010
sw      00     store word        XXXXXX  add               0010
beq     01     branch equal      XXXXXX  subtract          0110
R-type  10     add               100000  add               0010
R-type  10     subtract          100010  subtract          0110
R-type  10     AND               100100  AND               0000
R-type  10     OR                100101  OR                0001
R-type  10     set-on-less-than  101010  set-on-less-than  0111
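The table above is a small piece of combinational logic, which can be sketched as a lookup. The function name `alu_control` and the dictionary name are hypothetical; the bit patterns are the ones in the table.

```python
# funct field -> 4-bit ALU control, used only when ALUOp = 10 (R-type)
FUNCT_TO_ALU_CONTROL = {
    0b100000: 0b0010,  # add
    0b100010: 0b0110,  # subtract
    0b100100: 0b0000,  # AND
    0b100101: 0b0001,  # OR
    0b101010: 0b0111,  # set-on-less-than
}


def alu_control(alu_op, funct=0):
    """Derive the 4-bit ALU control from the 2-bit ALUOp and the funct field."""
    if alu_op == 0b00:          # lw / sw: funct is a don't-care, ALU adds
        return 0b0010
    if alu_op == 0b01:          # beq: funct is a don't-care, ALU subtracts
        return 0b0110
    if alu_op == 0b10:          # R-type: operation comes from funct
        return FUNCT_TO_ALU_CONTROL[funct]
    raise ValueError(f"unsupported ALUOp: {alu_op:02b}")
```

Splitting the decode into a 2-bit ALUOp from the main control plus this small second-level decoder keeps the main control unit independent of the funct field.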

The Main Control Unit

Control signals derived from instruction

Bits        31:26     25:21  20:16  15:11  10:6   5:0
R-type      0         rs     rt     rd     shamt  funct

Bits        31:26     25:21  20:16  15:0
Load/Store  35 or 43  rs     rt     address
Branch      4         rs     rt     address

31:26: opcode

25:21 (rs): always read

20:16 (rt): read, except for load

15:11 (rd) for R-type, 20:16 (rt) for load: write

15:0 (address): sign-extend and add
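Extracting the fields from a 32-bit instruction word is a matter of shifts and masks. The sketch below uses hypothetical helper names `decode` and `encode_r`; the bit positions are the ones in the format table, and the register numbers in the example ($t0 = 8, $s2 = 18) follow the standard MIPS register numbering.

```python
def decode(instr):
    """Split a 32-bit MIPS instruction word into its fields."""
    return {
        "opcode":  (instr >> 26) & 0x3F,   # bits 31:26
        "rs":      (instr >> 21) & 0x1F,   # bits 25:21
        "rt":      (instr >> 16) & 0x1F,   # bits 20:16
        "rd":      (instr >> 11) & 0x1F,   # bits 15:11 (R-type only)
        "shamt":   (instr >> 6) & 0x1F,    # bits 10:6  (R-type only)
        "funct":   instr & 0x3F,           # bits 5:0   (R-type only)
        "address": instr & 0xFFFF,         # bits 15:0  (load/store/branch)
    }


def encode_r(rs, rt, rd, funct, shamt=0):
    """Build an R-type word (opcode 0); helper for the example only."""
    return (rs << 21) | (rt << 16) | (rd << 11) | (shamt << 6) | funct


# add $t0, $s2, $t0  ->  rd = $t0 (8), rs = $s2 (18), rt = $t0 (8), funct = 100000
word = encode_r(rs=18, rt=8, rd=8, funct=0b100000)
fields = decode(word)
```

All fields are extracted in parallel regardless of format; the control unit then decides from the opcode which of them are meaningful.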

Datapath With Control

R-Type Instruction

Load Instruction

Branch-on-Equal Instruction

Implementing Jumps

Jump: opcode 2 (bits 31:26), address (bits 25:0)

Jump uses word address

Update PC with concatenation of

Top 4 bits of old PC

26-bit jump address

00

Need an extra control signal decoded from opcode
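The concatenation above can be sketched with masks and shifts. The function name `jump_target` is hypothetical, and `pc` stands for the "old PC" named on the slide.

```python
def jump_target(pc, addr26):
    """Concatenate: top 4 bits of the old PC, 26-bit jump address, then 00."""
    upper = pc & 0xF0000000                 # top 4 bits of old PC
    lower = (addr26 & 0x03FFFFFF) << 2      # 26-bit word address followed by 00
    return upper | lower
```

For example, with an old PC of 0x10000008 and a jump address field of 0x400, the new PC is 0x10001000. Because only 28 bits come from the instruction, a jump stays within the 256 MB region selected by the top 4 bits of the PC.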

Datapath With Jumps Added

Performance Issues

Longest delay determines clock period

Critical path: load instruction

Instruction memory → register file → ALU → data memory → register file

Not feasible to vary period for different instructions

Violates design principle

Making the common case fast

We will improve performance by pipelining

§4.5 An Overview of Pipelining

Pipelining Analogy

Pipelined laundry: overlapping execution

Parallelism improves performance

Four loads: Speedup = 8/3.5 = 2.3

Non-stop: Speedup = 2n/(0.5n + 1.5) ≈ 4 = number of stages
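The two speedup figures can be reproduced numerically. The sketch assumes four laundry stages of 0.5 hours each, which is what the expressions 2n and 0.5n + 1.5 imply; the function name is hypothetical.

```python
def laundry_speedup(n, stages=4, stage_hours=0.5):
    """Speedup of pipelined over sequential laundry for n loads."""
    sequential = n * stages * stage_hours                      # 2n hours
    pipelined = (stages - 1) * stage_hours + n * stage_hours   # 1.5 + 0.5n hours
    return sequential / pipelined


four_loads = laundry_speedup(4)        # 8 / 3.5
many_loads = laundry_speedup(10**6)    # approaches the number of stages
```

The fixed 1.5-hour fill time is amortized as n grows, so the speedup approaches 4, the number of stages.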

MIPS Pipeline

Five stages, one step per stage

1. IF: Instruction fetch from memory

2. ID: Instruction decode & register read

3. EX: Execute operation or calculate address

4. MEM: Access memory operand

5. WB: Write result back to register

Pipeline Performance

Assume time for stages is

100ps for register read or write

200ps for other stages

Compare pipelined datapath with single-cycle datapath

Instr     Instr fetch  Register read  ALU op  Memory access  Register write  Total time
lw        200ps        100ps          200ps   200ps          100ps           800ps
sw        200ps        100ps          200ps   200ps          -               700ps
R-format  200ps        100ps          200ps   -              100ps           600ps
beq       200ps        100ps          200ps   -              -               500ps

Pipeline Performance

Single-cycle (Tc= 800ps)

Pipelined (Tc= 200ps)
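Both clock periods follow from the stage times assumed on the previous slide. The sketch below recomputes them; the dictionary layout and variable names are assumptions made for the example, and a stage an instruction does not use is recorded as 0.

```python
# Stage times in ps: [instr fetch, register read, ALU op, memory access, register write]
STAGE_PS = {
    "lw":       [200, 100, 200, 200, 100],
    "sw":       [200, 100, 200, 200, 0],
    "R-format": [200, 100, 200, 0, 100],
    "beq":      [200, 100, 200, 0, 0],
}

# Total time per instruction if each runs start to finish on its own.
total_ps = {name: sum(stages) for name, stages in STAGE_PS.items()}

# Single-cycle: the clock must fit the slowest instruction (lw).
single_cycle_tc = max(total_ps.values())

# Pipelined: the clock must fit the slowest stage.
pipelined_tc = max(max(stages) for stages in STAGE_PS.values())

speedup = single_cycle_tc / pipelined_tc
```

The ratio of clock periods is 800/200 = 4 rather than 5, because the five stages are not balanced: the register read and write stages need only 100ps but still occupy a 200ps cycle.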

Pipeline Speedup

If all stages are balanced

i.e., all take the same time

Time between instructions (pipelined) = Time between instructions (nonpipelined) / Number of stages

If not balanced, speedup is less

Speedup due to increased throughput

Latency (time for each instruction) does not decrease

Pipelining and ISA Design

MIPS ISA designed for pipelining

All instructions are 32 bits

Easier to fetch and decode in one cycle

cf. x86: 1- to 17-byte instructions

Few and regular instruction formats

Can decode and read registers in one step

Load/store addressing

Can calculate address in 3rd stage, access memory in 4th stage

Alignment of memory operands

Memory access takes only one cycle

Hazards

Situations that prevent starting the next instruction in the next cycle

Structure hazard

A required resource is busy

Data hazard

Need to wait for previous instruction to complete its data read/write

Control hazard

Deciding on control action depends on previous instruction

Structure Hazards

Conflict for use of a resource

In MIPS pipeline with a single memory

Load/store requires data access

Instruction fetch would have to stall for that cycle

Would cause a pipeline “bubble”

Hence, pipelined datapaths require separate instruction/data memories

Or separate instruction/data caches

Data Hazards

An instruction depends on completion of data access by a previous instruction

add $s0, $t0, $t1

sub $t2, $s0, $t3
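The dependence in this pair (sub reads $s0, which add writes) can be found mechanically. The sketch below checks only adjacent instructions and only the small instruction subset used in this chapter; the function names and the text-based instruction format are assumptions made for the example.

```python
import re


def parse(instr):
    """Return (destination register or None, list of source registers)."""
    op, operands = instr.split(None, 1)
    regs = re.findall(r"\$\w+", operands)
    if op in ("sw", "beq"):          # these write no register
        return None, regs
    return regs[0], regs[1:]         # add, sub, and, or, slt, lw


def adjacent_raw_hazards(program):
    """List (producer index, consumer index, register) for back-to-back pairs."""
    hazards = []
    for i in range(len(program) - 1):
        dest, _ = parse(program[i])
        _, sources = parse(program[i + 1])
        if dest is not None and dest in sources:
            hazards.append((i, i + 1, dest))
    return hazards


hazards = adjacent_raw_hazards([
    "add $s0, $t0, $t1",
    "sub $t2, $s0, $t3",
])
```

Here `hazards` contains one entry, `(0, 1, "$s0")`: instruction 1 needs the value that instruction 0 has not yet written back.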

Forwarding (aka Bypassing)

Use result when it is computed

Don’t wait for it to be stored in a register

Requires extra connections in the datapath

Load-Use Data Hazard

Can’t always avoid stalls by forwarding

If value not computed when needed

Can’t forward backward in time!

Code Scheduling to Avoid Stalls

Reorder code to avoid use of load result in the next instruction

C code for A = B + E; C = B + F;

Original order (13 cycles):

lw  $t1, 0($t0)
lw  $t2, 4($t0)
(stall)
add $t3, $t1, $t2
sw  $t3, 12($t0)
lw  $t4, 8($t0)
(stall)
add $t5, $t1, $t4
sw  $t5, 16($t0)

Reordered (11 cycles):

lw  $t1, 0($t0)
lw  $t2, 4($t0)
lw  $t4, 8($t0)
add $t3, $t1, $t2
sw  $t3, 12($t0)
add $t5, $t1, $t4
sw  $t5, 16($t0)
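The 13-cycle and 11-cycle counts can be checked with a short script. It assumes a five-stage pipeline with forwarding, in which the only stall is one cycle when an instruction uses the result of the lw immediately before it; the function names and the text-based instruction format are hypothetical.

```python
import re


def parse(instr):
    """Return (opcode, destination register or None, source registers)."""
    op, operands = instr.split(None, 1)
    regs = re.findall(r"\$\w+", operands)
    if op in ("sw", "beq"):              # these write no register
        return op, None, regs
    return op, regs[0], regs[1:]


def count_cycles(program, stages=5):
    """Cycles = instructions + pipeline fill + load-use stalls."""
    stalls = 0
    for prev, cur in zip(program, program[1:]):
        prev_op, prev_dest, _ = parse(prev)
        _, _, cur_sources = parse(cur)
        if prev_op == "lw" and prev_dest in cur_sources:
            stalls += 1                  # load result is not ready in time
    return len(program) + (stages - 1) + stalls


original = [
    "lw $t1, 0($t0)", "lw $t2, 4($t0)", "add $t3, $t1, $t2",
    "sw $t3, 12($t0)", "lw $t4, 8($t0)", "add $t5, $t1, $t4",
    "sw $t5, 16($t0)",
]
reordered = [
    "lw $t1, 0($t0)", "lw $t2, 4($t0)", "lw $t4, 8($t0)",
    "add $t3, $t1, $t2", "sw $t3, 12($t0)", "add $t5, $t1, $t4",
    "sw $t5, 16($t0)",
]
original_cycles = count_cycles(original)
reordered_cycles = count_cycles(reordered)
```

Seven instructions need 7 + 4 = 11 cycles to drain a five-stage pipeline; the original order adds two load-use stalls, and moving the third lw up removes both.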

Control Hazards

Branch determines flow of control

Fetching next instruction depends on branch outcome

Pipeline can’t always fetch correct instruction

Still working on ID stage of branch

In MIPS pipeline

Need to compare registers and compute target early in the pipeline

Add hardware to do it in ID stage

Stall on Branch

Wait until branch outcome determined before fetching next instruction

Branch Prediction

Longer pipelines can’t readily determine branch outcome early

Stall penalty becomes unacceptable

Predict outcome of branch

Only stall if prediction is wrong

In MIPS pipeline

Can predict branches not taken

Fetch instruction after branch, with no delay
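The benefit of predicting not taken over always stalling can be illustrated with a simple count of lost cycles. The sketch assumes a penalty of one cycle per stall or misprediction, which corresponds to resolving the branch in the ID stage; the function names and the sample outcomes are made up for the example.

```python
def stall_always_cycles(outcomes, penalty=1):
    """Every branch waits for its outcome before the next fetch."""
    return penalty * len(outcomes)


def predict_not_taken_cycles(outcomes, penalty=1):
    """Only taken branches (wrong predictions) lose cycles."""
    return penalty * sum(1 for taken in outcomes if taken)


# Hypothetical branch outcomes: True means the branch was actually taken.
outcomes = [False, False, True, False]
lost_when_stalling = stall_always_cycles(outcomes)
lost_when_predicting = predict_not_taken_cycles(outcomes)
```

With these outcomes, stalling on every branch loses four cycles, while predicting not taken loses one, for the single taken branch.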

MIPS with Predict Not Taken

[Figure: pipeline timing diagrams for two cases, prediction correct and prediction incorrect]

You might also like

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5813)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1092)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (844)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (590)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (897)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (540)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (348)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (822)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (122)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (401)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Practice Final ExamDocument3 pagesPractice Final ExamThành ThảoNo ratings yet

- Usability: Lessons Learned and Yet To Be Learned: James R. LewisDocument22 pagesUsability: Lessons Learned and Yet To Be Learned: James R. LewisLoai F AlzouabiNo ratings yet

- International Journal of Human-Computer Interaction: Click For UpdatesDocument4 pagesInternational Journal of Human-Computer Interaction: Click For UpdatesLoai F AlzouabiNo ratings yet

- Midterm Exam Saturday, November 08, 2014 Duration: 120 MinutesDocument11 pagesMidterm Exam Saturday, November 08, 2014 Duration: 120 MinutesLoai F AlzouabiNo ratings yet

- An Empirical Performance Evaluation of UniversitieDocument8 pagesAn Empirical Performance Evaluation of UniversitieLoai F AlzouabiNo ratings yet

- ICS103 Midterm 141Document16 pagesICS103 Midterm 141Loai F AlzouabiNo ratings yet

- Assessing The Reliability of Heuristic Evaluation For Website Attractiveness and UsabilityDocument10 pagesAssessing The Reliability of Heuristic Evaluation For Website Attractiveness and UsabilityLoai F AlzouabiNo ratings yet

- Assessing Educational Web-Site Usability Using Heuristic Evaluation RulesDocument8 pagesAssessing Educational Web-Site Usability Using Heuristic Evaluation RulesLoai F AlzouabiNo ratings yet

- 1 PDFDocument5 pages1 PDFLoai F AlzouabiNo ratings yet

- What Is Usability in The Context of The Digital Library and How Can It Be Measured?Document11 pagesWhat Is Usability in The Context of The Digital Library and How Can It Be Measured?Loai F AlzouabiNo ratings yet

- Evaluation of Digital Libraries Criteria and Problems From Users Perspectives 2006 Library Information Science ResearchDocument20 pagesEvaluation of Digital Libraries Criteria and Problems From Users Perspectives 2006 Library Information Science ResearchLoai F AlzouabiNo ratings yet

- Users Evaluation of Digital Libraries DLs Their Uses Their Criteria and Their Assessment 2008 Information Processing ManagementDocument28 pagesUsers Evaluation of Digital Libraries DLs Their Uses Their Criteria and Their Assessment 2008 Information Processing ManagementLoai F AlzouabiNo ratings yet

- The Design and Implementation of Colleges Sports Teaching Management SystemDocument4 pagesThe Design and Implementation of Colleges Sports Teaching Management SystemLoai F AlzouabiNo ratings yet

- Ijest10 02 09 149Document8 pagesIjest10 02 09 149Loai F AlzouabiNo ratings yet

- Can Students Complete Typical Tasks On University Websites Successfully?Document7 pagesCan Students Complete Typical Tasks On University Websites Successfully?Loai F AlzouabiNo ratings yet

- Assessing The Usability of University Websites: An Empirical Study On Namik Kemal UniversityDocument9 pagesAssessing The Usability of University Websites: An Empirical Study On Namik Kemal UniversityLoai F AlzouabiNo ratings yet

- Websites Usability: A Comparison of Three University WebsitesDocument11 pagesWebsites Usability: A Comparison of Three University WebsitesLoai F AlzouabiNo ratings yet

- Usability Evaluation of A Web-Based E-LearningDocument336 pagesUsability Evaluation of A Web-Based E-LearningLoai F AlzouabiNo ratings yet

- Can Students Complete Typical Tasks On University Websites Successfully?Document7 pagesCan Students Complete Typical Tasks On University Websites Successfully?Loai F AlzouabiNo ratings yet

- 1 Sciencedirect PDFDocument9 pages1 Sciencedirect PDFLoai F AlzouabiNo ratings yet

- Usefulness of Web PDFDocument10 pagesUsefulness of Web PDFLoai F AlzouabiNo ratings yet

- Usability: Software Product PerspectiveDocument2 pagesUsability: Software Product PerspectiveLoai F AlzouabiNo ratings yet

- 1 SciencedirectDocument9 pages1 SciencedirectLoai F AlzouabiNo ratings yet

- Pipeline: Datahazard, StallDocument12 pagesPipeline: Datahazard, StallMartin Fuentes AcostaNo ratings yet

- Lec18 Tomasulo AlgorithmDocument40 pagesLec18 Tomasulo Algorithmmayank pNo ratings yet

- ICT Course Syllabus 2019-2020 PDFDocument53 pagesICT Course Syllabus 2019-2020 PDFMinh Hiếu100% (1)

- What Are The Basic Components in A MicroprocessorDocument5 pagesWhat Are The Basic Components in A MicroprocessorMobin AkhtarNo ratings yet

- Unit III and Unit IV - Question Bank With AnswersDocument5 pagesUnit III and Unit IV - Question Bank With AnswersRupasharan SaravananNo ratings yet

- HPCA Assignment 1& 2 For Improvement & BacklogDocument3 pagesHPCA Assignment 1& 2 For Improvement & Backlogdebjeet.mailmeNo ratings yet

- Computer ArchitectureDocument667 pagesComputer Architecturevishalchaurasiya360001No ratings yet

- Ec8552 - Cao MCQDocument27 pagesEc8552 - Cao MCQformyphdNo ratings yet

- Coa Lecture Unit 3 PipeliningDocument95 pagesCoa Lecture Unit 3 PipeliningSumathy JayaramNo ratings yet

- Computer Architecture 2 MarksDocument32 pagesComputer Architecture 2 MarksArchanavgs0% (1)

- Lec6 PDFDocument22 pagesLec6 PDFElisée NdjabuNo ratings yet

- Score Board Contd. and Tomasulo's Algorithm: Instructor: Laxmi BhuyanDocument62 pagesScore Board Contd. and Tomasulo's Algorithm: Instructor: Laxmi Bhuyanbobchang321No ratings yet

- c6 CaoDocument77 pagesc6 CaoAh YanNo ratings yet

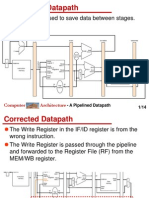

- A Pipelined Datapath: Resisters Are Used To Save Data Between StagesDocument14 pagesA Pipelined Datapath: Resisters Are Used To Save Data Between StagesJohnDaGRTNo ratings yet

- Project1 Hardware Design With Verilog: Submission ModalitiesDocument5 pagesProject1 Hardware Design With Verilog: Submission ModalitiesZulqarnain KhanNo ratings yet

- Project Four: The Completion FileDocument28 pagesProject Four: The Completion FileSajid AliNo ratings yet

- PDF 2Document13 pagesPDF 2Nivedita Acharyya 2035No ratings yet

- Pipeline HazardsDocument37 pagesPipeline HazardsStarqueenNo ratings yet

- Pipelining: Basic ConceptsDocument20 pagesPipelining: Basic Conceptskrupa R MNo ratings yet

- Data HazardsDocument15 pagesData HazardsPetreMaziluNo ratings yet

- Midterm Solutions Mar 30Document6 pagesMidterm Solutions Mar 30Sahil SethNo ratings yet

- Introduction To Digital Signal Processors (DSPS) : Prof. Brian L. EvansDocument30 pagesIntroduction To Digital Signal Processors (DSPS) : Prof. Brian L. Evansdayoladejo777No ratings yet

- CSE 560 - Practice Problem Set 5 SolutionDocument9 pagesCSE 560 - Practice Problem Set 5 SolutionFgfughuNo ratings yet

- Advance Computer Architecture2Document36 pagesAdvance Computer Architecture2AnujNo ratings yet

- Pipeline Hazards: Christos Kozyrakis Stanford UniversityDocument48 pagesPipeline Hazards: Christos Kozyrakis Stanford UniversityMo LêNo ratings yet

- Computer Organization: Instructions andDocument25 pagesComputer Organization: Instructions andmanishNo ratings yet

- A4 SolutionDocument4 pagesA4 SolutionAssam AhmedNo ratings yet

- Unit3 PipeliningDocument54 pagesUnit3 PipeliningDevi PagavanNo ratings yet

- Cse.m-ii-Advances in Computer Architecture (12scs23) - NotesDocument213 pagesCse.m-ii-Advances in Computer Architecture (12scs23) - NotesnbprNo ratings yet