Latent Learning in Agents

Rati Sharma RATIS@CS.RUTGERS.EDU

Department of Computer Science, Rutgers University, NJ 08854, USA

Abstract

Various cognitive models have been proposed to determine optimal paths in spatial navigation tasks, some of which demonstrate latent learning in agents. We treat the task as a Reinforcement Learning problem, using Q-Learning and Model-Based Learning in a deterministic environment. This paper uses a Q-Learning algorithm and compares its performance with the Dyna (model-based) algorithm on Blocking and Shortcut problems. We conclude that Model-Based Reinforcement Learning provides an interesting and efficient solution to blocking and shortcut problems and that the agent demonstrates Latent Learning.

1. Introduction

An important task for autonomous agents, including humans, animals and robots, is that of spatial navigation. How does a robot decide what to do? How does it determine the optimal path from point A to B? The traditional answer has been that the robot should be able to plan, but one of the serious limitations of planning is that the robot must have an accurate world model. We describe an approach where the robot learns a good set of reactions by trial and error and at the same time plans to generate an internal model of the world. This internal model can be considered similar to a Cognitive Map that is compiled during exploration of the environment.

Tolman (1948) proposed a theory, based on his experiments performed on rats in a T-maze (introduced by Blodgett), that the animals form cognitive maps, i.e. in the course of learning, a field map of the environment gets established in the rat's brain. Cognitive maps store the expectations that when situated in place A, if the animal executes response R (a movement in a given direction), it will end in place B. This Cognitive Map, which represents routes, paths and environmental relationships, determines the response R.

One form of spatial navigation that incorporates the idea of a Cognitive Map has been presented by Voicu and Schmajuk (2002). The model is presented as a neural network that assumes a representation of the environment as a set of connected locations. As part of developing a Cognitive Map, a representation of the structure of the actual environment was stored in the map, which was then used to guide navigation between specific places. We view robot spatial navigation as a reinforcement learning problem and use Q-Learning and Model-Based Reinforcement Learning methods.

Reinforcement learning is learning what to do, i.e. how to map situations to actions so as to maximize reward. The learner is not told which actions to take, but instead must discover which actions yield the most reward by trial-and-error interaction with the environment.

2. Latent Learning

Latent learning is described as learning that is not evident to the observer at the time it occurs and that becomes apparent once a reinforcement is introduced. Here is an explanation of latent learning as it occurs in rats. A T-maze proposed by Blodgett (Figure 1) was used by Tolman to demonstrate latent learning in rats. The rats were allowed to explore the maze without any reward (food) for several trials. However, once a reward was introduced, the rats could reach the goal (food) in the maze with great accuracy. It was proposed that as long as the animals were not getting any food at the end of the maze they continued to take their time in going through it and continued to enter many blinds. Once, however, they knew they were to get food, they demonstrated that during these preceding non-rewarded trials they had learned where many of the blinds were. They had been building up a 'map,' and could utilize the latter as soon as they were motivated to do so by the introduction of a reward.

For the purpose of our simulation we use a slight variation of the above T-maze (Figure 2).

Figure 1. T-Maze used by Tolman (from H.C. Blodgett, The effect of the introduction of reward upon the maze performance of rats. University of California Publications in Psychology, 1929, 4, p. 117).

2. A Navigation Task

The T-maze in Figure 2 depicts a typical maze used for the navigation experiments. The maze is a four by seven grid of possible locations or states, one of which is marked as the starting state (in yellow) and one of which is marked as the Goal state (in green). The hurdles or barriers in the grid are also marked (in red). From each state there are four possible actions, UP, DOWN, RIGHT and LEFT, which change the state except when the resulting state would end up in a barrier or take the agent out of the bounds of the maze. The reward for all states is 0 except for the goal state, where it is 1. The environment is considered to be deterministic.

In the following section we describe the two learning algorithms along with their simulations.

3. Learning Algorithms

The two reinforcement learning algorithms used for this purpose are described below.

3.1 Q-Learning

Q-Learning was proposed by Watkins (1989). The main idea in the algorithm is to approximate the function assigning each state-action pair the highest possible payoff. Thus, for each pair of state s and action a, Q-learning maintains an estimate Q(s, a) of the value of taking a in s. The agent does not have a model of the world in advance; it tries to learn an optimal policy directly from experience, without building an explicit world model.

Let Q(s, a) be the evaluation function, so that its value is the maximum discounted reward that can be achieved starting from state s and applying action a as the first action, i.e. the value of Q is the reward received immediately upon executing action a from state s, plus the discounted value of following the optimal policy thereafter.

Q(s, a) = r(s, a) + γ max_a' Q(δ(s, a), a')

where r(s, a) is the reward function, δ(s, a) is the new state, and the maximum is taken over the actions a' available in the new state.

Q-Learning algorithm:

Q^(s, a) refers to the learner's current hypothesis about the actual but unknown value Q(s, a).

For each s, a initialize the table entry Q^(s, a) to zero.

Observe the current state s.

Do forever:

1) Select an action a and execute it

2) Receive immediate reward r

3) Observe the new state s'

4) Update the table entry for Q^(s, a) as follows: Q^(s, a) ← r + γ max_a' Q^(s', a')

5) Set s ← s'

3.2 Model-based Learning

The agent tries to learn a world model and then utilizes the model to generate navigation plans. In this paper we work with the Dyna class of reinforcement learning architectures proposed by Sutton (1990). The Dyna architecture is based on dynamic programming's policy iteration. It simultaneously learns by trial and error, learns a world model, and plans optimal routes using the evolving world model.
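The tabular update of Section 3.1 can be sketched on a small deterministic grid as follows. This is only an illustrative sketch: the grid size and the discount factor come from the text, but the start, goal and barrier positions, the epsilon-greedy exploration scheme, and the restart at the start state after each goal visit are assumptions made here, since the maze layout of Figure 2 is not reproduced.

```python
import random

ROWS, COLS = 4, 7                      # grid size stated in the paper
ACTIONS = {"UP": (-1, 0), "DOWN": (1, 0), "LEFT": (0, -1), "RIGHT": (0, 1)}
START, GOAL = (3, 0), (0, 6)           # hypothetical positions
BARRIERS = {(1, 2), (2, 2), (1, 4)}    # hypothetical barrier cells
GAMMA = 0.9                            # discount factor used in the paper


def step(state, action):
    """Deterministic transition delta(s, a) and reward r(s, a)."""
    dr, dc = ACTIONS[action]
    nxt = (state[0] + dr, state[1] + dc)
    # Moves into a barrier or off the grid leave the state unchanged.
    if not (0 <= nxt[0] < ROWS and 0 <= nxt[1] < COLS) or nxt in BARRIERS:
        nxt = state
    return nxt, (1.0 if nxt == GOAL else 0.0)


def q_learning(steps=20000, epsilon=0.1, seed=0):
    """Run tabular Q-learning and return the table Q^(s, a)."""
    rng = random.Random(seed)
    q = {((r, c), a): 0.0
         for r in range(ROWS) for c in range(COLS) for a in ACTIONS}
    s = START
    for _ in range(steps):
        # Epsilon-greedy selection (an assumed exploration scheme),
        # with random tie-breaking among equally valued actions.
        if rng.random() < epsilon:
            a = rng.choice(list(ACTIONS))
        else:
            best = max(q[(s, b)] for b in ACTIONS)
            a = rng.choice([b for b in ACTIONS if q[(s, b)] == best])
        s2, r = step(s, a)
        # Deterministic update: Q^(s, a) <- r + gamma * max_a' Q^(s', a')
        q[(s, a)] = r + GAMMA * max(q[(s2, b)] for b in ACTIONS)
        # Assumption: a new trial begins at START once the goal is reached.
        s = START if s2 == GOAL else s2
    return q


def greedy_path(q, limit=50):
    """Follow the highest-valued action from START until GOAL (or limit)."""
    s, path = START, [START]
    while s != GOAL and len(path) <= limit:
        a = max(ACTIONS, key=lambda b: q[(s, b)])
        s, _ = step(s, a)
        path.append(s)
    return path
```

Calling `greedy_path(q_learning())` returns the sequence of cells the learned policy visits from `START` to `GOAL`. Because the goal is treated as terminal here, the table entry for the action that enters the goal settles at 1, and entries farther away shrink by a factor of γ per step.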

3.2.1 Overview of Dyna Architecture

Dyna architectures use machine learning algorithms to approximate the conventional optimal control technique, i.e. Dynamic Programming. Dynamic programming is a computational method for determining optimal behavior given a complete model of the task to be solved. Thus, Dyna performs a form of planning in addition to learning. While learning updates the value function estimates according to experience as it actually occurs, planning differs only in that it updates these same value function estimates for simulated transitions chosen from the world model.

The Dyna architecture consists of 4 components, as shown in Figure 3. The policy is the function formed by the current set of reactions; it receives as input a description of the current state of the world and produces as output an action to be sent to the world. The world represents the environment of the robot. It receives actions from the policy and produces as output the next state of the agent and a reward. The world-model in the architecture is intended to mimic the one-step behavior of the real world. The reinforcement learner represents a learning algorithm like Q-Learning.

The Dyna algorithm:

a) Decide if this will be a real experience or a hypothetical one.

b) Pick a state x (in a real experience choose the current state).

c) Choose an action a. Q(x, a) = E{r + γ e(y) | x, a}, i.e. the Q value for a state x and an action a is the expected value of the immediate reward r plus the discounted value e(y) of the next state y.

d) Do action a; obtain the next state y and reward r from the world or the world-model.

e) If this is a real experience, update the world model from x, a, y and r.

f) Update the evaluation function and update the policy, i.e. strengthen or weaken the tendency to perform action a in state x according to the error in the evaluation function.

g) Go to step a).

This algorithm takes advantage of the fact that exploration at regular intervals provides a better chance of determining an optimal policy as opposed to not exploring once learning has occurred. This will be evident in the computer simulations.

The initial Q values, the policy table entries and the world model were all empty, and the initial policy was a random walk. For our experiments real and hypothetical experiences were used alternately. For each experience with the real world, k hypothetical experiences were generated with the model.

4. Computer Simulations

Problems considered: We perform computer simulations for two problems. The first is the blocking problem, whereby after the agent has learned an optimal path from the start to the goal state, a block is introduced in the path, forcing the agent to consider alternate paths of navigation. The shortcut problem represents the opposite approach: once again, after the agent has learnt the optimal path from the start state to the goal, a shortcut is introduced, and it is observed whether the agent performs any exploration and is able to find the shortcut, thereby improving over the previous optimal path.

We evaluate Q-Learning and Model-based learning in a deterministic (grid) environment (Figure 4) for the blocking problem. The default values for the various parameters are: discount factor γ = 0.9, learning rate = 0.5.
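The Dyna loop of Section 3.2.1, with k hypothetical experiences generated from the world model after each real experience, can be sketched roughly as below. This is a minimal sketch and not the implementation used for the experiments: the learning-rate form of the Q update, the epsilon-greedy policy, uniform sampling of remembered transitions, and the helper names `make_step` and `dyna_q` are all assumptions made for illustration. Only γ = 0.9 and the learning rate of 0.5 are taken from the text.

```python
import random

ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]   # UP, DOWN, LEFT, RIGHT
GAMMA, ALPHA = 0.9, 0.5                        # values stated in the paper


def make_step(rows, cols, barriers, goal):
    """Build a deterministic one-step world for a grid maze (hypothetical)."""
    def step(s, a):
        nxt = (s[0] + a[0], s[1] + a[1])
        if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols) or nxt in barriers:
            nxt = s                            # blocked moves leave s unchanged
        return nxt, (1.0 if nxt == goal else 0.0)
    return step


def dyna_q(step, start, goal, real_steps, k, epsilon=0.1, seed=0):
    """Return (Q table, cumulative reward) after real_steps real experiences."""
    rng = random.Random(seed)
    q, model = {}, {}                          # both start empty

    def value(s):
        return max(q.get((s, b), 0.0) for b in ACTIONS)

    def backup(s, a, r, s2):
        # Assumed learning-rate form of the Q-learning update.
        old = q.get((s, a), 0.0)
        q[(s, a)] = old + ALPHA * (r + GAMMA * value(s2) - old)

    s, total = start, 0.0
    for _ in range(real_steps):
        # Real experience: epsilon-greedy action with random tie-breaking.
        if rng.random() < epsilon:
            a = rng.choice(ACTIONS)
        else:
            best = value(s)
            a = rng.choice([b for b in ACTIONS if q.get((s, b), 0.0) == best])
        s2, r = step(s, a)
        model[(s, a)] = (s2, r)                # update the world model
        backup(s, a, r, s2)
        total += r
        # k hypothetical experiences replayed from the learned model.
        for _ in range(k):
            (hs, ha), (hs2, hr) = rng.choice(list(model.items()))
            backup(hs, ha, hr, hs2)
        # Assumption: a new trial begins at start once the goal is reached.
        s = start if s2 == goal else s2
    return q, total
```

With `k=0` the loop reduces to plain Q-learning, so the two methods can be compared on the same world, for example `dyna_q(make_step(4, 7, set(), (0, 6)), (3, 0), (0, 6), 1500, 10)`. A blocking or shortcut experiment would additionally swap the `step` function partway through a run, which this sketch leaves out.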

The resultant graph depicting Q-Learning and Model-Based Learning is shown in Figure 5.

[Plot: Average performance of Q-Learning and Dyna on a Blocking Task. x-axis: Time Steps; y-axis: Cumulative Reward; curves: Q-Learning, Model-based learning.]

Figure 5. A graph depicting average performance over 15 runs for the Dyna and Q-Learning algorithms.

[Plot: Average performance of Q-Learning and Dyna on a Shortcut Task. x-axis: Time Steps; y-axis: Cumulative Reward; curves: Q-Learning, Model-based Learning (Dyna).]

Figure 6. A graph depicting the average performance of the algorithms on a Shortcut problem.

5. Analysis and Conclusion

Q-Learning, when combined with sufficient exploration, can be guaranteed to eventually converge to an optimal policy without ever having to learn and use an internal model of the environment. However, the cost of not using an internal model is that convergence to the optimal policy can be very slow, and in a large state space many backups may be required before the necessary information is propagated to where it is important. From our experiments it is evident that the optimal state value function is highest near the goal and declines exponentially as the number of steps required to reach the goal increases. The Dyna architecture provides a solution to this problem as it generates and maintains an internal world-model. The model also facilitates proper exploration of the environment and, as is evident from the results, performs much better in dynamically changing environments.

We conclude based on these experiments that reinforcement learning architectures such as Dyna exhibit latent learning. As the world model changes they are able to adapt and recover more quickly than model-free approaches such as Q-Learning. This behavior is comparable to that of rats when placed in a maze. Tolman observed in several of his experiments that once latent learning had occurred in rats, if barriers or shortcuts were later introduced in the environment, the rats were able to adapt quickly and subsequently determine the optimal paths to the goal.

6. Future Work

We worked with a deterministic grid environment in this paper; it would be interesting to see the performance of these approaches in a non-deterministic world. Also, the use of a probabilistic approach in determining hypothetical experiences in Dyna would probably yield more efficient results.

Acknowledgements

This project would not have been possible without the guidance of Professor Littman. I sincerely thank him for his patience and encouragement.

References

Kaelbling, L., Littman, M., Moore, A. (1996). Reinforcement Learning: A Survey. Journal of Artificial Intelligence Research, 4, 237-285.

Sutton, R. (1990). Integrated Architectures for Learning, Planning and Reacting Based on Approximate Dynamic Programming. Proceedings of the Seventh International Conference on Machine Learning, pp. 216-224, Morgan Kaufmann.

Tolman, E.C. (1948). Cognitive Maps in Rats and Men. The Psychological Review, 55(4), 189-208.

Voicu, H., Schmajuk, N. (2002). Latent learning, shortcuts and detours: a computational model. Behavioural Processes, 59, 67-86.

You might also like

- Computer Simulations of Space SocietiesFrom EverandComputer Simulations of Space SocietiesRating: 4 out of 5 stars4/5 (1)

- Playing Geometry Dash With Convolutional Neural NetworksDocument7 pagesPlaying Geometry Dash With Convolutional Neural NetworksfriedmanNo ratings yet

- Issues in Using Function Approximation For Reinforcement LearningDocument9 pagesIssues in Using Function Approximation For Reinforcement Learninga.andreasNo ratings yet

- DQL: A New Updating Strategy For Reinforcement Learning Based On Q-LearningDocument12 pagesDQL: A New Updating Strategy For Reinforcement Learning Based On Q-LearningDanelysNo ratings yet

- Balancing A CartPole System With Reinforcement LeaDocument8 pagesBalancing A CartPole System With Reinforcement LeaKeerthana ChirumamillaNo ratings yet

- Subgoal Discovery For Hierarchical Reinforcement Learning Using Learned PoliciesDocument5 pagesSubgoal Discovery For Hierarchical Reinforcement Learning Using Learned PoliciesjoseNo ratings yet

- Random Sampling of States in Dynamic Programming: Christopher G. Atkeson and Benjamin J. StephensDocument6 pagesRandom Sampling of States in Dynamic Programming: Christopher G. Atkeson and Benjamin J. Stephenscnhzcy14No ratings yet

- Simulation of The Navigation of A Mobile Robot by The Q-Learning Using Artificial Neuron NetworksDocument12 pagesSimulation of The Navigation of A Mobile Robot by The Q-Learning Using Artificial Neuron NetworkstechlabNo ratings yet

- Continuous Action Reinforcement Learning Automata: Performance and ConvergenceDocument6 pagesContinuous Action Reinforcement Learning Automata: Performance and ConvergencealienkanibalNo ratings yet

- Applying Q (λ) -learning in Deep Reinforcement Learning to Play Atari GamesDocument6 pagesApplying Q (λ) -learning in Deep Reinforcement Learning to Play Atari GamesomidbundyNo ratings yet

- Rahman2018 Article ImplementationOfQLearningAndDe PDFDocument6 pagesRahman2018 Article ImplementationOfQLearningAndDe PDFGustavo Pinares MamaniNo ratings yet

- Insect Based Navigation in Multi-Agent Environment.: Supervisor: Prof. Dr. Zulfiquar Ali MemonDocument12 pagesInsect Based Navigation in Multi-Agent Environment.: Supervisor: Prof. Dr. Zulfiquar Ali MemonMehreenNo ratings yet

- Making The Car Faster On Highway in DeeptrafficDocument3 pagesMaking The Car Faster On Highway in Deeptrafficapi-339792990No ratings yet

- LD ArticleDocument8 pagesLD ArticleFaheem ShaukatNo ratings yet

- 1、Bayesian Q-learning(1998)Document8 pages1、Bayesian Q-learning(1998)da daNo ratings yet

- Multi-Agent Deep Reinforcement Learning: Maxim Egorov Stanford UniversityDocument8 pagesMulti-Agent Deep Reinforcement Learning: Maxim Egorov Stanford UniversityCreativ PinoyNo ratings yet

- Research PaperDocument10 pagesResearch Paper李默然No ratings yet

- Asynchronous Methods For Deep Reinforcement LearningDocument28 pagesAsynchronous Methods For Deep Reinforcement LearningscribrrrrNo ratings yet

- RL Project - Deep Q-Network Agent ReportDocument11 pagesRL Project - Deep Q-Network Agent ReportPhạm TịnhNo ratings yet

- The Effects of Memory Replay in Reinforcement LearningDocument14 pagesThe Effects of Memory Replay in Reinforcement Learningcnt dvsNo ratings yet

- Bellemare17a PDFDocument10 pagesBellemare17a PDFMohd Haroon AnsariNo ratings yet

- Combining Deep Q-Networks and Double Q-Learning To Minimize Car Delay at Traffic LightsDocument8 pagesCombining Deep Q-Networks and Double Q-Learning To Minimize Car Delay at Traffic LightsAdriano MedeirosNo ratings yet

- 2000 - GlorennecDocument19 pages2000 - GlorennecFauzi AbdurrohimNo ratings yet

- Solving Stochastic Planning Problems With Large State and Action SpacesDocument9 pagesSolving Stochastic Planning Problems With Large State and Action SpaceskcvaraNo ratings yet

- Assignment 4Document5 pagesAssignment 4adnanNo ratings yet

- 04.02 OBDP2021 RomanelliDocument7 pages04.02 OBDP2021 RomanelliBoul chandra GaraiNo ratings yet

- Li Littman Walsh 2008 PDFDocument8 pagesLi Littman Walsh 2008 PDFKitana MasuriNo ratings yet

- A0C: Alpha Zero in Continuous Action SpaceDocument10 pagesA0C: Alpha Zero in Continuous Action SpaceTiberiu GeorgescuNo ratings yet

- SBRN2002Document7 pagesSBRN2002Carlos RibeiroNo ratings yet

- Neural Motion Fields Encoding Grasp Trajectories As Implicit Value FunctionsDocument4 pagesNeural Motion Fields Encoding Grasp Trajectories As Implicit Value FunctionsQinan ZhangNo ratings yet

- Thesis Fabrizio GalliDocument22 pagesThesis Fabrizio GalliFabri GalliNo ratings yet

- Wcci 14 SDocument7 pagesWcci 14 SCarlos RibeiroNo ratings yet

- Reinforcement Learning As Classification: Leveraging Modern ClassifiersDocument8 pagesReinforcement Learning As Classification: Leveraging Modern ClassifiersIman ZNo ratings yet

- A New Representation of Successor Features For Transfer Across Dissimilar EnvironmentsDocument9 pagesA New Representation of Successor Features For Transfer Across Dissimilar EnvironmentsedyNo ratings yet

- Adavances in Deep-Q Learning PDFDocument64 pagesAdavances in Deep-Q Learning PDFJose GarciaNo ratings yet

- 8200 Non Delusional Q Learning and Value IterationDocument11 pages8200 Non Delusional Q Learning and Value IterationAmri YasirliNo ratings yet

- Genetic Reinforcement Learning Algorithms For On-Line Fuzzy Inference System Tuning "Application To Mobile Robotic"Document31 pagesGenetic Reinforcement Learning Algorithms For On-Line Fuzzy Inference System Tuning "Application To Mobile Robotic"Nelson AriasNo ratings yet

- 10.1109 Lars-Sbr.2015.41 ApbpDocument6 pages10.1109 Lars-Sbr.2015.41 Apbpnarvan.m31No ratings yet

- RetraceDocument18 pagesRetracej3qbzodj7No ratings yet

- Sharma 2019 Dynamics AwareDocument11 pagesSharma 2019 Dynamics AwaredanhNo ratings yet

- Cart-Pole Balancing With Deep Q Network (DQN) : The ObjectiveDocument1 pageCart-Pole Balancing With Deep Q Network (DQN) : The Objective01689373477No ratings yet

- DeepMind WhitepaperDocument9 pagesDeepMind WhitepapercrazylifefreakNo ratings yet

- Exponential Particle Swarm Optimization Approach For Improving Data ClusteringDocument5 pagesExponential Particle Swarm Optimization Approach For Improving Data ClusteringsanfieroNo ratings yet

- Smooth Q-Learning - Accelerate ConvergenceDocument7 pagesSmooth Q-Learning - Accelerate ConvergenceSAMBIT CHAKRABORTYNo ratings yet

- 362 ReportDocument6 pages362 ReportNguyễn Tự SangNo ratings yet

- Control of Memory and Action in MinecraftDocument10 pagesControl of Memory and Action in MinecraftmanantomarNo ratings yet

- ML Assignment6Question GitDocument2 pagesML Assignment6Question Gitvullingala syanthanNo ratings yet

- Cederborg Etal 2015 PDFDocument7 pagesCederborg Etal 2015 PDFbanned minerNo ratings yet

- Opinion CriticDocument9 pagesOpinion CriticPranav VajreshwariNo ratings yet

- RL Intro-2Document24 pagesRL Intro-2Mariana MeirelesNo ratings yet

- RL Course ReportDocument10 pagesRL Course ReportshaneNo ratings yet

- Hanna2021 Article GroundedActionTransformationFoDocument31 pagesHanna2021 Article GroundedActionTransformationFoAyman TaniraNo ratings yet

- Lost Valley SearchDocument6 pagesLost Valley Searchmanuel abarcaNo ratings yet

- Reinforcement Learning: Yijue HouDocument34 pagesReinforcement Learning: Yijue HouAnum KhawajaNo ratings yet

- Katharina Tluk v. Toschanowitz, Barbara Hammer and Helge Ritter - Mapping The Design Space of Reinforcement Learning Problems - A Case StudyDocument11 pagesKatharina Tluk v. Toschanowitz, Barbara Hammer and Helge Ritter - Mapping The Design Space of Reinforcement Learning Problems - A Case StudyGrettszNo ratings yet

- Stochastic Learning AutomataDocument17 pagesStochastic Learning Automatavidyadhar10No ratings yet

- Every Model Learned by Gradient Descent Is Approximately A Kernel MachineDocument12 pagesEvery Model Learned by Gradient Descent Is Approximately A Kernel Machinekallenhard1No ratings yet

- Lecture 29 RLDocument38 pagesLecture 29 RLprakuld04No ratings yet

- Em Ut 1 QP 5 9 2020Document1 pageEm Ut 1 QP 5 9 2020Anil KumarNo ratings yet

- Biodiversity: Life To Our Mother EarthDocument19 pagesBiodiversity: Life To Our Mother EarthAnil KumarNo ratings yet

- DR M Suneela and Dr. K. Jyothi Wildlife SanctuaryDocument13 pagesDR M Suneela and Dr. K. Jyothi Wildlife SanctuaryAnil KumarNo ratings yet

- Domesticated Biodiversity BSAP Ver.2 May 2003 PDFDocument144 pagesDomesticated Biodiversity BSAP Ver.2 May 2003 PDFAnil KumarNo ratings yet

- Methods For Environmental Impact AssessmentDocument63 pagesMethods For Environmental Impact AssessmentSHUVA_Msc IB97% (33)

- Ajp v1 Id1004Document9 pagesAjp v1 Id1004Yusrina ConitatyNo ratings yet

- M. Sc. ZoologyDocument28 pagesM. Sc. ZoologyUmang AggarwalNo ratings yet

- Farmer BookDocument154 pagesFarmer Bookআম্লান দত্তNo ratings yet

- HH - Understanding Stress - ArticleDocument6 pagesHH - Understanding Stress - ArticleAnil KumarNo ratings yet

- Sinc Selection Process 2019 UpdateDocument11 pagesSinc Selection Process 2019 UpdateAnil KumarNo ratings yet

- Importance of WildlifeDocument5 pagesImportance of Wildlifeapi-276711662100% (1)

- Physiology of Memory Compatibility Mode PDFDocument38 pagesPhysiology of Memory Compatibility Mode PDFAdson AlcantaraNo ratings yet

- Low-Voltage Bulk-Driven Amplifier Design and Its Application in I PDFDocument127 pagesLow-Voltage Bulk-Driven Amplifier Design and Its Application in I PDFAnil KumarNo ratings yet

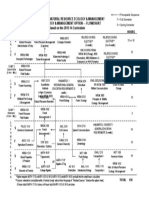

- B.Sc. Time Table Sept. 2020 PDFDocument2 pagesB.Sc. Time Table Sept. 2020 PDFAnil KumarNo ratings yet

- Department of Natural Resource Ecology & Management Forest Ecology & Management Option - Flowchart Based On The 2013-14 CurriculumDocument1 pageDepartment of Natural Resource Ecology & Management Forest Ecology & Management Option - Flowchart Based On The 2013-14 CurriculumAnil KumarNo ratings yet

- Toxicity of Heavy MetalsDocument19 pagesToxicity of Heavy MetalsAnil KumarNo ratings yet

- Unit 3 PDFDocument13 pagesUnit 3 PDFKamal Kishor PantNo ratings yet

- EIA ProcessDocument6 pagesEIA ProcessrohanNo ratings yet

- P AssignmentDocument8 pagesP AssignmentAnil KumarNo ratings yet

- Habitat and Habitat Selection: Theory, Tests, and ImplicationsDocument8 pagesHabitat and Habitat Selection: Theory, Tests, and ImplicationsAnil KumarNo ratings yet

- EiaDocument16 pagesEiaAudrey Patrick KallaNo ratings yet

- Department of Natural Resource Ecology & Management Forest Ecology & Management Option - Flowchart Based On The 2013-14 CurriculumDocument1 pageDepartment of Natural Resource Ecology & Management Forest Ecology & Management Option - Flowchart Based On The 2013-14 CurriculumAnil KumarNo ratings yet

- Question Papers Code's and Title of The Papers PDFDocument8 pagesQuestion Papers Code's and Title of The Papers PDFAnil KumarNo ratings yet

- 1018 Forest Genetic Resources Conservation and Management - Overview Concepts and Some Systematic Approaches - Vol. 1 PDFDocument115 pages1018 Forest Genetic Resources Conservation and Management - Overview Concepts and Some Systematic Approaches - Vol. 1 PDFHilda MartínezNo ratings yet

- Schizophrenia 2Document28 pagesSchizophrenia 2grinakisNo ratings yet

- Psychopharmacology Basics and Beyond Tolisano 2019Document109 pagesPsychopharmacology Basics and Beyond Tolisano 2019Anil Kumar100% (1)

- Principles Neuro PsychopharmacologyDocument11 pagesPrinciples Neuro PsychopharmacologyAnil KumarNo ratings yet

- PSC AssignmentDocument8 pagesPSC AssignmentAnil KumarNo ratings yet

- PSC AssignmentDocument8 pagesPSC AssignmentAnil KumarNo ratings yet

- Deep Learning - Creating MindsDocument2 pagesDeep Learning - Creating MindsSANEEV KUMAR DASNo ratings yet

- Logical Fallacies - PPT 1Document18 pagesLogical Fallacies - PPT 1Dalal Abed al hayNo ratings yet

- Complete Fs 2 Episodes For Learning PackageDocument40 pagesComplete Fs 2 Episodes For Learning PackageElah Grace Viajedor79% (14)

- Nancy, B.ed English (2015-'17)Document14 pagesNancy, B.ed English (2015-'17)Nancy Susan KurianNo ratings yet

- GMAT Pill E-BookDocument121 pagesGMAT Pill E-BookNeeleshk21No ratings yet

- Critical ThinkingDocument38 pagesCritical ThinkingSha SmileNo ratings yet

- Course Module EL. 107 Week 2Document22 pagesCourse Module EL. 107 Week 2Joevannie AceraNo ratings yet

- City Stars 4 PDFDocument153 pagesCity Stars 4 PDFVera0% (1)

- Considering Aspects of Pedagogy For Developing Technological LiteracyDocument16 pagesConsidering Aspects of Pedagogy For Developing Technological LiteracyjdakersNo ratings yet

- 6 Self Study Guide PrimaryDocument6 pages6 Self Study Guide PrimaryDayana Trejos BadillaNo ratings yet

- Thesis Chapter 1 Up To Chapter 5Document41 pagesThesis Chapter 1 Up To Chapter 5Recilda BaqueroNo ratings yet

- 1990 - Read - Baron - Environmentally Induced Positive Affect: Its Impact On Self Efficacy, Task Performance, Negotiation, and Conflict1Document17 pages1990 - Read - Baron - Environmentally Induced Positive Affect: Its Impact On Self Efficacy, Task Performance, Negotiation, and Conflict1Roxy Shira AdiNo ratings yet

- Annex 1.hypnosis and Trance Forms Iss7Document2 pagesAnnex 1.hypnosis and Trance Forms Iss7elliot0% (1)

- What Were Your Responsibilities and Duties While at Practicum?Document2 pagesWhat Were Your Responsibilities and Duties While at Practicum?Keith Jefferson DizonNo ratings yet

- Math Anxiety Level QuestionnaireDocument12 pagesMath Anxiety Level QuestionnaireHzui FS?No ratings yet

- Complete FCE - U2L2 - Essay Type 1Document2 pagesComplete FCE - U2L2 - Essay Type 1Julie NguyenNo ratings yet

- The Power of PresenceDocument10 pagesThe Power of PresenceheribertoNo ratings yet

- How To Pass Principal's TestDocument34 pagesHow To Pass Principal's TestHero-name-mo?No ratings yet

- Ethics in EducationDocument4 pagesEthics in EducationEl ghiate Ibtissame100% (1)

- SLO's: Alberta Education English Language Arts Program of Studies Grade K-6 A GuideDocument11 pagesSLO's: Alberta Education English Language Arts Program of Studies Grade K-6 A Guideapi-302822779No ratings yet

- Lesson 12Document8 pagesLesson 12Giselle Erasquin ClarNo ratings yet

- ReadingDocument3 pagesReadingLivia ViziteuNo ratings yet

- Curriculum of English As A Foreign LanguageDocument13 pagesCurriculum of English As A Foreign LanguageAfriani Indria PuspitaNo ratings yet

- Teaching Writing: Asma Al-SayeghDocument29 pagesTeaching Writing: Asma Al-SayeghSabira MuzaffarNo ratings yet

- ME EngRW 11 Q3 0201 - SG - Organizing Information Through A Brainstorming ListDocument12 pagesME EngRW 11 Q3 0201 - SG - Organizing Information Through A Brainstorming ListPrecious DivineNo ratings yet

- Working Agreement Canvas v1.24 (Scrum)Document1 pageWorking Agreement Canvas v1.24 (Scrum)mashpikNo ratings yet

- Project Management Interview Questions and AnswersDocument2 pagesProject Management Interview Questions and AnswersStewart Bimbola Obisesan100% (1)

- 745 NullDocument5 pages745 Nullhoney arguellesNo ratings yet

- Northern Mindanao Colleges, Inc.: Self-Learning Module For Principles of Marketing Quarter 1, Week 4Document6 pagesNorthern Mindanao Colleges, Inc.: Self-Learning Module For Principles of Marketing Quarter 1, Week 4Christopher Nanz Lagura CustanNo ratings yet

- Code SwitchingDocument6 pagesCode SwitchingMarion Shanne Pastor CorpuzNo ratings yet