Information Theory and Coding Techniques: IV B. Tech. - I Semester

Uploaded by Ravi Kumar

IV B. Tech. – I Semester

(19BT70407) Information Theory and Coding Techniques

(PE - 5)

| Int. Marks | Ext. Marks | Total Marks | L | T | P | C |
|---|---|---|---|---|---|---|
| 40 | 60 | 100 | 3 | - | - | 3 |

PRE-REQUISITES: Courses on Analog Communications and Digital Communications

COURSE DESCRIPTION:

Information theory; Channel capacity; Channel coding techniques – Linear block codes, Cyclic codes, Convolutional codes; Reed-Solomon and Turbo codes.

COURSE OBJECTIVES:

CEO1: To impart knowledge in information theory, coding, decoding and error control codes.

CEO2: To develop analytical, design and development skills in error coding techniques for prescribed requirements.

COURSE OUTCOMES: After successful completion of this course, the students will be able to:

CO1: Evaluate entropy for various source coding techniques.

CO2: Evaluate channel capacity for different Gaussian channels.

CO3: Analyze various error detection and correction codes to enable reliable data transmission.

CO4: Understand Reed-Solomon codes, interleaving and concatenated codes and their applications.

DETAILED SYLLABUS:

UNIT I – INTRODUCTION (10 periods)

Entropy: Discrete stationary sources, Markov sources, Entropy of a discrete random variable - Joint, conditional, relative entropy, Mutual information and conditional mutual information. Chain rules for entropy, relative entropy and mutual information, Differential entropy - Joint, relative, conditional differential entropy and Mutual information.

Lossless source coding: Uniquely decodable codes, Instantaneous codes, Kraft’s inequality, Optimal codes, Huffman code, Shannon’s Source Coding Theorem.

UNIT II – CHANNEL CAPACITY (9 periods)

Capacity computation for some simple channels, Channel Coding Theorem, Fano’s inequality and the converse to the Coding Theorem, Equality in the converse to the coding theorem, The joint source-channel coding theorem, The Gaussian channels - Capacity calculation for band-limited Gaussian channels, Parallel Gaussian channels, Capacity of channels with colored Gaussian noise.

UNIT III – CHANNEL CODING-1 (8 periods)

Linear Block Codes: Introduction to Linear block codes, Generator Matrix, Systematic Linear Block codes, Parity Check Matrix, Syndrome testing, Error correction, Decoder Implementation of Linear Block Codes, Error detecting and correcting capability of Linear Block codes.

UNIT IV – CHANNEL CODING-2 (9 periods)

Cyclic Codes: Algebraic Structure of Cyclic Codes, Binary Cyclic Code Properties, Encoding in Systematic Form, Well-Known Block Codes - Hamming Codes, Extended Golay Code, BCH Codes.

Convolutional Codes: Convolutional Encoding, Convolutional Encoder Representation, Formulation of the Convolutional Decoding Problem, Properties of Convolutional Codes, Sequential Decoding.

UNIT V – CHANNEL CODING-3 (9 periods)

Reed-Solomon Codes - Reed-Solomon Error Probability, Reed-Solomon Encoding, Reed-Solomon Decoding, Interleaving and Concatenated Codes - Block Interleaving, Convolutional Interleaving, Concatenated Codes. Coding and Interleaving Applied to the Compact Disc Digital Audio System - CIRC Encoding, CIRC Decoding.

Total Periods: 45

Topics of Self-study are provided in the lesson plan

TEXT BOOKS:

1. Thomas M. Cover and Joy A. Thomas, Elements of Information Theory, John Wiley & Sons, 1st Edition, 1999.

2. Bernard Sklar, “Digital Communications – Fundamentals and Applications”, Pearson Education, 2nd Edition, 2009.

REFERENCE BOOKS:

1. John G. Proakis, “Digital Communications”, McGraw-Hill Publication, 5th Edition, 2008.

2. Shu Lin and Daniel J. Costello, Jr., “Error Control Coding – Fundamentals and Applications”, Prentice Hall, 2nd Edition, 2002.

ADDITIONAL LEARNING RESOURCES:

https://nptel.ac.in/courses/117101053

IMPROVEMENTS OVER SVEC16 SYLLABUS:

1. Provided Additional Learning Resources.

2. Syllabus has been fine-tuned as per the lecture schedule.

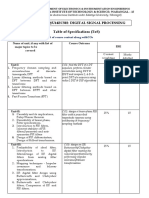

CO-PO-PSO Mapping Table

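PO1 to PO12: Program outcomes. PSO1 to PSO3: Program specific outcomes.

| Course outcome | PO1 | PO2 | PO3 | PO4 | PO5 | PO6 | PO7 | PO8 | PO9 | PO10 | PO11 | PO12 | PSO1 | PSO2 | PSO3 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| CO1 | 3 | 3 | - | 2 | - | - | - | - | - | - | - | - | - | 3 | - |
| CO2 | 3 | 3 | - | 2 | - | - | - | - | - | - | - | - | - | 3 | - |
| CO3 | 3 | 3 | 1 | 2 | - | - | - | - | - | - | - | - | - | 3 | - |
| CO4 | 3 | 2 | - | 2 | - | 1 | - | - | - | - | - | - | - | 3 | - |
| Average | 3 | 2.7 | 1 | 2 | - | 1 | - | - | - | - | - | - | - | 3 | - |
| Course Correlation Level | 3 | 3 | 1 | 2 | - | 1 | - | - | - | - | - | - | - | 3 | - |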

Correlation Level: 3-High; 2-Medium; 1-Low


SREE VIDYANIKETHAN ENGINEERING COLLEGE

(AUTONOMOUS)

SREE SAINATH NAGAR, A. RANGAMPET-517 102

Department of Electronics and Communication Engineering

LESSON PLAN

Name of the Course: (19BT70407) Information Theory and Coding Techniques

Class & Semester: IV B. Tech (ECE) – I Semester

Name(s) of the faculty Member(s): Dr.V.R.Anitha

UNIT – I: INTRODUCTION

| S. No. | Topic | No. of periods | Book(s) followed |
|---|---|---|---|
| 1 | Entropy: Discrete stationary sources, Markov sources | 1 | T1 |
| 2 | Entropy of a discrete random variable - Joint, conditional, relative entropy, Mutual information and conditional mutual information | 2 | T1 |
| 3 | Chain rules for entropy, relative entropy and mutual information | 1 | T1 |
| 4 | Differential entropy - Joint, relative, conditional differential entropy, Mutual information | 1 | T1 |
| 5 | Lossless source coding: Uniquely decodable codes | 1 | T1 |
| 6 | Instantaneous codes | 1 | T1 |
| 7 | Kraft’s inequality, Optimal codes | 1 | T1 |
| 8 | Huffman code | 1 | T1 |
| 9 | Shannon’s Source Coding Theorem | 1 | T1, R1 |

Topics for self study: The entropy power inequality and the Brunn–Minkowski inequality, Lempel-Ziv coding, Arithmetic coding.

Total periods required: 10

UNIT – II: CHANNEL CAPACITY

| S. No. | Topic | No. of periods | Book(s) followed |
|---|---|---|---|
| 10 | Capacity computation for some simple channels, Channel Coding Theorem | 1 | T1 |
| 11 | Fano’s inequality and the converse to the Coding Theorem | 2 | T1 |
| 12 | Equality in the converse to the coding theorem | 1 | T1 |
| 13 | The joint source-channel coding theorem | 1 | T1 |
| 14 | The Gaussian channels - Capacity calculation for band-limited Gaussian channels | 2 | T1 |
| 15 | Parallel Gaussian channels | 1 | T1 |
| 16 | Capacity of channels with colored Gaussian noise | 1 | T1 |

Topics for self study: Rate distortion theory, Arimoto-Blahut algorithm.

Total periods required: 09

UNIT – III: CHANNEL CODING-1

| S. No. | Topic | No. of periods | Book(s) followed |
|---|---|---|---|
| 17 | Linear Block Codes: Introduction to Linear block codes, Generator Matrix | 1 | T2 |
| 18 | Systematic Linear Block codes | 1 | T2 |
| 19 | Parity Check Matrix | 1 | T2 |
| 20 | Syndrome testing | 1 | T2 |
| 21 | Error correction | 1 | T2 |
| 22 | Decoder implementation for linear block codes | 1 | T2 |
| 23 | Error detecting and correcting capability of Linear Block codes | 2 | T2 |

Topics for self study: Error probability after decoding, Structured Sequences, Usefulness of the Standard Array.

Total periods required: 08

UNIT – IV: CHANNEL CODING-2

| S. No. | Topic | No. of periods | Book(s) followed |
|---|---|---|---|
| 24 | Cyclic Codes: Algebraic Structure of Cyclic Codes | 1 | T2 |
| 25 | Binary Cyclic Code Properties | 1 | T2 |
| 26 | Encoding in Systematic Form | 1 | T2 |
| 27 | Well-Known Block Codes - Hamming Codes | 1 | T2 |
| 28 | Extended Golay Code, BCH Codes | 1 | T2 |
| 29 | Convolutional Codes: Convolutional Encoding, Convolutional Encoder Representation | 1 | T2 |
| 30 | Formulation of the Convolutional Decoding Problem, Properties of Convolutional Codes | 1 | T2 |
| 31 | Sequential Decoding | 1 | T2 |
| 32 | Feedback Decoding, Application of Viterbi and sequential decoding | 1 | T2 |

Topics for self study: Trellis-Coded Modulation - The Idea Behind Trellis-Coded Modulation (TCM), TCM Encoding, TCM Decoding.

Total periods required: 09

UNIT – V: CHANNEL CODING-3

| S. No. | Topic | No. of periods | Book(s) followed |
|---|---|---|---|
| 33 | Reed-Solomon Codes - Reed-Solomon Error Probability | 1 | T2 |
| 34 | Reed-Solomon Encoding | 1 | T2 |
| 35 | Reed-Solomon Decoding | 1 | T2 |
| 36 | Interleaving and Concatenated Codes - Block Interleaving | 1 | T2 |
| 37 | Convolutional Interleaving | 1 | T2 |
| 38 | Concatenated Codes | 1 | T2 |
| 39 | Coding and Interleaving Applied to the Compact Disc Digital Audio System - CIRC Encoding | 2 | T2 |
| 40 | CIRC Decoding | 1 | T2 |

Topics for self study: Applications of Reed-Solomon codes in deep space telecommunications.

Total periods required: 09

Grand total periods required: 45

TEXT BOOKS:

T1. Thomas M. Cover and Joy A. Thomas, Elements of Information Theory, John Wiley & Sons, 1st Edition, 1999.

T2. Bernard Sklar, “Digital Communications – Fundamentals and Applications”, Pearson Education, 2nd Edition, 2009.

REFERENCE BOOKS:

R1. John G. Proakis, “Digital Communications”, McGraw-Hill Publication, 5th Edition, 2008.

R2. Shu Lin and Daniel J. Costello, Jr., “Error Control Coding – Fundamentals and Applications”, Prentice Hall, 2nd Edition, 2002.

CODE No.:19BT70407 SVEC-19

SREE VIDYANIKETHAN ENGINEERING COLLEGE

(An Autonomous Institution, Affiliated to JNTUA, Ananthapuramu)

IV B.Tech I Semester (SVEC-19) Regular Examinations

INFORMATION THEORY AND CODING TECHNIQUES

[Electronics and Communication Engineering]

Time: 3 hours Max. Marks: 60

Answer One Question from each Unit

All questions carry equal marks

1. a) Explain the source coding theorem and develop an equation for efficiency. [6 Marks, L2, CO1, PO2]

b) Consider the set of all densities with fixed pairwise marginals $f_{X_1,X_2}(x_1,x_2), f_{X_2,X_3}(x_2,x_3), \ldots, f_{X_{n-1},X_n}(x_{n-1},x_n)$. Show that the maximum entropy process with these marginals is the first-order Markov process with these marginals. [6 Marks, L4, CO1, PO2]

(OR)

2. a) Define the terms (i) Entropy (ii) Average information (iii) Mutual information. [6 Marks, L1, CO1, PO1]

b) Prove that: [6 Marks, L3, CO1, PO2]

i. H(X,Y) = H(X) + H(Y)

ii. I(X;Y) = H(X) + H(Y) - H(X,Y)
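As a numerical sanity check on the identities in question 2(b), the sketch below evaluates them on a small joint distribution. The pmf values are hypothetical and chosen only for illustration. The check relies on two standard facts: the chain rule H(X,Y) = H(X) + H(Y|X) holds for any joint distribution, while H(X,Y) = H(X) + H(Y) holds when X and Y are independent.

```python
from math import log2, isclose

def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)

# Hypothetical joint pmf p(x, y) on a 2x2 alphabet (illustrative values only).
joint = {(0, 0): 0.5, (0, 1): 0.25, (1, 0): 0.125, (1, 1): 0.125}

# Marginals p(x) and p(y).
px, py = {}, {}
for (x, y), p in joint.items():
    px[x] = px.get(x, 0.0) + p
    py[y] = py.get(y, 0.0) + p

H_X = entropy(px.values())
H_Y = entropy(py.values())
H_XY = entropy(joint.values())

# Conditional entropy H(Y|X) and mutual information I(X;Y) from their definitions.
H_Y_given_X = -sum(p * log2(p / px[x]) for (x, y), p in joint.items() if p > 0)
I_XY = sum(p * log2(p / (px[x] * py[y])) for (x, y), p in joint.items() if p > 0)

# Chain rule: holds for any joint distribution.
chain_rule_holds = isclose(H_XY, H_X + H_Y_given_X)
# Identity (ii): I(X;Y) = H(X) + H(Y) - H(X,Y).
mi_identity_holds = isclose(I_XY, H_X + H_Y - H_XY)

# Identity (i) holds for the product distribution built from the marginals.
indep = {(x, y): px[x] * py[y] for x in px for y in py}
additive_when_independent = isclose(entropy(indep.values()), H_X + H_Y)

print(H_X, H_Y, H_XY, I_XY)
```

For this dependent example I(X;Y) is strictly positive, so H(X,Y) is smaller than H(X) + H(Y); the two sides agree only for the product distribution.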

3. a) Describe Shannon’s channel coding theorem for memoryless channels. [6 Marks, L2, CO2, PO1]

b) Derive the Shannon channel coding theorem and, for a system with a bandwidth of 5 kHz and an SNR of 28 dB at the input to the receiver, find its information-carrying capacity. [6 Marks, L4, CO2, PO3]

(OR)

4. a) Derive the capacity of channels with colored Gaussian noise. [6 Marks, L4, CO3, PO2]

b) Derive the channel capacity for a water-filling channel. [6 Marks, L4, CO3, PO3]
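The arithmetic in question 3(b) can be checked with a short sketch. It assumes the band-limited AWGN capacity formula C = B log2(1 + SNR) and that the 28 dB figure is a power ratio; the function name `shannon_capacity` is illustrative and not taken from the course material.

```python
from math import log2

def shannon_capacity(bandwidth_hz, snr_db):
    """Capacity in bit/s of a band-limited AWGN channel, C = B * log2(1 + SNR)."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return bandwidth_hz * log2(1 + snr_linear)

# Values from question 3(b): 5 kHz bandwidth, 28 dB SNR.
capacity = shannon_capacity(5_000, 28)
print(f"{capacity / 1000:.2f} kbit/s")
```

With these inputs the linear SNR is about 631, which gives a capacity of roughly 46.5 kbit/s.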

5. a) Explain briefly error detection and correction of linear block codes for communications. [6 Marks, L2, CO1, PO1]

b) The generator matrix of a (7,4) block code is [6 Marks, L4, CO3, PO4]

G = 0 0 0 1 0 1 1
    0 0 1 0 1 1 0
    0 1 0 0 1 1 1
    1 0 0 0 1 0 1

(i) Determine the parity-check matrix.

(ii) Determine the maximum weight of the code.

(OR)

6. Design the syndrome calculation circuit diagram for an (n, k) linear block code. [12 Marks, L3, CO4, PO3]
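For the generator matrix in question 5(b), one way to cross-check a hand calculation is the sketch below. It assumes the standard construction H = [P^T | I] for a systematic generator G = [I | P], and reorders the rows of G to reach that form, which does not change the code. The helper name `encode` is illustrative.

```python
from itertools import product

# Generator matrix from question 5(b), rows as given in the paper.
G = [
    [0, 0, 0, 1, 0, 1, 1],
    [0, 0, 1, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 1, 1],
    [1, 0, 0, 0, 1, 0, 1],
]
k, n = len(G), len(G[0])

# The first k columns are a row-permuted identity, so sorting the rows in
# descending order yields the systematic form [I_k | P] of the same code.
G_sys = sorted(G, reverse=True)
assert [row[:k] for row in G_sys] == [[int(i == j) for j in range(k)] for i in range(k)]

# Parity part P, then H = [P^T | I_(n-k)].
P = [row[k:] for row in G_sys]
H = [[P[i][j] for i in range(k)] + [int(j == c) for c in range(n - k)]
     for j in range(n - k)]

def encode(msg, gen):
    """Multiply a message row vector by a generator matrix over GF(2)."""
    return [sum(m * g for m, g in zip(msg, col)) % 2 for col in zip(*gen)]

# Enumerate all 2^k codewords and confirm each has zero syndrome (c * H^T = 0).
codewords = [encode(m, G) for m in product([0, 1], repeat=k)]
syndromes_zero = all(
    sum(c * h for c, h in zip(cw, row)) % 2 == 0 for cw in codewords for row in H
)

weights = sorted(sum(cw) for cw in codewords)
min_weight = weights[1]   # smallest nonzero codeword weight
max_weight = weights[-1]  # largest codeword weight

for row in H:
    print(row)
print(min_weight, max_weight)
```

Exhaustive enumeration is practical here because the code has only 16 codewords; it reports both the smallest nonzero weight and the largest weight.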

7. a) Design a feedback shift register encoder for an (8, 5) cyclic code with a generator in systematic form. [6 Marks, L4, CO4, PO3]

b) Explain the Viterbi algorithm with an example. [6 Marks, L3, CO1, PO1]

(OR)

8. a) Describe the decoding procedure for BCH codes. [4 Marks, L1, CO1, PO2]

b) Discuss some practical applications of convolutional codes. [8 Marks, L2, CO1, PO1]

9. Design a (7,3) RS decoder for a received vector R = 100001101111010110111. [12 Marks, L3, CO4, PO3]

(OR)

10. a) Apply Reed-Solomon codes in encoding and decoding. [6 Marks, L3, CO3, PO6]

b) What is a burst error? How can these types of errors be corrected? [6 Marks, L1, CO1, PO1]


Panel of Examiners

For Question Paper Setting

INFORMATION THEORY AND CODING TECHNIQUES (19BT60406)

III B. Tech. - II Semester

(Electronics and Communication Engineering)

| Sl. No. | Name and Address | Mobile Number and Email-ID |
|---|---|---|
| 1 | Dr. P. Narahari Sastry, Associate Professor, Department of ECE, Chaitanya Bharathi Institute of Technology, Gandipet, Hyderabad - 500075. | Mobile: 9948397802, Email: ananditahari@yahoo.com |
| 2 | Dr. Gowri Shankar Reddy, Assistant Professor, Department of ECE, S V U College of Engineering, S V University, Tirupati - 517 502. | Mobile: 8686677877, Email: gowri.durgam@gmail.com |
| 3 | Dr. K. Suresh Reddy, Professor & Head, Department of ECE, G. Pulla Reddy Engineering College, Kurnool - 518007. | Mobile: 9866178937, Email: sureshkatam@gmail.com |
| 4 | Dr. G. Sasi Bhushana Rao, Professor, Department of ECE, Andhra University College of Engineering, Visakhapatnam - 530003. | Mobile: 9849747131, Email: sasigps@gmail.com |
| 5 | Dr. M. S. S. Rukmini, Professor, Department of ECE, Vignan University, Vadlamudi, Guntur - 522213. | Mobile: 98662 81571, Email: mssrukmini@gmail.com |
| 6 | Dr. T. Ramasree, Professor, Dept. of ECE, SVU College of Engineering, Tirupati - 517502. | Mobile: 9346465050, Email: Rama.jaypee@gmail.com |

Department of Electronics and Communication Engineering

Department of Electronics and Communication Engineering

You might also like

- IES - Electronics Engineering - Signals and SystemsDocument70 pagesIES - Electronics Engineering - Signals and SystemsRakesh Sharma100% (2)

- Isacco Arnaldi - Design of Sigma-Delta Converters in MATLAB® - Simulink®-Springer International Publishing (2019) PDFDocument271 pagesIsacco Arnaldi - Design of Sigma-Delta Converters in MATLAB® - Simulink®-Springer International Publishing (2019) PDFRaúl Choque100% (4)

- Information Theory and CodingDocument10 pagesInformation Theory and CodingThe Anonymous oneNo ratings yet

- VI Sem SyllabusDocument12 pagesVI Sem Syllabusharish solpureNo ratings yet

- ECT306 - Ktu QbankDocument10 pagesECT306 - Ktu Qbankamithtitus2003No ratings yet

- Final Year: Electronics and Telecommunication Engineering: Semester - ViiiDocument13 pagesFinal Year: Electronics and Telecommunication Engineering: Semester - ViiiYeslin SequeiraNo ratings yet

- 3rd Sem SyllabusDocument3 pages3rd Sem Syllabuskundan kumarNo ratings yet

- UNIT 1 and 2Document37 pagesUNIT 1 and 2meghwalarjun75No ratings yet

- Be CifDocument3 pagesBe CifVratikaNo ratings yet

- ECE3001 ACS SyllabusDocument4 pagesECE3001 ACS Syllabusvenki iyreNo ratings yet

- BOS Final - 4 Sem - 18EC42Document4 pagesBOS Final - 4 Sem - 18EC42‡ ‡AnuRaG‡‡No ratings yet

- ECT305 - SyllabusDocument11 pagesECT305 - SyllabusShanu NNo ratings yet

- Analog Digital CommunicationDocument3 pagesAnalog Digital CommunicationHarsha M VNo ratings yet

- Course Description:: 4. Reference BooksDocument4 pagesCourse Description:: 4. Reference BooksRUDRESH PRATAP SINGHNo ratings yet

- Course Name: Digital Signal Processing Course Code: EE 605A Credit: 3Document6 pagesCourse Name: Digital Signal Processing Course Code: EE 605A Credit: 3nspNo ratings yet

- Information Theory and CodingDocument8 pagesInformation Theory and CodingRavi_Teja_44970% (1)

- ECE - EEE - F311 Communication Systems - Handout - 03aug23Document4 pagesECE - EEE - F311 Communication Systems - Handout - 03aug23f20202449No ratings yet

- AD&A Lab ManualDocument37 pagesAD&A Lab ManualM SarithaNo ratings yet

- Ece4007 Information-Theory-And-Coding Eth 1.1 49 Ece4007Document3 pagesEce4007 Information-Theory-And-Coding Eth 1.1 49 Ece4007Santosh KumarNo ratings yet

- Coding Theory and PracticeDocument3 pagesCoding Theory and PracticeAvinash BaldiNo ratings yet

- ECE4007 - Information Theory and Coding - 24.10.17Document3 pagesECE4007 - Information Theory and Coding - 24.10.17Hello WorldNo ratings yet

- Ece-V-Information Theory & Coding (10ec55) - NotesDocument217 pagesEce-V-Information Theory & Coding (10ec55) - NotesPrashanth Kumar0% (1)

- Syllabus 4th SemDocument12 pagesSyllabus 4th Semwanab41931No ratings yet

- EC401 Information Theory & CodingDocument2 pagesEC401 Information Theory & CodingPavithra A BNo ratings yet

- DcsDocument4 pagesDcsSai AkshayNo ratings yet

- Curricula & Syllabi: Bachelor of TechnologyDocument12 pagesCurricula & Syllabi: Bachelor of TechnologyPranjal DubeyNo ratings yet

- ECT453 - Error Control Codes SyllabusDocument9 pagesECT453 - Error Control Codes Syllabuslakshmivs23No ratings yet

- Info TheoryDocument3 pagesInfo TheoryRishabh ShahNo ratings yet

- EC401 Information Theory & CodingDocument2 pagesEC401 Information Theory & Codingakhil babu0% (1)

- ECE4007 Information-Theory-And-Coding ETH 1 AC40Document3 pagesECE4007 Information-Theory-And-Coding ETH 1 AC40harshitNo ratings yet

- EE301Document6 pagesEE301savithaNo ratings yet

- Gujarat Technological University: Electronics (10) & Electronics and Communication Engineering (11) SUBJECT CODE: 2161001Document3 pagesGujarat Technological University: Electronics (10) & Electronics and Communication Engineering (11) SUBJECT CODE: 2161001MUSICMG MusicMgNo ratings yet

- UntitledDocument71 pagesUntitled203005 ANANTHIKA MNo ratings yet

- Antennas and Radiation Mechanism of Various Antennas and Antenna ArraysDocument11 pagesAntennas and Radiation Mechanism of Various Antennas and Antenna ArrayssridharchandrasekarNo ratings yet

- ECC15: Digital Signal ProcessingDocument7 pagesECC15: Digital Signal ProcessingPragyadityaNo ratings yet

- Cpu CaDocument6 pagesCpu CaHari YeligetiNo ratings yet

- Ktunotes - in EC302 Digital CommunicationDocument3 pagesKtunotes - in EC302 Digital CommunicationAravind R KrishnanNo ratings yet

- Syllabus 2021-22 (Anurag)Document18 pagesSyllabus 2021-22 (Anurag)dabas7355No ratings yet

- Data CommunicationDocument4 pagesData CommunicationRamachandra ReddyNo ratings yet

- Prerequisites Course Objectives: Department of Technical Education Board of Technical Examinations, BengaluruDocument9 pagesPrerequisites Course Objectives: Department of Technical Education Board of Technical Examinations, BengaluruRakshithNo ratings yet

- Section-A: MDU B.Tech Syllabus (ECE) - II YearDocument2 pagesSection-A: MDU B.Tech Syllabus (ECE) - II YearBindia HandaNo ratings yet

- Ilovepdf MergedDocument163 pagesIlovepdf MergedShreya Jain 21BEC0108No ratings yet

- Lab Oriented Theory CourseDocument2 pagesLab Oriented Theory CourseBENAZIR BEGAM RNo ratings yet

- Syll 6Document7 pagesSyll 6BT21EE017 Gulshan RajNo ratings yet

- DC15 16Document3 pagesDC15 16D21L243 -Hari Vignesh S KNo ratings yet

- EC302 Digital CommunicationDocument3 pagesEC302 Digital CommunicationnikuNo ratings yet

- ME (Updated)Document2 pagesME (Updated)AanjanayshatmaNo ratings yet

- Syllabus OrganizedDocument9 pagesSyllabus OrganizedDatta RajendraNo ratings yet

- DC SyllabusDocument4 pagesDC SyllabusShreyansh AgarwalNo ratings yet

- Digital Communication SyllabusDocument4 pagesDigital Communication Syllabusnimish kambleNo ratings yet

- 4ECE SyllabusDocument15 pages4ECE Syllabussk788977No ratings yet

- Gujarat Technological University: Page 1 of 3Document3 pagesGujarat Technological University: Page 1 of 3ALL ÎÑ ÔÑÈNo ratings yet

- Introduction To Algorithms, 2 Edition, The MIT Press, 2001Document12 pagesIntroduction To Algorithms, 2 Edition, The MIT Press, 2001lokesh kumarNo ratings yet

- GSM Basics Chapter7 DetailDocument71 pagesGSM Basics Chapter7 DetailGhulam RabbaniNo ratings yet

- ECE2004 - TLF 19.3.19 With CO, COBDocument3 pagesECE2004 - TLF 19.3.19 With CO, COBNanduNo ratings yet

- Ece-V-Information Theory & Coding (10ec55) - NotesDocument199 pagesEce-V-Information Theory & Coding (10ec55) - NotesTapasRoutNo ratings yet

- Professional Elective - IDocument10 pagesProfessional Elective - IsridharchandrasekarNo ratings yet

- Eeng360 Fall2010 2011 Course DescriptDocument2 pagesEeng360 Fall2010 2011 Course DescriptEdmond NurellariNo ratings yet

- Dit University, Dehradun: Semester I 2017 - 2018 Course HandoutDocument2 pagesDit University, Dehradun: Semester I 2017 - 2018 Course HandoutNarendra KumarNo ratings yet

- TV & Video Engineer's Reference BookFrom EverandTV & Video Engineer's Reference BookK G JacksonRating: 2.5 out of 5 stars2.5/5 (2)

- Microwave System Engineering PrinciplesFrom EverandMicrowave System Engineering PrinciplesRating: 4.5 out of 5 stars4.5/5 (3)

- Experiment: Auto Correlation and Cross Correlation: y (T) X (T) H (T)Document5 pagesExperiment: Auto Correlation and Cross Correlation: y (T) X (T) H (T)Ravi KumarNo ratings yet

- 9ec304 75Document54 pages9ec304 75Ravi KumarNo ratings yet

- Analog Communications LabDocument54 pagesAnalog Communications LabjaswanthjNo ratings yet

- Color Image Processing: Prepared by T. Ravi Kumar NaiduDocument60 pagesColor Image Processing: Prepared by T. Ravi Kumar NaiduRavi KumarNo ratings yet

- 9 FET Amplifier PDFDocument21 pages9 FET Amplifier PDFabdullah mohammedNo ratings yet

- Mti and Pulsed DopplerDocument33 pagesMti and Pulsed DopplerWaqar Shaikh67% (3)

- Recursive Least-Squares Algorithm (RLS) : September 30, 2020Document17 pagesRecursive Least-Squares Algorithm (RLS) : September 30, 2020jaffaNo ratings yet

- Microfono Yaesu MD-200A8XDocument8 pagesMicrofono Yaesu MD-200A8XGuido B. Silva EricksenNo ratings yet

- Antmix Manual Eng Ita Deu Lo PDFDocument57 pagesAntmix Manual Eng Ita Deu Lo PDFMarco DefrancescoNo ratings yet

- Selected Answers CH 9-13Document15 pagesSelected Answers CH 9-13VibhuKumarNo ratings yet

- 2011 - IEEE - Image Quality Assessment by Separately Evaluating Detail Losses and Additive ImpairmentsDocument15 pages2011 - IEEE - Image Quality Assessment by Separately Evaluating Detail Losses and Additive Impairmentsarg.xuxuNo ratings yet

- Fast Fourier Transforms Sidney BurrusDocument254 pagesFast Fourier Transforms Sidney BurrusMayam AyoNo ratings yet

- DSP Course Outline 2023Document2 pagesDSP Course Outline 2023perry ofosuNo ratings yet

- Excess Loop Delay in Continuous-Time Delta-Sigma Modulators: James A. Cherry,, and W. Martin SnelgroveDocument14 pagesExcess Loop Delay in Continuous-Time Delta-Sigma Modulators: James A. Cherry,, and W. Martin Snelgrovebhargav nagarajuNo ratings yet

- Impedance MatchingDocument7 pagesImpedance MatchingchinchouNo ratings yet

- Jensen Transformers 1977Document114 pagesJensen Transformers 1977Hugues ValotNo ratings yet

- DSP Processors and ArchitecturesDocument1 pageDSP Processors and ArchitecturesK S RajasekharNo ratings yet

- Maximally Decimated Filter BanksDocument21 pagesMaximally Decimated Filter BanksShabeeb Ali OruvangaraNo ratings yet

- Acoustic Noise CancellationDocument21 pagesAcoustic Noise CancellationNESEGANo ratings yet

- 400W/500W X-Band Hubmount Sspa/ SSPB Super Compact TT SeriesDocument2 pages400W/500W X-Band Hubmount Sspa/ SSPB Super Compact TT SeriesWai YanNo ratings yet

- Analog-to-Digital ConverterDocument21 pagesAnalog-to-Digital ConverterThành TrườngNo ratings yet

- Digital Audio EffectsDocument47 pagesDigital Audio Effectscap2010100% (1)

- Fundamentals of Spatial Filtering: Outline of The LectureDocument6 pagesFundamentals of Spatial Filtering: Outline of The LectureSyedaNo ratings yet

- AdgenpsfDocument75 pagesAdgenpsfTsdfsd YfgdfgNo ratings yet

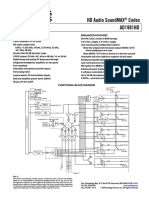

- HD Audio Soundmax Codec Ad1981Hd: Features Enhanced FeaturesDocument16 pagesHD Audio Soundmax Codec Ad1981Hd: Features Enhanced FeatureseloigneeNo ratings yet

- OSD SVC 300 - 300W Impedance Matching Decora Style Rotary Speaker Volume Control KitDocument2 pagesOSD SVC 300 - 300W Impedance Matching Decora Style Rotary Speaker Volume Control KitMohammad FamuNo ratings yet

- Reducing Artificial Reverberation Algorithm Requirements Using Time-Variant Feedback Delay NetworksDocument130 pagesReducing Artificial Reverberation Algorithm Requirements Using Time-Variant Feedback Delay NetworksPhilip KentNo ratings yet

- PDF of Digital Signal Processing Ramesh Babu 2 PDFDocument2 pagesPDF of Digital Signal Processing Ramesh Babu 2 PDFMani RockyNo ratings yet

- Leds-C4 The One 2014Document928 pagesLeds-C4 The One 2014VEMATELNo ratings yet

- Embedded System Lab Question PaperDocument4 pagesEmbedded System Lab Question PapervickyskarthikNo ratings yet

- Col 10550Document254 pagesCol 10550adrianl136378No ratings yet

- Chapter 1Document48 pagesChapter 1CharleneKronstedtNo ratings yet