International Journal of Information Technology (IJIT) – Volume 7 Issue 6, Nov - Dec 2021

RESEARCH ARTICLE OPEN ACCESS

APPLYING EFFECTIVE ASSESSMENT INDICATORS IN ONLINE LEARNING

Yew Kee Wong

School of Information Engineering, HuangHuai University, Henan - China.

ABSTRACT

The assessment outcome of many online learning methods is based on the number of correct answers, which is then converted into one final mark or grade. We discovered that, when using online learning, we can extract more detailed information from the learning process, and that this information is useful for the assessor in planning an effective and efficient learning model for the learner. Statistical analysis is an important part of an assessment when evaluating the online learning outcome. The assessment indicators include the difficulty level of the question, the time spent in answering and the variation in choosing an answer. In this paper we present the findings on these assessment indicators and how they can improve the way the learner is assessed when using an online learning system. We developed a statistical analysis algorithm which can assess online learning outcomes more effectively using quantifiable measurements. A number of examples of using this statistical analysis algorithm are presented.

Keywords: Online Learning, Artificial Intelligence, Statistical Analysis Algorithm, Assessment Indicator

I. INTRODUCTION

Many online learning assessment systems that use a multiple choice approach rely on the number of correct answers to judge learners' understanding of what they have learned [1]. We have carried out various experiments on these quantifiable measurements (assessment indicators) in the Mathematics and English subjects with different learner groups. With these assessment indicators, assessors and learners can easily assess the online learning performance [2].

We carried out experiments with 100 voluntary online learners from 8 different countries: USA, Canada, United Kingdom, Malaysia, Singapore, Philippines, Thailand and Indonesia. The learners were between 9 and 10 years of age. The experiment questions were multiple choice questions from the UK National Mathematics Curriculum, Year 5, Geometry topic. All 100 learners speak and write fluent English and have knowledge of this Geometry topic.

II. ASSESSMENT INDICATORS AND STATISTICAL ANALYSIS

In this research, we have identified 3 critical assessment indicators which can influence the learners’

learning progress and understanding. These 3 assessment indicators have inter-relationships with the

underlying final mark from the assessment [3]. At the end of the assessment, the artificial intelligence

(AI) engine will analyse all the statistical information from these 3 indicators and provide a

recommendation for the assessor and learner.

(1). Difficulty Level (measured by the complexity of the questions [4]).

Each question has a difficulty level embedded. For example, take the Addition topic from the Mathematics subject. We can assign a Difficulty level to the following 3 questions depending on their complexity: 4 + 3 = ? (Low Difficulty), 755 + 958 = ? (Medium Difficulty) and 7,431,398,214 + 32,883,295 = ? (High Difficulty).

| Level Terms | Quantitative Measurement |
| --- | --- |
| High (hardest) | 3 |
| Medium | 2 |
| Low (easiest) | 1 |

ISSN: 2454-5414 www.ijitjournal.org Page 1


(2). Understanding Level (measured by the time from when the question appears to submission).

Each question has an understanding level embedded. For example, assume there is a question with Level Term High (from a to b), where a = 3 seconds and b = 5 seconds. In this example, if the learner submits the answer between 3 and 5 seconds after the question appeared, then the answer is assigned 2 points for the Understanding indicator.

| Level Terms | Quantitative Measurement |
| --- | --- |
| High (from a to b), fastest | 2 |
| Medium (from b to c) | 1 |
| Low (from c onwards), slowest | 0 |
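The time bands above can be sketched as a small Python function. This is only an illustration under stated assumptions, not the system used in the experiments: the function name `understanding_points` is hypothetical, the boundary value b is counted as High, and answers submitted faster than a (a case the table does not cover) are assumed to score like the High band. The value c = 8 seconds in the usage note is likewise hypothetical, since the paper gives example values only for a and b.

```python
def understanding_points(elapsed_seconds: float, a: float, b: float, c: float) -> int:
    """Map the time taken to answer onto Understanding points (2, 1 or 0)."""
    if not (0 <= a < b < c):
        raise ValueError("thresholds must satisfy 0 <= a < b < c")
    if elapsed_seconds < 0:
        raise ValueError("elapsed time cannot be negative")
    # Assumption: answers faster than a are scored like the High band,
    # because the table does not say how to treat them.
    if elapsed_seconds <= b:
        return 2  # High: from a to b (fastest)
    if elapsed_seconds < c:
        return 1  # Medium: from b to c
    return 0      # Low: from c onwards (slowest)
```

With a = 3, b = 5 and the hypothetical c = 8, a 4-second answer scores 2 points, a 6-second answer scores 1 point, and a 10-second answer scores 0 points.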

(3). Confident Level (variation in choosing an answer before submission).

For each question, we capture the behaviour of the learner when choosing an answer before submission [5]. For example, most learners who are confident and prudent in choosing the correct answer will submit the answer once decided, without making any changes. If the learner did not make any changes when answering this example question, then the answer is assigned 2 points for the Confident indicator.

| Level Terms | Quantitative Measurement |
| --- | --- |
| High (no change on first pick) | 2 |
| Medium (one change) | 1 |
| Low (two changes or more) | 0 |
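As a rough illustration of the Confident indicator, the sketch below counts answer changes from the sequence of options a learner selected before submitting, then maps the count to points. The function names `count_changes` and `confident_points` are hypothetical, and the sketch assumes that a change is any selection that differs from the one immediately before it; the paper does not specify how changes are recorded.

```python
from typing import Sequence


def count_changes(selections: Sequence[str]) -> int:
    """Count how many times the learner switched to a different option."""
    # Assumption: re-selecting the same option does not count as a change.
    return sum(1 for prev, cur in zip(selections, selections[1:]) if cur != prev)


def confident_points(answer_changes: int) -> int:
    """Map the number of answer changes onto Confident points (2, 1 or 0)."""
    if answer_changes < 0:
        raise ValueError("number of changes cannot be negative")
    if answer_changes == 0:
        return 2  # High: no change on first pick
    if answer_changes == 1:
        return 1  # Medium: one change
    return 0      # Low: two changes or more
```

For example, a learner who picks "A" and then switches to "C" before submitting has made one change and receives 1 point.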

In this research, we have carried out multiple experiments to evaluate the use of assessment indicators and the statistical information generated when the learner performs the assessment [6][7]. We conducted 3 detailed experiments, and the outcomes show promising results for the learners' overall learning performance. Below are summaries of the 3 experiments, which we conducted on 100 voluntary online learners. All marks and indicators were converted to percentages (%) prior to further analysis by the artificial intelligence engine.
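The percentage conversion can be illustrated with a short sketch. It assumes the maxima used in the experiments (20 marks, and 40 points for each indicator from 20 questions x 2 points); the helper name `to_percent` and the learner scores shown are hypothetical and are not taken from the experimental data.

```python
def to_percent(score: float, maximum: float) -> float:
    """Convert a raw score out of `maximum` into a percentage."""
    if maximum <= 0:
        raise ValueError("maximum must be positive")
    if not 0 <= score <= maximum:
        raise ValueError("score must lie between 0 and maximum")
    return 100.0 * score / maximum


# Hypothetical raw result for one learner: (score, maximum) per measure.
raw = {"marks": (15, 20), "understanding": (28, 40), "confident": (36, 40)}
percentages = {name: to_percent(s, m) for name, (s, m) in raw.items()}
```

Putting all three measures on the same 0 to 100 scale is what allows a single set of threshold rules to be applied to them.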

2.1. Experiments

Experiment 1:

20 Multiple Choice Questions (Difficulty level : 1)

Type of questions : Year 5 - UK National Mathematics Curriculum (Topic: Geometry)

Standard marking scheme : Lowest (0 / 20) and Highest (20 / 20) {marks}

Understanding level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Confident level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Number of learners involved in this experiment : 100

Summary Results :

◦ For the 10% of learners who could not move on to Experiment 2 on the 1st attempt, the majority had an Understanding level and/or Confident level under 50%. These learners were required to make a 2nd attempt.

◦ On the 2nd attempt, all of the remaining 10% of learners successfully moved on to Experiment 2; both indicators showed a major improvement and were above 50%.

◦ The 90% of learners who moved on to Experiment 2 on the 1st attempt showed promising results of over 50% in both Understanding level and Confident level.

Experiment 2:

20 Multiple Choice Questions (Difficulty level : 2)

Type of questions : Year 5 - UK National Mathematics Curriculum (Topic: Geometry)

Standard marking scheme : Lowest (0 / 20) and Highest (20 / 20) {marks}

Understanding level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Confidence level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Number of learners involved in this experiment : 100


Summary Results :

◦ For the 23% of learners who could not move on to Experiment 3 on the 1st attempt, the majority had an Understanding level and/or Confident level under 50%. These learners were required to make a 2nd attempt.

◦ On the 2nd attempt, 17% out of the 23% of learners successfully moved on to Experiment 3; both indicators showed a major improvement and were above 50%.

◦ On the 3rd attempt, all of the remaining 5% out of the 23% of learners successfully moved on to Experiment 3; both indicators showed a major improvement and were above 50%.

◦ The 77% of learners who moved on to Experiment 3 on the 1st attempt showed promising results of over 50% in both Understanding level and Confident level.

Experiment 3:

20 Multiple Choice Questions (Difficulty level : 3)

Type of questions : Year 5 - UK National Mathematics Curriculum (Topic: Geometry)

Standard marking scheme : Lowest (0 / 20) and Highest (20 / 20) {marks}

Understanding level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Confidence level : Lowest (0 / 40) and Highest (40 / 40) {20 questions x 2 points}

Number of learners involved in this experiment : 100

Summary Results :

◦ For the 56% of learners who could not complete Experiment 3 on the 1st attempt, the majority had an Understanding level and/or Confident level under 50%. These learners were required to make a 2nd attempt.

◦ On the 2nd attempt, 19% out of the 56% of learners successfully completed Experiment 3; both indicators showed a major improvement and were above 75%.

◦ On the 3rd attempt, 15% out of the remaining 37% (56% - 19%) of learners successfully completed Experiment 3; both indicators showed a major improvement and were above 75%.

◦ On the 4th attempt, all of the remaining 22% (37% - 15%) of learners successfully completed Experiment 3; both indicators showed a major improvement and were above 75%.

◦ The 44% of learners who completed Experiment 3 on the 1st attempt showed promising results of over 75% in both Understanding level and Confident level.
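The attempt-by-attempt arithmetic for Experiment 3 can be checked with a short sketch. The function name `attempt_breakdown` is hypothetical; the percentages passed to it are the ones reported in the summary above.

```python
from typing import List, Tuple


def attempt_breakdown(first_pass: int, later_passes: List[int]) -> List[Tuple[int, int, int]]:
    """Return (attempt number, % completing on that attempt, % still remaining)."""
    remaining = 100 - first_pass
    rows = [(1, first_pass, remaining)]
    for attempt, passed in enumerate(later_passes, start=2):
        remaining -= passed
        rows.append((attempt, passed, remaining))
    return rows


# Experiment 3: 44% on the 1st attempt, then 19%, 15% and 22%.
experiment_3 = attempt_breakdown(44, [19, 15, 22])
```

The remaining share after each attempt is 56%, 37%, 22% and finally 0%, which matches the subtractions given in the summary.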

Taken on their own, neither the assessment indicators nor the statistical information are meaningful; they must be analysed together [8]. Furthermore, to achieve an effective and efficient online learning outcome for the learner, the assessor needs to design and develop the curriculum, learning materials and Q&A using a hybrid integrated model [9]. The curriculum needs to be an all-round learning blueprint in which learners can improve their understanding progressively and in a user-friendly way.

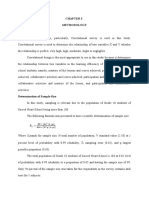

III. AI RULES

The AI rules define the way the online learning system assigns learning materials and exercises for the learner to follow. These are the basic rules which we applied in our experiments and which we found effective in improving the learners' understanding.

| Rule number | Difficulty level | Correct answers (%) | Understanding level (%) | Confident level (%) | Recommendation (Response) |
| --- | --- | --- | --- | --- | --- |
| 1 | 1 | < 50 | Nil | Nil | Repeat the same difficulty level = 1 exercise |
| 2 | 1 | ≥ 50 | < 50 | < 50 | Repeat the same difficulty level = 1 exercise |
| 3 | 1 | ≥ 50 | < 50 | ≥ 50 | Repeat the same difficulty level = 1 exercise |
| 4 | 1 | ≥ 50 | ≥ 50 | < 50 | Repeat the same difficulty level = 1 exercise |
| 5 | 1 | ≥ 50 | ≥ 50 | ≥ 50 | Move to next difficulty level = 2 exercise |
| 6 | 2 | < 50 | Nil | Nil | Repeat the same difficulty level = 2 exercise |
| 7 | 2 | ≥ 50 | < 50 | < 50 | Repeat the same difficulty level = 2 exercise |
| 8 | 2 | ≥ 50 | < 50 | ≥ 50 | Repeat the same difficulty level = 2 exercise |
| 9 | 2 | ≥ 50 | ≥ 50 | < 50 | Repeat the same difficulty level = 2 exercise |
| 10 | 2 | ≥ 50 | ≥ 50 | ≥ 50 | Move to next difficulty level = 3 exercise |
| 11 | 3 | < 75 | Nil | Nil | Repeat the same difficulty level = 3 exercise |
| 12 | 3 | ≥ 75 | < 50 | < 50 | Repeat the same difficulty level = 3 exercise |
| 13 | 3 | ≥ 75 | < 50 | ≥ 50 | Repeat the same difficulty level = 3 exercise |
| 14 | 3 | ≥ 75 | ≥ 50 | < 50 | Repeat the same difficulty level = 3 exercise |
| 15 | 3 | ≥ 75 | ≥ 50 | ≥ 50 | Move to next topic exercise |

Figure 1. The AI rules applied in the experiments.

The online learning assessor and learner can modify all the assessment indicators as needed (depending on various conditions and overall standard requirements) [10].
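The 15 rules in Figure 1 follow a regular pattern, so they can be sketched compactly in Python. This is an illustrative reading of the table, not the implementation used in the experiments: the names `CORRECT_THRESHOLD`, `INDICATOR_THRESHOLD` and `recommend` are hypothetical, and the thresholds are kept as module-level values so that an assessor could adjust them.

```python
from typing import Tuple

# Minimum correct-answer percentage per difficulty level (from Figure 1).
CORRECT_THRESHOLD = {1: 50, 2: 50, 3: 75}
# Minimum percentage for both the Understanding and Confident indicators.
INDICATOR_THRESHOLD = 50


def recommend(difficulty: int, correct_pct: float,
              understanding_pct: float = 0.0,
              confident_pct: float = 0.0) -> Tuple[int, str]:
    """Return (rule number, recommendation) for one completed exercise."""
    if difficulty not in CORRECT_THRESHOLD:
        raise ValueError("difficulty must be 1, 2 or 3")
    base = (difficulty - 1) * 5  # rules 1-5, 6-10 and 11-15
    repeat = f"Repeat the same difficulty level = {difficulty} exercise"
    # Too few correct answers: the indicators are not consulted (Nil).
    if correct_pct < CORRECT_THRESHOLD[difficulty]:
        return base + 1, repeat
    u_ok = understanding_pct >= INDICATOR_THRESHOLD
    c_ok = confident_pct >= INDICATOR_THRESHOLD
    rule = base + 2 + 2 * int(u_ok) + int(c_ok)
    if u_ok and c_ok:
        if difficulty == 3:
            return rule, "Move to next topic exercise"
        return rule, f"Move to next difficulty level = {difficulty + 1} exercise"
    return rule, repeat
```

For instance, a learner at difficulty level 2 with 60% correct answers, 40% Understanding and 70% Confident falls under rule 8 and repeats the level 2 exercise.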

IV. ONLINE LEARNING USING AI SYSTEM

The AI-based online learning system includes several components which can be integrated into one complete artificial intelligence online learning system [11]. These are the standard components:

1. Reasoning − It is the set of processes that empowers us to provide basis for judgement, making

decisions, and prediction.

2. Learning − It is the activity of gaining information or skill by studying, practising, being

educated, or experiencing something. Learning improves the awareness of the subjects of the study.

3. Problem Solving − It is the procedure in which one perceives and tries to arrive at a desired

solution from a current situation by taking some path, which is obstructed by known or unknown

hurdles.

4. Perception − It is the way of acquiring, interpreting, selecting, and organizing sensory

information.

5. Linguistic Intelligence − It is one’s ability to use, comprehend, talk, and compose the verbal and

written language. It is significant in interpersonal communication.

The potential of an online learning system includes 4 factors afforded by the technology: accessibility, flexibility, interactivity and collaboration. In terms of the challenges to online learning, 6 are identified: defining online learning; proposing a new legacy of epistemology-social constructivism for all; quality assurance and standards; commitment versus innovation; copyright and intellectual property; and personal learning in social constructivism [12].

Figure 2. The AI online learning system components.


V. CONCLUSIONS

The AI online learning model proposed in this paper involves 3 stages. Stage One involves the design and development of the curriculum with learning materials, Q&A and other assessment indicators. Stage Two involves the implementation of creative AI rules. Stage Three involves the user-friendly learning process and analysis operation. This model provides flexibility when designing and developing the online learning system. The new statistical analysis algorithm with various assessment indicators shows promising results in AI online learning, and further evaluation and research are in progress.

REFERENCES

[1] Bill Joy, (2000). Why the Future Doesn’t Need Us, WIRED-IDEAS, USA.

[2] William Li, (2018). Prediction Distortion in Monte Carlo Tree Search and an Improved Algorithm, Journal of Intelligent Learning Systems and Applications, Vol. 10, No. 2.

[3] Ofra Walter, Vered Shenaar-Golan and Zeevik Greenberg, (2015). Effect of Short-Term Intervention Program on Academic Self-Efficacy in Higher Education, Psychology, Vol. 6, No. 10.

[4] Calum Chace, (2019). Artificial Intelligence and the Two Singularities, Chapman & Hall/CRC.

[5] Piero Mella, (2017). Intelligence and Stupidity – The Educational Power of Cipolla’s Test and of the “Social Wheel”, Creative Education, Vol. 8, No. 15.

[6] Zhongzhi Shi, (2019). Cognitive Machine Learning, International Journal of Intelligence Science, Vol. 9, No. 4.

[7] Crescenzio Gallo and Vito Capozzi, (2019). Feature Selection with Non Linear PCA: A Neural Network Approach, Journal of Applied Mathematics and Physics, Vol. 7, No. 10.

[8] Charles Kivunja, (2015). Creative Engagement of Digital Learners with Gardner’s Bodily-Kinesthetic Intelligence to Enhance Their Critical Thinking, Creative Education, Vol. 6, No. 6.

[9] Evangelia Foutsitzi, Georgia Papantoniou, Evangelia Karagiannopoulou, Harilaos Zaragas and Despina Moraitou, (2019). The Factor Structure of the Tacit Knowledge Inventory for High School Teachers in a Greek Context.

[10] Nick Bostrom and Eliezer Yudkowsky, (2011). The Ethics of Artificial Intelligence, Cambridge University Press.

[11] Gus Bekdash, (2019). Using Human History, Psychology, and Biology to Make AI Safe for Humans, Chapman & Hall/CRC.

[12] The Student Circles.com, Artificial Intelligence Study Notes, https://www.thestudentcircle.com/quickguide.php?url=artificial-intelligence

AUTHOR

Prof. Yew Kee Wong (Eric) is a Professor of Artificial Intelligence (AI) & Advanced Learning Technology at the HuangHuai University in Henan, China. He obtained his BSc (Hons) undergraduate degree in Computing Systems and a Ph.D. in AI from The Nottingham Trent University in Nottingham, U.K. He was the Senior Programme Director at The University of Hong Kong (HKU) from 2001 to 2016. Prior to joining the education sector, he worked in international technology companies, Hewlett-Packard (HP) and Unisys, as an AI consultant. His research interests include AI, online learning, big data analytics, machine learning, Internet of Things (IOT) and blockchain technology.
