Unit 4

Data Cube Technology

Prepared By

Arjun Singh Saud, Asst. Prof. CDCSIT

Data Mining-CSIT 7th Prepared BY: Arjun Saud

Efficient Methods for Data Cube Computation

• Data cube computation is an essential task in data warehouse implementation. The precomputation of all or part of a data cube can greatly reduce the response time and enhance the performance of on-line analytical processing (OLAP).

• However, such computation is challenging because it may require substantial computational time and storage space.


Cube Materialization

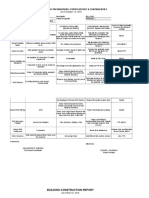

• The figure shows a 3-D data cube for the dimensions A, B, and C, with some aggregate measure, M. A data cube is a lattice of cuboids.


• Each cuboid represents a group-by. ABC is the base cuboid, containing all three of the dimensions. The base cuboid is the least generalized of all of the cuboids in the data cube.

• The most generalized cuboid is the apex cuboid, commonly represented as all. It contains one value.

• To drill down in the data cube, we move from the apex cuboid downward in the lattice. To roll up, we move from the base cuboid upward.
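The lattice described above can be enumerated directly. The following is a minimal sketch, assuming the three dimensions are simply named A, B, and C; the function name `cuboid_lattice` is hypothetical and chosen only for illustration.

```python
from itertools import combinations

def cuboid_lattice(dimensions):
    """Group every cuboid (group-by) of a cube by its number of dimensions."""
    levels = {}
    for k in range(len(dimensions) + 1):
        # Each k-subset of the dimensions is one k-D cuboid.
        levels[k] = list(combinations(dimensions, k))
    return levels

lattice = cuboid_lattice(["A", "B", "C"])
for k in sorted(lattice):
    # The empty group-by is the apex cuboid, written "all".
    names = ["".join(c) if c else "all" for c in lattice[k]]
    print(f"{k}-D cuboids: {names}")
```

Reading the printed levels from 0-D to 3-D corresponds to drilling down; reading them in the opposite direction corresponds to rolling up.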


• In order to ensure fast on-line analytical processing, it is sometimes desirable to precompute cubes. The different kinds of cubes that can be precomputed are given below.

• Full Cube

• Iceberg Cube

• Closed Cube

• Shell Cube


Full Cube

• A full cube is a data cube in which all cells of all cuboids are precomputed.

• The number of cuboids, however, is exponential in the number of dimensions. That is, a data cube of n dimensions contains 2^n cuboids. There are even more cuboids if we consider concept hierarchies for each dimension.

• Thus, precomputation of the full cube can require huge and often excessive amounts of memory.
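A short calculation makes the growth concrete. The sketch below assumes the standard counting rule for concept hierarchies: a dimension with L levels (not counting the virtual top level all) contributes a factor of L + 1. The function names are hypothetical.

```python
from math import prod

def count_cuboids(n_dimensions):
    """Number of cuboids when no dimension has a concept hierarchy."""
    return 2 ** n_dimensions

def count_cuboids_with_hierarchies(levels_per_dimension):
    """Number of cuboids when dimension i has levels_per_dimension[i] levels.

    Each dimension contributes its own levels plus the virtual level "all".
    """
    return prod(levels + 1 for levels in levels_per_dimension)

print(count_cuboids(3))                            # 3 dimensions, no hierarchies
print(count_cuboids(10))                           # 10 dimensions, no hierarchies
print(count_cuboids_with_hierarchies([4] * 10))    # 10 dimensions, 4 levels each
```

With one level per dimension the second function reduces to 2^n, which is a quick consistency check between the two rules.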


Iceberg Cube

• Instead of computing the full cube, we can compute only a subset of the data cube’s cuboids.

• In many situations, it is useful to materialize only those cells in a cuboid (group-by) whose measure value is above some minimum threshold. For example, in a data cube for sales, we may wish to materialize only those cells for which count > 10, or only those cells representing sales > $100. Such partially materialized cubes are known as iceberg cubes.


• An iceberg cube can be specified with an SQL-like query, as shown in the following example, where min_sup is the minimum support threshold.

    compute cube sales_iceberg as
    select month, city, CustomerGroup, count(*)
    from salesInfo
    cube by month, city, CustomerGroup
    having count(*) >= min_sup
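The effect of the query can be imitated in a few lines of Python. This is a naive sketch that counts every cell of the full cube and then filters by the threshold, so it illustrates the result rather than an efficient method. The sample rows and the function name `iceberg_cube` are made up for illustration.

```python
from collections import Counter
from itertools import combinations

def iceberg_cube(rows, dimensions, min_sup):
    """Count every cell of every group-by, then keep cells with count >= min_sup."""
    counts = Counter()
    n = len(dimensions)
    for row in rows:
        for k in range(n + 1):
            for kept in combinations(range(n), k):
                # Dimensions that are not kept are aggregated, written "*".
                cell = tuple(row[d] if i in kept else "*"
                             for i, d in enumerate(dimensions))
                counts[cell] += 1
    return {cell: c for cell, c in counts.items() if c >= min_sup}

# Hypothetical sample data standing in for the salesInfo table.
sales_info = [
    {"month": "Jan", "city": "Chicago", "CustomerGroup": "Business"},
    {"month": "Jan", "city": "Chicago", "CustomerGroup": "Education"},
    {"month": "Jan", "city": "Toronto", "CustomerGroup": "Business"},
    {"month": "Feb", "city": "Chicago", "CustomerGroup": "Business"},
]

cube = iceberg_cube(sales_info, ["month", "city", "CustomerGroup"], min_sup=2)
for cell, count in sorted(cube.items()):
    print(cell, count)
```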


Base and Aggregate Cells

• A cell in the base cuboid is a base cell. A cell from a non-base cuboid is an aggregate cell.

• An aggregate cell aggregates over one or more dimensions, where each aggregated dimension is indicated by a * in the cell notation.

• Suppose we have an n-dimensional data cube. Let a = (a1, a2, ..., an, measures) be a cell from one of the cuboids making up the data cube.

• We say that a is an m-dimensional cell if exactly m (m ≤ n) values among {a1, a2, ..., an} are not *. If m = n, then a is a base cell; otherwise (m < n), it is an aggregate cell.


• Consider a data cube with the dimensions month, city, and customer group, and the measure sales.

• (Jan, *, *, 2800) and (*, Chicago, *, 1200) are 1-D cells; (Jan, *, Business, 150) is a 2-D cell; and (Jan, Chicago, Business, 45) is a 3-D cell.

• Here, all base cells are 3-D, whereas 1-D and 2-D cells are aggregate cells.
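The definition of an m-dimensional cell reduces to counting the values that are not *. A minimal sketch, assuming each cell is written as a tuple of dimension values with the measure left out (the helper names are hypothetical):

```python
def cell_dimensionality(cell):
    """Return m, the number of dimension values in the cell that are not '*'."""
    return sum(1 for value in cell if value != "*")

def is_base_cell(cell):
    """A cell is a base cell when none of its dimensions is aggregated (m = n)."""
    return cell_dimensionality(cell) == len(cell)

examples = [
    ("Jan", "*", "*"),
    ("*", "Chicago", "*"),
    ("Jan", "*", "Business"),
    ("Jan", "Chicago", "Business"),
]
for cell in examples:
    kind = "base" if is_base_cell(cell) else "aggregate"
    print(cell, f"{cell_dimensionality(cell)}-D", kind)
```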


Ancestor and Descendant Cells

• In an n-dimensional data cube, an i-D cell a = (a1, a2, ..., an, measures_a) is an ancestor of a j-D cell b = (b1, b2, ..., bn, measures_b), and b is a descendant of a, if and only if

• i < j, and

• for 1 ≤ k ≤ n, ak = bk whenever ak ≠ *.

• In particular, cell a is called a parent of cell b, and b is a child of a, if and only if j = i + 1.


• The 1-D cell a = (Jan, *, *, 2800) and the 2-D cell b = (Jan, *, Business, 150) are ancestors of the 3-D cell c = (Jan, Chicago, Business, 45); c is a descendant of both a and b; b is a parent of c; and c is a child of b.
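The two conditions of the ancestor definition translate into a short predicate. The sketch below assumes cells are tuples of dimension values without the measure; `is_ancestor` and `is_parent` are hypothetical helper names.

```python
def dimensionality(cell):
    """Number of dimension values that are not '*'."""
    return sum(value != "*" for value in cell)

def is_ancestor(a, b):
    """True if a is an ancestor of b: i < j, and a_k == b_k wherever a_k != '*'."""
    if len(a) != len(b) or dimensionality(a) >= dimensionality(b):
        return False
    return all(x == "*" or x == y for x, y in zip(a, b))

def is_parent(a, b):
    """True if a is an ancestor of b with exactly one more aggregated dimension."""
    return is_ancestor(a, b) and dimensionality(b) == dimensionality(a) + 1

a = ("Jan", "*", "*")
b = ("Jan", "*", "Business")
c = ("Jan", "Chicago", "Business")

print(is_ancestor(a, c), is_ancestor(b, c))  # a and b are both ancestors of c
print(is_parent(b, c), is_parent(a, c))      # only b is a parent of c
```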


Closed Cube

• A cell, c, is a closed cell if there exists no cell, d, such that d is a specialization (descendant) of cell c, and d has the same measure value as c. A closed cube is a data cube consisting of only closed cells.

Fig. Three closed cells forming the lattice of a closed cube
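The closed-cell test can be tried on a tiny cube. The sketch below assumes a count measure and two made-up base cells; it first builds the full cube by brute force and then keeps only the cells that have no descendant with the same count. All names are hypothetical.

```python
from collections import defaultdict
from itertools import product

def full_cube(base_cells):
    """Aggregate counts for every generalization of every base cell."""
    cube = defaultdict(int)
    for cell, count in base_cells.items():
        # Each mask chooses which dimension values to replace with "*".
        for mask in product([False, True], repeat=len(cell)):
            general = tuple("*" if hide else v for v, hide in zip(cell, mask))
            cube[general] += count
    return dict(cube)

def is_descendant(d, c):
    """True if d is a specialization of c (agrees with c wherever c is not '*')."""
    return d != c and all(x == "*" or x == y for x, y in zip(c, d))

def closed_cells(cube):
    """Keep cells that have no descendant with the same measure value."""
    return {
        c: m for c, m in cube.items()
        if not any(is_descendant(d, c) and cube[d] == m for d in cube)
    }

base = {("a1", "b1", "c1"): 10, ("a1", "b2", "c2"): 10}
closed = closed_cells(full_cube(base))
for cell, count in sorted(closed.items()):
    print(cell, count)
```

For this input the full cube has 14 distinct cells, while the closed cube keeps only the two base cells and (a1, *, *), from which the counts of the remaining cells can be derived.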


Shell Cube

• Another strategy for partial materialization is to precompute only the cuboids involving a small number of dimensions, such as 3 to 5. These cuboids form a shell cube.

• Queries on additional combinations of the dimensions will have to be computed on the fly.

• For example, we could compute all cuboids with 3 dimensions or fewer in an n-dimensional data cube, resulting in a shell cube of size 3.
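The saving from a shell cube can be seen by listing which cuboids are kept. A small sketch, assuming five made-up dimension names and assuming the apex cuboid (zero dimensions) counts as part of the shell:

```python
from itertools import combinations

def shell_cuboids(dimensions, max_dims):
    """List every cuboid that involves at most max_dims dimensions."""
    return [combo
            for k in range(max_dims + 1)
            for combo in combinations(dimensions, k)]

dimensions = ["A", "B", "C", "D", "E"]   # hypothetical 5-D cube
shell = shell_cuboids(dimensions, max_dims=3)
print(len(shell), "of", 2 ** len(dimensions), "cuboids are precomputed")
```

Any query on four or five dimensions falls outside the shell and has to be answered on the fly.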


General Strategies for Efficient Cube Computation

• The following are general optimization techniques for the efficient computation of data cubes.

Optimization Technique 1: Sorting, hashing, and grouping

• Sorting, hashing, and grouping operations should be applied to the dimension attributes in order to reorder and cluster related tuples.

• In cube computation, aggregation is performed on the tuples (or cells) that share the same set of dimension values.

• For example, to compute total sales by branch, day, and item, it is more efficient to sort tuples or cells by branch, and then by day, and then group them according to the item name.
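Sorting brings tuples with equal dimension values next to each other, so each group can be aggregated in a single pass. A minimal sketch with made-up sales tuples of the form (branch, day, item, amount); the function name `total_sales_by` is hypothetical.

```python
from itertools import groupby

# Hypothetical fact tuples: (branch, day, item, amount).
sales = [
    ("B1", "Mon", "pen", 5.0),
    ("B2", "Mon", "pen", 3.0),
    ("B1", "Mon", "pen", 2.5),
    ("B1", "Tue", "ink", 4.0),
    ("B2", "Mon", "ink", 1.0),
]

def total_sales_by(rows, key_positions):
    """Sort rows on the chosen dimensions, then sum the amount of each run."""
    key = lambda row: tuple(row[i] for i in key_positions)
    ordered = sorted(rows, key=key)          # clusters related tuples together
    return {k: sum(row[-1] for row in group)
            for k, group in groupby(ordered, key=key)}

totals = total_sales_by(sales, key_positions=[0, 1, 2])
for key, amount in totals.items():
    print(key, amount)
```

A hash-based variant would accumulate the sums in a dictionary keyed by the same tuple instead of sorting first.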


Optimization Technique 2: Simultaneous aggregation and caching intermediate results

• In cube computation, it is efficient to compute higher-level aggregates from previously computed lower-level aggregates, rather than from the base fact table.

• Moreover, simultaneous aggregation from cached intermediate computation results may lead to the reduction of expensive disk I/O operations.

• For example, to compute sales by branch, we can use the intermediate results derived from the computation of a lower-level cuboid, such as sales by branch and month.
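The branch example can be sketched as a roll-up over an already computed cuboid. The figures below are made up, the helper name `roll_up` is hypothetical, and the approach assumes a distributive measure such as sum, where partial totals can be added together.

```python
from collections import defaultdict

def roll_up(child_cuboid, keep_positions):
    """Aggregate a cached cuboid over the dimensions that are not kept."""
    parent = defaultdict(float)
    for key, total in child_cuboid.items():
        parent[tuple(key[i] for i in keep_positions)] += total
    return dict(parent)

# Previously computed (cached) cuboid: sales by (branch, month).
sales_by_branch_month = {
    ("B1", "Jan"): 100.0,
    ("B1", "Feb"): 150.0,
    ("B2", "Jan"): 80.0,
}

# Sales by branch is derived from the cache, not from the base fact table.
sales_by_branch = roll_up(sales_by_branch_month, keep_positions=[0])
print(sales_by_branch)
```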


Optimization Technique 3: Aggregation from the smallest child, when there exist multiple child cuboids

• When there exist multiple child cuboids, it is usually more efficient to compute the desired parent (i.e., more generalized) cuboid from the smallest previously computed child cuboid.

• For example, to compute a sales cuboid, C_branch, when there exist two previously computed cuboids, C_{branch, year} and C_{branch, item}, it is more efficient to compute C_branch from the former than from the latter if there are many more distinct items than distinct years, because the former then contains far fewer cells to scan.
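Choosing the smallest child amounts to comparing cell counts before aggregating. A minimal sketch with made-up figures, in which C_{branch, year} has 4 cells and C_{branch, item} has 10; the function name `compute_parent` is hypothetical.

```python
def compute_parent(children, parent_dim):
    """Aggregate the parent cuboid from the child cuboid with the fewest cells."""
    # children maps a tuple of dimension names to that cuboid's cells.
    dims = min(children, key=lambda d: len(children[d]))
    pos = dims.index(parent_dim)
    totals = {}
    for key, value in children[dims].items():
        totals[key[pos]] = totals.get(key[pos], 0.0) + value
    return dims, totals

items = ["pen", "ink", "pad", "cap", "bag"]
children = {
    ("branch", "year"): {
        ("B1", 2022): 60.0, ("B1", 2023): 40.0,
        ("B2", 2022): 30.0, ("B2", 2023): 20.0,
    },
    ("branch", "item"): {
        (branch, item): amount
        for branch, amount in (("B1", 20.0), ("B2", 10.0))
        for item in items
    },
}

chosen, sales_by_branch = compute_parent(children, parent_dim="branch")
print("computed from", chosen, "->", sales_by_branch)
```

Either child gives the same branch totals here; the smaller one simply requires fewer cells to be read.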


Optimization Technique 4: The Apriori pruning method can be used to compute iceberg cubes efficiently

• The Apriori property, in the context of data cubes, states the following: If a given cell does not satisfy the minimum threshold, then no descendant (more specialized cell) of that cell will satisfy the minimum threshold either. The property holds for antimonotonic measures such as count. It can be used to substantially reduce the computation of iceberg cubes.
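The pruning rule can be sketched as a recursion that starts at the apex cell and specializes one dimension at a time, stopping as soon as a partition is too small. This is a simplified illustration for a count measure, similar in spirit to bottom-up iceberg algorithms, and not a definitive implementation; the sample rows and the name `iceberg_with_pruning` are made up.

```python
def iceberg_with_pruning(rows, n_dims, min_sup):
    """Return {cell: count} for all cells whose count is at least min_sup."""
    result = {}

    def expand(partition, cell, start_dim):
        if len(partition) < min_sup:
            return  # Apriori pruning: no descendant of this cell can qualify
        result[tuple(cell)] = len(partition)
        for d in range(start_dim, n_dims):
            # Partition the current tuples on the values of dimension d.
            groups = {}
            for row in partition:
                groups.setdefault(row[d], []).append(row)
            for value, subset in groups.items():
                cell[d] = value
                expand(subset, cell, d + 1)
            cell[d] = "*"  # restore before specializing the next dimension

    expand(rows, ["*"] * n_dims, 0)
    return result

rows = [
    ("Jan", "Chicago", "Business"),
    ("Jan", "Chicago", "Education"),
    ("Jan", "Toronto", "Business"),
    ("Feb", "Chicago", "Business"),
]
pruned = iceberg_with_pruning(rows, n_dims=3, min_sup=2)
for cell, count in sorted(pruned.items()):
    print(cell, count)
```

Because (Feb, *, *) has a count of 1, none of its specializations is ever generated, which is where the saving over computing the full cube comes from.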


Attribute Oriented Induction for Data Characterization

• The attribute-oriented induction (AOI) approach to concept description is an alternative to the data cube approach.

• Generally, the data cube approach performs off-line aggregation before an OLAP or data mining query is submitted for processing.

• On the other hand, the attribute-oriented induction approach is basically query-oriented and performs on-line data analysis.


• The general idea of attribute-oriented induction is to first collect the task-relevant data using a database query and then perform generalization based on the examination of the number of distinct values of each attribute in the relevant set of data.

• The generalization is performed by either attribute removal or attribute generalization. The following examples illustrate the process of attribute-oriented induction.


Example: Suppose that a user would like to describe the general characteristics of graduate students in the Big University database, given the attributes Name, Gender, Major, BirthPlace, BirthDate, Residence, Phone#, and GPA. A data mining query for this characterization can be expressed in the data mining query language, DMQL, as follows:

    Use BigUniversityDB
    mine characteristics as “GraduateStudents”
    in relevance to Name, Gender, Major, BirthPlace, BirthDate, Residence, Phone#, GPA
    From Student
    where Status in “Graduate”


• The data mining query presented above is transformed into the following relational query for the collection of the task-relevant set of data:

    Use BigUniversityDB
    Select Name, Gender, Major, BirthPlace, BirthDate, Residence, Phone#, GPA
    From student
    Where status in {“M.Sc.”, “M.A.”, “M.B.A.”, “Ph.D.”}

• The result of the above query is called the (task-relevant) initial working relation. The data are now ready for attribute-oriented induction.


• Attribute removal is based on the following rules:

1. If there is a large set of distinct values for an attribute of the initial working relation, but there is no generalization operator on the attribute (e.g., there is no concept hierarchy defined for the attribute), then the attribute should be removed from the working relation.

2. If there is a large set of distinct values for an attribute of the initial working relation, but its higher-level concepts are expressed in terms of other attributes, then the attribute should be removed from the working relation.


• Attribute generalization is based on the following rule: If there is a large set of distinct values for an attribute in the initial working relation, and there exists a set of generalization operators on the attribute, then a generalization operator should be selected and applied to the attribute.
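The removal and generalization rules can be sketched together on a toy working relation. The sketch rests on several assumptions that are not in the slides: "a large set of distinct values" is modeled as a simple threshold, each concept hierarchy is a one-level mapping from a value to its higher-level concept, and identical generalized tuples are merged with a count. The sample students, hierarchies, and function name are made up.

```python
from collections import Counter

def attribute_oriented_induction(relation, hierarchies, threshold):
    """Apply attribute removal or one step of generalization per attribute."""
    generalized = [dict(row) for row in relation]
    for attr in list(relation[0].keys()):
        distinct = {row[attr] for row in generalized}
        if len(distinct) <= threshold:
            continue                      # few distinct values: keep as is
        if attr in hierarchies:
            # Attribute generalization: replace values by higher-level concepts.
            mapping = hierarchies[attr]
            for row in generalized:
                row[attr] = mapping.get(row[attr], row[attr])
        else:
            # Attribute removal: many values and no generalization operator.
            for row in generalized:
                del row[attr]
    # Merge identical generalized tuples and record how many were merged.
    counts = Counter(tuple(sorted(row.items())) for row in generalized)
    return [dict(items, count=n) for items, n in counts.items()]

students = [
    {"Name": "Ann", "Gender": "F", "Major": "CS", "BirthPlace": "Vancouver"},
    {"Name": "Bob", "Gender": "M", "Major": "Physics", "BirthPlace": "Toronto"},
    {"Name": "Cal", "Gender": "M", "Major": "History", "BirthPlace": "Seattle"},
    {"Name": "Dee", "Gender": "F", "Major": "CS", "BirthPlace": "Toronto"},
]
hierarchies = {
    "Major": {"CS": "Science", "Physics": "Science", "History": "Arts"},
    "BirthPlace": {"Vancouver": "Canada", "Toronto": "Canada", "Seattle": "USA"},
}

summary = attribute_oriented_induction(students, hierarchies, threshold=2)
for row in summary:
    print(row)
```

Here Name is removed because it has many distinct values and no hierarchy, Major and BirthPlace are generalized, and Gender is left unchanged.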


Mining Class Comparisons: Discriminating between Different Classes

• In many applications, users may not be interested in having a single class (or concept) described or characterized, but rather would prefer to mine a description that compares or distinguishes one class (or concept) from other comparable classes (or concepts).

• Class discrimination or comparison mines descriptions that distinguish a target class from its contrasting classes.

• The target and contrasting classes must be comparable in the sense that they share similar dimensions and attributes. For example, the three classes person, address, and item are not comparable. However, the sales in the last three years are comparable classes, and so are computer science students versus physics students.


• How is class comparison performed? In general, the procedure is as follows:

1. Data Collection: The set of relevant data in the database is collected by query processing and is partitioned into a target class and one or more contrasting classes.

2. Dimension Relevance Analysis: If there are many dimensions, then dimension relevance analysis should be performed on these classes to select only the highly relevant dimensions for further analysis.


3. Synchronous Generalization: Generalization is performed on the target class and contrasting class(es) to the level controlled by a user- or expert-specified dimension threshold.

4. Presentation of the Derived Comparison: The resulting class comparison description can be visualized in the form of tables, graphs, and rules. This presentation usually includes a “contrasting” measure such as count% (percentage count). The user can adjust the comparison description by applying drill-down, roll-up, and other OLAP operations to the target and contrasting classes, as desired.
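The count% measure used in the presentation step can be sketched as follows. The example assumes the data have already been collected and generalized to the same level in both classes; the attribute name `gpa_level`, the sample rows, and the function names are made up.

```python
from collections import Counter

def count_percent(rows, attribute):
    """Percentage of the class's tuples that take each value of the attribute."""
    counts = Counter(row[attribute] for row in rows)
    total = sum(counts.values())
    return {value: 100.0 * n / total for value, n in counts.items()}

def compare_classes(target, contrasting, attribute):
    """Pair the count% of the target class with that of the contrasting class."""
    t = count_percent(target, attribute)
    c = count_percent(contrasting, attribute)
    return {v: (t.get(v, 0.0), c.get(v, 0.0)) for v in sorted(set(t) | set(c))}

# Hypothetical generalized tuples: target class vs. contrasting class.
graduates = [{"gpa_level": g} for g in ("excellent", "excellent", "good", "excellent")]
undergraduates = [{"gpa_level": g} for g in ("good", "good", "fair", "excellent")]

comparison = compare_classes(graduates, undergraduates, "gpa_level")
for value, (target_pct, contrast_pct) in comparison.items():
    print(f"{value}: target {target_pct}%, contrasting {contrast_pct}%")
```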
