Data

Uploaded by julius anthony magallon

This document compares the performance of different machine learning algorithms and data sampling techniques for imbalanced classification. Random forest and gradient boosting classifiers were tested on datasets using various wavelet families for feature extraction. The results show that SMOTE oversampling generally improved accuracy for the random forest classifier compared to the original imbalanced data, while ADASYN worked best for gradient boosting in many cases. Accuracy varied depending on the specific wavelet type and sampling method used.

Original title: data (4)

Copyright: © All Rights Reserved


Sheet1

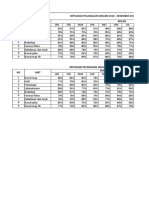

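| Wavelet | R.Forest (without oversampling) | G.Boost (without oversampling) | Oversampling technique | R.Forest (with oversampling) | G.Boost (with oversampling) |
|---|---|---|---|---|---|
| Daubechies | 10% | 5% | SMOTE (regular) | 80% | 85% |
| | | | ADASYN | 85% | 80% |
| | | | SMOTE-Tomek | 85% | 85% |
| | | | SMOTE-ENN | 90% | 80% |
| Symlets | 65% | 70% | SMOTE | 80% | 85% |
| | | | ADASYN | 85% | 75% |
| | | | SMOTE-Tomek | 80% | 80% |
| | | | SMOTE-ENN | 75% | 75% |
| Coiflets | 10% | 65% | SMOTE (regular min) | 715% [sic] | 85% |
| | | | ADASYN | 80% | 80% |
| | | | SMOTE-Tomek | 80% | 75% |
| | | | SMOTE-ENN | 75% | 85% |
| Biorthogonal | 65% | 65% | SMOTE (reg min/borde…) | 80% | 0% |
| | | | ADASYN | 80% | 90% |
| | | | SMOTE-Tomek | 80% | 90% |
| | | | SMOTE-ENN | 85% | 85% |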
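One way to read the sheet is to ask, for each wavelet family and classifier, which oversampling technique scored highest and how far that is above the run without oversampling. The sketch below does that with the values transcribed from the sheet. The names `RESULTS` and `best_techniques` are illustrative and not part of the original. Two transcription assumptions apply: the Coiflets/SMOTE random forest cell, printed as "715%", is treated as unreadable and skipped, and the fourth Symlets row, whose label is garbled, is assumed to be SMOTE-ENN because every other group lists the techniques in the same order.

```python
# Accuracies (%) transcribed from the sheet as (R.Forest, G.Boost).
# "baseline" is the run without oversampling.
# None marks the cell printed as "715%" in the source (unreadable, skipped).
RESULTS = {
    "Daubechies": {
        "baseline": (10, 5),
        "SMOTE": (80, 85),
        "ADASYN": (85, 80),
        "SMOTE-Tomek": (85, 85),
        "SMOTE-ENN": (90, 80),
    },
    "Symlets": {
        "baseline": (65, 70),
        "SMOTE": (80, 85),
        "ADASYN": (85, 75),
        "SMOTE-Tomek": (80, 80),
        "SMOTE-ENN": (75, 75),  # row label garbled in the source; assumed
    },
    "Coiflets": {
        "baseline": (10, 65),
        "SMOTE": (None, 85),
        "ADASYN": (80, 80),
        "SMOTE-Tomek": (80, 75),
        "SMOTE-ENN": (75, 85),
    },
    "Biorthogonal": {
        "baseline": (65, 65),
        "SMOTE": (80, 0),  # 0% kept exactly as it appears in the source
        "ADASYN": (80, 90),
        "SMOTE-Tomek": (80, 90),
        "SMOTE-ENN": (85, 85),
    },
}


def best_techniques(rows, column):
    """Return (top accuracy, sorted names of the techniques that reach it).

    column 0 is R.Forest, column 1 is G.Boost. The baseline row and
    unreadable (None) cells are ignored; ties are all reported.
    """
    scores = {
        name: acc[column]
        for name, acc in rows.items()
        if name != "baseline" and acc[column] is not None
    }
    top = max(scores.values())
    return top, sorted(name for name, value in scores.items() if value == top)


if __name__ == "__main__":
    for wavelet, rows in RESULTS.items():
        for column, clf in enumerate(("R.Forest", "G.Boost")):
            top, names = best_techniques(rows, column)
            gain = top - rows["baseline"][column]
            print(f"{wavelet:<12} {clf:<8} best {top}% "
                  f"({', '.join(names)}), +{gain} points over baseline")
```

Reporting ties instead of a single winner matters here, since several wavelet/classifier pairs have two techniques sharing the top accuracy.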

Page 1

You might also like

- CatalystAthletics ClassicCycleDocument32 pagesCatalystAthletics ClassicCyclejustasNo ratings yet

- Dashboard OEE ExcellDocument7 pagesDashboard OEE ExcellNurhadi100% (1)

- F23 - H1 DashboardDocument11 pagesF23 - H1 DashboardAnand R100% (1)

- Gráfica Trend Pareto Paynter Action Efficiency 031219Document9 pagesGráfica Trend Pareto Paynter Action Efficiency 031219alonsoNo ratings yet

- Plant Loss Tree DataDocument1 pagePlant Loss Tree DataJoseph OrjiNo ratings yet

- DataDocument1 pageDatajulius anthony magallonNo ratings yet

- Employee SatisfactionDocument6 pagesEmployee SatisfactionEkane NgemeNo ratings yet

- 17.1 Costo de Implementacion Del Plan111Document2 pages17.1 Costo de Implementacion Del Plan111Zaida EANo ratings yet

- 17.1 Costo de Implementacion Del Plan111Document3 pages17.1 Costo de Implementacion Del Plan111AngelaMariaNo ratings yet

- 0280TK021-S Curve 14 AugDocument1 page0280TK021-S Curve 14 AugYorgieNo ratings yet

- Persentase Kepatuhan StafDocument12 pagesPersentase Kepatuhan Stafinanadutt10No ratings yet

- Resultados de ExcelnnDocument11 pagesResultados de ExcelnnJUAN DIEGONo ratings yet

- High Carbon Steel Abrasive SpecificationDocument1 pageHigh Carbon Steel Abrasive SpecificationPrabath MadusankaNo ratings yet

- Indikator Nasional Mutu Rsud BrebesDocument4 pagesIndikator Nasional Mutu Rsud BrebesOl T DagelanNo ratings yet

- Heat MapDocument11 pagesHeat MapAhlex Van der AllNo ratings yet

- Laporan Bulanan Rujukan, SampahDocument5 pagesLaporan Bulanan Rujukan, Sampahlaely setyaNo ratings yet

- Edit Coba2Document4 pagesEdit Coba2Alfikar FahriNo ratings yet

- Insuficient 1 Elemental 9 Adecuado 8Document4 pagesInsuficient 1 Elemental 9 Adecuado 8monicaj_sanhueza4147No ratings yet

- Noakhali Gold Foods Ltd. West Char Uria, Mannan Nagar, Sadar, Noakhali. Standard Wastage of Fish & ShrimpDocument3 pagesNoakhali Gold Foods Ltd. West Char Uria, Mannan Nagar, Sadar, Noakhali. Standard Wastage of Fish & Shrimpaktaruzzaman bethuNo ratings yet

- Progress Rencana-Aktual Harian Pekerjaan Finishing Kolom-Balok 19032024Document2 pagesProgress Rencana-Aktual Harian Pekerjaan Finishing Kolom-Balok 19032024windamokeNo ratings yet

- Grafik Cakupan K1 Januari-September 2021: Column B Column CDocument16 pagesGrafik Cakupan K1 Januari-September 2021: Column B Column CRismawati ArifNo ratings yet

- Bottom Audit Bulan JuniDocument12 pagesBottom Audit Bulan JuniHealth Safety JX Pratama Abadi IndustriNo ratings yet

- Vacunometro Barrido DPTDocument4 pagesVacunometro Barrido DPTnetlab.ellm1.00004538No ratings yet

- AA - Heat Transfer - Pressure - Drop - On - MC - CoilsDocument1 pageAA - Heat Transfer - Pressure - Drop - On - MC - CoilsTô Thiên ĐăngNo ratings yet

- Pareto ActualizadoDocument15 pagesPareto ActualizadoHIERRO ECO ROMERO HUAYTONo ratings yet

- 2020 League Table - GI Maxima - TBM PDFDocument6 pages2020 League Table - GI Maxima - TBM PDFMITHUN NANDYNo ratings yet

- Sds Educate Center Mas Daftar Nilai TAHUN PELAJARAN 2018/ 2019Document3 pagesSds Educate Center Mas Daftar Nilai TAHUN PELAJARAN 2018/ 2019Melissa ManaluNo ratings yet

- Gestão A VistaDocument16 pagesGestão A VistaRoda6038cNo ratings yet

- QUINTO A Reporte Beereaders - 1 - 105628947Document2 pagesQUINTO A Reporte Beereaders - 1 - 105628947LEONARDO RODRIGO CUTIPA APAZANo ratings yet

- Estrategia de Goles Over 1.5Document2 pagesEstrategia de Goles Over 1.5Paolo Haro CasanovaNo ratings yet

- Detailed Tech Audit ChecklistsDocument21 pagesDetailed Tech Audit ChecklistsSayed Abo ElkhairNo ratings yet

- Pareto ActualizadoDocument15 pagesPareto ActualizadoHIERRO ECO ROMERO HUAYTONo ratings yet

- PerhitunganDocument1 pagePerhitunganDedy AlfiliantoNo ratings yet

- HR Accounts Maintenance Electric Mechanical Production Dispatch QualityDocument9 pagesHR Accounts Maintenance Electric Mechanical Production Dispatch QualityErection DepartmentNo ratings yet

- Time To Quiz 2da Quincena Abr 2019Document35 pagesTime To Quiz 2da Quincena Abr 2019Tattan ValdesNo ratings yet

- Grafik Gizi Desa 2015Document12 pagesGrafik Gizi Desa 2015Siti RisniaNo ratings yet

- Grafik Gizi Posy 2016Document12 pagesGrafik Gizi Posy 2016Siti RisniaNo ratings yet

- Dusun Kasus Capaian ABJ DBD Jan Feb Mar Apr Mei Jun Desa TegaltirtoDocument2 pagesDusun Kasus Capaian ABJ DBD Jan Feb Mar Apr Mei Jun Desa TegaltirtoDona ViolitaNo ratings yet

- Kepuasan Pelanggan Januari 2018 - Desember 2018 NO Unit BulanDocument2 pagesKepuasan Pelanggan Januari 2018 - Desember 2018 NO Unit BulanSaepulNo ratings yet

- Ianuarie 2023Document6 pagesIanuarie 2023Alex ButucaruNo ratings yet

- Semester Berjalan (AB)Document1 pageSemester Berjalan (AB)Cikita HoworNo ratings yet

- Audit KKTDocument2 pagesAudit KKTWildyNo ratings yet

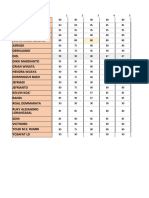

- Num. de Orden Mat. Prom. Mat. Asist. Soc. Prom. Leng - ESP. Prom. Leng - Esp. Asist. Naturales Prom. Naturales AsistDocument1 pageNum. de Orden Mat. Prom. Mat. Asist. Soc. Prom. Leng - ESP. Prom. Leng - Esp. Asist. Naturales Prom. Naturales Asistargenis de la cruzNo ratings yet

- Format Kehadiran PramukaDocument3 pagesFormat Kehadiran PramukaDinaNo ratings yet

- RepairreplaceavequipmentDocument1 pageRepairreplaceavequipmentRishika ReddyNo ratings yet

- Book 1Document2 pagesBook 1Akmal HafizhNo ratings yet

- Rekap PTS IpaDocument23 pagesRekap PTS IpaArief Setiawan MuslimNo ratings yet

- Case "Morskie Oko"Document4 pagesCase "Morskie Oko"Yanina SalamandraNo ratings yet

- Final Score Kpi Assessment: Brownfield Isbl Jetty & Building Kariangau OsblDocument3 pagesFinal Score Kpi Assessment: Brownfield Isbl Jetty & Building Kariangau OsblIrvan IrvanNo ratings yet

- Updated Incentives Plan - Sheet1Document1 pageUpdated Incentives Plan - Sheet1Shashank RaiNo ratings yet

- Kayonza Rwandaequip Weekly Performance - Live DashboardDocument1 pageKayonza Rwandaequip Weekly Performance - Live Dashboarddannytuyisenge1998No ratings yet

- Indicator Target: Mock Drill in Emergency Department TAT Laboratorium For OPD PatientDocument27 pagesIndicator Target: Mock Drill in Emergency Department TAT Laboratorium For OPD PatientyuliNo ratings yet

- Glassdoor Ratings of The Picks - Seeking Alpha Investing GroupsDocument2 pagesGlassdoor Ratings of The Picks - Seeking Alpha Investing GroupsAndrew OstaNo ratings yet

- Internal Revenue Allotment DependencyDocument10 pagesInternal Revenue Allotment DependencyRheii EstandarteNo ratings yet

- Calculo Auditores GaaDocument5 pagesCalculo Auditores GaaEyner VelasquezNo ratings yet

- Pre SupuestoDocument14 pagesPre SupuestoMafe RojasNo ratings yet

- Cinta Queen Gil 4Document1 pageCinta Queen Gil 4Carlos Gutierrez LondoñoNo ratings yet

- Nilai PKL TKRDocument17 pagesNilai PKL TKRMuhammad TaufiqramliNo ratings yet

- Cuci Tangan Semester 2 2018Document1 pageCuci Tangan Semester 2 2018NopiNo ratings yet

- DataDocument1 pageDatajulius anthony magallonNo ratings yet

- Sample and HoldDocument12 pagesSample and Holdjulius anthony magallonNo ratings yet

- Math BDocument334 pagesMath Bjulius anthony magallonNo ratings yet

- Assignment #10: Massachusetts Institute of Technology - Physics DepartmentDocument2 pagesAssignment #10: Massachusetts Institute of Technology - Physics Departmentjulius anthony magallonNo ratings yet