23 - PDFsam - Escholarship UC Item 5qd0r4ws

23 - PDFsam - Escholarship UC Item 5qd0r4ws

Uploaded by

Mohammad0 ratings0% found this document useful (0 votes)

1 views1 pageOriginal Title

23_PDFsam_eScholarship UC item 5qd0r4ws

Copyright

© © All Rights Reserved

Available Formats

PDF, TXT or read online from Scribd

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

© All Rights Reserved

Available Formats

Download as PDF, TXT or read online from Scribd

0 ratings0% found this document useful (0 votes)

1 views1 page23 - PDFsam - Escholarship UC Item 5qd0r4ws

23 - PDFsam - Escholarship UC Item 5qd0r4ws

Uploaded by

MohammadCopyright:

© All Rights Reserved

Available Formats

Download as PDF, TXT or read online from Scribd

You are on page 1of 1

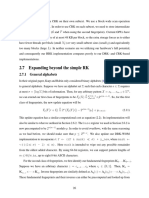

at a time.

This scenario is referred to as branch divergence and should be avoided as much as possible in order to reach high warp efficiency.
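The cost of branch divergence can be illustrated with a small model. The sketch below is only an approximation under stated assumptions: a warp has 32 threads, a two-way `if`/`else` is executed one path at a time with the threads on the other path idle, and warp efficiency is taken to be the average fraction of active threads per issued instruction. The function name `warp_efficiency` and the instruction counts are hypothetical and chosen for illustration.

```python
WARP_SIZE = 32  # threads per warp (assumed, as on NVIDIA GPUs)

def warp_efficiency(takes_if, if_instrs, else_instrs):
    """Average fraction of active threads per issued instruction.

    takes_if:    WARP_SIZE booleans, True if that thread takes the 'if' path.
    if_instrs:   hypothetical instruction count of the 'if' path.
    else_instrs: hypothetical instruction count of the 'else' path.
    """
    assert len(takes_if) == WARP_SIZE
    n_if = sum(takes_if)
    n_else = WARP_SIZE - n_if
    issued = 0  # instructions the warp must issue
    useful = 0  # thread-instructions that do real work
    if n_if > 0:  # a path is issued only if at least one thread takes it
        issued += if_instrs
        useful += n_if * if_instrs
    if n_else > 0:
        issued += else_instrs
        useful += n_else * else_instrs
    return useful / (WARP_SIZE * issued)

uniform = [True] * WARP_SIZE                   # no divergence
half = [i % 2 == 0 for i in range(WARP_SIZE)]  # threads alternate paths
print(warp_efficiency(uniform, 10, 10))  # 1.0
print(warp_efficiency(half, 10, 10))     # 0.5
```

In this model a single thread taking a different path is enough to make the warp issue both paths, which is why divergence within a warp is costly even when few threads diverge.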

1.2.2 GPU Memory Hierarchy

GPUs possess several memory resources organized into a memory hierarchy, each with a different capacity, access time, and access scope (i.e., the set of threads that share it). All threads have access to a global DRAM (global memory). For example, the Tesla K40c GPU has 12 GB of global memory available. This is the largest memory resource available on the device, but it is generally the slowest one as well, i.e., it requires the most cycles to fetch specific memory contents. All threads within a thread-block have access to a faster but more limited shared memory. Shared memory behaves like a manually controlled cache at the programmer’s disposal, with orders of magnitude faster access time compared to global memory but much less available capacity (48 KB to be shared among all resident blocks per SM on a Tesla K40c). Portions of shared memory are assigned to thread-blocks by the scheduler and cannot be accessed by other thread-blocks (even those on the same SM). Once a block’s execution is finished, all of its shared memory contents are lost and the portion is re-assigned to a new block. At the lowest level of the hierarchy, each thread has access to local registers. While registers have the fastest access time, each register is accessible only by its own thread; the register file (64 K 32-bit registers per SM on a Tesla K40c) is shared among all resident blocks on that SM. GPUs also have other types of memory, such as the L1 cache (on-chip, 16 KB per SM, mostly used for register spills and dynamically indexed arrays), the L2 cache (on-chip, 1.5 MB for the whole Tesla K40c device), constant memory (64 KB on a Tesla K40c), texture memory, and local memory [78].
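Because shared memory is divided among the blocks resident on an SM, the amount each block requests bounds how many blocks can be resident at once. The following sketch works through that arithmetic for the 48 KB figure quoted above. It is a simplification: it ignores allocation granularity and every other limit (registers, threads per SM), and the cap of 16 resident blocks per SM is an assumption for this class of device, not a figure from the text. The function name `max_resident_blocks` is hypothetical.

```python
SHARED_MEM_PER_SM = 48 * 1024  # bytes per SM, the Tesla K40c figure quoted above

def max_resident_blocks(shared_bytes_per_block, hw_block_limit=16):
    """Upper bound on resident blocks per SM from shared memory alone.

    hw_block_limit is an assumed hardware cap on resident blocks per SM.
    """
    if shared_bytes_per_block == 0:
        return hw_block_limit  # shared memory is not the limiting resource
    return min(hw_block_limit, SHARED_MEM_PER_SM // shared_bytes_per_block)

# A block of 256 threads that stages one 4-byte value per thread:
print(max_resident_blocks(256 * 4))    # 16 (capped by the assumed block limit)
# A block that stages a 12 KB tile:
print(max_resident_blocks(12 * 1024))  # 4
# A block that requests 32 KB leaves room for only one resident block:
print(max_resident_blocks(32 * 1024))  # 1
```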

On GPUs, memory accesses are done in units of 128 bytes. As a result, it is preferred that the programmer writes the program in such a way that the threads within a warp access consecutive memory elements (coalesced memory access), e.g., 32 consecutive 4-byte units (such as float, integer, etc.), which together fill exactly one 128-byte unit. Any other memory access pattern wastes part of the available memory bandwidth of the device.
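The effect of the access pattern on bandwidth can be estimated by counting how many 128-byte units a warp touches. The sketch below is a simplified model, not a description of any particular hardware: it assumes 128-byte aligned units, 4-byte accesses, and no caching effects. The function names `transactions` and `bandwidth_efficiency` are hypothetical.

```python
SEGMENT_BYTES = 128  # size of one memory access unit, as stated above
WARP_SIZE = 32

def transactions(byte_addresses, access_bytes=4):
    """Number of distinct 128-byte units touched by one warp-wide access."""
    segments = set()
    for addr in byte_addresses:
        first = addr // SEGMENT_BYTES
        last = (addr + access_bytes - 1) // SEGMENT_BYTES
        segments.update(range(first, last + 1))
    return len(segments)

def bandwidth_efficiency(byte_addresses, access_bytes=4):
    """Requested bytes divided by fetched bytes (1.0 means nothing is wasted)."""
    requested = len(set(byte_addresses)) * access_bytes
    fetched = transactions(byte_addresses, access_bytes) * SEGMENT_BYTES
    return requested / fetched

coalesced = [4 * i for i in range(WARP_SIZE)]      # thread i reads element i
stride_2 = [8 * i for i in range(WARP_SIZE)]       # thread i reads element 2*i
stride_32 = [128 * i for i in range(WARP_SIZE)]    # thread i reads element 32*i
misaligned = [4 * i + 4 for i in range(WARP_SIZE)] # consecutive, offset by one element

for name, pattern in [("coalesced", coalesced), ("stride 2", stride_2),
                      ("stride 32", stride_32), ("misaligned", misaligned)]:
    print(name, transactions(pattern), bandwidth_efficiency(pattern))
```

Under these assumptions the coalesced pattern needs one unit and wastes nothing, while the stride-32 pattern touches 32 units to deliver the same 128 useful bytes.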
