Volume 6, Issue 9, September – 2021 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

Early Diabetic Risk Prediction using Machine Learning Classification Techniques

Adetunji Olusogo Julius¹, Ayeni Olusola Ayokunle², Fasanya Olawale Ibrahim³

¹,³ Department of Computer Engineering, Ajayi Polytechnic Ikere-Ekiti, Ekiti State, Nigeria.

² Department of Computer Science, Federal Polytechnic Ado Ekiti, Ekiti State, Nigeria.

Abstract:- Diabetes is a metabolic disorder that results from a deficiency of insulin secretion, which is needed to control high sugar content in the body. At an early stage, diabetes can be managed and controlled. Prolonged diabetes leads to complications such as diabetic retinopathy, angina, heart attack, stroke, atherosclerosis and even death. Assessing diabetic risk at an early stage with machine learning classification techniques, based on observed sample features, is therefore necessary. The dataset used for this paper was obtained from the University of California, Irvine (UCI) repository of machine learning databases and was analyzed on the WEKA application platform. The dataset contains 520 samples with 17 distinct attributes. The machine learning algorithms used as classifiers are K-Nearest Neighbors (KNN), Support Vector Machine (SVM) and Functional Tree (FT). The results were evaluated on accuracy, specificity and precision. KNN has the highest accuracy, specificity and precision of 98.08%, 99.00% and 99.36% respectively.

Keywords:- Machine Learning Algorithm, WEKA, Diabetes, KNN, SVM, FT.

I. INTRODUCTION

Diabetes poses a threat to human lives and is characterized by hyperglycemia (Zou et al., 2018). It is a chronic disorder resulting from a deficiency of insulin secretion to control high sugar content in the body, and it affects both sexes worldwide (Mahboob et al., 2018). According to the World Health Organization (WHO) 2021 report, 422 million people worldwide have diabetes, with 1.6 million deaths each year, most of them in underdeveloped or developing countries. Diabetes is associated not only with the major factor of "high sugar concentration" in the body but also with other factors such as obesity, partial paresis, age, delayed healing, muscle stiffness, hereditary factors and deficiency in insulin secretion (Deepti and Dilip, 2018). Early risk prediction of diabetes helps to prevent its complications (Vijayan and Anjavili, 2015). It would also save medical practitioners the time and energy spent on clinical diagnosis of diabetic patients (Muhammad et al., 2018).

The dataset is obtained from the research paper (Faniqul Islam et al., 2020). The dataset contains 520 samples with 17 distinct attributes.

II. RELATED WORK

Sakshi Gujral et al. (2017) applied two classification techniques, Support Vector Machine and Decision Trees, to the prediction of diabetes mellitus. The dataset employed was the PIMA Indian Diabetes Data-set, which concerns women's health. Support Vector Machine achieved the higher accuracy, 82%.

Deepti Sisodia et al. (2018) employed Support Vector Machine, Decision Tree and Naïve Bayes algorithms. The research was performed on the Pima Indians Diabetes Database (PIDD), which is sourced from the UCI machine learning repository. The results showed that Naïve Bayes outperformed the other algorithms with an accuracy of 76.30%.

Sneha and Gangil (2019) applied Decision Tree, Naïve Bayesian and Random Forest algorithms to the early prediction of diabetes mellitus using optimal feature selection. The proposed method focused on selecting the attributes for the early detection of diabetes using predictive analysis. Decision Tree and Random Forest had the highest specificity, 98.20% and 98.00% respectively, while Naïve Bayesian had the best accuracy, 82.30%.

Hassan, Malaserene and Leema (2020) conducted prediction of diabetes mellitus using classification techniques such as Decision Tree, K-Nearest Neighbors and Support Vector Machines. Support Vector Machine (SVM) outperformed Decision Tree and KNN with the highest accuracy, 90.23%.

III. METHODOLOGY

The proposed system includes Data Collection, Data Pre-processing, System Architecture and System Evaluation.

3.1 Data Collection

The dataset used in this paper was obtained from the University of California, Irvine (UCI) repository of machine learning databases. The dataset contains 520 samples with 17 distinct attributes.

IJISRT21SEP287 www.ijisrt.com 502

3.2 Data Pre-processing

The dataset is in CSV format and is converted to ARFF format, the form suitable for the WEKA application.

3.3 System Architecture

Figure 3.1 depicts the architecture of the proposed system, in which the early stage diabetic risk prediction dataset is classified with K-Nearest Neighbors (KNN), Random Forest (RF), Support Vector Machine (SVM) and Functional Tree (FT) classifiers.

Figure 3.1: System Architecture

3.4 System Pseudo code

The pseudo code of the system is enumerated below:

Step 1: Load the diabetes dataset into the WEKA application

Step 2: Pre-process the dataset

Step 3: Classify using Support Vector Machine (SVM) and record the result

Step 4: Classify using K-Nearest Neighbors (KNN) and record the result

Step 5: Classify using Functional Tree (FT) and record the result

Step 6: Evaluate the results

Steps 1 and 2 involve loading the dataset into the WEKA application and converting it from CSV format to ARFF format, respectively. Steps 3, 4 and 5 are the classifications using Support Vector Machine (SVM), K-Nearest Neighbors (KNN) and Functional Tree (FT) algorithms, respectively. Step 6 is the evaluation of the results based on three metrics: accuracy, specificity and precision.

3.5 Metrics used for classification

i. Accuracy: Accuracy is the percentage of correctly classified instances. Equivalently, it is obtained by subtracting the percentage of incorrectly classified instances from 100. Accuracy is given by

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FN + FP} \times 100\%$$

where

TP = True Positive

TN = True Negative

FN = False Negative

FP = False Positive

ii. Specificity: Specificity is the proportion of actual negatives that are predicted as negative (true negatives).

$$\text{Specificity} = \frac{TN}{TN + FP}$$

iii. Precision: Precision is the number of true positives divided by the total number of true positives and false positives.

$$\text{Precision} = \frac{TP}{TP + FP}$$

3.6 Experimental Set Up

The experimental set up involved the following:

i. WEKA Software: Waikato Environment for Knowledge Analysis (WEKA) is a collection of machine learning algorithms for data mining tasks. It implements algorithms for data pre-processing, classification, regression, clustering and association rules. Visualization tools are also included.

Figure 3.2: WEKA tool Applications interface (GUI Chooser)

ii. Dataset: The dataset employed in this paper was obtained from the University of California, Irvine (UCI) repository of machine learning databases. The paper that generated the dataset is (Faniqul Islam et al., 2020).

iii. K-Fold Cross Validation: The experiments in the WEKA application use 10-fold cross validation. The dataset is randomly partitioned into 10 groups. In each round, nine groups are used to train the classifier and the remaining group is used to test it, so that each group serves as the test set once.
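The 10-fold cross validation described in Section 3.6 can be sketched in a few lines of Python. This is a minimal illustration, not WEKA's implementation: the function names `k_fold_indices` and `train_test_splits` and the fixed seed are assumptions made here, and WEKA's own fold assignment (which may be stratified by class) is not reproduced.

```python
import random


def k_fold_indices(n_samples, k=10, seed=0):
    """Randomly partition range(n_samples) into k folds of near-equal size."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Fold i takes every k-th shuffled index starting at position i.
    return [indices[i::k] for i in range(k)]


def train_test_splits(n_samples, k=10, seed=0):
    """Yield (train, test) index lists; each fold is the test set exactly once."""
    folds = k_fold_indices(n_samples, k, seed)
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


# 520 is the number of samples reported for the dataset.
splits = list(train_test_splits(520, k=10))
for round_no, (train, test) in enumerate(splits, start=1):
    print(round_no, len(train), len(test))  # each round: 468 training, 52 test
```

With 520 samples and 10 folds, every round trains on 468 samples and tests on the remaining 52, and every sample is tested exactly once across the ten rounds.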

IV. RESULTS

4.1 Experimental Results

Figure 4.1 demonstrates how to load the dataset into the WEKA application.

Figure 4.1: Loading dataset into WEKA Application
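Before loading, the CSV file is converted to ARFF (Section 3.2). The paper does not say how the conversion was done, so the following is only a sketch of what such a conversion involves. The function `csv_to_arff`, the relation name `diabetes_risk` and the three sample columns are hypothetical, and the quoting rule is simplified (it handles whitespace only).

```python
import csv
import io


def is_number(text):
    """Return True if the text parses as a float."""
    try:
        float(text)
        return True
    except ValueError:
        return False


def quote(token):
    """Wrap a token in single quotes if it contains whitespace (simplified rule)."""
    return f"'{token}'" if any(ch.isspace() for ch in token) else token


def csv_to_arff(csv_text, relation="diabetes_risk"):
    """Convert CSV text with a header row into ARFF text."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    header, data = rows[0], rows[1:]
    lines = [f"@relation {relation}", ""]
    for col, name in enumerate(header):
        values = [row[col] for row in data]
        if all(is_number(v) for v in values):
            attr_type = "numeric"
        else:
            # Nominal attribute: list the distinct values in sorted order.
            attr_type = "{" + ",".join(quote(v) for v in sorted(set(values))) + "}"
        lines.append(f"@attribute {quote(name)} {attr_type}")
    lines += ["", "@data"]
    lines += [",".join(quote(v) for v in row) for row in data]
    return "\n".join(lines) + "\n"


# Hypothetical two-row sample; the real dataset has 520 rows and 17 attributes.
sample_csv = "Age,Obesity,class\n40,No,Positive\n58,Yes,Negative\n"
arff = csv_to_arff(sample_csv)
print(arff)
```

The output declares `Age` as numeric and the other two columns as nominal attributes with their observed values, followed by the `@data` section containing the original rows.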

Figure 4.2: Application of Functional Tree (FT) Algorithm

Figure 4.3: Application of Support Vector Machine (SVM) Algorithm

Figure 4.4: Application of K-Nearest Neighbors Algorithm
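For readers unfamiliar with K-Nearest Neighbors, the idea behind the classifier can be sketched as follows. This is not WEKA's implementation, and the paper does not state the value of k or the distance function used. Here k = 3, the Hamming distance and the toy yes/no feature vectors are all assumptions chosen for illustration.

```python
from collections import Counter


def hamming(a, b):
    """Count the positions at which two equal-length feature vectors differ."""
    return sum(x != y for x, y in zip(a, b))


def knn_predict(train_X, train_y, query, k=3):
    """Predict the majority class among the k training samples closest to query."""
    order = sorted(range(len(train_X)), key=lambda i: hamming(train_X[i], query))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]


# Hypothetical toy data: three yes/no symptoms encoded as 1/0.
train_X = [(1, 1, 0), (1, 0, 1), (1, 1, 1), (0, 0, 0), (0, 1, 0), (0, 0, 1)]
train_y = ["Positive", "Positive", "Positive", "Negative", "Negative", "Negative"]

print(knn_predict(train_X, train_y, (1, 0, 0)))  # Positive
print(knn_predict(train_X, train_y, (0, 0, 0)))  # Negative
```

A new sample is assigned the class that most of its nearest training samples carry, so no explicit model is fitted in advance.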

Figure 4.5: Application of Random Forest Algorithm

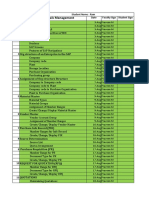

Table 4.1: Summary of confusion matrix and results of evaluation metrics for the classification using machine learning algorithms

| Number | Algorithm | True Positive | False Negative | False Positive | True Negative | Accuracy (%) | Precision (%) | Specificity (%) |
|---|---|---|---|---|---|---|---|---|
| 1 | K-Nearest Neighbors (KNN) | 312 | 8 | 2 | 198 | 98.08 | 99.36 | 99.00 |
| 2 | Support Vector Machine (SVM) | 300 | 20 | 10 | 190 | 94.23 | 96.77 | 95.00 |
| 3 | Functional Tree (FT) | 298 | 22 | 11 | 189 | 93.65 | 96.44 | 94.50 |
| 4 | Random Forest (RF) | 313 | 7 | 6 | 194 | 97.50 | 98.12 | 97.00 |
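The percentages in Table 4.1 can be cross-checked against the confusion-matrix counts using the formulas of Section 3.5. The short script below is such a check. The function name `metrics` and the dictionary layout are choices made here, and precision and specificity are multiplied by 100 to match the percentage columns of the table.

```python
def metrics(tp, fn, fp, tn):
    """Return accuracy, precision and specificity as percentages."""
    total = tp + tn + fn + fp
    return {
        "accuracy": 100.0 * (tp + tn) / total,
        "precision": 100.0 * tp / (tp + fp),
        "specificity": 100.0 * tn / (tn + fp),
    }


# Counts (TP, FN, FP, TN) copied from Table 4.1.
confusion = {
    "KNN": (312, 8, 2, 198),
    "SVM": (300, 20, 10, 190),
    "FT": (298, 22, 11, 189),
    "RF": (313, 7, 6, 194),
}

results = {name: metrics(*counts) for name, counts in confusion.items()}
for name, m in results.items():
    print(
        f"{name}: accuracy={m['accuracy']:.2f} "
        f"precision={m['precision']:.2f} specificity={m['specificity']:.2f}"
    )
# KNN: accuracy=98.08 precision=99.36 specificity=99.00
# SVM: accuracy=94.23 precision=96.77 specificity=95.00
# FT: accuracy=93.65 precision=96.44 specificity=94.50
# RF: accuracy=97.50 precision=98.12 specificity=97.00
```

Each row sums to 520 instances, and the recomputed values agree with the table to two decimal places.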

V. CONCLUSION

The research work was conducted using Support Vector Machine (SVM), K-Nearest Neighbors (KNN), Functional Tree (FT) and Random Forest (RF) algorithms as classifiers. K-Nearest Neighbors performed best, producing the highest accuracy (98.08%), precision (99.36%) and specificity (99.00%).

FUTURE WORK

Further studies can be carried out using other classification techniques, adopting feature extraction or feature selection techniques, or using other datasets.

REFERENCES

[1]. Deepti, S. and Dilip, S. (2018). Prediction of Diabetes using Classification Algorithms. International Conference on Computational Intelligence and Data Science.

[2]. Faniqul Islam, M. M. et al. (2020). Likelihood prediction of diabetes at early stage using data mining techniques. Computer Vision and Machine Intelligence in Medical Image Analysis. Springer, Singapore, 113-125.

[3]. Hassan, A. S. et al. (2020). Diabetes Mellitus Prediction using Classification Techniques. International Journal of Innovative Technology and Exploring Engineering (IJITEE), ISSN: 2278-3075, Volume 9, Issue 5.

[4]. Mahboob, T. A. et al. (2018). A model for early prediction of diabetes. Informatics in Medicine Unlocked.

[5]. Muhammad, A. et al. (2018). Prediction of Diabetes Using Machine Learning Algorithms in Healthcare. Proceedings of the 24th International Conference on Automation & Computing, Newcastle University.

[6]. Sakshi Gujral et al. (2017). Detecting and Predicting Diabetes Using Supervised Learning: An Approach towards Better Healthcare for Women. International Journal of Advanced Research in Computer Science, Volume 8, No. 5.

[7]. Naveen, K. G. et al. (2020). Prediction of Diabetes Using Machine Learning Classification Algorithms. International Journal of Scientific and Technology Research.

[8]. Vijayan, V. V. and Anjavili, C. (2015). Prediction and diagnosis of diabetes mellitus: A machine learning approach. IEEE Recent Advances in Intelligent Computational Systems.

[9]. Zou et al. (2018). Predicting Diabetes Mellitus with Machine Learning Techniques. Frontiers in Genetics.
