Professional Documents

Culture Documents

Use of Adaptive Boosting Algorithm To Estimate User's Trust in The Utilization of Virtual Assistant Systems

Original Description:

Original Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Use of Adaptive Boosting Algorithm To Estimate User's Trust in The Utilization of Virtual Assistant Systems

Copyright:

Available Formats

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

Use of Adaptive Boosting Algorithm to Estimate

User’s Trust in the Utilization of

Virtual Assistant Systems

1

Akazue Maureen, 2Onovughe Anthonia, 3Edith Omede,

1,2,3

Department of Computer Science, Faculty of science, Delta State University Abraka

4

Hampo, John Paul A.C.

4

Computer Science Department, Federal University of Technology, Owerri

Abstract:- User trust in technology is an essential factor Machine learning is the ability of a computer or a program

for the usage of a system or machine. AI enabled to learn from experience concerning some tasks by the

technologies such as virtual digital assistants simplify a increase in the computer or program’s performance.

lot of process for humans starting from simple search to

a more complex action like house automation and Machine learning can be supervised, unsupervised or

completion of some transitions notably Amazon’s Alexa. reinforcement learning. In supervised machine learning,

Can human actually trust these AI enabled technologies? models can be built that learn from the data, thereby the

Hence, this research applied adaptive boosting ensemble model having the ability to guesstimate the pattern and

learning approach to predict users trust in virtual relation for the outputs.

assistants. A technology trust dataset was obtained from

figshare.com and engineered before training the Many algorithms can be used during the development of

adaptive boosting (AdaBoost) algorithm to learn the a model in supervised learning, which is developed in

trends and pattern. The result of the study showed that different mathematical backgrounds. They are:

AdaBoost had an accuracy of 94.31% for the testing set. Linear and polynomial regression,

Basis function construction using adaptive modeling,

Keywords:- Machine Learning, Ensemble Model, Predictive Relevance vector machines using the Bayesian

Model, Trust, Intelligent Virtual Assistants framework,

Support vector machines,

I. INTRODUCTION Regression trees using recursive partitioning or

continuous class learning,

Intelligent machines, and more broadly, intelligent Multilayer perceptrons using neural networks.

systems, are becoming more prevalent in people's daily Development of models using supervised learning is

lives. Despite major advances in automation, human done following the procedure below:

supervision and intervention are still required in almost

every industry, from manufacturing to transportation to

After Deciding on the Input Parameters, Gather a

crisis management and healthcare [1]. AI is becoming more Decent Amount of Training and Test Examples;

prevalent in various fields in the world notably in the

Analyze the correlation between the input parameters.

medical industry. However, there are certain worries about

Less correlated inputs will give more reliable results;

A.I.'s ability to make critical decisions in a way that humans

Choose one of the algorithms from the above list and

would consider fair, to be aware of and aligned with human

values that are relevant to the problems being addressed, and decide upon the other parameters required for that

to explain its thinking and decision-making. At its core, AI algorithm;

produces automated decisions, which can lead to a decision- Train the model using the training examples and the

making bias because physicians are more likely to trust chosen algorithm;

diagnostic test results generated by AI-driven machines Lastly, check the reliability and accuracy by predicting

without putting them through rigorous scrutiny [2]. the target values for the test dataset using the developed

model and comparing them with the actual values.

Machine learning (ML) is a subset of data mining

(DM). Data mining is the analysis of large data to discover Ensemble techniques of machine learning will be

hidden trends, patterns, and relationships, thereby applied in the development of the predictive model to

developing a computer model to assist in decision-making. estimate user trust. Ensemble machine learning as a machine

Data mining is also termed knowledge discovery in learning technique employs the development of various

databases and datasets [3]; [4], and it constitutes a stage in models and strategically integrating the various model to

Knowledge Discovery Database (KBB) process with other solve a problem which most times are computational

stages being data selection, data cleaning, and evaluation. intelligence problems [5]. Multiple learning algorithms are

IJISRT23JAN727 www.ijisrt.com 502

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

combined to solve a single problem, hence having better Personal assistants enabled with AI technologies are

prediction and classification performance, than the usually referred to as Intelligent Virtual Assistants (IVA) or

performance from a single algorithm. Ensemble learning has as Virtual Personal Assistants (VPN) [11]. IVA is a software

been applied to an eclectic array of topics in regression, that uses human voice and rational data to give help by

classification, feature selection, and abnormal point noticing inquiries in the usual dialect, making proposals, and

reduction [5]. accomplishing activities.

Trust plays an important role in the usage of Virtual assistants whether software or hardware (as in

technological devices, the growth, and advancement of a the case of Personal Digital Assistant) is an agent with the

system [6]. Trust is a term coined in most literature of social sole goal of assisting the user [12]; this is similar to the case

sciences, however, it has been adopted by the sciences, of assistants in real-life settings. Intelligent virtual assistants

especially in computing. Trust is a measure of reliability, are sometimes capable of operating in pre-recorded voices.

utility, and availability; that improves the overall Some of the tasks an intelligent virtual assistant can do are:

functionalities of technological systems like the quality of questioning (online search), controlling home automation

services, reputation, availability, risk, and confidence. devices (switching on the air conditioner remotely), media

playback, emails, to-do lists, and appointments. Some other

Human trust is essential for successful human-machine activities IVAs can do are making informed suggestions and

interactions and human trust can be divided into three kinds cracking funny one-liner jokes.

in the context of autonomous systems: dispositional,

situational, and learned [7]. Situational and learned trust is Table 1. Some IVAS and their Developer

based on a given situation (e.g., task difficulty) and S/N INTELLIGENT VIRTUAL DEVELOPER

experience (e.g., machine reliability), respectively. ASSISTANT

Dispositional trust refers to the component of trust that is 1. Bixby Samsung

dependent on demographics such as gender and culture,

2. Alexa Amazon

whereas dispositional and learned trust is based on a given

situation (e.g., task difficulty) and experience (e.g., machine 3. Siri Apple

reliability). Situational and acquired trust factors "may alter 4. Google Assistant Google

throughout a single conversation" [7], whereas all of these 5. Cortana Microsoft

trust factors influence how humans make judgments when

engaging with intelligent computers. Summarily, Intelligent Virtual Assistants Though Like

Software Chatbots, are More than Chatbots in their

Human trust has been attempted to be predicted using Range of Operation and Service Coverage [13]. Hence,

dynamic models based on human experience and/or self- they:

reported behavior [8]. However, retrieving human self- Do things for users by focusing on task completion

reported behavior continually for use in a feedback control Get what the user says thereby having intent

system is impractical. Although these measurements have understanding through the conversation between the

been linked to human trust levels [9], they have not been user and the iva

investigated in the context of real-time trust sensing. This Get to know the user thereby learning the user’s

research work offers a model to predict user trust based on personal information and applying them.

collected datasets on users’ interaction with artificial

intelligence (AI) enabled machines and devices. II. MATERIALS AND METHODS

Human-computer interaction is the interaction that Jupyter notebook and Python programming language

exists between humans and computers. The human is was used to develop this model in association with machine

referred to as the user while the computer can be referred to learning and data science frameworks such as Scikit learn,

as a machine. [10] defined human-computer interaction Pandas, NumPy, Matplotlib, and Seaborn. Scikit learn was

(HCI) as a discipline concerned with the design, evaluation, used for building the model; it has all the algorithms and

and implementation of interactive computing systems for techniques used in the model-building process. NumPy and

human use and with the study of major phenomena Pandas were used for linear Algeria and Scientific

surrounding them. computing of this research. Pandas were also used in the

cleaning and engineering of the data. Seaborn and

Human-computer interaction has a basic goal and a Matplotlib are also data science packages that were used for

long-term goal. The basic goal is to improve the interactions the visualization of the patterns in the dataset. The dataset

between users and computers by making computers more used in this research was downloaded from figsshare.com.

usable and receptive to the user's needs. In the long run, HCI

has a goal of designing systems that minimize the barrier A. Methodology

between the human's cognitive model of what they want to The existing system is a single model adopted by [14].

accomplish and the computer's understanding of the user's They implemented Decision Tree (DT) algorithm in

task. building their model which gave them a precision of 92%.

IJISRT23JAN727 www.ijisrt.com 503

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

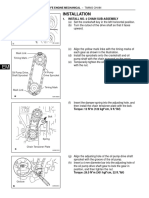

The model presented in this study is an enhanced an easy file format for python to import using the pandas'

machine learning model for predicting users' trust in library. The data in the CSV file is then converted to vectors

technology using a trust dataset from figshare.com. The which is the format the model can be trained on, the

architecture of the model is shown in Figure 1. The hyperparameters are tuned till the model is ready for use,

development process starts by pulling data from the storage once the performance was sufficient the model was then

repository from figshare.com, having a .csv format; which is saved to disk for prediction.

Fig 1 High Level Architecture of the Proposed Model

During the development of the model, the trust data Different algorithms come into play to develop this

was obtained from figshare.com and the fields were trust predictive system. These algorithms are dataset

engineered to fit the proper predictive model of this cleaning, dataset engineering, model splitting, model

research. Using the train-test-split algorithm of scikit-learn building, and metric and evaluation algorithms.

python framework, the dataset was split into 75% for

training and 25% for testing, the dataset was then converted B. Pseudocode For Data Cleansing

to vectors and trained using logistic regression from the sci- def dropna():

kit-learn library which was then exported for deployment data = {"group": ["g1", np.nan, "g1", "g2", np.nan], "B": [0,

and use outside the development environment, the model's 1, 2, 3, 4]}

hyperparameters were tuned for the model to achieve the df = pd.DataFrame(data)

best accuracy and this continues iteratively till the training is grouped = df.groupby("group", dropna=False)

completed and the model is saved to storage which can be result = grouped.indices

deployed on several platforms for predicting users trust in dtype = np.intp

technology. The process of training the model is shown in expected = {

figure 2. "g1": np.array([0, 2], dtype=dtype),

"g2": np.array([3], dtype=dtype),

np.nan: np.array([1, 4], dtype=dtype),

}

for result_values, expected_values in zip(result.values(),

expected.values()):

tm.assert_numpy_array_equal(result_values,

expected_values)

assert np.isnan(list(result.keys())[2])

assert list(result.keys())[0:2] == ["g1", "g2"]

C. Pseudocode For Data Splitting

train_test_split(*arrays, test_size=None,

train_size=None, random_state=None, shuffle=True,

stratify=None)

Fig 2 Training the prediction model to estimate user’s trust

in technology

IJISRT23JAN727 www.ijisrt.com 504

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

Parameters Once the python web app is triggered using the

*Arrays: inputs such as lists, arrays, data frames, or command, python app.py on command prompt. The app

matrices runs on the localhost with address 127.0.0.1 and port 5000.

Test_size: this is a float value whose value ranges When the localhost address is typed on the browser, the web

between 0.0 and 1.0. It represents the proportion of our app is made available as shown in figure 3. Figure 4 and

test size. Its default value is none. figure 5 shows the input interface and the output interface

Train_size: this is a float value whose value ranges respectively for prediction of users trust using the deployed

between 0.0 and 1.0. It represents the proportion of our web application.

train size. Its default value is none.

Random_state: this parameter is used to control the

shuffling applied to the data before applying the split. It

acts as a seed.

Shuffle: this parameter is used to shuffle the data before

splitting. Its default value is true.

Stratify: this parameter is used to split the data in a

stratified fashion.

Algorithm

AdaBoost which is an acronym for Adaptive Boosting

builds a strong classifier from multiple weak classifiers.

The algorithm is simply below:

Initialize the dataset and assign equal weight to each of

the data points.

Provide this as input to the model and identify the

wrongly classified data points.

Increase the weight of the wrongly classified data points.

if (got required results)

Goto step 5 Fig 4 Input Interface of the Deployed Model

else

Goto step 2 Prediction is based on the values as computed from the

end if selections made by the user, with respect to the listed

End intelligent virtual assistant. The final values for Trust,

III. IMPLEMENTATION Neutral and Untrust is fed into the deployed machine

learning model for the prediction of the user’s trust. If the

The pickled model was made available on visual studio class after prediction is 1, then the user trust technology and

code. It was de-pickled (loaded) using the pickle module in the probability of trust is given. Also, if the outputted class

python programming language and deployed using flask after prediction is 0, then the user does not trust technology.

framework. It consists of HTML, CSS, JavaScript and The probability of trust which is close to 0 and less than 0.5

python programming language. is also given.

Fig 3 Trustly Home Page Fig 5 Output Interface of the Deployed Model

IJISRT23JAN727 www.ijisrt.com 505

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

IV. PERFORMANCE EVALUATION training features are stored. Matplotlib and Seaborn were

used for visualization. Pandas visualization module was also

A. Accuracy was Used to Evaluate the Performance of the employed.

Model.

This study has shown that users' trust prediction

Below are vital performance metrics: concerning technology is better with an ensemble model

True positive – this is the total number of correctly using adaptive boosting algorithm which gave an accuracy

classified attacks. It is denoted as TP of 94.31%.

True negative – this is the total number of correctly

classified non-attack. It is denoted as TN This work can be applied to estimate user’s trust in

False positive – this is the total number of wrongly virtual intelligent assistants which are AI enabled

classified attack and it is denoted as FP technologies. The virtual intelligent assistants that were

False negative – this is the total number of wrongly tested are Google Assistant, Siri, Cortana, Bixby and Alexa.

classified non-attack and it is denoted as FN

The Suggestions for Further Studies are as Follows:

Further research can be carried out using the

hybridization of ensemble learning and deep learning.

Further research could seek to deploy the model into a

mobile system.

REFERENCES

[1]. Yue, W. and Fumin, Z. (2017). Trends in Control and

Decision-Making for Human–Robot Collaboration

Systems.

[2]. Dorado J., del Toro X., Santofimia M.J., Parren õ , A.,

Cantarero, R., Rubio, A. and Lopez, J.C. (2019). A

computer-vision-based system for at-home

rheumatoid arthritis rehabilitation. International

Journal of Distributed Sensor Networks.15(9).

doi:10.1177/1550147719875649

[3]. Divya, K. and Srinivasan B. (2021). A TRUST-

Fig 6 Model’s Confusion Matrix BASED PREDICTIVE MODEL FOR MOBILE AD

HOC NETWORKS. International Journal on AdHoc

Accuracy – the accuracy of the classification is given as: Networking Systems (IJANS) Vol. 11, No. 3,

𝑇𝑃 + 𝑇𝑁 [4]. Sefer, K. and Yaseen, A. (2019). Automatic

𝐶𝐴 = ∗ 100 Malware Detection using Data Mining Techniques

𝑇𝑃 + 𝑇𝑁 + 𝐹𝑃 + 𝐹𝑁

Accuracy gave 94.31% Based on Power Spectral Density (PSD),

Precision rate – percentage of all correctly classified of all International Journal of Computer Science and

attack packets, given as: Mobile Computing (IJCSMC), 8(3), PP27-30

𝑇𝑃 [5]. Akazue, M. and Ojeme, B. (2014) Building Data

𝑃𝑅 = ∗ 100 Mining for Phone Business. Orient.J. Comp. Sci. and

𝑇𝑃 + 𝐹𝑃

Precision rate is 94.87% Technol;7(3)

Recall – percentage of all rightly classified attack in the [6]. Yan, L., and Liu, Y. (2020). An Ensemble Prediction

dataset. This is given as: Model for Potential Student Recommendation Using

𝑇𝑃 Machine Learning. Symmetry, 12(5), 728. MDPI AG.

𝑅𝐶 = ∗ 100 Retrieved from

𝑇𝑃 + 𝐹𝑁

Recall is 70.66%. http://dx.doi.org/10.3390/sym12050728

[7]. Kelvin, H. A. & Masooda, B. (2014). Trust in

V. CONCLUSION Automation: Integrating Empirical Evidence on

Factors That Influence Trust. Human Factors: The

This study proposes a predictive model to estimate Journal of the Human Factors and Ergonomics

users' trust in technology. The model is an enhanced Society, (), 0018720814547570–

machine learning statistical model, trained on the data from . doi:10.1177/0018720814547570

figshare.com where the model learns predictive features [8]. Hussein, A., Elsawah, S. and Abbass, H. (2019). A

related to users' trust in technology. System Dynamics Model for Human Trust in

Automation under Speed and Accuracy

The model was built using python and a couple of Requirements. Proceedings of the Human Factors

machine learning libraries, sci-kit-learn (aka sklearn) was and Ergonomics Society 2019 Annual Meeting

used to build the machine learning model, NumPy for vector

processing, and Pandas to handle CSV files where the

IJISRT23JAN727 www.ijisrt.com 506

Volume 8, Issue 1, January – 2023 International Journal of Innovative Science and Research Technology

ISSN No:-2456-2165

[9]. Akash, K., Hu, W., Jain, N. & Reid, T. (2018). A

Classification Model for Sensing Human Trust in

Machines Using EEG and GSR. ACM Trans.

Interact. Intell. Syst. 8, 4, 20 pages.

https://doi.org/10.1145/3132743

[10]. Pravallika, B. (2018). LECTURE NOTES ON

HUMAN COMPUTER INTERACTION.

Information Technology, Institute of Aeronautical

Engineering

[11]. Bhosale, V., Raverkar, D. and Kadam, K, (2021).

GOOGLE ASSISTANT: NEED OF THE HOUR.

Contemporary Research in India

[12]. Batura, A. (2019). INTEGRATING VIRTUAL

ASSISTANT TECHNOLOGY INTO OMNI-

CHANNEL PROCESSES. Bachelor’s thesis Degree

programme in business logistics, South Eastern

University of Applied Science

[13]. Natale, S. (2020). To believe in Siri: A critical

analysis of AI voice assistants. Loughborough

University, UK.

[14]. El-Sayed, H., Ignatious, A.H., Kulkarni, P. and

Bouktif, S. (2020). Machine learning based trust

management framework for vehicular networks.

Vehicular Communications 25, 100256. DOI:

https://doi.org/10.1016/j.vehcom.2020. 100256

IJISRT23JAN727 www.ijisrt.com 507

You might also like

- Robust Details Manual 2015Document463 pagesRobust Details Manual 2015Ben Beach100% (1)

- PalmistryDocument115 pagesPalmistryverne4444100% (3)

- Local Viz Anatomy of Type PosterDocument2 pagesLocal Viz Anatomy of Type PosterdvcvcNo ratings yet

- Credit Card Score Prediction Using Machine LearningDocument8 pagesCredit Card Score Prediction Using Machine LearningInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Suitable Star For Marriage MatchingDocument12 pagesSuitable Star For Marriage MatchingVenkata RamaNo ratings yet

- Big Data and IOTDocument68 pagesBig Data and IOTNova MagomaniNo ratings yet

- Machine Learning Algorithms and Its Application A SurveyDocument9 pagesMachine Learning Algorithms and Its Application A SurveyIJRASETPublicationsNo ratings yet

- Kelley Frontiers of History Historical Inquiry in The Twentieth Century PDFDocument311 pagesKelley Frontiers of History Historical Inquiry in The Twentieth Century PDFElias Jose PaltiNo ratings yet

- Lecture 1. Introduction To Machine LearningDocument23 pagesLecture 1. Introduction To Machine LearningАббосали АбдурахмоновNo ratings yet

- Intel Artificial Intelligence EguideDocument15 pagesIntel Artificial Intelligence Eguidenikhil_805No ratings yet

- GRADE 11 General Mathematics Week 2 Quarter 1 ModuleDocument12 pagesGRADE 11 General Mathematics Week 2 Quarter 1 ModuleCasey Erica Nicole CarranzaNo ratings yet

- Machine Learning and Deep Learning TechnDocument9 pagesMachine Learning and Deep Learning TechnAndyBarredaMoscosoNo ratings yet

- Artificial Intelligence Reference MaterialDocument17 pagesArtificial Intelligence Reference MaterialSai KrishnaNo ratings yet

- Iot Early Flood Detection & AvoidanceDocument43 pagesIot Early Flood Detection & Avoidance8051projects83% (6)

- AI Presentation Machine LearningDocument42 pagesAI Presentation Machine LearningXoha Fatima100% (1)

- Cae Use of EnglishDocument3 pagesCae Use of EnglishIñigo García Fernández100% (1)

- Opinion Mining For Text Data An OverviewDocument13 pagesOpinion Mining For Text Data An OverviewIJRASETPublicationsNo ratings yet

- Proposal Cafe Janji JiwaDocument24 pagesProposal Cafe Janji JiwaIbamz SiswantoNo ratings yet

- (IJETA-V9I1P1) :yew Kee WongDocument7 pages(IJETA-V9I1P1) :yew Kee WongIJETA - EighthSenseGroupNo ratings yet

- (IJETA-V8I6P2) :yew Kee WongDocument5 pages(IJETA-V8I6P2) :yew Kee WongIJETA - EighthSenseGroupNo ratings yet

- (IJIT-V7I5P2) :yew Kee WongDocument6 pages(IJIT-V7I5P2) :yew Kee WongIJITJournalsNo ratings yet

- (IJIT-V7I6P2) :yew Kee WongDocument7 pages(IJIT-V7I6P2) :yew Kee WongIJITJournalsNo ratings yet

- (IJETA-V8I5P1) :yew Kee WongDocument5 pages(IJETA-V8I5P1) :yew Kee WongIJETA - EighthSenseGroupNo ratings yet

- Machine LearningDocument9 pagesMachine Learningsujaldobariya36No ratings yet

- WP Machine Learning Putman LTR en LRDocument8 pagesWP Machine Learning Putman LTR en LRrahul kumarNo ratings yet

- (IJCST-V9I4P18) :yew Kee WongDocument5 pages(IJCST-V9I4P18) :yew Kee WongEighthSenseGroupNo ratings yet

- Basic Components of AIDocument17 pagesBasic Components of AIaman kumarNo ratings yet

- Modern Analytics A Foundation To Sustained AI SuccessDocument14 pagesModern Analytics A Foundation To Sustained AI SuccessJonasBarbosaNo ratings yet

- Artificial Intelligence Student Management Based On Embedded SystemDocument7 pagesArtificial Intelligence Student Management Based On Embedded SystemAfieq AmranNo ratings yet

- Data Representation in MLDocument21 pagesData Representation in MLAmrin MulaniNo ratings yet

- Ai White Paper UpdatedDocument21 pagesAi White Paper UpdatedRegulatonomous OpenNo ratings yet

- ATARC AIDA Guidebook - FINAL 1RDocument6 pagesATARC AIDA Guidebook - FINAL 1RdfgluntNo ratings yet

- An Overview of Supervised Machine Learning Paradigms and Their ClassifiersDocument9 pagesAn Overview of Supervised Machine Learning Paradigms and Their ClassifiersPoonam KilaniyaNo ratings yet

- Machine Learning Technical ReportDocument12 pagesMachine Learning Technical ReportAnil Kumar GuptaNo ratings yet

- Survey On Analytics, Machine Learning and The Internet of ThingsDocument5 pagesSurvey On Analytics, Machine Learning and The Internet of Thingsfx_ww2001No ratings yet

- 08250771Document8 pages08250771neelam sanjeev kumarNo ratings yet

- Be The One - Season 3 - Tech Geeks - MDIDocument6 pagesBe The One - Season 3 - Tech Geeks - MDIYASH KOCHARNo ratings yet

- Machine LearningDocument6 pagesMachine LearningRushabh RatnaparkhiNo ratings yet

- Foundation For AI With Microsoft FabricDocument14 pagesFoundation For AI With Microsoft Fabricvinay.pandey9498No ratings yet

- AI and Software Testing: Vikram Raghuwanshi Senior ConsultantDocument23 pagesAI and Software Testing: Vikram Raghuwanshi Senior ConsultantPrafula BartelNo ratings yet

- A Review On Machine Learning Tools and TechniquesDocument16 pagesA Review On Machine Learning Tools and TechniquesIJRASETPublicationsNo ratings yet

- 6machine Learning in Business - Formatted PaperDocument7 pages6machine Learning in Business - Formatted PaperENRIQUE ARTURO CHAVEZ ALVAREZNo ratings yet

- Artificial Intelligence Applications in Cybersecurity: 3. Research QuestionsDocument5 pagesArtificial Intelligence Applications in Cybersecurity: 3. Research Questionsjiya singhNo ratings yet

- Name: Sujit Rajbhar Roll No: 81 Div: A UID: 120CP1206A Essay On Engineer'S DayDocument13 pagesName: Sujit Rajbhar Roll No: 81 Div: A UID: 120CP1206A Essay On Engineer'S DaySujitNo ratings yet

- ATARC AIDA Guidebook - FINAL 1TDocument6 pagesATARC AIDA Guidebook - FINAL 1TdfgluntNo ratings yet

- How To QA Test Software That Uses AI and Machine Learning - DZone AIDocument3 pagesHow To QA Test Software That Uses AI and Machine Learning - DZone AIlm7kNo ratings yet

- AIDocument3 pagesAICher Angel BihagNo ratings yet

- Artificial IntelligenceDocument2 pagesArtificial IntelligenceChi Keung Langit ChanNo ratings yet

- STCL AssignmentDocument8 pagesSTCL AssignmentRushabh RatnaparkhiNo ratings yet

- Machine Learning PDocument9 pagesMachine Learning PbadaboyNo ratings yet

- Using AI in Higher EducationDocument31 pagesUsing AI in Higher EducationDeepak ManeNo ratings yet

- Data Science and Analytics - Assignment I: Health PassportDocument2 pagesData Science and Analytics - Assignment I: Health PassportDhritiman DuttaNo ratings yet

- Chapter 3Document13 pagesChapter 3last manNo ratings yet

- Final RequirementDocument7 pagesFinal RequirementerickxandergarinNo ratings yet

- Proposed MGF Gen AI 2024Document23 pagesProposed MGF Gen AI 2024Danny HanNo ratings yet

- URL Based Phishing Website Detection by Using Gradient and Catboost AlgorithmsDocument8 pagesURL Based Phishing Website Detection by Using Gradient and Catboost AlgorithmsIJRASETPublicationsNo ratings yet

- 1 s2.0 S2772528621000352 MainDocument9 pages1 s2.0 S2772528621000352 MainAdamu AhmadNo ratings yet

- 3 Artificial IntelligenceDocument24 pages3 Artificial Intelligencekigali acNo ratings yet

- ATARC AIDA Guidebook - FINAL 2YDocument4 pagesATARC AIDA Guidebook - FINAL 2YdfgluntNo ratings yet

- 情报分析的未来人工智能对情报分析影响的任务级观点 20201020 提交Document20 pages情报分析的未来人工智能对情报分析影响的任务级观点 20201020 提交chou youNo ratings yet

- Techniques, Solutions and Models Machine Learning Applied To Cybersecurity - Version 1Document4 pagesTechniques, Solutions and Models Machine Learning Applied To Cybersecurity - Version 1Cesar BarretoNo ratings yet

- SiasDocument26 pagesSiassajadul.cseNo ratings yet

- Transparency in AIDocument31 pagesTransparency in AISuvadeep DasNo ratings yet

- Emerging Technologies in BusinessDocument35 pagesEmerging Technologies in BusinesshimanshuNo ratings yet

- Importance of Machine LearningDocument36 pagesImportance of Machine Learningvishalpalv43004No ratings yet

- A Survey of Machine Learning Algorithms For Big Data AnalyticsDocument4 pagesA Survey of Machine Learning Algorithms For Big Data Analyticspratiknavale1131No ratings yet

- Android Application For Structural Health Monitoring and Data Analytics of Roads PDFDocument5 pagesAndroid Application For Structural Health Monitoring and Data Analytics of Roads PDFEngr Suleman MemonNo ratings yet

- Sentiment Analysis For Enhancing Business Process Using Naive BayesDocument10 pagesSentiment Analysis For Enhancing Business Process Using Naive BayesInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Influence of Principals’ Promotion of Professional Development of Teachers on Learners’ Academic Performance in Kenya Certificate of Secondary Education in Kisii County, KenyaDocument13 pagesInfluence of Principals’ Promotion of Professional Development of Teachers on Learners’ Academic Performance in Kenya Certificate of Secondary Education in Kisii County, KenyaInternational Journal of Innovative Science and Research Technology100% (1)

- Entrepreneurial Creative Thinking and Venture Performance: Reviewing the Influence of Psychomotor Education on the Profitability of Small and Medium Scale Firms in Port Harcourt MetropolisDocument10 pagesEntrepreneurial Creative Thinking and Venture Performance: Reviewing the Influence of Psychomotor Education on the Profitability of Small and Medium Scale Firms in Port Harcourt MetropolisInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Sustainable Energy Consumption Analysis through Data Driven InsightsDocument16 pagesSustainable Energy Consumption Analysis through Data Driven InsightsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Vertical Farming System Based on IoTDocument6 pagesVertical Farming System Based on IoTInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Osho Dynamic Meditation; Improved Stress Reduction in Farmer Determine by using Serum Cortisol and EEG (A Qualitative Study Review)Document8 pagesOsho Dynamic Meditation; Improved Stress Reduction in Farmer Determine by using Serum Cortisol and EEG (A Qualitative Study Review)International Journal of Innovative Science and Research TechnologyNo ratings yet

- Detection and Counting of Fake Currency & Genuine Currency Using Image ProcessingDocument6 pagesDetection and Counting of Fake Currency & Genuine Currency Using Image ProcessingInternational Journal of Innovative Science and Research Technology100% (9)

- Impact of Stress and Emotional Reactions due to the Covid-19 Pandemic in IndiaDocument6 pagesImpact of Stress and Emotional Reactions due to the Covid-19 Pandemic in IndiaInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Designing Cost-Effective SMS based Irrigation System using GSM ModuleDocument8 pagesDesigning Cost-Effective SMS based Irrigation System using GSM ModuleInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- An Overview of Lung CancerDocument6 pagesAn Overview of Lung CancerInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- An Efficient Cloud-Powered Bidding MarketplaceDocument5 pagesAn Efficient Cloud-Powered Bidding MarketplaceInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Utilization of Waste Heat Emitted by the KilnDocument2 pagesUtilization of Waste Heat Emitted by the KilnInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Ambulance Booking SystemDocument7 pagesAmbulance Booking SystemInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Digital Finance-Fintech and it’s Impact on Financial Inclusion in IndiaDocument10 pagesDigital Finance-Fintech and it’s Impact on Financial Inclusion in IndiaInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Forensic Advantages and Disadvantages of Raman Spectroscopy Methods in Various Banknotes Analysis and The Observed Discordant ResultsDocument12 pagesForensic Advantages and Disadvantages of Raman Spectroscopy Methods in Various Banknotes Analysis and The Observed Discordant ResultsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Study Assessing Viability of Installing 20kw Solar Power For The Electrical & Electronic Engineering Department Rufus Giwa Polytechnic OwoDocument6 pagesStudy Assessing Viability of Installing 20kw Solar Power For The Electrical & Electronic Engineering Department Rufus Giwa Polytechnic OwoInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Auto Tix: Automated Bus Ticket SolutionDocument5 pagesAuto Tix: Automated Bus Ticket SolutionInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Examining the Benefits and Drawbacks of the Sand Dam Construction in Cadadley RiverbedDocument8 pagesExamining the Benefits and Drawbacks of the Sand Dam Construction in Cadadley RiverbedInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Unmasking Phishing Threats Through Cutting-Edge Machine LearningDocument8 pagesUnmasking Phishing Threats Through Cutting-Edge Machine LearningInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Predictive Analytics for Motorcycle Theft Detection and RecoveryDocument5 pagesPredictive Analytics for Motorcycle Theft Detection and RecoveryInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Effect of Solid Waste Management on Socio-Economic Development of Urban Area: A Case of Kicukiro DistrictDocument13 pagesEffect of Solid Waste Management on Socio-Economic Development of Urban Area: A Case of Kicukiro DistrictInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Comparative Evaluation of Action of RISA and Sodium Hypochlorite on the Surface Roughness of Heat Treated Single Files, Hyflex EDM and One Curve- An Atomic Force Microscopic StudyDocument5 pagesComparative Evaluation of Action of RISA and Sodium Hypochlorite on the Surface Roughness of Heat Treated Single Files, Hyflex EDM and One Curve- An Atomic Force Microscopic StudyInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- An Industry That Capitalizes Off of Women's Insecurities?Document8 pagesAn Industry That Capitalizes Off of Women's Insecurities?International Journal of Innovative Science and Research TechnologyNo ratings yet

- Blockchain Based Decentralized ApplicationDocument7 pagesBlockchain Based Decentralized ApplicationInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Cyber Security Awareness and Educational Outcomes of Grade 4 LearnersDocument33 pagesCyber Security Awareness and Educational Outcomes of Grade 4 LearnersInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Factors Influencing The Use of Improved Maize Seed and Participation in The Seed Demonstration Program by Smallholder Farmers in Kwali Area Council Abuja, NigeriaDocument6 pagesFactors Influencing The Use of Improved Maize Seed and Participation in The Seed Demonstration Program by Smallholder Farmers in Kwali Area Council Abuja, NigeriaInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Computer Vision Gestures Recognition System Using Centralized Cloud ServerDocument9 pagesComputer Vision Gestures Recognition System Using Centralized Cloud ServerInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Impact of Silver Nanoparticles Infused in Blood in A Stenosed Artery Under The Effect of Magnetic Field Imp. of Silver Nano. Inf. in Blood in A Sten. Art. Under The Eff. of Mag. FieldDocument6 pagesImpact of Silver Nanoparticles Infused in Blood in A Stenosed Artery Under The Effect of Magnetic Field Imp. of Silver Nano. Inf. in Blood in A Sten. Art. Under The Eff. of Mag. FieldInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Visual Water: An Integration of App and Web To Understand Chemical ElementsDocument5 pagesVisual Water: An Integration of App and Web To Understand Chemical ElementsInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Parastomal Hernia: A Case Report, Repaired by Modified Laparascopic Sugarbaker TechniqueDocument2 pagesParastomal Hernia: A Case Report, Repaired by Modified Laparascopic Sugarbaker TechniqueInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Smart Health Care SystemDocument8 pagesSmart Health Care SystemInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Vce Chemistry Unit 3 Sac 2 Equilibrium Experimental Report: InstructionsDocument5 pagesVce Chemistry Unit 3 Sac 2 Equilibrium Experimental Report: InstructionsJefferyNo ratings yet

- Lesson Plan Week 1 The Eve of The Viking AgeDocument3 pagesLesson Plan Week 1 The Eve of The Viking AgeIsaac BoothroydNo ratings yet

- Sincronizacion de Motor Toyota 2az-FeDocument12 pagesSincronizacion de Motor Toyota 2az-FeWilliams NavasNo ratings yet

- Alice in Wonderland Literary AnalysisDocument5 pagesAlice in Wonderland Literary AnalysisRica Jane Torres100% (1)

- Qualifying Exam Study Guide 01-26-2018Document20 pagesQualifying Exam Study Guide 01-26-2018Abella, Marilou R. (marii)No ratings yet

- Cantilever Lab PDFDocument6 pagesCantilever Lab PDFDuminduJayakodyNo ratings yet

- TeSys Control Relays - CAD32G7Document3 pagesTeSys Control Relays - CAD32G7muez zabenNo ratings yet

- JVC KD r540 Manual de InstruccionesDocument16 pagesJVC KD r540 Manual de InstruccionesJesus FloresNo ratings yet

- Predominance Area DiagramDocument5 pagesPredominance Area Diagramnaresh naikNo ratings yet

- Sakaka Project 405 MW: Checklist - Electrical Installation of ACCBDocument1 pageSakaka Project 405 MW: Checklist - Electrical Installation of ACCBVenkataramanan SNo ratings yet

- Relative Measure of VariationsDocument34 pagesRelative Measure of VariationsHazel GeronimoNo ratings yet

- Conventional As Well As Emerging Arsenic Removal Technologies - A Critical ReviewDocument21 pagesConventional As Well As Emerging Arsenic Removal Technologies - A Critical ReviewQuea ApurimacNo ratings yet

- Introduction To Industry 4.0 and Industrial IoT Week 3 Quiz SolutionsDocument5 pagesIntroduction To Industry 4.0 and Industrial IoT Week 3 Quiz SolutionssathyaNo ratings yet

- Social Studies Subject For Middle School Us ConstitutionDocument63 pagesSocial Studies Subject For Middle School Us ConstitutionViel Marvin P. BerbanoNo ratings yet

- Booklist Shubham Kumar PDFDocument11 pagesBooklist Shubham Kumar PDFVivek VishwakarmaPSH'076No ratings yet

- But You Shouldn't Effect An Affect - That's ActingDocument2 pagesBut You Shouldn't Effect An Affect - That's ActingAlex WhiteNo ratings yet

- Daily Lesson Plan Year 1: ? V Saf3F0XwayDocument6 pagesDaily Lesson Plan Year 1: ? V Saf3F0XwayAinaa EnowtNo ratings yet

- LXE10E - A12 (Part Lis)Document50 pagesLXE10E - A12 (Part Lis)Manuel Alejandro Pontio RamirezNo ratings yet

- PM0010 AnswersDocument20 pagesPM0010 AnswersSridhar VeerlaNo ratings yet

- Eder606 Albersworth Reality Check FinalDocument16 pagesEder606 Albersworth Reality Check Finalapi-440856082No ratings yet

- How To Kill It at Law School - Harshil Vijayvargiya-Pages-8-15Document8 pagesHow To Kill It at Law School - Harshil Vijayvargiya-Pages-8-15VANSH CHOUHANNo ratings yet