AML Questions 15 - Chandigarh University


Uploaded by Mohd Yusuf

This document contains 15 questions about advanced machine learning concepts including perceptrons, multilayer perceptrons, backpropagation, support vector machines, k-nearest neighbors, clustering algorithms, self-organizing maps, Gaussian mixture models, dimensionality reduction techniques, ensemble learning combination schemes, error-correcting output codes, bagging, and boosting techniques such as AdaBoost.


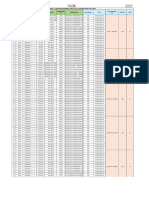

Advanced Machine Learning Questions:

1. Explain the concept of a perceptron and how it works for binary classification.

2. Describe the architecture of a multilayer perceptron (MLP) and how it differs from a perceptron.

3. Discuss the backpropagation algorithm and its role in training MLPs.

4. Explain the concept of support vector machines (SVM) and their use in linear classification.

5. Discuss kernel functions and their role in transforming data for non-linear SVM classification.

6. Explain the k-nearest neighbors (KNN) algorithm and how it works for classification.

7. Explain the hierarchical clustering algorithms AGNES and DIANA and their differences.

8. Discuss partitional clustering algorithms and explain the concept of K-Modes clustering.

9. Describe the self-organizing map (SOM) and its applications in unsupervised learning.

10. Explain Gaussian mixture models (GMM) and their use in modeling complex data distributions.

11. Discuss Principal Component Analysis (PCA) and Locally Linear Embedding (LLE) as dimensionality reduction techniques in unsupervised learning.

12. Explain combination schemes in ensemble learning and discuss the voting-based approach.

13. Describe the Error-Correcting Output Codes (ECOC) technique and its role in ensemble learning. C04

14. Discuss the concept of bagging and its implementation using Random Forest trees.

15. Explain the boosting technique, specifically AdaBoost, and its advantages in ensemble learning. C01
