ETSI Version

FibeAir® IP-10G

Product Description for i7.1.2

June 2014

Hardware Release: R2 and R3

Software Release: i7.1.2

Document Revision B.03

Copyright © 2014 by Ceragon Networks Ltd. All rights reserved.

FibeAir® IP-10G Product Description for i7.1.2

Notice

This document contains information that is proprietary to Ceragon Networks Ltd. No part of this publication may be reproduced, modified, or distributed without prior written authorization of Ceragon Networks Ltd. This document is provided as is, without warranty of any kind.

Trademarks

Ceragon Networks®, FibeAir® and CeraView® are trademarks of Ceragon Networks Ltd., registered in the United States and other countries.

Ceragon® is a trademark of Ceragon Networks Ltd., registered in various countries.

CeraMap™, PolyView™, EncryptAir™, ConfigAir™, CeraMon™, EtherAir™, CeraBuild™, CeraWeb™, and QuickAir™ are trademarks of Ceragon Networks Ltd.

Other names mentioned in this publication are owned by their respective holders.

Statement of Conditions

The information contained in this document is subject to change without notice. Ceragon Networks Ltd. shall not be liable for errors contained herein or for incidental or consequential damage in connection with the furnishing, performance, or use of this document or equipment supplied with it.

Open Source Statement

The Product may use open source software, among them O/S software released under the GPL or a GPL-like license ("GPL License"). Inasmuch as such software is used, it is released under the GPL License accordingly. Some software might have changed. The complete list of the software used in this product, including the respective licenses and the aforementioned publicly available changes, is accessible at http://www.gnu.org/licenses/.

Information to User

Any changes or modifications of equipment not expressly approved by the manufacturer could void the user’s authority to operate the equipment and the warranty for such equipment.

Ceragon Proprietary and Confidential Page 2 of 413

Table of Contents

1. Synonyms and Acronyms .............................................................................. 22

2. Introduction .................................................................................................... 25

2.1 Product Overview ......................................................................................................... 26

2.2 IP-10G Advantages ...................................................................................................... 27

2.2.1 Efficient Utilization of Spectrum Assets ....................................................................... 27

2.2.2 Spectral Efficiency........................................................................................................ 27

2.2.3 Radio Link .................................................................................................................... 27

2.2.4 Wireless Network ......................................................................................................... 28

2.2.5 Scalability ..................................................................................................................... 28

2.2.6 Availability .................................................................................................................... 28

2.2.7 Network Level Optimization ......................................................................................... 29

2.2.8 Network Management .................................................................................................. 29

2.2.9 Power Saving Mode with High Power Radio ............................................................... 29

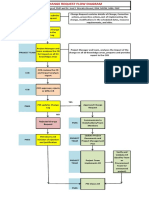

2.3 Functional Block Diagrams .......................................................................................... 30

2.4 Nodal Configuration Option .......................................................................................... 32

2.4.1 Nodal Configuration Benefits ....................................................................................... 32

2.4.2 Nodal Design ................................................................................................................ 32

2.4.3 Nodal Enclosure Design............................................................................................... 33

2.4.4 Nodal Management ...................................................................................................... 34

2.4.5 Centralized System Features in a Nodal Configuration ............................................... 35

2.4.6 Ethernet Connectivity in a Nodal Configuration ........................................................... 35

2.5 Solution Overview ........................................................................................................ 36

2.6 System Overview ......................................................................................................... 40

3. Release and Version Information .................................................................. 41

3.1 New Features and Enhancements............................................................................... 42

3.2 Hardware Compatibility ................................................................................................ 43

3.3 Version Matrix .............................................................................................................. 44

4. Hardware Description..................................................................................... 49

4.1 Hardware Architecture ................................................................................................. 50

4.2 Front Panel Description................................................................................................ 51

4.3 Ethernet Interfaces ....................................................................................................... 53

4.3.1 GbE Interfaces ............................................................................................................. 54

4.3.2 100Base-FX Support .................................................................................................... 55

4.4 Management Interfaces ............................................................................................... 56

4.5 Link Aggregation (LAG)................................................................................................ 57

4.5.1 Creating a LAG Group ................................................................................................. 57

4.5.2 Adding Ports to a LAG Group ...................................................................................... 58

4.5.3 Removing Ports from a LAG Group ............................................................................. 59

4.6 TDM Interface Options ................................................................................................. 60

4.7 Radio Interface ............................................................................................................. 61

4.8 Power Interfaces .......................................................................................................... 62

4.9 Additional Interfaces..................................................................................................... 63

4.10 Front Panel LEDs ......................................................................................................... 64

4.11 External Alarms ............................................................................................................ 65

5. Licensing......................................................................................................... 66

5.1 License Overview ......................................................................................................... 67

5.2 Working with License Keys .......................................................................................... 67

5.3 Licensed Features ........................................................................................................ 67

6. Feature Description ........................................................................................ 69

6.1 Equipment Protection ................................................................................................... 70

6.1.1 Equipment Protection Overview ................................................................................... 71

6.1.2 1+1 HSB Protection ..................................................................................................... 72

6.1.3 2+0 Multi-Radio and 2+0 Multi-Radio with IDU and Line Protection ........................... 76

6.1.4 2+2 HSB Protection ..................................................................................................... 78

6.1.5 Switchover Triggers ..................................................................................................... 80

6.1.6 Automatic State Propagation (ASP) with HSB Protection ........................................... 80

6.2 Ethernet Line Protection............................................................................................... 81

6.2.1 Ethernet Line Protection Options ................................................................................. 82

6.2.2 Multi-Unit LAG .............................................................................................................. 84

6.2.3 Ethernet Line Protection Using Splitters ...................................................................... 87

6.3 Capacity and Latency................................................................................................... 88

6.3.1 Capacity Summary ....................................................................................................... 89

6.3.2 Ethernet Header Compression .................................................................................... 90

6.3.3 Latency ......................................................................................................................... 97

6.3.4 Frame Cut-Through...................................................................................................... 98

6.3.5 Asymmetrical Scripts .................................................................................................. 100

6.4 Radio Features ........................................................................................................... 103

6.4.1 Adaptive Coding Modulation (ACM) ........................................................................... 104

6.4.2 ACM with Adaptive Transmit Power .......................................................................... 109

6.4.3 ACM Adaptive Mode with Frequency Diversity .......................................................... 110

6.4.4 Radio Traffic Priority................................................................................................... 112

6.4.5 Cross Polarization Interference Canceller (XPIC) ........................................................ 113

6.4.6 Multi-Radio ................................................................................................................. 117

6.4.7 Automatic State Propagation in Multi-Radio .............................................................. 120

6.4.8 Diversity...................................................................................................................... 121

6.4.9 ATPC Override Timer................................................................................................. 127

6.4.10 Disabling the Radio .................................................................................................... 128

6.4.11 Behavior in Radio Disable Conditions ........................................................................ 129

6.5 Ethernet Features ...................................................................................................... 130

6.5.1 Ethernet Switching ..................................................................................................... 131

6.5.2 Ethernet Services ....................................................................................................... 134

6.5.3 Network Resiliency and xSTP .................................................................................... 138

6.5.4 Automatic State Propagation ..................................................................................... 148

6.6 Quality of Service (Traffic Manager) .......................................................................... 150

6.6.1 Integrated Quality of Service (QoS) Overview ........................................................... 151

6.6.2 Standard QoS ............................................................................................................ 153

6.6.3 Enhanced QoS ........................................................................................................... 157

6.6.4 Standard and Enhanced QoS Comparison................................................................ 169

6.7 TDM Solution ............................................................................................................. 170

6.7.1 TDM Trails and Cross-Connect (XE) ......................................................................... 171

6.7.2 Smart TDM Pseudowire ............................................................................................. 177

6.7.3 Wireless SNCP .......................................................................................................... 187

6.7.4 Adaptive Bandwidth Recovery (ABR) ........................................................................ 192

6.7.5 ACM for TDM Services .............................................................................................. 202

6.7.6 AIS Signaling and Detection ...................................................................................... 204

6.8 Synchronization .......................................................................................................... 205

6.8.1 Synchronization Overview.......................................................................................... 206

6.8.2 IP-10G Synchronization Solution ............................................................................... 207

6.8.3 Available Synchronization Interfaces ......................................................................... 208

6.8.4 Synchronization Configuration ................................................................................... 209

6.8.5 Synchronization Using TDM Trails ............................................................................. 211

6.8.6 SyncE from Co-Located TDM Trails .......................................................................... 212

6.8.7 Native Sync Distribution Mode ................................................................................... 213

6.8.8 SyncE PRC Pipe Regenerator Mode ......................................................................... 217

6.8.9 SSM Support and Loop Prevention in Radio Interfaces ............................................ 218

6.8.10 ESMC PDU Support for Loop Prevention in Ethernet Interfaces .............................. 219

7. Radio Frequency Units (RFUs) .................................................................... 221

7.1 RFU Overview ............................................................................................................ 222

7.2 RFU Selection Guide ................................................................................................. 223

7.3 RFU-C ........................................................................................................................ 224

7.3.1 Main Features of RFU-C ............................................................................................ 224

7.3.2 RFU-C Frequency Bands ........................................................................................... 225

7.3.3 RFU-C Mechanical, Electrical, and Environmental Specifications............................. 236

7.3.4 RFU-C Mediation Device Losses ............................................................................... 237

7.3.5 RFU-C Antenna Connection ...................................................................................... 237

7.3.6 RFU-C Waveguide Flanges ....................................................................................... 238

7.4 1500HP/RFU-HP ........................................................................................................ 239

7.4.1 Main Features of 1500HP/RFU-HP ........................................................................... 239

7.4.2 1500HP/RFU-HP Frequency Bands .......................................................................... 241

7.4.3 1500HP/RFU-HP Mechanical, Electrical, and Environmental Specifications ............ 242

7.4.4 1500HP/RFU-HP Functional Block Diagram and Concept of Operation ................... 243

7.4.5 1500HP/RFU-HP Comparison Table ......................................................................... 245

7.4.6 1500HP/RFU-HP System Configurations .................................................................. 246

7.4.7 1500HP/RFU-HP Space Diversity Support ................................................................ 246

7.4.8 Split-Mount Configuration and Branching Network .................................................... 248

7.4.9 Split-Mount Branching Loss ....................................................................................... 253

7.4.10 1500HP/RFU-HP All Indoor Configurations and Branching Network ........................ 254

7.4.11 1500HP/RFU-HP All Indoor Compact (Horizontal) .................................................... 265

7.4.12 1500HP/RFU-HP Models and Part Numbers............................................................. 269

7.4.13 OCB Part Numbers .................................................................................................... 270

7.4.14 Generic All-Indoor Configurations Part Numbers ...................................................... 271

7.5 RFU-HS ...................................................................................................................... 275

7.5.1 Main Features of RFU-HS.......................................................................................... 275

7.5.2 RFU-HS Frequency Bands ........................................................................................ 276

7.5.3 RFU-HS Mechanical, Electrical, and Environmental Specifications .......................... 277

7.5.4 RFU-HS Antenna Types ............................................................................................ 277

7.5.5 RFU-HS Antenna Connection .................................................................................... 278

7.5.6 RFU-HS Mediation Device Losses ............................................................................ 278

7.6 RFU-SP ...................................................................................................................... 280

7.6.1 Main Features of RFU-SP .......................................................................................... 280

7.6.2 RFU-SP Frequency Bands ........................................................................................ 281

7.6.3 RFU-SP Mechanical, Electrical, and Environmental Specifications .......................... 282

7.6.4 RFU-SP Direct Mount Installation .............................................................................. 283

7.6.5 RFU-SP Antenna Connection .................................................................................... 283

7.6.6 RFU-SP Mediation Device Losses ............................................................................. 284

7.7 1500P ......................................................................................................................... 285

7.7.1 1500P Mechanical, Electrical, and Environmental Specifications ............................. 285

7.7.2 1500P Mediation Device Losses ................................................................................ 286

8. Typical Configurations ................................................................................. 287

8.1 IP-10G Configuration Options .................................................................................... 288

8.2 Point-to-Point Configurations ..................................................................................... 289

8.2.1 Basic 1+0 Configuration ............................................................................................. 290

8.2.2 1+1 HSB ..................................................................................................................... 291

8.2.3 1+0 with 32 E1s.......................................................................................................... 292

8.2.4 1+0 with 64 E1s.......................................................................................................... 293

8.2.5 2+0/XPIC Link with 64 E1s – No Multi-Radio ............................................................ 294

8.2.6 2+0/XPIC Link with 64 E1s – Multi-Radio .................................................................. 295

8.2.7 2+0/XPIC Link with 32 E1s + STM-1 Mux Interface, no Multi-Radio, up to 168 E1s over the radio ..................... 296

8.2.8 1+1 HSB with 32 E1s ................................................................................................. 297

8.2.9 1+1 HSB with 64 E1s ................................................................................................. 298

8.2.10 1+1 HSB with 84 E1s ................................................................................................. 299

8.2.11 1+1 HSB Link with 16 E1s + STM-1 Mux Interface (up to 84 E1s over the radio) ..... 300

8.2.12 Native² 2+2/XPIC/Multi-Radio MW Link, with 2xSTM-1 Mux (up to 150 E1s over the radio) ..................... 301

8.3 Nodal Configurations.................................................................................................. 302

8.3.1 Chain with 1+0 Downlink and 1+1 HSB Uplink, with STM-1 Mux .............................. 303

8.3.2 Node with 2 x 1+0 Downlinks and 1 x 1+1 HSB Uplink ............................................. 304

8.3.3 Chain with 1+1 Downlink and 1+1 HSB Uplink, with STM-1 Mux .............................. 305

8.3.4 Native² Ring with 3 x 1+0 Links + STM-1 Mux Interface at Main Site ........................ 306

8.3.5 Native² Ring with 3 x 1+1 HSB Links + STM-1 Mux Interface at Main Site ............... 307

8.3.6 Node with 1 x 1+1 HSB Downlink and 1 x 1+1 HSB Uplink with STM-1 Mux ........... 308

8.3.7 Native² Ring with 4 x 1+0 Links, with STM-1 Mux ...................................................... 309

8.3.8 Native² Ring with 3 x 1+0 Links + Spur Link 1+0 ....................................................... 310

8.3.9 Native² Ring with 4 x 1+0 MW Links and 1 x Fiber Link (5 hops total), with STM-1 Mux ..................... 311

8.3.10 Native² Ring with 2 x 2+0/XPIC MW Links and 1 x Fiber Link (3 hops total), with 2 x STM-1 Mux ..................... 312

9. FibeAir IP-10G Management ........................................................................ 313

9.1 Management Overview .............................................................................................. 314

9.2 Management Communication Channels and Protocols ............................................. 315

9.3 Web-Based Element Management System (Web EMS) ........................................... 317

9.4 Command Line Interface (CLI) ................................................................................... 318

9.4.1 Text CLI Configuration Scripts ................................................................................... 318

9.5 Floating IP Address .................................................................................................... 319

9.6 In-Band Management................................................................................................. 320

9.6.1 In-Band Management Isolation in Smart Pipe Mode ................................................. 320

9.6.2 Limiting the Ethernet MTU for Management Packets ................................................ 321

9.7 Out-of-Band Management ......................................................................................... 321

9.8 System Security Features .......................................................................................... 322

9.8.1 Ceragon’s Layered Security Concept ........................................................................ 322

9.8.2 Defenses in Management Communication Channels ................................................ 323

9.8.3 Defenses in User and System Authentication Procedures ........................................ 324

9.8.4 Secure Communication Channels ............................................................................. 326

9.8.5 Security Log ............................................................................................................... 329

9.8.6 Configuration Log File ................................................................................................ 330

9.9 Ethernet Statistics ...................................................................................................... 332

9.9.1 Ingress Line Receive Statistics .................................................................................. 332

9.9.2 Ingress Radio Transmit Statistics .............................................................................. 332

9.9.3 Egress Radio Receive Statistics ................................................................................ 333

9.9.4 Egress Line Transmit Statistics .................................................................................. 333

9.9.5 Radio Ethernet Capacity ............................................................................................ 333

9.9.6 Radio Ethernet Utilization........................................................................................... 333

9.9.7 Port Ethernet Utilization ............................................................................................. 333

9.10 Software Update Timer .............................................................................................. 334

9.11 CeraBuild ................................................................................................................... 334

10. Network Management................................................................................... 335

10.1 OAM ........................................................................................................................... 336

10.1.1 Configurable RSL Threshold Alarms and Traps ........................................................ 336

10.1.2 Alarms Editing ............................................................................................................ 336

10.1.3 Connectivity Fault Management (CFM) ..................................................................... 337

10.2 Automatic Network Topology Discovery with LLDP Protocol .................................... 339

10.3 NMS Options .............................................................................................................. 340

11. Standards and Certifications ....................................................................... 341

11.1 Carrier Ethernet Functionality .................................................................................... 342

11.2 Supported Ethernet Standards .................................................................................. 343

11.3 MEF Certifications for Ethernet Services ................................................................... 343

11.4 Supported Pseudowire Encapsulations ..................................................................... 344

11.5 Standards Compliance ............................................................................................... 345

11.6 Network Management, Diagnostics, Status, and Alarms ........................................... 346

12. Specifications ............................................................................................... 347

12.1 General Specifications ............................................................................................... 348

12.1.1 6-15 GHz .................................................................................................................... 348

12.1.2 18-42 GHz .................................................................................................................. 349

12.2 Transmit Power Specifications ................................................................................... 350

12.2.1 RFU-C Transmit Power (dBm) ................................................................................... 351

12.2.2 1500HP/RFU-HP Transmit Power (dBm) .................................................................. 351

12.2.3 RFU-HS Transmit Power (dBm) ................................................................................ 352

12.2.4 RFU-SP Transmit Power (dBm) ................................................................................. 352

12.2.5 1500P Transmit Power (dBm) .................................................................................... 352

12.3 Receiver Threshold Specifications ............................................................................. 353

12.3.1 RFU-C Receiver Threshold (RSL) (dBm @ BER = 10⁻⁶) .......................................... 354

12.3.2 1500HP/RFU-HP Receiver Threshold (RSL) (dBm @ BER = 10⁻⁶) .......................... 356

12.3.3 RFU-HS Receiver Threshold (RSL) (dBm @ BER = 10⁻⁶) ....................................... 358

12.3.4 RFU-SP Receiver Threshold (RSL) (dBm @ BER = 10⁻⁶) ........................................ 360

12.3.5 1500P Receiver Threshold (RSL) (dBm @ BER = 10⁻⁶) ........................................... 362

12.4 Radio Capacity Specifications ................................................................................... 364

12.4.1 Radio Capacity without Header Compression ........................................................... 364

12.4.2 Radio Capacity with Legacy MAC Header Compression .......................................... 368

12.4.3 Radio Capacity with Multi-Layer Enhanced Header Compression ............................ 372

12.5 Ethernet Latency Specifications ................................................................................. 376

12.5.1 Ethernet Latency – 3.5 MHz Channel Bandwidth ...................................................... 376

12.5.2 Ethernet Latency – 7 MHz Channel Bandwidth ......................................................... 376

12.5.3 Ethernet Latency – 14 MHz Channel Bandwidth ....................................................... 377

12.5.4 Ethernet Latency – 28 MHz Channel Bandwidth ....................................................... 377

12.5.5 Ethernet Latency – 40 MHz Channel Bandwidth ....................................................... 378

12.5.6 Ethernet Latency – 56 MHz Channel Bandwidth ....................................................... 378

12.6 E1 Latency Specifications .......................................................................................... 379

12.6.1 E1 Latency – 3.5 MHz Channel Bandwidth ............................................................... 379

12.6.2 E1 Latency – 7 MHz Channel Bandwidth .................................................................. 379

12.6.3 E1 Latency – 14 MHz Channel Bandwidth ................................................................ 380

12.6.4 E1 Latency – 28 MHz Channel Bandwidth ................................................................ 380

12.6.5 E1 Latency – 40 MHz Channel Bandwidth ................................................................ 381

12.6.6 E1 Latency – 56 MHz Channel Bandwidth ................................................................ 381

12.7 Interface Specifications .............................................................................................. 382

12.7.1 Ethernet Interface Specifications ............................................................................... 382

12.7.2 E1 Interface Specifications ........................................................................................ 382

12.7.3 Smart TDM Pseudowire Interface Specifications ...................................................... 382

12.7.4 Optical STM-1 SFP Interface Specifications .............................................................. 383

12.7.5 Auxiliary Channel Specifications ................................................................................ 383

12.8 Mechanical Specifications .......................................................................................... 384

12.9 Power Input Specifications ......................................................................................... 384

12.10 Power Consumption Specifications ........................................................................... 385

12.10.1 Power Consumption with RFU-HP in Power Saving Mode ....................... 385

12.11 Environmental Specifications ..................................................................................... 386

13. Components and Accessories .................................................................... 387

13.1 Cable and Accessory Overview ................................................................................. 388

13.2 IDU Unit ...................................................................................................................... 391

13.3 Nodal Enclosure Units................................................................................................ 391

13.4 T-Card Options ........................................................................................................... 392

13.5 SFP Options ............................................................................................................... 393

13.6 Additional IDU Accessories ........................................................................................ 393

13.7 Ethernet Cables and Splitters (Electrical) .................................................................. 394

13.7.1 Ethernet Cables and Splitters (Copper) ..................................................................... 394

13.7.2 Ethernet RJ45 - RJ45 Cables .................................................................................... 394

13.7.3 WSC Protection Cable ............................................................................................... 395

13.7.4 Ethernet Cross-Connect Cable .................................................................................. 395

13.7.5 Ethernet Y Cable ........................................................................................................ 396

13.8 Ethernet Cables and Splitters (Optical) ...................................................................... 397

13.8.1 Optical Y Cables, Adaptors, and Extension Cables ................................................... 397

13.8.2 Optical H Cables ........................................................................................................ 398

13.9 E1 Cables ................................................................................................................... 399

13.9.1 E1 Open-End Extension Cable .................................................................................. 399

13.9.2 E1 Extension Cable with RJ-45 Female End ............................................................. 399

13.9.3 E1 RJ-45 Male-to-Male Extension Cable ................................................................... 400

13.9.4 E1 Termination Cables............................................................................................... 401

13.9.5 E1 RJ-45 - RJ-45 Cables ........................................................................................... 402

13.9.6 E1 MDR69 - MDR69 Cross Cables (for Chaining Applications) ................................ 402

13.9.7 E1 Special Cables ...................................................................................................... 403

13.9.8 E1 Y Cable ................................................................................................................. 404

13.10 E1 Expansion Panels ................................................................................................. 405

13.10.1 E1 Expansion Panel with RJ-45 Female Sockets ..................................... 405

13.10.2 E1 Expansion Panel to 75 ohm ................................................................. 406

13.10.3 E1 75 ohm Extension for 1+1 HSB Configurations ................................... 407

13.11 Alarms Cables ............................................................................................................ 408

13.12 User Channel Cables ................................................................................................. 409

13.13 IF Cable ...................................................................................................................... 410

13.14 Software License Marketing Models .......................................................................... 411

13.14.1 ACM License ............................................................................................. 411

13.14.2 L2 Switch License ...................................................................................... 411

13.14.3 Capacity Upgrade License ........................................................................ 411

13.14.4 Network Resiliency License ....................................................................... 412

13.14.5 TDM Traffic Only License .......................................................................... 412

13.14.6 Synchronization Unit License .................................................................... 412

13.14.7 Enhanced QoS License ............................................................................. 413

13.14.8 Asymmetrical Scripts License .................................................................... 413

13.14.9 Enhanced Header Compression License .................................................. 413

13.14.10 Frame Cut-Through License ...................................................................... 413

List of Figures

Functional Block Diagram ................................................................................... 30

FibeAir IP-10G Block Diagram ............................................................................ 31

Main Nodal Enclosure.......................................................................................... 33

Extension Nodal Enclosure ................................................................................. 33

Scalable Nodal Enclosure ................................................................................... 34

IP-10G Complete Support for TDM and Packet Transport Networks................ 36

IP-10G in Hybrid TDM and Ethernet Network ..................................................... 37

IP-10G All-Packet Solution with Integrated Switching and Pseudowire .......... 37

IP-10G in Wireless Native2 Ring ......................................................................... 38

IP-10G End-to-End Service Management ........................................................... 38

Integrated Hybrid/All-Packet Solution Using FibeAir IP-10 Products.............. 39

Typical Point-to-Point Configurations ................................................................ 40

Typical Node Configurations .............................................................................. 40

IP-10G Front Panel and Interfaces ...................................................................... 51

IP-10G Front Panel with Dual Feed Power ......................................................... 51

IP-10G Front Panel with Dual Feed Power and 16 x E1 T-Card ........................ 51

1+1 HSB Protection – Connecting the IDUs ....................................................... 72

1+1 HSB Node with BBS Space Diversity........................................................... 73

3 x 1+1 Aggregation Site ..................................................................................... 73

Path Loss on Secondary Path of 1+1 HSB Protection Link .............................. 74

Multi-Radio 2+0 with Line Protection – Traffic Flow .......................................... 77

Hardware Protection with Single Interface Using Optical Splitter .................... 82

Full Protection with Dual Interface Using Optical Splitters and LAG ............... 82

Full Protection Using Multi-Unit LAG ................................................................. 82

Multi-Unit LAG – Basic Operation ....................................................................... 85

Layer 1 Header Suppression ............................................................................... 91

Legacy MAC Header Compression ..................................................................... 92

Multi-Layer (Enhanced) Header Compression ................................................... 94

Propagation Delay with and without Frame Cut-Through ................................. 98

Frame Cut-Through.............................................................................................. 99

Frame Cut-Through Operation ............................................................................ 99

Symmetrical Chain Example ............................................................................. 100

Asymmetrical Chain Example ........................................................................... 100

Symmetrical Aggregation Site Example ........................................................... 101

Asymmetrical Aggregation Site Example ......................................................... 101

Adaptive Coding and Modulation with Eight Working Points ......................... 105

Adaptive Coding and Modulation ..................................................................... 106

IP-10G ACM with Adaptive Power Contrasted to Other ACM Implementations ..... 109

Channel Mask Comparison ............................................................................... 110

Dual Polarization ................................................................................................ 113

XPIC - Orthogonal Polarizations ....................................................................... 114

XPIC – Impact of Misalignments and Channel Degradation ........................... 114

XPIC – Impact of Misalignments and Channel Degradation ........................... 115

Typical 2+0 Multi-Radio Link Configuration ..................................................... 117

Typical 2+2 Multi-Radio Terminal Configuration with HSB Protection........... 118

Direct and Reflected Signals ............................................................................. 122

Diversity Signal Flow ......................................................................................... 123

Ethernet Switching............................................................................................. 131

Carrier Ethernet Services Based on IP-10G ..................................................... 135

Carrier Ethernet Services Based on IP-10G - Node Failure............................. 135

Carrier Ethernet Services Based on IP-10G - Node Failure (continued) ........ 136

Ring-Optimized RSTP Solution ......................................................................... 140

Resilient In-Band Ring Management ................................................................ 144

Resilient Out-of-Band Ring Management ......................................................... 145

Basic IP-10G Wireless Carrier Ethernet Ring ................................................... 145

IP-10G Wireless Carrier Ethernet Ring with Dual-Homing .............................. 146

IP-10G Wireless Carrier Ethernet Ring - 1+0 .................................................... 146

IP-10G Wireless Carrier Ethernet Ring - Aggregation Site .............................. 147

Smart Pipe Mode QoS Traffic Flow ................................................................... 151

Managed Switch and Metro Switch QoS Traffic Flow ...................................... 152

IP-10G Enhanced QoS ....................................................................................... 158

Classifier Traffic Flow ........................................................................................ 159

TrTCM Policers and MEF 10.2 ........................................................................... 160

TrTCM Policers – Leaky Bucket Mechanism .................................................... 161

Synchronized Packet Loss ................................................................................ 163

Random Packet Loss with Increased Capacity Utilization Using WRED ....... 164

WRED Profile Curve ........................................................................................... 165

IP-10G Configuration Example .......................................................................... 166

Example 1 – Hybrid Scheduling – Illustration .................................................. 167

Example 1 – Hierarchical Scheduling – Illustration ......................................... 168

Basic Cross-Connect Operation ....................................................................... 171

Cross-Connect Configurations ......................................................................... 173

TDM Cross-Connect Aggregation Example ..................................................... 174

1+1 HSB Protection for STM-1 T-Cards ............................................................ 175

Uni-directional MSP for STM-1 T-Cards............................................................ 175

PW T-Card Connected to Ethernet Port (Eth3) ................................................. 177

Smart TDM Pseudowire Bandwidth Utilization with CESoP ........................... 178

Migration from Hybrid to All-Packet Network – PW processing T-Card in Tail Sites ..... 180

Migration from Hybrid to All-Packet Network – PW processing T-Card in Intermediate Aggregation Sites ..... 180

Migration from Hybrid to All-Packet Network – PW processing T-Card in Fiber PoP Sites ..... 181

Smart TDM Pseudowire with Native Service Stitching at Fiber Site ............... 181

Smart TDM Pseudowire End-to-End Overlay ................................................... 182

Smart TDM Pseudowire as part of Integrated CSG Solution .......................... 182

Smart TDM Pseudowire Path Protection .......................................................... 185

Wireless SNCP Operation.................................................................................. 188

Wireless SNCP - Branching Points ................................................................... 188

Wireless SNCP – Mixed Wireless Optical Network .......................................... 190

SNCP and ABR Comparison ............................................................................. 192

Dual Homing with ABR-Based TDM Protection ............................................... 195

TDM and Ethernet Aggregation Case Study .................................................... 196

TDM-only Aggregation Ring with 100% Protection Based on SNCP 1+1 ....... 197

TDM Aggregation Ring - SNCP 1:1 Protection Bandwidth is Used for Ethernet ..... 197

A Native Ethernet Ring with 100% or Partial Protection Based on STP ......... 198

Normal State ....................................................................................................... 198

Non-Affecting Failure......................................................................................... 198

Medium Severity Failure .................................................................................... 199

Worst Case Failure............................................................................................. 199

A Native2 Ring with Protected-ABR at Work.................................................... 199

ABR Advantages: Double Data Capacity, with no Impact on TDM in Failure State ..... 200

Ceragon’s Unique ACM Adaption for TDM ....................................................... 202

Ceragon's Unique ACM Adaption for TDM ....................................................... 203

Synchronous Ethernet (SyncE)......................................................................... 207

Synchronization Configuration ......................................................................... 210

Synchronization using Native E1 Trails ........................................................... 211

Sync from Co-Located E1 Mode ....................................................................... 212

Native Sync Distribution Mode ......................................................................... 213

Native Sync Distribution Mode Usage Example .............................................. 214

Native Sync Distribution Mode – Tree Scenario .............................................. 215

Native Sync Distribution Mode – Ring Scenario (Normal Operation) ............. 215

Native Sync Distribution Mode – Ring Scenario (Link Failure) ....................... 216

Synchronization Resiliency Using SSM and ESMC ......................................... 220

Figure 1: 1500HP 2RX in 1+0 SD Configuration ............................................... 243

Figure 2: 1500HP 1RX in 1+0 SD Configuration ............................................... 243

Space Diversity with Multiple RFUs .................................................................. 247

Space Diversity with Single RFU ...................................................................... 247

All-Indoor Vertical Branching ............................................................................ 248

Split-Mount Branching and All-Indoor Compact .............................................. 248

Old OCB .............................................................................................................. 249

New OCB ............................................................................................................ 249

Old OCB – Type 1 ............................................................................................... 250

Old OCB – Type 1 and Type 2 Description ....................................................... 250

Block Diagram of Trunk System ....................................................................... 254

All-Indoor System with Five IP-10 Carriers ...................................................... 254

All-Indoor System with Ten IP-10 Carriers ....................................................... 255

All-Indoor Installations ...................................................................................... 255

Subrack for ETSI Rack....................................................................................... 256

RFU with Branching ........................................................................................... 256

ICB Branching Chain ......................................................................................... 257

ICC ...................................................................................................................... 258

ICCD .................................................................................................................... 258

Fan Tray in 19” Frame Rack .............................................................................. 259

T12 Rigid Waveguide ......................................................................................... 259

T13 Rigid Waveguide ......................................................................................... 259

4+1 XPIC Assembly Configuration.................................................................... 260

Additional Assembly Configuration Examples ................................................ 260

Lab Rack (Open Frame) Examples ................................................................... 261

19” Rack Example .............................................................................................. 262

ETSI Rack Example ............................................................................................ 262

Configuration with More than Ten Carriers – Two Connected Racks ............ 263

1500HP RFU All-Indoor 1Rx RF Unit ................................................................. 265

1500HP RFU All-Indoor Space Diversity........................................................... 265

1500HP RFU All-Indoor 1Rx RF Unit, 11G 40MHz ............................................ 266

1+1 HSB Compact Front View ........................................................................... 266

1+1 HSB Compact Rear View ............................................................................ 266

PDU with 10 Switches PN: 32T-PDU10 ............................................................. 268

Basic 1+0 Configuration .................................................................................... 290

1+1 HSB Configuration ...................................................................................... 291

1+0 with 32 E1s .................................................................................................. 292

1+0 with 64 E1s .................................................................................................. 293

2+0/XPIC Link with 64 E1s – No Multi-Radio .................................................... 294

2+0/XPIC Link with 64 E1s – Multi-Radio .......................................................... 295

2+0/XPIC Link, with 32 E1s + STM-1 Mux Interface, no Multi-Radio, up to 168 E1s Over the Radio ..... 296

1+1 HSB with 32 E1s .......................................................................................... 297

1+1 HSB with 64 E1s .......................................................................................... 298

1+1 HSB with 84 E1s .......................................................................................... 299

1+1 HSB Link with 16 E1s + STM-1 Mux Interface ............................................ 300

Native2 2+2/XPIC/Multi-Radio MW Link, with 2 x STM-1 Mux (up to 150 E1s over the radio) ..... 301

Chain with 1+0 Downlink and 1+1 HSB Uplink, with STM-1 Mux .................... 303

Node with 2 x 1+0 Downlinks and 1 x 1+1 HSB Uplink .................................... 304

Chain with 1+1 Downlink and 1+1 HSB Uplink, with STM-1 Mux .................... 305

Native2 Ring with 3 x 1+0 Links + STM-1 Mux Interface at Main Site ............. 306

Native2 Ring with 3 x 1+1 HSB Links + STM-1 Mux Interface at Main Site ..... 307

Node with 1 x 1+1 HSB Downlink and 1 x 1+1 HSB Uplink with STM-1 Mux .. 308

Native2 Ring with 4 x 1+0 Links, with STM-1 Mux ........................................... 309

Native2 Ring with 3 x 1+0 Links + Spur Link 1+0 ............................................. 310

Native2 Ring with 4 x 1+0 MW Links and 1 x Fiber Link (5 hops total), with STM-1 Mux ..... 311

Native2 Ring with 2 x 2+0/XPIC MW Links and 1 x Fiber Link (3 hops total), with 2 x STM-1 Mux ..... 312

Integrated IP-10G Management Tools .............................................................. 314

In-Band Management Isolation ......................................................................... 320

Security Solution Architecture Concept........................................................... 322

OAM Functionality ............................................................................................. 336

IDU 1+0 ............................................................................................................... 388

Termination Cable .............................................................................................. 388

Adaptors ............................................................................................................. 388

IDU 1+1 ............................................................................................................... 388

Protection (Y) Cable ........................................................................................... 388

Termination Cable .............................................................................................. 388

Adaptors ............................................................................................................. 388

Ethernet + 32 E1s, 1+0 ....................................................................................... 389

Ethernet + 32 E1s, 1+1 HSB............................................................................... 390

Basic IP-10G Unit ............................................................................................... 391

IP-10G Unit with Dual-Feed Power .................................................................... 391

Main Nodal Enclosure Unit ................................................................................ 391

Extension Nodal Enclosure Unit ....................................................................... 391

E1 T-Card ............................................................................................................ 392

STM-1 T-Card...................................................................................................... 392

Pseudowire T-Card ............................................................................................ 392

SFP Optical Interface Plug-In ............................................................................ 393

WSC Protection Cable ....................................................................................... 395

Ethernet Cross-Connect Cable ......................................................................... 395

Ethernet Y Cable ................................................................................................ 396

Optical Y Cable, Adaptor, and Extension Cable .............................................. 397

E1 Open-End Extension Cable .......................................................................... 399

E1 Extension Cable with RJ-45 Female End .................................................... 399

E1 Male-to-Male Extension Cable ..................................................................... 400

E1 Y Cable .......................................................................................................... 404

E1 Expansion Panel with RJ-45 Female Sockets ............................................. 405

E1 75 ohm Expansion Panel .............................................................................. 406

E1 75 ohm Extension for 1+1 HSB Configurations .......................................... 407

Alarms Cable ...................................................................................................... 408

Alarms Y Cable................................................................................................... 408

User Channel Cable ........................................................................................... 409

User Channel Cable with Y Cable ..................................................................... 409

User Channel Cable with Two Y Cables (Synchronous) ................................. 409

List of Tables

FibeAir IP-10 Series Overview ............................................................................. 36

New Features in Version i7.1 .............................................................................. 42

Enhancements of Existing Features in Version i7.1 .......................................... 42

Feature Support in R2 and R3 ............................................................................. 44

Feature Support by Software Version ................................................................ 44

IP-10G Interfaces.................................................................................................. 52

Ethernet Interface Functionality.......................................................................... 54

Management Interfaces ....................................................................................... 56

T-Card in Add-In Slot ........................................................................................... 60

16 x E1 T-Card ...................................................................................................... 60

STM-1 Mux T-Card ................................................................................................ 60

16 x E1 TDM Pseudowire (PW) Processing T-Card............................................ 60

License Types ...................................................................................................... 67

Comparison of IP-10G Protection Options ......................................................... 71

HSB Protection Switchover Triggers .................................................................. 80

Ethernet Line Protection Comparison ................................................................ 83

Multi-Unit LAG Failure Scenarios ....................................................................... 86

Header Compression ........................................................................................... 90

Ethernet Header Compression Comparison Table ............................................ 96

ACM Working Points (Profiles) ......................................................................... 105

BBS and IFC Comparison.................................................................................. 126

Managed Switch Mode....................................................................................... 132

VLANs Reserved for Internal Use in Managed Switch Mode .......................... 132

Metro Switch Mode ............................................................................................ 133

Carrier Grade Ethernet Feature Summary ........................................................ 134

Provider Bridge RSTP PDUs in CN Ports ......................................................... 139

Provider Bridge RSTP PDUs in PN Ports ......................................................... 139

Per-Queue Counters Availability....................................................................... 162

Example 1 – Hybrid Scheduling ........................................................................ 167

Example 2 – Hierarchical Scheduling ............................................................... 168

IP-10G Standard and Enhanced QoS Features ................................................ 169

RFU Selection Guide.......................................................................................... 223

RFU-C Mechanical, Electrical, and Environmental Specifications ................. 236

RFU-C Mediation Device Losses....................................................................... 237

RFU-C – Waveguide Flanges ............................................................................. 238

1500HP/RFU-HP Mechanical, Electrical, and Environmental Specifications . 242

1500HP/RFU-HP Comparison Table .................................................................. 245

New OCB Component Summary ....................................................................... 252

All-Indoor Compact Placement Components................................................... 267

RFU Models ........................................................................................................ 269

OCB Part Numbers............................................................................................. 270

OCB Part Numbers for All Indoor Compact ..................................................... 270

All-Indoor Configurations (1+0/1+1 HSB) ........................................................ 271

All-Indoor Configurations (N+0/N+1 XPIC) ....................................................... 271

All-Indoor Configurations (N+0 / N+1 XPIC Space Diversity) .......................... 272

All-Indoor Configurations (N+0 / N+1 XPIC Space Diversity) .......................... 272

All-Indoor Configurations (N+0/N+1 Single Pol) .............................................. 273

All-Indoor Configurations (N+0/N+1 Single Pol Space Diversity) ................... 273

All-Indoor Configurations (N+0/N+1 XPIC Upgrade-Ready) ............................. 273

All-Indoor Configurations (N+0/N+1 XPIC Space Diversity Upgrade-Ready) . 274

All-Indoor Configurations (19" Without Rack) ................................................. 274

RFU-HS Mechanical, Electrical, and Environmental Specifications ............... 277

RFU-SP Frequency Bands ................................................................................. 281

RFU-SP Mechanical, Electrical, and Environmental Specifications ............... 282

RFU-HS-SP Antennas ........................................................................................ 283

1500P Mechanical, Electrical, and Environmental Specifications .................. 285

1500P Mediation Device Losses ....................................................................... 286

1+1 Components ................................................................................................ 290

1+1 HSB Components........................................................................................ 291

1+0 with 32 E1s Components (Each Side of Link) ........................................... 292

1+0 with 64 E1s Components (Each Side of Link) ........................................... 293

2+0/XPIC Link with 64 E1s (no Multi-Radio) Components (Each Side of Link) ... 294

2+0/XPIC Link with 64 E1s (Multi-Radio) Components (Each Side of Link) ... 295

Required Components (Each Side of Link) ...................................................... 296

1+1 HSB with 32 E1s Components (Each Side of the Link) ............................ 297

1+1 HSB with 64 E1s Components (Each Side of the Link) ............................ 298

1+1 HSB with 84 E1s Components (Each Side of the Link) .............................. 299

1+1 HSB Link with 16 E1s + STM-1 Components (Each Side of the Link) ...... 300

Native2 2+2/XPIC/Multi-Radio MW Link, with 2 x STM-1 Components (Each Side of the Link) ........ 301

Chain with 1+0 Downlink and 1+1 HSB Uplink, with STM-1 Mux Components (Entire Chain) ........ 303

Node with 2 x 1+0 Downlinks and 1 x 1+1 HSB Uplink Components (Entire Node) ........ 304

Chain with 1+1 Downlink and 1+1 HSB Uplink, with STM-1 Mux Components (Entire Chain) ........ 305

Native2 Ring with 3 x 1+0 Links + STM-1 Mux Interface at Main Site Components (Entire Ring) ........ 306

Native2 Ring with 3 x 1+1 HSB Links + STM-1 Mux Interface at Main Site Components (Entire Ring) ........ 307

Node with 1 x 1+1 HSB Downlink and 1 x 1+1 HSB Uplink with STM-1 Mux Components (Entire Node) ........ 308

Native2 Ring with 4 x 1+0 Links, with STM-1 Components (Entire Ring) ........ 309

Native2 Ring with 3 x 1+0 Links + Spur Link 1+0 Components (Entire Ring) . 310

Native2 Ring with 4 x 1+0 MW Links and 1 x Fiber Link with STM-1 Mux Components (Entire Ring) ........ 311

Native2 Ring with 2 x 2+0/XPIC MW Links and 1 x Fiber Link with 2 x STM-1 Components (Entire Ring) ........ 312

Dedicated Management Ports ........................................................................... 315

PolyView Server Receiving Data Ports ............................................................. 316

Web Sending Data Ports ................................................................................... 316

Web Receiving Data Ports ................................................................................. 316

Additional Management Ports for IP-10G ......................................................... 316

Supported Ethernet Standards ......................................................................... 343

Ethernet Cable and Splitter (Copper) Marketing Models ................................. 394

Ethernet RJ45 - RJ45 Cable Marketing Models ................................................ 394

WSC Protection Cable Marketing Model .......................................................... 395

Ethernet Protection Cable Marketing Model .................................................... 395

Ethernet Y Cable Marketing Model ................................................................... 396

Optical Y Cables, Adaptors, and Extension Cable Marketing Models ............ 397

Optical H Cable Marketing Models.................................................................... 398

E1 Open-End Extension Cable Marketing Models ........................................... 399

E1 Extension Cable with RJ-45 Female End Marketing Models...................... 399

E1 Male-to-Male Extension Cable Marketing Models ....................................... 400

E1 Open-End Termination Cables..................................................................... 401

E1 RJ-45 Female (Socket) Termination Cables ................................................ 401

E1 RJ-45 Male Termination Cables ................................................................... 401

E1 MDR69 - MDR69 Cross Cables (for Chaining Applications) ...................... 402

E1 Special Cables .............................................................................................. 403

E1 Y Cable Marketing Models ........................................................................... 404

Expansion Panel, Adaptor, and Cable Marketing Models ............................... 405

75 ohm Expansion Panel Marketing Models .................................................... 406

75 ohm Extension Marketing Models................................................................ 407

Alarm Cable Marketing Models ......................................................................... 408

User Channel Cable Marketing Models ............................................................ 409

IF Cable Marketing Models ................................................................................ 410

About This Guide

This document describes the main features, components, and specifications of the FibeAir IP-10G high-capacity IP and Migration-to-IP network solution. It also describes a number of typical FibeAir IP-10G configuration options. This document applies to hardware releases R2 and R3 and software release i7.1.2.

What You Should Know

This document describes the applicable ETSI standards and specifications. A North American version of this document (ANSI, FCC) is also available.

Target Audience

This manual is intended for use by Ceragon customers, potential customers, and business partners. The purpose of this manual is to provide basic